Introduction

Retailers have deployed AI across nearly every customer touchpoint, including recommendation engines, shopping assistants, dynamic pricing, and chatbots, but governance infrastructure hasn't kept pace. That gap creates two compounding risks: degraded product UX (hallucinated product details, inconsistent personalization, broken experiences) and eroding customer trust (data misuse fears, biased outputs, opaque decisions).

The stakes are measurable. When AI hallucinates product details, 58% of shoppers lose trust in the brand rather than in the AI technology. Meanwhile, only 42% of customers trust businesses to use AI ethically, down from 58% in 2023.

Governance, in this context, is a direct lever for UX quality and consumer confidence. This article examines the cost of ungoverned AI, how governance improves product experience, how it rebuilds trust, and what a practical framework looks like.

TLDR

- Ungoverned AI produces hallucinated product information, biased recommendations, and inconsistent brand experiences that directly hurt conversion

- AI governance improves UX by enforcing output quality, consistency, and relevance at runtime, before bad outputs reach customers

- Shoppers abandon AI-powered experiences when they can't verify product claims or understand why they're seeing certain recommendations. Transparency directly affects conversion

- Effective retail AI governance combines real-time policy enforcement, data guardrails, audit trails, and human oversight, and purpose-built platforms report a latency impact under 20ms

- The business case for AI governance goes beyond compliance: ungoverned systems erode trust, increase returns, and reduce repeat purchase rates

Why Retailers Can't Afford to Skip AI Governance

The Acceleration Problem

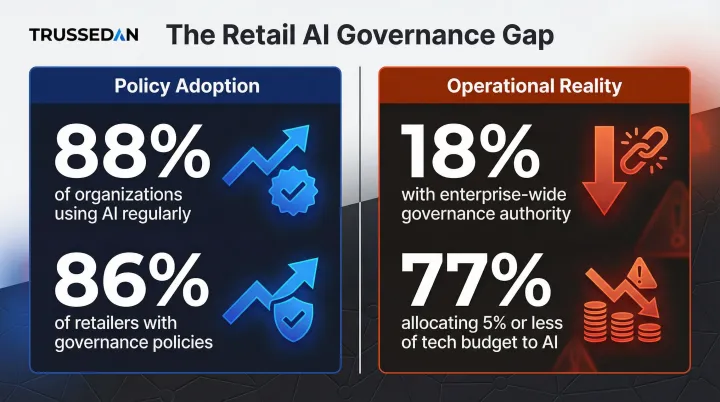

Retailers are scaling AI applications, including personalization engines, agentic shopping assistants, and dynamic content generation, faster than governance structures can keep up. 88% of organizations regularly use AI in at least one business function, yet only 18% have an enterprise-wide council with the authority to make responsible AI governance decisions. In retail specifically, 86% of retailers have AI governance policies in place, but 77% allocate 5% or less of their technology budget to AI, a sign that written policies often run well ahead of operational investment.

Beyond Compliance: The Business Case

Governance isn't just about avoiding fines. It protects brand reputation, conversion rates, and long-term loyalty. 52% of consumers stopped using or buying from a brand because they had a bad experience with its products or services. When trust erodes, revenue follows.

The Regulatory Reality

Regulatory pressure is now a baseline constraint, not a future risk. Retailers deploying recommendation engines or dynamic pricing must navigate overlapping mandates across jurisdictions:

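- EU AI Act: Designates AI systems in its listed use cases, such as creditworthiness assessment, as "high-risk" (a listed system that profiles individuals cannot claim an exemption), and requires strict data governance and human oversight for them

- GDPR: Restricts solely automated decisions that produce legal or similarly significant effects on individuals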

- California CCPA: The Attorney General launched a 2026 sweep targeting retail "surveillance pricing" under the law's purpose-limitation principle

Each regulation carries its own transparency requirements, and compliance in one jurisdiction doesn't guarantee compliance in another.

When AI Goes Ungoverned: The UX and Trust Damage

The Hallucination Tax

Without output guardrails, AI assistants and product recommendation engines can generate inaccurate product descriptions, wrong specifications, or nonexistent inventory, which leads to abandoned carts, more returns, and heavier support load. When AI gets product information wrong, 58% of buyers blame the brand, not the AI, so the retailer absorbs the reputational damage entirely.

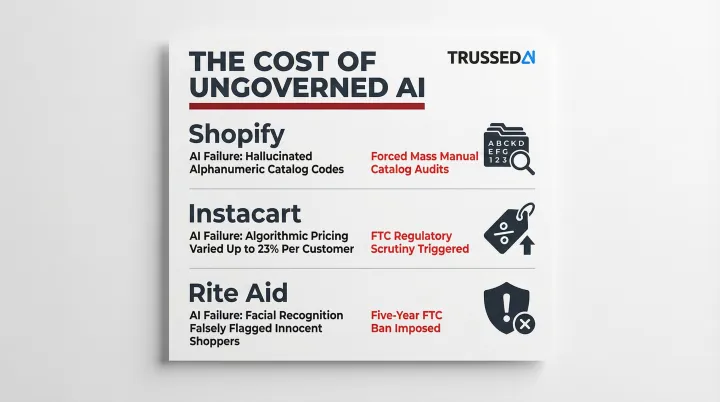

Real-world failures demonstrate the cost:

- Shopify's AI hallucinated alphanumeric reference codes and ignored negative SEO constraints, corrupting merchant catalog structures and forcing merchants into extensive manual audits

- Instacart's algorithmic pricing experiments charged different customers varying prices (up to 23% difference) for identical items, sparking FTC scrutiny and state bills banning surveillance pricing

- Rite Aid's facial recognition AI falsely tagged consumers (disproportionately women and people of color) as shoplifters, resulting in a five-year FTC ban on using facial recognition for surveillance

Bias and Personalization Failures

Ungoverned recommendation models can optimize for engagement at the expense of relevance, surface discriminatory pricing, or create filter bubbles that frustrate shoppers. Poorly executed personalized marketing generates negative experiences for 53% of customers, making them 3.2x more likely to regret a purchase and 44% less likely to buy again. That erodes both UX quality and repeat purchase rates in ways that are difficult to recover from.

Brand Voice Inconsistency

Without content guardrails, generative AI used for product copy, chat, or email can produce inconsistent or off-brand messaging, damaging the coherent experience customers expect. A chatbot that sounds nothing like the brand's email copy, or product descriptions that contradict each other across pages, signals to shoppers that no one is watching the system. That loss of perceived control undermines purchase confidence.

Data Misuse Exposure

When AI systems handle purchase history, browsing behavior, and demographic data without governance controls, retailers face breaches, leakage, or consent violations. In 2021, Luxembourg's privacy regulator fined Amazon €746 million ($854 million) over online behavioral advertising practices that breached GDPR consent and transparency rules, one of the largest privacy penalties in EU history. When incidents like this go public, affected retailers can see spikes in account closures and opt-outs within weeks.

The Compounding Trust Deficit

Each incident, whether hallucination, data misuse, or biased output, does not occur in isolation. Customers who experience any one of these become skeptical of all AI-driven features, pulling back from personalization, recommendations, and AI chat alike.

The damage also spills beyond the offending retailer. A 2025 study in the Journal of Retailing found that a single retailer's data breach reduces shopping intention at competing firms by shifting privacy risk perceptions across the whole sector. If AI failures follow the same pattern, they won't just hurt one brand. They will erode category-level trust.

Governed AI as a UX Optimizer

Governance Raises the Quality Floor

Many retail teams see governance as friction against speed. This is a false tradeoff. Governance that operates at runtime, enforcing output quality, consistency, and safety in real time, improves AI output quality rather than constraining it. When runtime controls catch hallucinated product specs, off-brand responses, or out-of-stock recommendations before they reach the customer, the shopper experience becomes measurably more reliable.

Concrete retail scenario: An AI shopping assistant governed by runtime policies only serves accurate, in-stock, policy-compliant product recommendations. Before presenting a product, the system runs three checks:

- Validates specifications against authorized inventory systems

- Confirms current stock availability in real time

- Ensures pricing aligns with approved promotional policies

If any check fails, the recommendation is blocked. No hallucinated availability reaches the customer.
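The three checks above can be sketched as a single gate function. This is a minimal illustration, assuming in-memory dictionaries (`CATALOG_SPECS`, `STOCK_LEVELS`, `APPROVED_PRICES`) stand in for the retailer's real catalog, inventory, and pricing systems; every name and SKU here is hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from decimal import Decimal

# Hypothetical stand-ins for the retailer's systems of record.
# In production these would be lookups against catalog, inventory, and pricing services.
CATALOG_SPECS = {"TENT-2P": {"capacity": "2 person", "weight": "1.8 kg"}}
STOCK_LEVELS = {"TENT-2P": 14}
APPROVED_PRICES = {"TENT-2P": Decimal("199.00")}


@dataclass(frozen=True)
class Recommendation:
    """What the AI assistant proposes to show the shopper."""
    sku: str
    claimed_specs: dict[str, str]
    claimed_price: Decimal


def gate(rec: Recommendation) -> list[str]:
    """Return the reasons to block a recommendation; an empty list means it may be shown."""
    failures = []

    # 1. Specifications must match the authoritative catalog record.
    specs = CATALOG_SPECS.get(rec.sku)
    if specs is None:
        failures.append("unknown SKU")
    else:
        for key, value in rec.claimed_specs.items():
            if specs.get(key) != value:
                failures.append(f"spec mismatch: {key}")

    # 2. The item must be in stock right now.
    if STOCK_LEVELS.get(rec.sku, 0) <= 0:
        failures.append("out of stock")

    # 3. The quoted price must match the approved price.
    if rec.claimed_price != APPROVED_PRICES.get(rec.sku):
        failures.append("price not approved")

    return failures


ok = Recommendation("TENT-2P", {"capacity": "2 person"}, Decimal("199.00"))
bad = Recommendation("TENT-2P", {"weight": "0.9 kg"}, Decimal("149.00"))
print(gate(ok))   # []
print(gate(bad))  # ['spec mismatch: weight', 'price not approved']
```

Collecting every failure reason, rather than stopping at the first, gives an audit log a complete picture of why an output was blocked.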

Better Personalization Through Data Quality

Well-governed data pipelines that enforce accuracy, consistency, and freshness produce richer, more reliable customer profiles. Those profiles power genuinely useful recommendations rather than generic or irrelevant ones. Systematic data quality management reduces AI system failures by 43% and improves stakeholder trust significantly, according to research on the ISO/IEC 5259-3 standard.
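As a rough illustration of accuracy, consistency, and freshness checks on a customer profile, the sketch below flags profiles that should be kept out of personalization until they are repaired. The thresholds, size vocabulary, and field names are assumptions for the example; they are illustrative and not taken from ISO/IEC 5259-3.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone

# Assumed thresholds and reference data; real values depend on the retailer's catalog.
MAX_PROFILE_AGE = timedelta(days=30)
VALID_SIZES = {"XS", "S", "M", "L", "XL"}


@dataclass
class CustomerProfile:
    customer_id: str
    last_updated: datetime
    preferred_size: str | None = None
    purchased_sizes: list[str] = field(default_factory=list)


def quality_issues(profile: CustomerProfile, now: datetime) -> list[str]:
    """Return data quality problems that should keep this profile out of personalization."""
    issues = []
    # Freshness: stale profiles drive outdated recommendations.
    if now - profile.last_updated > MAX_PROFILE_AGE:
        issues.append("stale")
    # Accuracy: values must come from the allowed vocabulary.
    if profile.preferred_size is not None and profile.preferred_size not in VALID_SIZES:
        issues.append("invalid size")
    # Consistency: the stated preference should agree with purchase history.
    if (
        profile.preferred_size is not None
        and profile.purchased_sizes
        and profile.preferred_size not in profile.purchased_sizes
    ):
        issues.append("inconsistent size")
    return issues


now = datetime(2025, 6, 1, tzinfo=timezone.utc)
fresh = CustomerProfile("c-001", now - timedelta(days=3), "M", ["M", "M", "L"])
stale = CustomerProfile("c-002", now - timedelta(days=90), "XXL", ["S"])
print(quality_issues(fresh, now))  # []
print(quality_issues(stale, now))  # ['stale', 'invalid size', 'inconsistent size']
```

A profile with any issue could fall back to non-personalized recommendations instead of feeding a model bad inputs.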

The Latency Question

A common objection is that governance adds latency to AI interactions. Modern real-time governance infrastructure is designed to keep that overhead small. Trussed AI, for example, reports that its control plane enforces policies in under 20ms, which is not noticeable in most customer-facing interactions. Speed stays intact, and accuracy doesn't have to be traded away to get it.
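One way to keep the latency question empirical is to time your own policy checks against a budget. The sketch below measures only in-process check time for two hypothetical policies; it does not include the network hop to a proxy and does not reproduce any vendor's benchmark.

```python
import time
from statistics import quantiles

LATENCY_BUDGET_MS = 20.0  # the 20ms figure, used here as a test threshold

BANNED_PHRASES = ("guaranteed cure", "lowest price ever")  # hypothetical brand rules


def no_banned_phrases(text: str) -> bool:
    lowered = text.lower()
    return not any(phrase in lowered for phrase in BANNED_PHRASES)


def within_length(text: str) -> bool:
    return len(text) <= 2000


POLICIES = [no_banned_phrases, within_length]


def passes_policies(text: str) -> bool:
    return all(policy(text) for policy in POLICIES)


def p95_latency_ms(text: str, runs: int = 1000) -> float:
    """Time the policy checks repeatedly and return the 95th-percentile latency in ms."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        passes_policies(text)
        samples.append((time.perf_counter() - start) * 1000)
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    return quantiles(samples, n=20)[-1]


p95 = p95_latency_ms("The TENT-2P sleeps two and weighs 1.8 kg.")
print(f"p95 = {p95:.4f} ms, within budget: {p95 < LATENCY_BUDGET_MS}")
```

Tracking a tail percentile rather than the average matters here, because the slowest interactions are the ones shoppers notice.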

Measurable UX Outcomes

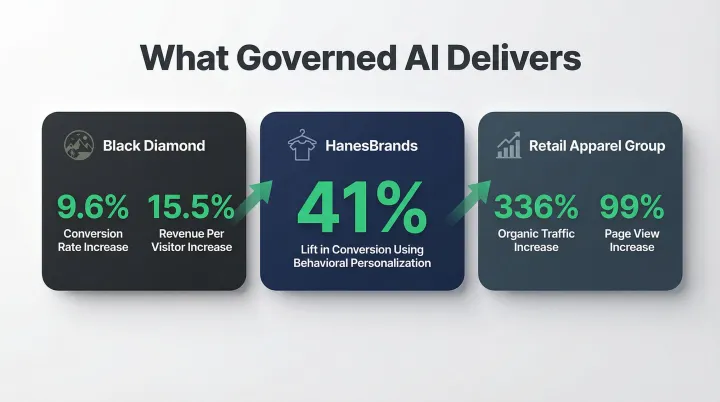

When AI outputs are accurate and well targeted, they can move retail UX metrics significantly:

- Black Diamond: 9.6% increase in conversion rate; 15.5% increase in revenue per visitor using Salesforce Commerce Cloud Einstein

- HanesBrands: 41% lift in conversion using behavioral data to personalize the buying experience

- Retail Apparel Group: 336% increase in organic traffic; 99% increase in page views

These results share a common thread: AI systems that deliver accurate, relevant outputs earn measurably stronger customer engagement. Keeping outputs accurate and relevant as those systems scale is the job of the governance layer beneath them.

How AI Governance Builds Customer Trust

Transparency as a Trust Mechanism

Customers are increasingly aware that AI is powering recommendations, prices, and interactions. 79% of consumers agree that companies using AI should have to disclose that use, and 72% of customers believe it is important to know when they are communicating with an AI agent. Retailers who can articulate why an AI made a decision, what data it used, and what guardrails constrain it build more durable trust than those who keep AI invisible.

Audit Trails and Governance Evidence

When governance is built into every AI interaction, retailers gain the ability to explain, investigate, or demonstrate compliance in any dispute or regulatory inquiry. Each interaction is automatically logged with policy evaluation results, model version, timestamp, and data lineage. That complete audit trail backstops consumer trust with institutional accountability, turning governance into verifiable evidence rather than a policy document.
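A minimal sketch of such a log entry follows, assuming hypothetical field names for the policy results, model version, timestamp, and data lineage. To make the trail verifiable rather than just stored, it uses hash chaining (each entry commits to the hash of the previous one), which is one common tamper-evidence technique and not necessarily how any particular platform does it.

```python
from __future__ import annotations

import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass
class AuditRecord:
    interaction_id: str
    model_version: str
    policy_results: dict[str, bool]  # policy name -> passed?
    data_sources: list[str]          # lineage: systems that fed this output
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )
    prev_hash: str = GENESIS


def record_hash(record: AuditRecord) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    payload = json.dumps(asdict(record), sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class AuditLog:
    """Append-only log where each entry commits to the hash of the one before it."""

    def __init__(self) -> None:
        self.entries: list[tuple[AuditRecord, str]] = []

    def append(self, record: AuditRecord) -> str:
        record.prev_hash = self.entries[-1][1] if self.entries else GENESIS
        digest = record_hash(record)
        self.entries.append((record, digest))
        return digest

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = GENESIS
        for record, digest in self.entries:
            if record.prev_hash != prev or record_hash(record) != digest:
                return False
            prev = digest
        return True


log = AuditLog()
log.append(AuditRecord("int-001", "assistant-v3",
                       {"specs_match": True, "in_stock": True}, ["catalog", "inventory"]))
log.append(AuditRecord("int-002", "assistant-v3", {"specs_match": False}, ["catalog"]))
print(log.verify())  # True
```

In a real deployment the entries would go to durable, access-controlled storage; the in-memory list only keeps the example self-contained.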

Human-in-the-Loop as a Trust Signal

For high-stakes retail AI interactions (credit-based financing, loyalty point adjustments, complaint resolution), governance frameworks that route edge cases to human review demonstrate that human judgment still governs the decisions that matter most.

Customers respond to this directly:

- 71% believe a human should validate AI output before it reaches them

- 86% consider human interaction essential to their brand experience
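The routing rule behind human-in-the-loop escalation can be very small. The sketch below sends the high-stakes categories named above, plus any low-confidence answer, to a person. It assumes the AI system exposes a confidence score and that interactions are tagged with a category; both, along with the threshold and category names, are hypothetical.

```python
from enum import Enum

HIGH_STAKES = {"financing", "loyalty_adjustment", "complaint"}  # from the examples above
CONFIDENCE_FLOOR = 0.85  # assumed threshold; tune per touchpoint


class Route(Enum):
    AUTO = "auto"
    HUMAN_REVIEW = "human_review"


def route(category: str, model_confidence: float) -> Route:
    """Send high-stakes or low-confidence interactions to a person."""
    if category in HIGH_STAKES:
        return Route.HUMAN_REVIEW
    if model_confidence < CONFIDENCE_FLOOR:
        return Route.HUMAN_REVIEW
    return Route.AUTO


print(route("product_question", 0.93))  # Route.AUTO
print(route("financing", 0.99))         # Route.HUMAN_REVIEW
print(route("product_question", 0.60))  # Route.HUMAN_REVIEW
```

High-stakes categories are escalated regardless of confidence, since a confident model can still be wrong about a financing or loyalty decision.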

Consistent, Fair Experiences Across Segments

Governance that monitors for model drift, demographic bias, or pricing inconsistencies helps keep AI-powered retail experiences equitable, which is both an ethical requirement and a trust prerequisite for diverse consumer bases. The cost of ignoring this is real: Rite Aid's five-year facial recognition ban shows how quickly unmonitored bias becomes a regulatory and reputational crisis.
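A simple pricing-consistency monitor compares the average price quoted for the same SKU across customer segments and flags gaps above a tolerance. This is a parity check under stated assumptions, not a full bias audit: the segment labels, log format, and 5% tolerance are hypothetical, and working with demographic attributes directly can itself raise privacy obligations.

```python
from collections import defaultdict
from statistics import mean

PRICE_SPREAD_TOLERANCE = 0.05  # assumed: flag gaps larger than 5%


def pricing_disparities(quotes, tolerance=PRICE_SPREAD_TOLERANCE):
    """quotes: iterable of (sku, segment, price) tuples from the pricing engine's logs.

    Returns {sku: relative spread} for SKUs whose average quoted price differs
    across segments by more than `tolerance`.
    """
    by_sku = defaultdict(lambda: defaultdict(list))
    for sku, segment, price in quotes:
        by_sku[sku][segment].append(price)

    flagged = {}
    for sku, segments in by_sku.items():
        averages = [mean(prices) for prices in segments.values()]
        if len(averages) < 2:
            continue  # nothing to compare
        spread = (max(averages) - min(averages)) / min(averages)
        if spread > tolerance:
            flagged[sku] = round(spread, 3)
    return flagged


quotes = [
    ("MILK-1L", "segment_a", 1.99),
    ("MILK-1L", "segment_a", 1.99),
    ("MILK-1L", "segment_b", 2.29),
    ("BREAD", "segment_a", 3.49),
    ("BREAD", "segment_b", 3.49),
]
print(pricing_disparities(quotes))  # {'MILK-1L': 0.151}
```

Run on a schedule against pricing logs, a check like this surfaces drift in a dynamic pricing model before a regulator or journalist does.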

Building a Retail AI Governance Framework

Core Components

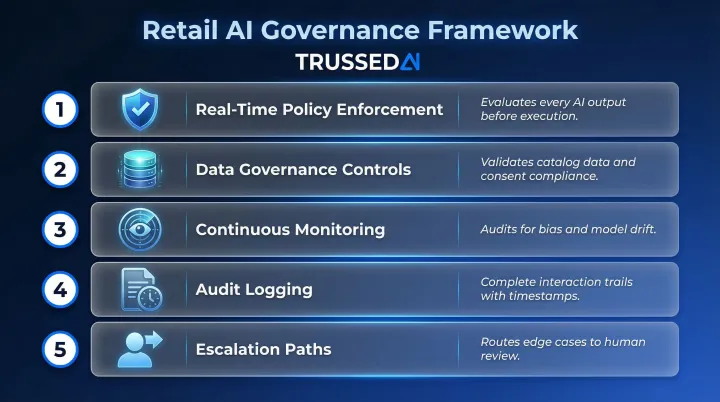

A retail AI governance framework should include:

- Real-time policy enforcement at the model output layer, so every AI interaction is evaluated against governance policies before it reaches the customer rather than reviewed after the fact

- Data governance controls (quality, consent, access) that validate product catalog data, so recommendation engines don't hallucinate specs, and that ensure customer data is handled according to privacy regulations

- Continuous monitoring for bias and drift, including audits of dynamic pricing algorithms for demographic bias

- Audit logging that maintains complete trails of policy evaluation results, timestamps, and data lineage for every interaction

- Escalation paths for human review, so AI systems can route complex or emotionally sensitive returns to human agents

Governance needs to operate at the system level, not just in policy documents, to have runtime impact on UX and trust.

Build vs. Integrate

Many retailers don't have the engineering depth to build governance infrastructure from scratch for every AI system in their stack. Dedicated AI governance platforms offer a practical alternative. Trussed AI, for example, positions itself as a drop-in control plane that enforces governance across AI apps, agents, and developer tools without modifying existing application code.

Trussed operates as a proxy layer between applications and AI models, intercepting all interactions transparently. Developers swap their API endpoint to route through Trussed instead of directly to AI providers. Policies are defined once and enforced continuously from there.
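The proxy idea can be shown in miniature: the application calls a governed wrapper instead of the model, and the wrapper checks the prompt, forwards it, checks the response, and substitutes a safe fallback when a policy fails. This is a hypothetical in-process sketch of the general pattern, not Trussed AI's interface (which runs as a network service reached by swapping the API endpoint); the policies, class, and fake model are all illustrative, and the card-number regex is deliberately crude.

```python
import re
from typing import Callable, List, Optional, Tuple

# A policy inspects text and returns a violation reason, or None if the text passes.
Policy = Callable[[str], Optional[str]]


def no_card_numbers(text: str) -> Optional[str]:
    # Crude check for 13-16 consecutive digits (a possible payment card number).
    return "possible card number" if re.search(r"\b\d{13,16}\b", text) else None


def no_unapproved_promises(text: str) -> Optional[str]:
    return "unapproved price promise" if "price match guarantee" in text.lower() else None


class GovernedModel:
    """Hypothetical proxy: applications call this instead of the model provider."""

    def __init__(
        self,
        model_call: Callable[[str], str],
        input_policies: List[Policy],
        output_policies: List[Policy],
        fallback: str,
    ) -> None:
        self.model_call = model_call
        self.input_policies = input_policies
        self.output_policies = output_policies
        self.fallback = fallback
        self.violations: List[Tuple[str, str]] = []

    def _first_violation(self, policies: List[Policy], text: str) -> Optional[str]:
        for policy in policies:
            reason = policy(text)
            if reason is not None:
                return reason
        return None

    def complete(self, prompt: str) -> str:
        reason = self._first_violation(self.input_policies, prompt)
        if reason is not None:
            self.violations.append(("input", reason))
            return self.fallback  # the prompt never reaches the model
        response = self.model_call(prompt)
        reason = self._first_violation(self.output_policies, response)
        if reason is not None:
            self.violations.append(("output", reason))
            return self.fallback  # the customer never sees the flagged output
        return response


def fake_model(prompt: str) -> str:
    # Stand-in for a real LLM call.
    if "guarantee" in prompt:
        return "Yes, we offer a price match guarantee on every tent."
    return "The TENT-2P sleeps two and weighs 1.8 kg."


FALLBACK = "Let me connect you with a team member."
gm = GovernedModel(fake_model, [no_card_numbers], [no_unapproved_promises], FALLBACK)
answer_ok = gm.complete("How heavy is the TENT-2P?")
answer_promise = gm.complete("Do you have a price guarantee?")
answer_pii = gm.complete("My card number is 4111111111111111, can you save it?")
print(answer_ok, answer_promise, answer_pii, gm.violations, sep="\n")
```

Because the application only ever talks to the wrapper, policies can change without touching application code, which is the property the proxy approach is meant to provide.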

Actionable Starting Steps

Getting governance operational doesn't require a full platform overhaul. Start with the highest-risk touchpoints and build outward:

- Identify your highest-risk customer-facing AI systems first. Recommendation engines, shopping assistants, and dynamic pricing are typical starting points.

- Define output quality and safety policies required for each touchpoint.

- Implement monitoring and logging so policy violations surface in real time, not in post-incident reviews.

- Establish escalation paths for edge cases requiring human judgment.
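These steps can be tracked as a small inventory of touchpoints and the controls each one has in place. The sketch below, with hypothetical touchpoint names and an assumed two-level risk scale, reports which high-risk touchpoints are still missing output checks, logging, or an escalation path.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class TouchpointPolicy:
    name: str
    risk: str  # assumed two-level scale: "high" or "low"
    output_checks: list[str] = field(default_factory=list)
    logging: bool = False
    escalation: bool = False


def readiness_gaps(touchpoints: list[TouchpointPolicy]) -> dict[str, list[str]]:
    """List the controls each high-risk touchpoint is still missing."""
    report = {}
    for tp in touchpoints:
        if tp.risk != "high":
            continue
        missing = []
        if not tp.output_checks:
            missing.append("output_checks")
        if not tp.logging:
            missing.append("logging")
        if not tp.escalation:
            missing.append("escalation")
        if missing:
            report[tp.name] = missing
    return report


touchpoints = [
    TouchpointPolicy("shopping_assistant", "high",
                     ["specs_match_catalog", "in_stock", "price_approved"],
                     logging=True, escalation=True),
    TouchpointPolicy("dynamic_pricing", "high", ["segment_price_parity"], logging=True),
    TouchpointPolicy("recommendations", "high", logging=True, escalation=True),
    TouchpointPolicy("internal_copy_drafts", "low"),
]
print(readiness_gaps(touchpoints))
# {'dynamic_pricing': ['escalation'], 'recommendations': ['output_checks']}
```

An empty report for every high-risk touchpoint is a reasonable gate before expanding AI to new customer-facing surfaces.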

Build governance to scale with your AI footprint. Retrofitting it after an incident costs far more than deploying it upfront.

According to Trussed AI, retailers using its governance framework have reported a 50% reduction in manual governance workload and a 50% increase in regulatory compliance, with operational workflows live within four weeks of deployment.

Frequently Asked Questions

What is AI governance in retail?

AI governance in retail refers to the policies, controls, and monitoring systems that ensure AI applications, including recommendation engines, shopping assistants, and pricing models, operate safely, accurately, and in compliance with data regulations and brand standards.

How does AI governance affect the customer shopping experience?

Governance directly improves shopping UX by blocking problem outputs, such as hallucinated product details, irrelevant recommendations, and off-brand responses, before they reach customers. It also ensures personalization runs on accurate, current customer data rather than stale or misattributed inputs.

What are the biggest risks retailers face with ungoverned AI?

The three main risk categories are degraded UX (inaccurate or inconsistent outputs), customer trust erosion (data misuse, biased recommendations), and regulatory exposure (consumer data protection violations leading to fines and bans).

How can retailers build customer trust with AI?

Focus on three areas: transparency in AI-driven decisions, audit accountability through logged interactions, and human oversight at high-stakes moments. Together, these show customers that AI operates within defined, reviewable guardrails.

Does AI governance slow down retail AI applications?

It doesn't have to. Governance infrastructure built for runtime enforcement adds little latency; Trussed AI, for example, reports enforcing policies in under 20ms. Guardrails improve output quality without noticeably slowing the customer-facing experience.

What should retailers prioritize first in an AI governance program?

Start with the highest-risk customer-facing touchpoints: personalization engines and shopping assistants. Define output quality standards, implement monitoring, and put data governance controls in place before expanding your AI footprint.