Introduction

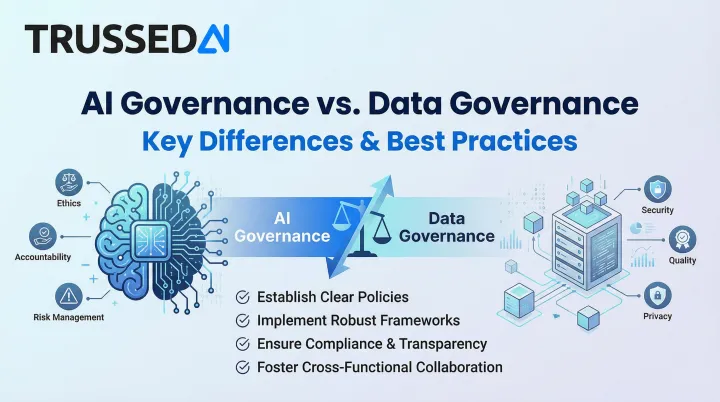

Most enterprises already have data governance in place: access controls, quality checks, and compliance frameworks refined over years. As AI deployments scale from experimental chatbots to production-grade agents making autonomous decisions, a critical blind spot emerges. Data governance doesn't cover how AI models behave, decide, or fail once deployed.

A healthcare insurer might have impeccable data governance: every patient record catalogued, HIPAA-compliant access controls, pristine data quality. But when their claims automation AI denies coverage based on a biased training dataset, data governance offers no answers. The data was perfect. The model's behavior was not.

These two disciplines are not the same, and conflating them creates real exposure. This article breaks down exactly what each one governs, where they overlap, and what a complete governance strategy looks like in practice, before regulators or a failed model decision force the issue.

TL;DR

- Data governance controls the quality, access, security, and lifecycle of data assets, the raw material feeding AI

- AI governance addresses how models and agents behave once deployed, covering outcomes, decisions, accountability, and ethical use

- Strong data governance is a prerequisite for AI governance, but it cannot replace it, because each framework addresses different risks

- Regulated industries need both frameworks running in tandem to meet GDPR, HIPAA, EU AI Act, and NIST AI RMF obligations

- Unified governance with clear ownership, automated controls, and runtime enforcement keeps AI deployment audit-ready at scale

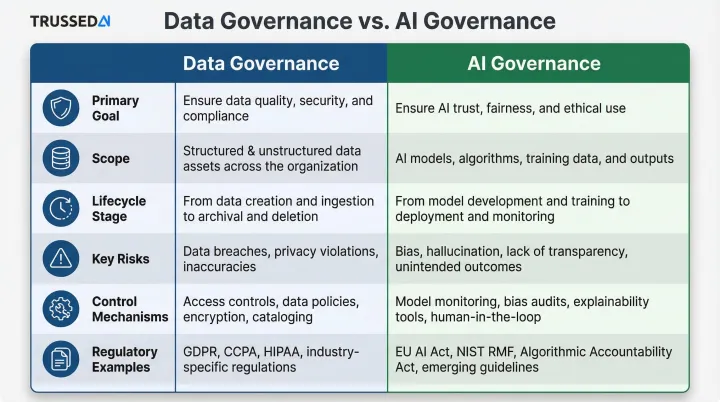

AI Governance vs. Data Governance: Quick Comparison

While both share goals around compliance and risk reduction, their scope, lifecycle focus, and risk types differ in meaningful ways. Data governance manages the foundation: ensuring trustworthy inputs. AI governance operates on what's built atop that foundation, focusing on safe, fair, and explainable outputs.

The oversight cadence also diverges. Data governance runs as continuous, steady-state monitoring with periodic audits. AI governance is more event-driven, triggered by model versioning, drift detection, deployment changes, or automated decisions that cross predefined risk thresholds.

| Dimension | Data Governance | AI Governance |
|---|---|---|
| Primary Goal | Accurate, secure, accessible, compliant data throughout its lifecycle | Ethical, safe, transparent, and accountable AI system operation throughout its lifecycle |
| Scope | Data assets: quality, lineage, access, privacy, storage, deletion | AI models and agents: behavior, decisions, fairness, explainability, performance |
| Lifecycle Stage | Data collection, storage, processing, archival, deletion | Model development, training, deployment, monitoring, retraining, retirement |
| Key Risks | Data breaches, unauthorized access, poor quality, regulatory violations (GDPR, CCPA) | Algorithmic bias, model drift, hallucinations, opacity, accountability gaps, discriminatory outcomes |
| Control Mechanisms | Data catalogs, quality tools, lineage tracking, access management, encryption | Model registries, bias testing, explainability tools, guardrails, runtime monitoring, policy enforcement |
| Regulatory Examples | GDPR, CCPA, HIPAA (mature privacy laws focused on data protection) | EU AI Act, NIST AI RMF, NYC Local Law 144 (emerging frameworks focused on algorithmic fairness and accountability) |

What Are Data Governance and AI Governance?

What is Data Governance?

Data governance is the system of policies, processes, roles, and standards ensuring organizational data is accurate, secure, private, accessible, and usable throughout its lifecycle, from collection to deletion. Its primary job: establish a reliable, trustworthy "source of truth" that the business and AI systems depend on.

Core pillars include:

- Data quality and consistency - Validation rules, deduplication, standardization across systems

- Access control - Who can use which data, under what conditions, with what permissions

- Data lineage - Tracking data origins, transformations, and movement across systems

- Compliance - Meeting GDPR, CCPA, HIPAA privacy regulations through documented controls

Data governance is a relatively mature discipline. Most enterprises have some version in place, even if implementation varies widely. The challenge isn't whether to do it; it's doing it well enough to support AI.

What is AI Governance?

AI governance is the system of rules, policies, oversight processes, and technical controls guiding how AI systems are developed, deployed, monitored, and retired. The goal: ensure they operate ethically, safely, transparently, and in line with organizational values and regulatory requirements. It covers the entire model lifecycle, not just the data feeding it.

Core pillars include:

- Algorithmic fairness and bias monitoring - Testing for discriminatory patterns across demographic groups

- Model explainability (XAI) - Making AI decisions interpretable and auditable

- Accountability - Assigning ownership for automated decisions and their consequences

- Performance drift monitoring - Detecting when model accuracy degrades over time

- Compliance - Meeting EU AI Act, NIST AI RMF, and emerging AI-specific regulations
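To make the fairness pillar concrete, here is a minimal Python sketch of one common check: comparing selection rates across demographic groups and flagging any group whose rate falls well below the best-served group. The function names, the input shape, and the 0.8 cutoff (the familiar "four-fifths" rule of thumb) are illustrative assumptions, not requirements drawn from any specific regulation discussed here.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approvals = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        approvals[group] += int(approved)
    return {group: approvals[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Each group's rate divided by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        # Nobody was approved, so no disparity can be measured this way.
        return {group: 1.0 for group in rates}
    return {group: rate / best for group, rate in rates.items()}


def flagged_groups(ratios, threshold=0.8):
    """Groups whose impact ratio falls below the assumed threshold."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)
```

With 8 of 10 approvals for one group and 4 of 10 for another, the second group's impact ratio is 0.5 and it would be flagged for review. A real program would add statistical significance checks and intersectional groupings; this only shows the shape of the test.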

AI governance is an emerging, rapidly evolving discipline. Many enterprises are still building it out, often discovering gaps only when models fail in production or regulators ask uncomfortable questions.

One practical implication: AI governance can't rely on after-the-fact documentation alone. Platforms like Trussed AI address this by enforcing policies at the model and agent level in real time, generating audit evidence as interactions happen rather than requiring manual review weeks later.

Key Differences Between AI Governance and Data Governance

The fundamental scope difference: data governance manages the input (the data asset itself), while AI governance manages the behavior and output (what the model does with that data). One framework controls what goes in; the other controls what comes out, and what harm it might cause.

Risk Profiles

Data governance risks are tangible: data breaches, silos, inaccuracies, unauthorized access. AI governance risks are dynamic: algorithmic bias, model drift, hallucinations, "black box" opacity, and accountability gaps when automated decisions cause harm.

Consider a credit scoring model trained on historically biased data. It passes every data governance check: data is accurate, properly stored, access-controlled, GDPR-compliant. Yet it produces discriminatory outcomes, denying loans to qualified applicants from underrepresented groups. Data governance verified the ingredients. AI governance would have caught the poisoned recipe.

Regulatory Landscapes

| Domain | Governing Frameworks | Primary Focus |
|---|---|---|
| Data Governance | GDPR, CCPA, HIPAA | Consent, access, deletion, breach notification |
| AI Governance | EU AI Act, NYC Local Law 144, NIST AI RMF, Colorado SB21-169 | Algorithmic fairness, explainability, bias audits, risk management |

Data governance operates under mature, well-defined privacy laws with established compliance playbooks. AI governance faces a newer, more fluid environment: each regulation above targets a different slice of AI risk, from hiring bias to insurance underwriting to general algorithmic accountability.

The EU AI Act explicitly preserves GDPR's authority: the regulation states that EU data protection law "applies to personal data processed in connection with the rights and obligations laid down in this Regulation." Organizations must comply with both simultaneously: existing data privacy laws and emerging AI-specific frameworks.

Stakeholders and Ownership

Data governance is led by a defined core team. AI governance requires a broader coalition:

- Data governance: Data stewards, Chief Data Officers, compliance teams

- AI governance: ML engineers, legal counsel, ethics boards, risk officers, and business unit leaders, all with shared accountability

The key ownership question shifts too. Data governance asks: who owns this data asset? AI governance asks: who's accountable when this specific model denies coverage incorrectly, or screens out a qualified candidate? Ownership follows the decision, not just the data.

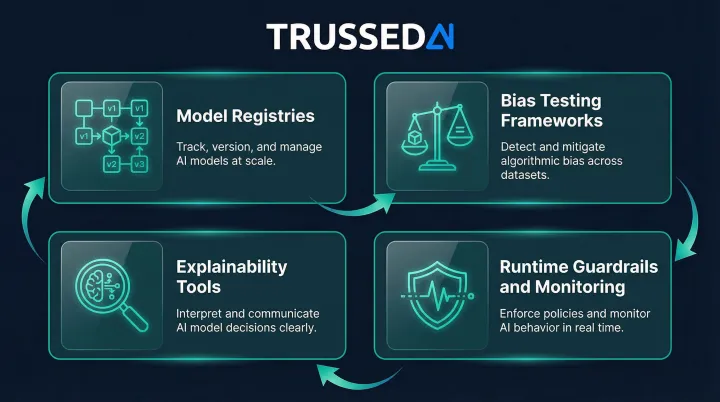

Implementation Maturity and Tooling

Data governance draws on an established toolchain: data catalogs, quality platforms, lineage tracking, access management. AI governance requires a newer set of capabilities:

- Model registries to track deployed models and versions

- Bias testing frameworks to detect discriminatory outputs before and after deployment

- Explainability tools to surface how models reach specific decisions

- Runtime guardrails and monitoring that enforce policies continuously, not just during periodic audits

That last point is the sharpest distinction in practice. Data governance tooling largely operates at rest: cataloging, classifying, auditing. AI governance must operate at runtime, where model behavior can drift, degrade, or produce harmful outputs between audit cycles.
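As an illustration of what a runtime drift check can look like, the sketch below computes a population stability index (PSI) between a baseline sample of model scores and a recent sample. PSI is one widely used drift statistic, chosen here only as an example; the equal-width binning, the `eps` floor, and any alert threshold you apply to the result are assumptions to tune per model.

```python
import math


def psi(baseline, current, bins=10, eps=1e-6):
    """Population stability index between two samples of numeric scores."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins
    if width == 0:
        width = 1.0  # all baseline values identical; avoid division by zero

    def proportions(values):
        counts = [0] * bins
        for value in values:
            idx = int((value - lo) / width)
            # Clamp values outside the baseline range into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(count / len(values), eps) for count in counts]

    base_p = proportions(baseline)
    cur_p = proportions(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base_p, cur_p))
```

A common rule of thumb treats values above roughly 0.25 as a significant shift worth a governance review, but that cutoff is a convention rather than a standard, and it should be validated against each model's history.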

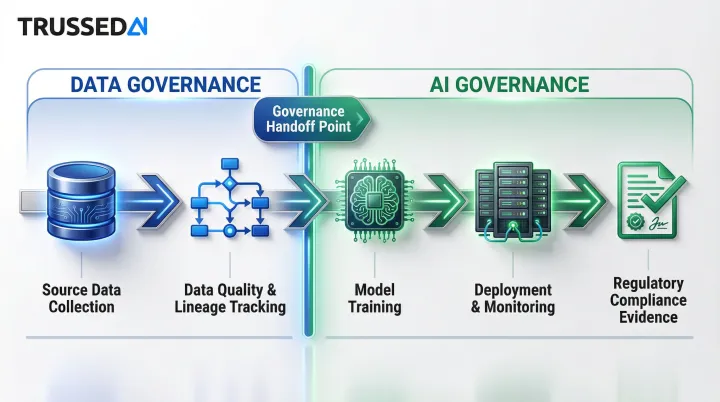

How AI Governance and Data Governance Work Together

Poor data governance directly sabotages AI governance. If data feeding a model is incomplete, biased, or ungoverned, no amount of AI governance policy fixes downstream outputs.

High-stakes examples:

- Hiring algorithms trained on historical data reflecting past discrimination perpetuate bias regardless of fairness policies

- Healthcare diagnostics trained on unrepresentative datasets (predominantly male, predominantly white) misdiagnose underrepresented populations

- Credit models with incomplete records for underserved communities systematically deny access to financial services

Data lineage is where the two frameworks become inseparable. AI governance depends on end-to-end traceability: knowing exactly which datasets (and which versions) trained a specific model, and how changes to source data propagate downstream. Strong data governance creates this traceability. Without it, AI governance teams cannot explain model behavior, remediate drift, or demonstrate regulatory compliance.
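The traceability described above can be pictured as a small registry that links each model to the exact dataset versions it was trained on. The following Python sketch is a hypothetical, in-memory illustration; `DatasetVersion`, `LineageRegistry`, and the model identifiers are invented names, and a production system would back this with a data catalog or model registry.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class DatasetVersion:
    """A specific, immutable version of a governed dataset."""
    name: str
    version: str


@dataclass
class LineageRegistry:
    """Maps model IDs to the dataset versions used to train them."""
    _training: dict = field(default_factory=dict)

    def record_training(self, model_id, datasets):
        self._training[model_id] = frozenset(datasets)

    def datasets_for(self, model_id):
        """Which dataset versions trained this model?"""
        return self._training.get(model_id, frozenset())

    def models_affected_by(self, dataset):
        """Which models need review if this dataset version is found faulty?"""
        return sorted(
            model_id
            for model_id, datasets in self._training.items()
            if dataset in datasets
        )
```

The second query is the one that matters during an incident: when a source dataset turns out to be biased or corrupted, `models_affected_by` answers which deployed models inherit the problem.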

The EU AI Act explicitly requires that deployers of high-risk AI systems use transparency information provided by developers to fulfill GDPR Data Protection Impact Assessment (DPIA) obligations. You cannot demonstrate algorithmic transparency without data privacy compliance. The two frameworks are legally inextricable.

That legal entanglement has practical consequences for compliance teams. The EU AI Act introduces a narrow exception allowing processing of sensitive personal data strictly for bias detection and correction, bridging the historical tension between data minimization and algorithmic fairness. Organizations must coordinate both governance frameworks to use this exception safely.

One emerging risk deserves specific attention: synthetic data contamination.

As LLMs and generative agents create new records, ungoverned synthetic data can corrupt primary datasets and cause model collapse, where future models train on AI outputs rather than ground truth. At scale, AI-generated content quietly replaces the real-world data foundation that accurate models depend on. Governing both data and AI outputs together is the only way to prevent it.
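One way to keep synthetic records from silently entering training sets is to tag every record with its origin at creation time and filter on that tag before training. The sketch below assumes a hypothetical `origin` field with the values "observed" and "synthetic"; anything not explicitly marked as observed is quarantined rather than trusted.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    record_id: str
    origin: str  # assumed labels: "observed" or "synthetic"


def split_by_origin(records):
    """Separate trainable records from those needing review."""
    observed = [r for r in records if r.origin == "observed"]
    # Unknown or missing provenance is treated like synthetic data.
    quarantined = [r for r in records if r.origin != "observed"]
    return observed, quarantined


def synthetic_share(records):
    """Fraction of records explicitly labeled as AI-generated."""
    if not records:
        return 0.0
    return sum(r.origin == "synthetic" for r in records) / len(records)
```

Tracking `synthetic_share` over time gives data stewards and AI teams a shared number to watch, which is the kind of joint control the paragraph above argues for.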

Best Practices for Building a Unified Governance Framework

Start with data foundations before layering AI-specific controls. Catalog existing data assets with business context, establish data quality thresholds, implement access controls for sensitive data. Enterprises rushing to implement AI governance without solid data foundations face cascading failures.

Define "AI-ready data": documented, lineaged, quality-checked, and access-controlled. Skip this step, and even well-written AI governance policies break down at the first production incident.
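The four criteria in that definition translate naturally into an automated gate. The Python sketch below is a hypothetical example: the `DatasetMetadata` fields and the 0.95 quality threshold are assumptions standing in for whatever your catalog actually records.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class DatasetMetadata:
    name: str
    description: str = ""                   # documented?
    upstream_sources: Tuple[str, ...] = ()  # lineaged?
    quality_score: Optional[float] = None   # quality-checked?
    access_policy: str = ""                 # access-controlled?


def readiness_gaps(meta, min_quality=0.95):
    """Return the reasons a dataset is not yet AI-ready (empty list if ready)."""
    gaps = []
    if not meta.description.strip():
        gaps.append("undocumented")
    if not meta.upstream_sources:
        gaps.append("no lineage")
    if meta.quality_score is None:
        gaps.append("quality not checked")
    elif meta.quality_score < min_quality:
        gaps.append("quality below threshold")
    if not meta.access_policy:
        gaps.append("no access policy")
    return gaps
```

A training pipeline could refuse to start whenever `readiness_gaps` returns a non-empty list, turning the definition into an enforced precondition rather than a guideline.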

Define clear ownership and accountability at both levels. Without named owners, well-written policies go unenforced. Assign roles explicitly:

- Data stewards responsible for data asset quality and access

- AI model owners covering training data selection, performance monitoring, and compliance

- A cross-functional governance committee with legal, compliance, IT, data science, and business representation

When a model produces a discriminatory outcome, you need an answer to "who's responsible?" before deployment, not during the regulatory investigation.

Runtime enforcement is what separates compliance theater from operational control. A governance policy that lives in a document does nothing at the moment a model makes a decision. Enforcement has to happen where execution happens: before every tool call, data access, and workflow trigger.

Trussed AI operates as a drop-in control layer that enforces governance policies in real time across AI apps, agents, and developer tools. Governance evidence is generated as a byproduct of every governed interaction, not assembled retroactively when regulators ask questions.
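To show the general pattern of enforcing a policy before a tool call runs, here is a generic Python sketch using a decorator. It is not the API of Trussed AI or any other product; `enforce`, `claims_policy`, `deny_claim`, and the human-review rule are all hypothetical names and rules chosen for illustration.

```python
from functools import wraps


class PolicyViolation(Exception):
    """Raised when a governed call is blocked by policy."""


def enforce(policy, audit_log):
    """Wrap a tool so the policy is checked, and logged, before it executes."""
    def decorator(tool):
        @wraps(tool)
        def guarded(*args, **kwargs):
            allowed, reason = policy(tool.__name__, kwargs)
            # Evidence is recorded for every attempt, allowed or not.
            audit_log.append(
                {"tool": tool.__name__, "allowed": allowed, "reason": reason}
            )
            if not allowed:
                raise PolicyViolation(reason)
            return tool(*args, **kwargs)
        return guarded
    return decorator


def claims_policy(tool_name, params):
    """Hypothetical rule: claim denials require prior human review."""
    if tool_name == "deny_claim" and not params.get("human_reviewed", False):
        return False, "claim denials require human review"
    return True, "ok"


audit_log = []


@enforce(claims_policy, audit_log)
def deny_claim(*, claim_id, human_reviewed=False):
    return f"claim {claim_id} denied"
```

Calling `deny_claim(claim_id="C-1")` raises `PolicyViolation` before the tool body runs, while `deny_claim(claim_id="C-2", human_reviewed=True)` succeeds; both attempts land in `audit_log`. The design point is that the check and the evidence live in the execution path, not in a separate review step.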

Automate compliance monitoring and audit trail generation. Manual governance cannot scale when AI systems number in the dozens or hundreds. Continuous monitoring (automated bias detection, drift alerts, policy violation flags) and auto-generated audit trails are table stakes at that scale.

Trussed AI maintains a compliance violation rate below 1% and generates complete audit trails automatically, demonstrating what modern governance infrastructure enables: real-time assurance rather than point-in-time audits conducted months after decisions are made.
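For readers wondering what an automatically generated audit trail might look like underneath, one common technique is a hash-chained log, where each entry commits to the one before it so later tampering is detectable. The sketch below illustrates that general technique only; it is an assumption for illustration, not a description of how any particular platform stores its evidence.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash preceding the first entry


def _digest(prev_hash, event):
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(trail, event):
    """Append an event, chaining it to the previous entry's hash."""
    prev_hash = trail[-1]["hash"] if trail else GENESIS_HASH
    trail.append(
        {"event": event, "prev_hash": prev_hash, "hash": _digest(prev_hash, event)}
    )


def verify_trail(trail):
    """Return True only if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in trail:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Editing any recorded event after the fact changes its digest, so `verify_trail` fails from that entry onward. That property is what lets an auditor trust evidence produced months earlier.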

Conduct regular cross-functional policy reviews and define measurable KPIs. Governance is a living program, not one-time setup. Schedule quarterly reviews including legal, compliance, and technical teams.

Define KPIs beyond "we have a policy":

- Percentage of models audited for fairness

- Time-to-remediate identified risks

- Compliance violation rates

- Governance coverage across AI stack

- Audit evidence completeness

Track these metrics continuously. Governance maturity is measured by outcomes, not documentation volume.
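Several of the KPIs listed above reduce to simple ratios once the underlying counts are collected. The following Python sketch shows one possible calculation; the `ModelRecord` fields and the input parameters are hypothetical, and the hard part in practice is gathering trustworthy counts, not the arithmetic.

```python
from dataclasses import dataclass


@dataclass
class ModelRecord:
    model_id: str
    fairness_audited: bool
    governed_at_runtime: bool


def governance_kpis(models, remediation_days, violations, governed_interactions):
    """Compute a few governance KPIs from raw counts."""
    total = len(models)

    def pct(count, denominator):
        return 100 * count / denominator if denominator else 0.0

    return {
        "pct_models_audited": pct(sum(m.fairness_audited for m in models), total),
        "pct_runtime_coverage": pct(sum(m.governed_at_runtime for m in models), total),
        "mean_days_to_remediate": (
            sum(remediation_days) / len(remediation_days) if remediation_days else 0.0
        ),
        "violation_rate_pct": pct(violations, governed_interactions),
    }
```

For example, with three of four models audited, remediation times of 2, 4, and 6 days, and 5 violations across 1,000 governed interactions, the function reports 75% audited, a 4-day mean time-to-remediate, and a 0.5% violation rate.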

Conclusion

Data governance and AI governance are not alternatives; they are sequential and interdependent layers of a complete enterprise risk strategy. Start with data foundations, then extend controls to cover model behavior, automated decisions, and runtime enforcement. Building them in the right sequence, and ensuring they operate as a unified system, is the actual governance challenge.

For enterprises in regulated industries (insurance, healthcare, financial services), the cost of governance gaps is not just regulatory fines. It's broken AI workflows, customer-facing failures that damage retention, and failed deployments. Organizations treating governance as infrastructure (not overhead) deploy AI faster, with fewer violations, and with the audit readiness regulators increasingly require.

Those deployment advantages also have a regulatory dimension. The EU AI Act and GDPR now explicitly intertwine: achieving algorithmic transparency requires data privacy compliance, and regulators expect organizations to demonstrate both together. For enterprises in scope, unified governance is no longer optional; it is the compliance baseline.

Frequently Asked Questions

How is AI governance different from data governance?

Data governance manages data assets, ensuring quality, access, security, and compliance throughout the data lifecycle. AI governance manages how AI models and systems behave once deployed, ensuring fairness, transparency, explainability, and accountability in automated decisions. Data governance governs the input; AI governance governs the behavior and outcome.

Can strong data governance replace the need for AI governance?

Data governance is a necessary foundation, but it doesn't address AI-specific risks like algorithmic bias, model drift, hallucinations, or accountability gaps from autonomous decision-making. Organizations need both: one ensures the raw material is trustworthy; the other ensures what's built from it is safe and explainable.

Which should organizations implement first: data governance or AI governance?

Build data governance foundations first, then layer AI-specific controls on top. AI models trained on ungoverned data produce unreliable, potentially biased outputs regardless of how strong your AI governance policies are. If AI is already in production, prioritize developing both in parallel.

What regulations apply specifically to AI governance versus data governance?

Data governance falls under mature privacy laws like GDPR, CCPA, and HIPAA, which focus on data protection and individual rights. AI governance is covered by newer frameworks, including the EU AI Act, NIST AI RMF, NYC Local Law 144, and Colorado SB21-169, which focus on algorithmic fairness, explainability, and accountability for automated decisions. Most enterprises must comply with both simultaneously.

How does AI governance handle model bias and hallucinations?

AI governance addresses model bias through mandatory bias testing before deployment, continuous monitoring of outputs across demographic groups, and bias detection algorithms flagging unfair patterns. Hallucinations are addressed through output monitoring guardrails, human-in-the-loop review for high-stakes decisions, and performance thresholds triggering alerts when outputs fall outside acceptable ranges.

What does a unified data and AI governance framework look like in practice?

A unified framework typically includes:

- A shared metadata and policy layer covering both data assets and AI models

- Cross-functional governance ownership across data and AI/ML teams

- Automated compliance monitoring across data and AI lifecycles

- Runtime enforcement that applies policies continuously, not just during periodic audits

The goal is a single control plane where data stewards and AI teams operate from shared context and accountability structures.