Introduction

The EU AI Act is now enforceable law. Banned AI practices have been prohibited since February 2, 2025, and the bulk of the remaining obligations, including high-risk requirements, applies from August 2, 2026. The stakes are severe: fines reach up to €35 million or 7% of global annual turnover, whichever is higher. The regulation applies to any organization whose AI systems affect EU residents, regardless of where that organization is headquartered.

Most enterprises already understand what the regulation says. The harder problem is identifying which AI systems fall under it, mapping specific obligations to each system, and operationalizing compliance before the deadlines arrive. Many enterprises remain unprepared, and a significant portion lack even a basic AI system inventory as those deadlines approach.

This guide covers the risk categories that determine your obligations, who must comply, the critical differences between provider and deployer responsibilities, the enforcement timeline, and a practical action checklist to achieve compliance.

TL;DR

- Applies to any organization whose AI affects EU residents, regardless of where it is headquartered

- AI systems fall into four risk tiers plus a General-Purpose AI category, each with distinct obligations

- Your role as provider or deployer determines your specific obligations

- Key dates: February 2025 (prohibited AI banned), August 2026 (full high-risk compliance required)

- Non-compliance penalties reach €35 million or 7% of global turnover

What Is the EU AI Act and Why Does It Matter?

The EU AI Act is the first binding comprehensive legal framework for artificial intelligence, formally adopted in May 2024 and in force since August 1, 2024. The regulation operates on a risk-based philosophy: the greater the potential harm an AI system poses, the stricter the obligations. Just as GDPR became the de facto global standard for data privacy, the EU AI Act is already shaping how governments worldwide approach AI regulation.

The Act's extraterritorial reach extends far beyond EU borders. Any company offering AI-enabled products or services to EU residents, or whose AI systems affect people within the EU, must comply, regardless of headquarters location. The penalty structure reflects this enforcement approach, with maximum fines tiered by type of violation:

- €35 million or 7% of global turnover for prohibited AI violations

- €15 million or 3% of global turnover for most high-risk AI obligations

- €7.5 million or 1.5% of global turnover for providing incorrect information
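The tiers above can be turned into a quick exposure estimate. The sketch below is illustrative only: the tier names and the `max_fine_eur` helper are hypothetical, and it assumes the "whichever is higher" rule applies to every tier. The figures are statutory ceilings, not predictions of what a regulator would actually impose.

```python
from decimal import Decimal

# Ceilings taken from the list above: (fixed cap in EUR, share of global annual turnover).
# The dictionary keys are hypothetical labels, not terms defined by the Act.
PENALTY_TIERS = {
    "prohibited_practice": (Decimal("35000000"), Decimal("0.07")),
    "high_risk_obligation": (Decimal("15000000"), Decimal("0.03")),
    "incorrect_information": (Decimal("7500000"), Decimal("0.015")),
}


def max_fine_eur(tier: str, global_turnover_eur: Decimal) -> Decimal:
    """Return the fine ceiling for a tier, assuming the higher of the two limits applies."""
    fixed_cap, turnover_share = PENALTY_TIERS[tier]
    return max(fixed_cap, global_turnover_eur * turnover_share)
```

For example, a company with €2 billion in global turnover faces a ceiling of €140 million for a prohibited-practice violation, because 7% of turnover exceeds the €35 million fixed cap. At €100 million in turnover, the fixed cap of €35 million is the larger figure.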

The EU's prescriptive approach contrasts sharply with more flexible frameworks elsewhere. The United States relies on voluntary guidelines like the NIST AI RMF and sector-specific agency regulations. The UK employs a principles-based approach through existing regulators. The EU AI Act, by contrast, creates binding legal requirements with mandatory conformity assessments.

For multinationals, this means harmonizing AI governance across jurisdictions rather than managing each region in isolation. In practice, the EU's strict requirements tend to set the compliance floor for global operations.

Who Needs to Comply with the EU AI Act?

Compliance obligations apply to five distinct roles: providers (those who develop an AI system or place it on the market), deployers (organizations using AI in professional activities), importers, distributors, and authorized representatives.

Most enterprises are primarily deployers. They use AI built by third parties, but this role still carries mandatory obligations. Deployers can become providers if they make substantial modifications to a third-party system or change its intended purpose (Article 25(1)).
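The role shift described above can be expressed as a simple decision rule. This is a minimal sketch, not legal logic: the `SystemUsage` fields and the `effective_role` function are hypothetical names, and the rule covers only the two triggers mentioned in this section.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemUsage:
    """Hypothetical summary of how an organization relates to one AI system."""
    built_in_house: bool
    substantially_modified: bool = False
    intended_purpose_changed: bool = False


def effective_role(usage: SystemUsage) -> str:
    """Return 'provider' or 'deployer' under the simplified rule described above."""
    if usage.built_in_house:
        return "provider"
    # A third-party system that is substantially modified or repurposed
    # moves the organization into the provider role.
    if usage.substantially_modified or usage.intended_purpose_changed:
        return "provider"
    return "deployer"
```

A purchased system used as delivered yields `"deployer"`, while the same system repurposed for a new use yields `"provider"`. A real assessment would need legal review of what counts as a substantial modification.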

The sectors most affected and likely to encounter high-risk AI obligations include:

- Healthcare and medical devices

- Financial services (credit scoring, insurance pricing)

- HR and recruitment

- Education and vocational training

- Critical infrastructure

- Law enforcement and judicial processes

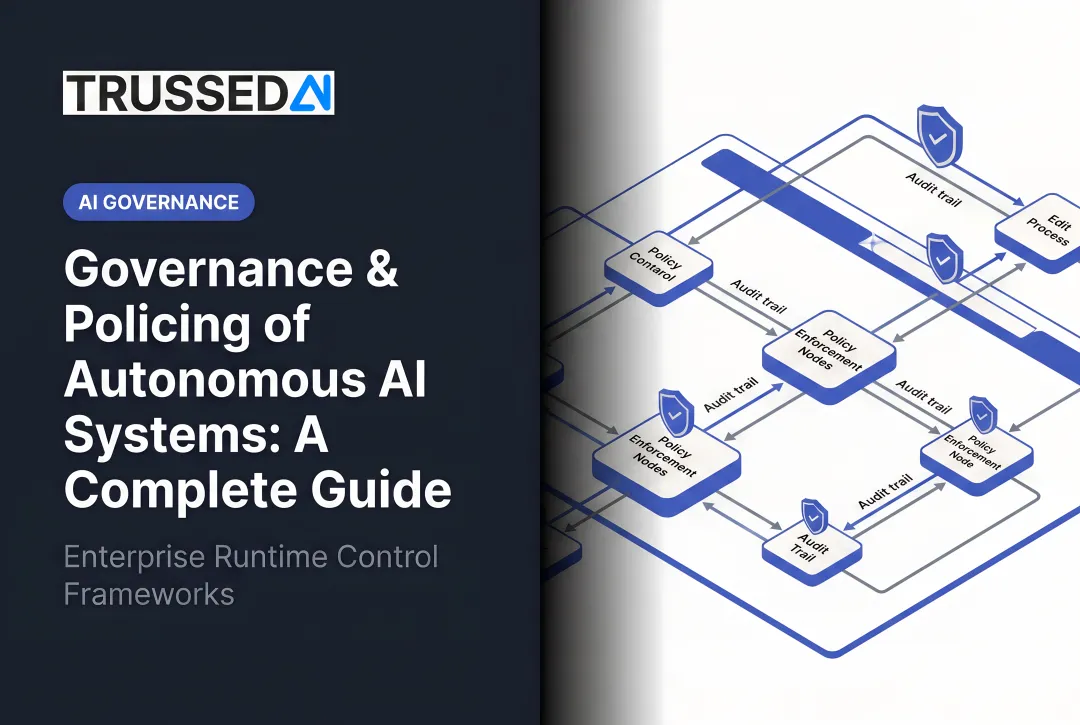

These sectors face the most stringent requirements, but compliance scope extends further. Even organizations using AI in supporting roles, such as internal analytics and marketing automation, must conduct assessments to confirm risk classification. For high-risk AI systems, providers must maintain technical documentation and logging capabilities (Articles 11 and 12), and deployers must keep the logs under their control and follow the other obligations in Article 26. Classification is therefore the mandatory first step, and building an AI system inventory is the practical starting point.
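An inventory of this kind can start as a very small data structure. The sketch below is one possible shape, assuming hypothetical field names and example systems. It records each system's role and risk tier and lists the systems that still need a classification decision.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


@dataclass
class AISystemRecord:
    """One inventory entry; all field names are illustrative assumptions."""
    name: str
    business_use: str
    role: str  # "provider" or "deployer"
    affects_eu_residents: bool
    risk_tier: Optional[RiskTier] = None  # None until an assessment is done


def pending_classification(inventory: List[AISystemRecord]) -> List[str]:
    """Names of in-scope systems that have not yet been assigned a risk tier."""
    return [
        record.name
        for record in inventory
        if record.affects_eu_residents and record.risk_tier is None
    ]


# Hypothetical example entries
inventory = [
    AISystemRecord("resume-screener", "HR and recruitment", "deployer", True, RiskTier.HIGH),
    AISystemRecord("campaign-optimizer", "marketing automation", "deployer", True),
    AISystemRecord("us-only-forecaster", "internal analytics", "provider", False),
]

print(pending_classification(inventory))  # ['campaign-optimizer']
```

Keeping `risk_tier` empty until an assessment is complete makes unclassified systems easy to surface, which matches the point that classification comes before any other compliance work.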

Non-EU companies face specific requirements: any organization serving EU customers through AI-driven products or services must comply. Failure risks both regulatory penalties and loss of EU market access. Non-EU providers of high-risk systems must also designate an authorized representative within the EU to act on their behalf for compliance purposes.

EU AI Act Risk Categories Explained

The Act's risk-based framework scales obligations with potential harm, from no requirements for minimal-risk systems to outright bans for unacceptable-risk practices. Organizations cannot self-select their category; classification is determined by the Act's legal criteria in Articles 5, 6, and Annex III.

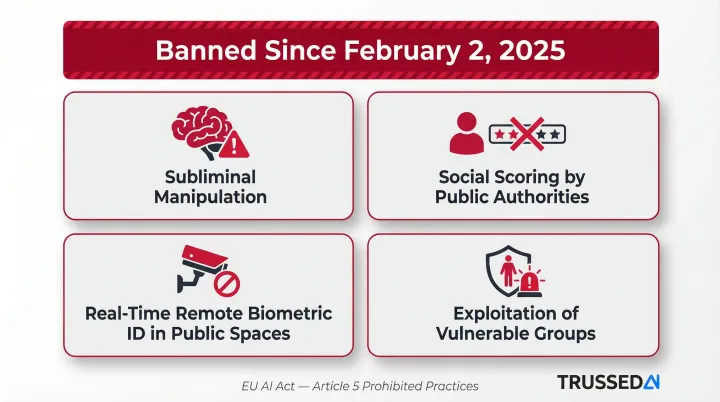

Prohibited AI Practices

These AI practices have been banned since February 2, 2025 under Article 5:

- Subliminal manipulation causing psychological or physical harm

- Social scoring of individuals that leads to detrimental or unjustified treatment, whether by public or private actors

- Real-time remote biometric identification in publicly accessible spaces (with narrow law enforcement exceptions)

- Exploitation of vulnerabilities of children, elderly, or people with disabilities