Introduction

Enterprises face a critical tension: AI adoption is accelerating across applications, agents, and developer tools, but governance frameworks have not kept pace. This gap creates compounding exposure in regulatory risk, data security, and operational fragility. 63% of breached organizations lacked AI governance policies to manage AI or prevent the proliferation of shadow AI, while 97% of organizations that reported an AI-related security incident lacked proper access controls.

The consequences are measurable. Shadow AI incidents cost organizations an average of $4.63 million, $670,000 more than standard breaches. Meanwhile, 27.4% of corporate data entered into AI tools is sensitive, up from 10.7% the previous year. That exposure grows every week governance falls further behind deployment.

We'll cover what enterprise AI governance is, the six foundational pillars, a practical implementation roadmap, how to enforce governance at runtime, and considerations for regulated industries. By the end, you'll have a clear framework to govern AI responsibly while enabling safe innovation.

What Is Enterprise AI Governance?

Most organizations define AI governance too narrowly. It's not just about end-user applications; it covers every layer where AI operates: models, agents, developer tools, and automated workflows.

At its broadest, enterprise AI governance is the framework of policies, processes, organizational structures, and technical controls that ensure AI systems are deployed responsibly, securely, and in compliance with regulatory obligations.

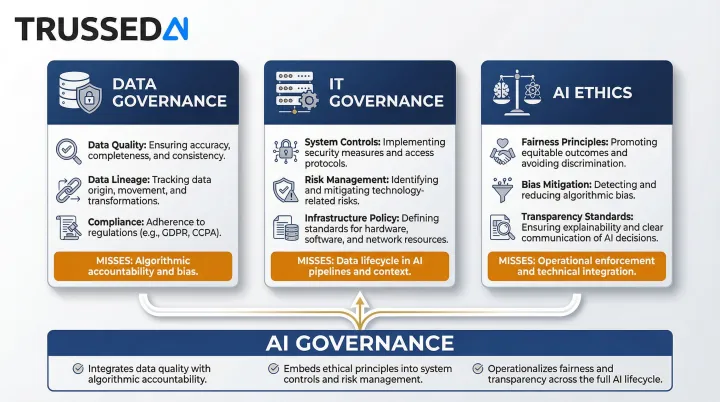

How AI Governance Differs from Related Disciplines

AI governance is distinct from adjacent disciplines. The table below shows where each one stops and where AI governance picks up:

| Discipline | What It Covers | What It Misses |
|---|---|---|
| Data governance | Data lifecycle: quality, lineage, access, retention | Model-specific risks: hallucinations, prediction bias, agent autonomy |
| IT governance | Change management, access controls, infrastructure security | AI-specific risks: model drift, adversarial attacks, autonomous agent behavior |
| AI ethics | Moral principles: fairness, transparency, accountability | Operational enforcement through policies, controls, and audits |

AI governance is where ethics becomes enforceable. It takes the principles established by ethics frameworks and implements them through technical controls and audit mechanisms.

Five Core Principles of Effective AI Governance

Any effective AI governance program rests on five foundational principles:

- Accountability and ownership - Named individuals own each AI system, with defined escalation paths when something goes wrong

- Transparency and explainability - Stakeholders understand how AI systems make decisions and when AI is being used

- Risk-based proportionality - High-risk systems get the most scrutiny; low-risk ones aren't over-burdened with controls

- Compliance by design - Regulatory requirements are embedded into systems from the start, not retrofitted

- Continuous monitoring and improvement - Policies and controls adapt as AI capabilities, regulatory requirements, and risk profiles shift

Why Enterprises Can't Ignore AI Governance in 2025

The Emerging Risk Landscape

Ungoverned AI creates measurable exposure. Data leaks into public models because 73.8% of workplace ChatGPT usage happens through non-corporate accounts, which can incorporate shared data into those models. Compliance violations under GDPR, HIPAA, and CCPA multiply as AI systems process regulated data without proper controls.

The data leakage problem extends to IP. 82.8% of legal documents and 50.8% of source code put into AI tools are sent to non-corporate accounts. AI hallucinations in high-stakes decisions (credit approvals, medical diagnoses, legal advice) create liability when outputs aren't validated. Adversarial prompt injection attacks compound this, with 73% of audited production AI deployments showing exposure.

Regulatory Momentum Building Globally

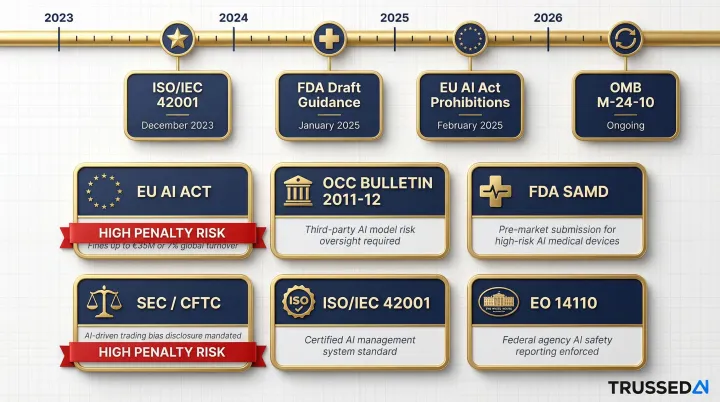

Multiple jurisdictions are now enforcing AI-specific requirements, not just proposing them:

- EU AI Act: Prohibitions took effect February 2025; fines reach €35 million or 7% of global annual turnover for non-compliance, with general provisions applying from August 2026

- OCC Bulletin 2011-12: Requires banks to apply rigorous model risk management standards directly to AI systems

- FDA: Released draft guidance on AI-enabled device software functions in January 2025

- SEC and CFTC: Actively applying existing oversight frameworks to AI use in financial services

- ISO/IEC 42001: Published December 2023 as the first international standard for AI management systems

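- Executive Order 14110 + OMB M-24-10: Directed federal agencies to designate Chief AI Officers and implement documented AI risk management practices. The order was rescinded in January 2025, and OMB M-25-21 has since replaced M-24-10 while keeping the Chief AI Officer requirement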

The Business Case Beyond Risk Avoidance

Governance isn't just about avoiding fines. Organizations that treat it as infrastructure see measurable returns:

- Higher ROI: Regular AI system assessments make organizations 3x more likely to achieve high GenAI business value; those embedding responsible AI governance report up to 40% higher ROI through reduced rework and audit costs

- Procurement advantage: 94% of business buyers use AI during the buying process, but increasingly validate AI outputs against trusted external sources; governance maturity is becoming a vendor differentiator

- Longer production lifecycles: 45% of high-AI-maturity organizations keep AI projects running for three or more years, versus only 20% in low-maturity organizations; ungoverned AI fails at deployment or forces costly rollbacks

The 6 Pillars of a Strong AI Governance Framework

These six pillars form an interconnected system addressing the full spectrum of AI risk, from policy-setting to runtime enforcement. Implement them as a cohesive program rather than isolated initiatives.

Policy and Acceptable Use

Policies form the governance foundation. An acceptable use policy defines which AI tools and use cases are permitted, establishing clear boundaries for employees and developers. Data handling standards align to classification levels and regulatory requirements, specifying how sensitive data can be processed by AI systems.

A third-party AI vendor policy covers contractual protections and audit rights, ensuring external AI providers meet your governance standards. Incident response procedures for AI-related events establish escalation paths, investigation protocols, and remediation steps.

Policies must be living documents reviewed at least quarterly. Include specific examples and defined consequences for violations; vague policies invite inconsistent enforcement.

Risk Assessment and Classification

Maintain a comprehensive AI use case inventory, including shadow AI: the unapproved tools employees are actively using. The average enterprise unknowingly hosts 1,200 unofficial applications, creating massive potential attack surfaces.

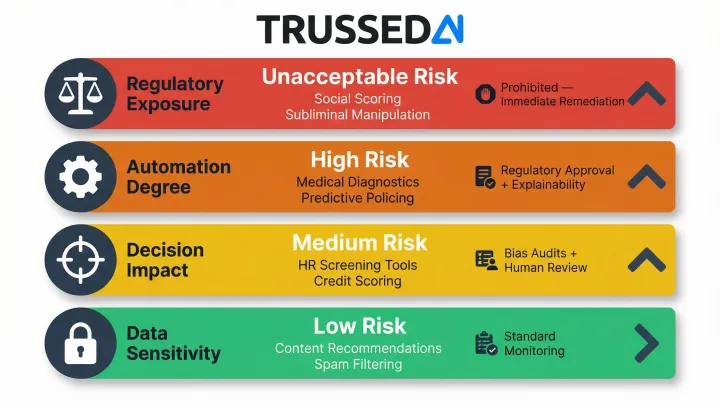

Classify each use case as Low, Medium, High, or Unacceptable Risk based on:

- Data sensitivity (public, internal, confidential, regulated)

- Decision impact (informational, operational, strategic, life-affecting)

- Degree of automation (human-in-the-loop vs. fully autonomous)

- Regulatory exposure (subject to compliance obligations or not)

Apply proportionate controls at each tier. High-risk systems require independent validation, ongoing monitoring, and strict access controls. Low-risk systems may need only basic logging and periodic review. Every governance decision in the pillars below depends on this inventory being accurate and current.
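The four factors above can be turned into a repeatable scoring rule. The sketch below is one possible approach, not a standard: the numeric weights, the tier thresholds, and the `UseCase` and `classify` names are all assumptions to be tuned by your own risk function.

```python
from dataclasses import dataclass

# Ordinal scales for two of the classification factors listed above.
# The numeric weights and tier thresholds are illustrative assumptions.
DATA_SENSITIVITY = {"public": 0, "internal": 1, "confidential": 2, "regulated": 3}
DECISION_IMPACT = {"informational": 0, "operational": 1, "strategic": 2, "life-affecting": 3}

# Controls named in the text for the Low and High tiers; Medium is left
# for each program to define.
CONTROLS_BY_TIER = {
    "Low": ["basic logging", "periodic review"],
    "High": ["independent validation", "ongoing monitoring", "strict access controls"],
}


@dataclass(frozen=True)
class UseCase:
    name: str
    data_sensitivity: str
    decision_impact: str
    fully_autonomous: bool   # False means a human stays in the loop
    regulated: bool          # subject to compliance obligations
    prohibited: bool = False # a practice your policy bans outright


def classify(uc: UseCase) -> str:
    """Return Low, Medium, High, or Unacceptable for a use case."""
    if uc.prohibited:
        return "Unacceptable"
    score = (
        DATA_SENSITIVITY[uc.data_sensitivity]
        + DECISION_IMPACT[uc.decision_impact]
        + (2 if uc.fully_autonomous else 0)
        + (2 if uc.regulated else 0)
    )
    if score >= 6:
        return "High"
    if score >= 3:
        return "Medium"
    return "Low"
```

Under these assumed weights, an internal meeting summarizer with human review scores 1 and lands in Low, while a fully autonomous credit decision on regulated data scores 10 and lands in High.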

Regulatory and Compliance Alignment

Map applicable regulations to specific governance controls and audit evidence. Key frameworks most enterprises must address include:

- GDPR: Data processing transparency and individual rights

- CCPA: Automated decision-making disclosure obligations

- HIPAA: Security and privacy rules for protected health information

- EU AI Act: Risk-tiered obligations for AI system classification

- OCC Bulletin 2011-12: Model risk management for banking institutions

Compliance mapping must be maintained as regulations evolve. Designate an owner responsible for tracking regulatory developments, assessing impact on your AI systems, and updating controls accordingly. This proactive approach prevents compliance gaps that only surface during audits or incidents.
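A compliance map can be kept as plain data so that gaps are found by a script rather than during an audit. In this minimal sketch the control names and evidence entries are hypothetical placeholders, not a legal mapping of what each regulation requires.

```python
from typing import Dict, List

# Regulation -> controls claimed to address it (hypothetical control names).
CONTROL_MAP: Dict[str, List[str]] = {
    "GDPR": ["data_processing_register", "subject_rights_workflow"],
    "HIPAA": ["phi_access_controls", "audit_logging"],
    "EU AI Act": ["risk_tier_classification"],
}

# Control -> audit evidence currently on file (hypothetical entries).
EVIDENCE: Dict[str, List[str]] = {
    "data_processing_register": ["register export"],
    "audit_logging": ["log retention report"],
    "phi_access_controls": [],
}


def unevidenced_controls(
    control_map: Dict[str, List[str]], evidence: Dict[str, List[str]]
) -> Dict[str, List[str]]:
    """List, per regulation, the controls that have no audit evidence."""
    gaps: Dict[str, List[str]] = {}
    for regulation, controls in control_map.items():
        missing = [c for c in controls if not evidence.get(c)]
        if missing:
            gaps[regulation] = missing
    return gaps
```

Running `unevidenced_controls(CONTROL_MAP, EVIDENCE)` gives the designated owner a short list to chase each time a regulation or control changes.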

Technical Controls and Security

Layered technical controls must operate at runtime, not just at deployment. The core controls every enterprise AI environment needs:

- Role-based access management integrated with enterprise identity providers limits who can access AI systems and sensitive data

- Data loss prevention blocks sensitive data in prompts before it reaches models

- Prompt and output filtering enforces policies at the model interaction layer, stopping prohibited content and malicious instructions

- Tamper-proof audit logging captures every interaction with policy evaluation results, model versions, timestamps, and data lineage

- Adversarial testing for custom models surfaces vulnerabilities before they reach production

User behavior and threat patterns shift constantly. Controls configured once at setup will drift out of alignment; runtime enforcement is what keeps governance effective as your AI environment evolves.
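One common way to make audit logs tamper-evident is a hash chain, where each entry stores the hash of the entry before it. The sketch below assumes an in-memory list and hypothetical field names; a real deployment would also need write-once storage and protected keys, since a hash chain detects tampering but does not prevent it.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Stable SHA-256 over the entry body (keys sorted for determinism)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list, user: str, model_version: str, policy_decision: str) -> dict:
    """Append an entry that commits to the hash of the previous entry."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "model_version": model_version,
        "policy_decision": policy_decision,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Return False if any entry was altered, removed, or reordered."""
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev or entry["hash"] != _digest(body):
            return False
        prev = entry["hash"]
    return True
```

Editing any field of an earlier entry changes its digest, so `verify_chain` fails from that point forward.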

Ethics, Fairness, and Human Oversight

Ethical guardrails go beyond compliance. Four controls address the human dimension of AI risk:

- Bias testing for systems affecting hiring, lending, or clinical care identifies and mitigates discriminatory outcomes

- Human-in-the-loop requirements for high-stakes decisions ensure humans review and approve critical actions before execution

- Transparency standards define when and how to disclose AI use to affected parties

- Privacy-by-design principles including data minimization and purpose limitation keep AI systems from collecting more than they need

Continuous Monitoring and Accountability

Establish an ongoing governance loop. Automated monitoring detects policy violations, model drift, security anomalies, and performance degradation in real time. A formal AI Governance Committee with cross-functional representation (Security, Risk, Legal, Compliance, Technology) meets at least quarterly to review incidents, update policies, and approve high-risk use cases.

Assign model owners accountable for specific systems, responsible for performance, compliance, and incident response. Track KPIs over time including policy violation rate, incident frequency, mean time to remediate, and training completion rate. These metrics reveal governance effectiveness and highlight areas needing improvement.
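The KPIs named above reduce to simple ratios and averages. The following sketch assumes a hypothetical `Incident` record with open and resolve timestamps; how you source those records is left open.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass
class Incident:
    opened: datetime
    resolved: Optional[datetime] = None  # None while still open


def policy_violation_rate(violations: int, interactions: int) -> float:
    """Share of governed interactions that violated a policy."""
    return violations / interactions if interactions else 0.0


def mean_time_to_remediate_hours(incidents: List[Incident]) -> Optional[float]:
    """Average hours from open to resolve, ignoring unresolved incidents."""
    hours = [
        (i.resolved - i.opened).total_seconds() / 3600
        for i in incidents
        if i.resolved is not None
    ]
    return sum(hours) / len(hours) if hours else None


def training_completion_rate(completed: int, required: int) -> float:
    """Share of required staff who finished training."""
    return completed / required if required else 0.0
```

Tracking these per quarter, rather than as one-off snapshots, is what lets the committee see whether governance is improving.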

How to Build an Enterprise AI Governance Program

Step 1: Assess and Inventory

Before building structure, map all current AI usage across the organization. Workers from over 90% of surveyed companies reported regular use of personal AI tools for work tasks, despite only 40% of companies having official LLM subscriptions. This shadow AI represents ungoverned risk.

For each use case, document purpose, users, data accessed, and current controls. This inventory reveals governance gaps and sets priorities. Organizations that skip this step build policies that employees simply ignore because the policies don't reflect actual usage patterns.
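An inventory entry can be a small structured record holding the four documented fields. In this sketch the `InventoryEntry` type, the `approved` flag, and the baseline control names are assumptions for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Set

# Controls every AI tool is expected to have (assumed baseline).
BASELINE_CONTROLS = {"sso", "audit_logging"}


@dataclass
class InventoryEntry:
    tool: str
    purpose: str
    users: List[str]
    data_accessed: List[str]
    current_controls: Set[str] = field(default_factory=set)
    approved: bool = False  # False marks shadow AI found during discovery


def governance_gaps(entry: InventoryEntry) -> List[str]:
    """Describe what is missing for one inventoried use case."""
    gaps = []
    if not entry.approved:
        gaps.append("not approved (shadow AI)")
    for control in sorted(BASELINE_CONTROLS - entry.current_controls):
        gaps.append(f"missing control: {control}")
    return gaps
```

Sorting entries by the number of gaps gives a first-pass priority list for the steps that follow.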

Step 2: Establish Structure and Ownership

Form a cross-functional AI Governance Committee with representatives from business units, IT, security, legal, compliance, and risk. Assign a dedicated AI Governance Lead for day-to-day program management, policy development, and cross-functional coordination.

Define a RACI matrix across business, technical, compliance, and legal teams so accountability is explicit. For each decision type, clarify:

- Who develops and owns policies

- Who approves high-risk use cases

- Who must be consulted before deploying new AI systems

- Who gets notified of incidents

Without this clarity, decisions stall. Executive sponsorship is equally critical: without it, governance programs never move past the policy document stage because they lack the authority to enforce controls or allocate resources.
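A RACI matrix is easy to check mechanically. The sketch below assumes the common convention of exactly one Accountable party and at least one Responsible party per decision; the decision and role names are hypothetical examples.

```python
from typing import Dict, List

# Decision -> {role: RACI code}. Names are illustrative only.
RACI: Dict[str, Dict[str, str]] = {
    "publish_policy": {
        "AI Governance Lead": "R",
        "Governance Committee": "A",
        "Legal": "C",
        "Business Units": "I",
    },
    "approve_high_risk_use_case": {
        "Model Owner": "R",
        "Governance Committee": "A",
        "Security": "C",
        "Compliance": "C",
    },
}


def validate_raci(matrix: Dict[str, Dict[str, str]]) -> List[str]:
    """Return a list of problems; an empty list means the matrix is usable."""
    problems = []
    for decision, roles in matrix.items():
        codes = list(roles.values())
        if codes.count("A") != 1:
            problems.append(f"{decision}: needs exactly one Accountable role")
        if "R" not in codes:
            problems.append(f"{decision}: needs at least one Responsible role")
        for role, code in roles.items():
            if code not in {"R", "A", "C", "I"}:
                problems.append(f"{decision}: invalid code {code!r} for {role}")
    return problems
```

A decision with two Accountable roles or none is exactly the kind of ambiguity that causes approvals to stall.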

Step 3: Develop and Publish Policies

Draft the core policy suite covering acceptable use, data handling, third-party AI, incident response, and ethics. Use established frameworks like NIST AI RMF or ISO/IEC 42001 as starting points rather than building from scratch. These frameworks provide proven structures covering governance, risk mapping, measurement, and management.

Strong policies share a few traits:

- Reviewed cross-functionally to confirm they're practical and enforceable

- Published with version control so everyone knows which version applies

- Updated on a quarterly cycle as AI capabilities and regulations shift

- Written with specific consequences, not vague directives

Generic language like "use AI responsibly" provides no actionable guidance. Specific language like "do not input customer PII into unapproved AI tools; violations result in access suspension and disciplinary action" sets clear expectations.

Step 4: Implement Technical Controls and Governance Infrastructure

Deploy layered technical controls across the core pillars: access management, DLP, prompt and output filtering, and audit logging. Evaluate whether to build custom solutions or adopt purpose-built governance platforms.

Most enterprises achieve faster time-to-value and more consistent controls with a dedicated platform that integrates with existing security infrastructure like SIEM, IAM, and DLP. Trussed AI, for example, provides an enterprise AI control plane that enforces governance at runtime across AI apps, agents, and developer environments. It operates at under 20ms latency and gets operational workflows live in roughly four weeks.

Step 5: Train, Monitor, and Continuously Improve

Roll out role-specific training tailored to how each group actually uses AI:

- General staff: acceptable use policies and incident reporting

- Power users: approved tools and data handling requirements

- Developers: secure AI development practices

- Leadership: governance objectives and the organization's risk landscape

Require training completion before granting AI tool access. Establish real-time monitoring dashboards showing usage patterns, policy violations, and incidents. Set a quarterly governance review cadence where the committee reviews metrics, incidents, and policy effectiveness.

After incidents, conduct root cause analysis, update policies and controls to prevent recurrence, and share lessons learned across the organization. Expect to progress through governance maturity stages (from ad hoc through optimizing) over an 18 to 36 month horizon as controls become embedded in daily operations.

From Governance Policy to Runtime Enforcement

The Critical Gap Most Enterprises Face

Most enterprises have governance policies on paper, but those policies are not enforced at the moment AI interactions actually occur. Only 17% of organizations have implemented automated controls with DLP scanning for AI. The remaining 83% rely on ineffective measures like training and warnings, or have no policies at all.

Employees use unapproved tools because enterprise alternatives have lower rate limits, missing features, or slower performance. 98% of data entered into AI tools is pasted directly into interfaces rather than uploaded as files, which means traditional file-based DLP controls miss it entirely. Audit trails are incomplete because logging happens inconsistently or not at all.

What Runtime Enforcement Means in Practice

Policies must be active at the point of every AI interaction. That requires:

- Screening prompts before submission to block sensitive data or malicious instructions

- Validating outputs before delivery to prevent hallucinations or policy violations

- Blocking or redacting sensitive data in real time

- Routing requests through policy-compliant channels automatically

Audit evidence must be generated as a byproduct of each governed interaction rather than as a manual post-hoc process. When a regulator asks how a specific AI decision was governed, the answer should be available instantly with full chain of custody, not reconstructed over weeks.
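Prompt screening can be sketched as a function that runs before a request leaves the organization. The patterns and the blocked phrase below are deliberately simple assumptions: two regular expressions and one keyword are nowhere near a complete DLP or prompt-injection defense, but they show the allow, redact, and block outcomes.

```python
import re

# Illustrative patterns only; real DLP needs far broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

# Naive keyword check standing in for real injection detection.
BLOCK_PHRASES = ("ignore previous instructions",)


def screen_prompt(prompt: str) -> dict:
    """Return the action taken, the prompt to forward, and what was found."""
    lowered = prompt.lower()
    if any(phrase in lowered for phrase in BLOCK_PHRASES):
        return {"action": "block", "prompt": None, "findings": ["possible prompt injection"]}

    findings = []
    redacted = prompt
    for label, pattern in PATTERNS.items():
        redacted, count = pattern.subn(f"[{label}_REDACTED]", redacted)
        if count:
            findings.append(label)

    action = "redact" if findings else "allow"
    return {"action": action, "prompt": redacted, "findings": findings}
```

Because the function returns its findings alongside the action, the same call can feed the audit record for that interaction.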

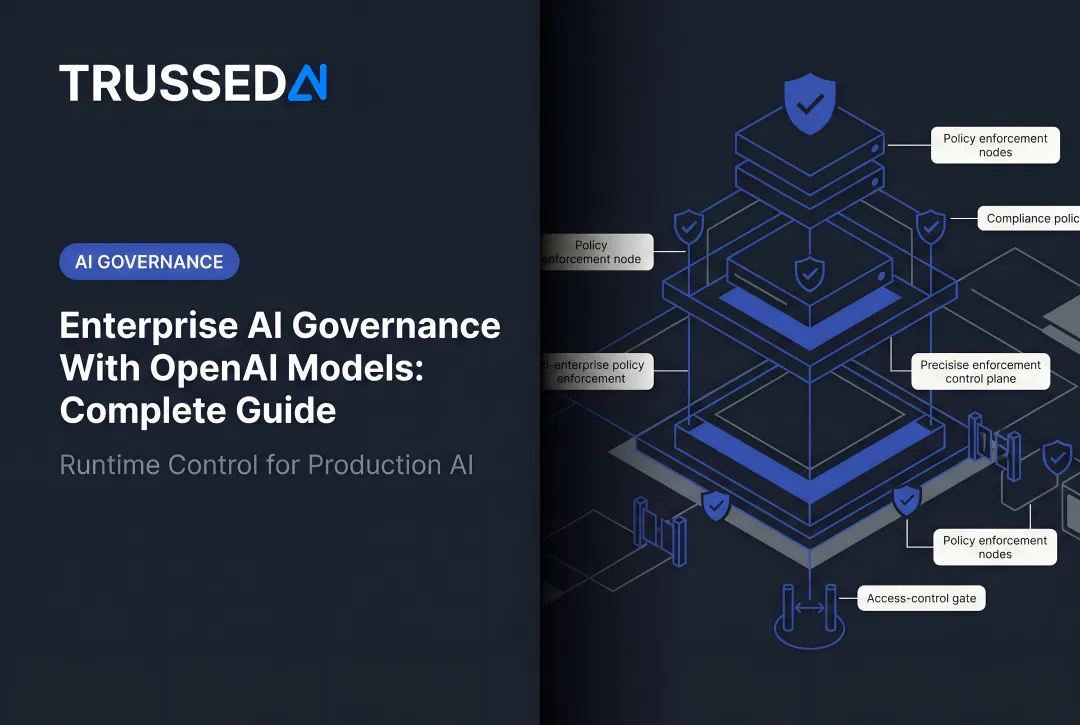

The AI Control Plane as Runtime Enforcement Infrastructure

Meeting those enforcement requirements demands dedicated infrastructure. An AI control plane puts governance into practice at runtime, functioning as a unified proxy between users, agents, developer tools, and AI models. Policies are enforced without requiring code changes to existing applications.

In practice, an AI control plane:

- Evaluates every interaction against defined policies before execution, across all models and agents

- Automatically switches to alternative providers when primary models degrade, maintaining SLA continuity

- Tracks and attributes AI spending across teams, models, and applications in real time

- Generates tamper-proof logs of every interaction, policy evaluation, and outcome automatically
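The failover and cost-attribution behaviors can be illustrated generically. This sketch is not any vendor's implementation: the provider callables, the `ProviderError` type, and the flat per-call cost are all assumptions made to keep the example self-contained.

```python
from typing import Callable, Dict, List, Tuple


class ProviderError(Exception):
    """Raised by a provider callable when it cannot serve the request."""


def route_with_failover(
    prompt: str,
    providers: List[Tuple[str, Callable[[str], str]]],
    team: str,
    spend: Dict[Tuple[str, str], float],
    cost_per_call: Dict[str, float],
) -> Tuple[str, str]:
    """Try providers in priority order and attribute cost to the team."""
    errors = []
    for name, call in providers:
        try:
            response = call(prompt)
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")
            continue  # degrade gracefully to the next provider
        key = (team, name)
        spend[key] = spend.get(key, 0.0) + cost_per_call.get(name, 0.0)
        return name, response
    raise RuntimeError("all providers failed: " + "; ".join(errors))
```

The caller passes providers in preference order, so a degraded primary model falls through to the next one while spend is still recorded against the right team.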

Trussed AI is designed specifically as this enterprise AI control plane, enforcing governance at runtime across AI apps, agents, and developer environments. Organizations achieve a compliance violation rate below 1% and can get operational workflows live in approximately four weeks.

Addressing the Shadow AI Problem

When governed alternatives are harder to access than ungoverned ones, employees route around the system. 16.9% of all sensitive data exposures happen through personal accounts because enterprise tools often have lower rate limits, missing features, or slower performance.

Runtime enforcement addresses this by making the governed path the default path, not through prohibition alone but by providing full-capability AI access through a controlled channel. The system automatically applies policies, logs interactions, and maintains compliance without friction for the end user. Governance becomes invisible to users while remaining active in every interaction.

AI Governance Best Practices for Regulated Industries

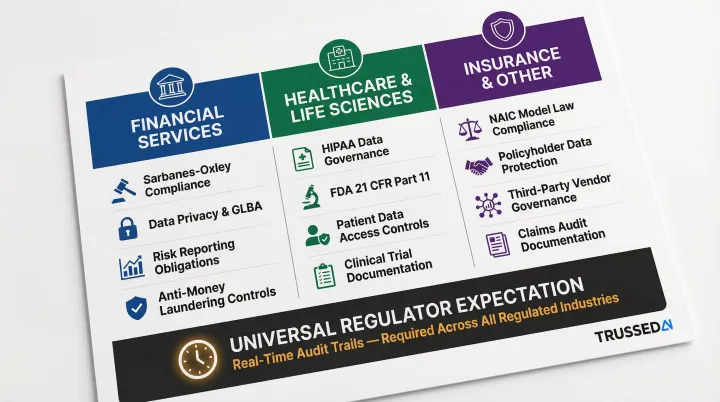

The six pillars apply universally, but regulated industries carry additional governance obligations on top of that baseline. Three verticals show what this looks like in practice.

Financial Services

Financial institutions operate under some of the densest AI oversight requirements of any sector. Key obligations include:

- Model risk management: OCC Bulletin 2011-12 applies to all AI/ML models, requiring independent validation and ongoing monitoring

- Fair lending explainability: Credit and lending decisions must provide specific, accurate adverse action reasons under fair lending law

- Vendor governance: Third-party AI providers require contractual audit rights and demonstrated compliance with the institution's standards

- Multi-regulator audit trails: Simultaneous oversight from OCC, SEC, CFTC, CFPB, and FINRA makes a centralized, searchable decision log non-negotiable

Healthcare and Life Sciences

Healthcare AI governance carries direct patient safety implications, which elevates every requirement from advisory to mandatory. Core obligations include:

- HIPAA compliance: All AI systems processing protected health information (PHI) must meet security and privacy standards, with comprehensive audit logging and access controls

- Physician oversight: AI-assisted clinical decisions require mandatory physician review before action; human-in-the-loop is a hard requirement, not a design suggestion

- FDA SaMD framework: Regulated AI systems fall under Software as a Medical Device requirements, including lifecycle management and marketing submission documentation

- Informed consent: When AI influences clinical outcomes, patients must be informed; disclosure obligations attach at the point of AI involvement

Insurance and Other Regulated Industries

Several other regulated sectors carry their own AI-specific obligations:

- Insurance: State regulations govern algorithmic underwriting and claims decisions, requiring documented transparency and fairness testing in automated outcomes

- Higher education: FERPA applies to any AI system processing student records, with access controls and disclosure requirements carrying over to AI workflows

- Cross-sector: Data residency rules restrict where AI processing can occur, a constraint that affects model selection, cloud deployment, and vendor contracts alike

Across all three verticals, regulators share one consistent expectation: they want to pull records on a specific AI decision and get them immediately. Periodic compliance reports don't satisfy that requirement. Continuous, real-time audit trails do.

Frequently Asked Questions

What is enterprise AI governance?

Enterprise AI governance is the framework of policies, processes, and technical controls ensuring AI is deployed responsibly, securely, and in compliance. It covers the full AI stack, from models and agents to developer tools, not just end-user applications.

How do you implement AI governance?

Five steps form the foundation:

- Inventory current AI usage, including shadow AI

- Establish cross-functional ownership and a governance committee

- Develop and publish core policies

- Implement technical controls for runtime enforcement

- Set up continuous monitoring with regular review cycles

What are the 6 pillars of AI governance?

The six pillars are policy and acceptable use, risk assessment and classification, regulatory compliance alignment, technical controls and security, ethics and human oversight, and continuous monitoring and accountability. Each pillar reinforces the others; gaps in any one create exposure across the rest.

What are the best AI governance solutions for regulated industries?

Regulated industries need platforms that enforce policy at runtime, not just document it. Prioritize real-time enforcement across models and agentic workflows, automatic audit trail generation, and continuous compliance monitoring. A policy document that isn't enforced in production offers little protection.

How is AI used in regulatory compliance?

AI assists compliance through automated monitoring, real-time anomaly detection, and evidence generation. The catch: AI systems themselves must also be governed. Any tool used for compliance needs to operate within a governance framework, or it introduces the same risks it's meant to prevent.