Introduction

Manual risk management is losing ground. Financial institutions tracked 61,228 regulatory events globally - averaging 234 daily alerts - while compliance teams face tighter staffing and shrinking response windows.

The fraud picture is worse. Fraud losses reached $12.5 billion, a 25% year-over-year increase that legacy detection systems were not built to absorb.

AI's real value in banking risk and compliance shows up quietly: fewer false positives, faster regulatory responses, and audit trails generated automatically. This article examines the specific advantages AI delivers, where those advantages matter most, and what's at stake when governance fails to keep pace with adoption.

TL;DR

- AI detects fraud and financial crime by analyzing transaction patterns in real time, outperforming rule-based systems in both speed and accuracy

- Automated compliance monitoring cuts manual workload by maintaining audit documentation continuously, so there is no scrambling when examiners arrive

- AI-driven credit models improve lending decisions by processing broader datasets with less bias than traditional scoring methods

- AI systems themselves require governance - without oversight, the compliance gap simply shifts to the models

- Banks that build AI risk governance into deployment from the start scale without accumulating regulatory exposure

What Is AI-Driven Risk and Compliance Management?

AI-driven risk and compliance management uses machine learning, natural language processing, and automated monitoring systems to identify, assess, and respond to risk while verifying regulatory adherence across banking operations. It applies across the full compliance stack:

- Credit underwriting and risk scoring

- Fraud detection and AML transaction monitoring

- Regulatory change tracking and impact analysis

- Model validation and audit documentation

- Oversight of AI systems themselves

This is infrastructure for control - designed to give banks the visibility and response speed that manual processes cannot match at modern transaction volumes.

The scale of the problem drives the need. Compliance employee hours increased 61% from 2016 to 2023, and banks report 42% of C-Suite time now devoted to regulatory compliance. At that burden, automated risk management isn't optional; it's operational.

Key Advantages of AI in Banking Risk and Compliance

The advantages below are grounded in operational and financial outcomes, not theoretical capabilities. Each maps to metrics compliance officers, CROs, and bank directors are already measured against.

Real-Time Fraud and Financial Crime Detection

Machine learning models analyze millions of transactions simultaneously to flag anomalies, suspicious patterns, and potential financial crime far faster than rule-based systems or manual review. Trained on historical fraud data and continuously updated, these models detect deviations from normal behavior at the transaction level - enabling banks to intervene before fraud completes rather than after.

How this works in practice:

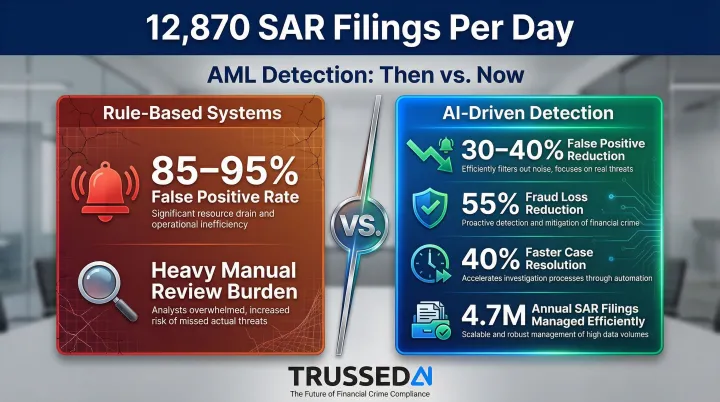
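The transaction-level deviation check described above can be sketched in a few lines. This is a minimal illustration, not a production detector: it swaps the trained ML model for a simple per-customer z-score, and the function name `flag_anomalies`, the threshold of 3.0, and the sample amounts are all assumptions made for the example.

```python
from statistics import mean, stdev


def flag_anomalies(history, new_amounts, threshold=3.0):
    """Return new amounts that deviate strongly from a customer's past amounts.

    history needs at least two values so a standard deviation can be computed.
    threshold is a hypothetical cut-off in standard deviations.
    """
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly constant history: treat any different amount as unusual.
        return [a for a in new_amounts if a != mu]
    flagged = []
    for amount in new_amounts:
        # z-score: distance from the customer's mean in standard deviations
        z = (amount - mu) / sigma
        if abs(z) > threshold:
            flagged.append(amount)
    return flagged


# Illustrative data only: six past card payments and three new ones.
history = [42.0, 38.5, 51.0, 47.25, 40.0, 45.5]
print(flag_anomalies(history, [44.0, 49.0, 950.0]))
```

A real deployment would score many features (merchant, device, location, velocity) rather than amount alone, but the shape is the same: compare each new event with learned normal behavior and intervene when the deviation is large.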

Traditional rule-based AML systems generate 85% to 95% false positives, creating a massive manual review burden. AI models reduce false positives by 30% to 40% while improving detection accuracy. Bank of America reported a 55% reduction in fraud losses after deploying AI models, and case resolution times improved by 40%.
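To see what those percentages mean for a review queue, the arithmetic can be worked through directly. The sketch below assumes the 30% to 40% figure refers to a cut in the number of false-positive alerts; the alert volume of 10,000 and the function name `review_workload` are hypothetical.

```python
def review_workload(total_alerts, false_positive_rate, fp_reduction):
    """Estimate the alert queue after removing a share of false positives.

    false_positive_rate: share of alerts that are false positives (0..1)
    fp_reduction: share of those false positives the model eliminates (0..1)
    """
    false_positives = total_alerts * false_positive_rate
    true_alerts = total_alerts - false_positives
    remaining_false = false_positives * (1 - fp_reduction)
    remaining_total = true_alerts + remaining_false
    share_after = remaining_false / remaining_total if remaining_total else 0.0
    return {
        "alerts_before": total_alerts,
        "alerts_after": remaining_total,
        "false_positive_share_after": share_after,
    }


# Mid-range inputs from the figures above: 90% false positives, 35% reduction.
result = review_workload(10_000, 0.90, 0.35)
for name, value in result.items():
    print(f"{name}: {value:,.2f}")
```

With these illustrative inputs the queue shrinks from 10,000 alerts to about 6,850, yet roughly 85% of the remaining alerts are still false positives. The reduction is material, but it does not remove the need for analyst triage.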

Why this matters:

Real-time detection directly reduces financial losses and the operational cost of reversing fraudulent transactions. Faster detection reduces BSA/AML exposure, lowers false positive rates that burden compliance staff, and provides regulators with documented evidence of monitoring activity. In FY 2024, US institutions filed 4.7 million Suspicious Activity Reports, averaging 12,870 filings per day. AI-enabled triage becomes operationally necessary to manage the sheer volume of alerts.

KPIs impacted:

- Fraud loss rate

- False positive rate

- SAR filing accuracy

- AML monitoring efficiency

- Cost per compliance investigation

When this advantage matters most:

Impact scales with transaction volume. Community banks with limited compliance headcount benefit from automated detection workflows. Large institutions gain speed and accuracy at volumes no human team can match.

Automated Regulatory Compliance and Audit Readiness

AI - particularly NLP and automated monitoring systems - continuously scans regulatory publications, maps changes to internal policies, and generates documentation as a byproduct of normal operations rather than as a separate effort. NLP models ingest regulatory filings and guidance documents, compliance systems flag policy gaps, and governed AI workflows automatically maintain logs, audit trails, and evidence of controls.
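The step of mapping a regulatory change to internal policies can be illustrated with a deliberately simple stand-in. The sketch below uses word-overlap (Jaccard) similarity instead of a real NLP model, and the policy IDs, texts, stopword list, and `min_overlap` threshold are all invented for the example.

```python
import re

# Tiny hypothetical stopword list; a real system would use a fuller one.
STOPWORDS = {"the", "and", "are", "for", "with"}


def tokens(text):
    """Lowercase word set, ignoring stopwords and words of two letters or fewer."""
    words = re.findall(r"[a-z]+", text.lower())
    return {w for w in words if len(w) > 2 and w not in STOPWORDS}


def map_change_to_policies(change_text, policies, min_overlap=0.2):
    """Return (policy_id, score) pairs whose wording overlaps the change text."""
    change = tokens(change_text)
    matches = []
    for policy_id, policy_text in policies.items():
        policy = tokens(policy_text)
        union = change | policy
        # Jaccard similarity: shared words divided by all distinct words
        score = len(change & policy) / len(union) if union else 0.0
        if score >= min_overlap:
            matches.append((policy_id, round(score, 2)))
    return sorted(matches, key=lambda m: m[1], reverse=True)


change = ("Institutions must file suspicious activity reports within 30 days "
          "of detecting suspicious transactions.")
policies = {
    "AML-01": "Suspicious activity reports are filed within 60 days of "
              "detecting suspicious transactions.",
    "HR-07": "Employees accrue vacation days monthly.",
}
print(map_change_to_policies(change, policies))
```

Here only the hypothetical AML policy is flagged for review. Note that word overlap finds the related policy but cannot see that the deadline changed from 60 to 30 days; detecting the substance of a gap is where language models and human reviewers come in.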

Why this matters:

Manual regulatory tracking is both slow and error-prone. Banks often discover compliance gaps during examinations rather than before them. While the volume of US regulatory enforcement actions dropped in late 2024, total penalty amounts surged 83% to $5.44 billion, highlighted by TD Bank's $3.09 billion fine for systemic BSA/AML transaction monitoring failures.

Platforms like Trussed AI are built specifically for this gap: they enforce policies at runtime across AI models and workflows and generate audit-ready compliance evidence continuously. The platform operates as a drop-in proxy that intercepts AI interactions at the API level, applying governance controls without requiring code changes to existing systems. When regulators ask how a specific AI decision was governed, banks have immediate, complete evidence rather than needing weeks to reconstruct the answer manually.

KPIs impacted:

- Regulatory examination findings

- Time to produce audit evidence

- Compliance violation rate

- Manual governance workload (hours)

- Policy coverage gap rate

When this advantage matters most:

Banks running multiple AI models or workflows simultaneously face the steepest governance exposure. Complexity scales with AI adoption - and without automated oversight, compliance teams fall behind before they realize it.

Proactive Credit Risk Assessment

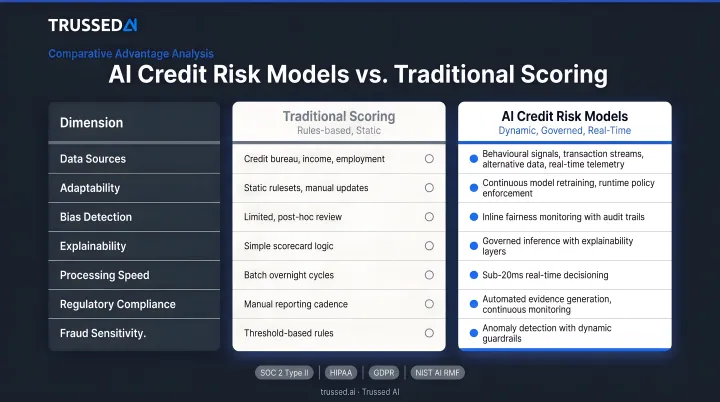

AI-powered credit models move beyond traditional credit scores by incorporating broader datasets - transaction history, behavioral patterns, alternative data - to produce more accurate and current assessments of borrower risk. Three capabilities drive that accuracy improvement:

- Identifies subtle correlations across large datasets that traditional statistical models miss

- Enables dynamic credit limit adjustments through real-time monitoring rather than periodic reviews

- Reduces over-reliance on any single scoring model, improving overall predictive stability

Why this matters:

More accurate credit risk assessment reduces non-performing loan rates and improves capital allocation decisions. AI/ML models outperform logistic regression by 2% to 15% in predictive accuracy (measured by Area Under the Curve metrics). Under traditional models, "invisible primes" are 70% more likely to be rejected than under fintech models that use alternative data, meaning creditworthy borrowers are denied due to limited credit history rather than actual risk.
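For readers unfamiliar with the metric, Area Under the Curve can be computed as the probability that a randomly chosen defaulter receives a higher risk score than a randomly chosen non-defaulter. The sketch below implements that pairwise definition; the labels and both score lists are made-up toy data, so the gap between the two models is not representative of the 2% to 15% range cited above.

```python
def auc(labels, scores):
    """Pairwise AUC: share of (defaulter, non-defaulter) pairs ranked correctly.

    labels: 1 for default, 0 for repaid. Ties in score count as half a win.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one defaulter and one non-defaulter")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Toy portfolio of eight loans scored by two hypothetical models.
labels = [0, 0, 1, 0, 1, 1, 0, 0]
baseline = [0.10, 0.40, 0.35, 0.20, 0.80, 0.30, 0.50, 0.15]
challenger = [0.10, 0.30, 0.45, 0.20, 0.80, 0.60, 0.50, 0.15]

print(f"baseline AUC:   {auc(labels, baseline):.3f}")
print(f"challenger AUC: {auc(labels, challenger):.3f}")
```

An AUC of 0.5 means the model ranks borrowers no better than chance, and 1.0 means every defaulter is scored above every non-defaulter.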

AI can reduce human bias in lending decisions when properly governed, which is increasingly relevant for fair lending compliance under ECOA and CRA requirements. In 2023, the CFPB issued guidance requiring lenders that use complex algorithms to provide specific and accurate reasons for credit denials, stating plainly: "There is no special exemption for artificial intelligence."

KPIs impacted:

- NPL ratio

- Loan default rate

- Credit approval accuracy

- Fair lending compliance rate

- Time to credit decision

When this advantage matters most:

High loan volumes and complex commercial portfolios are where AI credit models deliver the clearest ROI, because manual review can't scale to match. For institutions under fair lending scrutiny, the added benefit is defensible, documented decision logic that holds up during examination.

What Happens When Banks Skip AI Governance

As banks deploy AI for risk management, the models themselves become a new source of risk. Model drift, hallucinations, biased outputs, and unexplainable decisions create regulatory and operational exposure that traditional governance frameworks weren't built to catch.

Practical consequences of unmanaged AI in banking risk:

- Exposes AML and credit decision systems to regulatory challenge when risk teams can't explain model outputs

- Lets compliance gaps accumulate silently until an examination surfaces them, because no system is continuously verifying that AI outputs align with policy

- Forces reactive firefighting when models drift or produce errors at scale, rather than catching issues before they become violations

- Drives rising governance costs as compliance teams manually document AI behavior retroactively or face penalties for failing to do so

The data backs this up. A 2023 Risk Management Association survey found that explainability remains the top challenge for validating AI models, cited by 69.4% of risk managers. Despite 73% of firms reporting the use of AI/ML models, only 12.2% of financial institutions describe their AI/ML strategy as "well-defined and resourced".

Federal Reserve Governor Christopher Waller stated in 2026 that AI requires "clear guardrails on how and where it's used, strong information-security controls, rigorous model validation, human accountability for decisions, and ongoing evaluation". Banks that deploy AI without those controls in place aren't just taking on operational risk; they're inviting examiner scrutiny they may not be prepared for.
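One concrete form of the "ongoing evaluation" described above is a drift check on a model's score distribution. The sketch below uses the Population Stability Index, a metric commonly used in credit modeling; the bucket counts are invented, and the alert levels mentioned afterward are an industry rule of thumb rather than a regulatory requirement.

```python
import math


def psi(expected_counts, actual_counts, eps=1e-6):
    """Population Stability Index between two bucketed score distributions.

    expected_counts: counts per score bucket at validation time
    actual_counts: counts per the same buckets in current production data
    eps: floor on bucket shares to avoid log(0) for empty buckets
    """
    if len(expected_counts) != len(actual_counts):
        raise ValueError("both distributions must use the same buckets")
    e_total = sum(expected_counts)
    a_total = sum(actual_counts)
    value = 0.0
    for e, a in zip(expected_counts, actual_counts):
        e_pct = max(e / e_total, eps)
        a_pct = max(a / a_total, eps)
        value += (a_pct - e_pct) * math.log(a_pct / e_pct)
    return value


# Hypothetical example: scores were evenly spread at validation,
# but production traffic has shifted toward the highest bucket.
validation = [250, 250, 250, 250]
production = [100, 200, 300, 400]
print(f"PSI = {psi(validation, production):.3f}")
```

A PSI near zero means the production population looks like the one the model was validated on. Many teams treat values above roughly 0.1 as worth investigating and values above roughly 0.25 as a significant shift, though each institution should set its own thresholds.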

How to Get the Most Value from AI in Banking Risk and Compliance

Institutions seeing the strongest outcomes from AI in risk and compliance are those where policy enforcement, monitoring, and audit documentation are built into how the AI operates, not managed as separate workstreams layered on afterward.

Practical conditions for maximum value:

- Consistent deployment across all relevant workflows - patchy adoption creates uneven risk coverage and compliance blind spots

- Continuous monitoring with real-time alerting, not periodic review - model drift and regulatory changes don't wait for quarterly audits

- Governance-native infrastructure that generates audit evidence as a byproduct of operation - platforms like Trussed AI enforce policies at runtime without requiring changes to existing application code

- Human oversight mechanisms that keep risk professionals in the loop on high-stakes decisions - AI should augment their judgment, not replace it

The governance-native point matters particularly for banks already running AI in production. Trussed AI operates as a proxy between applications and the models they call, evaluating every request against defined policies before execution. Integration requires minimal code changes - essentially swapping API endpoints to route through the governance layer. This delivers immediate policy enforcement across existing systems without the disruption and risk of refactoring live production code.
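The proxy pattern itself is straightforward to sketch. The example below is a generic illustration and not Trussed AI's actual API: the `GovernanceProxy` class, the `no_ssn_policy` rule, and the stand-in model function are all hypothetical names invented here.

```python
import hashlib
import re
from datetime import datetime, timezone

# Hypothetical policy: block prompts containing a US SSN-like pattern.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def no_ssn_policy(prompt):
    """Return a violation message, or None if the prompt passes."""
    if SSN_PATTERN.search(prompt):
        return "prompt contains an SSN-like value"
    return None


class GovernanceProxy:
    """Sits between an application and a model; checks policy, logs evidence."""

    def __init__(self, model_call, policies):
        self.model_call = model_call  # any callable taking a prompt string
        self.policies = policies
        self.audit_log = []

    def handle(self, prompt):
        violations = []
        for policy in self.policies:
            message = policy(prompt)
            if message is not None:
                violations.append(message)
        allowed = not violations
        # The model is only called when every policy passes.
        response = self.model_call(prompt) if allowed else None
        self.audit_log.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            # Store a hash, not the raw prompt, to keep sensitive data out of logs.
            "prompt_sha256": hashlib.sha256(prompt.encode()).hexdigest(),
            "allowed": allowed,
            "violations": violations,
        })
        return response


def fake_model(prompt):
    """Stand-in for a real model endpoint."""
    return "ok: " + prompt


proxy = GovernanceProxy(fake_model, [no_ssn_policy])
print(proxy.handle("Summarize Q3 credit exposure"))
print(proxy.handle("Look up customer 123-45-6789"))
print(len(proxy.audit_log), "audit records written")
```

Because the application only swaps the callable it talks to, the existing code path stays intact, and every request, allowed or blocked, leaves an audit record as a side effect.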

Conclusion

AI's value in banking risk and compliance shows up in concrete outcomes: faster detection, broader compliance coverage, and audit readiness that holds up under scrutiny. That value compounds when AI systems are governed with the same rigor banks apply to their other risk controls.

The institutions best positioned for regulatory scrutiny and operational scale treat AI governance as ongoing infrastructure, not a one-time deployment decision. The risk landscape, the regulatory environment, and the AI models themselves are all continuously changing, and governance has to keep pace.

Platforms like Trussed AI are built for exactly this: enforcing policies at runtime, generating audit evidence automatically, and giving compliance teams continuous visibility across every AI system in production.

Frequently Asked Questions

What are the best AI tools for risk management in banking?

The most impactful tools include ML-based fraud detection platforms, NLP-driven regulatory monitoring systems, and AI governance infrastructure that enforces policy compliance across models in production. The right fit depends on use case maturity and whether the bank's priority is detection, compliance automation, or model oversight.

How can AI be used for compliance in banking?

AI scans and maps regulatory changes to internal policies, automates suspicious activity monitoring and SAR workflows, maintains continuous audit trails, and monitors AI models themselves for policy adherence in real time.

What is model risk management in banking?

Model risk management is the practice of identifying, measuring, and controlling risks arising from the use of models in decision-making, including AI/ML models. It covers validation, performance monitoring, bias testing, and documentation to satisfy regulatory requirements like SR 11-7.

What are the biggest risks of using AI in banking?

Primary risks include:

- Model opacity - black-box decisions that can't be explained to regulators

- Algorithmic bias in credit and AML applications

- Overreliance on automated outputs without human oversight

- Data quality failures and governance gaps when AI deployment outpaces controls

How does AI help with AML and fraud detection in banking?

AI analyzes transaction patterns in real time to flag anomalies, adapts to new fraud tactics through continuous learning, and cuts the false positives that drain compliance teams. At high transaction volumes, it outperforms static rule-based systems where manual review simply isn't feasible.

What are the 5 C's of risk management?

The 5 C's (Character, Capacity, Capital, Collateral, Conditions) form the traditional credit risk framework used to evaluate borrower risk. AI enhances each dimension by processing more data points and providing real-time updates rather than point-in-time assessments, which tightens underwriting precision without adding analyst overhead.