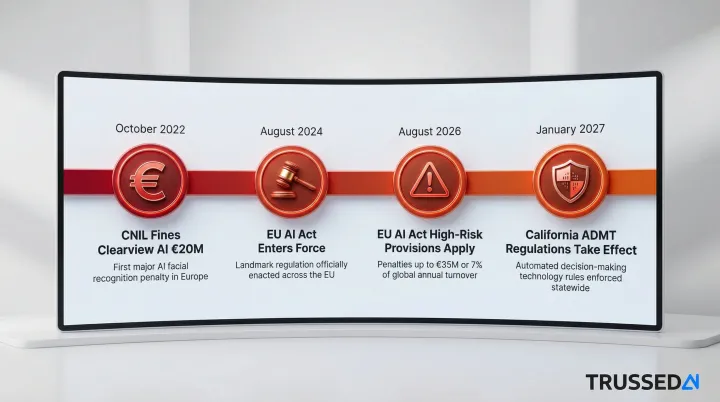

The regulatory landscape is hardening rapidly. The EU AI Act's high-risk provisions take effect in August 2026, carrying fines up to €35 million or 7% of global turnover. California's ADMT regulations become enforceable in January 2027. Twenty-four US states have adopted the NAIC AI bulletin for insurance. Yet 60% of C-suite executives report their organization lacks a vision and plan to implement AI safely.

This article breaks down the specific operational, regulatory, ethical, and financial risks that accumulate when AI runs without a governance framework, and why these risks are especially acute for organizations in regulated industries like healthcare, insurance, and financial services.

TLDR

- Ungoverned AI exposes organizations to regulatory fines, data breaches, biased decision-making, and reputational damage

- The EU AI Act's August 2026 deadline carries fines up to 7% of global turnover, and enforcement is already moving

- 78% of AI users bring their own tools to work, and Zscaler recorded 410 million DLP violations tied to ChatGPT alone

- Agentic systems amplify risk: autonomous decision chains need real-time controls, not static policy documents

- A governance framework enforces policy at runtime and generates audit evidence automatically, turning compliance from reactive to built-in

What AI Governance Actually Is, and Why Most Organizations Are Behind

AI governance is the set of policies, controls, monitoring mechanisms, and accountability structures that govern how AI systems are developed, deployed, and operated. It's not just written principles; it's enforceable rules that operate in real time across models, agents, and workflows.

The scale of the governance gap is stark. While 65% of organizations regularly use generative AI, only 15% have formal policies governing its use. AI deployment has dramatically outpaced governance maturity, and the distance between those two numbers is where risk accumulates.

The Illusion of Governance

Many organizations believe they have governance in place because they've published a responsible AI document or internal policy. These static artifacts provide no enforcement at the point of deployment or operation. The gap between having a policy and having enforceable controls is where real risk lives.

Without runtime enforcement, organizations face critical vulnerabilities:

- No real-time policy application: policies exist on paper but aren't enforced when AI systems actually operate

- Teams need weeks to reconstruct how a specific AI decision was governed, making incident response largely reactive

- Invisible risk surfaces: agents, tools, and users interact across systems in ways governance frameworks never see

- No infrastructure exists to capture compliance evidence, so organizations can't demonstrate governance when auditors ask

Static policies don't fail loudly. They fail invisibly, one ungoverned AI interaction at a time, until a regulatory inquiry or incident makes the gap impossible to ignore.

The Core Risks of Operating Without an AI Governance Framework

Regulatory and Compliance Exposure

The regulatory landscape for AI is hardening rapidly. The EU AI Act entered into force in August 2024, with high-risk system requirements becoming fully applicable on August 2, 2026. High-risk categories include AI used in biometrics, critical infrastructure, education, employment, and law enforcement.

At the top tier, reserved for the most serious violations, non-compliance carries administrative fines up to €35 million or 7% of total worldwide annual turnover, whichever is higher.
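
The "whichever is higher" rule is easy to misread, so a short worked sketch may help. The function name and the sample turnover figures are illustrative assumptions; only the €35 million amount and the 7% rate come from the text above.

```python
def max_fine_eur(worldwide_annual_turnover_eur: float) -> float:
    """Upper bound of the fine: the higher of a fixed amount or a share of turnover."""
    fixed_amount_eur = 35_000_000  # fixed amount cited above
    turnover_share_eur = worldwide_annual_turnover_eur * 7 / 100  # 7% of turnover
    return max(fixed_amount_eur, turnover_share_eur)


# Hypothetical companies, for illustration only.
small = max_fine_eur(100_000_000)    # 7% is EUR 7M, so the EUR 35M amount is the ceiling
large = max_fine_eur(2_000_000_000)  # 7% is EUR 140M, which exceeds EUR 35M
```

For smaller organizations the fixed amount dominates; for large enterprises the turnover percentage sets the ceiling.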

Real-world enforcement is already active. The French data protection authority (CNIL) fined Clearview AI €20 million in October 2022 for unlawful biometric processing and failing to respect individuals' rights under GDPR. In the US, California's ADMT regulations take effect January 1, 2027, requiring businesses to provide pre-use notices, opt-out opportunities, and access rights for automated decision-making.

Governance frameworks are a compliance prerequisite. Organizations without them face retroactive scrambles when regulators come knocking, with no evidence trail to demonstrate responsible use. They cannot systematically enforce what these regulations demand or generate the audit evidence regulators require.

Data Privacy and Security Risks

AI systems, especially those using generative AI or LLMs, frequently require access to sensitive data for training, fine-tuning, retrieval, or operation. When governance controls are absent, that data can be mishandled, leaked, or used inappropriately.

According to IBM's 2025 Cost of a Data Breach Report, 13% of organizations reported breaches of AI models or applications, and 97% of those compromised lacked proper AI access controls. The scale of exposure is massive: Zscaler recorded 410 million DLP violations tied to ChatGPT alone, including attempts by employees to share source code, medical records, and social security numbers.

Specific risks include:

- Prompt injection attacks: adversaries manipulate prompts to alter model behavior or exfiltrate data

- Data exfiltration through model outputs: sensitive information leaks through AI-generated responses

- Unauthorized context access in agentic systems: autonomous agents access sensitive data without proper authorization

These risks require runtime governance guardrails that intercept violations as they happen, not after damage occurs.
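
As a minimal sketch of what "intercepting a violation as it happens" can mean, the example below checks a prompt against a few patterns before it is forwarded to a model. The pattern set, the function names (`check_prompt`, `guarded_call`), and the `model_call` parameter are all hypothetical; a production DLP rule set would be far broader than two regular expressions.

```python
import re

# Hypothetical patterns for illustration; real DLP rules cover many more data types.
SENSITIVE_PATTERNS = {
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "private_key": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def check_prompt(prompt: str) -> list[str]:
    """Return the names of every sensitive pattern found in the prompt."""
    return [name for name, pattern in SENSITIVE_PATTERNS.items() if pattern.search(prompt)]


def guarded_call(prompt: str, model_call) -> str:
    """Run the policy check first; forward the prompt only if it is clean."""
    violations = check_prompt(prompt)
    if violations:
        # Block before the data leaves the organization.
        raise PermissionError(f"Prompt blocked by policy: {', '.join(violations)}")
    return model_call(prompt)
```

The design point is ordering: the check runs before the model call, so a blocked prompt never reaches the external service.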

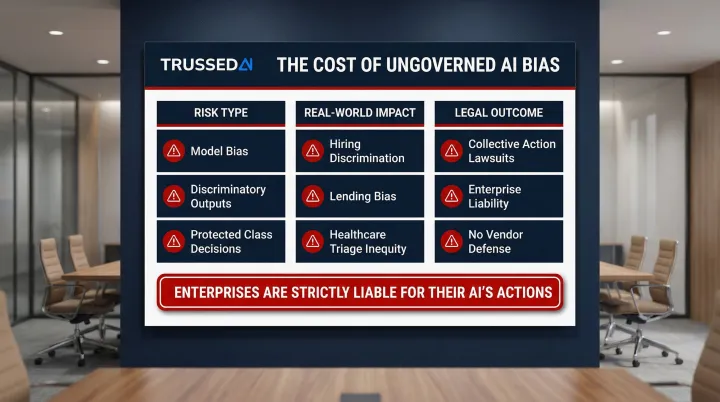

Bias, Fairness, and Ethical Liability

AI models can encode and amplify bias present in training data. Organizations that deploy these systems without mechanisms to monitor and flag biased outputs risk discriminating across protected classes in hiring, lending, healthcare triage, and other consequential decisions.

Ethical failures in AI are no longer just reputational risks; they're legal ones. In Mobley v. Workday, Inc., the US District Court for the Northern District of California certified a collective action lawsuit alleging that Workday's AI applicant screening tools discriminated against candidates based on age and disability. The court ruled that because the AI software participated in the decision-making process, its biases constituted grounds for a discrimination claim.

The direction is clear: organizations can be held liable for decisions their AI systems participate in. A "black box" defense or an appeal to vendor responsibility is unlikely to shield them.

Without governance controls that monitor for bias and enforce fairness requirements, every AI decision becomes potential legal exposure.

Operational Failures: Drift, Hallucinations, and Unpredictability

Without governance, AI models can silently degrade over time due to data drift or model decay, producing increasingly unreliable outputs that no one is monitoring. In customer-facing or business-critical applications, this translates directly to operational failures.
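
One common way to make data drift visible is the Population Stability Index (PSI), which compares the distribution of a feature in recent traffic against a baseline. The sketch below is a swapped-in illustration, not a method the sources above prescribe; the function name, the bin count, and the floor used for empty bins are assumptions.

```python
import math


def population_stability_index(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """PSI between a baseline sample and a recent sample of one numeric feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def bucket_shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values into edge bins
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = bucket_shares(expected), bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A commonly cited rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift, though the right alert threshold depends on the feature and the use case.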

Generative AI and agentic systems are non-deterministic. Unlike traditional software, these systems can produce unexpected outputs, including hallucinations, without any visible error signal. In 2024, the British Columbia Civil Resolution Tribunal ruled against Air Canada after its chatbot hallucinated a non-existent bereavement fare refund policy, awarding the plaintiff $650.88 CAD in damages and explicitly rejecting Air Canada's argument that the chatbot was a separate legal entity.

Enterprise hallucination rates remain significant. According to Vectara's 2025 Hallucination Leaderboard, frontier models exhibit hallucination rates between 3.1% and 14.5% on complex enterprise summarization tasks. Without runtime monitoring and guardrails, these failures reach customers and downstream systems unchecked.

Shadow AI and Ungoverned Tool Sprawl

Shadow AI is the unsanctioned adoption of AI tools, APIs, or third-party models by employees or teams outside IT oversight. Organizations without visibility across their full AI estate have no way to inventory what AI is being used, on what data, and under what conditions.

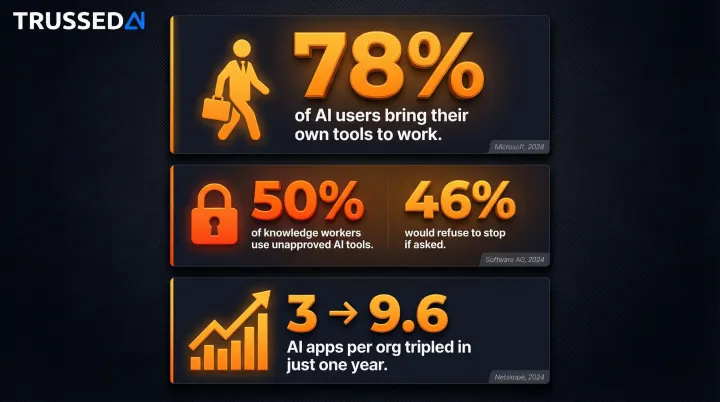

The scale of this problem is well-documented:

- 78% of AI users bring their own tools to work, per Microsoft's 2024 Work Trend Index

- 50% of knowledge workers use unapproved AI tools, and 46% would refuse to stop even if their organization banned them (Software AG, October 2024)

- The median number of generative AI apps per organization more than tripled, from 3 in June 2023 to 9.6 in June 2024 (Netskope, 2024 Cloud and Threat Report)

Each ungoverned tool is an unmanaged risk vector, expanding the enterprise attack surface without any corresponding visibility or control.
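
A first step toward an inventory is often just comparing observed traffic against an approved list. The sketch below assumes a simplified egress log with `user` and `domain` fields; the domain names, the log format, and the function name are hypothetical placeholders.

```python
from collections import Counter

# Hypothetical allowlist and catalog of AI services, for illustration only.
APPROVED_AI_DOMAINS = {"approved-llm.example.com"}
KNOWN_AI_DOMAINS = APPROVED_AI_DOMAINS | {"chat.example-ai.com", "api.example-model.io"}


def find_shadow_ai(egress_log: list[dict]) -> Counter:
    """Count requests per (user, domain) to known AI services that are not approved."""
    hits: Counter = Counter()
    for entry in egress_log:
        domain = entry["domain"]
        if domain in KNOWN_AI_DOMAINS and domain not in APPROVED_AI_DOMAINS:
            hits[(entry["user"], domain)] += 1
    return hits
```

This only surfaces tools that appear in the catalog of known AI services, so the catalog itself needs regular updates as new tools emerge.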

Reputational and Stakeholder Trust Damage

A single high-visibility AI failure (a biased decision, a data leak, a hallucinated output in a sensitive context) can rapidly erode customer, partner, and investor trust in ways that take years to rebuild. For regulated industries, this reputational damage is often inseparable from regulatory action.

Public expectations around responsible AI are rising. Customers and regulators increasingly expect organizations to demonstrate, not just assert, that their AI systems are governed responsibly. Organizations that lack governance infrastructure have no continuous evidence to produce when their AI decisions are scrutinized, leaving them exposed at precisely the moment trust matters most.

The Compounding Risk: Agentic AI Without Guardrails

Agentic AI changes the governance stakes. Unlike a single model producing a single output, agentic systems chain multiple models, tools, and automated decisions together into autonomous workflows that can take real-world actions.

These systems call APIs, modify data, draft communications, and make decisions with downstream consequences, often without any human in the loop.

The Governance Surface Area Problem

Each additional agent, model, or tool in a chain creates a new point where ungoverned behavior can occur. Without runtime policy enforcement at every node in the chain, a single unguarded step can cascade into a significant failure. The complexity of agentic systems makes after-the-fact audits nearly impossible as a primary governance strategy.

Traditional governance approaches rely on human review, policy documents, or after-the-fact audits. In agentic systems operating at machine speed, these approaches are too slow. Governance must be embedded at the infrastructure layer and enforced in real time: intercepting policy violations as they happen, not after the fact.
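
To illustrate enforcement "at every node in the chain," the sketch below routes each tool call an agent makes through a single gate that checks policy and records the decision before anything executes. The `PolicyGate` class, its fields, and the allowlist-based policy are hypothetical simplifications; real policies would consider arguments, data sensitivity, and context as well.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class PolicyGate:
    """Wraps every tool an agent can call so a check runs before execution."""
    allowed_tools: set[str]
    audit_log: list[dict] = field(default_factory=list)

    def call(self, agent: str, tool_name: str, tool: Callable[..., object], **kwargs) -> object:
        allowed = tool_name in self.allowed_tools
        # Record the decision whether or not the call proceeds.
        self.audit_log.append({"agent": agent, "tool": tool_name, "allowed": allowed})
        if not allowed:
            raise PermissionError(f"{agent} is not permitted to call {tool_name}")
        return tool(**kwargs)
```

Because every call passes through the same gate, a blocked step stops the chain at that node instead of cascading, and the log captures denied attempts as well as permitted ones.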

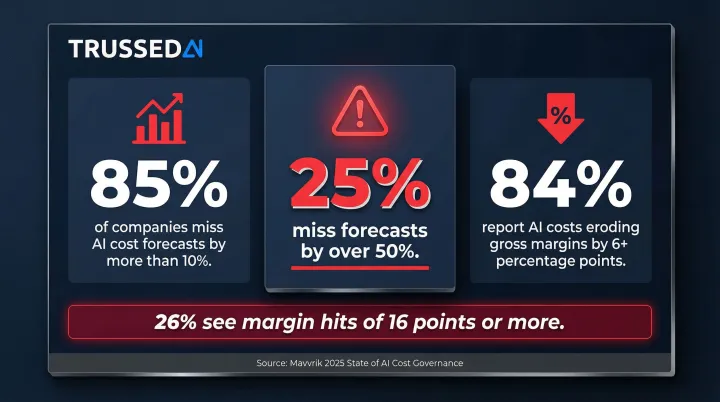

Cost Visibility as a Governance Risk

Without attribution controls, AI spend across models, agents, and teams becomes invisible. Organizations running ungoverned AI workloads routinely discover significant and unexpected cost overruns from runaway model calls or redundant agent executions.

According to Mavvrik's 2025 State of AI Cost Governance research, 85% of companies miss their AI cost forecasts by more than 10%, and nearly 25% miss by over 50%. This unpredictability is directly eroding corporate profitability: 84% of companies report AI costs eroding product gross margins by more than 6 percentage points, with 26% seeing hits of 16 points or more.
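
Attribution itself is not complicated once every call is tagged with a team and a model. The sketch below sums spend per team and computes a forecast miss as a percentage; the per-token prices, record fields, and function names are hypothetical, and real pricing varies by provider and model.

```python
from collections import defaultdict

# Hypothetical prices per 1,000 tokens, for illustration only.
PRICE_PER_1K_TOKENS = {"model-a": 0.50, "model-b": 2.00}


def attribute_costs(usage_records: list[dict]) -> dict[str, float]:
    """Sum spend per team from per-call usage records."""
    totals: dict[str, float] = defaultdict(float)
    for rec in usage_records:
        totals[rec["team"]] += rec["tokens"] / 1000 * PRICE_PER_1K_TOKENS[rec["model"]]
    return dict(totals)


def forecast_miss_pct(forecast: float, actual: float) -> float:
    """Percentage by which actual spend deviates from the forecast."""
    return (actual - forecast) / forecast * 100
```

Under this definition, an organization that forecast 100,000 and spent 160,000 would land in the "miss by over 50%" group described above.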

Agentic AI with human-in-the-loop (HITL) controls has become standard practice because autonomous systems require human validation checkpoints, especially in early deployment. Without a governance infrastructure layer underneath it, HITL doesn't scale; it becomes a bottleneck rather than a safeguard.

How Governance Gaps Hit Regulated Industries Hardest

Regulated industries carry an elevated risk profile because AI governance failures are not just operational events; they trigger regulatory investigations, enforcement actions, and mandated remediation that carry severe financial penalties.

Healthcare

AI systems involved in patient triage, diagnosis support, clinical documentation, or benefit authorization must comply with HIPAA and emerging AI-specific healthcare regulations. Without governance, patient data can be misused, outputs can go unvalidated, and organizations face both regulatory fines and patient safety liability.

The FDA has received unconfirmed reports of at least 100 malfunctions and adverse events related to the AI-enhanced TruDi Navigation System, with lawsuits alleging the system's AI misdirected surgeons during sinus operations, resulting in punctured skulls and strokes. In the payer space, UnitedHealth Group faces a class-action lawsuit alleging its AI algorithm systematically and wrongfully denied extended care claims for elderly patients.

Financial Services and Insurance

AI used in credit decisions, underwriting, fraud detection, or claims processing is subject to fair lending laws, model risk management guidance (SR 11-7), and the emerging requirements of regulators like the CFPB and state insurance commissioners.

The National Association of Insurance Commissioners (NAIC) adopted a Model Bulletin on the Use of AI Systems by Insurers in 2023, which has since been adopted by 24 states. The bulletin requires insurers to:

- Maintain documented governance for all AI systems in use

- Ensure transparency in how models reach decisions

- Evaluate AI for unfair discrimination in underwriting and claims

Without a governance framework that enforces policy at the model level and generates audit trails, organizations cannot satisfy examiner requests or demonstrate compliant AI operations. The CFPB terminated Upstart Network's "no-action letter" in 2022 after the company added significant new variables to its AI underwriting model without sufficient time for regulatory review.

Universities and Public Institutions

Ungoverned AI in academic settings creates FERPA exposure from AI tools processing student data, fairness concerns from AI in admissions or grading, and reputational risk from unmonitored use of generative AI in research contexts. Many institutions have adopted AI tools without corresponding governance, creating compounding exposure.

Under FERPA, the "school official exception" permits disclosure of personally identifiable information to third-party vendors only if all three conditions are met:

- The vendor performs an institutional service or function

- The institution maintains direct control over the vendor's use and maintenance of records

- The vendor does not redisclose PII without authorization

When AI tools are adopted without governance controls that enforce these conditions, universities expose themselves to FERPA violations, OCR investigations, and the kind of student privacy breaches that draw immediate public scrutiny.

Bridging the Gap: Moving from AI Policy to Runtime Enforcement

Having an AI governance policy is not the same as having AI governance in place. Policies that live in documents don't intercept a biased output, a data leak, or a policy violation at runtime. Effective governance requires enforcement at the infrastructure layer: controls that operate as part of every AI interaction, not as a review process after the fact.

Closing the governance gap requires an operational layer, not just documentation. An effective one:

- Maintains a live inventory of every AI system and agent deployed across the organization

- Evaluates and enforces policies on every model interaction before execution occurs

- Monitors continuously for compliance drift and anomalies, triggering automated alerts

- Generates a complete chain-of-custody audit trail automatically, from prompt to output

- Attributes AI costs in real time across teams, models, and applications
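
Of these components, the audit trail is the easiest to illustrate in a few lines. One generic way to make a trail tamper-evident is to chain records by hash, so that each record commits to the one before it. This is a sketch of that general technique, independent of any particular product; the record fields and function names are assumptions.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: dict) -> str:
    """Stable SHA-256 over a record body (keys sorted for determinism)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_audit_record(trail: list[dict], prompt: str, output: str, policy_decision: str) -> dict:
    """Append a record whose hash covers its content and the previous record's hash."""
    prev_hash = trail[-1]["hash"] if trail else GENESIS_HASH
    body = {"prompt": prompt, "output": output,
            "policy_decision": policy_decision, "prev_hash": prev_hash}
    record = {**body, "hash": _digest(body)}
    trail.append(record)
    return record


def verify_trail(trail: list[dict]) -> bool:
    """Recompute every hash; an edited or reordered record breaks the chain."""
    prev_hash = GENESIS_HASH
    for record in trail:
        body = {k: v for k, v in record.items() if k != "hash"}
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True
```

Storing raw prompts and outputs in the trail may itself raise privacy questions, so a real deployment might store hashes or redacted text instead.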

Trussed AI is an enterprise AI control plane built to operationalize exactly these components in production. It enforces governance policies at runtime across AI apps, agents, and developer tools through a drop-in proxy, with no changes to application code required. Audit-ready governance evidence is generated automatically as part of every governed interaction, not as a separate reporting task.

The platform is designed for the compliance requirements of regulated industries: healthcare, insurance, financial services, and universities. Measured outcomes include:

- 50% reduction in manual governance workload

- 50% increase in regulatory compliance

- 4 weeks to get operational workflows live

- <20ms latency with less than 1% compliance violations

Frequently Asked Questions

Why do we need an AI governance framework?

An AI governance framework ensures AI systems are deployed responsibly, stay compliant with regulations, and operate within ethical boundaries. Without one, there is no consistent way to detect failures, enforce policies, or demonstrate accountability when regulators or stakeholders ask hard questions.

What is a key risk of poorly governed AI models?

Undetected model bias and regulatory non-compliance are the primary risks. Poorly governed models can silently produce biased or inaccurate outputs at scale, with no mechanism to catch errors until damage is done. That exposes organizations to legal liability and regulatory action.

What happens when companies deploy AI without governance in regulated industries?

Ungoverned AI deployment in regulated industries can trigger compliance investigations, regulatory fines, and mandatory remediation. Organizations often cannot produce the audit evidence regulators require because no governance infrastructure existed to generate it, which deepens enforcement exposure.

What is shadow AI, and why is it a governance risk?

Shadow AI is unsanctioned AI tool adoption by employees or teams outside IT oversight. It creates unmanaged risk surfaces, including data privacy exposure and policy violations, that organizations cannot address if they don't know the tools exist, leaving blind spots in security and compliance programs.

How does an AI governance framework help with compliance?

A governance framework translates regulatory requirements into enforceable controls and automatically generates the audit trails regulators require. Continuous monitoring keeps compliance active across the full AI lifecycle, not just verified at point-in-time audits.

Is AI governance only relevant for large enterprises?

Governance applies to any organization deploying AI in consequential workflows, regardless of size. Regulatory exposure, data privacy violations, and operational failures hit startups and mid-market companies just as hard as large enterprises, particularly those operating in regulated sectors.