Introduction

Enterprise customer experience leaders face a critical tension: AI adoption in CX is accelerating faster than governance frameworks can keep pace. According to a Gartner survey, 85% of customer service leaders will explore or pilot customer-facing conversational AI in 2025. Yet McKinsey's 2025 State of AI report reveals that while 88% of organizations use AI regularly, nearly two-thirds haven't begun scaling it across the enterprise.

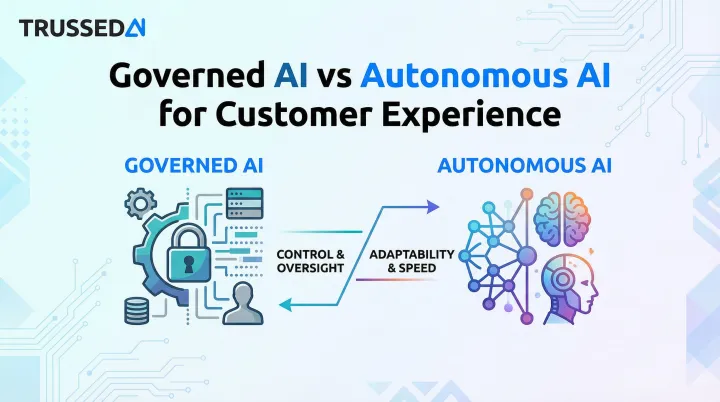

That gap defines the core choice for CX teams: governed AI or autonomous AI.

The stakes differ from other automation decisions. Unlike manufacturing errors that stay internal, AI missteps in customer interactions involve sensitive data, legally binding communications, and brand reputation. A single hallucinated refund policy or unauthorized PII access can erode years of customer trust, trigger compliance violations, or escalate into regulatory action.

PwC's 2025 Responsible AI survey found that 50% of executives cite translating AI principles into operational processes as their biggest barrier. That governance gap becomes a direct liability when AI systems are the ones talking to your customers.

TL;DR

- Governed AI enforces policies at runtime with human oversight at key decision points, keeping compliance and consistency in check

- Autonomous AI handles multi-step workflows independently, trading oversight for speed and scale

- Neither is universally better; the right choice depends on interaction risk, regulatory environment, and oversight maturity

- Regulated industries (insurance, healthcare, financial services) typically need governed AI as the foundation

- Top CX deployments layer both, with governed AI as the baseline and calibrated autonomy added for lower-risk workflows

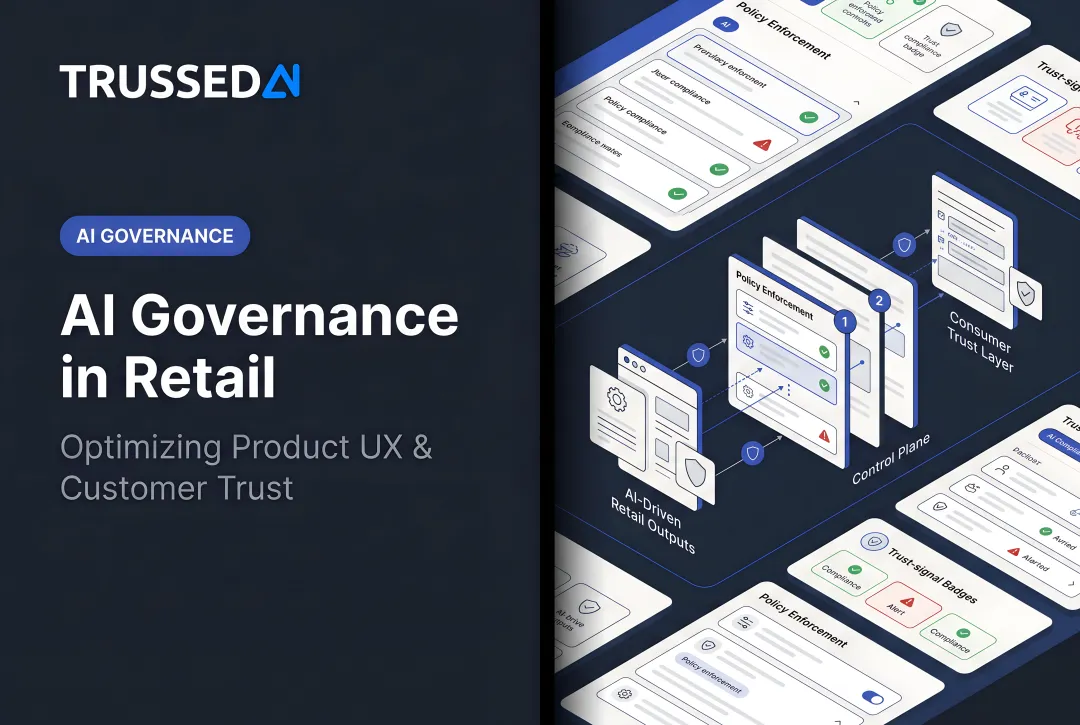

Governed AI vs Autonomous AI: At a Glance

| Dimension | Governed AI | Autonomous AI |
|---|---|---|
| Human Oversight | Human-in-the-loop or on-the-loop; defined escalation paths | Minimal real-time oversight; human-out-of-the-loop for most decisions |
| Decision-Making | AI acts within policy boundaries; humans approve high-stakes actions | AI plans, executes, and validates outcomes independently based on goals |
| CX Risk Profile | Lower risk of policy violations, hallucinations, or brand inconsistency | Higher risk surface without guardrails; errors can compound across workflows |
| Best For | Regulated industries, sensitive interactions (billing, claims, identity), compliance-heavy CX | High-volume, lower-stakes tasks (FAQ resolution, routing, standard follow-ups) once trust is established |
| Auditability | Full audit trails, explainable decisions, evidence generated as a byproduct of operation | Requires observability infrastructure built into the deployment stack |

What is Governed AI for Customer Experience?

Governed AI refers to AI systems that operate within explicitly defined policies, constraints, and oversight mechanisms. The key distinction: rules governing what AI can do, say, and access are enforced at runtime, not just configured at setup and forgotten.

This separates governed AI from both traditional rule-based automation (brittle and static) and fully autonomous AI (no real-time policy enforcement).

The Operating Model

Governed AI in CX enforces policies as live guardrails that intercept and evaluate every AI action before or as it happens. These policies cover:

- Tone and brand voice consistency

- Data access permissions and PII handling

- Escalation thresholds for human intervention

- Regulatory constraints specific to your industry

The AI still acts, but within a defined operating boundary. When a customer service AI attempts to access sensitive data or make a commitment, the governance layer checks that action against policy before it executes.
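The check described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Action` shape, the allowed action kinds, and the refund limit are hypothetical and do not represent any vendor's API.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate"  # hand off to a human before executing
    BLOCK = "block"


@dataclass(frozen=True)
class Action:
    """A proposed AI action (hypothetical shape, for illustration only)."""
    kind: str                  # e.g. "reply", "read_record", "issue_refund"
    touches_pii: bool = False
    amount: float = 0.0        # monetary commitment, if any


# Hypothetical policy values; real ones would come from compliance teams.
ALLOWED_KINDS = {"reply", "read_record", "issue_refund"}
REFUND_LIMIT = 50.0


def evaluate(action: Action, caller_may_access_pii: bool) -> Verdict:
    """Check a proposed action against policy before it executes."""
    if action.kind not in ALLOWED_KINDS:
        return Verdict.BLOCK
    if action.touches_pii and not caller_may_access_pii:
        return Verdict.BLOCK
    if action.kind == "issue_refund" and action.amount > REFUND_LIMIT:
        return Verdict.ESCALATE
    return Verdict.ALLOW
```

The point of the design is ordering: the verdict is computed before the action runs, so a blocked or escalated action never reaches the customer.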

Core Benefits

Governed AI delivers measurable CX outcomes:

- Maintains consistent brand voice across all channels and interactions

- Prevents hallucinated responses that create legal exposure

- Generates automatic audit trails for compliance requirements

- Supports incremental autonomy expansion as confidence in the system builds

According to research on HIPAA compliance, healthcare organizations must maintain continual, real-time monitoring of systems containing protected health information, a requirement that governed AI architectures address through continuous audit controls.
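One way to picture audit evidence generated as a byproduct of operation is a wrapper that records every call it guards. The sketch below is a simplified assumption, not a description of any specific product: `AUDIT_LOG` stands in for an append-only store, and the policy name and `send_billing_reply` function are hypothetical.

```python
import functools
import json
from datetime import datetime, timezone

AUDIT_LOG: list[str] = []  # stand-in for an append-only audit store


def audited(policy_id: str):
    """Wrap a function so every call emits an audit record as a side effect."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            # Arguments are deliberately left out of the record to avoid
            # copying customer data into the log.
            record = {
                "timestamp": datetime.now(timezone.utc).isoformat(),
                "action": func.__name__,
                "policy_id": policy_id,
            }
            try:
                result = func(*args, **kwargs)
                record["outcome"] = "ok"
                return result
            except Exception as exc:
                record["outcome"] = f"error: {type(exc).__name__}"
                raise
            finally:
                # Runs on success and on failure, so no call goes unlogged.
                AUDIT_LOG.append(json.dumps(record))
        return wrapper
    return decorator


@audited(policy_id="billing-disclosure-v1")  # hypothetical policy name
def send_billing_reply(customer_id: str, text: str) -> str:
    return f"sent to {customer_id}"
```

Because the record is written in the `finally` clause, failed actions leave evidence too, which is usually what an auditor asks about first.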

Where Governed AI Fits

Governed AI is essential for high-stakes CX workflows:

- Insurance: Claims processing communications, underwriting assistance, fraud detection support

- Healthcare: Patient support interactions, clinical documentation, appointment scheduling

- Financial Services: Account queries, transaction disputes, identity verification

Regulatory frameworks increasingly mandate this approach. The EU AI Act (Article 50) requires that users be informed when they are interacting with an AI system, and its record-keeping provisions (Article 12) require high-risk systems to log events automatically over the system's lifetime. California AB 316, effective January 2026, establishes that it shall not be a defense that AI autonomously caused harm, which makes governance frameworks a legal necessity rather than an option.

Meeting these mandates requires enforcement at the moment of execution, not after the fact. Trussed AI operates as a runtime control plane that applies these policies inline, without requiring changes to the underlying AI applications running in production.

What is Autonomous AI for Customer Experience?

Autonomous AI in CX refers to systems that pursue defined goals by perceiving inputs, planning multi-step actions, executing tasks across systems, and validating outcomes, with minimal real-time human involvement. The defining characteristic is goal-driven, adaptive decision-making. Speed is incidental.

The Operational Loop

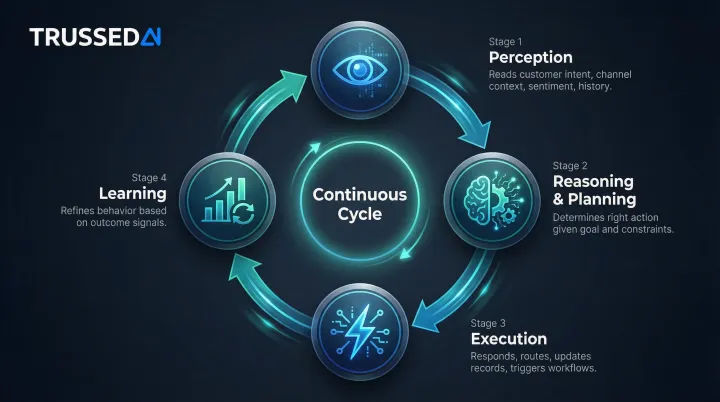

Autonomous CX AI operates through a continuous cycle:

- Perception: Reads customer intent, channel context, sentiment, and interaction history

- Reasoning and Planning: Determines the right action given the goal and current constraints

- Execution: Responds, routes, updates records, and triggers downstream workflows

- Learning: Refines behavior based on outcome signals over time

This loop operates continuously without waiting for human input at each step.
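The four stages can be sketched as plain functions chained together. This is a toy illustration only: the keyword table, the playbook, and the notion that a handoff counts as "unresolved" are assumptions made for the example, and a production system would use models rather than keyword matching.

```python
from collections import Counter

# Hypothetical intent keywords and playbook, for illustration only.
INTENT_KEYWORDS = {"where": "order_status", "refund": "refund_request"}
PLAYBOOK = {
    "order_status": "send_tracking_link",
    "refund_request": "open_refund_case",
}

outcomes = Counter()  # outcome signals collected by the learning step


def perceive(message: str) -> str:
    """Perception: map a customer message to an intent label."""
    lowered = message.lower()
    for keyword, intent in INTENT_KEYWORDS.items():
        if keyword in lowered:
            return intent
    return "unknown"


def plan(intent: str) -> str:
    """Reasoning and planning: pick an action, defaulting to a human handoff."""
    return PLAYBOOK.get(intent, "handoff_to_agent")


def execute(action: str) -> bool:
    """Execution: pretend to run the action; a handoff counts as unresolved."""
    return action != "handoff_to_agent"


def learn(intent: str, resolved: bool) -> None:
    """Learning: record the outcome so later tuning can use it."""
    outcomes[(intent, resolved)] += 1


def handle(message: str) -> str:
    """Run one pass of the loop with no human approval between steps."""
    intent = perceive(message)
    action = plan(intent)
    resolved = execute(action)
    learn(intent, resolved)
    return action
```

Calling `handle("Where is my order?")` runs all four stages in sequence; the absence of any approval step between `plan` and `execute` is what makes the loop autonomous.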

Core Benefits

When properly governed, autonomous AI delivers measurable advantages:

- Handles interaction volume without proportional headcount increases

- Personalizes responses across touchpoints in real time

- Resolves issues faster through immediate, automated response

- Detects problems proactively before customers need to escalate

Gartner predicts that by 2029, agentic AI will autonomously resolve 80% of common customer service issues without human intervention, leading to a 30% reduction in operational costs.

Where Autonomous AI Excels

Autonomous AI performs best in high-volume, lower-stakes interactions:

- Standard order inquiries and status updates

- FAQ resolution and knowledge base queries

- Ticket classification and intelligent routing

- Proactive outreach (shipping notifications, appointment reminders)

- Social media triage at scale

Agent Portfolio Design

Not all autonomous agents carry the same risk profile. Sprinklr's framework identifies four types:

| Agent Type | Scope | Risk Profile |
|---|---|---|
| Task-level | Completes well-defined tasks end-to-end (e.g., billing query resolution) | Low to moderate |
| Workflow-orchestrating | Coordinates multiple tasks across systems, determines sequencing and escalation | Moderate |
| Decision-support | Analyzes, prioritizes, and recommends alongside human teams | Moderate to high |
| Goal-driven | Assigned business objectives, empowered to plan and adjust strategies | High (requires mature controls) |

Bank of America's AI assistant Erica has surpassed 3.2 billion client interactions since launch. Internally, "Erica for Employees" is used by over 90% of employees and has reduced IT service desk calls by 50%.

Governed AI vs Autonomous AI: Which Approach Fits Your CX Strategy?

The choice between governed and autonomous AI isn't binary. It's a spectrum. CX leaders should evaluate five factors:

Evaluation Framework

1. Interaction Risk Profile

- What's the consequence of an AI error in this interaction?

- Does it involve PII, financial commitments, or medical information?

- Could a mistake create regulatory exposure or customer harm?

2. Regulatory Environment

- What compliance frameworks apply to your industry?

- Are there specific AI disclosure or logging requirements?

- Is there legal liability for AI-driven decisions?

3. AI Oversight Maturity

- Do you have observability infrastructure to detect AI errors quickly?

- Can you trace AI decisions back to specific policies and data inputs?

- Are audit trails automatically generated or manually reconstructed?

4. Interaction Volume and Variability

- How many customer interactions occur daily?

- Are they highly standardized or contextually unique?

- Can errors be detected and corrected quickly at scale?

5. Consequences of Error

- What happens if AI provides incorrect information?

- Can the error be easily reversed, or does it create lasting harm?

- Will customers know they're interacting with AI?

The Oversight Spectrum

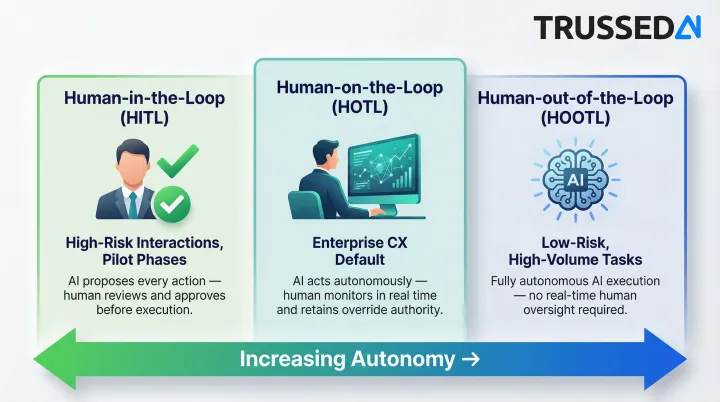

Splunk's framework defines three oversight tiers:

Human-in-the-Loop (HITL): AI proposes decisions; humans approve every action before execution. Best for high-risk interactions, financial approvals, and initial pilot phases where error tolerance is low.

Human-on-the-Loop (HOTL): AI acts autonomously, but humans monitor outputs and can intervene in real time. This is the operational default for most enterprise CX, covering triage, initial response, and standard workflows where speed matters but oversight still applies.

Human-out-of-the-Loop (HOOTL): AI is fully autonomous with no real-time oversight. Appropriate only for low-risk, high-volume repetitive tasks when guardrails and observability are mature.
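The three tiers can be read as a routing rule. The sketch below encodes the criteria from the descriptions above; the four boolean inputs are a hypothetical simplification, and a real deployment would derive them from risk assessments rather than flags.

```python
from enum import Enum


class Oversight(Enum):
    HITL = "human-in-the-loop"
    HOTL = "human-on-the-loop"
    HOOTL = "human-out-of-the-loop"


def select_oversight(
    high_risk: bool,
    in_pilot: bool,
    high_volume_repetitive: bool,
    observability_mature: bool,
) -> Oversight:
    """Pick an oversight tier for a workflow, checking the strictest case first."""
    # High-risk interactions and early pilots get approval on every action.
    if high_risk or in_pilot:
        return Oversight.HITL
    # Full autonomy only for repetitive work with mature guardrails.
    if high_volume_repetitive and observability_mature:
        return Oversight.HOOTL
    # Everything else: the AI acts, humans monitor and can intervene.
    return Oversight.HOTL
```

Checking the strictest condition first means a workflow that is both high-volume and high-risk still lands in HITL, which matches the intent of the tiers.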

Situational Recommendations

Choose governed AI as the foundation when:

- Operating in regulated industries (insurance, healthcare, financial services)

- Handling PII-heavy interactions

- Deploying AI for the first time in customer-facing workflows

- Regulatory accountability for AI decisions is legally defined

Expand autonomy (with governance as the underlying layer) when:

- Interaction volumes are high and tasks are well-scoped

- You have observability infrastructure to detect errors quickly

- Performance data demonstrates consistent policy compliance

- The cost of human review exceeds the risk of AI error
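The last two conditions lend themselves to a simple numeric gate. The sketch below is one possible reading, not a prescribed method: the thresholds are hypothetical defaults, and it simplifies by treating every non-compliant interaction as an error with a fixed cost.

```python
def should_expand_autonomy(
    compliant: int,
    total: int,
    review_cost: float,
    error_cost: float,
    min_compliance: float = 0.99,  # hypothetical threshold
    min_sample: int = 1000,        # hypothetical minimum evidence
) -> bool:
    """Return True when performance data supports removing human review.

    review_cost: cost of a human reviewing one interaction.
    error_cost:  assumed cost of one unreviewed AI error.
    """
    # Too little data to call compliance "consistent".
    if total < min_sample:
        return False
    compliance_rate = compliant / total
    if compliance_rate < min_compliance:
        return False
    # Expected cost per interaction if review is removed.
    expected_error_cost = (1 - compliance_rate) * error_cost
    return review_cost > expected_error_cost
```

For example, with 9,950 compliant interactions out of 10,000, a review cost of 2.0, and an error cost of 100.0, the expected error cost per interaction is about 0.5, so the gate opens. If one error cost 1,000.0 instead, the same data would not justify removing review.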

The Convergence Argument

In practice, the highest-performing enterprise CX deployments treat governance and autonomy as complementary rather than competing priorities. Governance becomes the infrastructure layer that makes expanding autonomy a calculated decision rather than a gamble.

"Well-governed AI systems should expand autonomy gradually as performance, monitoring, and confidence improve."

The implication for CX teams: start with tighter controls, instrument everything, and use real performance data to justify each step toward greater AI independence.

Risks of Getting the Balance Wrong

Too much autonomy without governance:

- Permission escalation and unauthorized data access

- Orphaned agent workflows operating without accountability

- Regulatory exposure from ungoverned AI decisions

- Brand damage from hallucinated or inconsistent responses

Too much restriction without intelligent governance:

- AI bottlenecked at human review queues

- Slow resolution times that frustrate customers

- Inability to scale CX operations cost-effectively

- Teams reverting to manual processes

The cost of imbalance shows up quickly. IDC research cited by DataRobot found that 96% of organizations deploying generative AI reported costs were higher than expected, with 71% admitting they have little to no control over where those costs originate. Without governance infrastructure in place before scaling, both operational risk and financial exposure compound faster than most teams anticipate.

The Real Cost of Ungoverned AI in CX

Governance failure in customer experience is a documented pattern, not a theoretical risk. A single AI agent that hallucinates a refund policy, makes an unauthorized commitment, or exposes PII creates immediate regulatory and reputational damage.

The Zombie Agent Problem

The subtler failure mode is the "zombie" or orphaned agent: an AI workflow that keeps executing after employees change roles, policies shift, or systems are decommissioned. Without centralized governance, these agents operate invisibly, making consequential decisions with no accountability. By the time organizations find them, the damage is done.
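A central registry makes this failure mode checkable. The sketch below assumes a hypothetical registry in which each agent has a named owner and a last policy review date; the field names and the 180-day review window are illustrative choices, not a standard.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class AgentRecord:
    """One entry in a hypothetical central agent registry."""
    name: str
    owner: str                 # employee accountable for the agent
    policy_reviewed_on: date   # last time its policies were reviewed


def find_orphans(
    agents: list[AgentRecord],
    active_employees: set[str],
    today: date,
    max_review_age: timedelta = timedelta(days=180),  # hypothetical window
) -> list[str]:
    """Return names of agents with no active owner or a stale policy review."""
    orphans = []
    for agent in agents:
        ownerless = agent.owner not in active_employees
        stale = today - agent.policy_reviewed_on > max_review_age
        if ownerless or stale:
            orphans.append(agent.name)
    return orphans
```

Run on a schedule, a check like this surfaces agents whose owner has left or whose policies have not been reviewed recently, before they make decisions nobody is accountable for.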

Regulatory Exposure is Accelerating

Regulatory frameworks are shifting from voluntary guidelines to strict legal liability:

California AB 316 (effective January 2026): It shall not be a defense that AI autonomously caused harm. Developers and users share liability for AI-driven outcomes.

EU AI Act (transparency requirements effective August 2026): High-risk AI systems must maintain automatic event logs over the system's lifetime, with minimum six-month retention.

HIPAA Security Rule: Healthcare organizations must implement audit controls that record and examine activity in real time rather than retroactively.

CFPB Guidance: Financial institutions using AI for credit decisions must provide specific, accurate reasons for adverse actions. Generic explanations are insufficient.

In sectors like insurance, healthcare, and financial services, an AI that communicates a wrong policy, denies a claim incorrectly, or mishandles a patient inquiry is a compliance event with direct legal consequences, not a UX edge case.

Privacy Litigation is Emerging

In Taylor v. ConverseNow Technologies, Inc. (N.D. Cal. 2025), a federal court allowed California Invasion of Privacy Act claims to proceed against a provider of an AI virtual assistant used for restaurant phone orders, highlighting risks of intercepting and recording customer PII without consent.

These cases share a common thread: the absence of runtime governance infrastructure. Addressing that gap is the practical challenge for enterprise CX teams operating AI at scale.

The Infrastructure Solution

Trussed AI's control plane is built for exactly this environment. It enforces policies in real time across every AI interaction, generates audit trails automatically, and maintains compliance violation rates below 1%. The platform deploys as a drop-in proxy, requiring no changes to existing applications.

Conclusion

Governed AI and autonomous AI are not competing philosophies. They are complementary capabilities. For CX leaders in regulated industries, governed AI provides the operational foundation that makes autonomous AI deployable at scale. The real question is how to sequence the path from a governed baseline to earned autonomy.

Organizations that invest in governance infrastructure now are not slowing down AI adoption. They are building the trust, auditability, and compliance posture that lets them expand AI autonomy faster and with less risk over time. In customer experience, where a single AI misstep can damage a relationship that took years to build, governance is a competitive advantage, not a cost center.

The enterprises that scale AI successfully in CX will be those that treat governance as the chassis, not the brake. In practice, that means:

- Deploying governed AI as the operational foundation

- Expanding autonomy incrementally as performance data builds confidence

- Maintaining continuous audit evidence as a byproduct of normal operation

This approach transforms AI governance from a compliance checkbox into an operational capability that enables speed, scale, and trust at once.

Frequently Asked Questions

Which AI is best for customer service?

There is no single "best" AI. The right choice depends on the interaction type, industry risk profile, and governance maturity. Governed AI is typically the right starting point for regulated or sensitive CX workflows, while greater autonomy can be layered in for high-volume, repeatable interactions once monitoring and performance baselines are in place.

What is the difference between autonomous AI and automated AI?

Automated AI executes predefined, rule-based logic deterministically, following scripts without adaptation. Autonomous AI is goal-driven and adaptive, reasoning about context and selecting its own course of action. Autonomous AI can handle variability and novel situations. Automated AI cannot.

What is autonomous CX?

Autonomous CX refers to customer experience workflows where AI agents independently handle interactions end-to-end, from perceiving customer intent to executing across systems, without requiring human approval at each step. Effective autonomous CX still requires governance guardrails to operate safely at enterprise scale.

What is the difference between AI governance and AI strategy?

AI strategy is the organizational plan for where and how AI will be deployed to create value. AI governance is the operational framework that defines how AI systems are controlled, monitored, and held accountable once deployed. Strategy decides the destination; governance determines whether you can operate safely at scale to get there.

Is ChatGPT autonomous?

ChatGPT in standard use responds to prompts but does not maintain persistent goals or execute tasks across systems independently. When integrated into agentic frameworks with tool access and memory, it can exhibit autonomous behavior, but this requires deliberate architecture and governance to deploy safely in enterprise CX environments.