Introduction

Financial services firms face an escalating compliance challenge: AI adoption is accelerating across broker-dealers, RIAs, and FinTech companies, but each AI-generated communication, model output, and autonomous agent action now falls under the same SEC and FINRA scrutiny as any human representative's conduct. Most firms are not prepared for that scrutiny.

The regulatory posture has hardened. FINRA's 2026 Annual Regulatory Oversight Report marks a clear pivot from guidance to accountability. Examiners are now asking firms to produce documentation of how their AI systems are supervised. The SEC has already begun enforcement actions, fining two investment advisers a combined $400,000 in March 2024 for false and misleading statements about their AI capabilities under the Marketing Rule.

This guide covers:

- The specific regulatory obligations that apply to AI systems

- The highest-impact use cases for compliance automation

- What features to demand from AI tools

- How to govern AI itself, which FINRA now treats as part of your supervisory chain

TLDR

- SEC and FINRA apply existing rules to AI the same way they apply them to registered representatives. Human or algorithm, the standard is identical.

- The highest-impact compliance use cases are communications surveillance, customer identification and suitability screening, trade surveillance and best execution monitoring, and regulatory change management

- FINRA's 2026 report shifts from observation to accountability: examiners now expect documented AI supervision, not just human oversight

- The critical compliance gap is deploying AI without governance infrastructure that can explain, log, and defend every automated decision to regulators

What SEC and FINRA Regulations Require When Using AI

FINRA Rule 3110, Supervision, and the Algorithm

FINRA's technology-neutral principle is unambiguous: existing supervisory obligations under Rule 3110 apply to AI systems just as they do to human representatives. If an AI tool is drafting communications, flagging suspicious activity, or streamlining supervisory workflows, it is now considered part of the firm's supervisory chain and will be examined as such.

FINRA's 2026 Annual Regulatory Oversight Report explicitly states: "FINRA's rules, which are intended to be technologically neutral, and the securities laws more generally, continue to apply when firms use GenAI or similar technologies in the course of their businesses, just as they apply when firms use any other technology or tool."

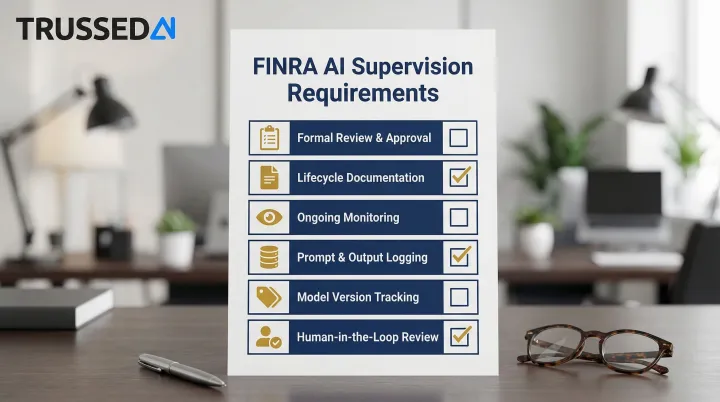

Examiner expectations have escalated significantly. Firms must now demonstrate:

- Formal review and approval processes for AI use cases

- Comprehensive documentation throughout the AI lifecycle

- Ongoing monitoring of prompts, outputs, and model performance

- Retained prompt and output logs for accountability and troubleshooting

- Records of which model version was used and when

- Validation and human-in-the-loop review of model outputs

The report clarifies: "If a firm is relying on Gen AI tools as part of its supervisory system, its policies and procedures may consider the integrity, reliability and accuracy of the AI model."
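As a rough illustration of what a retained log entry might contain, the sketch below stores the prompt, output, model version, reviewer, and timestamp in one record and computes a digest so later edits can be detected. The class, field names, and digest approach are assumptions made for this example, not a format prescribed by FINRA.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Optional
import hashlib
import json


@dataclass(frozen=True)
class AIInteractionRecord:
    """One logged AI interaction. All field names are illustrative."""
    use_case: str               # approved use case this call belongs to
    model_version: str          # which model version produced the output
    prompt: str
    output: str
    reviewed_by: Optional[str]  # human reviewer, if one signed off
    timestamp: str              # ISO 8601 string in UTC


def make_record(use_case, model_version, prompt, output, reviewed_by=None, now=None):
    """Build a record, stamping it with the current UTC time unless one is given."""
    now = now or datetime.now(timezone.utc)
    return AIInteractionRecord(
        use_case, model_version, prompt, output, reviewed_by, now.isoformat()
    )


def record_digest(record):
    """SHA-256 over the sorted-key JSON form, so any later edit changes the digest."""
    payload = json.dumps(asdict(record), sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()
```

Storing the digest alongside the record in a separate system is one way to show an examiner that a log entry has not been altered since it was written.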

SEC Marketing Rule, Recordkeeping, and Communications Obligations

The SEC Marketing Rule (206(4)-1) prohibits advertisements that include untrue statements or omit material facts necessary to avoid being misleading. AI-generated copy is not exempt. For firms using generative AI to draft outreach, research summaries, or proposals, this means every AI-generated communication must meet the same factual accuracy standards as human-written content.

Accuracy alone isn't enough. Regulators also require a paper trail. FINRA Rule 4511 requires firms to make and preserve books and records as mandated under FINRA rules and the Exchange Act. This obligation extends to AI-generated communications: firms must retain prompts, responses, timestamps, and sender/recipient details. Consumer AI tools that lack built-in archiving create an unauditable gap in required records, exposing firms to regulatory action.

FINRA has identified three risk categories drawing the most examiner scrutiny:

- Outputs that are confidently wrong, including hallucinations that could mislead clients or regulators

- Skewed responses from limited or inaccurate training data, introducing systematic bias

- Sensitive client data fed into unsecured AI platforms, creating privacy exposure

Regulators will not accept delegating responsibility to the model. Firms are fully accountable for every AI-generated output, which means governance, documentation, and human oversight must be built into AI workflows from the start.

Top AI Use Cases for SEC and FINRA Compliance

Communications Surveillance and Marketing Review

AI pre-screening tools analyze emails, marketing materials, social media posts, and client outreach before publication, flagging promissory language, missing disclosures, cherry-picked performance claims, and other FINRA Rule 2210 violations. A single non-compliant marketing asset reaching the public can trigger both regulatory action and lasting reputational damage.
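The simplest layer of such a pre-screen can be sketched with pattern matching. The patterns and the required disclosure text below are hypothetical examples, not a lexicon drawn from Rule 2210, and a production tool would rely on far richer language analysis.

```python
import re

# Illustrative patterns only; a real lexicon would come from compliance staff.
PROMISSORY_PATTERNS = {
    "guarantee": re.compile(r"\bguarantee[ds]?\b", re.IGNORECASE),
    "risk_free": re.compile(r"\brisk[- ]free\b", re.IGNORECASE),
    "cannot_lose": re.compile(r"\b(?:can(?:not|'t)|will never) lose\b", re.IGNORECASE),
}

# Hypothetical disclosure the firm requires on performance-related copy.
REQUIRED_DISCLOSURE = "past performance is not indicative of future results"


def prescreen(text):
    """Return a list of flags; an empty list means nothing was caught."""
    flags = [name for name, pattern in PROMISSORY_PATTERNS.items() if pattern.search(text)]
    if REQUIRED_DISCLOSURE not in text.lower():
        flags.append("missing_disclosure")
    return flags
```

A draft that returns any flag would be held for human review rather than published; an empty result means only that these particular checks passed.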

Smarsh's Noise Reduction Agent reduces false positives by up to 60%, saving reviewers 40+ hours per month. AI-native tools suppress low-risk communications, including spam, disclaimers, and newsletters, before they reach supervision queues, directly reducing review backlogs.

Automated archiving is a compliance feature requirement, not an add-on. Every message sent through a compliant AI platform should be logged automatically with timestamps, recipient details, and sender IDs, satisfying recordkeeping obligations and enabling supervisors to produce audit-ready records within seconds rather than days.

Customer Identification and Suitability Screening

For SEC- and FINRA-regulated firms, customer identification obligations extend well beyond opening an account. FINRA Rule 4512 requires broker-dealers to maintain current essential customer information, while suitability obligations under FINRA Rule 2111 and the SEC's Regulation Best Interest require firms to understand each customer's investment profile before making a recommendation. AI tools now automate the data collection and analysis that underpin both requirements.

Practical applications include:

- Automated collection and verification of customer identifying information at onboarding

- Continuous profile updates that flag material changes in customer circumstances relevant to suitability

- AI-assisted suitability screening that cross-references customer risk tolerance, investment horizon, and financial situation against recommended products

- Documentation of the reasonable-basis and customer-specific suitability analysis required under Reg BI
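The cross-referencing step can be sketched as a set of explicit checks that return written reasons, which also gives the firm something to document. The profile fields, risk levels, and 10% concentration limit are assumptions chosen for illustration; they are not thresholds taken from Rule 2111 or Reg BI.

```python
from dataclasses import dataclass

RISK_LEVELS = {"conservative": 1, "moderate": 2, "aggressive": 3}


@dataclass
class CustomerProfile:
    risk_tolerance: str      # a key in RISK_LEVELS
    horizon_years: int       # how long the customer expects to stay invested
    liquid_net_worth: float


@dataclass
class Product:
    name: str
    risk_level: str          # a key in RISK_LEVELS
    min_horizon_years: int
    min_investment: float


def screen(profile, product, max_share=0.10):
    """Return the reasons a recommendation needs human review (empty if none)."""
    reasons = []
    if RISK_LEVELS[product.risk_level] > RISK_LEVELS[profile.risk_tolerance]:
        reasons.append("product risk exceeds stated risk tolerance")
    if product.min_horizon_years > profile.horizon_years:
        reasons.append("product horizon exceeds customer horizon")
    # max_share is a hypothetical firm policy limit, not a regulatory figure.
    if product.min_investment > max_share * profile.liquid_net_worth:
        reasons.append("minimum investment exceeds concentration limit")
    return reasons
```

Returning reasons instead of a bare pass/fail keeps the decision with a human reviewer and leaves a record of why each recommendation was escalated.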

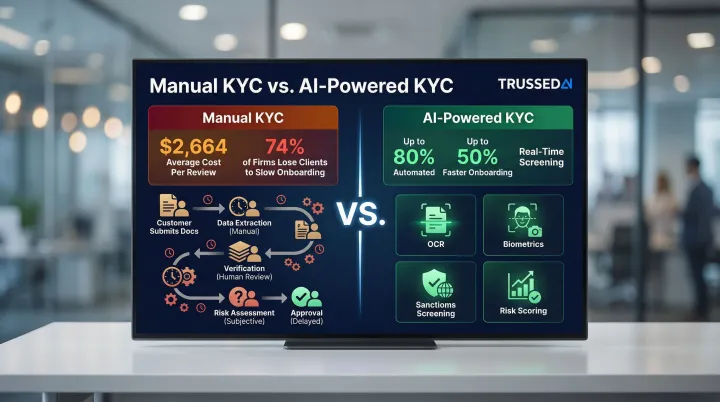

Customer information and suitability review costs an average of $2,664 per institutional investor account, and 74% of asset managers have lost clients due to slow onboarding. AI tools can automate up to 80% of the customer identification and suitability review steps under FINRA Rule 4512 and Reg BI and cut onboarding time by up to 50%.

Trade Surveillance and Best Execution Monitoring

ML-based trade surveillance learns individual customer and account behavior patterns and flags genuine anomalies rather than tripping static thresholds. Rule-based systems produce 85% to 95% false positive rates by comparison, generating alert fatigue that masks genuine misconduct in the noise.
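The difference between a static threshold and a per-account baseline can be shown with a small sketch. It uses a plain z-score as a stand-in for a trained model, and the threshold and cutoff values are assumptions for illustration only.

```python
from statistics import mean, stdev


def static_flag(amount, threshold=10_000):
    """Rule-based check: the same cutoff applies to every account."""
    return amount > threshold


def baseline_flag(history, amount, z_cutoff=3.0):
    """Flag when amount sits more than z_cutoff standard deviations
    above this account's own historical mean."""
    if len(history) < 2:
        return False  # not enough history to estimate a baseline
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return amount > mu  # flat history: anything larger is unusual
    return (amount - mu) / sigma > z_cutoff


# An institutional account that routinely trades around 50,000.
institutional = [50_000, 52_000, 48_000, 51_000, 49_000]
# A retail account that routinely trades around 200.
retail = [200, 250, 180, 220, 210]
```

With these sample histories, a 53,000 trade on the institutional account trips the static rule but not the baseline, while a 5,000 trade on the retail account passes the static rule and is flagged by the baseline. That is the false-positive and false-negative pattern described above.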

In SEC and FINRA-regulated contexts, two use cases illustrate the impact. First, AI-powered trade surveillance platforms analyze order flow and execution data in real time, detecting layering, spoofing, and front-running patterns that fall under FINRA Rule 3110 supervisory obligations and SEC market manipulation rules. FINRA's own surveillance infrastructure uses AI and advanced analytics to identify these patterns across billions of transactions daily, and it expects member firms to apply comparable rigor to their internal supervisory systems. Second, best execution monitoring tools use ML to evaluate whether orders are being routed to achieve the most favorable terms reasonably available, a core obligation under FINRA Rule 5310, while SEC Rule 606 separately requires disclosure of order routing practices. These tools benchmark executed prices against real-time market conditions and flag systematic deviations that warrant supervisory review, replacing manual sampling with continuous, documented analysis.
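The price-benchmarking step can be sketched as a comparison between each executed price and the quote midpoint at the time of execution. The midpoint benchmark, the field names, and the 5 basis point limit are assumptions for this example; firms choose their own benchmarks and tolerances.

```python
from dataclasses import dataclass


@dataclass
class Execution:
    order_id: str
    side: str        # "buy" or "sell"
    price: float     # executed price
    best_bid: float  # best bid at execution time
    best_ask: float  # best ask at execution time


def slippage_bps(ex):
    """Cost versus the quote midpoint in basis points; positive is worse for the customer."""
    mid = (ex.best_bid + ex.best_ask) / 2
    signed = ex.price - mid if ex.side == "buy" else mid - ex.price
    return signed / mid * 10_000


def flag_for_review(executions, limit_bps=5.0):
    """Return the order IDs whose slippage exceeds the (hypothetical) limit."""
    return [ex.order_id for ex in executions if slippage_bps(ex) > limit_bps]
```

Running this over every execution, not a sample, and storing the results is what turns periodic manual review into continuous, documented analysis.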

Regulatory Change Management

NLP and LLM-driven regulatory intelligence tools scan publications from hundreds of regulators daily, summarize relevant changes, and automatically map updates to internal policies, procedures, and controls. Compliance teams tracked over 201,200 regulatory developments in 2024 alone, roughly 550 per day, a volume no manual review process can absorb.

AI-driven tools handle this intake automatically, surfacing only the changes that affect your specific policies and controls, so compliance teams stay current on SEC and FINRA guidance without adding headcount to do it.

How Wealth Management Firms and Financial Advisors Are Using AI

Wealth managers are using AI to build real-time client profiles from account data, browsing behavior, past communications, and social signals. This gives registered representatives a sharper basis for personalized outreach, with compliance controls embedded to keep the AI from crossing into discretionary investment advice territory without proper oversight.

According to Broadridge's 2024 Digital Transformation Study, 80% of wealth management firms project active AI use by 2026, up from 31% at the time of the survey. Firms find AI brings the most value to communications and messaging (30%), research (20%), marketing/sales (15%), and data synthesis (14%).

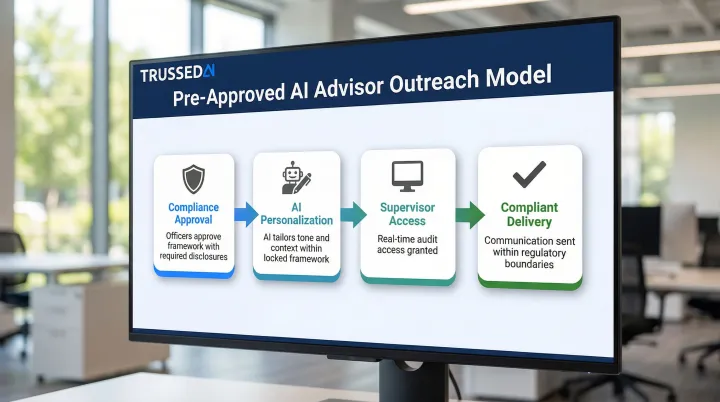

The pre-approved template model for AI-assisted advisor outreach works as follows:

- Compliance officers approve a framework with required disclosures and locked language

- AI personalizes tone and context within that framework

- Supervisors get real-time audit access

This approach reduces back-and-forth compliance review cycles while keeping every communication within regulatory boundaries.
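A minimal sketch of the template model, assuming a single locked disclosure and two personalization slots. The disclosure wording and function names are hypothetical and stand in for whatever language a firm's compliance officers approve.

```python
from string import Template

# Approved by compliance; neither advisors nor the AI may alter this text (illustrative wording).
LOCKED_BLOCKS = [
    "Investing involves risk, including possible loss of principal.",
]

APPROVED_TEMPLATE = Template(
    "Hi $first_name,\n\n$personalized_body\n\n" + "\n".join(LOCKED_BLOCKS)
)


def build_message(first_name, personalized_body):
    """Fill only the personalization slots of the approved framework."""
    return APPROVED_TEMPLATE.substitute(
        first_name=first_name, personalized_body=personalized_body
    )


def is_within_framework(message):
    """True only if every locked block appears verbatim in the final message."""
    return all(block in message for block in LOCKED_BLOCKS)
```

Running `is_within_framework` on the final text just before sending catches any edit made after the template was filled, whether by a person or by the model.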

Beyond client communications, firms are adopting AI for portfolio management support and smart order routing, though both areas carry heightened regulatory scrutiny. FINRA warns that models trained on historical data can fail to anticipate unusual market conditions, making human oversight essential.

In 2023, the SEC proposed rules that would have required broker-dealers and investment advisers to eliminate or neutralize conflicts of interest tied to predictive data analytics and AI, though it withdrew the proposal in 2025. The SEC still expects firms to maintain human review processes for any AI outputs that influence trading or investment recommendations.

What to Look for in AI Compliance Tools for Financial Services

Automatic Audit Trail and Evidence Generation

The tool should log every AI interaction, decision, and output as a byproduct of normal operation, not require manual export or after-the-fact reconstruction. Examiners expect firms to produce complete communication histories on demand. Tools that cannot deliver this create direct regulatory exposure.
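One way to make logging a byproduct of normal operation is to wrap every model-calling function so the record is written whenever the function runs. The sketch below uses a Python decorator and an in-memory list as a stand-in for a write-once archive; both the names and the storage choice are assumptions for illustration.

```python
import functools
from datetime import datetime, timezone

AUDIT_LOG = []  # stand-in for a write-once archive (assumption)


def audited(model_version):
    """Wrap a model-calling function so each call is logged automatically."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(prompt):
            output = func(prompt)
            AUDIT_LOG.append({
                "function": func.__name__,
                "model_version": model_version,
                "prompt": prompt,
                "output": output,
                "timestamp": datetime.now(timezone.utc).isoformat(),
            })
            return output
        return wrapper
    return decorator


@audited(model_version="example-model-v1")
def draft_reply(prompt):
    # Placeholder for a real model call.
    return f"DRAFT: {prompt}"
```

Because the caller never has to remember to log, there is no code path that produces an output without also producing a record.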

Explainability and Human-in-the-Loop Design

SEC and FINRA examiners will ask not only what the AI decided, but why. Look for tools that:

- Document the data and logic behind outputs

- Support human review checkpoints in regulated workflows

- Do not operate fully autonomously for decisions that carry supervisory or suitability implications

Security Certifications and Data Handling

Client data fed into AI systems must be encrypted, access-controlled, and protected from exposure to consumer AI platforms. At a minimum, require enterprise security certifications that demonstrate sustained control effectiveness.

Verify these independently rather than relying on vendor claims.

Beyond compliance requirements, operational fit matters too.

Drop-In Integration and Enterprise Performance

Compliance tools should integrate with existing workflows without requiring firms to rebuild their application stack. Evaluate:

- Latency impact: governance layers should add less than 20ms to avoid disrupting production systems

- Scalability: verify the tool handles increasing communication volume as the firm grows without performance degradation
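The latency criterion can be checked during a pilot by comparing response times with and without the governance layer. The sketch below compares 95th-percentile latencies against the 20ms budget; the choice of p95 and the function names are assumptions, and a difference of percentiles is only a rough estimate of added overhead.

```python
from statistics import quantiles


def p95(samples_ms):
    """95th percentile of a list of latency samples in milliseconds."""
    # n=20 yields 19 cut points; the last one is the 95th percentile.
    return quantiles(samples_ms, n=20, method="inclusive")[-1]


def within_budget(baseline_ms, governed_ms, budget_ms=20.0):
    """Return (estimated overhead in ms, whether it is under the budget)."""
    overhead = p95(governed_ms) - p95(baseline_ms)
    return overhead, overhead < budget_ms
```

Collect `baseline_ms` from calls made directly to the model and `governed_ms` from the same calls routed through the governance layer, ideally under production-like load.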

Governing the AI That Powers Your Compliance Program

FINRA has surfaced a core challenge: firms often believe they are not using AI, when in fact generative AI capabilities are already embedded in vendor products, internal tools, and third-party platforms. FINRA's 2026 report expects firms to inventory all AI use cases, map each to relevant supervisory and recordkeeping obligations, and prepare documentation that answers the examiner question: "Who is supervising the algorithm?"

The specific governance components FINRA looks for include:

- Pre-deployment testing and validation

- Model version tracking

- Ongoing monitoring of prompts and outputs

- Human review of AI outputs in regulated workflows

- Comprehensive documentation throughout the AI lifecycle

Meeting these requirements across the full AI stack requires more than point solutions. Customer identification tools, surveillance platforms, and regulatory intelligence software each address a specific use case, but none of them enforces policy across all models, agents, and workflows simultaneously. That's the function of an AI governance control plane: the infrastructure layer beneath every tool that satisfies FINRA's supervision expectations at the enterprise level.

Trussed AI builds this control plane for financial firms. The platform enforces policies at runtime and generates audit-ready evidence automatically, capturing policy evaluation results, model version, timestamp, and data lineage for every governed interaction.

Documented outcomes from governed deployments include a 50% reduction in manual governance workload and a compliance violation rate below 1%.

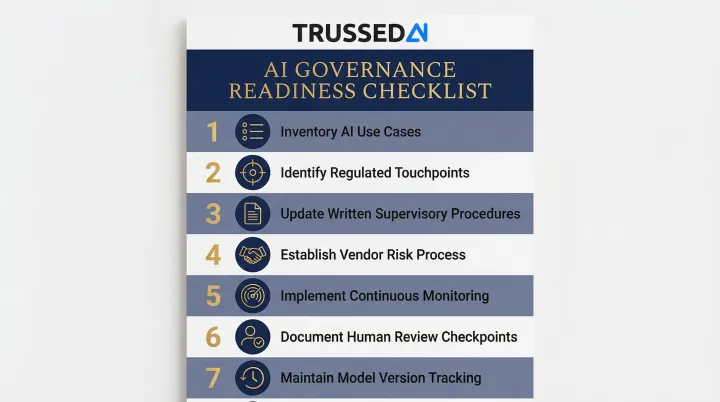

Practical Governance Readiness Checklist:

- Inventory current and planned AI use cases across all departments and functions

- Identify which use cases touch supervised communications, books and records, or investment recommendations

- Update written supervisory procedures to cover AI systems, not just human representatives

- Establish a vendor risk process for third-party AI tools, including confirming whether vendors have embedded generative AI without disclosure

- Implement continuous monitoring and audit logging for all AI interactions

- Document human review checkpoints within regulated workflows

- Maintain model version tracking and change management processes
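The checklist can be tracked as a simple inventory in which each AI use case records its regulated touchpoints and the controls already in place. The structure below is a hypothetical sketch; the field names and gap descriptions are illustrative and would be adapted to a firm's own written supervisory procedures.

```python
from dataclasses import dataclass, field

# Touchpoints that bring a use case under supervisory or recordkeeping rules.
REGULATED_TOUCHPOINTS = {"communications", "books_and_records", "recommendations"}


@dataclass
class AIUseCase:
    name: str
    vendor: str
    touchpoints: set = field(default_factory=set)
    in_wsp: bool = False                 # covered by written supervisory procedures
    audit_logging: bool = False
    human_review: bool = False
    model_version_tracked: bool = False


def touches_regulated_activity(use_case):
    """True if the use case involves any regulated touchpoint."""
    return bool(use_case.touchpoints & REGULATED_TOUCHPOINTS)


def readiness_gaps(use_case):
    """List the checklist items still open for one inventoried use case."""
    checks = {
        "add to written supervisory procedures": use_case.in_wsp,
        "enable audit logging": use_case.audit_logging,
        "document human review checkpoint": use_case.human_review,
        "track model version": use_case.model_version_tracked,
    }
    return [item for item, done in checks.items() if not done]
```

Sorting the inventory so that use cases with regulated touchpoints and open gaps come first gives compliance teams a prioritized remediation list.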

Frequently Asked Questions

Does FINRA use AI?

Yes, FINRA uses AI and advanced analytics for market surveillance, pattern detection, and examination analysis as part of its FINRA Forward initiative. This is precisely why FINRA expects member firms to govern AI with comparable rigor. Regulators are now examining AI-driven firm activity with AI-powered tools of their own.

How are wealth management firms using AI?

Wealth managers use AI primarily for real-time client profiling, automating customer identification and onboarding workflows, monitoring communications for compliance, and supporting portfolio management. All applications operate within supervisory frameworks designed to satisfy FINRA and SEC oversight requirements.

What AI tools are financial advisors using?

Advisors are adopting purpose-built tools for compliant AI-assisted outreach with built-in archiving and pre-approved templates, regulatory change monitoring, communications surveillance, and audit trail generation. These tools are distinct from consumer AI platforms that lack the governance features required in regulated environments.

What is FINRA Rule 3110 and how does it apply to AI?

Rule 3110 requires member firms to establish and maintain a system to supervise the activities of their associated persons that is reasonably designed to achieve compliance with securities laws and FINRA rules. FINRA has clarified this obligation extends to AI systems and their outputs, meaning firms must document how AI is supervised, not just how human representatives are supervised. That includes maintaining logs, tracking model versions, and implementing human review checkpoints.

Can AI-generated compliance evidence satisfy SEC and FINRA audit requirements?

Regulators increasingly accept AI-generated evidence when it is transparent, traceable, and backed by human oversight. They want to see not just the output, but the data, logic, and review process behind it. Tools that auto-generate timestamped, auditable logs for every interaction meet this standard and let firms respond to examiner requests in seconds rather than weeks.

What are the biggest risks of using AI for SEC and FINRA compliance?

The four primary risks are:

- Hallucinations producing inaccurate compliance decisions

- Model bias from skewed training data

- Data privacy exposure from feeding client information into unsecured platforms

- Lack of explainability when regulators ask firms to justify automated decisions

Each risk requires a governance response, including policy enforcement, human review checkpoints, and continuous monitoring, not just a one-time technical fix.