Introduction

Compliance requirements in financial services are growing faster than human teams can manage. Financial crime compliance costs reached $61 billion in the U.S. and Canada in 2024, and Suspicious Activity Report (SAR) filings exceeded 4.1 million across all filing groups in 2025, a 7.66% increase year-over-year.

Compliance now consumes 42% of C-suite time, and employee hours dedicated to regulatory mandates rose 61% between 2013 and 2023.

Transaction volumes are exploding, communications now span Slack to Zoom, and cross-jurisdictional regulations multiply faster than policy teams can absorb them. These pressures create bottlenecks that manual processes cannot resolve. Financial institutions face a choice: scale compliance infrastructure at unsustainable cost, or transform how compliance work gets done.

This article examines how AI is shifting compliance from a reactive, manual function to a proactive, continuous capability. We'll cover use cases from KYC and AML to regulatory reporting, explore how AI enhances fraud detection, and address the emerging governance challenge: how do you govern the AI systems doing the compliance work?

TLDR

- AI automates labor-intensive compliance tasks including KYC, AML monitoring, fraud detection, and regulatory reporting with measurable efficiency gains

- Machine learning models reduce AML false positives by 40-60% compared to rule-based systems, freeing analysts to focus on genuine threats

- AI adoption in KYC/AML operations surged from 42% in 2024 to 82% in 2025, reflecting industry-wide recognition of AI's compliance value

- Deploying AI for compliance introduces a meta-governance challenge: the AI systems themselves need monitoring, explainability, and audit trails

- Human oversight remains mandatory; regulators require human accountability for final decisions, with AI augmenting rather than replacing compliance officers

What Is AI Regulatory Compliance and RegTech?

AI regulatory compliance refers to using machine learning, natural language processing, and automation to help financial institutions meet regulatory obligations across KYC, AML, fraud monitoring, reporting, and conduct surveillance. Rather than replacing compliance teams, these technologies handle high-volume pattern detection and evidence generation, enabling human officers to focus on judgment-intensive decisions.

RegTech represents a distinct category: technology solutions purpose-built to help firms manage regulatory requirements efficiently and at scale. The global RegTech market was valued at $24.34 billion in 2025 and is projected to reach $112.10 billion by 2033, growing at 21.1% annually. RegTech serves as the tooling layer that operationalizes compliance within fintech products and institutions, the infrastructure that makes compliance scalable.

AI suits compliance work precisely because compliance is a data-pattern problem. The volume, velocity, and variety of financial data, including transactions, communications, documents, and identity records, exceeds what manual processes can reliably handle.

Reported use of advanced AI tools in KYC/AML operations surged from 42% in 2024 to 82% in 2025, with regional adoption rates reflecting how quickly this shift has taken hold:

- Singapore: 92% of institutions using advanced AI tools

- United States: 79% adoption across surveyed firms

- United Kingdom: 77% adoption

High adoption numbers tell only part of the story, though. Automation of periodic KYC reviews averages roughly one-third across surveyed institutions, meaning most firms are still managing the bulk of review work manually. That gap is where AI integration has the most immediate room to scale.

Key AI Use Cases Transforming Fintech Compliance

KYC Automation

AI models validate customer identity documents, match faces against ID records, and detect synthetic or fraudulent documentation in real time, dramatically reducing onboarding friction while maintaining regulatory standards. This enables fintechs to scale customer acquisition without scaling compliance headcount proportionally.

The business impact is substantial. In 2025, 70% of financial institutions worldwide reported losing clients due to slow and inefficient onboarding, an increase from 67% in 2024 and 48% in 2023. Average annual spend on AML/KYC operations stands at $72.9 million per firm globally.

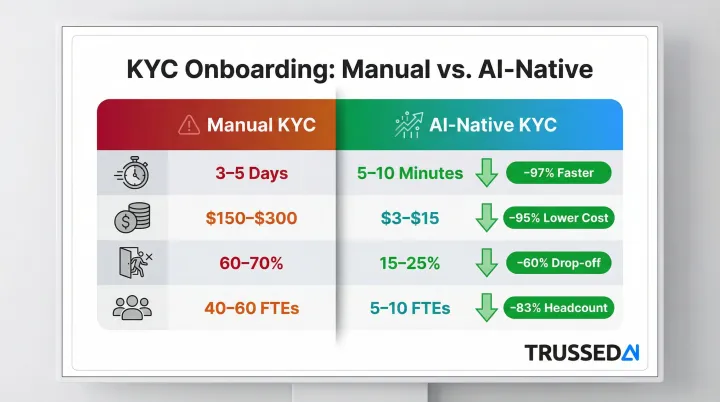

AI-native KYC processes demonstrate measurable improvements:

- Time to account: 5-10 minutes vs. 3-5 days (99% faster)

- Cost per onboarding: $3-$15 vs. $150-$300 (95% lower)

- Abandonment rate: 15-25% vs. 60-70% (a 45-55 percentage-point improvement)

- Compliance staff required: 5-10 FTEs vs. 40-60 FTEs (85% reduction)

The result: onboarding stops being a compliance liability and becomes a competitive differentiator.

AML Transaction Monitoring

Traditional rule-based AML systems apply static thresholds that generate false positive rates between 85% and 95%, and by some estimates only 1-5% of alerts ultimately result in confirmed suspicious activity or SARs.

While regulators estimate two hours to file a SAR, independent studies indicate the real burden can stretch to 22 hours per SAR when factoring in investigation, documentation, and review cycles.

AI-powered behavioral models learn what constitutes normal activity per customer and flag contextual deviations. Industry reviews suggest AI-powered fraud management platforms can reduce false positives by 40-60% compared to traditional methods. One leading financial institution improved suspicious activity identification by up to 40% and efficiency by up to 30% by replacing rule-based tools with machine learning models.
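To make the per-customer baseline idea concrete, here is a minimal sketch that uses a simple z-score against each customer's own transaction history. The function name, the threshold of 3.0, and the sample histories are illustrative assumptions, not details from any particular vendor's model; production systems typically learn far richer behavioral features.

```python
from statistics import mean, stdev


def flag_deviation(history, amount, z_threshold=3.0):
    """Return True when `amount` is far outside this customer's own history.

    `z_threshold` is a hypothetical tuning parameter chosen for illustration.
    """
    if len(history) < 2:
        return False  # not enough data to form a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return amount != mu
    return abs(amount - mu) / sigma > z_threshold


# The same $9,000 transfer evaluated against two different baselines.
retail_history = [120.0, 80.0, 150.0, 95.0, 110.0]
business_history = [8500.0, 9200.0, 10100.0, 8800.0, 9500.0]

print(flag_deviation(retail_history, 9000.0))    # True: far outside this customer's norm
print(flag_deviation(business_history, 9000.0))  # False: routine for this customer
```

A static rule such as "flag anything over $5,000" would alert on both transfers; the baseline approach alerts only on the one that is unusual for that customer.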

The operational impact is significant: a financial institution processing 10,000 alerts daily with a 90% false positive rate and 30 minutes per alert investigation generates 4,500 analyst hours spent on non-actionable investigations daily.
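The arithmetic behind that figure is easy to reproduce. The sketch below recomputes it and then, purely as an illustration, applies the 40-60% false positive reduction cited above, under the simplifying assumption that the reduction scales the number of false positive alerts proportionally.

```python
def wasted_analyst_hours(alerts_per_day, false_positive_rate, minutes_per_alert):
    """Hours per day spent investigating alerts that turn out to be non-actionable."""
    false_positives = alerts_per_day * false_positive_rate
    return false_positives * minutes_per_alert / 60


baseline = wasted_analyst_hours(10_000, 0.90, 30)
print(round(baseline))  # 4500

# Illustrative only: assumes a reduction applies proportionally to false positive volume.
for reduction in (0.40, 0.60):
    remaining = baseline * (1 - reduction)
    print(f"{reduction:.0%} fewer false positives -> {round(remaining)} hours/day")
```

Under that assumption, the daily burden would fall from 4,500 hours to somewhere between 1,800 and 2,700 hours.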

Digital Communications Governance

Modern workplaces use multiple collaboration platforms, including Slack, Teams, and Zoom, making manual review impossible. AI analyzes voice, video, chat, and email across these platforms to detect compliance risks including missing disclosures, market-sensitive information in screen shares, or suspicious communication patterns.

The surveillance challenge is growing. In a 2025 survey, 74% of financial services respondents reported it is likely their employees are using unmonitored communications channels, up from 66% in 2022. Meanwhile, 99% of financial services firms plan to expand AI use in communications, yet 88% cite challenges with AI governance and security.

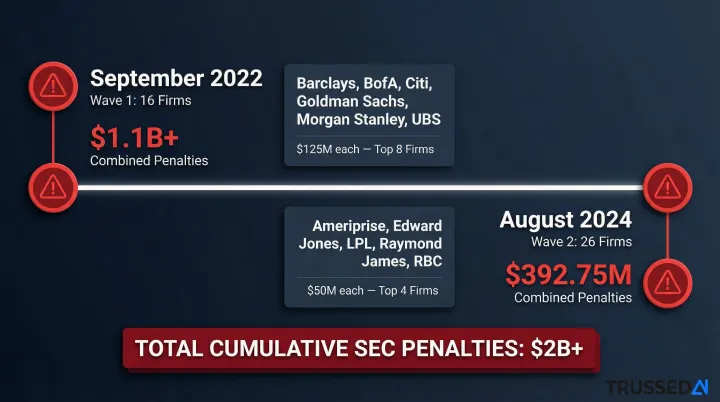

Regulators have demonstrated zero tolerance for unmonitored communications. Since December 2021, the SEC has charged more than 100 firms and imposed over $2 billion in penalties for recordkeeping failures related to off-channel communications. Major enforcement actions include:

- September 2022: 15 broker-dealers and 1 investment adviser (including Barclays, BofA, Citi, Goldman Sachs, Morgan Stanley, UBS) paid over $1.1 billion combined ($125 million each for top 8 firms)

- August 2024: 26 broker-dealers and investment advisers (including Ameriprise, Edward Jones, LPL, Raymond James, RBC) paid $392.75 million combined ($50 million each for top 4 firms)

At $50-$125 million per firm, the cost of unmonitored channels dwarfs the investment in AI-driven surveillance.

Risk Assessment and Conduct Monitoring

AI enables continuous behavioral analytics across communications, transactions, and participant networks. This shifts compliance from periodic audits to always-on monitoring. In practice, that means detecting risks in days or hours rather than discovering violations months later during a manual review cycle.

Key signals AI surfaces in real time include:

- Clusters of anomalous communication patterns between unexpected participants

- Transaction sequences inconsistent with a customer's established behavior

- Cross-channel activity that no single-system audit would catch

GenAI Governance as a New Compliance Frontier

As firms deploy generative AI tools, including Copilot, AI meeting assistants, and LLM-based workflows, in day-to-day operations, these tools generate prompts and responses that may contain sensitive customer data, intellectual property, or present cybersecurity risk. Every prompt sent to an LLM and every response returned is now a potential compliance event, and most firms have no systematic way to capture, review, or audit that activity.

That creates a structural problem: the same AI tools deployed to accelerate compliance work can introduce new regulatory exposure if they aren't themselves governed. Ensuring AI systems are compliant, monitored, and auditable isn't optional. It's the next layer of the compliance stack that regulated firms need to build.

How AI Enhances Fraud Detection and Financial Crime Prevention

The Evolving Fraud Threat Landscape

Bad actors now use AI-powered tools including deepfake technology to defeat biometric checks and impersonate customers. Multi-vector fraud attacks combining multiple techniques increased by 180% year-over-year in 2025, while deepfake-related fraud losses exceeded $410 million in the first half of 2025 alone.

Nearly 70% of surveyed fraud-management and AML officials state that criminals are more adept at using AI to commit financial crime than banks are at using the technology to stop it. The fraud detection arms race means that static, rule-based defenses are consistently outpaced.

Multi-Variable Real-Time Scoring

Modern AI fraud systems analyze hundreds of variables simultaneously in sub-second time to assign per-customer risk scores dynamically. Variables analyzed in real time include:

- Transaction pattern variances and geolocation consistency

- Device fingerprinting and biometric signals

- Historical fraud pattern matching across accounts

- Obfuscation attempts (misspellings, code words, unusual formatting) that defeat lexicon-based tools

This contextual intelligence outperforms rule-based systems by recognizing patterns across seemingly unrelated data points, connections human analysts would miss.
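As a rough illustration of multi-variable scoring, the sketch below combines a handful of signals into a single risk score with a logistic function. The signal names, weights, and bias are hypothetical values picked for the example; real systems learn their parameters from labeled data and evaluate hundreds of variables rather than four.

```python
import math

# Hypothetical weights for illustration; a real model would learn these from data.
WEIGHTS = {
    "amount_zscore": 0.8,          # deviation from the customer's usual amounts
    "geo_mismatch": 1.5,           # 1.0 if location is inconsistent with history
    "new_device": 1.2,             # 1.0 if the device fingerprint is unseen
    "linked_fraud_accounts": 2.0,  # count of links to known fraud patterns
}
BIAS = -4.0


def risk_score(signals):
    """Map a dict of signal values to a score between 0 and 1."""
    z = BIAS + sum(WEIGHTS[name] * signals.get(name, 0.0) for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))


routine = {"amount_zscore": 0.2}
suspicious = {
    "amount_zscore": 3.0,
    "geo_mismatch": 1.0,
    "new_device": 1.0,
    "linked_fraud_accounts": 1.0,
}

print(f"routine:    {risk_score(routine):.3f}")
print(f"suspicious: {risk_score(suspicious):.3f}")
```

No single signal in the suspicious example is decisive on its own; the score is high because several moderate signals coincide, which is the pattern static single-variable rules tend to miss.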

False Positive Reduction and Business Impact

Lower false positive rates mean fewer legitimate transactions blocked, improving customer experience and reducing operational costs. False declines cost online merchants an estimated $443 billion annually, while approximately 39% of cardholders abandon a merchant or issuer after experiencing a false decline.

The cost multiplier is significant. The average merchant spends $4.60 on each dollar lost to fraud, representing a 32% increase since 2022. In North America, financial institutions now lose an average of more than $5 for every $1 lost to fraud, up 25% from four years ago.

Identity Verification and Synthetic Identity Detection

Reducing false positives is only half the challenge. AI models must also catch fraud that traditional verification misses entirely, particularly synthetic identities (combinations of real and fabricated identity elements) and deepfake-assisted account takeovers.

More than 70% of surveyed fraud and compliance officials reported their company identified synthetic identities during client onboarding in the past year, while 73% of financial institutions report a rise in synthetic identity fraud.

AI-Powered Regulatory Reporting and Audit Readiness

The Reporting Burden

Fintech firms must submit structured, timely reports to regulators across domains including liquidity risk, fraud, data privacy, and AML. As organizations scale, aggregating data from disparate systems and formatting it correctly for multiple jurisdictions becomes a major operational challenge. The financial industry spends approximately $70 billion annually on risk, risk data, and regulatory reporting.

Automated Reporting Workflows

NLP tools extract relevant data from contracts, communications, and system logs, while data aggregation algorithms consolidate information across platforms. Some advanced systems offer predictive analytics to anticipate regulatory outcomes or flag pre-submission gaps.

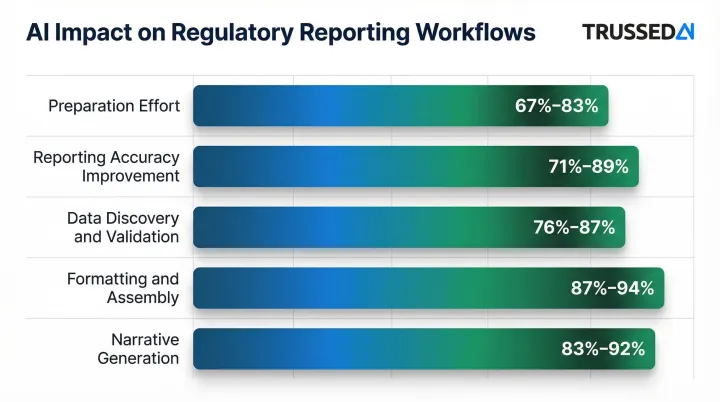

Advanced analytics for regulatory reporting delivers measurable results:

- Preparation effort reduction: 67-83%

- Reporting accuracy improvement: 71-89%

- Data discovery and validation effort reduction: 76-87%

- Formatting and assembly effort reduction: 87-94%

- Narrative generation effort reduction: 83-92%

These efficiency gains reduce manual effort and submission errors, giving compliance teams capacity for higher-value work like regulatory engagement and policy interpretation.

AI-Generated Audit Trails

Well-governed AI compliance systems generate explainability artifacts automatically, including:

- Plain-language rationale for each detection or decision

- Flagged evidence with precise location markers

- Interaction logs that capture the full decision chain

These audit-ready outputs let compliance officers verify and defend decisions to regulators without reconstructing evidence manually. When a regulatory inquiry arrives, organizations can produce immediate, structured documentation covering transaction monitoring decisions, KYC determinations, or reporting outputs, rather than spending weeks gathering it after the fact.
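One way to picture such an artifact is as a structured record emitted alongside every decision. The sketch below is an assumption about what that record might contain, based on the three elements listed above; the class names, field names, and sample values are hypothetical rather than a standard schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Evidence:
    source: str    # hypothetical identifier of the message or transaction
    location: str  # where in the source the flagged content sits
    excerpt: str


@dataclass(frozen=True)
class AuditRecord:
    decision_id: str
    model_version: str
    decision: str
    rationale: str   # plain-language explanation of the decision
    evidence: tuple  # Evidence items with location markers
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self):
        """Serialize the full record so it can be stored or handed to a reviewer."""
        return json.dumps(asdict(self), sort_keys=True)


record = AuditRecord(
    decision_id="alert-0001",
    model_version="aml-monitor-1.4.2",
    decision="escalate",
    rationale="Transfer amount is far above this customer's 90-day baseline.",
    evidence=(Evidence("txn-789", "ledger row 14", "wire transfer 9000.00 USD"),),
)
print(record.to_json())
```

Because the record is immutable and serialized at decision time, the evidence a regulator asks for months later is the evidence that existed when the decision was made.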

Governing the AI That Governs Compliance

The Meta-Governance Problem

As fintech firms deploy AI across compliance workflows, including transaction monitoring agents, KYC pipelines, and reporting automation, a question emerges: who governs those AI systems themselves?

AI models can drift, develop bias, produce unexplainable outputs, or expose sensitive data. Left unmonitored, each of these creates new regulatory risk. Static governance policies documented in PDFs and reviewed periodically can't keep pace with AI systems making real-time decisions. Those systems require real-time governance.
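Drift, in particular, can be checked continuously rather than at review time. The article does not name a specific method; one widely used option is the population stability index (PSI), sketched below, which compares the distribution of a model's scores at validation time with the distribution seen in production. The bin count and the 0.25 alert threshold are common rules of thumb and are assumptions here, not regulatory requirements.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """Compare two samples of scores; larger values indicate a bigger shift."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins
    if width == 0:
        width = 1.0  # degenerate case: all expected values identical

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[max(0, min(bins - 1, idx))] += 1  # clamp out-of-range values
        # A small floor avoids taking log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


validation_scores = [i / 100 for i in range(100)]       # spread across 0.00-0.99
production_scores = [0.5 + i / 200 for i in range(100)]  # shifted toward high scores

psi = population_stability_index(validation_scores, production_scores)
print(f"PSI = {psi:.2f}", "-> investigate drift" if psi > 0.25 else "-> stable")
```

Running a check like this on a schedule turns "the model may have drifted" from a periodic audit finding into an alert that fires when the shift happens.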

What AI Governance for Compliance Systems Requires

Effective AI governance for compliance workflows demands:

- Real-time policy enforcement across models and agents, preventing unauthorized actions before they occur

- Continuous monitoring for model drift, bias, and accuracy degradation

- Complete audit trails of every governed AI interaction, automatically maintained

- Explainability outputs that satisfy regulatory scrutiny, documenting not just what decisions were made but why
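The first and third requirements can be sketched together: a gate that evaluates every proposed action against policy before it runs, and records the outcome either way. Everything in this example (the `Action` and `PolicyGate` names, the two policies, the tool names) is hypothetical and intended only to show the pattern, not any product's actual API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    agent: str
    tool: str
    payload: dict


def no_pii_to_external_tools(action):
    """Hypothetical policy: block SSNs from leaving via external tools."""
    if action.tool in {"send_email", "post_webhook"} and "ssn" in action.payload:
        return "PII field 'ssn' may not be sent to an external tool"
    return None


def only_allowlisted_tools(action):
    """Hypothetical policy: each agent may call only its approved tools."""
    allowed = {"kyc_agent": {"lookup_customer", "verify_document"}}
    if action.tool not in allowed.get(action.agent, set()):
        return f"tool '{action.tool}' is not allow-listed for agent '{action.agent}'"
    return None


class PolicyGate:
    def __init__(self, policies):
        self.policies = list(policies)
        self.audit_log = []

    def execute(self, action, run):
        """Evaluate all policies first; call `run` only if none is violated."""
        violations = []
        for policy in self.policies:
            reason = policy(action)
            if reason is not None:
                violations.append(reason)
        self.audit_log.append(
            {"agent": action.agent, "tool": action.tool,
             "allowed": not violations, "violations": violations}
        )
        if violations:
            raise PermissionError("; ".join(violations))
        return run(action)


gate = PolicyGate([no_pii_to_external_tools, only_allowlisted_tools])
ok = Action("kyc_agent", "verify_document", {"doc_id": "D-1"})
print(gate.execute(ok, lambda a: f"ran {a.tool}"))  # ran verify_document
```

The key design choice is that the log entry is written before the allow-or-block decision takes effect, so blocked attempts leave the same evidence trail as permitted ones.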

The Explainability Requirement from Regulators

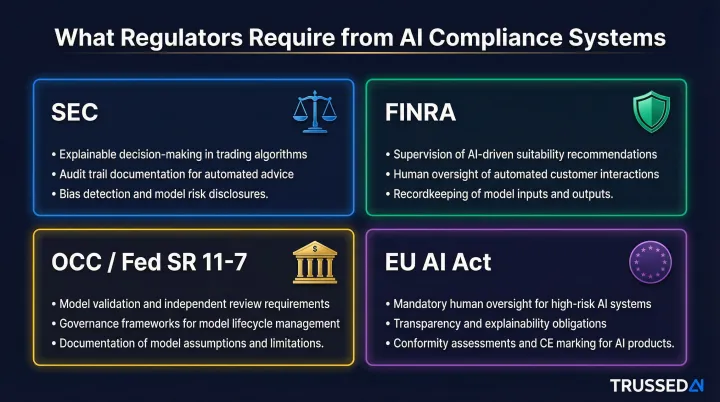

Regulators increasingly require firms to explain how automated systems make decisions in areas like fraud detection, credit scoring, and AML alerts:

- SEC: Fiscal Year 2025 Examination Priorities emphasize reviewing whether broker-dealers appropriately tailor AML programs to their business models and conduct independent testing

- FINRA: The 2025 Annual Regulatory Oversight Report highlights Generative AI as an emerging trend, noting firms must establish supervision, governance, or model risk management frameworks with robust testing for privacy, integrity, reliability, and accuracy

- OCC/Federal Reserve (SR 11-7): Supervisory Guidance on Model Risk Management requires "effective challenge" of models by objective, informed parties, mandating robust development, rigorous data quality assessment, and ongoing validation

- EU AI Act: Classifies AI systems used for creditworthiness evaluation or risk assessment in insurance as "high-risk," requiring technical documentation, automatic event recording, and transparency enabling deployers to understand system functionality

Black-box AI creates direct regulatory exposure. Firms must be able to surface why a decision was made, not just what it was.

Trussed AI: Infrastructure for AI Governance

Enterprises deploying AI for compliance workflows need a control plane that enforces governance at runtime, not just at model training time. Trussed AI's platform addresses this by acting as the enforcement layer between policy and execution, covering AI apps, agents, and developer tools.

The platform operates as a proxy layer in the flow of AI interactions, evaluating policies before every tool call, data access request, and workflow trigger. This execution-layer control prevents unauthorized actions in compliance pipelines where violations could create regulatory exposure.

Key capabilities include:

- Runtime policy enforcement: Blocks non-compliant actions at the moment of execution, before KYC automation, AML monitoring, or reporting agents can act on them

- Automatic audit trail generation: Captures policy evaluation results, model versions, timestamps, and data lineage for every AI interaction, so audit evidence exists without manual reconstruction

- Security and data protection: Enforces access controls and data leakage prevention at the model interaction layer, keeping PII out of unintended outputs and external systems

- Regulatory alignment: Links each governance policy to a specific regulatory requirement, such as SEC, FINRA, OCC, or EU AI Act, so compliance gaps surface in monitoring dashboards rather than audits

Trussed AI is designed for regulated industries like financial services, where demonstrable security controls and audit readiness are mandatory.

What Fintech Teams Should Know Before Implementing AI in Compliance

Data Quality as the Foundational Prerequisite

AI compliance models are only as reliable as the data they are trained on. Incomplete, biased, or poorly structured training data produces inaccurate outputs, and in compliance contexts, those inaccuracies translate into either missed violations or wrongful flags, both of which carry regulatory and reputational risk.

The overall AI project failure rate stands at 80.3%, with 33.8% of projects abandoned before reaching production and 28.4% failing to deliver expected business value. Data quality issues are the primary reason for abandonment in 38% of those cases.

The financial services sector compounds the problem: its AI project failure rate hits 82.1%, regulatory compliance adds an average of 7.4 months to deployment timelines, and explainability requirements cause 38% of machine learning approaches to be rejected outright. Before deployment, teams should audit data pipelines end-to-end, checking for gaps, skewed sampling, and any training inputs that could produce discriminatory outcomes under scrutiny.

Hybrid Human-AI Operating Model

Regulators continue to require human accountability for compliance decisions. The most effective model is one where AI handles high-volume pattern detection and evidence generation, and human compliance officers review findings, interpret context in grey-area cases, and make final determinations.

AI reduces manual workload but does not transfer regulatory responsibility. Compliance professionals still own:

- Interpreting context in ambiguous cases where rules don't clearly apply

- Making judgment calls that require understanding of business relationships and customer circumstances

- Taking responsibility for regulatory filings and decisions before regulators

- Handling exceptions and edge cases that fall outside model training data

Iterative, Governed Deployment

Human accountability only holds when the underlying AI system has been properly validated. Full-scale rollout before that validation creates risk. Testing in sandboxed environments lets teams assess model behavior against real-world scenarios, surface edge cases, and build confidence before production deployment.

Governance frameworks should be in place before any AI compliance tool goes live:

- Version control: Tracking which model version is deployed and maintaining rollback capability

- Ongoing validation schedules: Regular testing to ensure models perform as intended and haven't drifted

- Exception handling protocols: Defined processes for cases where AI produces uncertain or contradictory outputs

- Audit trail maintenance: Automatic logging of all decisions for regulatory review
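An exception handling protocol can be as simple as an explicit routing rule. The sketch below assumes a setup in which two models (a primary and a challenger) score the same alert; the function name, queue labels, and thresholds are hypothetical and would need to be set and validated by the compliance team.

```python
def route_alert(primary_score, challenger_score,
                uncertain_band=(0.4, 0.6), max_disagreement=0.3):
    """Decide which human review queue an alert goes to.

    Scores are assumed to be probabilities in [0, 1]. Every path ends with
    a human reviewer; the routing only determines which queue.
    """
    for score in (primary_score, challenger_score):
        if not 0.0 <= score <= 1.0:
            raise ValueError(f"score out of range: {score}")

    low, high = uncertain_band
    if abs(primary_score - challenger_score) > max_disagreement:
        return "exception_queue: contradictory outputs"
    if low <= primary_score <= high:
        return "exception_queue: uncertain score"
    return "standard_review"


print(route_alert(0.90, 0.85))  # standard_review
print(route_alert(0.90, 0.20))  # exception_queue: contradictory outputs
print(route_alert(0.50, 0.55))  # exception_queue: uncertain score
```

Writing the rule down as code makes it versionable and testable, which ties exception handling back to the version control and validation items in the same list.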

Frequently Asked Questions

What is AI regulatory compliance?

AI regulatory compliance refers to using AI technologies, including machine learning, NLP, and automation, to help financial institutions meet regulatory requirements such as KYC, AML, and fraud monitoring obligations more efficiently and accurately than manual processes allow.

How is AI being used in fintech?

AI handles customer onboarding, identity verification, fraud detection, AML monitoring, regulatory reporting, and communications surveillance. These applications cut manual workload while improving detection accuracy across the board.

What is RegTech?

RegTech is a category of technology solutions that helps financial institutions manage regulatory compliance more efficiently. These tools use AI, automation, and data analytics to reduce the cost and complexity of compliance at scale.

Can AI replace compliance officers in financial services?

No. AI augments rather than replaces compliance officers. Regulators still require human accountability for compliance decisions, and compliance professionals are needed to interpret context, handle grey-area judgment calls, and take responsibility for regulatory filings.

What are the main risks of using AI for compliance?

The main risks are:

- Model bias producing unfair or discriminatory outcomes

- Black-box decisions that fail regulatory explainability requirements

- Data quality issues leading to inaccurate outputs

- Governance gaps when AI systems run without adequate monitoring

How does AI reduce false positives in AML monitoring?

AI models learn individual customer behavioral baselines and flag deviations in context, rather than applying generic static thresholds. This contextual intelligence means fewer legitimate transactions are incorrectly flagged, freeing compliance teams to focus on genuinely suspicious activity.