Introduction

Financial services firms face a critical tension: AI search platforms and GenAI assistants are accelerating productivity across trading floors and compliance teams, yet every query, retrieval, and generated answer now carries regulatory weight that most firms aren't prepared to govern. While 100% of surveyed financial institutions increased their AI/ML investments in 2024, only 9% have implemented AI governance systems. This gap between adoption and control creates exposure that regulators are already scrutinizing.

This article examines the compliance risks AI search platforms introduce in financial services, which regulatory frameworks apply, and what governance infrastructure actually looks like in practice.

Unlike predictive AI models, AI search platforms, including RAG-based tools, internal knowledge assistants, and agentic query systems, interact with sensitive data in real time. That makes governance at the point of use, not just in policy documents, the critical challenge.

TL;DR

- FINRA supervision rules, GLBA, SOX, and the EU AI Act all apply to AI search. The absence of AI-specific rules does not mean the absence of compliance obligations

- AI-generated outputs, not just retrieved documents, may qualify as regulated business communications requiring archiving

- Firms remain liable for third-party AI tools embedded in search workflows under existing vendor risk rules

- Static supervisory procedures cannot monitor real-time AI retrieval. Governance must shift to runtime enforcement

- Firms that embed audit-ready evidence generation into AI search infrastructure now will be prepared for regulatory scrutiny before it arrives

What AI Search Platforms Actually Do in Financial Services

AI search platforms in financial services encompass GenAI-powered internal knowledge search, retrieval-augmented generation (RAG) tools, AI assistants embedded in compliance workflows, and customer-facing virtual assistants. These systems differ fundamentally from traditional predictive AI models: rather than applying pre-defined scoring logic, they generate novel outputs from retrieved data, creating new compliance considerations at every interaction.

Operational Use Cases Across the Firm

Internal compliance applications dominate current deployments. FINRA reports that "Summarization and Information Extraction" ranks as the top GenAI use case among member firms, condensing large volumes of unstructured documents into actionable summaries. Firms use AI search to retrieve policy documentation, track regulatory updates, automate report drafting, and prepare for audits. These tools transform how compliance teams access institutional knowledge.

Client-facing and investment use cases are expanding just as fast. Common deployments include:

- Virtual assistants handling account inquiries and client communications

- Tools surfacing curated market research and investment insights

- Systems retrieving KYC/AML documentation on demand

Each use case triggers different regulatory obligations based on what data is accessed and what output is generated. An AI assistant retrieving client account information activates GLBA privacy protections; one generating investment recommendations may trigger SEC fiduciary obligations.

The Shift Toward Agentic Search

Modern AI search is increasingly agentic: a query doesn't just retrieve information. It can trigger downstream actions such as generating a client communication draft, flagging a compliance exception, or populating a report. This shifts the compliance surface from passive retrieval to active decision support, raising the governance stakes considerably. When a single query can autonomously trigger a chain of downstream actions, every step in that chain needs oversight, not just the final output.

Why AI Search Platforms Create Distinct Compliance Exposure

The Recordkeeping Gray Zone

When an AI search tool generates a summary, a client-facing answer, or a compliance recommendation, it may constitute a business record or regulated communication under FINRA and SEC rules. Legal analysis indicates that AI-generated information not subsequently transmitted likely does not constitute a written communication requiring retention under investment adviser and broker-dealer recordkeeping rules. However, if AI-generated records are transmitted through email, chat, or otherwise, they trigger retention requirements under SEC Rule 17a-4 and FINRA Rule 4511.

Regulators have acknowledged there is not yet a clean answer for when internal AI outputs must be archived, but firms cannot use that ambiguity as cover for inaction. FINRA has explicitly stated that firms are responsible for their communications, regardless of whether they are generated by a human or AI technology.

The Data Access Scope Problem

AI search systems, especially those with broad access to internal data repositories, can surface sensitive client data, PII, or confidential firm information in response to queries. Unlike a database query that pulls a defined record, AI search can synthesize across data sources in unpredictable ways, creating exposure under GLBA, CCPA, and GDPR for firms operating across jurisdictions.

The FTC Safeguards Rule (16 CFR Part 314) mandates strict controls for financial institutions handling customer non-public personal information (NPI). Specific requirements include:

- Access controls limiting users to only the information they need to perform their duties

- Multi-factor authentication across systems

- Encryption of customer information in transit and at rest, or effective alternative compensating controls where encryption is infeasible

- Logging and monitoring to detect unauthorized access

Third-Party Vendor Risk Specific to AI Search

Many financial firms are embedding AI search capabilities through third-party platforms, including LLM providers, SaaS tools, and cloud AI services. Firms remain responsible for how client data is handled by those providers. FINRA Regulatory Notice 24-09 emphasizes that firms retain governance obligations even when leveraging the technology of a third party or embedded features in existing third-party products. Firms must understand what data is fed into third-party AI tools and ensure contracts prohibit unauthorized data use.

The Explainability and Bias Problem in Search Outputs

Vendor accountability addresses who handles your data, but a separate risk sits inside the model itself. Unlike a credit scoring model that can be audited after the fact, an AI search tool's reasoning for surfacing a specific result or generating a specific answer may be opaque. This creates risk under fair lending laws, UDAAP, and FCRA for any use case that touches credit, collections, or customer communications. The Federal Reserve and OCC's Supervisory Guidance on Model Risk Management (SR 11-7) requires that model development and validation documentation be sufficiently detailed to allow unfamiliar parties to understand how the model operates, its limitations, and key assumptions.

Shadow AI and Ungoverned Search Tools

Rapid adoption means employees are often using unauthorized or unvetted AI search tools outside official IT procurement. Gartner predicts that by 2030, more than 40% of enterprises will experience security or compliance incidents linked to unauthorized shadow AI. When shadow AI incidents occur, the cost premium is measurable: $4.63 million per breach versus $3.96 million for standard breaches, a $670,000 gap, and 65% of those incidents involve customer PII. Without a governed perimeter, firms have no visibility into what data those tools are touching or retaining.

The Regulatory Landscape Governing AI Search in Financial Services

FINRA's Position: Technology-Neutral Supervision

FINRA Regulatory Notice 24-09 confirms that FINRA's rules are technology-neutral. AI tools must be supervised like any other communication or decision-making system. This means:

- Written Supervisory Procedures (WSPs) must explicitly address AI search tools

- AI-generated content must meet FINRA Rule 2210 standards (clear, balanced, not misleading)

- Firms must demonstrate human oversight over AI-assisted processes

The emerging risk of "AI washing", overstating AI capabilities in marketing or regulatory filings, has become an enforcement concern. In March 2024, the SEC charged investment advisers Delphia and Global Predictions for making false and misleading statements about their use of AI, resulting in $400,000 in combined penalties. Delphia falsely claimed to use AI and machine learning to analyze client data for investment decisions, while Global Predictions falsely claimed to be the "first regulated AI financial advisor."

SEC Expectations: Conflicts of Interest and Fiduciary Obligations

The SEC has flagged conflicts of interest arising from AI and predictive analytics, particularly for broker-dealers and investment advisers. The concern is that AI systems, including search and recommendation tools, may generate outputs that prioritize the firm's interests over clients'. While SEC AI-specific rules remain in proposal stages, existing Regulation Best Interest and fiduciary obligations apply to AI-assisted advice workflows.

Data Privacy Obligations That Apply Directly to AI Search

AI search tools that synthesize personal data trigger overlapping privacy obligations that go beyond traditional data processing:

- GLBA: Requires financial firms to protect consumers' non-public personal information at every touchpoint, including AI search interfaces

- GDPR Article 22: Grants individuals the right not to be subject to solely automated decisions that produce legal or similarly significant effects, which matters for firms with European clients. Transparency duties for automated decision-making are addressed elsewhere in GDPR

- CCPA: Gives consumers the right to know what personal information is collected about them and how it is used, including when it informs AI search outputs

Each framework applies independently. Firms operating across jurisdictions may need to satisfy all three simultaneously.

SOX and Audit Trail Requirements

For publicly traded financial institutions, Sarbanes-Oxley requires tamper-proof records and auditable internal controls over financial reporting. Any AI search tool used in reporting workflows, compliance documentation, or regulatory filing preparation must produce auditable records. Inputs, outputs, and decision logic must be documentable and testable.
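
One common way to make tampering with stored records detectable is a hash chain, where each log entry commits to the one before it. The sketch below is a minimal illustration under stated assumptions: the entry layout, the `GENESIS` placeholder, and the function names are hypothetical and not taken from SOX, PCAOB guidance, or any vendor product. A hash chain provides tamper evidence rather than tamper prevention, so it would complement write-once storage and access controls, not replace them.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed placeholder "previous hash" for the first entry


def _digest(prev_hash: str, payload: dict) -> str:
    # Canonical JSON so the same payload always produces the same hash.
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(log: list, payload: dict) -> dict:
    """Append a payload, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {
        "payload": payload,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, payload),
    }
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True
```

An auditor-facing job could run `verify_chain` on a schedule and alert on the first failure, which narrows down where a record was altered.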

PCAOB Auditing Standard 2201 extends this to the IT control environment, covering access controls, system operations, and program change management. AI tools embedded in these workflows fall squarely within its scope.

The EU AI Act: High-Risk Classifications as a Global Baseline

The EU AI Act (Regulation (EU) 2024/1689) establishes a high-risk classification for specific AI use cases enumerated in Annex III, not for all KYC processes or financial customer interactions broadly. The categories directly relevant to financial services are AI systems used for creditworthiness evaluation and AI systems used for risk assessment and pricing in life and health insurance. Organizations deploying AI in these specific categories must meet a demanding set of requirements:

- Rigorous risk management systems and data governance controls

- Comprehensive technical documentation before deployment

- Automatically generated interaction logs for auditability

- Ongoing human oversight mechanisms

US-based firms with European operations or clients should be building toward these standards now. The Act is increasingly treated as a de facto global baseline, even in jurisdictions where it has no direct legal force.

The Four Pillars of Compliant AI Search Governance

Pillar 1: Real-Time Policy Enforcement

Governance cannot be applied only at the model-selection or deployment stage. Policies governing what data AI search can access, what outputs it can generate, and which users or roles can query which systems must be enforced at runtime, at the moment of every query.

In practice, this means policy rules that intercept and evaluate AI interactions before they reach users, not audit logs reviewed after the fact. For agentic workflows where a single query may trigger multiple downstream actions, enforcement must occur before every tool call, data access, and workflow trigger.
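
To make the idea of intercepting an interaction before it proceeds concrete, here is a minimal sketch, assuming a simple in-process rule engine. Every name in it (`Interaction`, `RESTRICTED_RESOURCES`, `role_rule`, `ssn_rule`, `enforce`) is hypothetical and chosen for illustration; it does not describe any particular product's API, and the two rules are placeholders for a firm's real policies.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Interaction:
    user_role: str      # role of the person or agent issuing the request
    action: str         # "retrieve", "tool_call", or "deliver_output"
    resource: str       # data source, tool, or channel being touched
    content: str = ""   # text about to be delivered, if any


@dataclass
class Decision:
    allowed: bool
    reasons: List[str] = field(default_factory=list)


# A rule returns a reason string when it blocks, or None when it has no objection.
Rule = Callable[[Interaction], Optional[str]]

# Hypothetical mapping of restricted resources to the roles allowed to reach them.
RESTRICTED_RESOURCES = {"client_accounts": {"advisor", "compliance"}}

SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def role_rule(i: Interaction) -> Optional[str]:
    allowed_roles = RESTRICTED_RESOURCES.get(i.resource)
    if allowed_roles is not None and i.user_role not in allowed_roles:
        return f"role '{i.user_role}' may not access '{i.resource}'"
    return None


def ssn_rule(i: Interaction) -> Optional[str]:
    if i.action == "deliver_output" and SSN_PATTERN.search(i.content):
        return "output contains an SSN-like pattern"
    return None


def evaluate(i: Interaction, rules: List[Rule]) -> Decision:
    reasons = [r for r in (rule(i) for rule in rules) if r is not None]
    return Decision(allowed=not reasons, reasons=reasons)


def enforce(i: Interaction, rules: List[Rule], proceed: Callable[[], str]) -> str:
    """Run `proceed` only if every rule passes; refuse before any work happens."""
    decision = evaluate(i, rules)
    if not decision.allowed:
        raise PermissionError("; ".join(decision.reasons))
    return proceed()
```

In an agentic workflow, each tool call, data access, and output delivery would be wrapped in its own `enforce` call, so a chain of five steps produces five policy decisions rather than one check at the end.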

Trussed AI's enterprise AI control plane operates as a drop-in proxy, enforcing policy at runtime with under 20ms latency and evaluating every interaction against compliance rules before output is delivered.

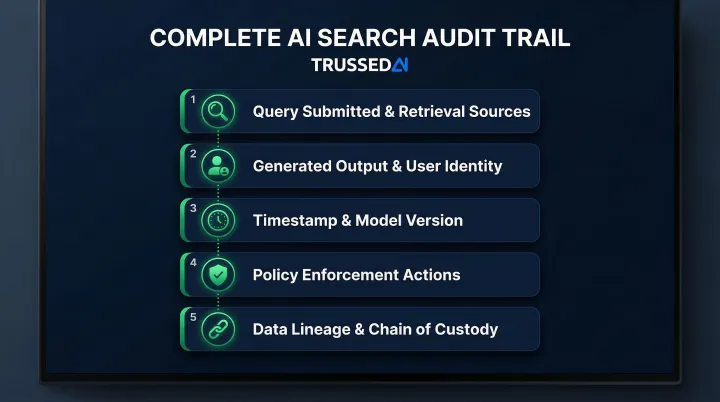

Pillar 2: Automatic and Complete Audit Trails

Compliance teams and regulators need to reconstruct what any AI search interaction retrieved, what was generated, and what action followed. Manual logging is insufficient at scale. Audit evidence must be generated automatically as a byproduct of every governed interaction, not assembled after the fact.

A complete audit record for an AI search interaction should contain:

- Query submitted and retrieval sources accessed

- Generated output and user identity

- Timestamp and model version

- Policy enforcement actions triggered

- Data lineage for full chain of custody
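
The fields listed above can be captured in a single structured record. The following is a sketch only: the field names, the JSON-lines serialization, and the ISO 8601 UTC timestamp format are assumptions made for illustration, not a regulatory schema or a vendor format.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List


def _utc_now() -> str:
    # ISO 8601 timestamp in UTC, e.g. 2025-01-01T12:00:00.000000+00:00
    return datetime.now(timezone.utc).isoformat()


@dataclass(frozen=True)
class AuditRecord:
    query: str                    # query submitted
    retrieval_sources: List[str]  # documents or systems accessed
    generated_output: str         # what the model returned
    user_id: str                  # who (or which agent) asked
    model_version: str            # model identifier at the time of the call
    policy_actions: List[str]     # enforcement actions triggered, e.g. "allow"
    data_lineage: List[str]       # source references for chain of custody
    timestamp: str = field(default_factory=_utc_now)


def to_json_line(record: AuditRecord) -> str:
    """Serialize one record as a single JSON line for append-only storage."""
    return json.dumps(asdict(record), sort_keys=True)
```

Writing the record in the same code path that serves the response is what makes the evidence a byproduct of the interaction rather than something reconstructed later.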

Platforms like Trussed AI generate continuous audit evidence automatically, maintaining complete audit trails where every AI interaction is logged with policy evaluation results, model version, timestamp, and data lineage, enabling organizations to trace any decision on demand.

Pillar 3: Data Access Scoping and Least-Privilege Retrieval

AI search systems should be governed by the same data access principles applied to human employees: users and AI agents should only retrieve data they are authorized to access.

This maps directly to GLBA and data privacy obligations. If an AI search tool surfaces a client's non-public personal information in response to a query from an unauthorized user, the firm has a privacy control failure, regardless of whether the disclosure was intentional.

Enterprise AI search tools must inherit existing Identity and Access Management (IAM) permissions so employees can only query non-public information (NPI) they are explicitly authorized to view. If an employee cannot access a document via traditional search, the AI assistant must not surface that document's contents in its generated responses.
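
A minimal sketch of permission-aware retrieval follows, assuming each document carries the set of groups allowed to read it and that the user's groups come from the firm's IAM system. The `Document` type, `allowed_groups` field, and function names are hypothetical. The key design choice is that filtering happens before any text reaches the model, so unauthorized content cannot leak into a generated answer.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: FrozenSet[str]  # assumed to mirror the source system's ACL


def authorized_documents(candidates: List[Document], user_groups: FrozenSet[str]) -> List[Document]:
    """Keep only documents the user could already open through traditional search."""
    return [d for d in candidates if d.allowed_groups & user_groups]


def build_context(candidates: List[Document], user_groups: FrozenSet[str]) -> str:
    """Assemble the prompt context from authorized documents only."""
    return "\n".join(d.text for d in authorized_documents(candidates, user_groups))
```

For example, a user in only the `research` group who retrieves one research note and one client file tagged for `advisors` would get a context containing the research note alone.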

Pillar 4: Continuous Monitoring and Model Drift Detection

Unlike static software, AI search systems can change their behavior as underlying models are updated by third-party providers. A compliance posture established at deployment may degrade without any IT intervention. Based on 2024-2025 release notes, OpenAI averages an update every 31.5 days, and Microsoft Azure every 58.4 days.

Firms need continuous monitoring that detects behavioral drift, flags policy violations in real time, and maintains a running compliance record rather than relying on annual audits of static configurations. Two operational controls make this sustainable:

- Continuous monitoring: Real-time detection of drift and policy violations as they occur, not after the fact

- Automated regression testing: Validating AI search outputs every time a third-party API or model version is updated
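
Automated regression testing against model updates can be sketched as a set of fixed "golden" queries that are re-run whenever the reported model version changes. The cases, phrases, and function names below are hypothetical, and simple phrase matching is a stand-in for whatever evaluation method a firm actually adopts.

```python
from typing import Callable, Dict, List

# Hypothetical golden cases: phrases an acceptable answer must or must not contain.
GOLDEN_CASES: List[Dict] = [
    {
        "query": "Summarize our gift and entertainment policy",
        "must_include": ["pre-approval"],
        "must_not_include": ["guaranteed return"],
    },
]


def needs_regression(validated_version: str, current_version: str) -> bool:
    """Re-test whenever the provider reports a version other than the validated one."""
    return validated_version != current_version


def run_regression(generate: Callable[[str], str], cases: List[Dict]) -> List[str]:
    """Return human-readable failures; an empty list means every case passed."""
    failures = []
    for case in cases:
        answer = generate(case["query"]).lower()
        for phrase in case["must_include"]:
            if phrase.lower() not in answer:
                failures.append(f"{case['query']!r}: missing {phrase!r}")
        for phrase in case["must_not_include"]:
            if phrase.lower() in answer:
                failures.append(f"{case['query']!r}: contains {phrase!r}")
    return failures
```

Here `generate` is whatever function calls the AI search tool. Passing it in as a parameter keeps the test harness independent of any specific provider SDK.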

Operationalizing AI Search Compliance: From Policy to Enforcement

The Core Gap Most Firms Face

Financial firms have written AI policies, risk assessments, and WSPs that reference AI, but these documents describe intent rather than enforce behavior. While 84% of firms are planning or implementing an AI governance framework, only 9% have working AI governance systems. The gap between what the policy says and what the AI search tool actually does at runtime is where regulatory exposure lives.

The SEC's enforcement actions against Delphia and Global Predictions show what's at risk: firms whose statements about AI are not backed by what their systems actually do face penalties and reputational damage.

The Infrastructure Shift Required

Moving from documentation-based governance to runtime enforcement requires a control layer that sits between AI applications and the models and data they access. This means API-level or proxy-level interception that evaluates every AI interaction against compliance rules before output is delivered, without introducing meaningful latency or requiring application code changes.

Trussed AI's enterprise AI control plane uses a drop-in proxy architecture that enforces policy at runtime and automatically generates audit-ready compliance evidence as a natural output of every interaction. Key technical specs:

- Latency: Under 20ms, with no changes to application code

- Cloud integrations: AWS and Google Cloud

- LLM support: OpenAI and Anthropic, via Python, TypeScript, Go, and REST API SDKs

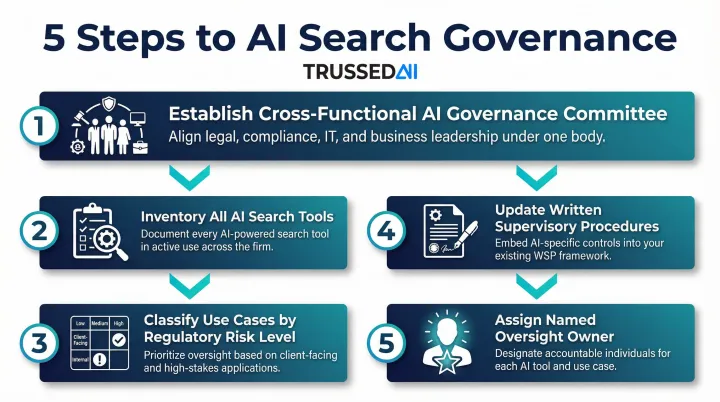

Organizational Steps Firms Should Take Now

Regardless of which technology controls are in place, financial services firms should take these operational steps:

- Establish a cross-functional AI governance committee spanning compliance, legal, IT, and risk

- Inventory all AI search tools in use, including those adopted informally by business units

- Classify each use case by regulatory risk level based on data accessed and outputs generated

- Update WSPs to address AI-generated outputs explicitly, including recordkeeping obligations and supervisory review requirements

- Assign a named owner for ongoing oversight, as diffused accountability is a common failure point in AI governance
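
The inventory and classification steps above can start as something very simple. The sketch below assumes a three-flag inventory entry and an illustrative tiering rule; the flags, tier names, and thresholds are hypothetical and would need to be replaced by criteria agreed with compliance and legal.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    touches_npi: bool     # accesses customer non-public personal information
    client_facing: bool   # output can reach clients
    sanctioned: bool      # approved through official procurement


def risk_tier(use_case: UseCase) -> str:
    """Assign an illustrative tier from data accessed and outputs generated."""
    if not use_case.sanctioned:
        return "unsanctioned"  # informally adopted tools get reviewed first
    if use_case.touches_npi and use_case.client_facing:
        return "high"
    if use_case.touches_npi or use_case.client_facing:
        return "medium"
    return "low"
```

Even a coarse tiering like this gives the governance committee an ordered list to work through and a place to record the named owner for each tool.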

These steps and technology controls are complementary. Firms that combine structured governance processes with Trussed AI's platform typically see a 50% reduction in manual governance workload and reach operational workflows within four weeks. The path from AI experimentation to production-ready governance runs through governance strategy design, cross-functional training, risk and approval workflow design, and platform deployment with built-in audit readiness.

Frequently Asked Questions

How can AI be used in financial services?

AI is used across customer service automation, fraud detection and AML monitoring, investment research and portfolio insights, compliance workflow assistance, and internal knowledge search. Each use case carries distinct regulatory obligations depending on whether it involves client data, generates regulated communications, or supports decision-making subject to fiduciary standards.

What compliance regulations apply to AI search platforms in banking?

Key frameworks include FINRA's technology-neutral supervision rules (Regulatory Notice 24-09), GLBA data privacy obligations, SOX audit requirements for publicly traded firms, SEC guidance on AI and conflicts of interest, and the EU AI Act for firms with international operations. All existing financial services regulations apply to AI tools regardless of the absence of AI-specific rules.

Do AI-generated outputs from search tools need to be archived as business records?

Firms are expected to apply existing recordkeeping standards to AI outputs that constitute business communications or advice. Content transmitted to clients triggers SEC Rule 17a-4 and FINRA Rule 4511 retention obligations. Archiving practices should be in place now, ahead of any explicit AI-specific rules.

What is the difference between an AI governance policy and AI governance enforcement?

Written policies document intent and assign accountability. Runtime enforcement goes further. It actively intercepts AI interactions, applies compliance rules, and generates audit evidence at every query. Policies define the standard; enforcement is what actually controls AI behavior in production.

How do financial firms manage third-party AI vendor risk in search tools?

Firms remain responsible under FINRA and GLBA for how third-party AI tools handle client data. Vendor contracts should prohibit unauthorized data use, due diligence should cover how AI features are embedded, and firms need ongoing visibility into what data flows into third-party systems.

Can financial services firms govern AI search without rewriting existing applications?

Yes. Proxy-based or API gateway architectures apply compliance controls at the infrastructure layer, with no changes required to existing AI applications. Firms can enforce policies, log interactions, and generate audit evidence across all AI search tools from a single control point.