Introduction

Financial institutions face a critical paradox: they're deploying AI faster than they can govern it. While banks and payment processors rush to implement machine learning models for fraud detection and transaction monitoring, criminals are already weaponizing the same technology. The FBI's Internet Crime Complaint Center reported $16.6 billion in cybercrime losses during 2024, a 33% jump from the previous year, with sophisticated fraud attacks combining synthetic identities and deepfakes surging 180% year-over-year.

The stakes extend beyond fraud losses. A multinational company lost $26 million in Hong Kong after scammers used deepfake video technology to impersonate the CFO on a conference call. FinCEN issued alerts noting increased suspicious activity reports describing deepfake media used to bypass customer due diligence controls. The velocity and sophistication of AI-enabled financial crime now outpaces traditional detection methods.

As detection capabilities improve, the binding constraint shifts from finding fraud to governing the systems doing the finding. AI models in AML, fraud detection, and sanctions screening make high-stakes decisions at machine speed, but most organizations lack the infrastructure to enforce policies, validate decisions, or produce audit evidence in real time.

Regulators are tightening scrutiny in parallel. The OCC has issued 17 matters requiring attention related to AI use since 2020, and the CFPB has taken six AI-related enforcement actions targeting automated systems that produce unfair outcomes.

This guide provides a practical framework for understanding and implementing AI governance specifically for financial crime prevention, covering the core pillars of effective governance, how governance applies across AML, fraud, and sanctions functions, what regulators now expect, and how to move from written policy to runtime enforcement.

TLDR

- AI serves as both weapon and defense in financial crime. Governance determines which side prevails

- Effective governance means runtime enforcement and automated audit trails, not just written policy

- Five core pillars: fairness controls, explainability, auditability, security/privacy, and human oversight

- MAS, HKMA, the EU AI Act, and U.S. banking agencies are all tightening scrutiny of AI-driven compliance decisions

- Most institutions are exposed at the gap between governance on paper and governance enforced at runtime

Why AI Governance Is Critical for Financial Crime Prevention

The Dual Nature of AI in Financial Crime

AI capabilities that power fraud detection, including pattern recognition, behavioral modeling, and natural language generation, simultaneously lower barriers for sophisticated criminal activity. Generative AI tools have reduced the resources required to produce high-quality synthetic content, allowing criminals to commit fraud at scale by targeting multiple victims simultaneously.

Deloitte's Center for Financial Services predicts generative AI could enable fraud losses to reach $40 billion in the United States by 2027, up from $12.3 billion in 2023, a 32% compound annual growth rate. The velocity increase is measurable: sophisticated fraud attacks rose 180% year-over-year in 2025 according to industry data.

Traditional rule-based AML systems generate 85-95% false positive rates, consuming massive analyst capacity on non-actionable alerts. AI-driven systems can reduce false positives by 70% while improving detection of high-risk events by 30%, but only when properly governed and validated.

The Governance Gap That Creates Regulatory Exposure

Financial institutions are deploying AI models, agentic workflows, and generative AI tools in AML, fraud, and sanctions faster than governance frameworks can absorb. An IIF-EY survey found 88% of financial institutions are applying predictive AI/ML in production. Yet validation processes, bias checks, and audit mechanisms haven't kept pace.

A 2025 Deloitte EMEA survey found that while two-thirds of banks and insurers use AI/ML models, governance gaps persist. In the UK, 46% of firms reported only partial understanding of the AI technologies they use, largely due to reliance on third-party models.

That knowledge gap creates both regulatory exposure and operational fragility. Rule-based systems are static and auditable by inspection. ML models learn, drift, and produce probabilistic outputs, requiring ongoing monitoring, performance benchmarking, and documentation that most institutions haven't yet operationalized.

The Cost of Inaction

Ungoverned AI in financial crime contexts creates specific failure modes:

- Biased alert generation leading to discriminatory outcomes and fair lending violations

- Black-box decisions that can't be explained to regulators during examinations

- Model drift resulting in degraded detection and rising false negative rates

- Agentic systems taking compliance actions without traceable rationale or human oversight

The business case for proactive governance extends beyond avoiding penalties. Institutions that govern AI well deploy models faster, waste less analyst time on false positives, and walk into regulatory examinations with documented evidence rather than explanations. That's a structural advantage, not just a risk management exercise.

The Five Core Pillars of AI Governance in Financial Crime

Pillar 1: Fairness and Bias Controls

AI models trained on historical transaction or behavioral data can inadvertently encode demographic or institutional biases. This leads to disproportionate flagging of certain customer segments, creating both regulatory exposure under fair lending laws and reputational risk.

Research on bias in AI-based fraud detection revealed that certain demographic groups, particularly individuals from lower-income zip codes and ethnic minorities, experienced significantly higher rates of false fraud flags. Fairness metrics including disparate impact ratios showed measurable discrimination embedded in model outputs.

Practical controls include:

- Diverse, representative training data vetted for historical bias

- Regular bias audits using disparate impact testing

- Ongoing monitoring of alert rates across demographic cohorts

- Documentation of fairness testing for regulatory examination

The EU AI Act requires high-risk AI systems to use high-quality datasets that are relevant, representative, and vetted to mitigate biases that might create discriminatory outcomes. The CFPB has stated clearly that federal consumer financial laws apply regardless of technology used. The complexity or opacity of an AI model is not a defense for violating these laws.

Pillar 2: Transparency and Explainability

Explainability serves dual purposes: investigators need to understand why a transaction was flagged to make sound judgments, and regulators need to understand model logic to assess fairness and soundness during examinations.

Black-box models that produce accurate predictions but offer no rationale create regulatory and operational problems. When an examiner asks "why did this model flag this customer?" the institution must provide a clear answer.

Key requirements:

- Model documentation covering architecture, features, and decision logic

- Explainability techniques such as SHAP values for feature importance

- Plain-language rationale generation for investigator review

- Traceability from model input to output to human decision

MAS Singapore's 2024 AI Model Risk Management paper states that banks must mandate global and local interpretability methods, especially for high-risk applications. HKMA requires that AI applications be explained to an appropriate level. The EU AI Act requires high-risk systems to be designed so deployers can interpret outputs and use them appropriately.

Pillar 3: Accountability and Auditability

Explainability tells examiners what a model decided; auditability tells them how that decision was reached, by whom, and when. Tamper-evident logs of model inputs, outputs, decisions, and human review actions across the full investigation lifecycle are the evidentiary foundation for regulatory examinations, SAR filing justifications, and post-incident forensics.

Governance infrastructure should generate this evidence automatically. Platforms like Trussed AI produce audit trails as a byproduct of every governed interaction, capturing the complete decision chain without adding manual documentation overhead.

Audit trail requirements:

- Inputs and prompts sent to models

- Model outputs and recommendations

- Policy evaluation results showing which rules were applied

- Model version and timestamp for each decision

- Data lineage tracking sources and transformations

- Human review actions, approvals, and overrides

The EU AI Act Article 12 requires high-risk AI systems to automatically record events over the system's lifetime to ensure traceability. Deployers must keep logs for at least six months.

Pillar 4: Security and Privacy

Financial crime AI systems process sensitive transaction data and behavioral profiles, creating high-value attack surfaces. Security risks extend beyond traditional data breaches to include model-specific threats.

Key security concerns:

- Prompt injection attacks against LLM-based investigative tools that could manipulate outputs

- Data poisoning where adversaries inject malicious training data to degrade model performance

- Adversarial attacks designed to evade fraud detection through carefully crafted inputs

- Privacy violations when models process personal data to generate risk profiles

GDPR Article 35 requires Data Protection Impact Assessments for systematic evaluation of personal aspects based on automated processing that produces legal or significant effects. California's CCPA regulations mandate risk assessments for automated decision-making technology used for significant decisions. BSA/SAR confidentiality requirements add additional constraints on how transaction data can be used and stored.

Pillar 5: Human Oversight and Controllability

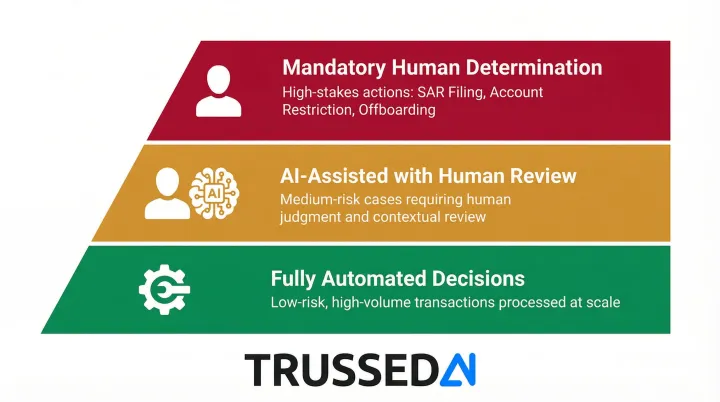

Human oversight is both a governance requirement and a risk management control. The design question is when human review is required and at what thresholds, not whether it belongs in the process at all.

Tiered oversight models:

- Fully automated decisions for low-risk, high-volume transactions with defined thresholds

- AI-assisted decisions with human review for medium-risk cases requiring judgment

- Mandatory human determination for high-stakes actions such as SAR filing, account restriction, or customer offboarding

These thresholds and escalation paths must be defined explicitly and enforced at the infrastructure level. Policy manuals alone are insufficient; the controls need to be embedded in the systems that execute decisions.

AI Governance Across Key Financial Crime Functions

AML and Transaction Monitoring

AI governance in ML-based transaction monitoring requires model validation aligned with BSA/AML program requirements and examiner expectations. The shift from static rule thresholds to dynamic anomaly detection changes oversight requirements.

Governance requirements:

- Pre-deployment validation including performance benchmarking (precision, recall, false positive rates)

- Change management processes when models are retrained or updated

- Ongoing performance monitoring to detect drift

- Documentation of model logic and feature importance for examiner review

- Audit trails showing how alerts were generated and investigated

The 2021 Interagency Statement on Model Risk Management clarifies that SR 11-7 principles may guide BSA/AML compliance frameworks, though duplicative processes aren't required. The focus is on demonstrating sound model risk management practices appropriate to the institution's risk profile.

Fraud Detection

Where AML monitoring operates on batched review cycles, fraud scoring runs in real time, making sub-second decisions with direct customer impact. False declines damage customer relationships; false negatives result in fraud losses. The governance stakes are immediate and bilateral.

Critical governance elements:

- High accuracy requirements due to customer impact

- Full explainability of adverse actions (account blocks, transaction declines)

- Fairness controls to prevent discriminatory outcomes by customer segment

- Outcome tracking and dispute resolution processes

- Clear escalation paths for edge cases

Agentic AI fraud workflows that autonomously chain detection, analysis, and response actions introduce additional requirements around autonomous action boundaries and approval gates.

Sanctions Screening and Agentic Compliance Workflows

Sanctions screening is a zero-tolerance compliance domain where governance stakes are highest. A false negative can result in sanctions violations and enforcement actions; an ungoverned AI agent taking autonomous screening actions creates regulatory accountability gaps.

Governance requirements for agentic compliance AI:

- Defined task boundaries specifying what agents can and cannot do autonomously

- Escalation triggers that route edge cases to human review

- Human approval gates for consequential actions

- Comprehensive action logs showing agent decision chains

- Runtime enforcement of boundaries, not just policy documentation

OFAC enforcement actions emphasize looking beyond legal formalities to underlying economic realities. In 2025, OFAC issued 14 enforcement actions totaling $266 million.

The UK's OFSI fined a bank £160,000 for processing payments to a designated person after a spelling variation slipped past screening systems. That case illustrates the gap runtime governance is designed to close: sophisticated detection paired with mandatory human review before consequential action.

High-Risk Customer Management and KYC/EDD

AI used in customer risk scoring, CDD, and EDD must produce scores that are explainable, consistent, and defensible in examinations. When AI augments enhanced due diligence, the institution remains accountable for adequacy of review.

Governance controls:

- Validation that risk scores align with actual customer risk profiles

- Human review thresholds appropriate to risk levels

- Traceability to underlying data sources and rationale

- Documentation showing how AI-generated analysis informed human decisions

Navigating the Regulatory Landscape for AI in Financial Crime

Converging Global Expectations

While regulatory approaches differ by jurisdiction, expectations are converging: regulators expect documented governance, validation evidence, and human accountability for consequential AI decisions.

Key regulatory frameworks:

United States: SR 11-7 from OCC/Fed/FDIC establishes the foundational MRM requirements. FinCEN expects explainable AML models with documented validation, and the CFPB has taken enforcement actions against automated systems producing unlawful outcomes.

Singapore: MAS's 2024 AI Model Risk Management paper requires cross-functional AI oversight forums, comprehensive AI inventories, risk materiality assessments, and independent validations before deployment.

Hong Kong: HKMA and SFC guidance mandates effective policies, procedures, and internal controls with senior management oversight throughout the AI lifecycle. SFC's 2024 circular on generative AI emphasizes responsible use with appropriate controls.

European Union: The EU AI Act classifies creditworthiness AI as high-risk, with an explicit exception for fraud detection. High-risk systems must meet requirements across:

- Risk management systems

- Data governance and documentation

- Record-keeping and transparency

- Human oversight mechanisms

Model Risk Management Framework Evolution

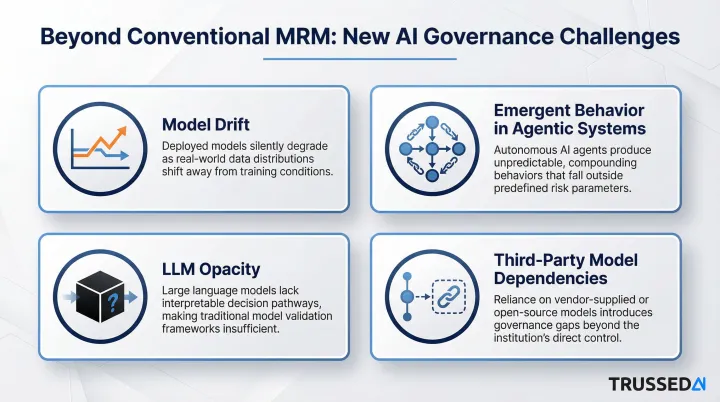

SR 11-7's MRM framework, covering model inventory, pre-deployment validation, ongoing monitoring, periodic revalidation, and change management, remains the established standard. ML and generative AI systems strain each of these disciplines in ways traditional models did not.

New challenges beyond conventional MRM include:

- Model drift as criminal typologies evolve and input data distributions change

- Emergent behavior in agentic systems that chain multiple models and tools

- Opacity of large language models that make explainability more complex

- Third-party model dependencies that create validation and accountability gaps

Regulatory Posture Shift: From Reactive to Proactive

These MRM gaps are precisely what regulators are now probing. Examiners are no longer checking that governance policies exist. They're demanding evidence of runtime enforcement, continuous monitoring, and documented outcomes. Institutions with policy documents but no operational governance infrastructure are increasingly the ones drawing scrutiny.

GAO reporting on OCC findings revealed that some banks' risk assessments didn't explicitly capture risk factors unique to AI models, leading to AI models being classified as low risk and evaluated less frequently than appropriate. As AI adoption in financial crime detection scales, that classification gap is becoming a primary examiner focus.

From Governance Policy to Runtime Enforcement

The Critical Distinction

Most financial institutions have AI ethics principles and model risk policies on paper. What they lack is infrastructure to enforce those policies at runtime, across every model invocation, every agentic action, and every AI-generated recommendation.

This gap concentrates regulatory and operational risk. Ungoverned AI systems can violate policies between audit cycles without detection. Written policies become unused documentation when no technical control prevents policy violations in production.

Technical Requirements for Operationalization

Operationalizing governance requires a control plane that:

- Intercepts AI interactions across models, agents, and applications

- Enforces policy rules in real time with latency appropriate for financial applications

- Routes outputs through guardrails that prevent policy violations

- Triggers escalation when thresholds are breached

- Generates evidence logs automatically, without manual documentation

Purpose-built infrastructure such as Trussed AI's enterprise AI control plane can be deployed as a drop-in proxy with no changes to existing application code. This makes it practical to governance-enable AI systems already in production, cutting manual governance overhead while keeping complete audit trails for every governed interaction.

With the control plane requirements clear, the next step is sequencing deployment to deliver compliance value as quickly as possible.

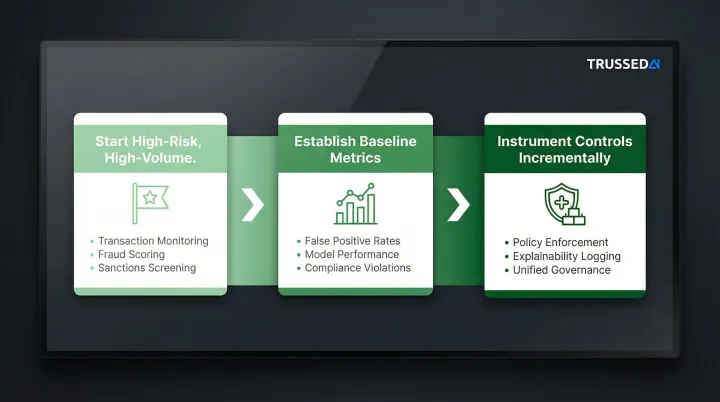

Practical Implementation Guidance

Start with High-Risk, High-Volume Functions

- Transaction monitoring systems generating thousands of daily alerts

- Real-time fraud scoring with direct customer impact

- Sanctions screening where false negatives create enforcement exposure

Establish Baseline Metrics

- Current false positive and false negative rates

- Model performance on key segments

- Compliance violation rates

- Time to investigate and resolve alerts

Instrument Governance Controls Incrementally

- Deploy policy enforcement for highest-risk decisions first

- Add explainability and audit logging

- Expand to developer tools and pre-production environments

- Build toward unified governance across all AI systems

Done right, governance becomes a built-in property of every AI system, measurable, auditable, and operational from day one.

Frequently Asked Questions

What is AI governance in the context of financial crime prevention?

AI governance in financial crime prevention refers to the policies, processes, and technical controls ensuring AI models and agents used in fraud detection, AML, and sanctions screening operate fairly, transparently, and within defined boundaries. The result: decisions that are accountable, auditable, and compliant with regulatory expectations.

How is AI governance different from traditional model risk management?

Traditional model risk management (SR 11-7) focuses on pre-deployment validation and periodic review. AI governance extends further by continuously monitoring model behavior, enforcing policy guardrails on every inference, and generating compliance evidence automatically rather than relying on point-in-time audits.

What do financial regulators currently expect from AI used in compliance decisions?

Key regulators including U.S. banking agencies, MAS Singapore, and HKMA expect documented model validation, explainable outputs, evidence of fairness testing, and human accountability for consequential decisions. Examiners increasingly look for operational evidence of governance, not just written policies.

How can financial institutions ensure AI models remain explainable to examiners?

The core techniques are SHAP-based feature importance logging, plain-language rationale generation for generative AI outputs, and complete audit trails covering inputs, outputs, and human review actions. Comprehensive model documentation ties these together for examiner review.

What makes governing agentic AI in compliance workflows especially challenging?

Agentic AI autonomously chains multi-step actions across data collection, analysis, and case drafting with no human in each loop. Governance must define task boundaries, escalation triggers, and approval gates at the infrastructure level, not just in policy documents, to preserve regulatory accountability.

How does AI governance reduce false positives in financial crime detection?

Governed AI systems with ongoing performance monitoring, bias controls, and continuous revalidation produce more calibrated alerts, reducing low-quality flags that drain analyst capacity and allowing teams to prioritize genuine suspicious activity.