This guide covers the unique regulatory pressures on financial services, the four pillars of effective AI governance, a practical implementation framework, and how to maintain compliance as AI deployments scale.

TL;DR

- FINRA, SEC, OCC, and CFPB apply existing rules to AI, and firms are already absorbing six-figure penalties

- Four pillars of AI governance: accountability structures, transparency and explainability, regulatory compliance alignment, and internal usage controls

- Static policies don't enforce themselves; governance must operate at runtime across models, agents, and workflows

- Third-party and agentic AI are the fastest-growing governance blind spots for financial firms

- Building governance into AI infrastructure from the start dramatically reduces compliance overhead and audit risk

Why AI Governance in Financial Services Is Different

Unlike firms in other industries, financial services institutions face uniquely layered regulatory oversight. Federal banking regulators (OCC, FDIC, FRB), securities regulators (SEC, FINRA, CFTC), consumer protection agencies (CFPB), and state-level regulators (e.g., NYDFS) all have overlapping jurisdiction over AI use. None has issued comprehensive AI-specific rules, so existing frameworks apply by default - and institutions are held to those standards regardless of technology complexity.

The Financial Stakes Are Real

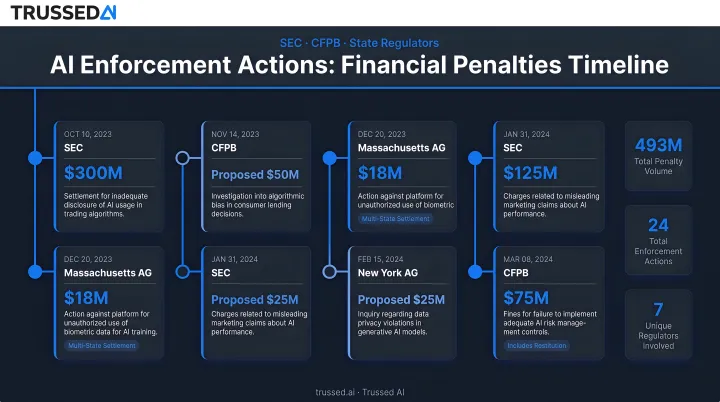

AI failures in financial services don't just create operational problems - they constitute legal violations. AI systems can trigger enforcement under fair lending laws (ECOA, Fair Housing Act), UDAAP prohibitions, FCRA liability, or supervisory action. Recent enforcement actions make the exposure concrete:

- The SEC charged two investment advisers with "AI washing," resulting in $400,000 in civil penalties for false and misleading statements about their AI capabilities.

- A U.S. federal court ordered over $130 million in penalties against the founders of EmpiresX for an illegal cryptocurrency platform that falsely promised profits using a fake AI trading bot.

- The Massachusetts Attorney General secured a $2.5 million settlement with Earnest Operations LLC after its AI underwriting models produced unlawful disparate impact based on race and immigration status.

The Scale Challenge

These enforcement actions aren't isolated incidents - they reflect how broadly AI is embedded across financial operations. Financial institutions deploy AI across credit underwriting, fraud detection, algorithmic trading, customer service, AML/CFT compliance, and back-office automation simultaneously.

That scale makes governance a cross-functional, enterprise-wide operational problem, not a one-time policy exercise. The U.S. Department of the Treasury's 2024 report on AI in financial services identifies governance gaps across all of these functions as a systemic risk - one that spans every line of business, not just compliance teams.

The Regulatory Landscape: What Financial Institutions Are Actually Required to Do

No single comprehensive federal AI law exists yet for financial services. Instead, multiple agencies have issued binding or de facto guidance that already applies.

Key regulatory frameworks:

- FINRA Regulatory Notice 24-09: Technology-neutral supervision rules apply to AI-generated content and decisions

- SEC enforcement on AI-related disclosures and conflicts of interest

- OCC/FDIC/FRB model risk management guidance (SR 11-7)

- CFTC Staff Letter 24-17 on system safeguards and market surveillance

- NAIC Model Bulletin on AI for insurers

FINRA and SEC Core Expectations

Existing supervision, recordkeeping, and marketing rules - including FINRA Rule 2210 - apply directly to AI tools. Firms must supervise AI-generated content and AI-assisted decisions with the same rigor as any other business process. The absence of an AI-specific rule is not a compliance shield. FINRA's 2024 guidance makes clear that member firms using generative AI for summarization, information extraction, and surveillance must maintain full supervisory oversight.

Consumer Protection Requirements

The CFPB has explicitly stated that fair lending laws (ECOA, Fair Housing Act) and UDAAP prohibitions are technology-neutral. AI models used in credit underwriting must be explainable enough to support adverse action notices. The CFPB's Consumer Financial Protection Circular 2023-03 states: "Creditors cannot justify noncompliance with ECOA based on the mere fact that the technology they use to evaluate credit applications is too complicated, too opaque in its decision-making, or too new."

Black-box models that cannot provide specific denial reasons may be non-compliant regardless of accuracy.
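To make the explainability point concrete, here is a minimal sketch of how an interpretable, additive scorecard can surface candidate denial reasons. The feature names, weights, and baseline applicant are all hypothetical, and which reasons are sufficient for an actual adverse action notice is a legal question this sketch does not answer.

```python
from typing import Dict, List

# Hypothetical scorecard: points contributed per unit of each feature.
WEIGHTS: Dict[str, float] = {
    "payment_history": 40.0,
    "utilization": -30.0,
    "account_age_years": 5.0,
}

# Hypothetical reference applicant used as the comparison baseline.
BASELINE: Dict[str, float] = {
    "payment_history": 1.0,
    "utilization": 0.3,
    "account_age_years": 8.0,
}


def top_denial_reasons(applicant: Dict[str, float], n: int = 2) -> List[str]:
    """Return the n features that pulled the score furthest below baseline."""
    contributions = {
        name: WEIGHTS[name] * (applicant[name] - BASELINE[name])
        for name in WEIGHTS
    }
    # Keep only features that lowered the score, most negative first.
    negative = sorted((value, name) for name, value in contributions.items() if value < 0)
    return [name for _, name in negative[:n]]


applicant = {"payment_history": 0.6, "utilization": 0.9, "account_age_years": 2.0}
print(top_denial_reasons(applicant))  # ['account_age_years', 'utilization']
```

Because every contribution is a simple product, each reason can be traced back to a specific input. A black-box model would need a separately validated post-hoc method to produce a comparable list.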

Data Governance Obligations

The Gramm-Leach-Bliley Act (GLBA) requires financial institutions to protect customers' nonpublic personal information (NPI) - and that obligation extends directly to AI model training and inference. Covered firms must enforce data minimization, access controls, and security safeguards at every stage of the AI lifecycle.

The FTC's Safeguards Rule goes further, requiring an active information security program with administrative, technical, and physical controls. Key GLBA/Safeguards obligations for AI include:

- Limiting NPI used in model training to what is necessary and documented

- Controlling which teams and systems can access inference pipelines

- Logging data flows to support audit and breach response requirements

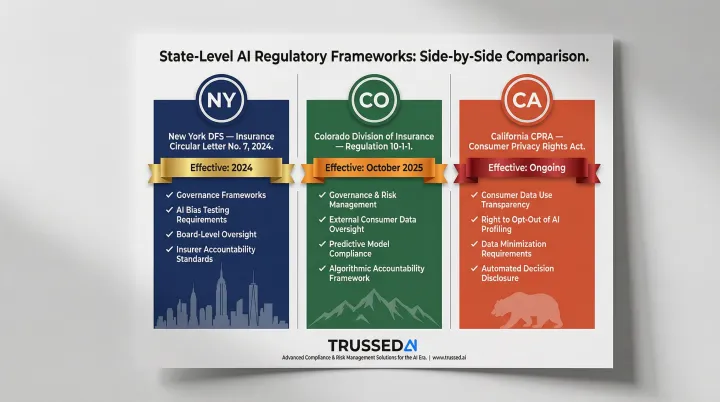

State-Level Complexity

State regulators are increasingly active:

- New York DFS (Insurance Circular Letter No. 7, 2024): Requires governance frameworks, bias testing, and board oversight for AI use

- Colorado Division of Insurance (Amended Regulation 10-1-1, effective October 2025): Mandates governance and risk management for external consumer data and predictive models

- California (California Privacy Rights Act): Advancing AI-specific rules that will impose additional data use and transparency requirements on covered firms

Financial institutions operating across jurisdictions need more than awareness of these rules - they need documented rationale for how conflicting requirements are reconciled, and controls that can produce that evidence on demand.

The Four Pillars of AI Governance in Financial Services

Pillar 1: Accountability Structures

Governance must start at the leadership level. Financial institutions should establish a dedicated AI governance function with clear ownership - whether an AI ethics committee, a Chief AI Risk Officer, or a cross-functional working group spanning compliance, legal, IT, and line-of-business leaders. Without organizational authority behind it, accountability is a policy on paper.

What accountability structures must cover operationally:

- Model inventory: Knowing which AI systems are deployed, for what purpose, and who owns them

- Defined escalation paths: Clear processes when AI systems produce unexpected or harmful outputs

- ERM integration: AI risk embedded into the institution's existing enterprise risk management framework

Pillar 2: Transparency and Explainability

Explainability is both a regulatory requirement and a risk management necessity. Regulators and consumers have the right to understand how AI-driven decisions are made - particularly in credit, insurance, and investment contexts. Interpretable models are designed to be understandable from the start; post-hoc explainability methods explain black-box models after the fact. The right approach depends on the risk level of the use case.

Generative AI introduces unique risks:

- Hallucination risk: LLMs can produce confidently stated but incorrect outputs, creating liability in customer-facing applications

- Bias propagation: Bias in training data can propagate discriminatory outcomes in credit or insurance decisioning that violate fair lending laws

According to McKinsey's 2026 AI Trust Maturity Survey, roughly 8% of organizations report AI-related incidents. The CFPB found that credit card and auto lenders using complex AI/ML credit scoring models produced disproportionately negative outcomes for Black and Hispanic applicants, requiring institutions to search for less discriminatory alternatives.

Pillar 3: Regulatory Compliance Alignment

Compliance alignment means mapping each AI use case to the specific regulations that apply to it before deployment - not after. A risk-tiered approach is essential:

- High-risk applications (credit underwriting, fraud decisions, customer communications): Require rigorous pre-deployment review, documentation, and testing

- Low-risk internal tools: Lighter governance requirements

A shared compliance baseline: The U.S. Department of the Treasury formally released the Financial Services AI Risk Management Framework (FS AI RMF), which explicitly adapts the NIST AI Risk Management Framework to financial services. The NIST "Govern, Map, Measure, Manage" structure aligns well with SR 11-7 model risk management expectations, reducing fragmentation across compliance silos.

Pillar 4: Internal Usage Controls and Data Governance

Internal usage controls must cover:

- Restricting which employees can use which AI tools

- Controlling what data (especially PII, NPI, and proprietary information) can be fed into AI systems

- Applying encryption and access controls

- Maintaining audit logs of AI interactions

The risk intensifies when employees use third-party or consumer-grade AI tools like ChatGPT in business contexts.
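As an illustration of the first two controls, the sketch below pairs a role-based tool allow-list with a redaction pass that runs before a prompt leaves the firm. The roles, tool names, and patterns are assumptions for this example; regular expressions alone will miss many forms of NPI and are not a complete data loss prevention control.

```python
import re
from typing import Dict, Set

# Hypothetical mapping of employee roles to approved AI tools.
TOOL_ALLOWLIST: Dict[str, Set[str]] = {
    "analyst": {"internal-summarizer"},
    "engineer": {"internal-summarizer", "code-assistant"},
}

# Illustrative patterns only: US SSN format and long digit runs that look
# like account or card numbers.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
ACCOUNT_PATTERN = re.compile(r"\b\d{10,16}\b")


def may_use(role: str, tool: str) -> bool:
    """Return True only if the role is explicitly approved for the tool."""
    return tool in TOOL_ALLOWLIST.get(role, set())


def redact(prompt: str) -> str:
    """Mask sensitive-looking values before the prompt is sent to any AI tool."""
    prompt = SSN_PATTERN.sub("[REDACTED-SSN]", prompt)
    return ACCOUNT_PATTERN.sub("[REDACTED-ACCOUNT]", prompt)


print(may_use("analyst", "code-assistant"))  # False
print(redact("SSN 123-45-6789, acct 1234567890123"))
# SSN [REDACTED-SSN], acct [REDACTED-ACCOUNT]
```

Unknown roles and unlisted tools are denied by default, which is usually the safer failure mode for consumer-grade tools that have not been reviewed.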

AI models are only as reliable as their training data. Financial institutions must maintain data lineage, validate data quality before use in model training, and monitor for data drift over time. Poor data governance remains one of the leading causes of model failures that attract regulatory scrutiny.
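One common way to quantify data drift is the population stability index (PSI), which compares how a feature was distributed at training time with how it is distributed in production. The sketch below assumes the data has already been bucketed into matching bins and expressed as proportions; the bin values are made up, and any alerting cut-off would need to be calibrated by the institution.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], eps: float = 1e-6
) -> float:
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    if len(expected) != len(actual):
        raise ValueError("expected and actual must have the same number of bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        # Floor empty bins at eps so the logarithm stays defined.
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


training_bins = [0.25, 0.25, 0.25, 0.25]    # hypothetical training distribution
production_bins = [0.10, 0.20, 0.30, 0.40]  # hypothetical production distribution
print(round(population_stability_index(training_bins, production_bins), 3))
```

A PSI of zero means the two distributions match; larger values indicate a bigger shift and a stronger case for revalidating the model.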

Building Your AI Governance Framework: A Practical Roadmap

Step 1: Inventory and Risk-Tier Your AI Use Cases

Governance starts with visibility. Without a complete picture of every AI system in use, enforcement is impossible. Build a full AI inventory cataloging all models, tools, agents, and third-party AI services - including the data they access, the decisions they influence, and the regulatory obligations they trigger. Assign risk tiers (high/medium/low) to direct governance effort where it matters most.
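A minimal sketch of what one inventory record and a tiering rule might look like follows. The field names and the tiering logic are assumptions for illustration: the high tier mirrors the high-risk examples named earlier in this guide (credit and fraud decisions, customer communications), while treating NPI access as medium risk is a choice made for this example, not a regulatory rule.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class RiskTier(Enum):
    HIGH = "high"
    MEDIUM = "medium"
    LOW = "low"


@dataclass
class AISystem:
    """One row in a hypothetical AI inventory."""
    name: str
    owner: str
    purpose: str
    consumer_facing: bool
    influences_credit_or_fraud_decisions: bool
    uses_npi: bool
    regulations: List[str] = field(default_factory=list)


def assign_tier(system: AISystem) -> RiskTier:
    """Illustrative tiering rule; real criteria belong in written policy."""
    if system.influences_credit_or_fraud_decisions or system.consumer_facing:
        return RiskTier.HIGH
    if system.uses_npi:
        return RiskTier.MEDIUM
    return RiskTier.LOW


underwriting = AISystem(
    name="underwriting-model",
    owner="credit-risk",
    purpose="credit underwriting",
    consumer_facing=False,
    influences_credit_or_fraud_decisions=True,
    uses_npi=True,
    regulations=["ECOA", "FCRA", "SR 11-7"],
)
print(assign_tier(underwriting).value)  # high
```

Keeping the owner and applicable regulations on the same record as the tier means the inventory can answer the first questions an examiner is likely to ask about any given system.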

Step 2: Design Policies That Map to Operational Reality

Policies must be specific enough to be enforceable. Generic AI ethics statements don't constitute governance. Institutions need written policies that address:

- Model validation requirements

- Acceptable use of third-party AI

- Data handling for AI training and inference

- Documentation standards

- Escalation procedures

Build these policies into Written Supervisory Procedures (WSPs) and vendor contracts.

Step 3: Implement Runtime Enforcement, Not Just Documentation

The most common gap in financial services AI governance: documentation exists, but nothing enforces it at the moment AI is actually used. Effective governance requires runtime controls that operate continuously, not just at audit time.

That means building in:

- Guardrails that block prohibited outputs before they reach users

- Real-time policy enforcement across models, agents, and workflows

- Automated audit trail generation as a byproduct of every governed interaction
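The three items above can be sketched as a single wrapper around a model call: it checks the output against policy, withholds prohibited responses, and writes an audit record every time. This is a generic illustration, not any vendor's API; the prohibited phrases, the in-memory log, and the function names are hypothetical.

```python
import json
import re
from datetime import datetime, timezone
from typing import Callable, List, Tuple

# Hypothetical policy: phrases a customer-facing assistant must never emit.
PROHIBITED = [
    re.compile(pattern, re.IGNORECASE)
    for pattern in (r"guaranteed returns?", r"risk[- ]free")
]

# In-memory stand-in for a durable, append-only audit store.
AUDIT_LOG: List[str] = []

WITHHELD_MESSAGE = "Response withheld pending compliance review."


def governed_call(model: Callable[[str], str], prompt: str, user: str) -> Tuple[bool, str]:
    """Call the model, block prohibited output, and always record the outcome."""
    output = model(prompt)
    violations = [p.pattern for p in PROHIBITED if p.search(output)]
    allowed = not violations
    AUDIT_LOG.append(json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "allowed": allowed,
        "violations": violations,
    }))
    response = output if allowed else WITHHELD_MESSAGE
    return allowed, response


def fake_model(prompt: str) -> str:
    """Stub standing in for a real model call."""
    return "This fund offers guaranteed returns."


print(governed_call(fake_model, "Tell me about fund X", user="advisor-17"))
# (False, 'Response withheld pending compliance review.')
```

The audit entry is written whether or not the output is blocked, so the evidence trail is a side effect of normal use rather than a separate reporting task.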

Platforms like Trussed AI function as an enterprise AI control plane, enforcing governance policies in real time across AI apps, agents, and developer tools via a drop-in proxy integration that requires zero changes to existing application code. Static compliance documents become operational controls.

Step 4: Train Staff and Establish Cross-Functional Ownership

Compliance teams, IT, legal, and line-of-business owners each carry different governance responsibilities and need training that reflects those differences. One-time onboarding isn't enough. As AI capabilities expand and regulatory expectations shift, training programs need to keep pace with both.

Monitoring, Auditing, and Maintaining Compliance at Runtime

AI governance is not a one-time implementation; it requires continuous monitoring. Model performance can drift over time, regulatory requirements change, and new AI use cases create new risk surfaces.

An ongoing monitoring program should track:

- Model accuracy and performance metrics

- Bias indicators across protected classes

- Output quality and hallucination rates

- Policy violations and remediation

- Cost attribution across teams and models
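For the bias indicator in the list above, one simple screening metric is the adverse impact ratio: the approval rate of a protected group divided by the approval rate of a reference group. The sketch below uses made-up counts, and the 0.8 screening level is a commonly used heuristic assumed here for illustration, not a legal threshold stated in this guide.

```python
def adverse_impact_ratio(
    approved_protected: int,
    total_protected: int,
    approved_reference: int,
    total_reference: int,
) -> float:
    """Approval rate of the protected group relative to the reference group."""
    if total_protected <= 0 or total_reference <= 0 or approved_reference <= 0:
        raise ValueError("group totals and reference approvals must be positive")
    protected_rate = approved_protected / total_protected
    reference_rate = approved_reference / total_reference
    return protected_rate / reference_rate


# Hypothetical monthly counts for one underwriting model.
ratio = adverse_impact_ratio(30, 100, 50, 100)
SCREENING_LEVEL = 0.8  # assumed heuristic; set by policy in practice

print(round(ratio, 2))          # 0.6
print(ratio < SCREENING_LEVEL)  # True, so this model would be flagged for review
```

A ratio below the screening level does not prove discrimination, and a ratio above it does not rule it out. It is a trigger for deeper fair lending analysis, including a search for less discriminatory alternatives.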

Audit-Readiness in Practice

Regulators expect documentation of model development, testing, validation, approvals, and ongoing performance reviews. According to the Bank Policy Institute, rigid application of SR 11-7 MRM processes to AI can delay adoption of new tools by up to nine months due to excessive documentation requirements.

Automatically generated audit trails reduce this burden directly. When governance evidence is captured as a byproduct of every governed interaction, manual workload drops by up to 50% - and compliance teams spend less time reconstructing history and more time managing risk.

Continuous Compliance Monitoring vs. Periodic Audits

Point-in-time audits catch historical issues; continuous monitoring prevents violations from occurring or escalating. Essential components of a mature governance program include:

- Threshold-based alerting for cost, usage, and policy violations

- Automated policy checks at runtime

- Real-time visibility across the AI stack
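Threshold-based alerting can be as simple as comparing current metrics with limits set by policy. The metric names and limits below are hypothetical placeholders; a real deployment would pull both from monitoring systems and governance policy.

```python
from typing import Dict, List

# Hypothetical limits; actual values belong in governance policy.
THRESHOLDS: Dict[str, float] = {
    "daily_cost_usd": 500.0,
    "policy_violations": 0,
    "hallucination_rate": 0.02,
}


def breached(metrics: Dict[str, float]) -> List[str]:
    """Return the names of all metrics above their limit, sorted for stable output."""
    return sorted(
        name for name, limit in THRESHOLDS.items()
        if metrics.get(name, 0) > limit
    )


today = {"daily_cost_usd": 620.0, "policy_violations": 0, "hallucination_rate": 0.05}
print(breached(today))  # ['daily_cost_usd', 'hallucination_rate']
```

Running a check like this on every reporting interval, rather than once a quarter, is what moves a program from periodic review toward continuous monitoring.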

Together, these capabilities shift the compliance posture from periodic review to persistent assurance, which is the standard regulators in financial services increasingly expect.

Managing Third-Party and Agentic AI Risk

Third-Party AI Risk

Most financial institutions rely heavily on vendor-provided AI models and embedded AI features in enterprise software. Regulators expect the same level of oversight for third-party AI as for internally developed systems. The 2023 Interagency Guidance on Third-Party Relationships states: "A banking organization's use of third parties does not diminish or remove a banking organization's responsibility to perform all activities in a safe and sound manner."

Effective Third-Party Risk Management (TPRM) for AI should include:

- Pre-contract due diligence on model documentation and data practices

- Contractual prohibitions on unauthorized use of client data

- Ongoing monitoring and performance validation

- Incident response SLAs

Agentic AI: The Emerging Governance Frontier

Autonomous AI agents that can take actions, query systems, and make sequences of decisions without human review represent a new governance frontier. Traditional compliance frameworks assume human-in-the-loop processes. Agentic AI requires governance infrastructure that can enforce policies at the agent level in real time.

FINRA has flagged that AI agents introduce unique risks around system authority, data sensitivity, and auditability. Addressing these risks means defining clear controls over:

- What data agents can access

- What actions they can take

- When human review is triggered
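These three controls map naturally onto a per-agent policy that is checked before every action. The sketch below is one possible shape for such a check; the action names, data scopes, and dollar threshold are invented for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical per-agent policy covering data, actions, and review triggers."""
    readable_data: FrozenSet[str]
    allowed_actions: FrozenSet[str]
    review_above_usd: float


def evaluate(policy: AgentPolicy, action: str, data_scope: str, amount_usd: float = 0.0) -> Decision:
    """Deny anything outside the policy; escalate large amounts to a human."""
    if action not in policy.allowed_actions or data_scope not in policy.readable_data:
        return Decision.DENY
    if amount_usd > policy.review_above_usd:
        return Decision.HUMAN_REVIEW
    return Decision.ALLOW


policy = AgentPolicy(
    readable_data=frozenset({"account_balances"}),
    allowed_actions=frozenset({"read_balance", "initiate_transfer"}),
    review_above_usd=1000.0,
)
print(evaluate(policy, "initiate_transfer", "account_balances", amount_usd=5000.0).value)
# human_review
```

Evaluating the policy on each step, rather than once per session, matters for agents because a single task can chain many actions that individually look harmless.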

Vendor Contract Requirements

Both third-party AI tools and agentic systems require explicit contractual protections. Include specific AI governance clauses in vendor agreements:

- Data residency and handling requirements

- Model documentation and explainability obligations

- Notification requirements for model updates

- Audit rights and on-demand access to model performance records

Note that the SEC is actively scrutinizing "AI washing," the practice of overstating AI capabilities. Firms can face enforcement risk for relying on and repeating vendor claims without independent validation.

Frequently Asked Questions

How does AI impact governance in finance?

AI introduces new risks to financial governance (including bias in decision-making, model opacity, data privacy exposure, and the speed of autonomous decisions) that existing frameworks must now address. Regulators expect AI to be supervised with the same rigor as any other business system, applying technology-neutral rules to AI deployments.

What are the four pillars of AI governance?

The four pillars are:

- Accountability: Clear ownership at the leadership level

- Transparency: Explainability of AI decisions

- Regulatory alignment: Compliance mapped to specific use cases before deployment

- Usage controls: Policies governing data access and employee AI use

What are examples of AI governance in financial services?

Practical examples span the industry:

- A bank's written supervisory procedures (WSPs) covering AI-generated client communications

- A credit union's model validation process for underwriting AI

- An insurer's bias testing protocol before deploying a claims-scoring model

- Automated audit trail generation for AI-assisted decisions, capturing policy results and data lineage

What regulations apply to AI in financial services?

No single AI-specific law covers all of financial services. Instead, technology-neutral rules apply across agencies: FINRA Notice 24-09, SEC disclosure rules, CFPB fair lending and UDAAP guidance, OCC/FDIC/FRB model risk management guidance (SR 11-7), GLBA data privacy requirements, and state-level rules like NYDFS Circular Letter No. 7.

How do financial institutions manage third-party AI risk?

Institutions should apply existing Third-Party Risk Management (TPRM) processes to AI vendors: pre-deployment due diligence, contractual data protections, ongoing monitoring, and audit rights. Regulators hold the institution responsible for AI outcomes regardless of who built the model.

What is the difference between AI compliance and AI governance?

Compliance is about meeting specific external regulatory requirements, while governance is the broader internal framework of policies, controls, oversight structures, and processes that ensures AI is developed and deployed responsibly. Good governance enables and simplifies compliance, but the two are not the same.