Introduction

Enterprises face a mounting crisis: AI is being deployed across products, agents, and workflows faster than compliance and content governance processes can keep up. While 88% of organizations regularly use AI in at least one business function, only 45% of high-maturity organizations keep AI projects operational for three years or more. This gap creates regulatory exposure, audit vulnerabilities, and operational fragility.

That's where AI copilots for compliance come in. Rather than relying on static policy documents reviewed after the fact, these tools enforce governance at runtime - intercepting interactions, evaluating policies, and generating audit evidence as a byproduct of every AI interaction. This guide breaks down exactly what they are, why they matter, and how to evaluate them.

You'll learn:

- The definition and scope of AI compliance copilots

- Why the governance gap is growing and what it costs

- Core capabilities to prioritize when evaluating solutions

- Real-world use cases across regulated industries

- A decision framework for choosing the right platform

TLDR:

- AI compliance copilots enforce governance at runtime - not after the fact - intercepting interactions, evaluating policies, and blocking violations in under 20ms

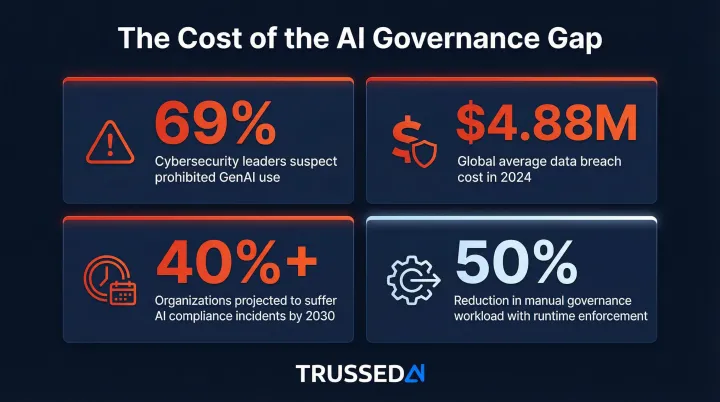

- 69% of cybersecurity leaders suspect employees use prohibited public GenAI, creating massive shadow AI risk

- Effective copilots govern all AI touchpoints from a unified control plane: production apps, agents, developer tools, and third-party integrations

- Organizations implementing runtime enforcement report a 50% reduction in manual governance workload

- Regulatory pressure is escalating: EU AI Act high-risk rules begin applying in August 2026, and HHS has proposed requiring that AI tools be covered in HIPAA risk analyses

What Is an AI Copilot for Compliance and Content Governance?

An AI copilot for compliance is an active governance layer that sits within AI workflows - not a monitoring dashboard checked after the fact, but a real-time assistant that intercepts interactions, enforces policy, flags unsafe or non-compliant outputs, and creates an auditable record as work happens.

Traditional governance tools operate as post-hoc reviews. Compliance teams review AI outputs in weekly batches, reconstruct audit evidence manually from logs, and discover violations days or weeks after they occur.

AI copilots work differently: they evaluate every prompt and response against configured rules in under 20 milliseconds, enforce policies before violations reach users, and generate compliance records automatically.

Content Governance for AI-Generated Outputs

The content governance dimension addresses how AI-generated content - from LLMs, agents, and copilot interfaces - adheres to regulatory language standards, brand guidelines, and industry-specific requirements.

Examples include:

- Flagging HIPAA-sensitive language in healthcare AI chatbot responses before they reach patients

- Ensuring financial disclosures appear in AI-generated customer communications

- Preventing AI marketing tools from generating claims requiring regulatory approval in certain markets

- Enforcing academic integrity policies in university AI writing assistants

Content governance operates at the execution layer, evaluating outputs against policy before delivery.

Multi-Layer Governance Scope

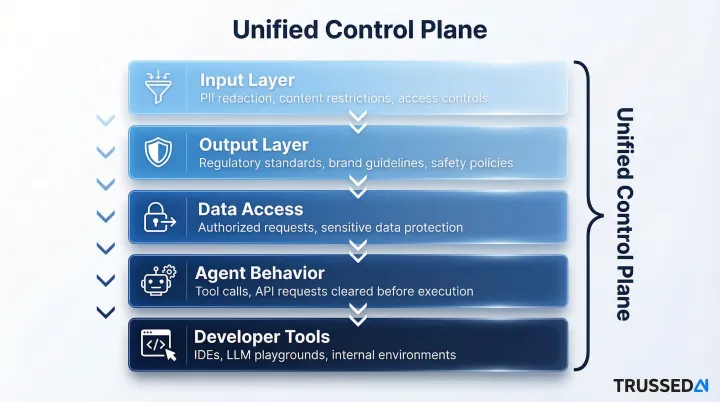

Content governance alone covers only one layer. AI compliance copilots govern across the entire AI stack simultaneously:

- Input Layer: Evaluates prompts before they reach models, applying PII redaction, content restrictions, and access controls

- Output Layer: Assesses model responses against regulatory standards, brand guidelines, and safety policies before delivery

- Data Access: Authorizes every data request against policy, preventing unauthorized exposure of sensitive information

- Agent Behavior: Enforces boundaries on autonomous agent actions - every tool call and API request cleared before execution

- Developer Tools: Governs AI usage in IDEs, LLM playgrounds, and internal development environments

Single-layer guardrails or narrow content filters cover one of these surfaces. A compliance copilot covers all of them, which is what makes the governance model viable at enterprise scale.
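To make the agent behavior layer concrete, here is a minimal sketch of clearing a tool call before it executes. The agent names, tool names, and the `ALLOWED_TOOLS` allow-list are hypothetical and exist only for illustration; a real deployment would load such rules from its policy store.

```python
from typing import Any, Callable, Dict, Set

# Hypothetical allow-list: which tools each agent role may call.
ALLOWED_TOOLS: Dict[str, Set[str]] = {
    "claims-agent": {"lookup_claim", "summarize_claim"},
    "support-agent": {"search_faq"},
}


class ToolCallDenied(Exception):
    """Raised when an agent requests a tool outside its allow-list."""


def authorize_and_run(agent: str, tool_name: str,
                      tool: Callable[..., Any], **kwargs: Any) -> Any:
    """Clear the tool call against policy first, then execute it."""
    if tool_name not in ALLOWED_TOOLS.get(agent, set()):
        # The tool is never invoked when the policy check fails.
        raise ToolCallDenied(f"{agent} may not call {tool_name}")
    return tool(**kwargs)
```

The point of the design is ordering: the policy check runs before the tool does, so a denied call has no side effects to undo.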

Why the AI Governance Gap Is Growing (and Why It Matters)

AI Deployment Outpaces Governance Maturity

Enterprise AI adoption is accelerating across customer-facing products, internal tools, agentic workflows, and developer environments, with models and agents often deployed by individual teams without centralized oversight. Over 70% of firms have generative or predictive AI in production, yet nearly two-thirds of organizations have not yet begun scaling AI across the enterprise.

Organizations that perform regular audits and assessments of AI system performance and compliance are over three times more likely to achieve high GenAI value than organizations that do not. The governance infrastructure simply hasn't kept pace with deployment velocity.

Regulatory Pressure Is Escalating

Regulators are no longer asking whether organizations have an AI policy; they want evidence that it is being enforced in production.

Key regulatory timelines:

- EU AI Act: Prohibitions applied February 2, 2025; high-risk system rules phase in from August 2026 through August 2027, requiring risk-mitigation systems, logging of activity, and human oversight

- HIPAA: HHS published a Security Rule NPRM in 2025 that would explicitly require AI tools to be covered in risk analyses and technology asset inventories

- NAIC Model Bulletin: Adopted by 24 states as of May 2025, requiring insurers to maintain written AI programs for responsible use

- Federal Reserve SR 11-7: Confirmed applicable to AI/ML in 2021, requiring robust model development, validation, and governance

- FINRA Notice 24-09: Issued June 2024, requiring supervisory systems addressing AI governance, model risk management, and accuracy

The FTC launched Operation AI Comply in September 2024, explicitly stating: "Using AI tools to trick, mislead, or defraud people is illegal... there is no AI exemption from the laws on the books."

The Audit Trail Problem

When AI is deployed without runtime governance, compliance evidence must be reconstructed manually from logs, model cards, and documentation - a process that is slow, error-prone, and rarely complete.

Organizations routinely spend weeks reconstructing answers when regulators ask how a specific AI decision was governed. In an enforcement action, that gap isn't just inconvenient - it can be the difference between a warning and a fine.

The Shadow AI Epidemic

69% of cybersecurity leaders suspect or have evidence that employees are using prohibited public GenAI, and 39% of EMEA employees use free AI tools at work. By 2030, more than 40% of global organizations are projected to suffer security and compliance incidents due to unauthorized AI tools.

When employees adopt unapproved LLMs and AI integrations outside formal processes, organizations lose visibility entirely. Data leakage, IP exposure, and compliance violations accumulate in blind spots that no existing tool is watching.

The Cost of Governance Failures

The global average cost of a data breach reached $4.88 million in 2024, a 10% increase driven partly by shadow data and AI-driven exposures. For financial industry enterprises, breach costs average $6.08 million.

Manual governance overhead scales poorly with AI adoption. Organizations implementing runtime enforcement report a 50% reduction in manual governance workload - a figure that reflects just how much time was previously lost to disconnected tools, manual reviews, and weeks-long audit reconstruction.

The pattern is consistent: as AI deployment grows, unmanaged governance costs compound. Runtime enforcement stops that compounding before it starts.

Core Capabilities Every AI Compliance Copilot Should Have

Real-Time Policy Enforcement at the Interaction Layer

The copilot must intercept and evaluate AI inputs and outputs against defined policies as they occur - not after the fact.

Technical implementation: An inline proxy or gateway that evaluates every prompt and response against configured rules, with sub-20ms latency so it does not degrade user experience or AI performance. The proxy sits between existing AI applications and their underlying models, enforcing policies instantly before violations execute.

Runtime vs. post-hoc enforcement: Post-hoc auditing can only document violations after they occur. Runtime enforcement prevents violations from reaching users, executing unauthorized actions, or exposing sensitive data. When an agent attempts to access restricted data or generate non-compliant content, the copilot blocks the action before it completes.

Trussed AI's platform operates as an active control layer in the execution path, evaluating policy before every tool call, data access, and workflow trigger.
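As a rough illustration of inline enforcement in general (not a description of any vendor's implementation), the sketch below checks the prompt before the model is called and the response before it is returned. The single SSN-style rule, the `Decision` type, and `governed_call` are all assumptions made for this example.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

# Hypothetical rule: treat anything shaped like a US Social Security number
# as a violation. Real policies would come from configuration.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


@dataclass
class Decision:
    allowed: bool
    stage: str   # "input" or "output": where the decision was made
    reason: str


def violates_policy(text: str) -> bool:
    """Return True if the text matches the illustrative SSN rule."""
    return SSN_PATTERN.search(text) is not None


def governed_call(prompt: str,
                  model: Callable[[str], str]) -> Tuple[Decision, Optional[str]]:
    """Evaluate the prompt, call the model, then evaluate the response."""
    if violates_policy(prompt):
        # Blocked before the model is ever called.
        return Decision(False, "input", "prompt contains SSN-like value"), None
    response = model(prompt)
    if violates_policy(response):
        # Blocked before the response reaches the user.
        return Decision(False, "output", "response contains SSN-like value"), None
    return Decision(True, "output", "no rule matched"), response
```

Here `model` is any callable that maps a prompt to a response, so the same wrapper can be exercised with a stub in tests and with a real client in production.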

Automatic Audit Trail Generation

Every governed interaction should produce a compliance record automatically, capturing what was asked, what was returned, which policy was evaluated, and what action was taken.

What gets captured:

- Input prompts and requests

- Model responses and outputs

- Policy evaluation results and enforcement decisions

- Model version, timestamp, and data lineage

- Full chain of custody from prompt to action

This turns audit preparation into a byproduct of normal operations. For regulated industries where demonstrating control is as important as having it, automatic audit trails eliminate the weeks-long reconstruction process and provide immediate evidence for regulatory examination. Organizations can trace any decision on demand with the full chain of custody available instantly.
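The record described above could be modeled along these lines. This is a sketch under stated assumptions: the field names are illustrative, and linking records with SHA-256 hashes is one possible way to make a chain of custody tamper-evident, not something the platforms discussed here are confirmed to do.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


@dataclass
class AuditRecord:
    timestamp: str
    prompt: str
    response: str
    policy_id: str
    action: str          # e.g. "allow", "block", "redact"
    model_version: str
    prev_hash: str       # hash of the preceding record, linking the chain
    record_hash: str = ""


def _digest(record: AuditRecord) -> str:
    """Hash every field except record_hash itself."""
    payload = asdict(record)
    payload.pop("record_hash")
    return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()


class AuditLog:
    def __init__(self) -> None:
        self.records: List[AuditRecord] = []

    def append(self, prompt: str, response: str, policy_id: str,
               action: str, model_version: str) -> AuditRecord:
        prev = self.records[-1].record_hash if self.records else GENESIS_HASH
        record = AuditRecord(
            timestamp=datetime.now(timezone.utc).isoformat(),
            prompt=prompt, response=response, policy_id=policy_id,
            action=action, model_version=model_version, prev_hash=prev,
        )
        record.record_hash = _digest(record)
        self.records.append(record)
        return record

    def verify(self) -> bool:
        """Return True if no record has been altered or reordered."""
        prev = GENESIS_HASH
        for record in self.records:
            if record.prev_hash != prev or record.record_hash != _digest(record):
                return False
            prev = record.record_hash
        return True
```

Because each record embeds the hash of its predecessor, editing any earlier entry causes `verify()` to fail, which is the property an examiner cares about when asking whether the log can be trusted.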

Configurable Guardrails and Content Policies

The copilot should allow compliance and risk teams to define, update, and enforce policies without requiring engineering changes to the AI applications themselves.

Enforcement modes:

- Hard blocks: Rejecting non-compliant outputs entirely before they reach users

- Soft warnings: Flagging interactions for human review while allowing execution

- Redaction: Removing sensitive content before delivery

Policy scopes (each enforcement mode can be applied at any of these levels, depending on organizational need):

- Organization-wide policies (data leakage prevention, PII protection, cost limits)

- Use-case-specific rules (HIPAA compliance for healthcare chatbots, NAIC standards for insurance communications)

- Team or department-level policies (research data access restrictions, developer tool usage limits)

Compliance teams shift from reactive remediation to proactive policy management - configuring rules, reviewing flagged interactions, and iterating on policies without waiting for engineering sprints.
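A minimal sketch of how the three enforcement modes and scoped policies might fit together is shown below. The policy IDs, regular expressions, and scope labels are hypothetical; the point is that rules live in data that a compliance team can edit, not in application code.

```python
import re
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Mode(Enum):
    BLOCK = "block"    # hard block: reject the output entirely
    WARN = "warn"      # soft warning: deliver, but flag for human review
    REDACT = "redact"  # remove the matching content before delivery


@dataclass
class Policy:
    policy_id: str
    pattern: str        # regular expression that marks a violation
    mode: Mode
    scope: str = "org"  # "org", or a hypothetical use-case/team label


@dataclass
class Outcome:
    text: Optional[str]  # None when the output was blocked
    flagged: List[str]   # policy ids queued for human review


# Hypothetical policy set: one org-wide rule and two use-case rules.
POLICIES = [
    Policy("pii-email", r"[\w.+-]+@[\w-]+\.[\w.]+", Mode.REDACT),
    Policy("guaranteed-returns", r"(?i)guaranteed returns", Mode.BLOCK,
           scope="insurance-comms"),
    Policy("diagnosis-language", r"(?i)\bdiagnos", Mode.WARN,
           scope="healthcare-chatbot"),
]


def enforce(text: str, policies: List[Policy], scope: str) -> Outcome:
    """Apply org-wide policies plus those matching the caller's scope."""
    flagged: List[str] = []
    for policy in policies:
        if policy.scope not in ("org", scope):
            continue  # policy does not apply to this use case
        if not re.search(policy.pattern, text):
            continue
        if policy.mode is Mode.BLOCK:
            return Outcome(None, flagged)
        if policy.mode is Mode.REDACT:
            text = re.sub(policy.pattern, "[REDACTED]", text)
        else:
            flagged.append(policy.policy_id)
    return Outcome(text, flagged)
```

Changing an entry in `POLICIES` changes behavior for every application that calls `enforce`, which is the property that lets policy updates ship without an engineering sprint.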

Breadth of Coverage Across the AI Stack

Effective copilots must govern not just one application but all AI touchpoints - production applications, agentic workflows, developer tools (like GitHub Copilot or internal LLM playgrounds), and third-party AI integrations.

Coverage gaps that create risk:

- Point solutions that only govern production apps miss shadow AI in developer environments

- Governance gaps in agent-to-agent communication create unmonitored risk surfaces

- Third-party integrations without policy enforcement bypass organizational controls

- Inconsistent governance across touchpoints creates audit vulnerabilities

A unified control plane approach governs all AI interactions from a single enforcement layer, ensuring policies remain effective as AI systems scale and diversify.

Continuous Compliance Monitoring and Alerting

Beyond intercepting individual interactions, the copilot should provide ongoing monitoring of compliance rates, policy violation trends, cost attribution, and model behavior drift - surfacing anomalies rather than waiting for a formal audit cycle.

Key monitoring capabilities:

- Real-time dashboards showing compliance rates across teams and applications

- Alerting on policy violation trends or unusual behavior patterns

- Cost attribution and spend tracking by business unit, project, and provider

- Model behavior monitoring to detect drift or unexpected outputs

Look for dashboards and alerting that feed into existing SIEM or GRC tooling. This gives security and compliance teams the visibility to respond to emerging risks before they escalate.
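As one simple way to surface violation trends between audits, the sketch below tracks the violation rate over a sliding window of recent interactions and signals when it crosses a threshold. The window size and the 5% default threshold are arbitrary assumptions; forwarding the alert to a SIEM or GRC tool is left out.

```python
from collections import deque
from typing import Deque


class ComplianceMonitor:
    """Track the violation rate over a sliding window of recent interactions."""

    def __init__(self, window: int = 100, alert_threshold: float = 0.05) -> None:
        # Each entry is True for a violation, False for a compliant interaction.
        # Once the window is full, the oldest entry is dropped automatically.
        self.events: Deque[bool] = deque(maxlen=window)
        self.alert_threshold = alert_threshold

    def record(self, violation: bool) -> None:
        self.events.append(violation)

    def violation_rate(self) -> float:
        if not self.events:
            return 0.0
        return sum(self.events) / len(self.events)

    def should_alert(self) -> bool:
        """Return True when the windowed rate exceeds the threshold."""
        return self.violation_rate() > self.alert_threshold
```

A sliding window reacts to recent behavior, so a sudden cluster of violations raises an alert even when the long-run average still looks healthy.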

AI Copilots in Action: Use Cases Across Regulated Industries

Insurance and Financial Services

AI copilots enforce regulatory language standards (NAIC, SR 11-7, FINRA) on AI-generated customer communications, claims summaries, and underwriting recommendations in real time, preventing compliance violations before they reach customers.

Specific applications:

- Validating that AI-generated policy documents include required disclosures

- Ensuring claims summaries comply with state-specific regulatory language

- Flagging underwriting recommendations that may introduce prohibited basis disparities

- Enforcing model risk management documentation requirements automatically

Automatic audit trails satisfy model risk management (MRM) requirements without manual documentation overhead. The CFPB has directed institutions to enhance fair lending compliance management systems relating to testing and approving credit scoring models, including documenting specific business needs and testing for disparities. AI copilots generate this documentation continuously as models operate.

Healthcare

In healthcare settings, AI copilots monitor patient-facing AI interactions (chatbots, clinical decision support tools) for HIPAA-sensitive content, ensuring PHI is not leaked through LLM outputs and that AI-generated clinical content meets regulatory and institutional standards.

Critical use cases:

- Preventing generic ChatGPT services from accessing PHI (these services cannot offer the Business Associate Agreements required under HIPAA)

- Flagging when AI training data, prediction models, or algorithm data contain ePHI subject to HIPAA protection

- Monitoring generative AI tools that may produce names and personal information from training data in outputs

- Enforcing institutional policies on AI-generated clinical documentation

For borderline outputs, the copilot escalates to clinician review rather than blocking outright - preserving clinical workflow efficiency without sacrificing oversight.

Higher Education and Research

Universities deploying AI writing assistants, research tools, and student support chatbots face unique content governance challenges: academic integrity policies, FERPA compliance, and IRB requirements for research data.

Enforcement scenarios:

- Flagging AI-generated academic submissions that violate institutional integrity policies

- Restricting what student data can be referenced in AI interactions to maintain FERPA compliance

- Enforcing IRB requirements for research data used in AI experiments

- Controlling access to proprietary research data across university AI platforms

The U.S. Department of Education issued guidance in July 2025 affirming that AI systems must comply with FERPA, requiring institutions to document how systems function and give stakeholders a voice in technology adoption decisions. AI copilots create the audit trails and access controls that make this compliance demonstrable, not just aspirational.

Enterprise Content and Marketing Teams

For enterprises producing AI-assisted content at scale, copilots enforce brand voice standards, legal review flags, accessibility requirements, and territory-specific compliance (preventing AI tools from generating claims that require regulatory approval in certain markets).

Governance applications:

- Enforcing brand voice consistency across AI-generated marketing content

- Flagging content requiring legal review before publication

- Ensuring accessibility requirements (WCAG standards) in AI-generated web content

- Preventing territory-specific compliance violations (health claims in EU markets, financial disclosures in US markets)

Content governance shifts from a sequential review queue into an automated inline check. Teams ship faster; compliance teams get consistent enforcement rather than spot checks.

AI Copilots vs. Traditional Governance Tools: Key Differences

Architectural Difference: Inside vs. Outside the Workflow

Traditional governance tools (policy documents, compliance checklists, post-deployment auditing platforms) operate outside the AI workflow and require human intervention to enforce. AI copilots operate inside the workflow, enforcing governance at the moment of interaction.

In practice, that distinction is significant. A compliance team using traditional tooling might review AI outputs weekly in batch - finding violations days after they occurred, then manually reconstructing what happened. With an AI copilot, every output is evaluated against policy in under 20 milliseconds, violations are blocked before they execute, and compliance evidence is generated automatically as a byproduct.

Addressing the "Governance as a Bottleneck" Fear

Many organizations resist governance tooling because they worry it will slow down AI deployment or add engineering overhead.

That concern doesn't hold with infrastructure-layer governance:

- Drop-in proxy integrations require zero changes to application code

- Sub-20ms enforcement latency adds no perceptible delay to end users

- Developers swap their API endpoint and governance activates automatically

- Policies are configured by compliance teams, not hardcoded by engineers

Engineering teams are freed from building custom guardrails for every application because governance is handled at the infrastructure layer.
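The endpoint swap can be pictured as follows. Both URLs, the `LLM_BASE_URL` variable, and the `/chat/completions` path are hypothetical placeholders chosen for this sketch; the request is built but never sent.

```python
import json
import os
import urllib.request

# Hypothetical URLs: neither is taken from a real provider or product.
DIRECT_BASE_URL = "https://api.model-provider.example/v1"
PROXY_BASE_URL = "https://governance-proxy.internal.example/v1"


def build_chat_request(prompt: str, base_url: str) -> urllib.request.Request:
    """Build (but do not send) a chat request against the given base URL."""
    body = json.dumps({"messages": [{"role": "user", "content": prompt}]}).encode()
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# The application reads its endpoint from configuration, so routing traffic
# through the governance layer is a configuration change, not a code change.
base_url = os.environ.get("LLM_BASE_URL", DIRECT_BASE_URL)
request = build_chat_request("Summarize this claim.", base_url)
```

Setting `LLM_BASE_URL` to the proxy address changes where requests go while the payload and calling code stay identical, which is what "zero changes to application code" means in practice.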

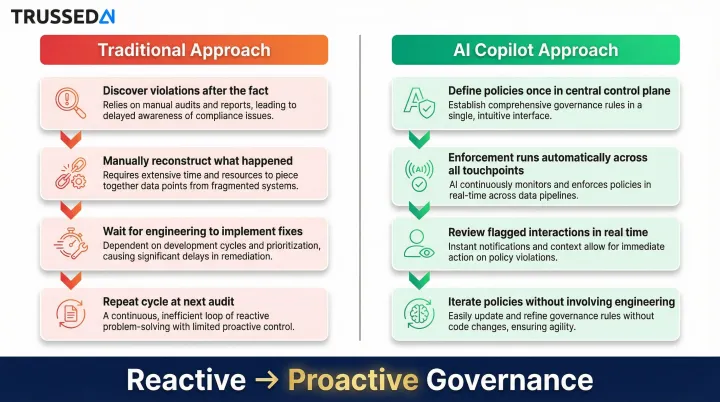

Organizational Shift: Reactive to Proactive

That infrastructure shift changes how compliance teams spend their time. Instead of investigating past violations after the fact, they focus on configuring rules, reviewing flagged interactions, and iterating on policies before problems occur.

Traditional approach:

- Discover violations after the fact

- Manually reconstruct what happened

- Wait for engineering to implement fixes

- Repeat the cycle at next audit

AI copilot approach:

- Define policies once in a central control plane

- Enforcement runs automatically across all AI touchpoints

- Review flagged interactions in real time

- Iterate policies without involving engineering

How to Choose the Right AI Copilot for Compliance

Coverage Scope and Deployment Flexibility

Evaluate whether the solution governs all AI touchpoints in your environment (production apps, agents, developer tools, third-party integrations) or only a subset. Narrow point solutions create governance gaps that regulators will find.

Key questions:

- Does it govern production applications and developer environments?

- Can it enforce policies on agentic workflows and multi-agent systems?

- Does it cover third-party AI integrations (OpenAI, Anthropic, etc.)?

- What deployment options are available: cloud, hybrid, on-premises?

Coverage scope only tells part of the story. Verify that the solution does not require you to transmit sensitive data to external systems and meets your organization's security requirements. Organizations in regulated industries should prioritize solutions offering self-managed deployment options where the governance layer runs entirely within their own environment.

Policy Configurability and Time-to-Value

Look for solutions that allow compliance and risk teams - not just engineers - to define and update policies, and that reach operational status in weeks rather than months.

Leading enterprise AI governance platforms typically deploy in 4–8 weeks for organizations with clear requirements and stakeholder alignment. Trussed AI, for example, brings operational workflows live in four weeks via a drop-in proxy that requires no application code changes, which matters for regulated enterprises that cannot afford lengthy integration cycles.

Evaluation criteria:

- Can compliance teams configure policies without engineering involvement?

- How quickly can policies be updated and deployed?

- Does the platform require extensive custom development or integration work?

- What training and support are provided during implementation?

Audit Readiness and Compliance Evidence

The solution should generate evidence that satisfies your specific regulatory requirements: structured, policy-mapped records that demonstrate control effectiveness, not generic log files.

What to verify:

- Does the platform generate audit trails automatically for every interaction?

- Are records structured for regulatory examination (policy evaluated, action taken, full chain of custody)?

- Can you trace specific decisions on demand without manual reconstruction?

- Does the vendor hold relevant security certifications and demonstrate sustained control effectiveness?

- Can the platform support compliance documentation for your industry's specific frameworks (HIPAA, GDPR, NAIC, EU AI Act)?

Prioritize solutions where audit trail generation is automatic. When regulators ask how a specific AI decision was governed, you need immediate answers - not a multi-week reconstruction effort that puts your team on the back foot.

Frequently Asked Questions

What is the difference between an AI copilot for compliance and a traditional AI governance tool?

Traditional governance tools operate outside AI workflows as post-hoc review mechanisms. Compliance teams check outputs after the fact and reconstruct evidence manually. AI copilots enforce policy at runtime, evaluating every interaction as it occurs and generating compliance evidence automatically.

Can an AI compliance copilot integrate with existing AI applications without requiring code changes?

Modern AI compliance copilots are designed as drop-in proxy integrations that sit between existing AI applications and their underlying models. Developers simply swap their API endpoint. The copilot then works transparently, requiring no application code changes and adding minimal latency - typically under 20ms per interaction.

How does an AI copilot automatically generate audit trails?

The copilot logs every governed interaction, recording the input, output, policy evaluated, and action taken as a natural byproduct of enforcement. Compliance evidence accumulates continuously without additional documentation work, so organizations can trace any decision on demand with full chain of custody.

Which regulations can AI copilots for compliance help organizations address?

AI copilots support major frameworks including HIPAA, GDPR, EU AI Act, NAIC model guidance for insurance, and SR 11-7 for financial services by enforcing relevant content and access policies in real time and generating the documentation needed to demonstrate compliance during audits.

How long does it typically take to deploy an AI copilot for compliance in an enterprise environment?

Deployment timelines vary by solution and integration complexity, but purpose-built platforms designed with drop-in proxy architecture can reach operational status in as little as four weeks - compared to months for platforms requiring deep engineering integration and custom development.

What is content governance in the context of AI, and why does it require a dedicated solution?

AI content governance refers to ensuring that AI-generated outputs - from LLMs, agents, and copilot tools - comply with regulatory standards, brand guidelines, and data handling policies. It requires a dedicated runtime enforcement layer because manual review cannot operate at the speed and volume of production AI systems.