Introduction

In regulated industries - healthcare, insurance, financial services, universities - AI projects rarely die because the technology doesn't work. They die in compliance review, governance gaps, or post-deployment audits. This is the core tension: AI capability has outpaced AI governance, and regulated industries are bearing the cost.

The problem isn't a lack of AI frameworks or policy documents. Most organizations have those. The problem is that governance lives on paper while AI runs in production - and the gap between the two is where regulatory exposure accumulates.

Three failure patterns show up repeatedly across regulated industries:

- Compliance teams see AI for the first time at the deployment gate, and projects stall

- Audit trails don't exist or can't be reconstructed, so regulators find violations

- AI-specific risks like prompt injection or data leakage fall into the gap between AI teams and compliance teams, owned by neither

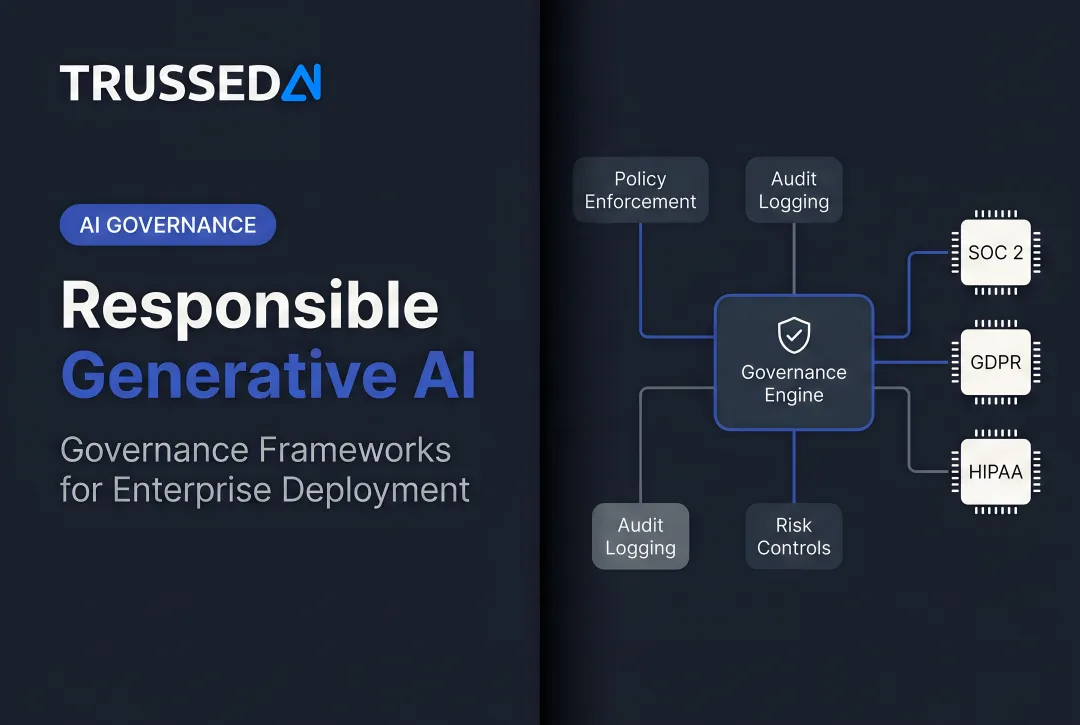

Responsible AI governance means building controls into the infrastructure of how AI runs, so every deployment inherits them automatically instead of having them bolted on afterward. This post covers the unique governance bar regulated industries face, the four non-negotiable pillars, the common failure points, and what runtime enforcement actually looks like in practice.

TLDR

- Regulated industries face higher governance stakes - AI decisions affect patient outcomes, financial integrity, and legal liability

- Responsible AI governance means enforcing policies at the moment AI runs, not just documenting them

- Four pillars matter most: audit trails, explainability, data residency, and role-based access control

- Programs most often fail at three points: late compliance involvement, no clear AI risk owner, and vendor contracts that ignore AI obligations

- Governance built as a reusable control layer - one all AI workloads inherit by default - is the only model that scales without slowing compliance teams down

Why Regulated Industries Face a Higher Governance Bar

Regulated industries operate under frameworks - HIPAA, GDPR, MiFID II, FERPA, state insurance regulations - that were designed for traditional software systems and are now being applied to AI systems that behave in non-deterministic ways. The EU AI Act's high-risk classification explicitly covers healthcare diagnostics, credit scoring, and educational assessment tools - the exact use cases regulated industries are building.

The asymmetry of stakes is clear: in a retail context, a governance failure might mean a poor recommendation. In a regulated industry, it can mean a biased lending decision, a missed clinical risk flag, an improperly handled claim, or a data residency violation with real penalties. The cost of governance failure is not uniform across sectors.

Enforcement is accelerating, and the actions are AI-specific, not just extensions of existing data rules:

- The EDPB's Opinion 28/2024 clarifies that AI models trained on personal data cannot automatically be considered anonymous, and that embeddings and model parameters fall under GDPR

- The Italian Data Protection Authority fined OpenAI €15 million for processing personal data without appropriate legal basis

- HHS clarified in January 2025 that AI training data, prediction models, and algorithm outputs maintained by covered entities are protected under HIPAA

In the US, the HHS warning goes further: generative AI tools can expose names and personal information embedded in training data - a risk most compliance teams haven't fully accounted for.

For regulated industries, this means governance frameworks need to account for AI behavior at runtime, not just at deployment - before the next enforcement action defines the standard for them.

What Responsible AI Governance Actually Means for Regulated Industries

Responsible AI governance is often treated as a documentation exercise - a policy document, a risk register entry, a vendor questionnaire. In a regulated context, that's not enough. Governance only counts when it can be demonstrated to an auditor with evidence, not merely asserted.

In operational terms, responsible AI governance is the combination of controls, evidence, and accountability structures that ensure AI systems behave as intended, within defined boundaries, and can prove it. This is distinct from frameworks like NIST AI RMF or ISO/IEC 42001, which define what governance should address - not how to operationalize it in production.

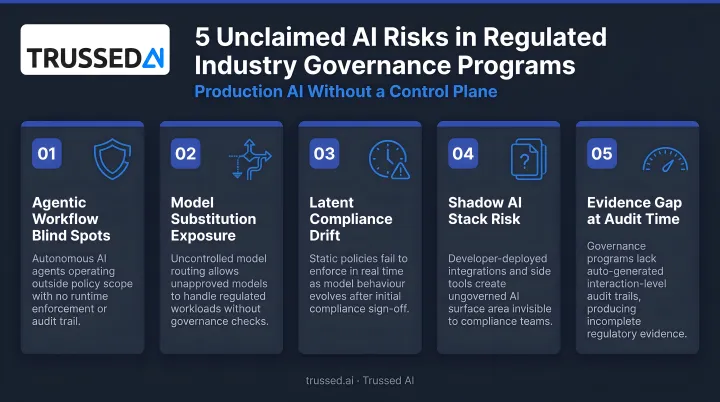

The Agentic AI Complication

As organizations move from single-model AI tools to multi-step agents and automated workflows, governance complexity multiplies. Each agent action is a potential compliance event. Governance frameworks designed for static software don't account for AI systems that make decisions sequentially and autonomously across systems and data sources without direct human review at each step.

Governance by Design

Responsible governance is built into how AI is deployed, not bolted on afterward. This means the logging, the access controls, the policy enforcement, and the audit trail are active from the first interaction, not retrofitted after the system is live.

The distinction matters in practice:

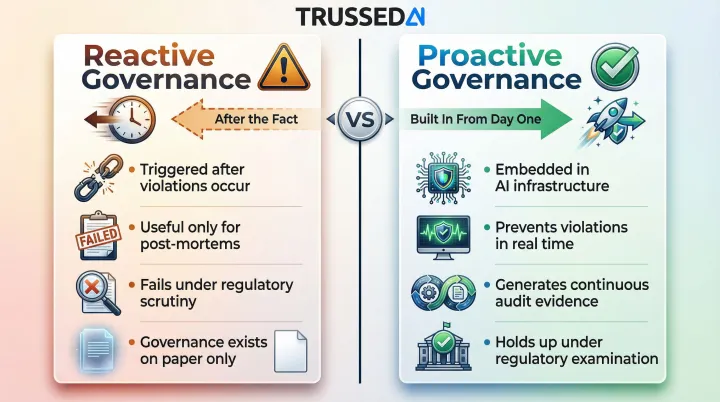

- Reactive governance is triggered after something goes wrong - useful for post-mortems, not examinations

- Proactive governance is embedded in the AI infrastructure itself, preventing or catching violations in real time

Only the latter holds up under regulatory scrutiny.

The Four Pillars of Responsible AI Governance

Audit trails, explainability, data residency, and access control are the non-negotiable foundation of responsible AI governance. Each pillar addresses a distinct failure mode that regulators will probe, and each requires deliberate architectural decisions rather than afterthoughts.

Audit Trails: From Logging to Traceability

Logging records that something happened. Traceability lets you reconstruct exactly what happened, why it happened, and which data was involved - and in regulated environments, traceability is the only acceptable standard.

What a complete audit trail captures:

- Model version used

- Prompt template and context

- Retrieval sources if using RAG

- Identity of the requesting user

- Timestamp of the interaction

- Policy state in effect at the time

Store this data immutably, with retention periods that match your regulatory requirements. Structured, correlation-ID-based logging lets you trace the full chain of custody - from prompt to model to output to action.

Regulatory requirement: In financial services, MiFID II requires that automated decision-support systems maintain reconstructible records. In healthcare, AI-assisted clinical tools must demonstrate what information was used to produce an output. Append-only storage ensures audit trails can't be altered after the fact.
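To make the difference between logging and traceability concrete, here is a minimal sketch of an append-only audit log built on Python's standard library. The names `AuditRecord` and `AuditLog` are hypothetical, and the in-memory list stands in for whatever immutable storage an organization actually uses. Each entry is hashed together with the previous entry's hash, so any later alteration is detectable, and the correlation ID lets a reviewer pull every record belonging to one interaction.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AuditRecord:
    """One governed interaction. Field names are illustrative assumptions."""
    correlation_id: str       # ties prompt, retrieval, output, and action together
    model_version: str
    prompt_template: str
    retrieval_sources: tuple  # document IDs used for RAG, empty if none
    user_id: str
    timestamp: str            # ISO 8601, UTC
    policy_version: str       # policy state in effect at the time


class AuditLog:
    """In-memory, append-only log where each entry hashes the previous one."""

    GENESIS = "0" * 64

    def __init__(self):
        self._entries = []  # list of (record_dict, entry_hash)

    @staticmethod
    def _hash(prev_hash: str, record: dict) -> str:
        payload = json.dumps(record, sort_keys=True)
        return hashlib.sha256((prev_hash + payload).encode()).hexdigest()

    def append(self, record: AuditRecord) -> str:
        prev_hash = self._entries[-1][1] if self._entries else self.GENESIS
        record_dict = asdict(record)
        entry_hash = self._hash(prev_hash, record_dict)
        self._entries.append((record_dict, entry_hash))
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; False means some entry was changed."""
        prev_hash = self.GENESIS
        for record_dict, entry_hash in self._entries:
            if self._hash(prev_hash, record_dict) != entry_hash:
                return False
            prev_hash = entry_hash
        return True

    def trace(self, correlation_id: str) -> list:
        """Return every record that belongs to one interaction."""
        return [r for r, _ in self._entries if r["correlation_id"] == correlation_id]
```

A hash chain only detects tampering; it does not prevent it. In production the same records would typically also be written to storage that enforces write-once retention.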

Explainability: What It Means in Practice

Explainability for generative AI is not the same as explainability for traditional ML. There are no feature importance scores. Instead, explainability in a regulated context means:

- Every claim the system produces references the source document it drew from

- Confidence levels are communicated to end users

- High-stakes decisions involve a human checkpoint

Position AI as a research assistant - surfacing and citing information - rather than a decision-maker. This is both a technical and a governance decision. Systems designed for explainability from the ground up don't just satisfy auditors - they produce outputs that practitioners can actually trust and act on.
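The three explainability requirements above can be checked mechanically before an answer reaches a user. The sketch below is one possible shape, not a prescribed design: `Claim`, `Answer`, `review_answer`, and the 0.8 confidence threshold are all hypothetical, and routing uncited claims to a human is an added assumption rather than something a regulation spells out.

```python
from dataclasses import dataclass, field


@dataclass
class Claim:
    text: str
    source_ids: list = field(default_factory=list)  # documents the claim cites


@dataclass
class Answer:
    claims: list
    confidence: float  # 0.0 to 1.0, however the system estimates it
    high_stakes: bool  # set by the calling workflow, e.g. a clinical or credit decision


def review_answer(answer: Answer, min_confidence: float = 0.8) -> dict:
    """Summarize what the end user and the reviewer need to see."""
    uncited = [c.text for c in answer.claims if not c.source_ids]
    needs_human = (
        answer.high_stakes
        or answer.confidence < min_confidence
        or bool(uncited)
    )
    return {
        "uncited_claims": uncited,
        "confidence": answer.confidence,
        "needs_human_review": needs_human,
    }
```

The returned dictionary carries the confidence value through to the interface, so it can be shown to the end user rather than hidden inside the system.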

Data Residency and Sovereignty

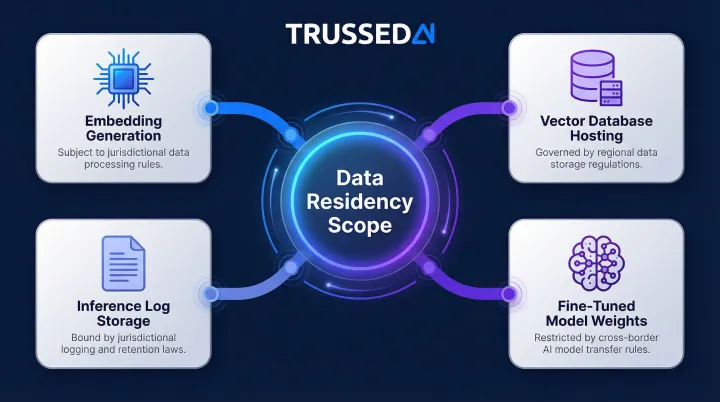

Data residency in an AI context is not just where the training data lives. It includes:

- Where embeddings are generated

- Where vector databases are hosted

- Where inference logs are stored (which may contain fragments of original data)

- Where fine-tuned model weights reside

Most AI architectures don't account for all of these. Each must be treated as derived data subject to the same jurisdictional rules as the source data.
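One way to treat derived artifacts the same as source data is to inventory them alongside it and check every item against the same list of permitted regions. The sketch below assumes a hand-maintained inventory; `Asset`, `residency_violations`, and the region labels are hypothetical placeholders, not any cloud provider's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    name: str
    kind: str    # "source", "embedding", "vector_db", "inference_log", "model_weights"
    region: str  # where the asset is generated or stored


def residency_violations(assets, allowed_regions):
    """Return every asset, source or derived, that sits outside the allowed regions."""
    return [a for a in assets if a.region not in allowed_regions]


# Hypothetical inventory for a workload whose source data must stay in one region.
inventory = [
    Asset("claims-db", "source", "eu-west-1"),
    Asset("claims-embeddings", "embedding", "eu-west-1"),
    Asset("claims-vectors", "vector_db", "us-east-1"),
    Asset("inference-logs", "inference_log", "us-east-1"),
    Asset("claims-finetune", "model_weights", "eu-west-1"),
]

for asset in residency_violations(inventory, allowed_regions={"eu-west-1"}):
    print(f"{asset.kind} '{asset.name}' is hosted in {asset.region}")
```

The point of the example is that the vector database and inference logs fail the same check the source database passes, which is the gap the list above describes.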

GDPR implications: The EDPB warns that personal data from training sets can be embedded in model parameters, and that it's possible to extract personal data by querying the model. AI models and embeddings are subject to GDPR compliance and require case-by-case anonymity assessments.

HIPAA implications: HHS explicitly states that AI training data, prediction models, and algorithm data are protected by HIPAA when maintained by regulated entities. Generative AI tools have produced names and personal information from training data in outputs, which could result in impermissible disclosures.

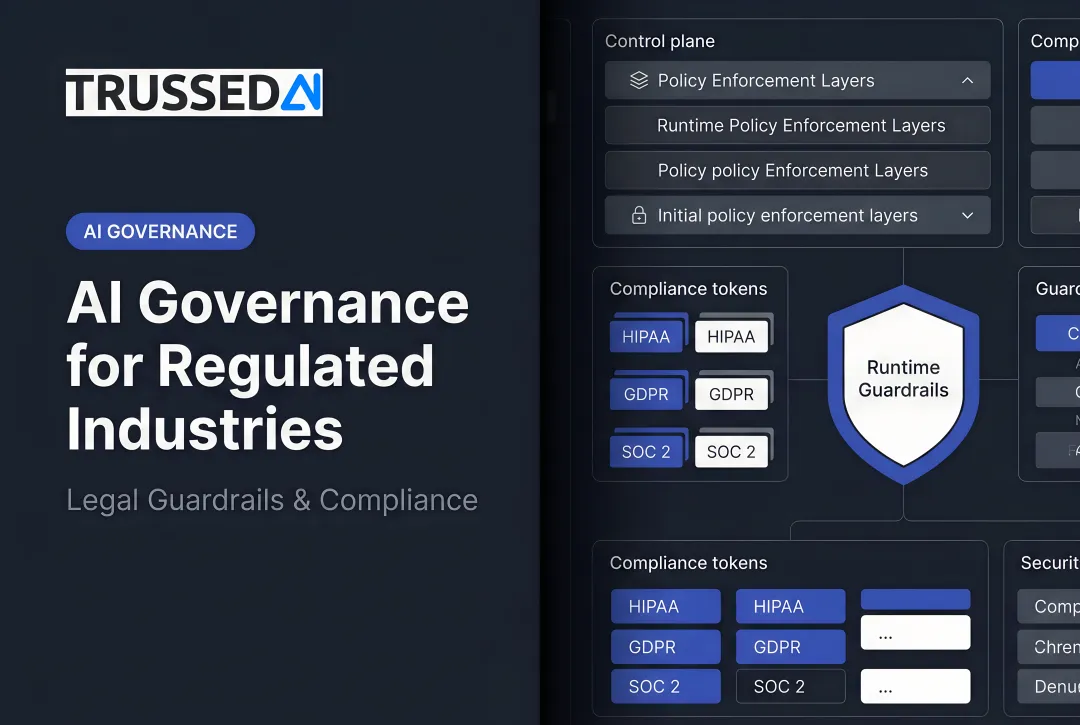

Role-Based Access Control at the Model Level

A single shared API key across teams is unacceptable in a regulated environment. Responsible governance means:

- Different teams can access different data

- Different prompt-level permissions are enforced

- Different escalation thresholds apply based on role

All of this is enforced at the access control layer, not just at the application layer. Without access control at the model level, you have only the illusion of governance - any user with application access can send any prompt and retrieve data they were never meant to see.
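As an illustration of role-based control at the model level, the sketch below evaluates each request against a per-role policy before it reaches a model. The roles, dataset names, template names, and risk scores are all hypothetical, and how a risk score is computed is outside the scope of the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RolePolicy:
    allowed_datasets: frozenset
    allowed_prompt_templates: frozenset
    escalation_threshold: float  # risk scores at or above this go to a human


# Hypothetical policies; real ones would be loaded from a policy store.
POLICIES = {
    "claims_adjuster": RolePolicy(
        frozenset({"claims"}), frozenset({"summarize_claim"}), 0.7
    ),
    "data_scientist": RolePolicy(
        frozenset({"claims_deidentified"}), frozenset({"summarize_claim", "free_form"}), 0.4
    ),
}


def authorize(role: str, dataset: str, template: str, risk_score: float) -> str:
    """Return "allow", "escalate", or "deny" for a single model request."""
    policy = POLICIES.get(role)
    if policy is None:
        return "deny"  # unknown roles get nothing by default
    if dataset not in policy.allowed_datasets:
        return "deny"
    if template not in policy.allowed_prompt_templates:
        return "deny"
    if risk_score >= policy.escalation_threshold:
        return "escalate"
    return "allow"
```

Because the decision depends on the caller's role rather than on which application sent the request, a shared API key cannot satisfy it: the identity has to travel with every call.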

Where AI Governance Breaks Down in Practice

Failure Point 1: Compliance Involvement Comes Too Late

When compliance and legal teams see the AI for the first time at the deployment gate, organizations end up redesigning significant portions of their systems after the fact. Governance reviews should happen at the design stage, not the deployment stage. That means giving compliance teams a working framework early enough to shape decisions - not a finished product to accept or send back.

Failure Point 2: No Clear Owner for AI-Specific Risk

AI introduces novel risks that don't map cleanly to existing risk register categories:

- Hallucination and factually incorrect outputs

- Data leakage through model inputs or inference logs

- Prompt injection in user-facing applications

- Model drift degrading accuracy over time

- Access control gaps in agentic workflows

Each risk needs an assigned owner, a defined mitigation strategy, and a scheduled review cycle. Without that structure, these issues sit unclaimed in the space between AI teams and compliance teams, and nothing gets resolved.
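The owner, mitigation, and review-cycle requirement is simple enough to check automatically. The sketch below is a hypothetical risk register, with `Risk`, `open_gaps`, and the 90-day default interval chosen purely for illustration, that reports which AI-specific risks are still unclaimed or overdue.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Risk:
    name: str
    owner: Optional[str]
    mitigation: Optional[str]
    last_reviewed: Optional[date]
    review_interval_days: int = 90  # assumed default, adjust per risk


def open_gaps(risks, today: date) -> dict:
    """Map each risk name to the list of things it is missing."""
    gaps = {}
    for risk in risks:
        problems = []
        if not risk.owner:
            problems.append("no owner")
        if not risk.mitigation:
            problems.append("no mitigation")
        overdue = (
            risk.last_reviewed is None
            or today - risk.last_reviewed > timedelta(days=risk.review_interval_days)
        )
        if overdue:
            problems.append("review overdue")
        if problems:
            gaps[risk.name] = problems
    return gaps
```

Running a check like this on a schedule turns "nobody owns prompt injection" from something discovered in an audit into something flagged as soon as the register entry is created.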

Failure Point 3: Vendor Contracts Weren't Written for AI

Cloud AI provider agreements typically don't address:

- Whether the provider trains models on customer data

- How long inference logs are retained

- Who bears liability for AI-generated outputs

These terms must be explicitly negotiated. Regulated organizations should push for contract provisions covering:

- Data usage rights (including training data restrictions)

- Retention and deletion policies for inference logs

- Indemnification for AI-generated outputs

- Alignment with applicable regulatory frameworks

Enforcing AI Governance at Runtime, Not Just on Paper

Most AI governance programs produce documentation but not enforcement. A policy document that says "AI outputs must be auditable" doesn't make them auditable. A governance framework that requires access controls doesn't enforce them. That gap between written governance and enforced governance is where regulatory exposure lives - and it's where most regulated organizations currently operate.

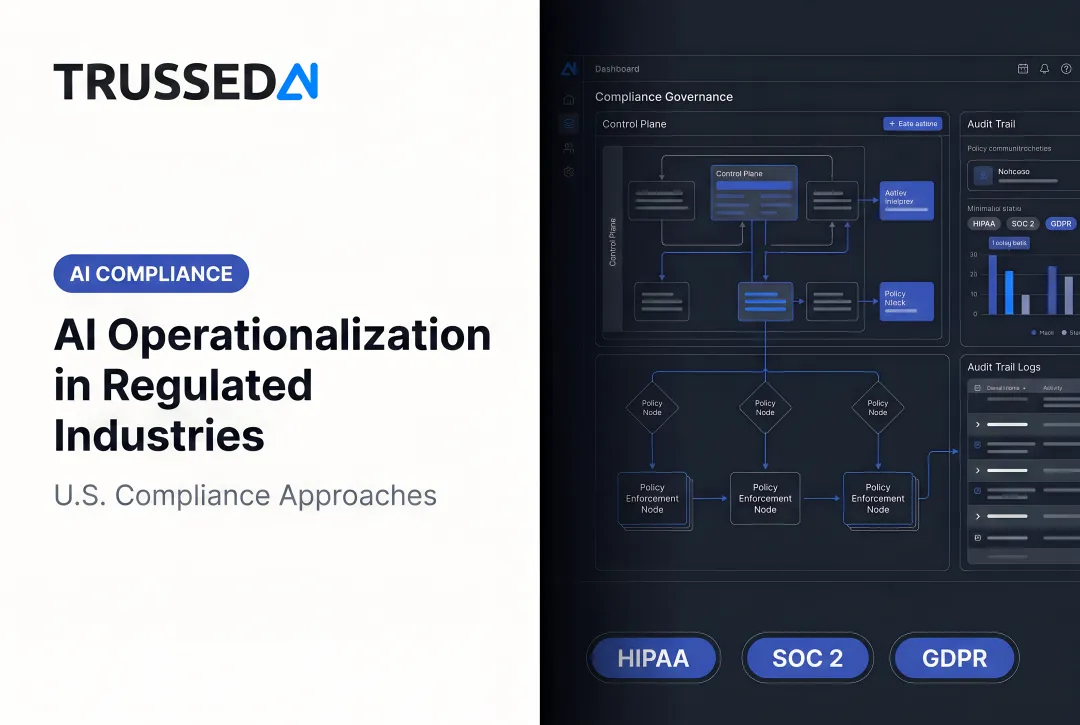

Runtime governance enforcement means governance controls that are active at the moment of AI inference, not just present in documentation. Policy enforcement happens at the proxy layer - sitting between the application and the model. Every AI call is checked against access policies and logged with a full trace. Routing follows the rules in force, regardless of which application is calling the model.
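A minimal sketch of that proxy pattern follows, assuming the policy check, the model client, and the audit sink are supplied by the caller. `governed_call` and its parameters are hypothetical names; a real proxy would sit on the network path rather than in application code, but the control flow is the same: check first, call second, log always.

```python
import time
import uuid


def governed_call(user, role, prompt, model_fn, is_allowed, audit_sink):
    """Check policy, call the model, and record a trace for every outcome.

    model_fn(prompt) -> str is the underlying model client.
    is_allowed(role, prompt) -> bool is the policy check.
    audit_sink is anything with an append() method, such as a list.
    """
    started = time.monotonic()
    trace = {"trace_id": str(uuid.uuid4()), "user": user, "role": role}

    # Denied requests never reach the model, but they are still logged.
    if not is_allowed(role, prompt):
        trace["decision"] = "deny"
        audit_sink.append(trace)
        raise PermissionError(f"role '{role}' is not permitted to send this request")

    trace["decision"] = "allow"
    try:
        output = model_fn(prompt)
        trace["status"] = "ok"
        return output
    except Exception as exc:
        trace["status"] = f"error: {type(exc).__name__}"
        raise
    finally:
        # Runs on success and on failure, so no allowed call goes unrecorded.
        trace["latency_ms"] = (time.monotonic() - started) * 1000
        audit_sink.append(trace)
```

Because the application only ever sees `governed_call`, it cannot skip the policy check or the trace, which is what distinguishes enforcement from a written requirement.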

The Advantage of a Governance Control Plane

When governance is enforced at the infrastructure layer rather than the application layer, every AI workload deployed on top of it inherits the controls automatically. New use cases don't require re-solving compliance from scratch. Teams move faster precisely because governance is no longer a separate workstream - it's built into how the AI runs.

Trussed AI is built around this model: a unified control plane that enforces governance at runtime across AI apps, agents, and developer tools. Static policies become live controls, and audit evidence is generated automatically as a byproduct of every governed interaction.

In practice, this means teams get:

- Consistent policy enforcement across every model, agent, and workflow

- Automatic audit trails without manual logging or documentation prep

- Compliance coverage that extends to new use cases without additional configuration

- Full visibility across both developer environments and production systems

Addressing the Speed Objection

Regulated organizations often assume that adding governance controls adds latency and slows teams down. That assumption doesn't hold when governance is built into infrastructure rather than bolted on as process. Runtime policy enforcement at the proxy layer adds under 20ms of overhead. The real time cost sits in manual review cycles and compliance rework - both of which infrastructure-level governance eliminates.

Audit Readiness as a Continuous State

When governance is enforced at runtime, audit evidence is not something teams prepare for an auditor - it's continuously generated as AI runs. Every governed interaction produces a complete, timestamped, immutable record of what was accessed, by whom, under what policy. Auditors get a verifiable record without any preparation sprint. Compliance teams stop scrambling to reconstruct what happened and start pointing to what the system already captured.

Frequently Asked Questions

What does responsible AI governance mean for regulated industries specifically?

Responsible AI governance in regulated industries is the set of enforced controls, audit evidence, and accountability structures that ensure AI systems operate within defined regulatory and ethical boundaries, and can prove it to an auditor. It differs from general AI governance by emphasizing a higher evidentiary standard and alignment with specific regulatory frameworks like HIPAA, GDPR, and MiFID II.

What are the most important regulations governing AI deployment in healthcare, insurance, and financial services?

Core frameworks include HIPAA and FDA guidance (healthcare), NAIC model bulletins (insurance), MiFID II and AML/KYC obligations (financial services), and the EU AI Act's high-risk classification. AI-specific rules are layering rapidly on top of existing data and privacy frameworks, so the landscape is still evolving.

How is AI governance different when deploying AI agents versus single AI models?

With AI agents, each autonomous action is a potential compliance event. Multi-step workflows can make decisions across systems without human review at every step, so governance must cover the full action chain - not just the final output - with controls that can interrupt or log actions in real time.

What should be included in an AI audit trail for a regulated industry?

A complete audit trail captures model version, prompt template, retrieval context and source documents (for RAG systems), requesting user identity, timestamp, and the active policy state at the time of the interaction. All records should be stored in append-only, immutable storage with retention periods matched to applicable regulatory requirements.

How do you build AI governance that doesn't slow down development teams?

Governance built as infrastructure - enforced at a proxy or control plane layer rather than through manual review processes - actually accelerates development by removing per-project compliance rework. Teams build on top of a governed foundation, and compliance is inherited rather than earned from scratch each time.

What is the difference between an AI governance framework and AI governance infrastructure?

A governance framework (NIST AI RMF, EU AI Act, ISO/IEC 42001) defines what organizations should do. Governance infrastructure is the technical layer that enforces it: the proxy, the policy engine, the audit logging system, and the access controls. Regulated industries need both: frameworks to define the standards and infrastructure to enforce them at runtime.