The core tension is clear: AI adoption accelerates across every department and role, while governance infrastructure lags behind. Employees default to whatever tool gets the job done, with or without approval. This article walks through why enforcement fails, what a well-constructed AI usage policy needs to cover, and the specific best practices that turn policy language into runtime controls.

TLDR

- Written AI policies fail when they rely on employee memory instead of technical controls built into the environments where AI is actually used

- The biggest enforcement gaps: no technical guardrails, no audit trail, no alignment with daily workflows

- Effective enforcement requires layered policies (org-wide, team, individual, per-model) combined with intent-based controls that understand context

- Shadow AI is the most common symptom of a policy-without-enforcement gap

- Bridging policy and enforcement requires governance infrastructure that runs continuously and generates compliance evidence automatically

Why AI Usage Policies Fail Without Enforcement

Organizations publish acceptable use statements outlining high-level principles - "don't share sensitive data," "only use approved tools" - but provide no practical mechanism for employees to comply. The result: a gap between intention and actual behavior that creates a false sense of security.

Three structural failure modes plague written-only policies:

- Relies on goodwill, not controls - Policies depend on employee memory rather than automated enforcement that prevents violations before they occur

- No usage record - Without logged activity, there's nothing to present to regulators or investigate during an incident

- Friction drives workarounds - Employees need to be productive and will reach for whatever tool solves problems fastest, regardless of policy

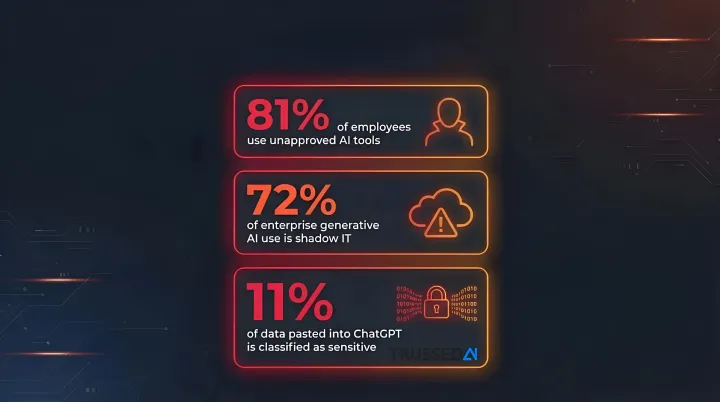

The Shadow AI Epidemic

These failure modes produce a predictable outcome: shadow AI. 81% of employees and 88% of security leaders report using unapproved AI tools. Even more concerning, 11% of data pasted into ChatGPT is classified as sensitive, with 4.7% of employees having shared confidential company information.

Employees turn to ChatGPT, Gemini, Claude, or open-source models on personal accounts with zero organizational visibility. 72% of generative AI use in the enterprise is shadow IT, driven by individuals using personal accounts to access AI apps. When blocking applications is attempted, 45% of workers find workarounds.

Why Legacy Security Tools Can't Fill the Gap

Shadow AI persists partly because the tools meant to stop it weren't built for this problem. Traditional DLP, firewalls, and URL blockers were designed for file transfers and email attachments, not conversational AI interactions.

Traditional DLP relies on keyword and regular expression matching, which fails because sensitive data cannot always be represented by unvarying keywords. Obfuscation easily thwarts these systems; replacing hyphens with letters in a Social Security Number bypasses standard filters.
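The obfuscation problem is easy to reproduce. The sketch below assumes a typical hyphen-separated Social Security Number pattern; the pattern, the function name `keyword_dlp_flags`, and the sample strings are illustrative and not taken from any specific DLP product.

```python
import re

# Assumed DLP-style rule: three digits, two digits, four digits, hyphen-separated.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def keyword_dlp_flags(prompt: str) -> bool:
    """Return True if the naive pattern matches anywhere in the prompt."""
    return SSN_PATTERN.search(prompt) is not None


# Illustrative values only (not real identifiers).
plain = "Customer SSN is 123-45-6789, please summarize the file."
obfuscated = "Customer SSN is 123x45x6789, please summarize the file."

print(keyword_dlp_flags(plain))       # True: the literal format is caught
print(keyword_dlp_flags(obfuscated))  # False: same data, hyphens swapped for letters
```

Widening the pattern to cover one variant only invites the next one, which is why pattern lists alone tend to trail behind how people actually phrase prompts.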

Legacy tools also cannot assess the context or intent behind a prompt. They cannot distinguish between a legal associate pasting contract language for clause comparison versus an employee leaking client communications. Their binary block-or-allow logic generates constant exception requests that push employees toward unsanctioned tools.

Evasion benchmarks demonstrate the problem. In one comparative study of LLM guardrails, 42 out of 51 undetected malicious prompts used role-play scenarios designed to mask malicious requests from input filters. At the other extreme, highly sensitive guardrails frequently misclassified harmless queries as threats, particularly code review prompts.

The Regulatory Stakes

In regulated industries (healthcare, financial services, insurance), an inability to demonstrate that AI policies were enforced creates direct compliance exposure. Regulators across jurisdictions now require demonstrable technical controls, not just policy documents.

Key regulatory frameworks demanding technical enforcement:

- HIPAA (2025 Security Rule NPRM): Proposes requiring organizations to deploy technology assets and technical controls that monitor all activity in relevant systems in real time and identify unauthorized activity

- EU AI Act: Mandates automatic recording of events and logs, with full applicability by August 2026

- GDPR: Requires data protection by design and records of processing activities; Italy fined OpenAI €15 million for data privacy violations

The financial consequences are severe. Organizations with high levels of shadow AI observed an average of $670,000 in higher breach costs than those with low or no shadow AI. Furthermore, 97% of AI-related security breaches involved systems lacking proper access controls.

What an Effective AI Usage Policy Should Cover

Define Scope Boundaries First

A policy must specify:

- Which AI tools are approved vs. prohibited

- Which data categories can and cannot be input into AI systems

- Which use cases are permitted by role or department

- What happens when a boundary is crossed

Skip this specificity and you have a document, not a control.

Implement Role-Based Differentiation

A one-size-fits-all policy creates problems in both directions - blocking legitimate use cases for technical teams or granting too much latitude to departments handling regulated data.

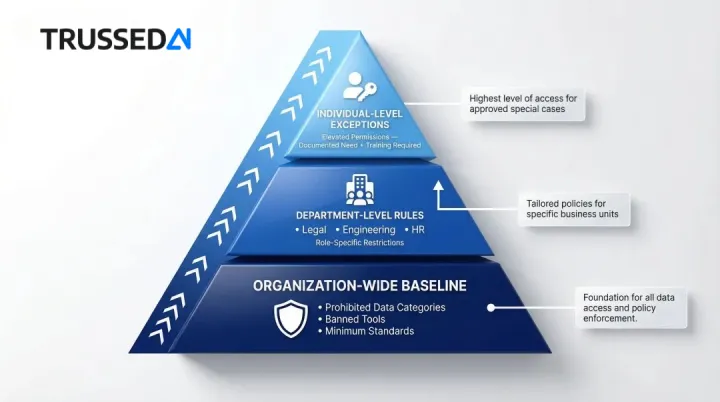

Three tiers make this manageable:

- Organization-wide baseline - Prohibited data categories, banned tools, and minimum standards every employee follows

- Department-level rules - Legal blocks external processing of client communications; engineering may use code generation tools but not with proprietary algorithms; HR restricts AI access to anonymized data only

- Individual-level exceptions - Elevated permissions tied to documented business need and completed training

This layered approach ensures governance scales without creating bottlenecks or exposure.

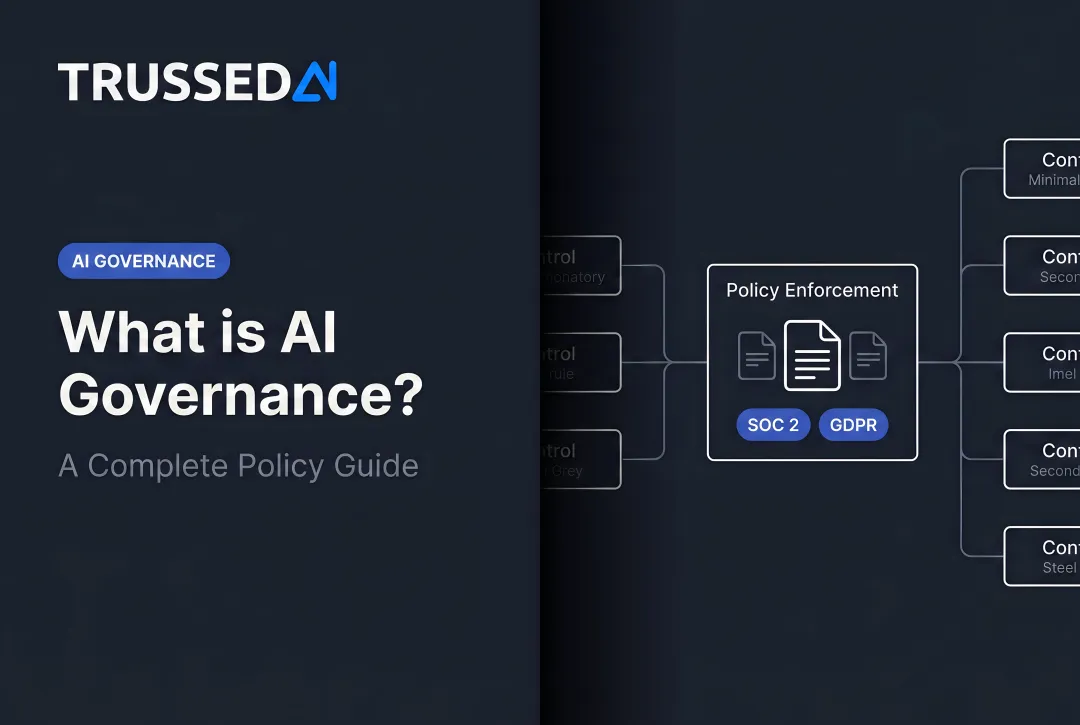

Apply Per-Model Governance

Different AI providers have different data handling agreements, retention policies, and security postures. An effective policy distinguishes between:

- Approved models (unrestricted use for defined use cases)

- Approved models with conditions (requires data sanitization, logging, or human review)

- Prohibited models (banned due to data residency, retention, or security concerns)

Without this distinction, employees can inadvertently expose regulated data through a model they consider "approved" - because to them, approved means allowed.
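One way to make the three categories machine-readable is a small model registry. The following is a minimal sketch; the model names, condition labels, and the default-deny behavior for unknown models are assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class ModelStatus(Enum):
    APPROVED = "approved"
    CONDITIONAL = "approved_with_conditions"
    PROHIBITED = "prohibited"


@dataclass(frozen=True)
class ModelRule:
    status: ModelStatus
    conditions: Tuple[str, ...] = ()  # only meaningful for CONDITIONAL models


# Hypothetical registry; real entries would come from vendor and security review.
MODEL_REGISTRY = {
    "internal-llm": ModelRule(ModelStatus.APPROVED),
    "vendor-a-chat": ModelRule(
        ModelStatus.CONDITIONAL, ("sanitize_input", "log_interaction")
    ),
    "vendor-b-free-tier": ModelRule(ModelStatus.PROHIBITED),
}


def rule_for(model_name: str) -> ModelRule:
    """Look up a model's rule; unknown models are treated as prohibited (assumption)."""
    return MODEL_REGISTRY.get(model_name, ModelRule(ModelStatus.PROHIBITED))


print(rule_for("vendor-a-chat").conditions)
print(rule_for("some-new-tool").status)
```

Keeping the conditions next to the status means "approved" never travels without the strings attached to it.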

Cover Data Classification Requirements

Employees need to recognize and categorize data before they interact with AI. The policy should include:

- Public - Freely shareable; no restrictions on AI use

- Internal - Company information; AI use permitted with approved tools only

- Confidential - Proprietary data; requires sanitization or tokenization before AI interaction

- Regulated - HIPAA, PCI, GDPR-protected data; prohibited from external AI models

Most policy violations aren't intentional; they happen because employees don't recognize what category their data falls into before pasting it into a prompt.
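The four categories above translate fairly directly into a lookup that a control could apply on the employee's behalf. This sketch follows the handling stated for each category; the function name, the return labels, and the choice to escalate regulated data bound for an internal model (a case the categories do not specify) are assumptions.

```python
from enum import Enum


class DataClass(Enum):
    PUBLIC = "public"
    INTERNAL = "internal"
    CONFIDENTIAL = "confidential"
    REGULATED = "regulated"


def handling_for(data_class: DataClass, tool_approved: bool, model_is_external: bool) -> str:
    """Map a data classification to a handling decision for one AI interaction."""
    if data_class is DataClass.PUBLIC:
        return "allow"  # no restrictions on AI use
    if data_class is DataClass.INTERNAL:
        return "allow" if tool_approved else "block"  # approved tools only
    if data_class is DataClass.CONFIDENTIAL:
        return "sanitize_then_allow"  # sanitize or tokenize first
    # REGULATED: prohibited from external models.
    if model_is_external:
        return "block"
    # Internal handling is not specified above; escalating is an assumption.
    return "escalate_for_review"


print(handling_for(DataClass.INTERNAL, tool_approved=False, model_is_external=True))
print(handling_for(DataClass.REGULATED, tool_approved=True, model_is_external=True))
```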

Define Human Accountability

The policy must name:

- Who owns AI governance

- Who approves and documents exceptions

- Who handles escalations when a potential violation surfaces - and within what timeframe

Policies that lack named accountability are treated as aspirational rather than operational.

Best Practices for Enforcing AI Usage Policies at Runtime

Best Practice 1: Embed Controls in the Environment, Not in a Document

Technical enforcement means policy rules are evaluated at the moment of interaction - before data leaves the organization or before a response is generated. Unlike after-the-fact monitoring, which can detect violations but cannot prevent harm, runtime controls stop the problem before it occurs.

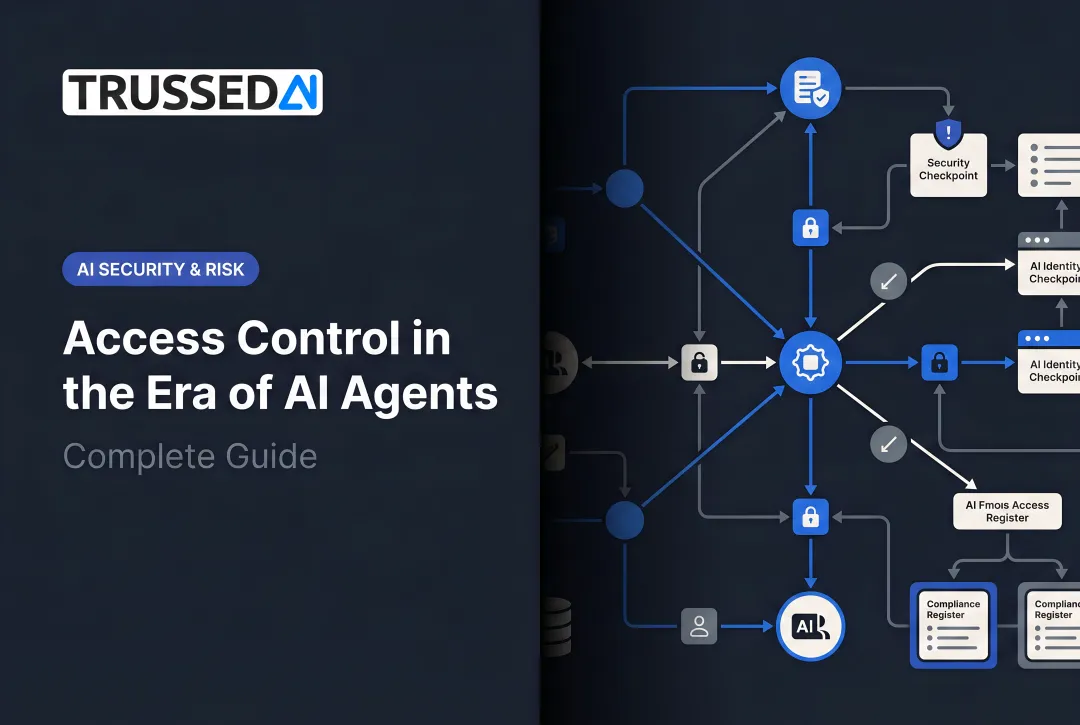

Granular targeting across org, team, individual, and model levels:

Enforcement must adapt to context. The same AI tool carries different risk depending on who is using it, what data they're working with, and which model is receiving the query.

Four enforcement layers:

- Organization-wide baselines - Universal rules that apply to all users and use cases

- Team-level differentiation - Department-specific controls reflecting different risk profiles

- Individual-level exceptions - Role-based permissions for users with documented business need

- Per-model controls - Different rules for different AI providers based on their security posture
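A minimal sketch of how the four layers might be resolved into a single decision follows. The precedence used here is an assumption, not a prescribed design: team rules override the organization baseline, individual exceptions override team rules, and the per-model rule then acts as a floor so the more restrictive of the two outcomes wins. All user, team, and model names are hypothetical.

```python
# Actions ordered from least to most restrictive.
RESTRICTIVENESS = {"allow": 0, "warn": 1, "route": 2, "block": 3}

ORG_BASELINE = {
    "public": "allow",
    "internal": "allow",
    "confidential": "block",
    "regulated": "block",
}
TEAM_RULES = {
    "legal": {"internal": "warn"},
    "engineering": {"confidential": "route"},
}
INDIVIDUAL_EXCEPTIONS = {"alice": {"confidential": "warn"}}
MODEL_RULES = {
    "external-chat": {"confidential": "route"},
    "internal-llm": {},
}


def resolve_action(user: str, team: str, model: str, data_class: str) -> str:
    """Resolve org, team, individual, and per-model layers into one action."""
    # Org -> team -> individual: the more specific layer overrides the broader one.
    action = ORG_BASELINE[data_class]
    action = TEAM_RULES.get(team, {}).get(data_class, action)
    action = INDIVIDUAL_EXCEPTIONS.get(user, {}).get(data_class, action)

    # Models missing from the registry are blocked outright (assumption).
    model_rules = MODEL_RULES.get(model)
    if model_rules is None:
        return "block"

    # The per-model rule acts as a floor: keep whichever action is stricter.
    model_action = model_rules.get(data_class, "allow")
    return max(action, model_action, key=RESTRICTIVENESS.__getitem__)


print(resolve_action("alice", "engineering", "internal-llm", "confidential"))   # warn
print(resolve_action("alice", "engineering", "external-chat", "confidential"))  # route
```

The same user with the same data gets a different outcome depending on the receiving model, which is the behavior the four layers are meant to produce.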

Contextual analysis determines what the user is trying to accomplish and whether that purpose aligns with policy, regardless of whether specific keywords appear.

The practical difference: keyword matching generates constant false positives, while intent-based classification scales without flooding security teams with noise. One approach creates friction; the other creates control.

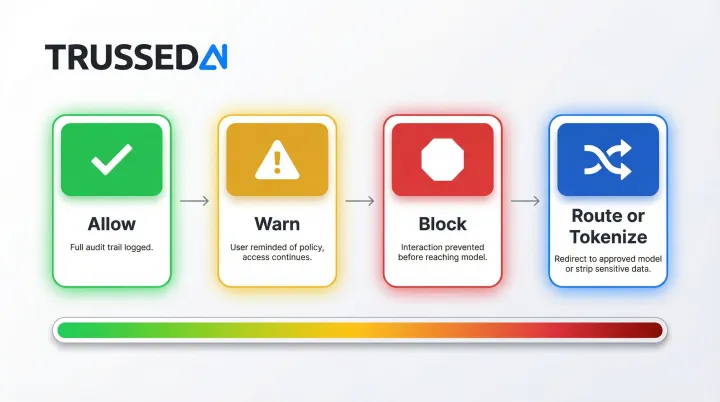

Best Practice 3: Beyond Binary Block or Allow

A four-action model gives security teams the nuance needed to enforce policy without turning every AI interaction into a friction point:

- Allow the interaction with a full audit trail for compliance and investigation

- Warn the user at the moment of risk - policy reminder without blocking access

- Block the interaction before it reaches the model when a clear violation is detected

- Route or tokenize - redirect sensitive queries to an approved internal model, or strip identifying data before queries go external

This spectrum of responses enables organizations to balance security with productivity.
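As a rough sketch, the four actions can be modeled as an enum plus a function that decides where the prompt goes and what the user sees. The `Outcome` fields, the notice wording, and the `internal-llm` default are assumptions for illustration; the tokenize half of the fourth action is left out to keep the example short.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Action(Enum):
    ALLOW = "allow"
    WARN = "warn"
    BLOCK = "block"
    ROUTE = "route"


@dataclass(frozen=True)
class Outcome:
    action: Action
    destination: Optional[str]  # model that receives the prompt; None if blocked
    user_notice: Optional[str]  # message shown to the user, if any


def apply_action(action: Action, requested_model: str,
                 internal_model: str = "internal-llm") -> Outcome:
    """Turn a policy decision into a concrete outcome for one interaction."""
    if action is Action.ALLOW:
        return Outcome(action, requested_model, None)
    if action is Action.WARN:
        return Outcome(action, requested_model,
                       "Reminder: check this prompt against the AI usage policy.")
    if action is Action.ROUTE:
        return Outcome(action, internal_model,
                       "This request was sent to an approved internal model.")
    return Outcome(action, None, "Blocked: this request violates the AI usage policy.")


print(apply_action(Action.ROUTE, "external-chat"))
```

Every branch returns an `Outcome`, so each interaction, including an allowed one, produces something that can be written to the audit trail.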

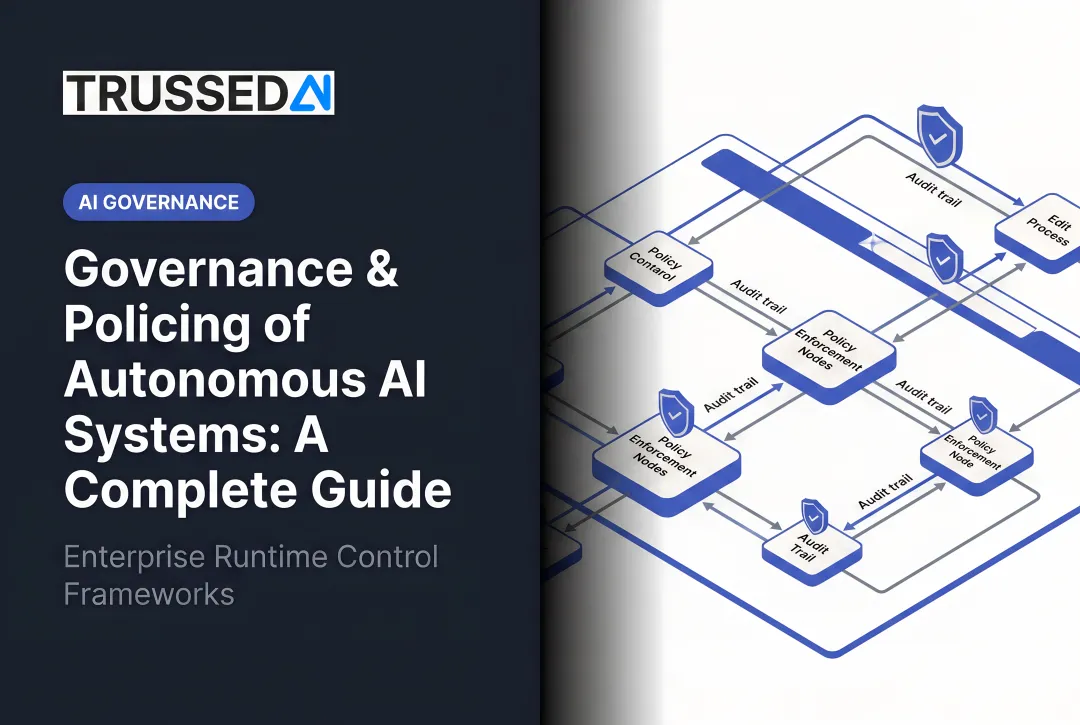

Best Practice 4: Maintain Continuous, Automated Audit Trails

In regulated industries, demonstrating that policies were enforced requires a complete and tamper-evident record of what was submitted, what policy was applied, what action was taken, and what was returned.

An audit-ready governance trail should capture:

- User identity and role

- Timestamp of interaction

- Full prompt content (with appropriate redaction for regulated data)

- Policy rules evaluated

- Action taken (allow, warn, block, route)

- Model and version used

- Response returned

- Data lineage and classification

Manual log reviews cannot scale. Automated audit trails generate compliance evidence as a byproduct of every AI interaction, moving organizations from point-in-time audits to continuous assurance.
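One common way to make a log tamper-evident is a hash chain, where each entry stores the hash of the previous one. The sketch below assumes that technique and uses hypothetical field values; a production system would also need protected storage and key management, which are out of scope here.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Hash a record deterministically (sorted keys give a stable encoding)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_record(log: list, record: dict) -> dict:
    """Append a record whose hash covers its content and the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = dict(record, prev_hash=prev_hash)
    entry = dict(body, hash=_digest(body))
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_record(audit_log, {
    "user": "alice", "role": "legal-associate",
    "timestamp": datetime.now(timezone.utc).isoformat(),
    "prompt": "[REDACTED] compare clause 4 against the template",
    "rules_evaluated": ["org-baseline", "legal-team"],
    "action": "route", "model": "internal-llm", "model_version": "v1",
    "response": "[stored separately]", "data_class": "confidential",
})
print(verify_chain(audit_log))   # True
audit_log[0]["action"] = "allow"  # simulate tampering
print(verify_chain(audit_log))   # False
```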

Best Practice 5: Make Compliance Easy, Not Just Mandatory

Enforcement that constantly blocks or interrupts legitimate work trains employees to find workarounds.

Design principles for friction-reducing enforcement:

- Redirect sensitive queries to an approved model rather than simply blocking them

- Surface policy reminders rather than denying access entirely

- Provide clear guidance on how to modify a request to comply with policy

- Automate data sanitization when possible, removing sensitive elements before they reach external models

When compliance becomes the path of least resistance, shadow AI usage drops.
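Automated sanitization can be sketched as reversible tokenization: sensitive values are swapped for placeholders before the prompt leaves, and restored in the response. The example below handles only email addresses with a simple pattern, which is an assumption made for brevity; as noted earlier, pattern matching alone misses obfuscated data, so a real control would pair this with context-aware detection.

```python
import re

# Simplified email pattern, for illustration only.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def tokenize(prompt: str):
    """Replace each distinct email with a placeholder; return text and mapping."""
    token_for_value = {}

    def _swap(match):
        value = match.group(0)
        if value not in token_for_value:
            token_for_value[value] = f"<EMAIL_{len(token_for_value) + 1}>"
        return token_for_value[value]

    sanitized = EMAIL.sub(_swap, prompt)
    mapping = {token: value for value, token in token_for_value.items()}
    return sanitized, mapping


def detokenize(text: str, mapping: dict) -> str:
    """Restore original values in text that came back from the model."""
    for token, value in mapping.items():
        text = text.replace(token, value)
    return text


original = "Email jane.doe@example.com and bob@example.org, then cc jane.doe@example.com"
sanitized, mapping = tokenize(original)
print(sanitized)  # Email <EMAIL_1> and <EMAIL_2>, then cc <EMAIL_1>
print(detokenize(sanitized, mapping) == original)  # True
```

The user keeps working with the external model, and the mapping never leaves the organization.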

Best Practice 6: Measure and Iterate

Policy effectiveness should be tracked with concrete metrics:

- Track policy violation rates by type, user, and department to identify where risk is concentrating

- Monitor shadow AI discovery counts - unapproved tools signal gaps in approved alternatives

- Watch exception request volume for signs that enforcement friction is pushing employees around the system

- Review audit findings to close compliance gaps before they surface in external reviews

Without these metrics, governance programs operate blind. With them, teams can demonstrate measurable improvement and respond to new AI risks before they become incidents.
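If enforcement events are already being logged, a first version of these metrics is a short aggregation. The event shape and the choice to count both "warn" and "block" outcomes as violations are assumptions; the sample data is made up.

```python
from collections import Counter

# Hypothetical enforcement events, e.g. exported from the audit trail.
sample_events = [
    {"department": "legal", "action": "allow"},
    {"department": "legal", "action": "allow"},
    {"department": "legal", "action": "block"},
    {"department": "legal", "action": "allow"},
    {"department": "engineering", "action": "warn"},
    {"department": "engineering", "action": "allow"},
]


def violation_rates(events, violation_actions=("warn", "block")):
    """Return the share of interactions per department that triggered a violation."""
    totals = Counter(e["department"] for e in events)
    violations = Counter(
        e["department"] for e in events if e["action"] in violation_actions
    )
    return {dept: violations[dept] / totals[dept] for dept in totals}


print(violation_rates(sample_events))  # {'legal': 0.25, 'engineering': 0.5}
```

Tracking the same ratio over time shows whether a policy change reduced risk or simply moved it to another team.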

Moving From Policy Documents to Real-Time Enforcement

Organizations need a governance layer that sits between their users, applications, and AI models. It should intercept interactions, apply policy logic, and log outcomes - without requiring changes to every application that uses AI. Point-in-time audits or manual reviews cannot substitute for continuous control.

When enforcement is infrastructure rather than a process, compliance teams stop chasing violations after the fact. Instead, they operate with continuous assurance. The practical outcomes of that shift include:

- Governance evidence generated automatically as a byproduct of every interaction

- Reduced manual oversight workload for compliance teams

- Audit-ready proof for regulators - not just that a policy exists, but that it ran at runtime

Enforcing AI Policies for AI Agents and Automated Workflows

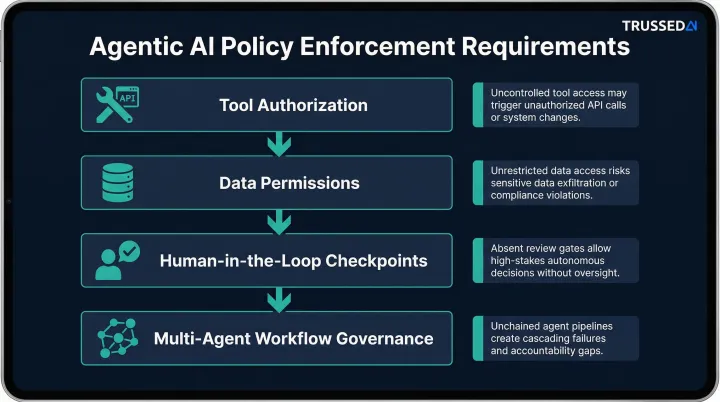

AI agents introduce enforcement gaps that employee-facing policies were not designed to address. Agents can call tools, retrieve data, and execute actions autonomously - without a human in the loop to apply judgment. A policy that only governs what employees type into a chat interface does not cover what an agent does on their behalf.

80% of companies report their AI agents have taken unintended actions, including accessing unauthorized systems (39%), sharing sensitive or inappropriate data (33%), and downloading sensitive content (32%).

Agentic AI requires its own enforcement layer. Policies must define:

- Which tools agents are authorized to call

- What data they are permitted to retrieve or transmit

- When a human-in-the-loop checkpoint is required

- How multi-agent workflows are governed end-to-end

Without per-agent policy controls, an organization's governance posture degrades as autonomous AI workflows expand.
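A per-agent control can start as an allow-list of tools plus a set of tools that require human sign-off. The following is a minimal sketch; the agent ID, tool names, and return labels are hypothetical, and unknown agents are denied by default as an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPolicy:
    allowed_tools: frozenset
    needs_human_approval: frozenset = frozenset()


# Hypothetical policy table keyed by agent ID.
AGENT_POLICIES = {
    "invoice-agent": AgentPolicy(
        allowed_tools=frozenset({"read_invoice", "send_email"}),
        needs_human_approval=frozenset({"send_email"}),
    ),
}


def authorize_tool_call(agent_id: str, tool: str, human_approved: bool = False) -> str:
    """Decide whether an agent may call a tool right now."""
    policy = AGENT_POLICIES.get(agent_id)
    if policy is None or tool not in policy.allowed_tools:
        return "deny"  # unknown agent or tool outside its allow-list
    if tool in policy.needs_human_approval and not human_approved:
        return "pending_approval"  # human-in-the-loop checkpoint
    return "allow"


print(authorize_tool_call("invoice-agent", "read_invoice"))   # allow
print(authorize_tool_call("invoice-agent", "send_email"))     # pending_approval
print(authorize_tool_call("invoice-agent", "delete_records")) # deny
```

Running this check before every tool call, rather than after, is what keeps enforcement ahead of an agent operating at machine speed.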

That governance gap also shapes the monitoring requirement. Because agents operate at machine speed, enforcement cannot be reactive. Real-time visibility into agent actions - what was called, what data was accessed, what was returned - is a prerequisite for both compliance and operational reliability as agentic AI expands across the enterprise.

Frequently Asked Questions

How do you create an AI usage policy?

An effective AI usage policy starts by defining approved tools, permitted use cases by role, data classification rules, and accountability structures. The policy must be specific enough to be technically enforced, not just aspirationally stated, with clear consequences for violations.

What is an acceptable use policy for AI?

An acceptable use policy for AI defines the boundaries employees must operate within: which tools are approved, what data can be shared, and which use cases are permitted. It serves as the documented baseline that runtime enforcement controls are built to uphold.

How do you protect sensitive data when using AI?

Protect sensitive data through role-based access controls and data classification before any AI interaction. Technical guardrails prevent regulated or confidential data from reaching external models, while audit logging tracks exactly how data was handled. Runtime enforcement stops violations before they occur.

What is shadow AI and why is it an enforcement problem?

Shadow AI refers to employees using unapproved AI tools outside organizational visibility. It is the most common symptom of a policy-without-enforcement gap and creates data leakage, compliance, and auditability risks that written policies alone cannot address.

What happens when employees violate AI usage policies?

Without technical controls, violations are typically discovered after the fact, if at all. Runtime enforcement lets organizations intercept, warn, block, or redirect violations as they happen and maintain an audit trail for investigation and regulatory response.

How do you enforce AI policies for AI agents, not just human users?

Enforcing AI policies for agents requires extending governance to cover tool calls, data retrieval, and autonomous actions, not just user-facing interactions. This demands per-agent policy rules and real-time monitoring of agent behavior at runtime, with governance applied before every execution.