Introduction

AI deployments are outpacing the oversight structures meant to govern them. Models are in production, agents are making decisions autonomously, and most organizations still rely on static policies that can't enforce themselves at runtime. The numbers make the gap concrete: 88% of organizations report regular AI use in at least one business function, yet fewer than 25% have fully operationalized enterprise AI governance, despite 87% of executives claiming to have frameworks in place.

ISO/IEC 42001:2023, published in December 2023, is the first international management system standard specifically designed for AI, and it directly addresses that gap. This article breaks down what ISO 42001 requires, how it structures risk management across the AI lifecycle, and what operationalizing it looks like when governance must move from documentation to enforcement.

TLDR

- ISO 42001 establishes a certifiable AI Management System (AIMS) across the full AI lifecycle

- 38 Annex A controls cover governance, data management, lifecycle oversight, and third-party risk

- Risk assessment and continuous monitoring are ongoing core requirements, not one-time exercises

- ISO 42001 complements NIST AI RMF and the EU AI Act rather than replacing them

- Certification signals to regulators and customers that AI systems are governed responsibly

What Is ISO 42001 and Why Does It Matter Now

ISO/IEC 42001:2023 is a certifiable international standard published jointly by ISO and IEC that provides a framework for organizations to establish, implement, maintain, and continuously improve an Artificial Intelligence Management System (AIMS). It is the first global standard of its kind, utilizing a Plan-Do-Check-Act (PDCA) methodology familiar to organizations already certified under other ISO management standards.

Who it applies to: Organizations of all sizes that develop, deploy, provide, or use AI-based products or services, including private companies, public sector entities, and non-profits, across any industry.

The Urgency: Regulatory Pressure Meets Operational Reality

The timing of ISO 42001 reflects accelerating regulatory pressure worldwide. The EU AI Act, proposed national AI legislation in multiple jurisdictions, and rising enterprise pressure to demonstrate AI accountability to regulators, customers, and partners have created an environment where documented governance is no longer enough.

That pressure has a factual basis: 51% of organizations using AI report at least one negative consequence, with AI inaccuracy ranking as a primary driver.

General information security and enterprise risk frameworks don't fully address what AI actually introduces. ISO 42001 was built to cover the gaps:

- Model bias producing discriminatory or inaccurate outputs

- Explainability failures that prevent meaningful human oversight

- Data poisoning attacks that corrupt training or inference

- Behavioral drift, where model outputs shift over time without detection

- Ethical misuse across sensitive use cases

The standard governs these risks across the full AI lifecycle, from inception and design through decommissioning.
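Of the risks above, behavioral drift is the most directly measurable. The sketch below shows one way to detect it, assuming the model emits scores in [0, 1]. It compares the score distribution in a baseline window against a recent window using the Population Stability Index (PSI). PSI is a common drift statistic, but ISO 42001 does not prescribe it. The function names, the 10-bin layout, and the 0.2 alert threshold are all illustrative assumptions.

```python
import math


def bin_proportions(scores: list[float], n_bins: int = 10) -> list[float]:
    """Share of scores falling into each of n_bins equal-width bins over [0, 1]."""
    if not scores:
        raise ValueError("need at least one score")
    counts = [0] * n_bins
    for s in scores:
        # Clamp so out-of-range scores (and exactly 1.0) land in the edge bins.
        idx = min(max(int(s * n_bins), 0), n_bins - 1)
        counts[idx] += 1
    return [c / len(scores) for c in counts]


def population_stability_index(baseline, current, n_bins=10, eps=1e-4):
    """PSI = sum over bins of (cur - base) * ln(cur / base); eps avoids log(0)."""
    base = bin_proportions(baseline, n_bins)
    cur = bin_proportions(current, n_bins)
    return sum((c - b) * math.log((c + eps) / (b + eps)) for b, c in zip(base, cur))


DRIFT_THRESHOLD = 0.2  # hypothetical alert level: a common rule of thumb, not an ISO 42001 value


def check_drift(baseline, current) -> dict:
    psi = population_stability_index(baseline, current)
    return {"psi": round(psi, 4), "drift": psi > DRIFT_THRESHOLD}


# Usage: a baseline spread evenly over [0, 1] vs. a window skewed toward high scores.
baseline = [i / 100 for i in range(100)]
recent = [0.5 + i / 200 for i in range(100)]
print(check_drift(baseline, baseline))  # identical windows: PSI of 0, no drift
print(check_drift(baseline, recent))    # shifted window: drift flagged
```

In practice, the baseline would typically come from validation data or an approved production period. A drift flag would then open a review rather than block the model automatically.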

Certification Benefit

Organizations that achieve ISO 42001 certification demonstrate that their AI management system is not just documented; it's operating effectively under audit. That distinction matters to regulators, enterprise customers, and procurement teams evaluating AI suppliers.

One important boundary: ISO 42001 is not currently listed in the Official Journal of the European Union (OJEU) as a harmonized standard, so certification does not automatically grant a legal "presumption of conformity" with the EU AI Act. Organizations pursuing EU AI Act compliance should treat ISO 42001 as a strong foundation, not a substitute for the Act's specific conformity assessment requirements.

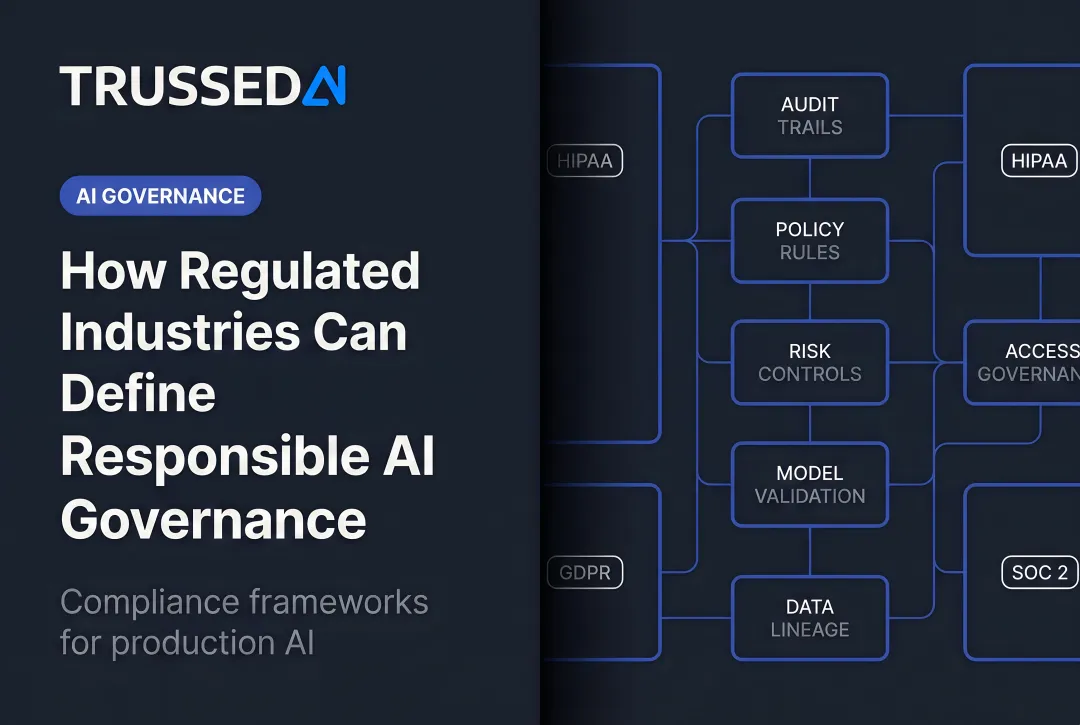

Key Components of ISO 42001: Structure and Annex A Controls

The PDCA Foundation

ISO 42001 follows the High-Level Structure (HLS) common to ISO management system standards, using the Plan-Do-Check-Act (PDCA) methodology. Governance under this framework is iterative, not static. Organizations must continuously evaluate and update their AIMS as AI systems evolve and regulatory expectations shift. The seven foundational elements below map directly to that PDCA cycle.

Seven Foundational Elements

Organizations must establish seven core elements, each tied to a phase of the PDCA cycle:

- Organizational Context: Understanding internal/external environment and stakeholder expectations

- Leadership Commitment: Senior management must set AI policy, define roles, and integrate AI governance into strategic objectives

- AI System Planning: Proactive identification of risks and opportunities

- Resource Allocation: Financial, human, and technological resources for governance

- Operational Controls: Processes for responsible AI development, deployment, and use

- Performance Monitoring: Continuous evaluation mechanisms

- Continuous Improvement: Closing the loop by acting on audit findings, performance gaps, and new risk sources

The Four Annexes

ISO 42001 includes four annexes that provide structure and guidance:

- Annex A (normative): Reference control objectives and controls

- Annex B (normative): Implementation guidance for AI controls

- Annex C (informative): Potential AI-related organizational objectives and risk sources, covering fairness, safety, privacy, bias, data poisoning, and performance drift

- Annex D (informative): Domain-specific guidance for industries like healthcare, finance, and defense

The 38 Annex A Controls

The standard includes 38 reference controls organized into nine objectives:

| Control Group | Focus Area |
|---|---|
| A.2 | Policies related to AI |
| A.3 | Internal organization |
| A.4 | Resources for AI systems |
| A.5 | Assessing impacts of AI systems |
| A.6 | AI system lifecycle |
| A.7 | Data for AI systems |
| A.8 | Information for interested parties |
| A.9 | Use of AI systems |
| A.10 | Third-party and customer relationships |

Not every control is mandatory for every organization. Each organization selects which controls apply through a Statement of Applicability (SOA), based on its specific AI use cases and risk profile.

Statement of Applicability (SOA)

The SOA is a foundational document required for certification. Organizations must formally document which controls they are applying and why. Specifically, the SOA must:

- Identify each Annex A control and state whether it is applied or excluded

- Justify any exclusions with documented rationale

- Demonstrate that selected controls are operational, not just planned

During Stage 1 and Stage 2 audits, external auditors use the SOA to verify that all relevant controls have been considered, selected, and implemented, making it the primary evidence document for certification.
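To make those three requirements concrete, here is a hypothetical sketch of an SOA held as structured data. The check flags controls that were never considered, exclusions without rationale, and applied controls with no operating evidence. The control IDs are placeholders chosen for illustration; a real SOA would list every Annex A control by its identifier in the standard. The class and function names are assumptions, not part of ISO 42001.

```python
from dataclasses import dataclass, field

# Control groups A.2 to A.10, matching the Annex A table in this article.
ANNEX_A_GROUPS = {f"A.{n}" for n in range(2, 11)}


@dataclass
class SoAEntry:
    control_id: str                # illustrative IDs; take real ones from the standard
    applied: bool
    justification: str = ""        # required when a control is excluded
    evidence: list[str] = field(default_factory=list)  # pointers to operating evidence


def group_of(control_id: str) -> str:
    """'A.10.3' -> 'A.10'"""
    return ".".join(control_id.split(".")[:2])


def validate_soa(entries: list[SoAEntry], expected_ids: set[str]) -> list[str]:
    """Return audit-style findings; an empty list means no gaps were detected."""
    findings = []
    covered = {e.control_id for e in entries}
    for missing in sorted(expected_ids - covered):
        findings.append(f"{missing}: not addressed in SoA")
    for e in entries:
        if group_of(e.control_id) not in ANNEX_A_GROUPS:
            findings.append(f"{e.control_id}: not in an Annex A control group")
        if not e.applied and not e.justification.strip():
            findings.append(f"{e.control_id}: excluded without justification")
        if e.applied and not e.evidence:
            findings.append(f"{e.control_id}: applied but no operating evidence")
    return findings


# Usage with placeholder control IDs (a real expected set would list all 38 controls).
entries = [
    SoAEntry("A.2.2", applied=True, evidence=["ai-policy-v3.pdf"]),
    SoAEntry("A.10.3", applied=False),
    SoAEntry("A.7.4", applied=True),
]
for finding in validate_soa(entries, {"A.2.2", "A.7.4", "A.9.2", "A.10.3"}):
    print(finding)
```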

ISO 42001's Approach to AI Risk Management

Risk Assessment and Treatment Requirements

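Clause 6.1.2 requires organizations to define and apply an AI risk assessment process. Clause 6.1.3 requires an AI risk treatment process that selects the controls needed to address identified risks. Clauses 8.2 and 8.3 then require performing those assessments and treatments in operation, at planned intervals and when significant changes occur. Performance evaluation (Clause 9) and improvement (Clause 10) close the loop.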
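ISO 42001 leaves risk criteria and scoring methods to each organization. As one illustrative assumption, the sketch below scores risks on 1-5 likelihood and impact scales. Anything above an organization-chosen threshold of 8 counts as needing treatment, and the check lists, highest score first, the risks that exceed it with no controls assigned. The scales, threshold, and names are hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical organization-defined risk criteria: 1-5 scales, accept scores <= 8.
RISK_ACCEPTANCE_THRESHOLD = 8


@dataclass
class AIRisk:
    risk_id: str
    description: str
    likelihood: int                 # 1 (rare) .. 5 (almost certain)
    impact: int                     # 1 (negligible) .. 5 (severe)
    treatment: list[str] = field(default_factory=list)  # planned or operating controls

    def __post_init__(self):
        for name in ("likelihood", "impact"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{self.risk_id}: {name} must be 1-5, got {value}")

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def untreated_risks(register: list[AIRisk]) -> list[str]:
    """IDs of risks above the acceptance threshold with no treatment, highest score first."""
    open_risks = [r for r in register if r.score > RISK_ACCEPTANCE_THRESHOLD and not r.treatment]
    return [r.risk_id for r in sorted(open_risks, key=lambda r: r.score, reverse=True)]


register = [
    AIRisk("R1", "Bias in training data for credit model", 4, 4, ["pre-release fairness testing"]),
    AIRisk("R2", "Prompt injection in support chatbot", 3, 4),
    AIRisk("R3", "Output drift in document classifier", 5, 3),
    AIRisk("R4", "Minor latency spikes", 2, 2),
]
print(untreated_risks(register))  # R3 (score 15) before R2 (score 12); R1 is treated, R4 is accepted
```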

AI Impact Assessments (AIIAs)

AIIAs are required for high-risk use cases and complement baseline risk assessments by focusing on societal, ethical, and legal impacts. AIIAs specifically evaluate:

- Whether an AI system could cause discrimination

- Potential violations of fundamental rights

- Risks in sensitive domains like healthcare or finance

- Effects on individual autonomy and fairness

Triggers for requiring an AIIA include systems that make decisions materially affecting people, deployment in regulated domains, or flagged risks to fundamental rights. In all cases, AIIAs must include mitigation and monitoring plans, not just flag risks.
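The trigger logic and the mitigation-and-monitoring requirement described above can be expressed as a small check. This is a sketch under stated assumptions: the set of regulated domains, the field names, and the example use case are all hypothetical. A real AIIA is a documented assessment, not a boolean.

```python
from dataclasses import dataclass, field

# Hypothetical list; each organization defines which domains count as regulated.
REGULATED_DOMAINS = {"healthcare", "finance", "insurance"}


@dataclass
class UseCase:
    name: str
    domain: str
    materially_affects_people: bool
    rights_risk_flagged: bool


def aiia_triggers(uc: UseCase) -> list[str]:
    """Return the reasons (if any) that this use case requires an AIIA."""
    reasons = []
    if uc.materially_affects_people:
        reasons.append("decisions materially affect people")
    if uc.domain.lower() in REGULATED_DOMAINS:
        reasons.append(f"regulated domain: {uc.domain}")
    if uc.rights_risk_flagged:
        reasons.append("flagged risk to fundamental rights")
    return reasons


@dataclass
class AIIARecord:
    use_case: str
    triggers: list[str]
    mitigations: list[str] = field(default_factory=list)
    monitoring_plan: str = ""

    def is_complete(self) -> bool:
        # Flagging risks is not enough: mitigation and monitoring must both be present.
        return bool(self.mitigations) and bool(self.monitoring_plan.strip())


credit = UseCase("credit limit scoring", "Finance", True, False)
record = AIIARecord(credit.name, aiia_triggers(credit))
print(record.triggers)        # two triggers: material effect on people, regulated domain
print(record.is_complete())   # False until mitigations and a monitoring plan are added
```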

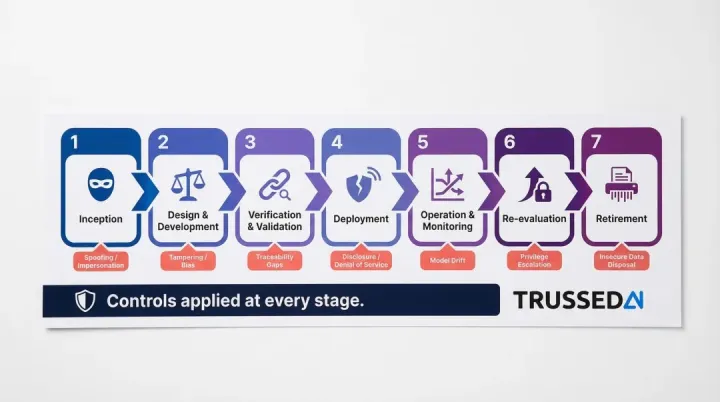

Lifecycle Stages and Risk Application

ISO 42001 requires governance across the AI lifecycle, based on ISO/IEC 22989:2022:

- Inception: Spoofing and impersonation risks

- Design/Development: Tampering, unauthorized changes, bias in training data

- Verification/Validation: Repudiation, lack of traceability

- Deployment: Information disclosure, denial-of-service risks

- Operation/Monitoring: System overload, behavioral drift

- Re-evaluation: Elevation of privilege, model drift

- Retirement: Data retention and disposal

Risk controls must be applied at each stage, not just at launch.
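One lightweight way to catch launch-only governance is to record which controls cover each lifecycle stage and flag stages with none. The stage keys below mirror the list above. The plan contents and the function name are illustrative assumptions.

```python
# Stage keys follow the lifecycle list above; the names are illustrative.
LIFECYCLE_STAGES = (
    "inception",
    "design_development",
    "verification_validation",
    "deployment",
    "operation_monitoring",
    "re_evaluation",
    "retirement",
)


def uncovered_stages(controls_by_stage: dict[str, list[str]]) -> list[str]:
    """Return lifecycle stages, in order, that have no control assigned."""
    unknown = set(controls_by_stage) - set(LIFECYCLE_STAGES)
    if unknown:
        raise ValueError(f"unknown stage(s): {sorted(unknown)}")
    return [s for s in LIFECYCLE_STAGES if not controls_by_stage.get(s)]


# A launch-centric plan: controls exist only around deployment and operation.
plan = {
    "deployment": ["pre-release red-team review"],
    "operation_monitoring": ["output drift alerts", "rate limiting"],
    "retirement": [],
}
print(uncovered_stages(plan))
```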

Threat Modeling Connection

Each lifecycle stage above surfaces specific technical risks, and identifying them takes more than policy checklists. ISO 42001 does not mandate a specific threat modeling framework, but methodologies like STRIDE, the OWASP Top 10 for LLM Applications, and MITRE ATLAS are compatible with it and well-suited to surfacing those risks systematically. In practice, teams that apply structured threat modeling at each lifecycle stage are better positioned to demonstrate due diligence during audits and certification reviews.

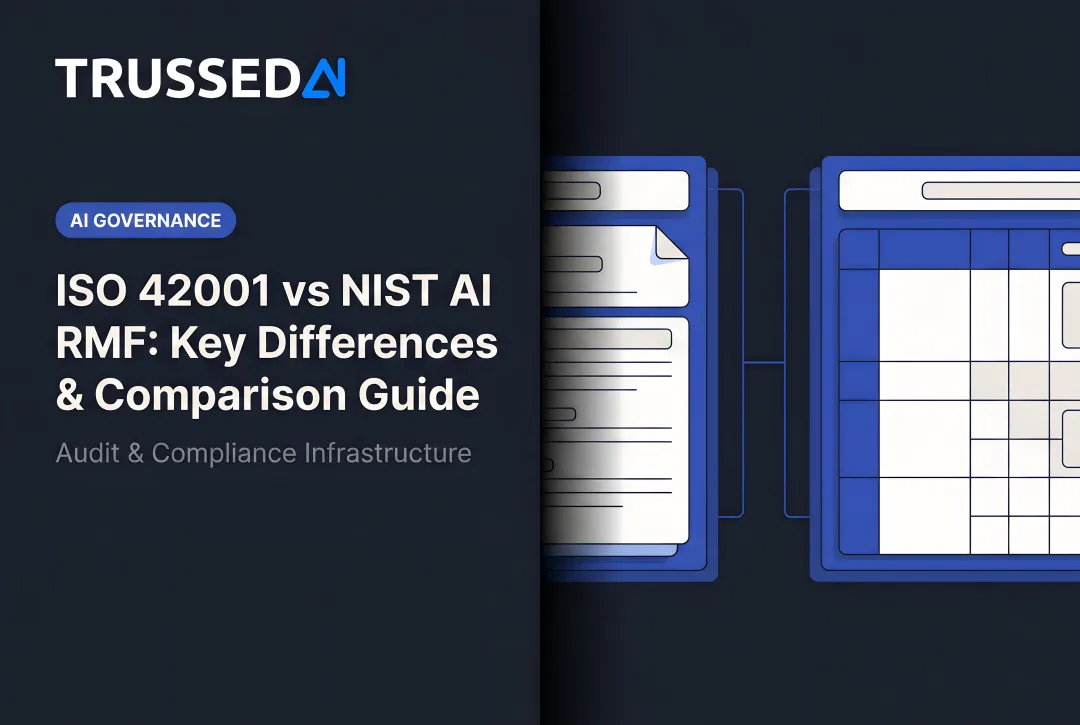

How ISO 42001 Relates to Other AI and Security Standards

Complementing Existing Security Standards

ISO 42001 builds on existing information security management standards rather than replacing them. Organizations with existing security governance frameworks have a structural head start and can extend established processes to meet AI governance requirements.

Existing management systems also shorten implementation. Organizations that already run one can typically complete ISO 42001 implementation in 4-6 months, compared with typical estimates of 9-12 months for mid-market organizations and 12-18+ months for large enterprises.

ISO 42001 vs. ISO 23894

ISO 42001 is a full management system standard: it defines requirements for establishing and running an AIMS, with the possibility of certification. ISO 23894 provides guidance (not requirements) specifically on AI risk management processes. ISO 23894 can be used as a supporting reference within an ISO 42001 program but does not yield certification on its own.

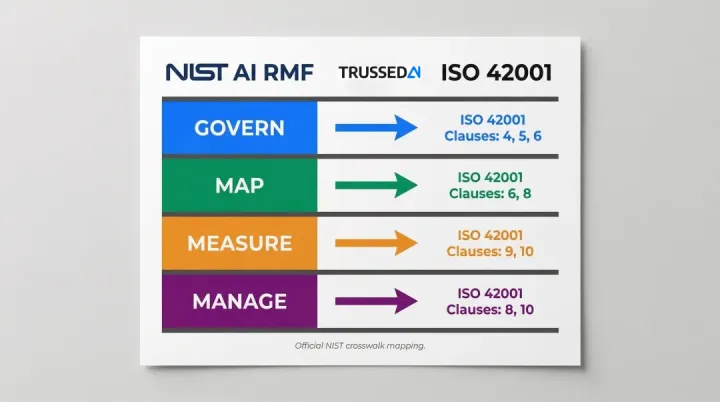

NIST AI RMF Alignment

NIST provides an official crosswalk mapping the AI Risk Management Framework to ISO 42001:

| NIST AI RMF Function | Mapped ISO 42001 Clauses |
|---|---|
| GOVERN | 4.1, 5.2, 6.1.2, 6.1.3, 8.2, 8.3, 8.4 |
| MAP | 6.1.4, 8.2, 8.3, 8.4, B.5.2, B.5.4, B.5.5 |
| MEASURE | 6.1.1, 6.1.2, 8.2, 8.4, 9.1, 9.2.2 |
| MANAGE | 6.1.2, 6.1.3, 6.1.4, 10.1, 10.2 |

Organizations pursuing both frameworks can use this crosswalk to align their implementation work and avoid duplicating effort.
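Encoded as data, the crosswalk can be inverted to show which ISO 42001 clauses serve several NIST functions at once. Those clauses are natural places to build a control once and reuse its evidence. The mapping below is copied from the table above; verify it against NIST's published crosswalk before relying on it. The helper names are hypothetical.

```python
from collections import defaultdict

# Values copied from the crosswalk table above; check them against NIST's published version.
NIST_TO_ISO = {
    "GOVERN": ["4.1", "5.2", "6.1.2", "6.1.3", "8.2", "8.3", "8.4"],
    "MAP": ["6.1.4", "8.2", "8.3", "8.4", "B.5.2", "B.5.4", "B.5.5"],
    "MEASURE": ["6.1.1", "6.1.2", "8.2", "8.4", "9.1", "9.2.2"],
    "MANAGE": ["6.1.2", "6.1.3", "6.1.4", "10.1", "10.2"],
}


def invert(crosswalk: dict[str, list[str]]) -> dict[str, list[str]]:
    """Map each ISO 42001 clause to the NIST AI RMF functions that reference it."""
    iso_to_nist: dict[str, list[str]] = defaultdict(list)
    for function, clauses in crosswalk.items():
        for clause in clauses:
            iso_to_nist[clause].append(function)
    return dict(iso_to_nist)


def shared_clauses(crosswalk: dict[str, list[str]], min_functions: int = 2) -> dict[str, list[str]]:
    """Clauses referenced by at least `min_functions` NIST functions."""
    return {c: fns for c, fns in invert(crosswalk).items() if len(fns) >= min_functions}


for clause, functions in sorted(shared_clauses(NIST_TO_ISO).items()):
    print(f"{clause}: {', '.join(functions)}")
```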

EU AI Act Alignment

ISO 42001's controls align closely with the EU AI Act's requirements for high-risk AI systems, including:

- Risk management processes and documentation

- Technical documentation requirements

- Post-market monitoring obligations

ISO 42001 certification does not currently grant legal "presumption of conformity" with the EU AI Act. It can, however, serve as meaningful evidence of responsible AI governance in EU-regulated markets.

Steps to Implement ISO 42001 and Pursue Certification

Starting Point: Gap Assessment

Conduct a gap assessment comparing current AI governance practices against ISO 42001's clauses and Annex A controls. Many enterprises already have partial overlaps with ISO 42001 in their existing data governance, security, and risk programs.
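A gap assessment can start at the level of the Annex A control groups before drilling into individual clauses and controls. The sketch below assumes a simple three-level status for each group: missing, partial, or in place. The status scale and the example starting point are hypothetical.

```python
# Annex A control groups and focus areas, as listed in the table earlier in the article.
ANNEX_A_FOCUS = {
    "A.2": "Policies related to AI",
    "A.3": "Internal organization",
    "A.4": "Resources for AI systems",
    "A.5": "Assessing impacts of AI systems",
    "A.6": "AI system lifecycle",
    "A.7": "Data for AI systems",
    "A.8": "Information for interested parties",
    "A.9": "Use of AI systems",
    "A.10": "Third-party and customer relationships",
}
STATUSES = ("missing", "partial", "in_place")


def gap_report(current: dict[str, str]) -> dict[str, list[str]]:
    """Group Annex A areas by status; areas not listed in `current` count as missing."""
    report: dict[str, list[str]] = {"missing": [], "partial": []}
    for group, focus in ANNEX_A_FOCUS.items():
        status = current.get(group, "missing")
        if status not in STATUSES:
            raise ValueError(f"{group}: unknown status {status!r}")
        if status != "in_place":
            report[status].append(f"{group} {focus}")
    return report


# Hypothetical starting point: an organization with mature security and data governance.
current_practice = {
    "A.2": "partial",
    "A.3": "in_place",
    "A.4": "in_place",
    "A.7": "partial",
    "A.10": "partial",
}
for status, areas in gap_report(current_practice).items():
    print(status, areas)
```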

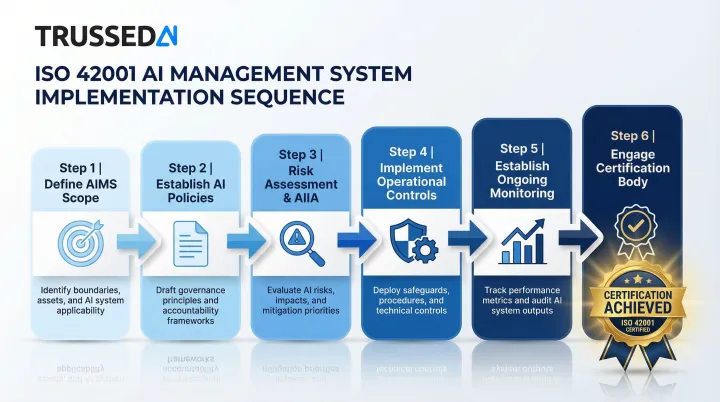

Implementation Sequence

- Define AIMS scope: Which AI systems, products, and use cases are in scope

- Establish AI policies: Assign ownership across product, engineering, legal, compliance, and security teams

- Complete risk assessment and AIIA: For in-scope systems, especially high-risk use cases

- Implement operational controls: Aligned to the Statement of Applicability

- Establish ongoing monitoring: Internal audit and management review processes

- Engage certification body: For Stage 1 (document review) and Stage 2 (on-site audit)

Implementation timelines vary by organization size and existing maturity, as outlined in the estimates above. Certification is valid for three years, with annual surveillance audits.

The Evidence Generation Challenge

Certification requires demonstrating that controls operate effectively over time, not just that policies exist. Organizations need to retain artifacts such as:

- Model design documentation

- Accuracy and performance monitoring logs

- Data audit trails

- Risk assessment records

- Incident reports

Manually gathering this evidence adds up quickly, especially for teams running AI at scale across multiple systems and use cases. The answer is governance infrastructure that produces audit evidence as a byproduct of every governed interaction, rather than as a separate compliance effort.

Trussed AI's platform enforces policies at runtime and automatically maintains complete audit trails. Organizations using it have cut manual governance workload by 50%, improved regulatory compliance by 50%, and had operational workflows live in approximately four weeks.
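The sketch below is a generic illustration of evidence produced as a byproduct. It does not describe how Trussed AI or any other product works. It wraps a model call in a policy check and appends every decision to a hash-chained log, so that later tampering with a record is detectable. The policy, the `call_model` stand-in, and all other names are hypothetical. A production system would also need durable storage and access controls.

```python
import hashlib
import json
import time
from functools import wraps

RESERVED = {"ts", "prev_hash", "hash"}


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only, hash-chained log: editing any earlier record breaks verification."""

    def __init__(self) -> None:
        self.records: list[dict] = []

    def append(self, event: dict) -> dict:
        clash = RESERVED.intersection(event)
        if clash:
            raise ValueError(f"event uses reserved keys: {sorted(clash)}")
        prev_hash = self.records[-1]["hash"] if self.records else "0" * 64
        body = {"ts": time.time(), "prev_hash": prev_hash, **event}
        record = {**body, "hash": _digest(body)}
        self.records.append(record)
        return record

    def verify(self) -> bool:
        prev_hash = "0" * 64
        for record in self.records:
            body = {k: v for k, v in record.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != record["hash"]:
                return False
            prev_hash = record["hash"]
        return True


def governed(log: AuditLog, policy):
    """Decorator: check `policy` before each call and log the decision either way."""
    def decorator(fn):
        @wraps(fn)
        def wrapper(*args, **kwargs):
            allowed, reason = policy(*args, **kwargs)
            log.append({"action": fn.__name__, "allowed": allowed, "reason": reason})
            if not allowed:
                raise PermissionError(reason)
            return fn(*args, **kwargs)
        return wrapper
    return decorator


# Usage with a hypothetical policy and a stand-in for a real model call.
log = AuditLog()


def no_ssn_policy(prompt: str):
    if "ssn" in prompt.lower():
        return False, "blocked: prompt mentions an SSN"
    return True, "ok"


@governed(log, no_ssn_policy)
def call_model(prompt: str) -> str:
    return f"echo: {prompt}"
```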

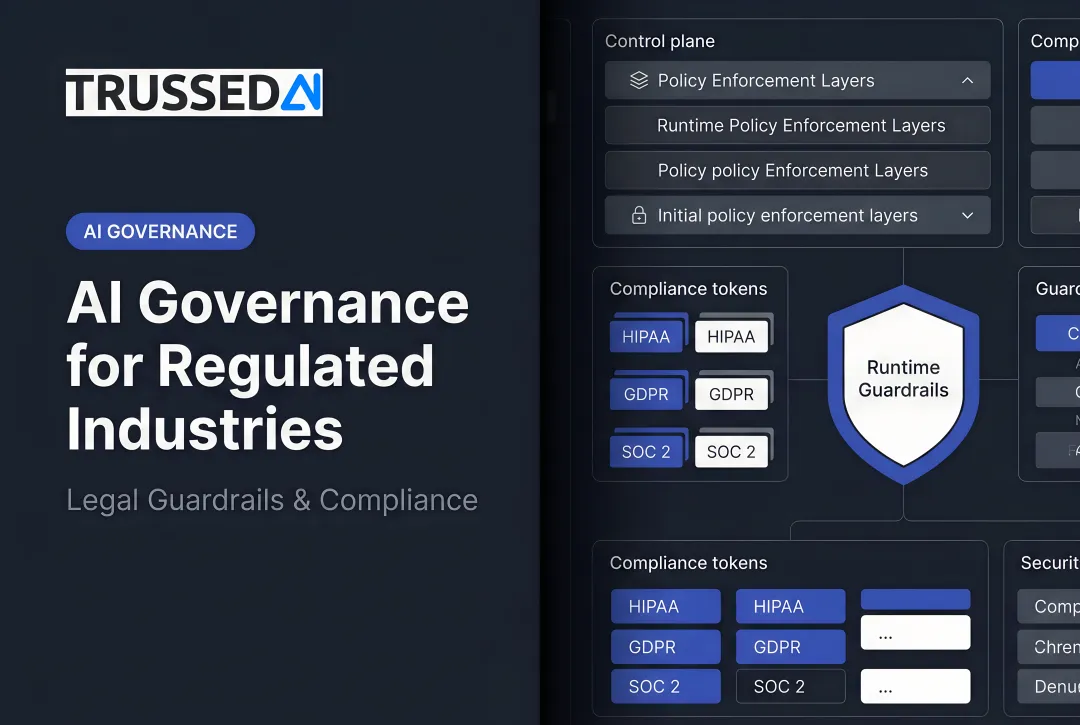

ISO 42001 in Practice: What It Means for Regulated Industries

Elevated Relevance in Regulated Industries

ISO 42001 carries the most weight in regulated industries, where AI systems make decisions that directly affect people's health, finances, and livelihoods. In healthcare, the FDA authorized over 1,000 AI-enabled medical devices between 2015 and 2024. In financial services, AI drives credit decisions, fraud detection, and underwriting. These are precisely the use cases where ISO 42001 requires AI Impact Assessments (AIIAs) and the strongest operational controls.

Sector-Specific Applications

Healthcare:

- Governing diagnostic AI tools

- Patient data use in model training

- Clinical decision support transparency

- Regulatory audit readiness for AI-enabled medical devices

Financial Services:

- Model fairness in lending and credit decisions

- Explainability requirements for automated decisions

- Model audit trails for regulatory examinations

- Fraud detection oversight

Insurance:

- AI-driven underwriting governance

- Claims automation controls

- Risk assessment transparency

Universities and Research Institutions:

- Responsible AI experimentation structure

- Student and faculty AI tool governance

- Research data protection

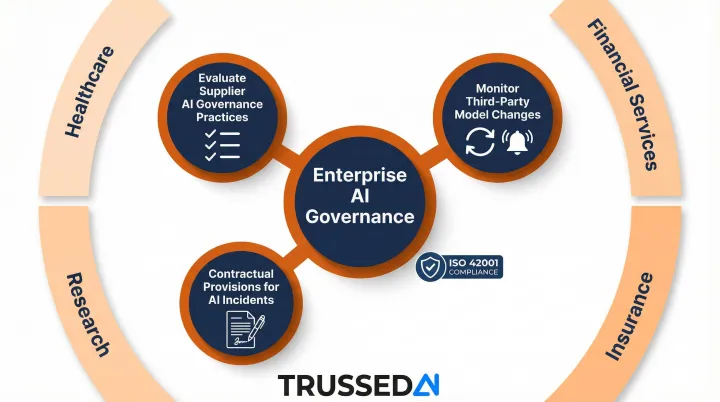

Third-Party AI Risk

Governance obligations don't stop at your organization's boundary. Across all these sectors, AI is increasingly embedded in vendor products, and ISO 42001 holds enterprises accountable for governing those systems too, not just the ones they build internally. The standard requires evaluating supplier AI governance practices, monitoring changes to third-party models, and including contractual provisions for AI-related incidents.
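Monitoring changes to third-party models depends on knowing which version was assessed. The sketch below assumes the vendor exposes a model version identifier, for example in API responses or release notes. It compares that identifier against the version recorded at the last assessment and lists outstanding follow-ups. The vendor, model, and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SupplierModel:
    vendor: str
    model_id: str
    approved_version: str            # version in place when the vendor was last assessed
    governance_assessed: bool        # supplier AI governance practices reviewed
    incident_clause_in_contract: bool


def supplier_actions(model: SupplierModel, observed_version: str) -> list[str]:
    """List follow-ups for one third-party model, given the version currently observed in use."""
    actions = []
    if not model.governance_assessed:
        actions.append("assess supplier AI governance practices")
    if not model.incident_clause_in_contract:
        actions.append("add contractual provisions for AI-related incidents")
    if observed_version != model.approved_version:
        actions.append(
            f"re-assess {model.vendor}/{model.model_id}: "
            f"{model.approved_version} -> {observed_version}"
        )
    return actions


summarizer = SupplierModel("ExampleVendor", "doc-summarizer", "2024-05-01", True, False)
for action in supplier_actions(summarizer, observed_version="2024-09-15"):
    print(action)
```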

Frequently Asked Questions

What is the ISO standard for AI risk?

ISO/IEC 42001:2023 is the primary international management system standard for AI, while ISO/IEC 23894 specifically provides guidance on AI risk management processes. ISO 42001 covers governance broadly and is certifiable; ISO 23894 focuses narrowly on risk identification and treatment within AI programs and is not certifiable.

What is the difference between ISO 42001 and ISO 23894?

The key distinction is scope and certifiability. ISO 42001 defines what your AI management system must do and allows third-party certification. ISO 23894 goes deeper on the how, providing detailed risk assessment methodologies that teams use as a technical reference when building out their ISO 42001 program.

Is ISO 42001 certification mandatory?

No. ISO 42001 certification is currently voluntary, though increasingly expected by enterprise customers and regulators. Its controls align closely with mandatory EU AI Act requirements for high-risk systems, so organizations pursuing certification often find themselves well-positioned ahead of binding compliance deadlines.

How does ISO 42001 complement existing security standards?

Existing information security management standards address security management broadly, while ISO 42001 adds AI-specific governance requirements on top of that foundation. Organizations already holding management system certifications have a structural head start, as both share the PDCA management system structure and many foundational risk management processes.

What are the 38 Annex A controls in ISO 42001?

The 38 Annex A controls are reference control objectives organized across areas including AI policies, organizational structure, resource allocation, lifecycle management, data governance, stakeholder communication, and third-party relations. Organizations select applicable controls via a Statement of Applicability rather than implementing all 38 by default.

How does ISO 42001 relate to the EU AI Act?

ISO 42001 is voluntary; the EU AI Act is binding law. That said, their governance expectations for high-risk AI systems overlap significantly. ISO 42001 certification produces documented evidence of responsible AI practices that can support EU AI Act compliance, but it does not grant automatic legal presumption of conformity.