Introduction

Regulated industries are deploying AI faster than their governance structures can absorb. Claims processors use algorithms to evaluate insurance payouts. Clinical decision support systems recommend treatments. Credit underwriting platforms approve or deny loans. Universities leverage AI to screen applicants and allocate financial aid. Yet governance has not kept pace with adoption, creating not just a compliance gap but a mounting legal and operational liability.

The stakes are uniquely high in regulated sectors. Patients, borrowers, students, and policyholders are all protected by strict legal frameworks that carry severe penalties for violations. When an AI system denies a loan based on protected characteristics, misdiagnoses a patient, or discriminates in hiring, the organization bears full responsibility, regardless of whether the error came from a human or an algorithm.

This guide covers what enterprise leaders need to act on: the legal frameworks already governing AI use, the components of a defensible governance structure, and how to move from static policy documents to enforceable, real-time controls that generate continuous audit evidence.

TL;DR

- Existing laws, including HIPAA, fair lending statutes, employment discrimination law, and SEC rules, apply to AI decisions right now, with no grace period

- Regulated industries face amplified risk because AI outcomes directly affect the people they serve, and regulators know it

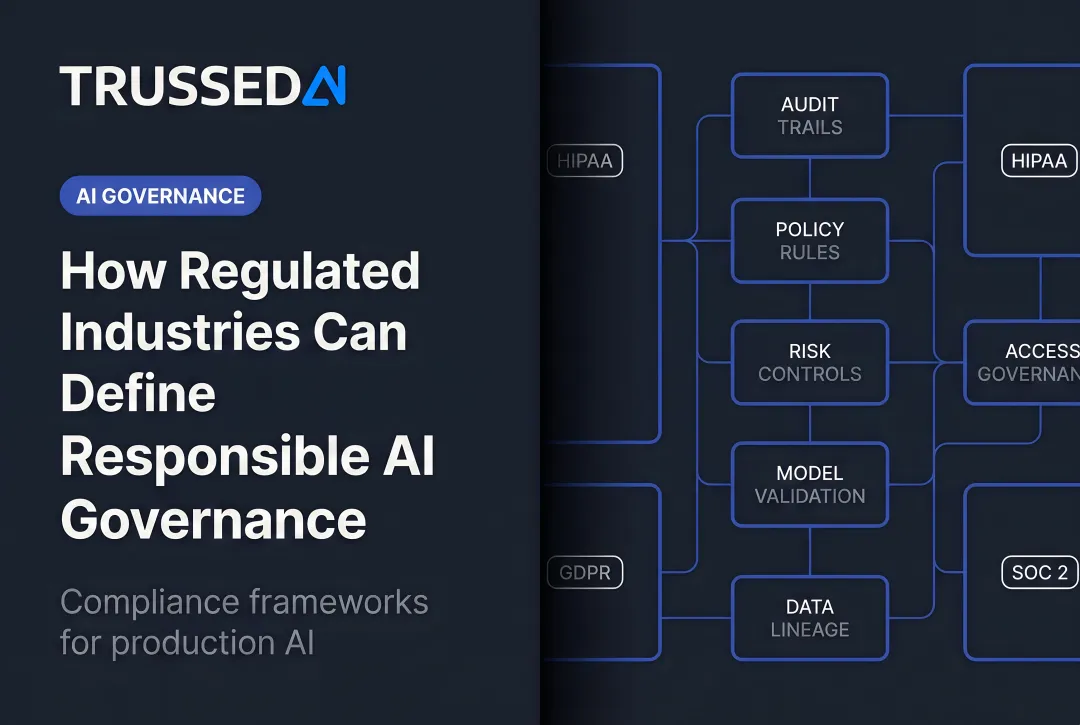

- A governance framework must span the full lifecycle: inventory, risk classification, data integrity, human oversight, and monitoring

- Written policies alone are not governance; enforcement must happen at runtime across every model and agent interaction

- The standard for compliance is demonstrable, auditable evidence of control

Why Regulated Industries Can't Afford to Wing AI Governance

The Categorical Difference

Regulated industries occupy a fundamentally different risk category than other AI adopters. Their AI outputs directly affect high-stakes decisions, including loan approvals, diagnostic recommendations, insurance claims, and student financial aid, where errors carry legal, financial, and patient safety consequences. A recommendation engine that suggests the wrong movie is a minor annoyance. An underwriting algorithm that systematically denies credit to protected classes triggers federal liability and enforcement action.

The Governance Gap

Organizations have rolled out AI applications, agents, and agentic workflows faster than governance structures can absorb them. The problem intensifies as AI moves from experimental projects to production systems making thousands of consequential decisions daily.

Three exposure areas compound simultaneously: regulatory risk, operational fragility, and audit readiness. Miss any one of them and the other two become harder to defend.

Shadow AI amplifies this danger. Employees and teams adopt AI tools outside approved channels. Marketing uses an unapproved chatbot, HR tests a resume screener without legal review, finance experiments with forecasting models. In regulated environments where data sensitivity and access controls are legally mandated, shadow AI creates invisible compliance violations that surface only during audits or enforcement actions.

The Business Case for Mature Governance

The organizations that govern AI well don't move slower. They move faster. Mature governance removes the friction that stalls deployment: unclear accountability, missing audit trails, and regulatory uncertainty that keeps high-value use cases in perpetual review. Organizations with that foundation in place benefit from:

- Pre-approved frameworks that accelerate deployment

- Clear accountability structures that prevent decision bottlenecks

- Audit-ready evidence built into workflows rather than reconstructed after the fact

- Confidence to deploy AI in high-value use cases without regulatory uncertainty

For regulated industries, that confidence isn't a nice-to-have. It's the difference between AI that stays in pilot indefinitely and AI that reaches production.

The Legal Reality: Existing Laws Already Apply to Your AI

Deploying AI to perform a task does not exempt the organization from the regulations governing that task. The law does not care whether bias emerged from a human decision-maker or a neural network. Regulators have made this principle explicit across multiple sectors.

Financial Services

The Equal Credit Opportunity Act (ECOA), Fair Housing Act, and CFPB enforcement authority apply to algorithmic loan decisions. CFPB Circulars 2022-03 and 2023-03 make clear that the complexity or opacity of an AI algorithm is not a valid defense for failing to provide specific, accurate reasons for adverse credit actions.

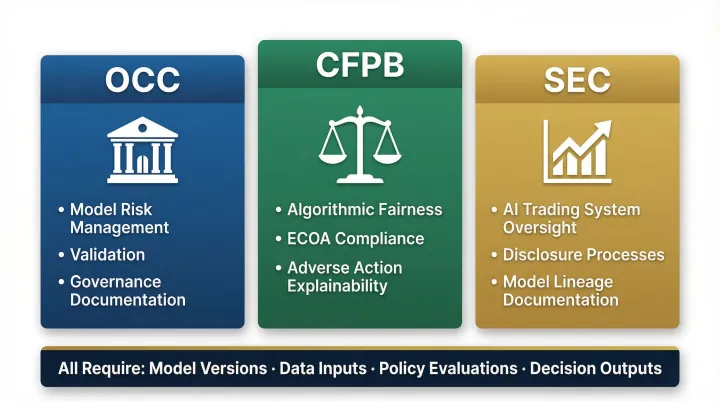

Banking regulators expect the same model risk management frameworks for AI that apply to traditional credit models, including validation, monitoring, and governance documentation. The following agencies scrutinize AI-driven trading systems, disclosure processes, and customer-facing decisions with the same rigor applied to human-executed functions:

- OCC: national bank supervision and model risk guidance

- Federal Reserve: governance expectations for AI in risk management

- SEC: AI-driven trading systems and customer-facing disclosures

Healthcare

HIPAA applies to all AI systems that process protected health information. The FDA regulates AI/ML-based software as a medical device (SaMD), requiring pre-market review for higher-risk applications. The FDA's 2025 draft guidance on AI-Enabled Device Software Functions and its Predetermined Change Control Plan (PCCP) framework call for continuous post-market performance monitoring rather than the traditional one-time, static validation approach.

Physicians remain professionally responsible for AI-assisted clinical decisions. State medical boards have begun clarifying that clinicians bear professional accountability for outcomes generated by AI tools, regardless of the system's sophistication.

Employment and Education

EEOC guidance confirms that employment discrimination laws apply fully to AI hiring tools. Organizations using AI for candidate screening, performance evaluation, or promotion decisions must demonstrate that these systems do not produce discriminatory outcomes based on protected characteristics.

FERPA governs how student data may be used in AI systems at universities. Institutions using AI for admissions or financial aid must demonstrate equitable, auditable processes. Trussed AI's audit and compliance infrastructure helps universities maintain the continuous audit trails and access controls that FERPA-governed AI deployments require.

The Emerging Layer: EU AI Act and State Statutes

The European Union's AI Act (Regulation 2024/1689) introduces risk-tiered requirements for AI systems affecting fundamental rights and safety. High-risk applications, including credit scoring, health insurance pricing, employment screening, and educational assessment, must undergo conformity assessments, maintain technical documentation, and provide transparency to affected individuals by August 2, 2026.

Several U.S. states, including Colorado and Illinois, have enacted or are advancing AI-specific statutes. With the EU AI Act's August 2026 deadline approaching and state statutes advancing on different timelines, compliance frameworks built around a single regulatory snapshot will already be outdated. The more durable approach is governance infrastructure that enforces policy at runtime and generates audit evidence continuously, regardless of which regulation triggers the next review.

The Core Components of an Enterprise AI Governance Framework

Component 1: AI Inventory and Risk Classification

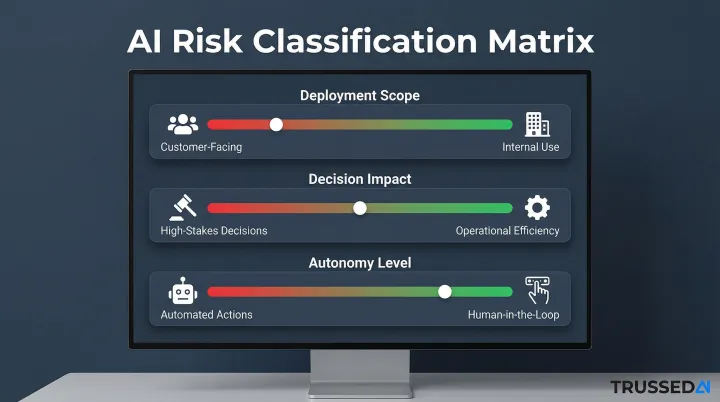

Organizations cannot govern what they have not identified. A comprehensive, continuously updated inventory of all AI systems in use is foundational. Each system must be classified by risk level:

- Customer-facing vs. internal use

- High-stakes decisions vs. operational efficiency

- Automated actions vs. human-in-the-loop workflows

Risk classifications must be reviewed as AI tools evolve and employees discover new uses. What begins as an internal productivity tool can migrate to customer-facing applications, changing its risk profile entirely.
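As a minimal sketch, the three classification axes above could be stored on each inventory entry and reduced to a risk tier. The `AISystem` fields, the tier names, and the scoring rule are illustrative assumptions, not a regulatory standard; a real program would define tiers with legal and compliance input.

```python
from dataclasses import dataclass, replace
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class AISystem:
    """One inventory entry. All field names are hypothetical."""
    name: str
    customer_facing: bool   # customer-facing vs. internal use
    high_stakes: bool       # high-stakes decision vs. operational efficiency
    fully_automated: bool   # automated action vs. human-in-the-loop


def classify(system: AISystem) -> RiskTier:
    """Illustrative rule: high-stakes use is always HIGH; otherwise count the other flags."""
    if system.high_stakes:
        return RiskTier.HIGH
    flags = int(system.customer_facing) + int(system.fully_automated)
    return RiskTier.MEDIUM if flags >= 1 else RiskTier.LOW


inventory = [
    AISystem("meeting-summarizer", customer_facing=False, high_stakes=False, fully_automated=False),
    AISystem("support-chatbot", customer_facing=True, high_stakes=False, fully_automated=True),
    AISystem("credit-underwriting", customer_facing=True, high_stakes=True, fully_automated=False),
]
tiers = {s.name: classify(s) for s in inventory}

# An internal tool that migrates to customer-facing use gets reclassified.
migrated = replace(inventory[0], customer_facing=True)
migrated_tier = classify(migrated)
```

Re-running `classify` whenever an inventory entry changes is one way to keep the risk tier in step with how the tool is actually used.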

Component 2: Data Governance and Integrity

Data governance for AI extends well beyond privacy rules such as GDPR. Data used to train and run AI systems must be relevant, representative, and free from errors that could produce biased or unreliable outputs.

Accountability structure required:

- Legal teams define compliance boundaries

- Data science teams ensure statistical validity

- Compliance teams monitor adherence

- Business owners understand their responsibilities in the AI data lifecycle

Without clear ownership, data quality degrades and compliance gaps emerge undetected.

Component 3: Human Oversight and Accountability

Regulators expect meaningful human involvement in high-stakes AI decisions, not nominal review. Organizations must define:

- When human oversight is required

- What level of review it entails

- How oversight personnel are trained

- How oversight is documented

Regulators will demand evidence that human review occurred and was substantive. "A human signed off" is insufficient without documentation of what was reviewed, what criteria were applied, and what action was taken.
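One way to make that documentation checkable is to capture each review as a structured record and flag sign-offs that lack supporting detail. The sketch below is illustrative only: the `ReviewRecord` fields and the `missing_evidence` helper are hypothetical names, and the required fields should follow the organization's own oversight policy.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass
class ReviewRecord:
    """Evidence of one human review. Field names are hypothetical."""
    decision_id: str
    reviewer: str
    materials_reviewed: List[str]   # what was reviewed
    criteria_applied: List[str]     # what criteria were applied
    action_taken: str               # e.g. "approved", "overridden", "escalated"
    reviewed_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


def missing_evidence(record: ReviewRecord) -> List[str]:
    """Return the names of evidence fields that are empty."""
    gaps = []
    if not record.materials_reviewed:
        gaps.append("materials_reviewed")
    if not record.criteria_applied:
        gaps.append("criteria_applied")
    if not record.action_taken.strip():
        gaps.append("action_taken")
    return gaps


complete = ReviewRecord(
    decision_id="loan-1042",
    reviewer="j.doe",
    materials_reviewed=["credit report", "model explanation"],
    criteria_applied=["debt-to-income threshold"],
    action_taken="approved",
)
# A bare sign-off: someone approved, but nothing shows what they looked at.
rubber_stamp = ReviewRecord("loan-1043", "j.doe", [], [], "approved")

complete_gaps = missing_evidence(complete)
rubber_stamp_gaps = missing_evidence(rubber_stamp)
```

A record with gaps can be routed back to the reviewer before the decision is finalized, rather than discovered during an examination.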

Component 4: Validation, Testing, and Bias Management

Human oversight closes one gap, but it cannot catch errors the model was never tested to surface. Before deployment, higher-risk AI systems must be tested for accuracy, fairness, and robustness. After deployment, ongoing drift monitoring is required because AI systems can produce increasingly problematic outputs over time.

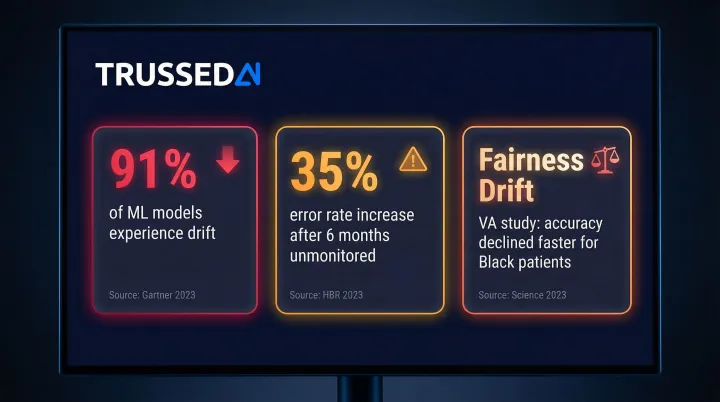

Research shows 91% of machine learning models experience drift, and models left unmonitored for six months see error rates jump 35% on new data. A 2025 VA clinical study revealed "fairness drift," where a pneumonia risk model's accuracy declined significantly faster for Black patients over time. Demographic disparities widened despite overall population-level recalibration.

Fairness audits and bias assessments belong on a defined schedule (quarterly at minimum), with additional checks triggered by model updates, data distribution changes, or adverse outcomes.
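To illustrate why population-level metrics can hide widening disparities, the sketch below computes accuracy per group and flags a review when the gap between groups exceeds a tolerance. The data is synthetic, the group labels are placeholders, and `MAX_GAP` is a hypothetical threshold; accuracy gap is only one of several fairness metrics an audit might use.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Record = Tuple[str, int, int]  # (group, predicted label, actual label)


def accuracy_by_group(records: Iterable[Record]) -> Dict[str, float]:
    """Fraction of correct predictions within each group."""
    correct: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        correct[group] += int(predicted == actual)
    return {g: correct[g] / total[g] for g in total}


def overall_accuracy(records: Iterable[Record]) -> float:
    rows = list(records)
    return sum(int(p == a) for _, p, a in rows) / len(rows)


def fairness_gap(records: Iterable[Record]) -> float:
    """Difference between the best and worst per-group accuracy."""
    acc = accuracy_by_group(records)
    return max(acc.values()) - min(acc.values())


MAX_GAP = 0.10  # hypothetical tolerance set by the governance team

# Synthetic monitoring windows: same model, two points in time.
baseline = [("A", 1, 1), ("A", 0, 0), ("A", 1, 1), ("A", 0, 0),
            ("B", 1, 1), ("B", 0, 0), ("B", 1, 1), ("B", 0, 0)]
current = [("A", 1, 1), ("A", 0, 0), ("A", 1, 1), ("A", 0, 0),
           ("B", 1, 0), ("B", 0, 0), ("B", 1, 1), ("B", 0, 1)]

needs_review = fairness_gap(current) > MAX_GAP
```

In the synthetic `current` window, overall accuracy is still 0.75, but group B has fallen to 0.5 while group A remains at 1.0, so the gap check fires even though the aggregate number alone might not.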

Component 5: Audit Trails and Transparency

Explainability is both a regulatory expectation and a litigation defense. Organizations should be able to articulate:

- What a system does

- What data it uses

- What guardrails exist

- How errors are detected and remediated

Continuous, automated audit logging is the operational mechanism. Both boards and regulators will expect to see it on demand. Trussed AI's governance platform generates this evidence automatically, logging policy evaluation results, model version, timestamp, and data lineage as a byproduct of every governed interaction, with no manual documentation burden on compliance teams.
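As a rough illustration of what such a log entry might contain, the sketch below appends records carrying the fields named above and chains them with SHA-256 hashes so later tampering is detectable. The hash chain is an assumption added here as one common tamper-evidence technique; it is not a description of how Trussed AI or any specific product stores its logs, and the function and field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder previous-hash for the first record


def _digest(body: dict) -> str:
    """Stable SHA-256 over the record body (keys sorted for determinism)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_record(log, *, model_version, policy_results, data_lineage, timestamp=None):
    """Append one audit record, linking it to the previous record's hash."""
    body = {
        "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "policy_results": policy_results,
        "data_lineage": data_lineage,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    record = {**body, "hash": _digest(body)}
    log.append(record)
    return record


def verify(log) -> bool:
    """Recompute every hash and check the chain links."""
    prev_hash = GENESIS
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True


log = []
append_record(log, model_version="credit-model-1.4.2",
              policy_results={"pii_check": "pass", "fair_lending_check": "pass"},
              data_lineage=["applications_2024q4"])
append_record(log, model_version="credit-model-1.4.2",
              policy_results={"pii_check": "fail"},
              data_lineage=["applications_2025q1"])
chain_ok = verify(log)
```

Because each record embeds the hash of the one before it, editing or deleting an earlier entry causes `verify` to fail for the rest of the chain.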

Industry-Specific Compliance Considerations

Healthcare

HIPAA-aligned architecture requires strict controls on AI systems handling PHI. That means enforcing data access controls, preventing unauthorized data leakage, and maintaining complete audit trails for every AI interaction involving protected health information.

The FDA's SaMD framework for diagnostic AI tools requires pre-market review and continuous post-market monitoring. Clinical AI decisions must be explainable to medical professionals and auditors. Physicians remain professionally responsible for AI-assisted clinical outcomes regardless of automation, making documentation of every AI-assisted decision legally critical.

Financial Services

Financial services firms face overlapping regulatory pressure from multiple directions:

- OCC and Federal Reserve: Require model risk management frameworks covering validation, ongoing monitoring, and governance documentation

- CFPB: Enforces algorithmic fairness standards. Credit decisions must be fair, explainable, and compliant with ECOA and the Fair Housing Act

- SEC: Scrutinizes AI-driven trading and disclosure systems, demanding model lineage documentation that traces how customer outcomes were determined

Across all three, organizations must maintain complete records of model versions, data inputs, policy evaluations, and decision outputs.

Higher Education

FERPA requirements govern student data used in AI systems. Universities must implement controls that prevent unauthorized access to educational records and ensure AI systems comply with student privacy protections.

Beyond student records, IRB and export control constraints apply to research AI. Bias testing obligations arise when AI is used for candidate screening, admissions, or financial aid decisions, and institutions must demonstrate that those systems do not produce discriminatory outcomes against protected groups.

From Written Policy to Runtime Enforcement

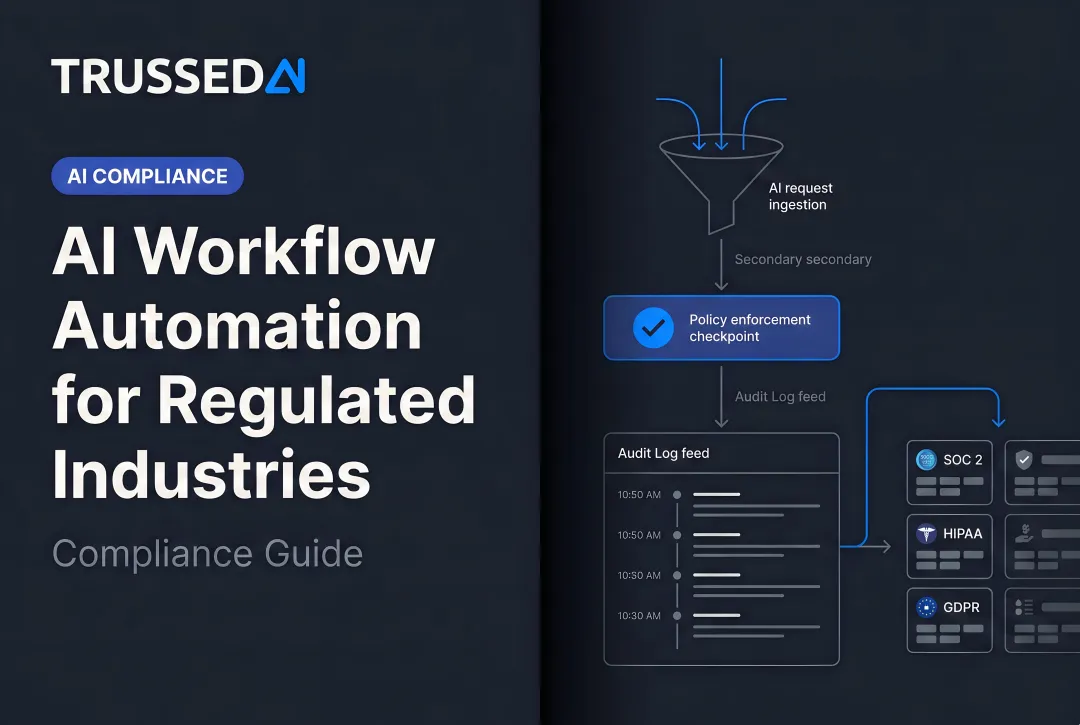

The Enforcement Gap

Most organizations have documented AI policies. But policies sitting in governance documents are not the same as policies being enforced at the moment an AI model or agent makes a call. The difference between paper compliance and operational compliance is the moment of enforcement, at inference time rather than audit time.

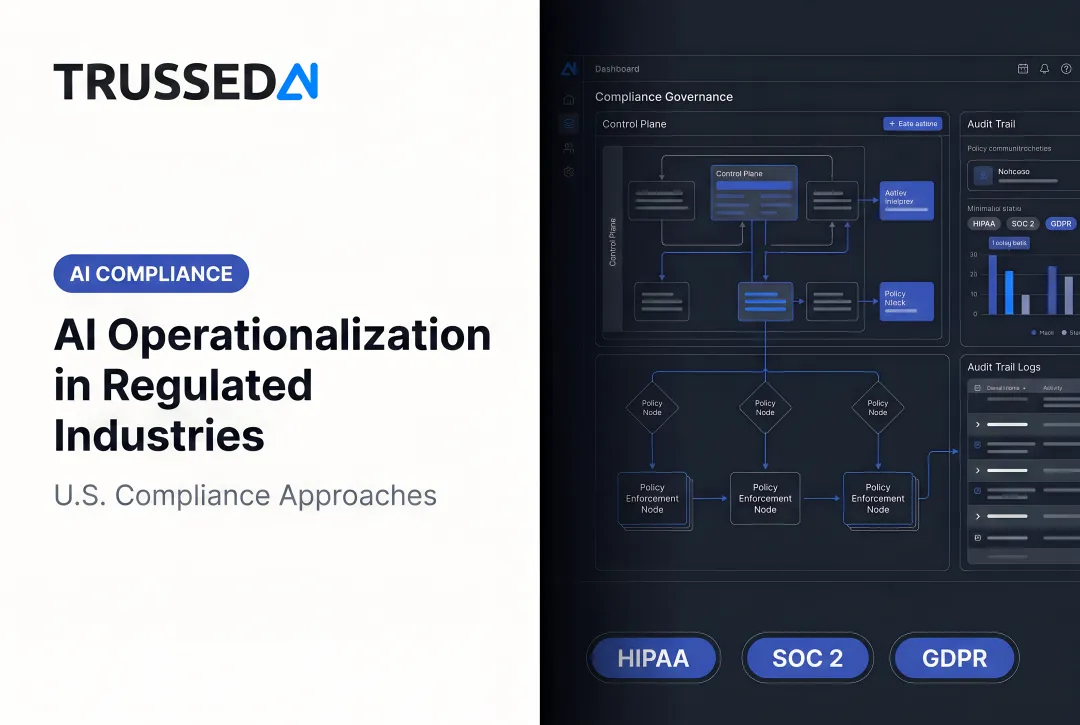

What Runtime Enforcement Looks Like

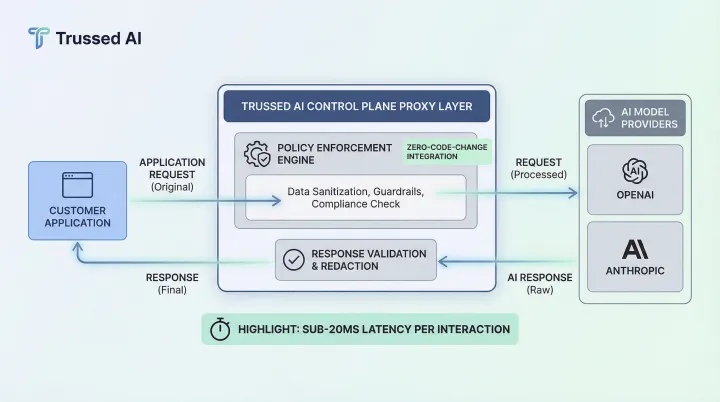

Runtime enforcement means every interaction is evaluated against defined rules in real time. Policies evaluate model interactions, agent actions, and data access requests at sub-20ms latency, without degrading application performance.
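The general pattern can be sketched as a function that runs every policy before a request reaches the model and blocks the call if any policy objects. This is a simplified illustration, not any vendor's implementation: the `Request` type, the two example policies, the approved-role set, and the `call_model` stub are all hypothetical, and the SSN regex is a deliberately naive stand-in for real sensitive-data detection.

```python
import re
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class Request:
    user_role: str
    prompt: str


# A policy returns None to allow, or a human-readable reason to deny.
Policy = Callable[[Request], Optional[str]]

SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
APPROVED_ROLES = {"underwriter", "analyst"}  # hypothetical access list


def role_allowed(req: Request) -> Optional[str]:
    if req.user_role in APPROVED_ROLES:
        return None
    return f"role '{req.user_role}' is not approved"


def no_ssn_in_prompt(req: Request) -> Optional[str]:
    if SSN_PATTERN.search(req.prompt):
        return "prompt contains an SSN-like pattern"
    return None


def enforce(req: Request, policies: List[Policy], call_model: Callable[[str], str]) -> dict:
    """Evaluate all policies first; forward to the model only if none deny."""
    violations = [reason for policy in policies if (reason := policy(req)) is not None]
    if violations:
        return {"allowed": False, "violations": violations, "response": None}
    return {"allowed": True, "violations": [], "response": call_model(req.prompt)}


def call_model(prompt: str) -> str:
    """Stub standing in for a real model call."""
    return f"echo: {prompt}"


policies = [role_allowed, no_ssn_in_prompt]
ok = enforce(Request("analyst", "Summarize Q3 default rates"), policies, call_model)
blocked = enforce(Request("intern", "Look up SSN 123-45-6789"), policies, call_model)
```

Collecting every violation instead of stopping at the first one gives the audit record a complete picture of why a request was blocked.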

Trussed AI functions as an enterprise AI control plane, enforcing governance across AI apps, agents, and developer tools. It integrates as a proxy requiring zero changes to application code: developers swap their API endpoint to route through Trussed, which intercepts requests, applies policies, and forwards interactions to underlying models from providers including OpenAI and Anthropic before returning responses.

The platform is built to provide the security posture required for regulated deployments across AWS, Google Cloud, and Azure environments. It is aligned to NIST AI RMF and other enterprise governance frameworks.

Governance Evidence as a Byproduct

Runtime enforcement does more than block non-compliant outputs. It automatically generates an audit record for every governed interaction. This eliminates the manual labor of compliance documentation and produces continuous, timestamped evidence trails that regulators and auditors increasingly require.

Organizations move from point-in-time audits to continuous assurance, where the platform generates governance evidence in real time rather than reconstructing it after the fact.

Common AI Governance Mistakes in Regulated Industries

Mistake 1: Treating Governance as an Audit Preparation Exercise

Organizations that address governance only when a regulatory examination is approaching will lack the continuous monitoring, drift detection, and incident documentation that modern regulators expect. Governance is an operational function, not a periodic review.

75% of businesses observed AI performance declines in 2024, with over half reporting direct revenue losses stemming from AI errors and unnoticed statistical misalignment. Reactive governance cannot catch these issues before they create compliance violations or operational failures.

Mistake 2: Failing to Govern Agentic AI with the Same Rigor as Predictive Models

Agentic AI systems, those capable of autonomous multi-step actions, introduce new risks because they can take consequential actions without direct human initiation. The real risk isn't what the model outputs. It's what the agent does next, across tool calls, data access requests, and workflow triggers that no output filter ever sees.

The same inventory, oversight, and audit-trail requirements that apply to predictive models must extend to agents, orchestration workflows, and any AI system that can act on behalf of users. Trussed AI addresses this by enforcing policy at runtime, evaluating every agent action before it executes, not after.
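A minimal sketch of pre-execution gating for agent tool calls is shown below. It assumes a hypothetical tool registry and a single illustrative rule (a refund limit); the names `TOOLS`, `check_action`, `execute`, and `REFUND_LIMIT` are invented for this example and do not describe any particular product's API.

```python
from typing import Any, Callable, Dict, List


class ActionDenied(Exception):
    """Raised when a proposed agent action violates policy."""


def lookup_policy(policy_id: str) -> str:
    return f"policy {policy_id}: active"


def issue_refund(amount: float) -> str:
    return f"refunded {amount:.2f}"


# Hypothetical registry of tools the agent is allowed to call.
TOOLS: Dict[str, Callable[..., str]] = {
    "lookup_policy": lookup_policy,
    "issue_refund": issue_refund,
}
REFUND_LIMIT = 100.0  # illustrative threshold above which a human must approve


def check_action(tool: str, args: Dict[str, Any]) -> None:
    """Deny by default: unknown tools and over-limit refunds are rejected."""
    if tool not in TOOLS:
        raise ActionDenied(f"unknown tool: {tool}")
    if tool == "issue_refund" and args.get("amount", 0) > REFUND_LIMIT:
        raise ActionDenied("refund exceeds limit; requires human approval")


def execute(tool: str, args: Dict[str, Any], audit: List[dict]) -> str:
    """Check the action, record the decision, and only then run the tool."""
    try:
        check_action(tool, args)
    except ActionDenied as exc:
        audit.append({"tool": tool, "args": args, "allowed": False, "reason": str(exc)})
        raise
    result = TOOLS[tool](**args)
    audit.append({"tool": tool, "args": args, "allowed": True, "reason": None})
    return result


audit_log: List[dict] = []
small = execute("issue_refund", {"amount": 25.0}, audit_log)
try:
    execute("issue_refund", {"amount": 500.0}, audit_log)
except ActionDenied:
    pass  # the denial is already recorded in audit_log
```

The check runs before the tool function is ever invoked, so a denied action leaves an audit entry but has no side effects.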

Mistake 3: Siloing AI Governance from Existing Compliance Programs

Rather than building a separate AI compliance function from scratch, effective governance integrates AI-specific controls into existing anti-discrimination policies, vendor management frameworks, and risk management processes. This reduces duplication, eliminates policy conflicts, and keeps governance manageable as AI deployments scale.

Frequently Asked Questions

What are the six pillars of AI governance?

The commonly cited pillars are accountability, transparency, fairness/non-discrimination, security and privacy, safety and reliability, and continuous oversight/monitoring. Specific frameworks like NIST AI RMF and the EU AI Act organize these slightly differently but cover the same foundational dimensions.

What regulations currently govern AI use in healthcare and financial services?

Healthcare AI is governed by HIPAA and FDA SaMD frameworks. Financial services AI is governed by ECOA, the Fair Housing Act, CFPB authority, OCC model risk guidance, and SEC rules. These apply to AI decisions today without new legislation being required.

What is the difference between AI governance and AI compliance?

Compliance is the outcome, meeting legal and regulatory requirements. Governance is the operational structure, including policies, processes, accountability roles, and monitoring systems, that makes compliance achievable and consistently demonstrable.

How do regulated industries enforce AI governance policies at runtime?

Runtime enforcement requires a control plane or proxy layer that evaluates every model call, agent action, and data access request against defined policies at the moment of execution. Audit evidence is generated automatically as a byproduct of each governed interaction, with no after-the-fact documentation needed.

What happens if a regulated company deploys AI without adequate governance?

Consequences include enforcement actions, regulatory penalties, litigation exposure, and reputational damage. Regulators have already brought actions against companies whose AI systems produced discriminatory or non-compliant outcomes. Responsibility stays with the organization that deployed the system, regardless of how the decision was generated.

How long does it take to implement an AI governance framework in a regulated enterprise?

Foundational elements, including AI inventory, risk classification, and policy documentation, can be established in weeks, with runtime enforcement operational in as little as four weeks with the right tooling. Full alignment across legal, IT, and compliance functions takes longer and develops incrementally as the program matures.