Introduction

Healthcare AI adoption is outpacing the governance infrastructure built to oversee it. As of 2024, 71% of U.S. hospitals use predictive AI integrated into their EHRs, yet only 59% have a formal, documented approval process before AI implementation. This gap creates exposure across three critical regulatory domains.

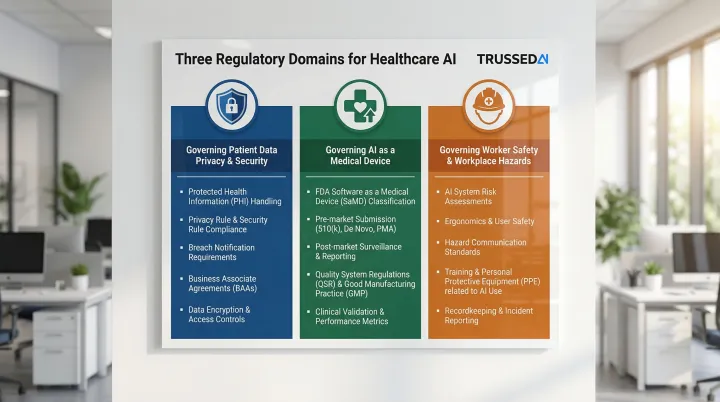

AI systems in healthcare inherit every obligation that already applies to the clinicians they augment, plus new ones specific to how they operate. Three federal frameworks apply, each addressing a different dimension of risk:

- HIPAA governs how AI handles patient data

- FDA determines whether AI qualifies as a medical device requiring premarket review

- OSHA addresses how AI systems affect healthcare worker safety

Given the pace of deployment and the compliance gaps already forming, this guide breaks down what each framework specifically requires of AI, where organizations are getting it wrong, and how to build governance that holds up as regulations continue to evolve.

TLDR

- HIPAA applies fully to any AI system accessing, processing, or transmitting ePHI. The same controls that govern clinicians govern AI agents

- The FDA distinguishes between AI that informs clinician judgment and AI that replaces it, with the latter potentially requiring premarket device review

- OSHA's workplace safety obligations extend to AI systems affecting healthcare worker environments, including robotic tools and alert-heavy workflows

- The biggest compliance risk is organizational: AI deployed without clear governance ownership, access controls, or audit infrastructure

- Proactive, real-time governance closes the gaps that static compliance frameworks cannot address

HIPAA Compliance Requirements for Healthcare AI

HIPAA Applies Without Exception to AI Systems

HIPAA's Privacy Rule, Security Rule, and Minimum Necessary standard apply without modification to any AI system accessing electronic protected health information (ePHI). The HHS Office for Civil Rights (OCR) explicitly states that ePHI in AI training data, prediction models, and algorithm data maintained by regulated entities is protected by HIPAA Rules and all applicable standards. AI is not a carve-out.

Recent OCR enforcement actions show how the agency treats gaps in the risk analysis that every system holding ePHI, AI included, must undergo. In 2024, OCR settled with MMG Fusion, LLC for a breach affecting 15 million individuals, citing failure to conduct accurate risk analysis on systems holding ePHI. Similarly, Top of the World Ranch paid $103,000 for failing to assess risks to ePHI confidentiality, integrity, and availability.

Business Associate Agreements: The Deployment Gate

Any AI vendor creating, receiving, maintaining, or transmitting PHI on a covered entity's behalf must execute a HIPAA-compliant Business Associate Agreement (BAA) before any PHI flows to their systems. BAA verification must function as a deployment gate, not a post-deployment checklist item.

Vendors unwilling to execute BAAs cannot legally be used in PHI-touching workflows. In practice, this means:

- AI tools embedded in EHR platforms require executed BAAs before go-live

- Scheduling and administrative workflow tools processing PHI carry the same obligation

- Tools already running without executed BAAs, as many currently do, create direct liability exposure until an agreement is in place

Minimum Necessary Rule at the Operation Level

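HIPAA's Minimum Necessary standard (45 CFR §164.502(b)) requires reasonable efforts to limit PHI to the minimum necessary for the intended purpose. For AI agents, meeting that standard in practice means technical restriction, not just policy restriction, to only the PHI each agent's specific function requires.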

Enforcement mechanism: Attribute-based access control (ABAC) is one way to enforce this for AI agents, restricting access based on specific attributes of the request, the agent, and the data. Broad access to EHR fields violates minimum necessary even when overall system permissions appear correct. Policy statements alone cannot stop an agent from reading fields it does not need; technical enforcement closes that gap.
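A minimal sketch of ABAC for AI agents, in Python. It assumes a hypothetical policy table mapping each agent function to the PHI fields it may read; the function names, field names, and the `evaluate` helper are illustrative, not any EHR's or vendor's API. The decision uses three attributes (the agent's deployed function, the human who authorized the workflow, and the fields requested) and denies by default.

```python
from dataclasses import dataclass

# Hypothetical policy: PHI fields each agent function may read.
# Function and field names are illustrative, not a real EHR schema.
ALLOWED_FIELDS: dict[str, frozenset[str]] = {
    "appointment_scheduling": frozenset({"patient_id", "name", "phone", "preferred_times"}),
    "sepsis_risk_scoring": frozenset({"patient_id", "vitals", "lab_results"}),
}


@dataclass(frozen=True)
class AccessRequest:
    agent_id: str                     # identity of the AI agent
    agent_function: str               # agent attribute: what it is deployed to do
    authorized_by: str                # request attribute: accountable human
    requested_fields: frozenset[str]  # data attribute: PHI fields requested


def evaluate(request: AccessRequest) -> tuple[bool, frozenset[str]]:
    """Return (allowed, out_of_scope_fields); deny by default."""
    if not request.authorized_by:
        # No accountable human behind the workflow: deny everything.
        return False, request.requested_fields
    # Unknown functions get an empty allow-list, so every field is out of scope.
    allowed = ALLOWED_FIELDS.get(request.agent_function, frozenset())
    out_of_scope = request.requested_fields - allowed
    return not out_of_scope, out_of_scope


if __name__ == "__main__":
    req = AccessRequest(
        agent_id="sched-bot-01",
        agent_function="appointment_scheduling",
        authorized_by="clinic-manager-42",
        requested_fields=frozenset({"name", "phone", "diagnosis_codes"}),
    )
    print(evaluate(req))  # denied: diagnosis_codes is outside the scheduling scope
```

Rejecting the whole request when any field is out of scope, rather than silently trimming it, surfaces overbroad integrations during testing. In a real deployment this check would more likely live in a proxy or in the EHR's own authorization layer than in application code.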

Audit Controls Standard: Operation-Level Logging Required

45 CFR §164.312(b) requires organizations to implement mechanisms that record and examine activity in information systems containing or using ePHI. Session logs showing an AI tool was used are insufficient.

For AI systems, meeting this standard means operation-level logs capturing:

- Which agent accessed which PHI

- What action the agent performed

- Who authorized the workflow

- When the access occurred

Missing audit records constitute a HIPAA violation independent of any underlying incident. Trussed AI generates this audit evidence automatically as a byproduct of every governed AI interaction, reducing manual governance workload rather than adding to it.
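The logging requirement above can be sketched as a hash-chained, append-only log. This Python sketch is illustrative and does not describe how Trussed AI or any other product works. Each entry records the four operation-level fields plus the hash of the previous entry, so editing an entry, or removing one from the middle of the chain, breaks verification. Hash chaining makes tampering detectable, not impossible. Production systems typically also write to append-only or write-once storage.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Append-only, hash-chained log of AI agent operations on PHI."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the stored hash itself, in a stable key order.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def record(self, agent_id: str, phi_fields: list[str], action: str,
               authorized_by: str, timestamp: Optional[str] = None) -> dict:
        entry = {
            "agent_id": agent_id,              # which agent
            "phi_fields": sorted(phi_fields),  # which PHI
            "action": action,                  # what it did
            "authorized_by": authorized_by,    # who authorized the workflow
            "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),  # when
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; False if any entry was altered or removed mid-chain."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True
```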

Encryption and Authentication Requirements

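ePHI should be encrypted in transit and at rest. Under the current Security Rule, encryption is an addressable implementation specification (45 CFR §164.312(a)(2)(iv) and (e)(2)(ii)). Organizations must implement it where reasonable and appropriate, or document why it is not and what equivalent alternative they use. HHS has proposed making encryption mandatory. AI agents must also authenticate under the person or entity authentication standard (45 CFR §164.312(d)). Linking each agent's identity to an accountable human authorizer is what makes those access records traceable.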

Under 45 CFR §§164.400–414, covered entities must notify affected individuals, HHS OCR, and in some cases the media when AI systems cause a breach of unsecured PHI. Whether the breach was caused by a person or an automated system does not change the definition of a breach or the notification obligation.
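The notification duties behind that rule reduce to a few thresholds in 45 CFR §§164.404–164.408:

- Affected individuals must be notified within 60 calendar days of discovery.
- Prominent media outlets must be notified when more than 500 residents of a State or jurisdiction are affected.
- HHS must be notified within 60 days when 500 or more individuals are affected. Smaller breaches go into an annual report due within 60 days after the end of the calendar year.

The Python sketch below encodes only those thresholds. The 60-day figures are outer limits, since notice is due without unreasonable delay. The sketch ignores law enforcement delays and state breach laws.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Notice:
    recipient: str
    deadline: date  # outer limit; notice is due without unreasonable delay


def notification_plan(discovered: date, residents_by_state: dict[str, int]) -> list[Notice]:
    """Who must be notified of a breach of unsecured PHI, and by when (simplified)."""
    within_60 = discovered + timedelta(days=60)
    plan = [Notice("affected individuals", within_60)]            # §164.404
    for state, count in sorted(residents_by_state.items()):
        if count > 500:                                            # §164.406
            plan.append(Notice(f"prominent media outlets in {state}", within_60))
    if sum(residents_by_state.values()) >= 500:                    # §164.408
        plan.append(Notice("HHS OCR", within_60))
    else:
        # Breaches under 500 go into the annual report for the discovery year.
        year_end = date(discovered.year, 12, 31)
        plan.append(Notice("HHS OCR (annual breach report)", year_end + timedelta(days=60)))
    return plan
```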

FDA Regulatory Framework for Healthcare AI

The Jurisdictional Trigger: Software as a Medical Device

Under Section 201(h) of the FD&C Act (21 U.S.C. 321(h)), software intended for use in the diagnosis, cure, mitigation, treatment, or prevention of disease qualifies as a medical device. Administrative AI tools (scheduling, billing) generally fall outside FDA jurisdiction unless their intended use crosses into clinical territory.

Critical distinction: Software as a Medical Device (SaMD) carries regulatory obligations that administrative tools do not. Misclassification creates two kinds of exposure: FDA enforcement for marketing uncleared or unapproved device software, and patient safety liability.

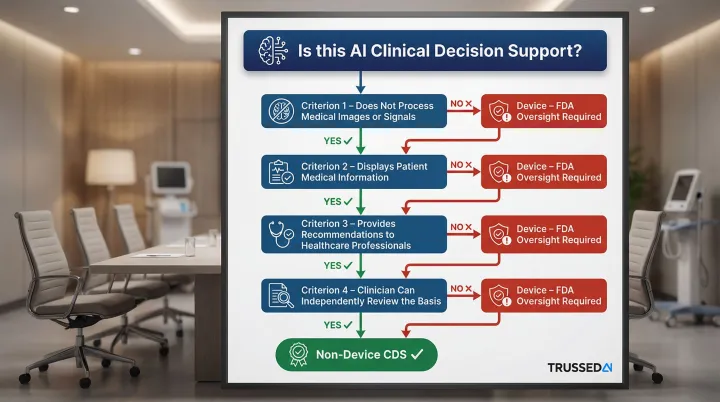

The CDS Line That Determines Regulatory Burden

The FDA's 2022 Clinical Decision Support guidance distinguishes non-device CDS from device software based on four criteria. All four must be satisfied for software to qualify as non-device CDS:

- Does not acquire, process, or analyze medical images or signals from diagnostic devices

- Displays, analyzes, or prints medical information about a patient

- Provides recommendations to healthcare professionals about prevention, diagnosis, or treatment

- Enables healthcare professionals to independently review the basis for recommendations, so they do not rely primarily on the software for clinical decisions
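The four criteria work as a conjunction: failing any one removes the non-device CDS exclusion. A Python sketch of that screen follows. The `SoftwareFunction` fields are hypothetical yes/no answers a reviewer would supply. A failed criterion means the function needs a full device analysis; it does not mean the function is automatically a regulated device.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SoftwareFunction:
    # Reviewer-supplied answers, one per criterion (field names are illustrative).
    analyzes_images_or_signals: bool         # criterion 1 (must be False)
    displays_or_analyzes_medical_info: bool  # criterion 2 (must be True)
    recommends_to_hcp: bool                  # criterion 3 (must be True)
    basis_independently_reviewable: bool     # criterion 4 (must be True)


def failed_cds_criteria(fn: SoftwareFunction) -> list[str]:
    """List every non-device CDS criterion the function fails."""
    failures = []
    if fn.analyzes_images_or_signals:
        failures.append("1: acquires, processes, or analyzes images or signals")
    if not fn.displays_or_analyzes_medical_info:
        failures.append("2: does not display, analyze, or print patient medical information")
    if not fn.recommends_to_hcp:
        failures.append("3: does not provide recommendations to a healthcare professional")
    if not fn.basis_independently_reviewable:
        failures.append("4: clinicians cannot independently review the basis")
    return failures


def is_non_device_cds(fn: SoftwareFunction) -> bool:
    return not failed_cds_criteria(fn)  # all four criteria must be satisfied
```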

Why this matters: AI that makes clinical decisions autonomously, or in ways clinicians cannot meaningfully verify, crosses into device territory. Calling such a tool "clinical decision support" does not exempt it from FDA oversight, and that distinction carries real enforcement consequences.

Premarket Authorization Pathways

Most AI-enabled devices clear through 510(k) by demonstrating substantial equivalence to a predicate device. The FDA's AI-Enabled Medical Devices List contains 1,430 entries as of March 2026.

Risk classification determines which pathway applies:

- 510(k) clearance: For devices substantially equivalent to existing devices (Class I/II)

- De Novo classification: For novel low-to-moderate risk devices with no predicate

- Premarket Approval (PMA): For high-risk Class III devices requiring clinical data
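The pathway logic in this list can be expressed as a short decision function. This Python sketch is a deliberate simplification. It assumes the device's risk class, or for a novel device the class it would warrant, has already been determined. It ignores 510(k) exemptions, which cover most Class I and some Class II devices, and other edge cases that regulatory counsel would weigh.

```python
from enum import Enum


class Pathway(Enum):
    PREMARKET_NOTIFICATION = "510(k) clearance"
    DE_NOVO = "De Novo classification"
    PMA = "Premarket Approval (PMA)"


def likely_pathway(risk_class: int, has_predicate: bool) -> Pathway:
    """Simplified mapping from risk class and predicate availability to a pathway.

    Ignores 510(k) exemptions and other edge cases.
    """
    if risk_class not in (1, 2, 3):
        raise ValueError("risk_class must be 1, 2, or 3")
    if risk_class == 3:
        return Pathway.PMA                     # high risk: clinical evidence via PMA
    if has_predicate:
        return Pathway.PREMARKET_NOTIFICATION  # substantial equivalence to a predicate
    return Pathway.DE_NOVO                     # novel, low-to-moderate risk, no predicate
```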

Predetermined Change Control Plans: Enabling Iterative AI Updates

Authorized AI devices don't stay static: models are retrained, drift, and improve after deployment. Predetermined Change Control Plans (PCCPs) address this directly. They allow manufacturers to implement model updates within a framework FDA has already authorized, without a new marketing submission for each update. This is especially relevant for adaptive AI systems that learn post-market.

A valid PCCP must include three elements:

- Description of Modifications: Specifications for characteristics and performance of planned changes

- Modification Protocol: Verification and validation testing activities with acceptance criteria

- Impact Assessment: Benefits, risks, and risk mitigation plan for implementing changes
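A PCCP's Description of Modifications and Modification Protocol lend themselves to an automated gate on each model update. The Python sketch below assumes a hypothetical plan that permits two modification types and sets minimum sensitivity and specificity. The names and thresholds are illustrative. A real PCCP specifies its own metrics, test datasets, and procedures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AcceptanceCriterion:
    metric: str
    minimum: float  # pre-specified in the Modification Protocol


# Hypothetical plan contents, for illustration only.
PLANNED_MODIFICATIONS = frozenset({"retrain_same_architecture", "recalibrate_threshold"})
CRITERIA = (
    AcceptanceCriterion("sensitivity", 0.85),
    AcceptanceCriterion("specificity", 0.80),
)


def evaluate_update(modification: str, results: dict[str, float],
                    criteria: tuple[AcceptanceCriterion, ...] = CRITERIA) -> list[str]:
    """Return reasons the update falls outside the plan; empty means within it."""
    failures = []
    if modification not in PLANNED_MODIFICATIONS:
        failures.append(f"modification '{modification}' is not described in the PCCP")
    for c in criteria:
        value = results.get(c.metric)
        if value is None:
            failures.append(f"{c.metric}: not measured")
        elif value < c.minimum:
            failures.append(f"{c.metric}: {value:.2f} below {c.minimum:.2f}")
    return failures
```

An empty result means the update stays inside the authorized plan. Any failure means the change needs a regulatory assessment before deployment rather than an automatic rollout.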

Postmarket Obligations and Cybersecurity Requirements

Manufacturers must monitor real-world performance, document corrections, and report adverse events through the Medical Device Reporting (MDR) program under 21 CFR Part 803.

Cybersecurity requirements: The FDA's June 2025 final cybersecurity guidance describes how manufacturers of "cyber devices" are expected to meet Section 524B of the FD&C Act, including:

- Software Bill of Materials (SBOM)

- Secure-by-design practices including threat modeling

- Vulnerability management protocols

OSHA and Healthcare AI: The Often-Overlooked Compliance Layer

Where OSHA Authority Intersects with AI Deployment

OSHA's mandate covers workplace safety, and AI systems that affect the physical or cognitive work environment of healthcare workers fall within scope.

Examples of OSHA-relevant AI systems:

- Robotic surgical assistants

- AI-driven patient handling systems

- Automated medication dispensing

- AI tools that significantly increase cognitive load or alert fatigue among clinical staff

General Duty Clause: Recognized Hazards from AI

Once you've identified which AI systems fall under OSHA's scope, the next question is liability. OSH Act Section 5(a)(1) requires employers to provide a workplace free from recognized hazards that are causing or are likely to cause death or serious physical harm. AI-related risks create General Duty Clause exposure when the organization has not identified and mitigated them.

AI-induced workplace hazards:

- Clinical decision support override rates frequently exceed 90%, and an external validation of the Epic Sepsis Model found it missed 67% of sepsis cases while generating alerts for 18% of all hospitalized patients, a textbook alert fatigue scenario

- Robotic tools operating alongside workers without proper guarding or safety protocols create physical injury risk

- Autonomous systems that reduce human oversight in safety-critical tasks increase exposure when failures occur
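Documenting alert fatigue as a recognized hazard is easier when it is measured. This Python sketch computes the three figures cited above (alert rate, override rate, sensitivity) from counts an organization would pull from its own alert logs. The flag thresholds are illustrative assumptions, not OSHA or FDA limits. The example counts are hypothetical, chosen to mirror the proportions in the sepsis example.

```python
from dataclasses import dataclass


def _ratio(numerator: int, denominator: int) -> float:
    return numerator / denominator if denominator else 0.0


@dataclass(frozen=True)
class AlertStats:
    patients: int            # patients monitored in the period
    patients_alerted: int    # patients who triggered at least one alert
    alerts_fired: int
    alerts_overridden: int   # alerts dismissed or overridden by staff
    true_cases: int          # patients who actually had the condition
    true_cases_alerted: int  # of those, how many the tool flagged

    @property
    def alert_rate(self) -> float:
        return _ratio(self.patients_alerted, self.patients)

    @property
    def override_rate(self) -> float:
        return _ratio(self.alerts_overridden, self.alerts_fired)

    @property
    def sensitivity(self) -> float:
        return _ratio(self.true_cases_alerted, self.true_cases)


def alert_fatigue_flags(s: AlertStats, max_override: float = 0.90,
                        min_sensitivity: float = 0.50,
                        max_alert_rate: float = 0.10) -> list[str]:
    """Illustrative thresholds only; set real ones through the hazard assessment."""
    flags = []
    if s.override_rate > max_override:
        flags.append(f"override rate {s.override_rate:.0%} exceeds {max_override:.0%}")
    if s.sensitivity < min_sensitivity:
        flags.append(f"sensitivity {s.sensitivity:.0%} below {min_sensitivity:.0%}")
    if s.alert_rate > max_alert_rate:
        flags.append(f"alert rate {s.alert_rate:.0%} exceeds {max_alert_rate:.0%}")
    return flags


if __name__ == "__main__":
    # Hypothetical counts mirroring the proportions cited above (not real data).
    stats = AlertStats(patients=1000, patients_alerted=180, alerts_fired=400,
                       alerts_overridden=372, true_cases=60, true_cases_alerted=20)
    for flag in alert_fatigue_flags(stats):
        print(flag)
```

Tracking these figures over time gives the hazard assessment and incident records something concrete to cite.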

Practical OSHA Compliance Steps for AI Deployment

With those hazards identified, healthcare organizations need documented controls. Key steps include:

- Conduct hazard assessments that include AI system impacts on workers

- Document risk controls for identified AI-related hazards

- Train staff on AI tool limitations and safe interaction procedures

- Maintain incident records involving AI-related workplace harm

- Implement proper guarding for robotic systems per 29 CFR 1910.212

- Follow lockout/tagout procedures per 29 CFR 1910.147 when servicing robotic or other powered equipment controlled by AI

One point worth keeping clear as you map compliance requirements: OSHA protects workers, not patients, and covers physical and cognitive safety rather than data privacy. That distinction matters when deciding which regulatory framework applies to a given AI risk, and why HIPAA, FDA, and OSHA obligations often need to be managed in parallel rather than treated as a single compliance checklist.

The Critical AI Compliance Gaps Healthcare Organizations Are Creating Right Now

Four compliance gaps show up repeatedly across healthcare AI deployments, and each carries distinct regulatory exposure under HIPAA (enforced by OCR) or FDA device regulation.

AI Agents with Overbroad PHI Access

The most pervasive HIPAA gap is AI systems with broad access to EHRs or clinical data warehouses without operation-level controls restricting each agent to the minimum PHI its specific function requires.

Why policy statements alone fail: Technical enforcement is required to satisfy the minimum necessary standard. Organizations should audit which AI agents can access which PHI fields, whether that access is technically restricted by function, and whether audit logs capture operation-level detail.

Missing BAAs and Undocumented Vendor Relationships

Many AI tools embedded in EHR platforms, scheduling systems, and administrative workflows process PHI without executed BAAs. 35% of healthcare cyberattacks stem from third-party vendors, yet 40% of contracts are signed without security assessments.

The liability exposure: Sharing PHI with a vendor that has not signed a BAA is itself a HIPAA violation by the covered entity, and any breach at that vendor compounds the exposure. AI vendor discovery, which means identifying every tool touching PHI, must precede governance work.

Absent or Insufficient Audit Trails

Most healthcare organizations have logging for their AI tools but lack the operation-level specificity HIPAA's audit controls standard requires. Only 22% of hospitals said they are highly confident they could produce a complete AI audit trail within 30 days.

The critical difference:

- Insufficient: Log showing a tool was used

- Compliant: Tamper-evident record capturing agent identity, PHI fields accessed, action performed, human authorizer, and timestamp

This is the level of detail OCR investigators are likely to request. Trussed AI's governance platform generates this audit evidence automatically for every governed AI interaction, with no manual log compilation required.

CDS Misclassification: The Hidden FDA Exposure

Healthcare organizations frequently label AI tools as "clinical decision support" exempt from FDA device regulation. The problem: many of these tools are functionally routing patients, issuing treatment directives, or generating determinations that clinicians ratify rather than genuinely review.

How to identify misclassified tools:

- Does the AI provide specific directives rather than recommendations?

- Are clinicians unable to meaningfully review the basis for recommendations?

- Does the AI acquire or process medical images or signals?

- Do clinicians rely primarily on the AI's output to make decisions?

If the answer to any of these is yes, the tool may require FDA device classification. Remediation steps include:

- Conducting a formal intended use analysis

- Engaging regulatory counsel early

- Pursuing the appropriate premarket pathway (510(k), De Novo, or PMA) if warranted, before FDA scrutiny increases

Building a Healthcare AI Governance Framework That Scales

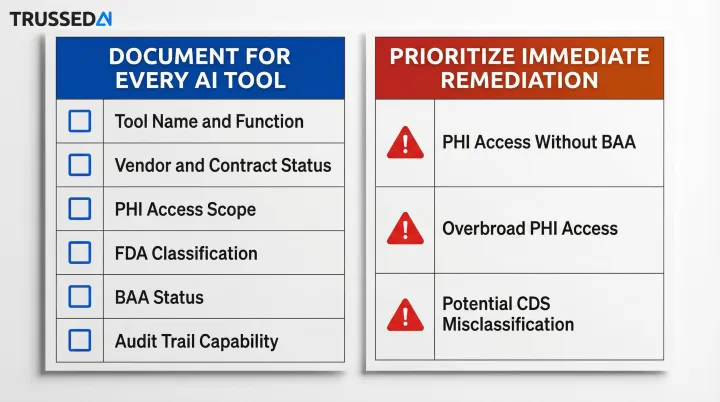

Start with an AI Inventory

Organizations consistently underestimate the AI already operating in their facilities, including tools embedded in EHR vendors, third-party scheduling and billing systems, and clinical decision support modules.

What to document:

- Tool name and function

- Vendor and contract status

- PHI access scope

- FDA classification (device vs. non-device)

- BAA status

- Current audit trail capability

Prioritize immediate remediation for:

- Tools with PHI access but no executed BAA

- Tools with broad PHI access lacking minimum necessary controls

- Tools potentially misclassified as non-device CDS
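The inventory fields and remediation priorities above map naturally onto a record type plus a triage function. This Python sketch is a hypothetical schema, not a standard. It assumes every tool is vendor-supplied, so PHI access implies a BAA is needed. It adds one illustrative field, `cds_criteria_documented`, to record whether a tool labeled non-device CDS has a written four-criteria analysis.

```python
from dataclasses import dataclass
from enum import Enum


class FdaStatus(Enum):
    DEVICE = "device"
    NON_DEVICE_CDS = "non-device CDS"
    ADMINISTRATIVE = "administrative (outside FDA scope)"
    UNREVIEWED = "not yet reviewed"


@dataclass
class AIToolRecord:
    name: str
    function: str
    vendor: str
    contract_status: str
    phi_fields: frozenset[str]     # PHI access scope; empty if none
    fda_status: FdaStatus
    baa_executed: bool
    operation_level_audit: bool    # current audit trail capability
    min_necessary_enforced: bool   # technical, not just policy, restriction
    cds_criteria_documented: bool  # hypothetical: written four-criteria analysis exists


def remediation_priorities(tool: AIToolRecord) -> list[str]:
    """Return the immediate-remediation issues for one inventory entry."""
    issues = []
    touches_phi = bool(tool.phi_fields)
    if touches_phi and not tool.baa_executed:
        issues.append("PHI access without an executed BAA")
    if touches_phi and not tool.min_necessary_enforced:
        issues.append("PHI access without technical minimum necessary controls")
    if tool.fda_status is FdaStatus.NON_DEVICE_CDS and not tool.cds_criteria_documented:
        issues.append("labeled non-device CDS without a documented four-criteria analysis")
    return issues
```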

Shift from Periodic Review to Continuous Enforcement

Static governance, including annual risk assessments, periodic vendor reviews, and point-in-time audits, cannot keep pace with AI systems that evolve continuously.

Governance for AI must include:

- Real-time policy enforcement across models and agents

- Automated compliance monitoring

- Audit evidence generated as a byproduct of operations rather than reconstructed retrospectively

Platforms like Trussed AI's enterprise AI control plane address this directly by enforcing governance at runtime across AI applications, agents, and developer tools. With drop-in proxy integration requiring no application code changes, regulated healthcare organizations can operationalize continuous governance in weeks rather than months.

Cross-Functional Governance Structure and Vendor Contract Standards

Governance structure should include:

- Executive ownership with accountability

- Clinical, legal, IT, and compliance representation

- Regular review cadence with escalation paths

- Clear decision-making authority

AI vendor contracts should require:

- Local validation evidence demonstrating performance in your patient population

- Bias assessment documentation

- Performance monitoring commitments with defined metrics

- Indemnification for AI system failures

- BAA execution before PHI access

- Audit rights and security assessment cooperation

Vendor contract standards and internal governance structures don't exist in isolation. They need to anticipate where regulation is heading.

Preparing for future requirements: The Joint Commission's 2025 Responsible Use of AI in Healthcare (RUAIH) guidance establishes elements for responsible AI use. Its voluntary certification program may signal that formal accreditation requirements will follow. Aligning your governance framework with RUAIH principles now positions your organization ahead of those standards.

Frequently Asked Questions

Does the FDA regulate AI in healthcare?

Yes, the FDA regulates AI that qualifies as a medical device under the FD&C Act, specifically AI intended for diagnosis, treatment, or prevention of disease. Administrative AI tools (billing, scheduling) generally fall outside FDA jurisdiction unless their intended use crosses into clinical decision-making.

Can AI be HIPAA compliant?

AI can be HIPAA compliant, but only when the same technical safeguards required of human clinicians are applied to AI systems, including access controls, minimum necessary enforcement, audit trails, encryption, and executed BAAs with AI vendors.

What's the difference between OSHA and HIPAA?

HIPAA protects patient health information privacy and security, while OSHA protects healthcare worker safety in the workplace. In the context of AI, HIPAA governs how AI handles patient data, while OSHA governs how AI systems affect the physical and cognitive safety of healthcare workers using or working alongside them.

What are the 4 pillars of ethical AI?

Frameworks vary, but commonly cited pillars are fairness (bias mitigation), transparency (explainability), accountability (human oversight), and safety/reliability (validated performance). These overlap with compliance requirements under HIPAA's Security Rule, FDA device safety standards, and the Joint Commission's RUAIH guidance.

What happens if an AI system causes a HIPAA breach in healthcare?

HIPAA breach notification requirements apply regardless of whether the breach was caused by a human or an AI system. The covered entity must notify affected individuals, HHS OCR, and in some cases the media. It remains subject to civil monetary penalties and, for knowing violations, criminal penalties.

How do healthcare organizations maintain audit trails for AI systems?

Healthcare organizations maintain compliant AI audit trails by logging operation-level detail for every interaction: agent identity, PHI fields accessed, actions performed, the human authorizer, and the time of access. These logs should feed into the organization's Security Information and Event Management (SIEM) system or an equivalent review process, because HIPAA also requires regular review of information system activity. General session logs showing an AI tool was accessed do not satisfy HIPAA's audit controls standard.