Introduction

Autonomous AI agents are making real-time decisions across enterprise systems: routing customer requests, approving transactions, accessing sensitive data, and triggering workflows. Yet most organizations lack the governance infrastructure to audit or control them. Only 21% of companies have a mature governance model for autonomous agents, even as 62% plan to deploy agentic AI within two years. In regulated industries like insurance, healthcare, and financial services, that gap creates direct compliance exposure - every automated decision needs a traceable record.

Standard AI monitoring tools were not built for agents that take actions, not just produce outputs. Traditional governance frameworks focus on model performance and periodic audits - reviewing what happened after the fact.

Agentic systems require runtime controls that evaluate and enforce policies before an agent accesses data, calls a tool, or triggers a workflow. Without execution-layer governance, organizations face unauthorized data access, compounding workflow failures, and regulatory inquiries they cannot answer. This article examines which platforms provide the audit trail and governance infrastructure needed to deploy autonomous agents safely in production.

TL;DR

- Audit trails must capture the full decision chain: agent identity, tools called, data accessed, reasoning steps, and outcomes

- Runtime policy enforcement matters more than post-hoc review: agentic systems need controls that act before damage occurs

- Key evaluation criteria: runtime policy enforcement, immutable audit logging, automated compliance reporting, identity and access controls, integration flexibility

- The five platforms covered range from dedicated AI governance control planes to observability-native and enterprise agentic platforms with embedded governance

- For regulated industries, prioritize NIST AI RMF and EU AI Act alignment, along with enterprise-grade security governance

What Audit Trails and Governance Mean for Autonomous Agents

Traditional AI governance focuses on model outputs and periodic audits: reviewing predictions, monitoring drift, and documenting model cards. Agentic AI governance must cover autonomous decision chains, tool-calling sequences, and inter-agent communication in real time. These are actions that may be irreversible before a human can review them: an agent authorizing a claim, routing sensitive patient data, or initiating a financial transaction.

Existing frameworks like NIST AI RMF, EU AI Act, and ISO/IEC 42001 require adaptation for agentic systems. None were written with autonomous agents explicitly in mind, yet compliance obligations still apply. That gap leaves organizations exposed to three agent-specific risks:

- Cascading failures: one agent's decision triggers unintended downstream actions across the chain

- Unauthorized tool access: agents operate beyond their intended scope without detection

- Logic opacity: decision paths crossing multiple models and external systems become impossible to reconstruct after the fact

A complete audit trail for agentic AI must include:

- Agent identity: Which agent or application initiated the action

- Data sources accessed: Every database, API, or system queried

- Tools called: External services, APIs, and functions invoked

- Reasoning steps: The decision logic or prompt chain that led to the action

- Authorization chain: Which policies were evaluated and who or what granted access

- Timestamps: Exact timestamps for every step, enabling sequence reconstruction

- Outcome: The result or action taken
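
The fields above can be sketched as a single record type. This is a minimal illustration, not any vendor's schema: the class name `AuditRecord`, its field names, and the sample values are all assumptions made for the example.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
import json


@dataclass(frozen=True)
class AuditRecord:
    """One step in an agent's decision chain (hypothetical schema)."""
    agent_id: str                         # which agent or application acted
    data_sources: tuple[str, ...]         # databases, APIs, or systems queried
    tools_called: tuple[str, ...]         # external services and functions invoked
    reasoning: str                        # decision logic or prompt-chain summary
    policies_evaluated: tuple[str, ...]   # authorization chain: policies checked
    granted_by: str                       # who or what granted access
    outcome: str                          # the result or action taken
    timestamp: str = field(               # UTC timestamp for sequence reconstruction
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        # Sorted keys give a stable serialization for storage or comparison.
        return json.dumps(asdict(self), sort_keys=True)


# Sample values are invented for illustration.
record = AuditRecord(
    agent_id="claims-agent-01",
    data_sources=("claims_db",),
    tools_called=("approve_claim",),
    reasoning="Claim amount under auto-approval threshold",
    policies_evaluated=("auto-approval-limit",),
    granted_by="policy-engine",
    outcome="approved",
)
print(record.to_json())
```

Marking the dataclass as `frozen` only prevents accidental reassignment of fields in application code; it does not make stored records immutable.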

Most enterprises are still catching up. Technical capabilities for autonomous agents are scaling faster than the oversight structures designed to govern them - which is precisely why the architecture of an audit trail matters before deployment, not after an incident.

Top Platforms with Audit Trails and Governance for Autonomous Agents

The platforms below were evaluated on governance depth, audit trail completeness, real-time enforcement capability, compliance support, and enterprise deployment readiness, not just general agentic capabilities.

Trussed AI

Trussed AI is an enterprise AI control plane built to deliver production-ready governance, security, and compliance infrastructure for organizations deploying AI agents, models, and workflows at scale. It is the only platform in this list built governance-first from the ground up. Trussed serves regulated industries including insurance, healthcare, and financial services.

What sets Trussed AI apart is its runtime enforcement model: governance policies are enforced inline on every governed interaction, and audit-ready evidence is generated automatically as a byproduct. Integration is through a drop-in proxy, with no changes required to existing application code.

Key differentiators include:

- Real-time policy enforcement across models and agents

- Less than 20ms latency

- Complete and immutable audit trails

- Continuous compliance monitoring that reduces manual governance workload by 50%

- Intelligent routing and failover to maintain enterprise SLAs

| Feature Category | Details |
|---|---|
| Key Governance Features | Real-time policy enforcement, automatic audit trail generation, continuous compliance monitoring, cost tracking and attribution, intelligent routing and failover |
| Integration Model | Drop-in proxy; zero changes to application code; works across models, agents, and developer tools; AWS and Google Cloud partnerships |
| Target Use Cases / Certifications | Regulated industries (insurance, healthcare, financial services, universities); operational workflows live in under 4 weeks |

IBM watsonx.governance

IBM watsonx.governance is an enterprise AI governance platform designed to monitor, explain, and enforce policies across AI models and workflows. It is widely deployed in large regulated enterprises and supports alignment with NIST AI RMF, EU AI Act, and sector-specific compliance frameworks.

The platform provides model risk management, automated AI Factsheets that log and monitor model facts throughout the lifecycle, and policy enforcement dashboards.

Where watsonx.governance stands out is the breadth of pre-built compliance frameworks, deep integration with IBM Cloud and third-party model providers like OpenAI and Amazon SageMaker, and a lifecycle governance approach. Note that its audit capabilities were built primarily for traditional AI models and may require additional configuration for fully autonomous multi-agent workflows. IBM documentation notes that FedRAMP-authorized services are available on AWS GovCloud for public sector deployments.

| Feature Category | Details |
|---|---|
| Key Governance Features | AI Factsheets for model documentation, bias and drift detection, preset thresholds for toxic language and hate speech, compliance accelerators for EU AI Act and ISO 42001, audit logging |
| Integration Model | IBM Cloud native, multi-cloud support, integrates with Watson Studio and third-party LLMs including OpenAI and Amazon SageMaker |
| Target Use Cases / Certifications | Finance (Banco do Brasil), public sector; FedRAMP-authorized on AWS GovCloud; compliance accelerators for NIST AI RMF, EU AI Act, ISO 42001 |

Dynatrace

Dynatrace is an observability and AIOps platform that introduced dedicated data governance and audit trail capabilities for AI services. It captures end-to-end AI event lineage from platforms like Amazon Bedrock, retaining AI-related events for up to 10 years in its Grail data lakehouse. The platform uses OpenTelemetry to collect real-time traces and metrics of AI workloads, logging every user interaction.

The core advantage here is governance woven directly into existing observability workflows. OpenPipeline on Grail routes AI events to custom retention buckets automatically without requiring teams to change tools. Dynatrace Segments enable instant filtering of audit data by region, environment, model, or custom criteria. This makes the platform particularly strong for organizations that already use Dynatrace for infrastructure monitoring and want to extend coverage to AI agents.

| Feature Category | Details |
|---|---|
| Key Governance Features | Automated AI event retention (up to 10 years), full AI user interaction logging, data lineage tracking via OpenTelemetry, compliance-aligned retention policies, Segments for filtered audit views |
| Integration Model | OpenTelemetry-based collection, Amazon Bedrock integration, OpenPipeline routing, extends existing Dynatrace deployments |
| Target Use Cases / Certifications | EU AI Act alignment, NIST AI RMF and ISO/IEC 42001:2023 support; CSA STAR 2 certification |

Kore.ai

Kore.ai is an enterprise agentic AI platform with a built-in AI governance and security module. It provides full lifecycle oversight with end-to-end decision tracing, audit logs, and real-time monitoring across customer experience, employee experience, and process automation use cases.

Gartner, Forrester, and Everest Group each recognize Kore.ai as a Leader in this space, and the platform is trusted by over 400 Fortune 2000 companies.

Unified visibility across the entire agent stack is what sets Kore.ai apart. Role-based access controls, configurable guardrails, policy enforcement, and a comprehensive governance dashboard are bundled into the same platform used to build and deploy agents, meaning governance is embedded rather than bolted on. Governance depth is strongest for agents built natively on Kore.ai and may vary for externally orchestrated agents.

| Feature Category | Details |
|---|---|
| Key Governance Features | Audit logs tracking user actions, logins, role changes, and model updates; Enterprise Guardrails Framework with real-time input/output scanning; RBAC; exportable logs for compliance reporting |
| Integration Model | 250+ plug-and-play enterprise integrations, model- and cloud-agnostic, no-code and pro-code deployment options |
| Target Use Cases / Certifications | Healthcare (major health insurer), banking (RCBC); HIPAA, GDPR, EU AI Act alignment |

Microsoft Copilot Studio (Power Platform)

Microsoft Copilot Studio, built on Power Platform, offers a governance framework for AI agents that extends Microsoft's existing low-code governance model. Used by over 230,000 organizations including 90% of the Fortune 500, it delivers agent governance through Power Platform Admin Center, DLP policies, managed environments, role-based access controls, and Microsoft Purview and Entra ID for identity and audit.

For organizations already running on Microsoft infrastructure, the governance advantage is ecosystem depth: audit logs, telemetry, and controls all flow through tools IT teams already operate. The zoned governance model (personal, collaboration, enterprise managed) provides a scalable framework for progressive agent autonomy.

Note that governance capabilities are strongest within the Microsoft ecosystem and less applicable for agents operating across non-Microsoft platforms.

| Feature Category | Details |
|---|---|
| Key Governance Features | Maker audit logs in Microsoft Purview, agent activity monitoring via Microsoft Sentinel, Data Loss Prevention policies, environment routing, zoned governance model, Entra ID integration |
| Integration Model | Native Microsoft 365 and Azure ecosystem, Power Platform Admin Center, Entra ID and Purview integration, on-premises and cloud deployment |
| Target Use Cases / Certifications | Microsoft Trust Center coverage; HIPAA BAA and FedRAMP via underlying workloads; 230,000 organizations including 90% of Fortune 500 |

Key Features to Evaluate in an Agent Governance Platform

Choosing the right governance platform means looking past marketing language and into architectural specifics. The features below determine whether a platform can actually enforce control at runtime, or just report on failures after they happen.

Real-Time Policy Enforcement vs. Post-Hoc Review

Platforms that only log decisions after the fact cannot prevent unauthorized actions or cascading failures. Governance must operate at agent runtime, not just in quarterly reviews. Runtime enforcement means policies are evaluated and applied at the moment an agent attempts an action: before a tool is called, before data is accessed, before a workflow triggers.

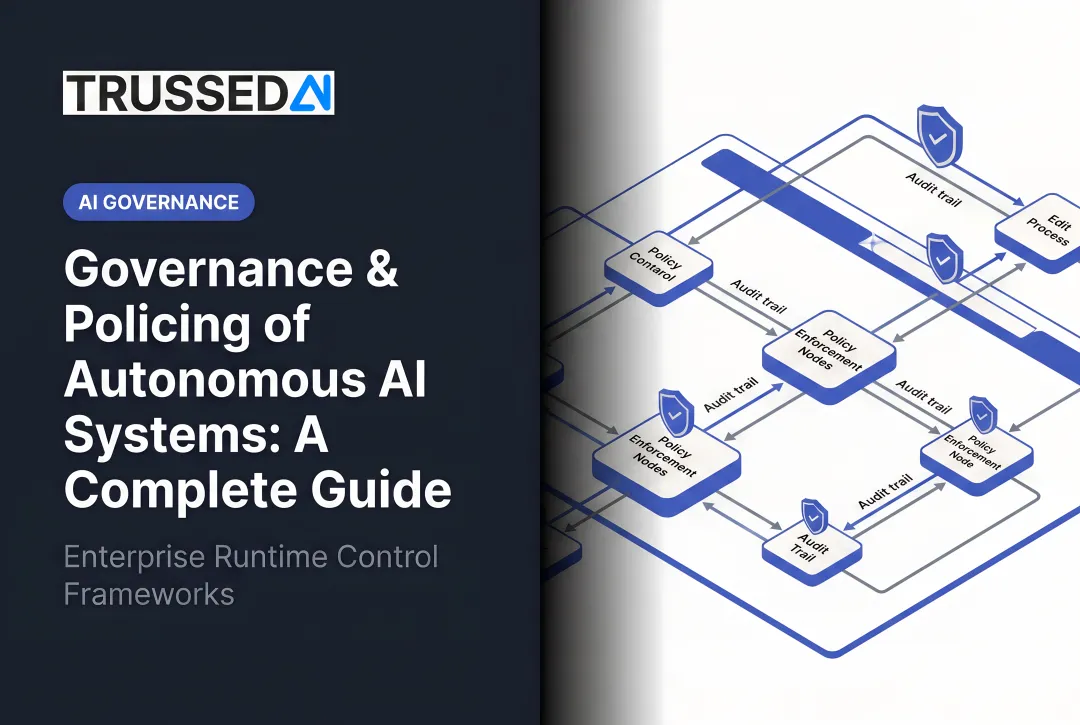

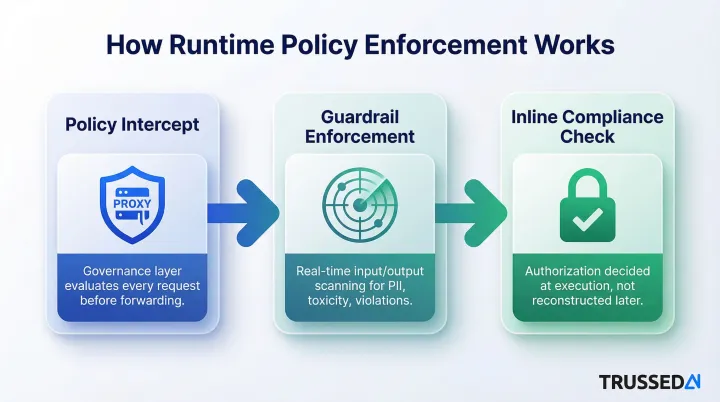

Technically, runtime enforcement looks like:

- Policy intercept: The governance layer sits in the execution path as a proxy, evaluating every request against policies before forwarding it

- Guardrail enforcement: Real-time scanning of inputs and outputs for toxic language, PII, or policy violations

- Inline compliance checks: Authorization decisions made at the point of execution, not reconstructed later
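
The intercept pattern described above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not how any listed platform implements it: `ActionRequest`, `ALLOWED_TOOLS`, `BLOCKED_TERMS`, `evaluate`, and `governed_call` are hypothetical names, and the substring check merely stands in for a real guardrail scanner.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ActionRequest:
    agent_id: str
    tool: str
    payload: str


# Hypothetical policy table: which tools each agent may call.
ALLOWED_TOOLS = {
    "claims-agent-01": {"lookup_policy", "approve_claim"},
    "support-agent-02": {"lookup_policy"},
}

# Stand-in for a real PII or toxicity scanner.
BLOCKED_TERMS = ("ssn:",)


def evaluate(request: ActionRequest) -> tuple[bool, str]:
    """Decide (allowed, reason) before anything is executed."""
    if request.tool not in ALLOWED_TOOLS.get(request.agent_id, set()):
        return False, "tool not permitted for this agent"
    if any(term in request.payload.lower() for term in BLOCKED_TERMS):
        return False, "payload failed guardrail scan"
    return True, "allowed"


def governed_call(request: ActionRequest, tool_fn: Callable[[str], str]) -> str:
    """Forward the request to the tool only if policy evaluation passes."""
    allowed, reason = evaluate(request)
    if not allowed:
        # The tool function is never invoked for a denied request.
        raise PermissionError(f"{request.agent_id} -> {request.tool}: {reason}")
    return tool_fn(request.payload)
```

The key design point is ordering: `evaluate` runs before `tool_fn`, so a denied request has no side effects to undo. Unknown agents fall through to an empty permission set and are denied by default.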

Audit Trail Completeness and Immutability

Complete audit trails capture the full decision chain - not just final outputs. A production-ready governance platform should log:

- Agent identity and tool calls: Which agent acted, which tools were invoked, and in what sequence

- Data access records: What data was read or written, with authorization source documented

- Reasoning steps and timestamps: Enough context to reconstruct why a decision was made

- Immutable storage: Records that cannot be altered after the fact, satisfying regulatory proof requirements

Long-term retention matters too: regulatory inquiries often surface months or years after an incident.
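
One common way to make a log tamper-evident is to chain each entry to the hash of the previous one. The sketch below illustrates that general technique only; it is not a description of any listed platform's storage layer. The names `HashChainedLog`, `GENESIS`, and `_digest` are assumptions, and a production system would also need write-once storage and protected hashes.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(prev_hash: str, entry: dict) -> str:
    # Hash the previous hash together with a stable serialization of the entry.
    body = json.dumps(entry, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


class HashChainedLog:
    """Append-only log in which each entry's hash covers all earlier entries."""

    def __init__(self) -> None:
        self._rows: list[tuple[dict, str]] = []

    def append(self, entry: dict) -> str:
        prev = self._rows[-1][1] if self._rows else GENESIS
        entry_hash = _digest(prev, entry)
        self._rows.append((dict(entry), entry_hash))  # store a shallow copy
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = GENESIS
        for entry, entry_hash in self._rows:
            if _digest(prev, entry) != entry_hash:
                return False
            prev = entry_hash
        return True
```

Hash chaining detects modification rather than preventing it, so it complements access controls and retention policies instead of replacing them.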

Identity and Least-Privilege Access Controls

Assign each autonomous agent a unique, verifiable identity with minimum necessary permissions tied to specific tasks. Agent identity management includes:

- Unique identifiers for each agent or application

- Role-based access control defining what tools and data each agent can access

- Authorization chains documenting which policies granted or denied access

- Scoped permissions that limit agents to their intended function

Permissions scoped for development environments routinely become production liabilities - agents that can access more than they need will eventually do so.
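
A least-privilege check along these lines might look like the following sketch. The role names, scope strings, and the `AgentIdentity` and `authorize` helpers are hypothetical, chosen only to show a default-deny lookup that also returns a record of the decision.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str  # unique identifier for the agent
    role: str      # role that determines its scopes


# Hypothetical role-to-scope mapping; each scope is "resource:action".
ROLE_SCOPES = {
    "claims-reader": frozenset({"claims_db:read"}),
    "claims-approver": frozenset(
        {"claims_db:read", "claims_db:write", "payments_api:call"}
    ),
}


def authorize(agent: AgentIdentity, resource: str, action: str) -> dict:
    """Return a decision record; unknown roles and scopes are denied."""
    scope = f"{resource}:{action}"
    granted = scope in ROLE_SCOPES.get(agent.role, frozenset())
    return {
        "agent_id": agent.agent_id,
        "role": agent.role,
        "scope": scope,
        "decision": "grant" if granted else "deny",
    }


reader = AgentIdentity("support-agent-02", "claims-reader")
print(authorize(reader, "claims_db", "read"))
print(authorize(reader, "payments_api", "call"))
```

Returning the decision as a record, rather than a bare boolean, gives the audit trail its authorization chain: the same object can be logged whether access was granted or denied.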

Compliance Reporting Automation

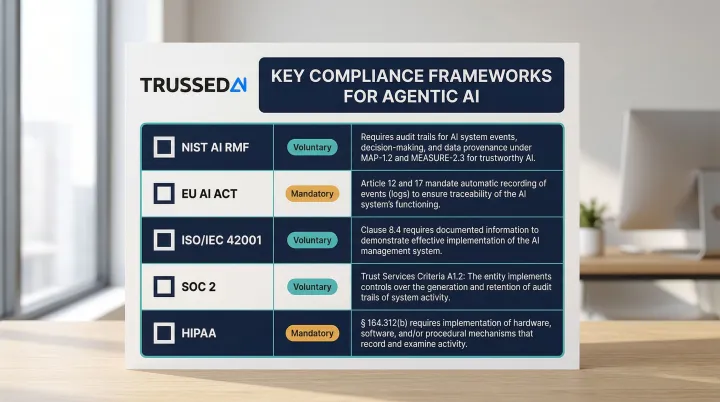

At agentic scale, compliance evidence must be generated automatically as a byproduct of governed interactions - not assembled manually after the fact. Relevant frameworks include:

- NIST AI RMF: Govern, Map, Measure, Manage functions

- EU AI Act: Article 12 (record-keeping), Article 13 (transparency), Article 14 (human oversight)

- ISO/IEC 42001: AI management systems

- Enterprise audit standards (for example, the SOC 2 Trust Services Criteria): Security, availability, processing integrity, confidentiality, privacy

- HIPAA: 45 CFR § 164.312(b) audit controls for ePHI
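
Generating evidence as a byproduct can be as simple as tagging each logged event with the controls it supports and aggregating on demand. The sketch below assumes a hypothetical `CONTROL_TAGS` mapping and event types; the mapping is illustrative only, and which events actually count as evidence for a given requirement is a question for compliance and legal teams.

```python
from collections import Counter

# Illustrative mapping from event type to the controls it may support.
CONTROL_TAGS = {
    "action_logged": ["EU AI Act Art. 12"],
    "human_review": ["EU AI Act Art. 14"],
    "ephi_access": ["HIPAA 45 CFR 164.312(b)", "EU AI Act Art. 12"],
}


def evidence_summary(events: list[dict]) -> dict[str, int]:
    """Count how many logged events support each tagged control."""
    counts: Counter = Counter()
    for event in events:
        # Events with an unmapped type contribute no evidence.
        for control in CONTROL_TAGS.get(event["type"], []):
            counts[control] += 1
    return dict(counts)


sample_events = [
    {"type": "action_logged"},
    {"type": "ephi_access"},
    {"type": "human_review"},
]
print(evidence_summary(sample_events))
```

Because the summary is computed from the same events the governance layer already records, no separate evidence-collection step is needed when an inquiry arrives.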

Integration Model and Deployment Footprint

Evaluate whether the platform requires:

- Code changes: Does it require refactoring existing applications, or does it work as a drop-in proxy?

- Proxy or sidecar: Does it sit in the request path transparently, or require embedding SDKs?

- Native-only governance: Does governance only apply to agents built within the platform, or does it extend to externally built or third-party agents?

Proxy-based deployments typically reach full coverage faster; SDK-embedded models offer more granular control but require code changes across every integrated system.

How We Chose These Platforms

Platforms were selected based on governance depth, not general agentic capability. A common mistake buyers make: choosing platforms for their agent-building features without checking whether governance applies to externally built or third-party agents.

Priority went to platforms offering real-time enforcement, complete audit trail generation, compliance framework support, and proven enterprise deployment.

Key factors evaluated:

- Does the platform enforce policies before actions execute, or only log them after?

- Are full decision chains logged immutably with configurable retention periods?

- Can agents be assigned unique identities with least-privilege permissions?

- Is compliance evidence generated continuously, or must it be manually assembled?

- Does governance apply across your entire AI stack, or only within a single environment?

- Is there evidence of production use in healthcare, insurance, or financial services?

These factors map directly to business outcomes. Complete audit trails cut regulatory inquiry response time and simplify incident attribution. Runtime enforcement stops unauthorized actions before they cause compliance or operational risk, not after.

Conclusion

The right governance platform for autonomous agents does more than log activity after the fact. It enforces policy at runtime, generates audit evidence automatically, and keeps up with the pace at which agents actually operate. That shift, from reactive compliance tracking to continuous automated control, is what separates purpose-built governance from bolt-on monitoring.

Evaluate each platform against your regulatory context, existing infrastructure, and deployment model. An organization running agents natively within a single vendor ecosystem has different governance requirements than one coordinating agents across multiple models, tools, and cloud environments, and the platform you choose should reflect that distinction.

If your environment spans multiple models, tools, and vendors, governance that only covers one slice of that stack creates blind spots. For enterprises in regulated industries (insurance, healthcare, financial services) that need consistent enforcement across the full AI stack, Trussed AI's control plane provides runtime policy enforcement and automatic audit trail generation with no code changes required. Learn more or schedule a demo to see how it works in your environment.

Frequently Asked Questions

What are the most popular agentic AI platforms?

Widely adopted agentic AI platforms include Kore.ai, Microsoft Copilot Studio, IBM watsonx, Amazon Bedrock Agents, and Google Vertex AI. Enterprise selection increasingly depends on governance and compliance capabilities, not just agent-building features, particularly in regulated industries where audit trails and runtime policy enforcement are mandatory.

Which tool helps ensure model compliance and governance in AI?

Governance platforms like Trussed AI, IBM watsonx.governance, and Dynatrace serve this function. Distinguish between runtime enforcement tools that prevent policy violations before they occur and post-hoc compliance reporting tools that reconstruct evidence after the fact; regulated industries require both to meet audit and oversight obligations.

What should an audit trail for AI agents include?

Essential elements include agent identity, data sources accessed, tools called, decision reasoning steps, authorization chain, timestamps, and outcome. Immutability and long-term retention are required for regulatory defensibility: records must be tamper-evident and available for inquiries that may occur months or years after an incident.

How does runtime governance differ from periodic compliance audits for AI agents?

Periodic audits review past behavior after the fact, while runtime governance enforces policies at the moment an agent takes action. For autonomous agents making irreversible decisions at machine speed - approving transactions, routing sensitive data, triggering workflows - runtime enforcement is the only viable control model. Post-hoc audits cannot prevent unauthorized actions or cascading failures.

What regulations require audit trails for autonomous AI systems?

The EU AI Act (Article 12) mandates automatic logging and traceability for high-risk systems; NIST AI RMF provides a voluntary risk management framework. Sector-specific requirements include Federal Reserve SR 11-7 for financial services and HIPAA 45 CFR § 164.312(b) for healthcare audit controls.

How do I choose a governance platform for agentic AI in a regulated industry?

Prioritize runtime enforcement capability, audit trail completeness, compliance framework alignment (NIST, EU AI Act), and integration model. Verify that governance coverage extends to all agents in your environment and that the platform generates audit evidence automatically rather than requiring manual assembly for regulatory inquiries.