Introduction: AI and HIPAA Compliance in Healthcare

Picture this: A mid-sized health system deploys an AI-powered ambient scribing tool to cut documentation time in half. Within weeks, clinicians are dictating patient encounters, and the AI is generating clinical notes in real time. Then the compliance officer asks a simple question: "Where is our Business Associate Agreement?" The vendor's response stops everyone cold: "Our consumer tier doesn't require a BAA. We use your inputs to improve our public model." No BAA. No safeguards. Potential breach. The health system just exposed thousands of patient records.

This scenario isn't hypothetical. It's happening across U.S. healthcare as AI adoption outpaces governance. In 2024, 71% of U.S. hospitals adopted predictive AI, yet fewer than half established formal governance processes. AI tools now handle ambient scribing, diagnostic imaging analysis, predictive analytics for patient deterioration, and chatbot-driven patient engagement, all of which can trigger HIPAA obligations the moment they touch Protected Health Information (PHI).

The core tension: compliance infrastructure is still rooted in pre-AI frameworks, while AI deployments are already in production.

This article covers:

- How HIPAA applies to AI systems

- The specific compliance requirements you must meet

- Hidden risks most organizations overlook

- How to build governance that holds up under Office for Civil Rights (OCR) scrutiny

TL;DR

- HIPAA applies to any AI system that creates, receives, maintains, or transmits PHI. AI tools receive no exemption

- AI vendors handling PHI on behalf of covered entities are Business Associates and must sign a BAA

- Public AI tools like ChatGPT's consumer interface are not HIPAA compliant. Avoid using them with any patient data

- The minimum necessary standard, authorization requirements, and Security Rule safeguards all apply to AI systems without exception

- AI systems require continuous, runtime governance. Point-in-time audits alone won't hold as models and workflows change

How HIPAA Applies to AI in Healthcare

The PHI Test for AI Systems

HIPAA coverage triggers any time an AI system touches "individually identifiable health information" under 45 CFR §160.103. This includes:

- Electronic health record inputs

- Voice transcriptions from ambient scribing tools

- Uploaded diagnostic images

- Clinical notes processed by documentation assistants

- De-identified data carrying re-identification risk

PHI isn't limited to structured EHR data. Any information that can identify an individual and relates to their health condition, treatment, or payment qualifies, including free-text notes, appointment dates, and metadata.

Three HIPAA Rules Governing AI

1. Privacy Rule (45 CFR §164.502): Controls when and how PHI can be used or disclosed. AI systems must comply with the minimum necessary standard, limiting PHI access to what the intended purpose requires. Training AI models on PHI typically requires explicit patient authorization unless it falls under Treatment, Payment, or Healthcare Operations (TPO).

2. Security Rule (45 CFR §§164.308, 164.310, and 164.312): Mandates administrative, physical, and technical safeguards for electronic PHI (ePHI). On the technical side (§164.312), AI platforms must implement:

- Encryption: AES-256 at rest, TLS 1.2+ in transit

- Access controls: Role-based authentication with unique user identification

- Audit logs: Recording who accessed what data, when, and for what purpose

- Integrity controls: Prevent unauthorized ePHI modification

3. Breach Notification Rule: Requires notification to affected individuals, HHS, and potentially media if PHI is improperly exposed. AI systems that leak PHI, whether through model training data exposure or unauthorized access, trigger breach notification obligations.

These three rules don't just govern covered entities. They extend directly to the AI vendors those entities work with.

When AI Vendors Become Business Associates

If an AI vendor creates, receives, maintains, or transmits PHI on behalf of a covered entity, even to perform analytics, documentation, or workflow automation, HIPAA classifies them as a Business Associate. This subjects them to direct HIPAA liability and requires a signed Business Associate Agreement (BAA).

The HIPAA Omnibus Final Rule clarified that the "conduit exception," intended for entities like internet service providers that merely transport information, does not apply to cloud AI platforms that store or process ePHI. These vendors require BAAs.

The Consumer App Gap: Where HIPAA Doesn't Reach

A critical vulnerability: when patients share PHI directly with consumer AI apps not contracted with a covered entity, HIPAA may not apply. If the AI developer is neither a covered entity nor a Business Associate, patient data falls outside HIPAA's scope.

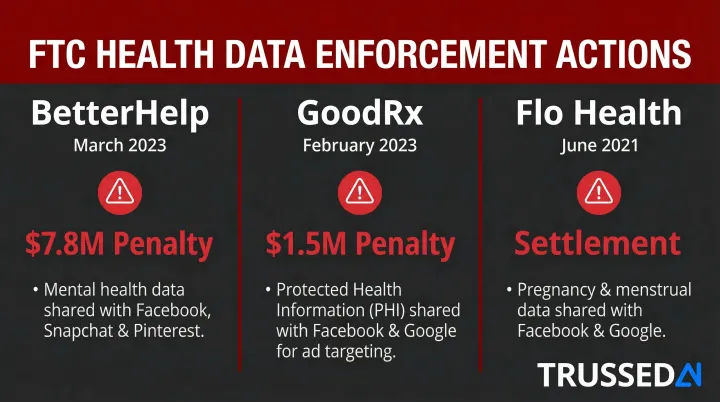

The Federal Trade Commission (FTC) has moved directly into this gap:

| Company | Date | Penalty | Key Findings |
|---|---|---|---|
| BetterHelp | March 2023 | $7.8 million | Shared mental health data with Facebook, Snapchat, and Pinterest for targeted advertising despite privacy promises |
| GoodRx | February 2023 | $1.5 million | Failed to notify consumers of unauthorized PHI disclosures to Facebook and Google; misrepresented HIPAA compliance |
| Flo Health | June 2021 | Settlement | Shared pregnancy and health data with Facebook and Google for marketing despite promises |

De-identification: A Compliance Path with Limits

HIPAA provides two methods for de-identifying PHI under 45 CFR §164.514:

Safe Harbor: Requires removing 18 specific identifiers (names, addresses, dates except year, medical record numbers, etc.) and having no actual knowledge that the remaining information could identify individuals.

Expert Determination: A qualified expert determines that re-identification risk is very small and documents the methods used.

De-identified datasets aren't risk-free. In Dinerstein v. Google, plaintiffs alleged that the University of Chicago shared "de-identified" EHRs with Google for AI training, but the records included dates of service and free-text notes, potentially enabling re-identification when cross-referenced with Google's user data.

The Seventh Circuit ultimately dismissed the case for lack of standing, but the dispute exposed a structural risk: dominant technology companies processing "de-identified" health data may have the supplemental data needed to reverse that de-identification.
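As a rough illustration of the Safe Harbor approach, the sketch below drops a handful of direct identifier fields and reduces dates to the year. The field names (`name`, `mrn`, `service_date`, and so on) are hypothetical, the sets cover only a subset of the 18 identifier categories, and free-text notes are dropped entirely rather than scrubbed. Treat it as a starting point under those assumptions, not a complete de-identification routine.

```python
from datetime import date

# Hypothetical field names; a real schema will differ. These sets cover only
# a subset of the 18 Safe Harbor identifier categories.
DIRECT_IDENTIFIERS = {"name", "address", "phone", "email", "ssn", "mrn"}
DATE_FIELDS = {"birth_date", "service_date"}
FREE_TEXT_FIELDS = {"clinical_note"}  # free text can hide identifiers


def safe_harbor_sketch(record: dict) -> dict:
    """Return a copy with listed identifiers dropped and dates cut to the year."""
    cleaned = {}
    for field, value in record.items():
        if field in DIRECT_IDENTIFIERS or field in FREE_TEXT_FIELDS:
            continue  # drop the field entirely
        if field in DATE_FIELDS:
            if isinstance(value, date):
                # Keep only the year, e.g. service_date -> service_year
                cleaned[field.replace("_date", "_year")] = value.year
            continue  # values that are not dates are dropped, not passed through
        cleaned[field] = value
    return cleaned


record = {
    "name": "Jane Doe",
    "mrn": "00123456",
    "birth_date": date(1980, 5, 17),
    "service_date": date(2024, 3, 2),
    "clinical_note": "Pt seen 3/2 at Main St clinic...",
    "diagnosis_code": "E11.9",
}
deidentified = safe_harbor_sketch(record)
```

Dropping the note and the full service date removes exactly the two elements the Dinerstein plaintiffs pointed to, which is why field-level stripping alone is rarely enough when free text stays in the dataset.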

Key HIPAA Compliance Requirements for AI Systems

Business Associate Agreements: Non-Negotiable Requirements

A signed BAA is legally required if the AI vendor touches PHI. A compliant BAA must explicitly cover:

- Prohibited uses: The vendor cannot use your patient data to train public models or for purposes outside your authorized use case

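- Breach notification timelines: HIPAA requires the vendor to notify you without unreasonable delay and no later than 60 days after discovery; many BAAs commit to a shorter window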

- Subcontractor obligations: Under 45 CFR §164.504(e), the BAA must flow down to any downstream vendors the AI platform uses

- Security responsibilities: Specific technical safeguards the vendor will implement

Without a signed BAA, using an AI tool with PHI is a HIPAA violation. Full stop.

Technical Safeguards Under the Security Rule

45 CFR §164.312 mandates specific technical controls for systems handling ePHI:

| Standard | Requirement | Application to AI |
|---|---|---|
| Access Control | Unique user identification | Every user accessing AI systems must have unique credentials; role-based access controls enforce minimum necessary |
| Audit Controls | Record and examine activity | AI systems must log every PHI access event: who accessed what data, when, and for what purpose |
| Integrity Controls | Verify ePHI hasn't been altered | Mechanisms to detect unauthorized changes to AI-processed clinical data |
| Transmission Security | Encrypt ePHI in transit | TLS 1.2+ required for all API calls, model queries, and data transfers |

These requirements apply to all data stores in AI systems, including logs, prompt caches, temporary buffers, and model training datasets.
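To make the access control and audit control rows concrete, here is a minimal sketch of a role check that records every PHI request, allowed or denied. The role names, field names, and the in-memory `AUDIT_LOG` list are illustrative assumptions; a production system would use its own identity provider and an append-only log store.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical role-to-field mapping approximating "minimum necessary".
ROLE_FIELDS = {
    "scribe_reviewer": {"encounter_note"},
    "billing": {"procedure_codes", "insurance_id"},
}


@dataclass
class AuditEvent:
    user_id: str      # unique user identification
    role: str
    patient_id: str
    fields: list      # what data was requested
    purpose: str      # why it was requested
    allowed: bool
    timestamp: str    # when, in UTC


AUDIT_LOG = []  # stand-in for an append-only audit store


def request_phi(user_id, role, patient_id, fields, purpose):
    """Check role-based access and record the attempt, allowed or not."""
    allowed = set(fields) <= ROLE_FIELDS.get(role, set())
    AUDIT_LOG.append(AuditEvent(
        user_id, role, patient_id, sorted(fields), purpose, allowed,
        datetime.now(timezone.utc).isoformat(),
    ))
    return allowed


ok = request_phi("u-102", "billing", "p-9", ["insurance_id"], "claim submission")
denied = request_phi("u-102", "billing", "p-9", ["encounter_note"], "curiosity")
```

Logging denied attempts alongside granted ones is a deliberate choice here: the denials are often the most useful evidence when reconstructing what a user or agent tried to do.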

The Minimum Necessary Standard Applied to AI

Under 45 CFR §164.502(b), covered entities must limit PHI to the minimum necessary to accomplish the intended purpose. For AI, this creates specific challenges:

- AI training often requires large datasets to achieve accuracy

- Organizations must document why the volume of PHI used is necessary

- Broader use cases (like training foundational models) typically require patient authorization

The practical barrier: if you want to train an AI model on thousands of patient records for research or model improvement, you generally need explicit patient authorization. It doesn't qualify as routine Healthcare Operations.

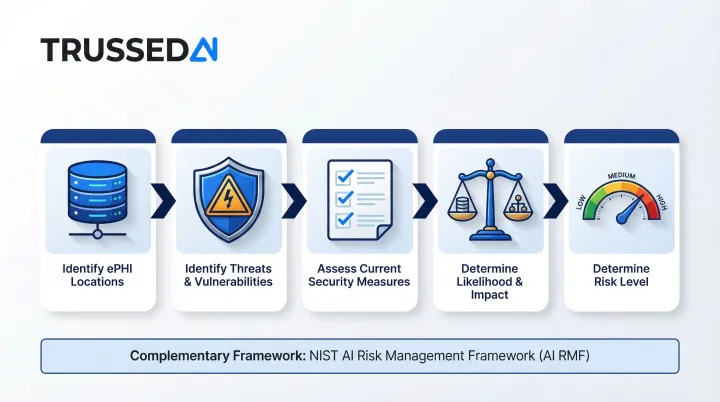

Risk Assessment Requirements

45 CFR §164.308(a)(1)(ii)(A) requires an accurate and thorough assessment of potential risks to ePHI. OCR guidance specifies this must include:

- Identifying where ePHI is stored, received, maintained, or transmitted

- Identifying reasonably anticipated threats and vulnerabilities

- Assessing current security measures

- Determining likelihood and potential impact of threats

- Determining the level of risk
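The last two steps above (likelihood and impact, then risk level) are often captured in a simple scoring matrix. The sketch below is one way to do that; the three-point scale and the thresholds are assumptions for illustration, since HIPAA does not prescribe a scoring formula.

```python
from dataclasses import dataclass

# Assumed three-point scale; organizations commonly define their own.
LEVELS = {"low": 1, "medium": 2, "high": 3}


@dataclass
class Threat:
    description: str
    likelihood: str  # "low", "medium", or "high"
    impact: str      # "low", "medium", or "high"


def risk_level(threat: Threat) -> str:
    """Combine likelihood and impact into a coarse risk level."""
    score = LEVELS[threat.likelihood] * LEVELS[threat.impact]
    if score >= 6:   # 6 or 9
        return "high"
    if score >= 3:   # 3 or 4
        return "medium"
    return "low"     # 1 or 2


threats = [
    Threat("Prompt cache retains ePHI beyond retention policy", "high", "high"),
    Threat("Model update changes which records an agent retrieves", "medium", "medium"),
    Threat("Vendor status page outage", "low", "medium"),
]
register = {t.description: risk_level(t) for t in threats}
```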

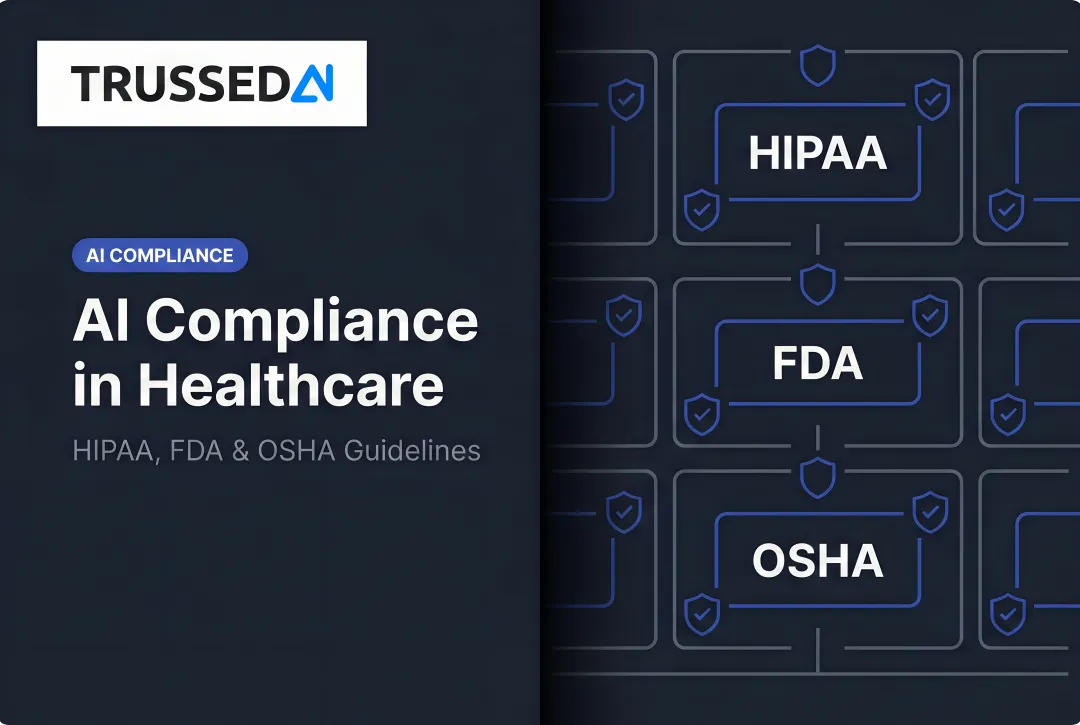

Each AI tool should be evaluated as a new system requiring both a HIPAA Security Rule risk assessment and AI-specific risk analysis. The NIST AI Risk Management Framework (AI RMF 1.0) can be used alongside HIPAA to address AI-specific dimensions like explainability, bias, and reliability.

Authorization Requirements for Non-TPO AI Uses

HIPAA allows PHI use without patient authorization for Treatment, Payment, and Healthcare Operations (TPO). Using PHI to train AI models, conduct research, or support marketing analytics generally falls outside these categories and requires explicit patient authorization.

The authorization burden is significant. Most foundational model training scenarios won't qualify as Healthcare Operations, meaning organizations need either patient consent or a path to de identified data before they can proceed.

Hidden HIPAA Compliance Risks in AI Deployments

Shadow AI: The Most Pervasive Risk

The biggest compliance threat isn't the AI systems your organization officially deploys. It's the ones staff are already using without approval. Clinicians use consumer AI tools (public ChatGPT, Gemini, Claude) to draft clinical notes, summarize patient records, and generate referral letters. Consumer tools don't sign BAAs and may retain inputs to retrain public models.

Under HIPAA's penalty structure (45 CFR §160.404), violations due to willful neglect that aren't corrected within 30 days carry penalties of at least $50,000 per violation, with an annual cap of $1.5 million for identical violations (base amounts that HHS adjusts for inflation). If 100 staff members each use unapproved AI tools with patient data, OCR could count that as 100 separate violations.
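The arithmetic behind that example is worth spelling out. The sketch below uses only the two figures quoted above and assumes all violations are of an identical provision within one calendar year; current adjusted amounts may differ, so treat the output as illustrative.

```python
# Figures quoted in the text above; check current adjusted amounts before relying on them.
PER_VIOLATION_MINIMUM = 50_000
ANNUAL_CAP_IDENTICAL = 1_500_000


def uncorrected_willful_neglect_floor(violations: int) -> int:
    """Minimum penalty for identical violations in one year, after the annual cap."""
    return min(violations * PER_VIOLATION_MINIMUM, ANNUAL_CAP_IDENTICAL)


uncapped = 100 * PER_VIOLATION_MINIMUM            # 100 x 50,000 = 5,000,000
capped = uncorrected_willful_neglect_floor(100)   # limited by the 1,500,000 cap
violations_to_hit_cap = ANNUAL_CAP_IDENTICAL // PER_VIOLATION_MINIMUM  # 30
```

Under these assumptions the cap is reached at 30 violations, so an organization with widespread shadow AI use reaches the annual ceiling quickly.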

Model Training Data Exposure: The Vendor-Side Risk

Even when a BAA is in place, covered entities must verify whether the vendor uses PHI submitted through the platform to train or fine-tune its foundational model. Key terms to understand:

- Zero retention: The vendor does not store, log, or use your data for model training. OpenAI provides BAAs for API customers with zero data retention configured for HIPAA compliance.

- Segregated instance: Your data is processed in a dedicated environment isolated from other customers and model training pipelines.

Questions to ask vendors:

- Does my data leave my dedicated instance?

- Is it used to fine-tune your shared base model?

- Can you provide contractual guarantees prohibiting training on my PHI?

Re-identification Risk from De-identified Data

Even airtight vendor contracts don't eliminate risk if the data itself is the vulnerability. De-identified datasets aren't as safe as they appear: when AI vendors with access to large external datasets (location data, browsing history, social media) process "de-identified" PHI, they can re-identify individuals through data triangulation.

The FTC has pursued companies that misrepresent this risk. In the GoodRx, Flo Health, and BetterHelp cases, regulators emphasized that sharing "anonymized" health data with advertising platforms created re identification exposure that violated consumer expectations, regardless of what the data was labeled.

The Shared Responsibility Trap

Many organizations assume that purchasing a HIPAA-eligible platform makes them automatically compliant. This misunderstands the shared responsibility model:

- Vendors secure the infrastructure, provide technical safeguards, and maintain certifications

- Customers own access management, configuration, input data governance, and staff usage controls

A signed BAA provides legal protection but not practical protection if configuration and usage controls are absent. You remain liable for how your staff use the platform.

Agentic AI and HIPAA: New Risks from Autonomous Systems

How Agentic AI Differs in a HIPAA Context

AI agents take multi-step autonomous actions, including querying databases, retrieving patient records, calling external APIs, and writing clinical summaries, potentially touching PHI across multiple systems in a single workflow. This creates a PHI exposure surface that's both larger and harder to define than a single-query AI tool.

That scale is exactly the problem. Existing HIPAA governance frameworks were designed for static systems where data flows could be mapped in advance. Agentic workflows are dynamic: the agent decides at runtime which databases to query, which APIs to call, and which records to access based on the user's request.

The Audit Trail Challenge with AI Agents

HIPAA's Security Rule requires documentation of who accessed PHI, when, and for what purpose. With agentic workflows, a single user request may trigger dozens of PHI access events across multiple systems.

Standard LLM API logs often capture that a session occurred, not what specific PHI was accessed or which operations were performed. Before deploying agentic AI in a healthcare setting, verify that the platform logs every individual action, not just the user's input and final output.

Key logging requirements to confirm:

- Every PHI access event recorded with timestamp and context

- Logs tied to individual agent actions, not just session-level summaries

- Evidence that audit trails are tamper-resistant and retrievable on demand
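One common way to make a per-action audit trail tamper-evident is to chain each entry to the previous one with a hash. The sketch below shows the idea using only the Python standard library; the event fields are hypothetical, and a hash chain only detects edits. It does not prevent them, so real deployments typically also anchor the latest hash in a separate, write-protected system.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_event(chain: list, event: dict) -> dict:
    """Append one agent action, linked to the hash of the previous entry."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    entry = {"event": event, "prev_hash": prev_hash, "hash": _digest(prev_hash, event)}
    chain.append(entry)
    return entry


def verify_chain(chain: list) -> bool:
    """Recompute every hash; any edited or removed entry breaks the chain."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


chain = []
# One user request, two separate agent actions, each logged individually.
append_event(chain, {"agent": "scribe-1", "action": "query_ehr", "patient_id": "p-9",
                     "timestamp": "2025-01-15T14:02:11Z"})
append_event(chain, {"agent": "scribe-1", "action": "write_summary", "patient_id": "p-9",
                     "timestamp": "2025-01-15T14:02:13Z"})
intact = verify_chain(chain)
```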

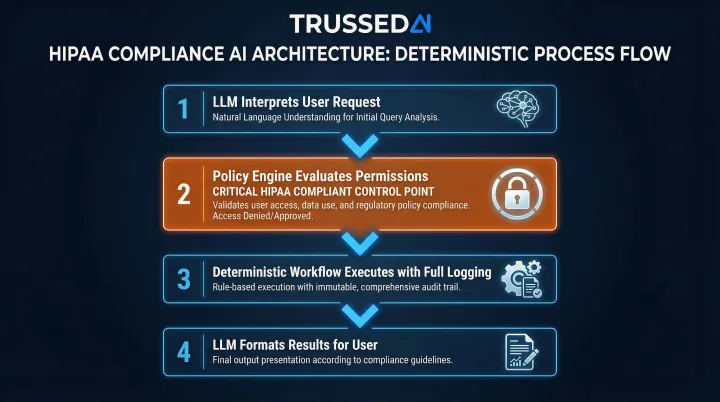

Why Deterministic, Policy-Constrained Architectures Are Safer

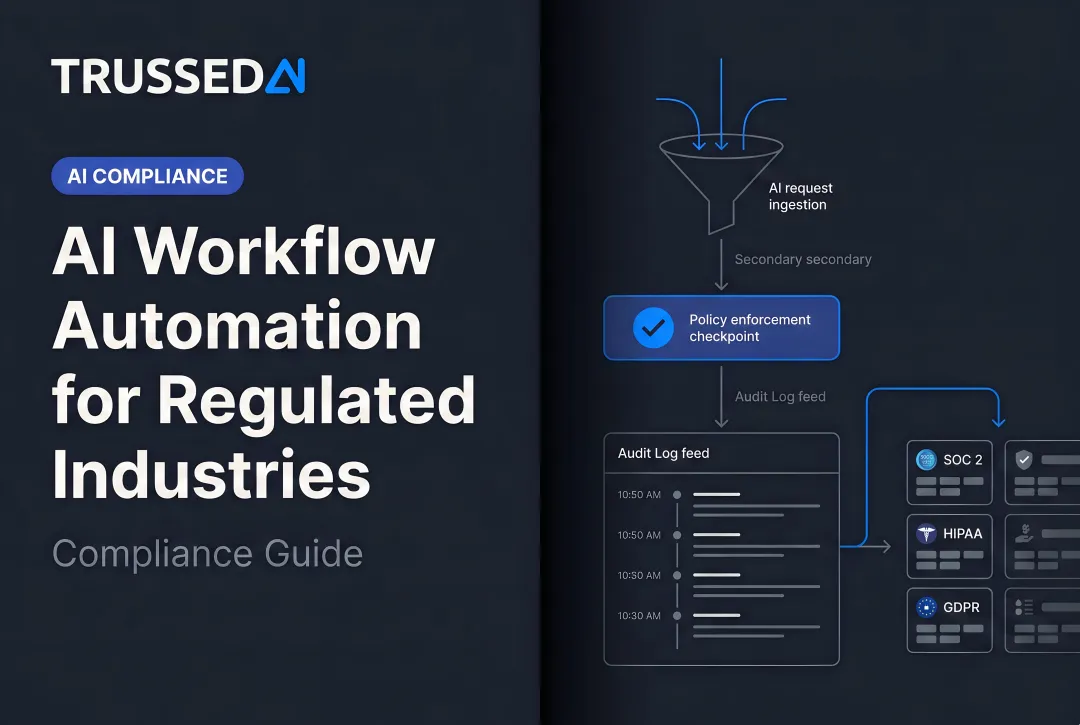

Systems that separate intent recognition (which uses an LLM) from action execution (which follows pre-defined, auditable rules) reduce hallucination risk and provide clearer audit trails. In this architecture:

- The LLM interprets the user's request and identifies the intended action

- A policy engine evaluates whether the action is permitted

- If approved, a deterministic workflow executes the action with full logging

- The LLM formats the results for the user

Under HIPAA review, this structure holds up because every action traces back to an explicit policy decision, not a model inference that can't be fully explained or reproduced.
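The separation described above can be sketched in a few lines. Everything here is hypothetical: the policy table, the role names, and the handlers are made up for illustration, and `recognize_intent` uses a keyword match as a stand-in for the LLM step so the example runs on its own.

```python
# Hypothetical policy table: which roles may trigger which actions.
POLICY = {
    "summarize_encounter": {"clinician"},
    "export_records": set(),  # never permitted through the agent
}


def recognize_intent(user_request: str) -> str:
    """Stand-in for the LLM step; a keyword match keeps the sketch runnable."""
    return "export_records" if "export" in user_request.lower() else "summarize_encounter"


def summarize_encounter(patient_id: str) -> str:
    """Deterministic workflow; a real one would read from the EHR."""
    return f"summary for {patient_id}"


HANDLERS = {"summarize_encounter": summarize_encounter}


def handle(user_request: str, role: str, patient_id: str, audit_log: list) -> str:
    action = recognize_intent(user_request)          # 1. interpret the request
    permitted = role in POLICY.get(action, set())    # 2. policy decision
    audit_log.append({"action": action, "role": role,
                      "patient_id": patient_id, "permitted": permitted})
    if not permitted:
        return "Request denied by policy."
    return HANDLERS[action](patient_id)              # 3. deterministic execution


log = []
granted = handle("Summarize today's visit", "clinician", "p-9", log)
refused = handle("Export all records to my laptop", "clinician", "p-9", log)
```

The model never calls a handler directly. It only names an action, and the policy table decides whether that action runs, which is what makes each log entry traceable to an explicit rule.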

How to Evaluate AI Vendors for HIPAA Compliance

Not every vendor claiming "HIPAA compliance" actually meets the bar. Before deploying any AI vendor that handles PHI, verify these non-negotiables:

Non-Negotiable Evaluation Checklist

- Will they sign a BAA? If not, they're disqualified. Full stop.

- Contractual guarantee that your PHI won't be used to train or fine-tune their models

- Third-party certifications:

  - Third-party security certification: Proves sustained security controls over 6 to 12 months

  - HITRUST CSF: Healthcare-specific gold standard harmonizing 60+ frameworks including HIPAA, NIST, and ISO

- Control over data residency and retention, including where it's stored and for how long

- Breach notification timelines committed to in the BAA

- Subcontractor coverage, including who their downstream vendors are and whether BAAs extend to them

Specific Questions to Ask AI Vendors

- Does my data leave my dedicated instance?

- Is it used to fine-tune your shared base model?

- What certifications do you hold, and can you provide the audit report?

- How quickly will you notify us of a breach?

- Who are your subprocessors, and are they covered by a BAA?

- Can you provide audit logs showing every PHI access event?

Red Flags That Disqualify a Vendor

- Refusal to sign a BAA: this is a legal disqualifier with no workaround

- Vague answers about whether your data trains their model: if they can't state it clearly, assume it does

- No third-party security certifications: a certification such as HITRUST CSF is a baseline expectation for healthcare

- Inability to produce audit logs showing PHI access: you can't demonstrate compliance without them

- No clear access revocation process for when staff leave or roles change
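Some teams encode these red flags in their vendor intake process so that no review skips one. The sketch below is a minimal version; the `VendorAnswers` fields are hypothetical names mapped one-to-one to the list above, not a standard questionnaire.

```python
from dataclasses import dataclass


@dataclass
class VendorAnswers:
    # Hypothetical intake fields, one per red flag listed above.
    signs_baa: bool
    clearly_prohibits_training_on_phi: bool
    has_third_party_certification: bool
    provides_phi_audit_logs: bool
    has_access_revocation_process: bool


def red_flags(vendor: VendorAnswers) -> list:
    """Return the red flags a vendor trips; an empty list means none found."""
    checks = [
        (vendor.signs_baa, "Refuses to sign a BAA"),
        (vendor.clearly_prohibits_training_on_phi, "Unclear whether PHI trains their model"),
        (vendor.has_third_party_certification, "No third-party security certification"),
        (vendor.provides_phi_audit_logs, "Cannot produce PHI access audit logs"),
        (vendor.has_access_revocation_process, "No clear access revocation process"),
    ]
    return [message for passed, message in checks if not passed]


candidate = VendorAnswers(
    signs_baa=True,
    clearly_prohibits_training_on_phi=False,
    has_third_party_certification=True,
    provides_phi_audit_logs=True,
    has_access_revocation_process=False,
)
flags = red_flags(candidate)
disqualified = len(flags) > 0
```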

Evaluating Hyperscaler AI Services

Once you've confirmed a vendor clears the red flags above, the next question is infrastructure. Major cloud providers offer HIPAA-eligible generative AI services, but eligibility and configuration are separate problems:

| Provider | Service | BAA Availability | Notes |
|---|---|---|---|
| Microsoft Azure | Azure OpenAI Service | Yes | BAA included in Enterprise Agreements by default |
| Google Cloud | Vertex AI | Yes | Covered under Google Cloud BAA |
| AWS | Amazon Bedrock | Yes | Customers must execute AWS BAA and configure appropriately |

A signed BAA with a hyperscaler doesn't transfer compliance responsibility. Your team still owns access controls, logging, and PHI handling configurations.

Building a Continuous AI Governance Framework

Why Point-in-Time Compliance Fails for AI

Unlike a database or email system, AI deployments evolve continuously. Models update, agents acquire new tools, and workflows are added. A compliance review at deployment time doesn't account for how the AI system's behavior and data access patterns change over time. Point-in-time audits alone won't hold as models and workflows change.

OCR guidance explicitly states that risk analysis must be ongoing and continuous. Recent OCR enforcement actions under the Risk Analysis Initiative have penalized entities for failing to conduct accurate, thorough, and periodic risk analyses.

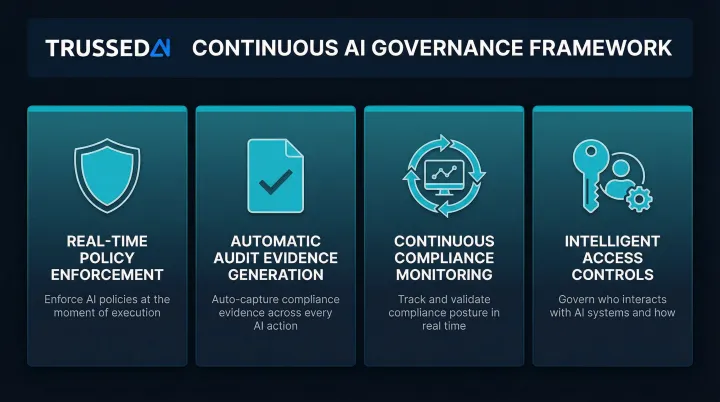

Key Components of Runtime AI Governance

A continuous governance framework requires:

- Real-time policy enforcement that intercepts AI interactions and applies compliance rules before PHI is exposed, blocking unauthorized access at the execution layer rather than flagging it after the fact.

- Automatic audit evidence generation so every governed interaction produces audit ready records as a byproduct: policy evaluation results, model versions, timestamps, data lineage, user identity, and actions taken.

- Continuous compliance monitoring that detects compliance drift as AI workflows evolve. When models update or agent behaviors change, the system evaluates whether those changes remain aligned with defined policies and regulatory requirements.

- Intelligent access controls that enforce the minimum necessary standard dynamically across models, agents, and developer tools, restricting PHI access to what each role actually requires.
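To show what enforcement at the execution layer can look like, here is a small sketch of an interceptor that blocks unauthorized roles and redacts identifier patterns before a prompt is forwarded to a model. The role names and the MRN format are assumptions, and pattern matching catches only well-formed identifiers, so this illustrates the control point rather than a complete PHI filter.

```python
import re

# Illustrative patterns only; the MRN format is an assumption.
PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "MRN": re.compile(r"\bMRN[:\s]*\d{6,10}\b", re.IGNORECASE),
}


def enforce(prompt: str, user_role: str, allowed_roles=frozenset({"clinician"})) -> dict:
    """Decide whether a prompt may be forwarded, redacting identifiers first."""
    if user_role not in allowed_roles:
        return {"decision": "block", "prompt": None, "redactions": []}
    redactions = []
    for label, pattern in PATTERNS.items():
        prompt, count = pattern.subn(f"[{label} REDACTED]", prompt)
        if count:
            redactions.append(label)
    # The returned record doubles as audit evidence for this interaction.
    return {"decision": "allow", "prompt": prompt, "redactions": redactions}


result = enforce("Patient MRN: 12345678, SSN 123-45-6789 reports chest pain", "clinician")
blocked = enforce("Summarize chart for bed 4", "contractor")
```

Because the decision record is produced on every call, audit evidence accumulates as a side effect of enforcement instead of being assembled afterward.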

Enterprise AI Control Planes: The Governance Solution

Delivering these capabilities requires infrastructure built specifically for dynamic AI environments, not retrofitted from static IT governance tools.

Platforms like Trussed AI, an enterprise AI control plane built to close this governance gap, enforce HIPAA-aligned policies at runtime across AI apps, agents, and developer tools via a drop-in proxy with zero application code changes. The platform automatically maintains complete audit trails and provides continuous compliance monitoring.

Healthcare organizations using this model report a 50% reduction in manual governance workload, freeing compliance teams to focus on higher risk decisions rather than routine audit assembly.

Frequently Asked Questions

What are the HIPAA compliance requirements for AI in healthcare?

Any AI system that creates, receives, maintains, or transmits PHI must comply with HIPAA's Privacy Rule, Security Rule, and Breach Notification Rule. This requires a BAA with vendors, technical safeguards (encryption, access controls, audit logs), risk assessments, and data minimization practices aligned with the minimum necessary standard.

Are AI agents HIPAA compliant?

AI agents can be HIPAA compliant when deployed with proper safeguards: a signed BAA, audit trails for every action, role-based access controls, and a policy-constrained architecture limiting PHI access to the minimum necessary. Their autonomous, multi-step nature demands more rigorous governance than static AI tools.

Are there any HIPAA compliant AI tools for healthcare?

No AI tool is inherently HIPAA compliant. Compliance depends on configuration, BAAs, and organizational safeguards. Enterprise platforms like Azure OpenAI Service, Google Vertex AI, and Amazon Bedrock can be used in HIPAA-compliant configurations when properly set up under a signed BAA and with appropriate access controls.

What is a Business Associate Agreement (BAA) and why does it matter for AI?

A BAA is a legally binding contract required under HIPAA whenever a vendor handles PHI on behalf of a covered entity. It defines security obligations, prohibits unauthorized uses like training public models on patient data, and sets breach notification requirements. Using an AI tool with PHI without a signed BAA is a HIPAA violation.

Does HIPAA require patient consent for AI use in healthcare?

HIPAA does not require separate patient authorization when AI is used for Treatment, Payment, or Healthcare Operations (TPO), such as clinical documentation or scheduling. However, uses of PHI to train AI models, conduct research, or support marketing-related analytics typically require explicit patient authorization.

What happens if an AI vendor is not HIPAA compliant?

If a vendor handles PHI without a BAA or proper safeguards, both the vendor and the covered entity face HIPAA penalties. Uncorrected willful neglect carries penalties of at least $50,000 per violation, up to $1.5 million annually for identical violations. Additional exposure includes OCR investigations, reputational damage, and potential FTC enforcement for deceptive health data practices.