Introduction

Healthcare organizations face a critical tension: AI adoption is accelerating across clinical documentation, patient engagement, and decision support, yet most don't know who bears responsibility for HIPAA compliance when AI systems process protected health information. Is it the covered entity deploying the tool, the vendor providing it, or both?

The stakes are substantial. A signed Business Associate Agreement alone does not ensure compliance: HHS guidance explicitly states that covered entities remain responsible for their business associates' noncompliance. Consumer AI tools like standard ChatGPT cannot legally touch PHI because they lack the required safeguards.

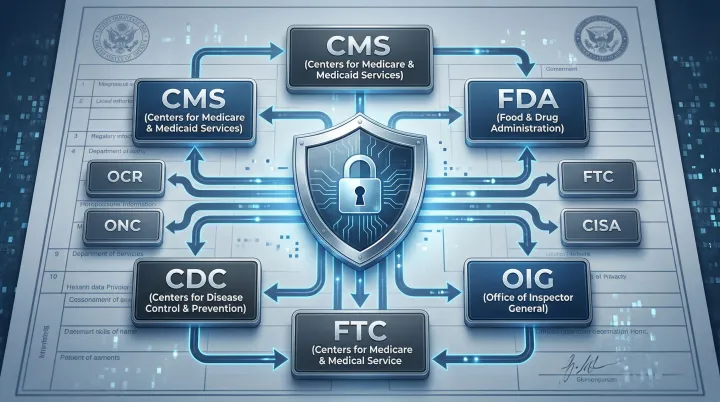

Enforcement reflects the cost of getting this wrong. HHS OCR has levied over $144 million in HIPAA penalties since 2003, while the FTC has imposed fines against digital health platforms including BetterHelp ($7.8 million) and GoodRx ($1.5 million) for unauthorized health data sharing.

The January 2025 HIPAA Security Rule proposal makes the direction clear. The proposed rule would explicitly require covered entities to inventory AI software that touches ePHI and apply heightened risk analysis, treating AI as a distinct infrastructure category rather than an extension of existing IT.

This guide clarifies who HIPAA applies to in AI contexts, what core requirements must be met, where the law has genuine gaps, and how to build governance frameworks that hold as AI agents, workflows, and third-party integrations multiply.

TL;DR

- HIPAA compliance for AI requires verified technical, administrative, and contractual safeguards, not vendor marketing claims

- Any AI tool processing PHI on behalf of a covered entity makes the vendor a Business Associate, requiring a signed BAA and Security Rule compliance

- Consumer AI tools (ChatGPT Free, Claude web, Gemini consumer) cannot process PHI: they lack BAAs, audit logging, and data retention controls

- HIPAA has real gaps: patient-initiated AI use, unregulated tech vendors, and complex agentic pipelines can all process health data outside current regulatory reach

- Governance must be continuous and runtime-enforced across every AI interaction; a deployment checklist alone is not enough

What Makes AI Subject to HIPAA in Digital Health

Before deploying AI in any healthcare context, the first question to answer is whether HIPAA applies at all. The rule triggers whenever an AI system creates, receives, maintains, or transmits Protected Health Information. In practice, that covers more data types than most teams initially expect:

- Structured EHR data fed into clinical decision support models

- Chatbot transcripts where patients describe symptoms or history

- Model training datasets derived from patient records

- Clinical prediction outputs tied to individual patients

- Audio transcriptions of provider-patient conversations

If an AI tool touches any of these data types, HIPAA rules apply, regardless of whether the system was built for healthcare or adapted from a general-purpose model.

Business Associate Classification

When a hospital, insurer, or clinic uses an AI vendor to process PHI on its behalf, that vendor becomes a Business Associate under 45 CFR §160.103. HHS cloud computing guidance (2022) clarifies that even "no-view" cloud providers storing encrypted ePHI without decryption keys are business associates. Activities that trigger BA status include:

- Data aggregation for analytics or reporting

- Clinical decision support generating treatment recommendations

- Documentation generation from clinical encounters

- Transcription services processing provider-patient audio

Activities that fall outside BA classification typically involve patients independently using AI tools without covered entity involvement, but this creates a critical coverage gap.

The Critical Coverage Gap

When a patient independently shares health information with an AI chatbot, or when a hospital employee uses a general-purpose AI tool whose vendor operates outside the covered entity/BA framework, that PHI exits HIPAA's jurisdiction entirely.

This is not an edge case. A 2025 AMA survey found 66% of physicians used healthcare AI, with documented instances of staff pasting patient data into consumer AI tools for convenience. In these scenarios, HIPAA does not reach the AI vendor, even though the covered entity may still be answerable for an impermissible disclosure by its own workforce. Enforcement against the vendor falls instead to the FTC under Section 5 of the FTC Act and the Health Breach Notification Rule.

AI-Specific ePHI Requirements

The January 2025 HIPAA Security Rule NPRM (90 FR 898) explicitly addresses AI for the first time. The proposed rule clarifies that ePHI used in AI training data, prediction models, and algorithm outputs maintained by regulated entities falls under HIPAA protection.

The Department also proposes requiring covered entities to develop written inventories of technology assets, including AI software that creates, receives, maintains, or transmits ePHI. Regulators now treat AI as a distinct infrastructure category, separate from traditional IT systems and requiring dedicated oversight to match.

Minimum Necessary Principle in AI

AI systems should access only the PHI required for their specific function. This applies to:

- Model training datasets

- Inference inputs and API calls

- Prompt engineering and context windows

- Output generation and storage
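One way to apply the minimum necessary principle to inference inputs is a per-function allow-list that filters a record before it reaches a model. The sketch below is illustrative only: the function names, field names, and the `ALLOWED_FIELDS` table are hypothetical and would need to reflect an organization's own documented use cases.

```python
# Hypothetical per-function allow-lists; field names are illustrative only.
ALLOWED_FIELDS = {
    "appointment_scheduling": {"patient_id", "preferred_times", "clinic"},
    "sepsis_risk_model": {"patient_id", "vitals", "lab_results", "age_years"},
}


def minimum_necessary(record: dict, function: str) -> dict:
    """Return only the fields the named function is allowed to see."""
    try:
        allowed = ALLOWED_FIELDS[function]
    except KeyError:
        # Fail closed: a function with no registered allow-list gets no data.
        raise PermissionError(f"no allow-list registered for {function!r}")
    return {key: value for key, value in record.items() if key in allowed}


record = {
    "patient_id": "p-001",
    "name": "Jane Doe",
    "ssn": "000-00-0000",
    "preferred_times": ["Mon AM"],
    "clinic": "Cardiology",
    "vitals": {"hr": 88},
}
scheduling_view = minimum_necessary(record, "appointment_scheduling")
print(sorted(scheduling_view))  # ['clinic', 'patient_id', 'preferred_times']
```

Failing closed on an unregistered function is a deliberate choice here: a new AI use case has to be added to the allow-list before it can receive any patient fields.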

Organizations should apply de-identification wherever full PHI access is not operationally required. HIPAA provides two de-identification methods:

Safe Harbor: Remove 18 specific identifiers (names, geographic subdivisions smaller than a state, dates except year, phone numbers, Social Security numbers, biometric identifiers, full-face photos) and confirm no actual knowledge that remaining information could identify individuals.

Expert Determination: A qualified expert determines the re-identification risk is very small and documents the methods and results using generally accepted statistical and scientific principles.

Both methods reduce compliance burden in training pipelines. Neither eliminates re-identification risk entirely, particularly when external datasets can be combined with model outputs, which is why ongoing data access controls matter even after de-identification is applied.
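A rough sketch of what Safe Harbor-style preprocessing can look like in a training pipeline follows. It covers only a small, hypothetical subset of the 18 identifier categories (a handful of direct-identifier fields plus reducing dates to the year), so it should be read as an illustration of the approach rather than a complete Safe Harbor implementation. The field names are assumptions.

```python
from datetime import date

# Illustrative subset of direct identifiers; Safe Harbor lists 18 categories.
DIRECT_IDENTIFIER_FIELDS = {"name", "phone", "ssn", "email", "street_address"}


def strip_identifiers(record: dict) -> dict:
    """Drop direct-identifier fields and reduce any date value to its year."""
    cleaned = {}
    for key, value in record.items():
        if key in DIRECT_IDENTIFIER_FIELDS:
            continue  # remove the field entirely
        if isinstance(value, date):
            cleaned[key + "_year"] = value.year  # keep only the year
        else:
            cleaned[key] = value
    return cleaned


raw = {
    "name": "Jane Doe",
    "ssn": "000-00-0000",
    "admitted": date(2024, 3, 14),
    "diagnosis_code": "I10",
}
deidentified = strip_identifiers(raw)
print(deidentified)  # {'admitted_year': 2024, 'diagnosis_code': 'I10'}
```

Free-text fields such as clinical notes are not handled here at all; identifiers embedded in narrative text need separate detection.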

The Three HIPAA Requirements Every AI System Must Meet

Privacy Rule Compliance

The Privacy Rule (45 CFR §164.502) governs when and how PHI may be used or disclosed. For AI, using patient data to train models, generate outputs, or personalize responses requires a lawful basis:

- Treatment – Clinical decision support providing diagnostic recommendations

- Operations – Quality improvement analytics, population health management

- Patient Authorization – Explicit written consent for uses outside treatment/operations

De-identification can reduce compliance burden. Safe Harbor removes 18 identifiers; Expert Determination requires certification that re-identification risk is very small. However, NIST SP 800-188 warns that de-identification based simply on removing identifiers is often insufficient to prevent re-identification in large datasets, especially with high-resolution geolocation and external data sources available.

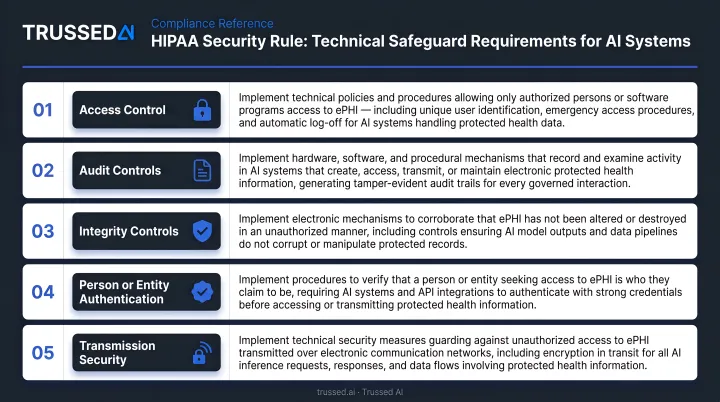

Security Rule Technical Safeguards

The Security Rule (45 CFR §164.312) mandates specific technical controls for any system handling ePHI. For AI systems, each safeguard carries specific implementation requirements:

| Safeguard | Regulation | AI Implementation Requirement |
|---|---|---|
| Access Control | §164.312(a)(1) | Role-based controls enforcing least-privilege; unique user IDs; automatic session timeouts |
| Audit Controls | §164.312(b) | Log all data access with timestamps and actions; logs must be secured and tamper-evident |
| Encryption | NIST FIPS 197 / SP 800-52 | AES-256 for data at rest; TLS 1.2+ for data in transit |
| Integrity | §164.312(c)(1) | Prevent unauthorized modification of training data, model outputs, and audit logs |
| Transmission Security | §164.312(e)(1) | Secure all API calls, inference requests, and inter-component data transfers |

Properly implemented encryption renders PHI "unusable, unreadable, or indecipherable to unauthorized individuals" under the Breach Notification Rule, a critical threshold when evaluating breach liability.
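For the "TLS 1.2+ for data in transit" requirement in the table above, a client calling an inference API can refuse older protocol versions at the socket level. The following is a minimal sketch using Python's standard `ssl` module; the helper name `make_tls_context` is an assumption for illustration.

```python
import ssl


def make_tls_context() -> ssl.SSLContext:
    """Build a client-side context that rejects anything older than TLS 1.2."""
    # create_default_context() enables certificate and hostname verification.
    context = ssl.create_default_context()
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


ctx = make_tls_context()
print(ctx.minimum_version.name)  # TLSv1_2
```

The resulting context can be passed to standard-library clients, for example `http.client.HTTPSConnection(host, context=ctx)` or `urllib.request.urlopen(url, context=ctx)`. Encryption at rest is a separate control and is not shown here.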

Business Associate Agreement Requirements

BAAs are enforceable legal contracts with real liability consequences. Generic vendor templates typically minimize vendor obligations and warrant careful review before signing. A compliant BAA must specify:

- Permitted uses of PHI – Explicitly define what the vendor may do with data

- Encryption standards – Require AES-256 for stored data, TLS 1.2+ for transmission

- Breach notification timeline – Mandate notification within 60 days of discovery (not vague "prompt notification")

- Subprocessor approval – Require written approval before adding LLM providers or cloud infrastructure

- Audit rights – Grant the covered entity rights to audit vendor compliance

A March 2022 CMS/HHS guidance letter clarified that engaging a business associate does not relieve a covered entity from its responsibility to comply with applicable requirements. Covered entities remain responsible for business associate noncompliance.

Administrative Safeguards

Vendor agreements establish external accountability. Internal administrative safeguards address what happens inside your own organization, and regulators scrutinize both equally.

Key requirements include:

- Risk Analysis – Document AI-specific threats: prompt data leakage, model memorization of training data, and re-identification from de-identified datasets

- Workforce Training – Use concrete breach examples, not regulatory citations. The average healthcare data breach cost $10.93 million in 2023, the highest of any sector for the 13th consecutive year

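- Incident Response – Under 45 CFR §164.404, affected individuals must be notified without unreasonable delay and no later than 60 days after discovery. Breaches affecting 500 or more individuals must be reported to HHS on the same timeline, and those affecting more than 500 residents of a state or jurisdiction also require media notification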

- Annual Security Reviews – The proposed 2025 Security Rule update would explicitly require AI software inventory and heightened risk analysis for AI systems
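The 60-day outer limit in the incident response item is simple date arithmetic, and encoding it in an incident tracker avoids miscounting. The sketch below assumes calendar days counted from the discovery date; the function names are hypothetical.

```python
from datetime import date, timedelta

NOTIFICATION_WINDOW_DAYS = 60  # outer limit cited above, counted in calendar days


def notification_deadline(discovered_on: date) -> date:
    """Latest date by which affected individuals must be notified."""
    return discovered_on + timedelta(days=NOTIFICATION_WINDOW_DAYS)


def days_remaining(discovered_on: date, today: date) -> int:
    """Days left before the deadline; negative once it has passed."""
    return (notification_deadline(discovered_on) - today).days


deadline = notification_deadline(date(2025, 1, 10))
print(deadline)  # 2025-03-11
print(days_remaining(date(2025, 1, 10), date(2025, 2, 1)))  # 38
```

The deadline is a ceiling, not a target: an internal tracker would normally alert well before the remaining days approach zero.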

HIPAA-Eligible vs. HIPAA-Compliant

Major cloud providers and enterprise AI platforms describe services as "HIPAA eligible", meaning they can support compliance under correct configuration. Eligibility does not equal compliance. HHS guidance emphasizes that while covered entities may use cloud services, they must conduct their own risk analysis and establish risk management policies.

The customer bears responsibility for correctly configuring the system, documenting that configuration, and maintaining it through ongoing monitoring. HHS is explicit: "OCR does not endorse, certify, or recommend specific technology or products."

Common AI Use Cases and Their HIPAA Risk Profiles

Clinical Documentation AI

AI transcription and note-generation tools carry high PHI exposure risk. Ambient documentation systems, discharge summaries, and prior authorization drafts process full provider-patient conversations in real time. Every audio file and generated note is PHI.

By 2025, 62.6% of U.S. hospitals using Epic had adopted ambient AI documentation tools. Larger, not-for-profit hospitals with stronger financial performance drove most of that adoption. A 2025 AMA survey found 21% of physicians used AI for documentation of billing codes or visit notes.

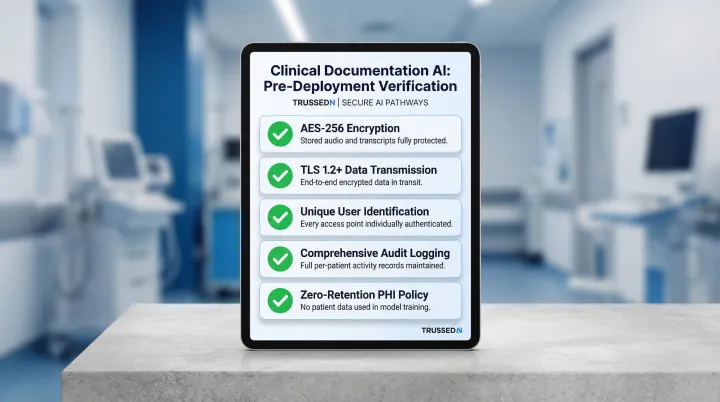

Infrastructure processing these inputs must be fully encrypted, access-controlled, and operated under a BAA. Vendors must not retain data for model training without explicit authorization. Before deployment, verify:

- AES-256 encryption for stored audio and transcripts

- TLS 1.2+ for transmission

- Unique user identification for every access

- Comprehensive audit logging of who accessed which patient's data

- Zero-retention policies for PHI in model training pipelines

Patient-Facing AI Chatbots and Virtual Assistants

Chatbots collecting symptoms, appointment preferences, insurance details, or health histories process PHI. Conversation logs, session data, and any data transmitted to third-party LLM providers are all in scope.

The 2025 AMA survey found 10% of physicians deployed patient-facing chatbots for customer service, and another 10% used them for health recommendations and self-care engagement, making this one of the faster-growing AI deployment categories in clinical settings.

The risk intensifies when consumer AI tools are deployed in patient-facing contexts without enterprise-grade BAAs or data retention controls. The FTC has aggressively pursued exactly this scenario.

BetterHelp faced $7.8 million in penalties for sharing email addresses, IP addresses, and health questionnaire data with Facebook, Snapchat, and advertising platforms despite explicit privacy promises. GoodRx faced $1.5 million in penalties for sharing prescription and health condition data with Facebook and Google.

Organizations deploying patient-facing AI must ensure:

- Enterprise API tiers with signed BAAs (not consumer web interfaces)

- No data sharing with advertising networks or analytics platforms

- Explicit consent for any use beyond the stated purpose

- Audit logging of all conversations

- Incident response procedures meeting 60-day notification deadlines

Predictive Analytics and Clinical Decision Support

AI models for risk stratification, sepsis prediction, and treatment recommendations are trained on large PHI datasets. The ONC HTI-1 Final Rule (89 FR 1192, January 2024) established transparency requirements for "Predictive Decision Support Interventions" (DSIs) in certified health IT.

Under 45 CFR §170.102, a Predictive DSI is defined as:

"Technology that supports decision-making based on algorithms or models that derive relationships from training data and then produces an output that results in prediction, classification, recommendation, evaluation, or analysis."

The rule requires developers to give users access to source attribute information and apply Intervention Risk Management practices.

The proposed HHS Security Rule NPRM specifically targets predictive decision support interventions using machine learning, which would require greater transparency about model design, training data, and bias evaluation from business associates providing DSI tools.

Organizations deploying predictive AI must:

- Conduct thorough risk assessments before and after deployment

- Document training data sources and de-identification methods

- Evaluate model outputs for bias and clinical safety

- Maintain audit trails of which predictions influenced which clinical decisions

- Update risk assessments as models are retrained or modified

Where HIPAA Falls Short: Gaps AI Teams Must Address

The Unregulated AI Vendor Gap

When a physician pastes patient data into a general-purpose AI tool whose vendor is neither a covered entity nor a BA, that PHI exits HIPAA's jurisdiction entirely. This happens regularly in healthcare organizations where staff reach for consumer AI tools out of convenience.

HIPAA applies only to covered entities (health plans, healthcare clearinghouses, healthcare providers conducting electronic transactions) and their business associates. If a technology company does not fall into these categories and has no BAA with a covered entity, HIPAA does not reach it, even if it processes sensitive health data.

The FTC has stepped in where HIPAA does not reach, enforcing under Section 5 of the FTC Act (prohibiting unfair or deceptive practices) and the Health Breach Notification Rule (16 CFR Part 318). The FTC's HBNR applies to vendors of personal health records and PHR-related entities not covered by HIPAA. The FTC clarified in 2024 that makers of health apps and connected devices drawing identifiable health information from multiple sources are covered by the Rule.

Still, FTC enforcement is reactive, acting only after breaches or consumer complaints surface. It does not provide the proactive compliance framework that HIPAA offers.

Re-identification Risk from De-identified Data

Large technology companies can potentially re-identify health datasets de-identified via Safe Harbor by cross-referencing with behavioral and location data. NIST SP 800-188 notes that while removing identifiers was once considered sufficient, new privacy attacks can re-identify individuals in ostensibly clean datasets.

High-resolution geolocation data and third-party behavioral datasets make this exposure worse every year.

In Dinerstein v. Google (7th Cir. 2023), plaintiffs alleged the University of Chicago Medical Center shared anonymized records with Google to train predictive algorithms in violation of patient privacy. The court dismissed the case: the plaintiff lacked standing, and the Data Use Agreement barred Google from attempting re-identification. The court found re-identification threats "wholly speculative and implausible."

The case still illustrates a real exposure pattern: healthcare organizations integrating generative AI through major tech platforms face amplified triangulation risk (where a vendor combines de-identified health data with its existing personal data from other sources to reconstruct individual identities). The more data a vendor already holds, the narrower the anonymization margin becomes.

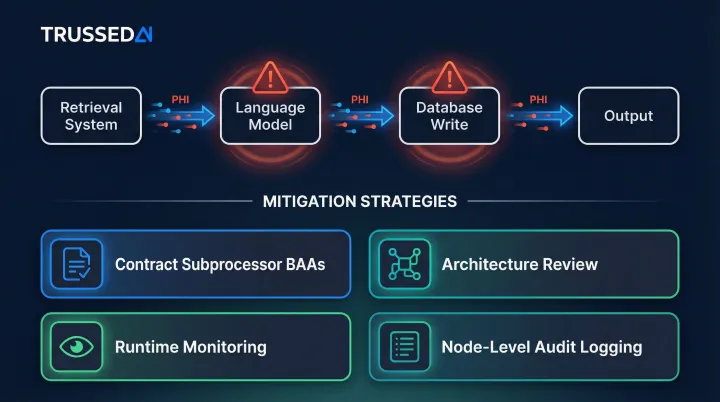

Agentic AI and Multi-Model Pipeline Gap

As healthcare organizations deploy AI agents that route tasks, call APIs, and aggregate outputs across multiple models, PHI passes through multiple handoff points, some of which may involve unprotected subprocessors.

Existing HIPAA guidance does not clearly address governance at each node of an agentic workflow. A clinical documentation assistant might route data through a retrieval system, then a language model, then a database write operation. If governance applies only at entry and exit points, intermediate nodes create compliance exposure.

Organizations must proactively address this through:

- Contract language requiring subprocessor BAAs

- Architecture review documenting every handoff point

- Runtime monitoring of data flow through pipelines

- Audit logging at each node, not just system boundaries
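To make "audit logging at each node" concrete, the sketch below wraps each step of a toy three-node pipeline (retrieval, language model, database write) in a decorator that records one audit entry per invocation. Everything here is hypothetical: the node functions are stubs, and `AUDIT_LOG` stands in for a secured, append-only store.

```python
import functools
import time

# Stand-in for a secured, append-only audit store (hypothetical).
AUDIT_LOG: list[dict] = []


def audited_node(node_name: str):
    """Decorator that records one audit entry per pipeline node invocation."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(payload: dict, *, actor: str) -> dict:
            entry = {
                "node": node_name,
                "actor": actor,
                "ts": time.time(),
                # Log field names only, so the audit log holds no PHI values.
                "fields_in": sorted(payload),
                "status": "started",
            }
            try:
                result = fn(payload)
                entry["status"] = "ok"
                return result
            except Exception as exc:
                entry["status"] = f"error: {type(exc).__name__}"
                raise
            finally:
                AUDIT_LOG.append(entry)  # runs on success and on failure
        return wrapper
    return decorator


@audited_node("retrieval")
def retrieve(payload: dict) -> dict:
    return {**payload, "context": "prior visit summary"}


@audited_node("language_model")
def draft_note(payload: dict) -> dict:
    return {**payload, "note": "draft clinical note"}


@audited_node("database_write")
def write_record(payload: dict) -> dict:
    return {"record_id": "r-001", "stored_fields": sorted(payload)}


def run_pipeline(payload: dict, actor: str) -> dict:
    for node in (retrieve, draft_note, write_record):
        payload = node(payload, actor=actor)
    return payload


result = run_pipeline(
    {"encounter_id": "e-42", "transcript": "visit audio text"}, actor="dr.smith"
)
print([entry["node"] for entry in AUDIT_LOG])
# ['retrieval', 'language_model', 'database_write']
```

Because the entry is appended in a `finally` block, a node that fails midway still leaves a record, which is the case boundary-only logging tends to miss.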

Building a HIPAA-Compliant AI Governance Framework

Infrastructure and Vendor Selection

Foundational requirements include HIPAA-compliant cloud infrastructure with encrypted storage, SIEM logging, intrusion detection, and geo-redundant backups. During vendor evaluation, require:

Third-party security certifications: Look for evidence of sustained security controls over time. Healthcare organizations should demand certifications that verify controls functioned effectively over an extended period, not just at a single point in time.

Signed BAA covering all products in scope: Verify the BAA explicitly covers all AI tools, APIs, and infrastructure components that will touch PHI.

LLM subprocessor data protection agreements: Confirm that third-party LLM providers are bound by data protection agreements with zero-retention policies for PHI. Require written approval before adding new subprocessors.

PHI Minimization and Data Architecture

The best compliance posture reduces the amount of PHI AI systems encounter:

Tokenization: Replace PHI with non-reversible identifiers before passing data to AI tools. This reduces blast radius if a breach occurs.

Synthetic data: Use for testing and development environments. Synthetic data mimics real data structure without containing actual PHI.

Automated PHI detection: Screen prompts before they reach AI models. Detection mechanisms can include regex patterns, NLP classifiers, or third-party PII detection libraries.

These controls simplify compliance documentation and reduce exposure.
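The tokenization and automated detection controls above can be combined in a small pre-processing step. The sketch below uses regex patterns for three identifier types and a keyed HMAC-SHA256 digest as the non-reversible token. The patterns are deliberately narrow and illustrative, the key handling is a placeholder, and names such as `screen_prompt` are assumptions; production screening would need far broader coverage (names, addresses, record numbers) and proper key management.

```python
import hashlib
import hmac
import re

# Illustrative patterns only; real screening needs much broader coverage.
PHI_PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def tokenize(value: str, key: bytes) -> str:
    """Keyed one-way token: stable for the same input, not reversible."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:16]


def screen_prompt(prompt: str, key: bytes) -> tuple[str, list[str]]:
    """Replace detected identifiers with tokens; return text and labels found."""
    found: list[str] = []
    for label, pattern in PHI_PATTERNS.items():
        def _replace(match: re.Match, label: str = label) -> str:
            found.append(label)
            return f"[{label}:{tokenize(match.group(0), key)}]"
        prompt = pattern.sub(_replace, prompt)
    return prompt, found


DEMO_KEY = b"demo-key-not-for-production"  # placeholder; load from a secret store
clean, labels = screen_prompt(
    "Patient SSN 123-45-6789, call 555-867-5309.", DEMO_KEY
)
print(labels)  # ['SSN', 'PHONE']
```

Using a keyed digest rather than a plain hash means an attacker without the key cannot confirm a guessed identifier by hashing it, while the same patient still maps to the same token across prompts.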

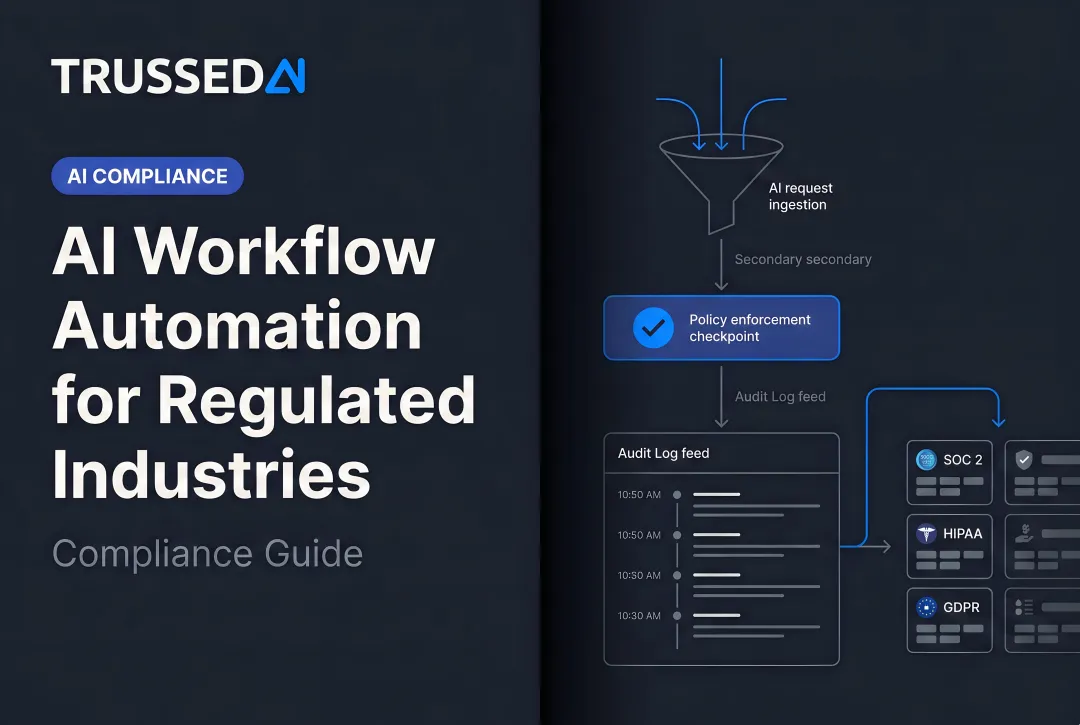

Runtime Governance and Continuous Policy Enforcement

Signing a BAA and configuring encryption handles deployment-time requirements. AI models, however, operate continuously: every interaction, agent action, and data access needs to be checked against active HIPAA policies in real time.

Most compliance checklists miss this gap. Traditional governance treats compliance as a procurement checkpoint: sign the BAA, configure encryption, check the box. AI systems interact with data thousands of times per day, with prompts, outputs, and actions varying unpredictably; static checkpoints can't keep up.

A control plane approach enforces policies as a layer across AI apps, agents, and workflows rather than configured individually per tool. This lets organizations grow AI deployments without adding proportional compliance staff or processes.
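As a generic illustration of the control plane idea (not any particular vendor's API), the sketch below evaluates each request against a small policy before the model call runs and records the decision either way. The role names, the provider identifier, and the `governed_call` wrapper are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Request:
    user_role: str
    provider: str
    contains_phi: bool
    prompt: str


# Hypothetical policy data an organization would maintain centrally.
ROLES_ALLOWED_PHI = {"clinician", "billing"}
PROVIDERS_WITH_BAA = {"enterprise-llm-with-baa"}

DECISIONS: list[dict] = []  # stand-in for an audit evidence store


def evaluate(request: Request) -> tuple[bool, str]:
    """Return (allowed, reason) for a single request."""
    if not request.contains_phi:
        return True, "no PHI detected"
    if request.user_role not in ROLES_ALLOWED_PHI:
        return False, "role not permitted to send PHI"
    if request.provider not in PROVIDERS_WITH_BAA:
        return False, "provider has no BAA on file"
    return True, "PHI permitted for role and provider"


def governed_call(request: Request, call_model: Callable[[Request], str]) -> str:
    """Check policy first, log the decision, then run or block the call."""
    allowed, reason = evaluate(request)
    DECISIONS.append(
        {"role": request.user_role, "provider": request.provider,
         "allowed": allowed, "reason": reason}
    )
    if not allowed:
        raise PermissionError(reason)
    return call_model(request)


ok = governed_call(
    Request("clinician", "enterprise-llm-with-baa", True, "summarize visit"),
    call_model=lambda r: "summary",
)
print(ok, DECISIONS[-1]["reason"])
```

Keeping the policy in one place means a new AI app or agent inherits the same checks by routing through the wrapper, rather than re-implementing them per tool.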

Trussed AI's governance platform enforces these policies at runtime, evaluating every request against configured HIPAA rules before execution and generating audit-ready evidence automatically as a byproduct of each governed interaction. It operates as a proxy in the AI interaction flow, covering:

- PHI access controls – Role-based policies determining which users and applications can access specific data

- Data leakage prevention – Automatic identification and masking of patient identifiers before data reaches models

- Audit trail generation – Complete chain of custody logging with policy evaluation results, model version, timestamp, and data lineage

- Subprocessor governance – Control over which LLM providers and cloud services can process data

The platform generates records structured for compliance teams, internal audit, and regulatory examination.

Monitoring, Audit Readiness, and Breach Response

Ongoing operational requirements include:

Tamper-evident access logs: Maintain logs that cannot be altered without detection. Logs should capture user identity, data accessed, timestamp, and action taken, satisfying 45 CFR §164.312(b) audit control requirements.

Periodic HIPAA risk assessments: Conduct annual reviews that specifically include AI systems in scope. The proposed 2025 Security Rule update would explicitly require AI software inventory and heightened risk analysis.

PHI exposure incident documentation: Document any incidents where PHI was accessed, disclosed, or compromised, along with remediation taken. Treat near-misses as systemic signals rather than isolated incidents.

Breach response protocol: Satisfy the 60-day HHS notification deadline for incidents affecting 500+ individuals. The BAA should specify the vendor's obligation to detect and notify the covered entity promptly, typically within 24-48 hours of discovery. Breaches affecting 500+ residents of a state require media notification within 60 days.

Organizations should update governance controls based on incident patterns, not just respond to individual breaches.
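One common way to make access logs tamper-evident, as called for above, is a hash chain: each entry stores the hash of the previous entry, so editing or deleting any record breaks verification from that point on. The following is a minimal in-memory sketch under that assumption; the record fields and function names are illustrative, and a real deployment would also need to anchor the latest hash somewhere an attacker cannot rewrite.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # fixed starting value for the first entry


def _entry_hash(prev_hash: str, record: dict) -> str:
    """Hash the previous hash together with a canonical form of the record."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("ascii") + payload).hexdigest()


def append_entry(log: list, record: dict) -> None:
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append(
        {"record": record, "prev_hash": prev_hash,
         "hash": _entry_hash(prev_hash, record)}
    )


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited or removed entry makes this False."""
    prev_hash = GENESIS_HASH
    for entry in log:
        expected = _entry_hash(prev_hash, entry["record"])
        if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True


access_log: list = []
append_entry(access_log, {"user": "dr.smith", "patient": "p-001",
                          "action": "read", "ts": "2025-01-10T09:00:00Z"})
append_entry(access_log, {"user": "nurse.lee", "patient": "p-001",
                          "action": "update", "ts": "2025-01-10T09:05:00Z"})
print(verify_chain(access_log))  # True

access_log[0]["record"]["user"] = "someone.else"  # simulate tampering
print(verify_chain(access_log))  # False
```

The chain detects alteration but does not prevent it, so it complements access restrictions on the log store rather than replacing them.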

Frequently Asked Questions

Is an AI assistant HIPAA compliant?

No AI assistant is inherently HIPAA compliant. Compliance depends on the specific product tier (enterprise vs. consumer), whether a BAA is in place, and how the system is configured and monitored. Consumer versions like ChatGPT Free, Claude web, and Gemini do not meet HIPAA requirements.

Is there a HIPAA compliant AI tool?

HIPAA-compliant AI tools exist, but the compliance designation applies to specific enterprise configurations, not tools broadly. Organizations should verify a signed BAA, subprocessor data agreements, and audit logging capabilities before deploying any AI tool in healthcare contexts.

What is a key requirement for an AI tool to be considered HIPAA compliant?

The Business Associate Agreement is the foundational legal requirement, followed by technical safeguards (encryption, access controls, audit logging) mandated by the Security Rule. A tool must satisfy all three requirements (contractual, technical, and administrative) before any marketing claim of compliance holds up.

Does the HIPAA privacy rule apply to conversations?

Yes. Conversations between patients and healthcare providers, including AI chatbot sessions, transcribed audio, and documented virtual visits, can constitute PHI if they contain individually identifiable health information. Any AI system that records, processes, or stores these conversations must comply with both the Privacy Rule and the Security Rule.

What happens if an AI vendor causes a HIPAA breach?

Under the Breach Notification Rule, the covered entity must notify affected individuals within 60 days and report to HHS (and to the media if more than 500 residents of a state are affected). The BAA should specify the vendor's obligation to detect and notify the covered entity promptly, typically within 24-48 hours of discovery.

Do AI tools used only internally still need to comply with HIPAA?

Yes. Internal AI tools used by clinicians to generate notes or by billing staff to process claims are subject to HIPAA if they access or process PHI. The internal/external distinction does not determine HIPAA scope; what matters is whether the tool touches PHI and whether appropriate safeguards govern its use.