Introduction

Enterprises in regulated industries face mounting pressure: AI adoption is accelerating, driven by competitive necessity and operational efficiency gains, yet production deployment requires meeting non-negotiable compliance obligations. Up to 95% of enterprise generative AI pilots fail to reach production, creating a widening gap between what AI can do and what organizations can safely operationalize.

Operationalizing AI in regulated industries is as much a governance infrastructure problem as a technical one. Organizations that treat compliance as documentation rather than enforceable control keep stalling at the pilot stage - or deploy systems that draw regulatory enforcement.

This post covers what AI operationalization actually means, what the U.S. regulatory landscape demands, why the path from pilot to production breaks down, and what a compliance-first approach looks like in practice.

TLDR

- AI operationalization means running AI in production under enforceable, continuously monitored governance

- U.S. regulated industries must align AI systems with HIPAA, SOX, SR 11-7, and FERPA from day one

- The biggest failures stem from governance gaps: no real-time policy enforcement, no automated audit trails, no continuous monitoring

- Compliant operationalization requires governance built into the runtime layer - enforced automatically, at every interaction

What Is AI Operationalization?

AI operationalization is the process of moving AI systems from pilot phases into reliable, scalable production environments. It goes beyond deploying a model. Operationalization adds the governance, observability, and enforcement layers required to keep AI systems compliant and performant over time.

Why AI Is Different from Traditional Software

AI systems are non-deterministic: the same input can yield different outputs over time. They drift as data distributions shift, and they often lack the explainability traditional software provides. As a result, compliance validation is an ongoing process, not a one-time event.

Governance vs. Operationalization

Most organizations have governance documentation: policies, frameworks, risk assessments. Far fewer have the infrastructure to enforce those policies at runtime. Governance defines the rules; operationalization is the mechanism that applies them to every AI interaction in production.

The Agentic AI Challenge

Autonomous agents that take actions, call APIs, and chain reasoning steps create far greater compliance surface area than single-model deployments. The scale of the problem is accelerating:

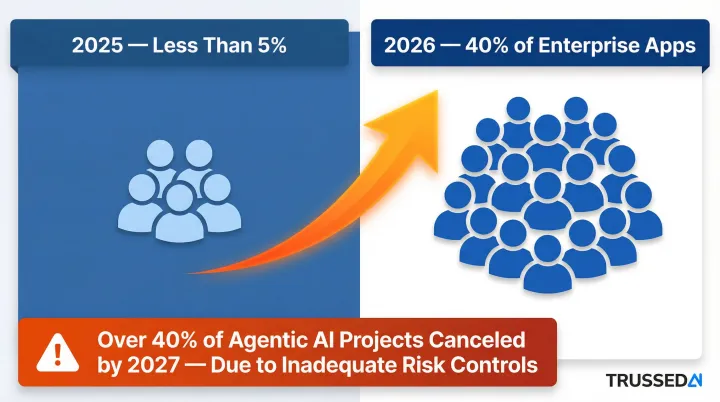

- Gartner predicts 40% of enterprise applications will feature task-specific AI agents by 2026, up from less than 5% in 2025

- Over 40% of agentic AI projects will be canceled by 2027 due to inadequate risk controls

Why Regulated Industries Face a Higher Bar

AI decisions in healthcare, financial services, insurance, and education directly affect patient safety, credit access, claims outcomes, and student welfare. Regulators require documented control at the system level, not policy documents that stop at the door of production.

The U.S. Regulatory Landscape for AI in Regulated Industries

U.S. AI regulation is sector-specific and fragmented, unlike the EU's horizontal AI Act. Enterprises operating across industries must navigate overlapping requirements simultaneously.

Key Frameworks by Sector:

Healthcare:

- HIPAA governs data privacy and security for Protected Health Information (PHI)

- FDA guidance on AI/ML-based software as medical devices, including Predetermined Change Control Plans for AI-enabled device software functions

- HHS AI Strategy released December 2025 for responsible AI deployment

Financial Services:

- SR 11-7 (Federal Reserve and OCC model risk management guidance) requires comprehensive model inventory, independent validation, and ongoing performance monitoring

- SOX mandates auditability and data integrity for public companies

- ECOA/fair lending requirements enforced by CFPB for AI-driven credit decisions; complex algorithms do not exempt lenders from providing specific, accurate reasons for adverse actions

Insurance:

- NAIC Model Bulletin on AI Systems adopted by 24 states and the District of Columbia as of early 2026

- Requires written AI Systems Programs, board oversight, validation, and vendor due diligence

Higher Education:

- FERPA protects student education records

- Third-party AI vendors must meet "school official exception" requirements: no unauthorized data use or model training

Federal Baseline:

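Executive Order 14110 and OMB M-24-10 established Chief AI Officers, AI Governance Boards, public use-case inventories, and minimum risk management practices for federal agencies. The executive order was rescinded in January 2025 and OMB M-25-21 replaced M-24-10 in April 2025, but the successor memo retains those core governance structures.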

What These Frameworks Have in Common

Across every sector, regulators require organizations to demonstrate that AI systems are controlled, explainable, and generating auditable evidence of compliance. Benchmark performance alone doesn't satisfy these obligations.

The Compliance Burden Is Not Static

Regulations are evolving faster than most governance frameworks can keep up. HHS proposed HIPAA Security Rule updates requiring written technology asset inventories, 72-hour recovery procedures, and 24-hour workforce access termination notifications, and those requirements explicitly cover AI systems interacting with ePHI.

Why Operationalizing AI in Regulated Environments Is Hard

Most organizations didn't build compliance infrastructure before scaling AI - they built pilots. Deloitte's 2026 State of AI report reveals that only 21% of companies report having a mature model for the governance of autonomous AI agents, despite rapid agentic AI adoption. When those pilots move toward production, four structural problems surface:

- Governance debt: No systematic way to enforce data handling policies, track model decisions, or generate auditor evidence - leaving a growing backlog of compliance exposure.

- Explainability gaps: High-performing models often function as black boxes. Credit decisions, claims adjudication, diagnostic support, and student assessments all require explainable outcomes, regardless of model accuracy.

- Fragmented deployments: AI runs across multiple models, agents, APIs, and applications simultaneously. Without a unified control layer, teams rely on siloed monitoring tools and inconsistent documentation that make compliance reviews incomplete.

- Audit-readiness failures: Regulators expect organizations to show who deployed a model, what data it used, when it was updated, and what decisions it influenced. Reconstructing that trail after the fact is slow and error-prone.

A 2025 healthcare survey found that only 22% of hospitals reported high confidence they could deliver a complete, auditable AI explanation within 30 days to regulators, with 41% citing limited explainability artifacts from vendors as their top audit barrier.

Audit evidence has to be generated continuously as a byproduct of operations. Assembling it under pressure, once an audit is announced, is a risk most regulated organizations can no longer afford to take.
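One way to picture audit evidence produced as a byproduct of operations is an append-only log in which each entry is hashed together with the previous one, so later edits are detectable. The sketch below is illustrative only: the event fields (`actor`, `model`, `dataset`, `decision`) are hypothetical names chosen to mirror the who, what data, when, and which decision questions listed above, and a production system would also need durable storage and access controls.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry (assumption)


def _entry_hash(prev_hash: str, event: dict) -> str:
    # Serialize with sorted keys so the same event always hashes the same way.
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_event(log: list, event: dict) -> dict:
    """Append an event, chaining its hash to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {
        "event": event,
        "prev_hash": prev_hash,
        "hash": _entry_hash(prev_hash, event),
    }
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Return True only if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_event(audit_log, {"actor": "ml-platform", "model": "credit-v3",
                         "dataset": "loans-2025q4", "decision": "deployed"})
append_event(audit_log, {"actor": "credit-v3", "model": "credit-v3",
                         "dataset": "application-123", "decision": "refer"})
```

Calling `verify_chain(audit_log)` before handing evidence to an auditor gives a quick integrity check; changing any earlier event breaks the hashes that follow it.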

A Framework for Compliant AI Operationalization

Risk and Regulatory Mapping Before Deployment

Identify which regulatory frameworks apply to each AI use case, map data flows, and define policy requirements before building or deploying. This prevents costly rework and reduces deployment delays.

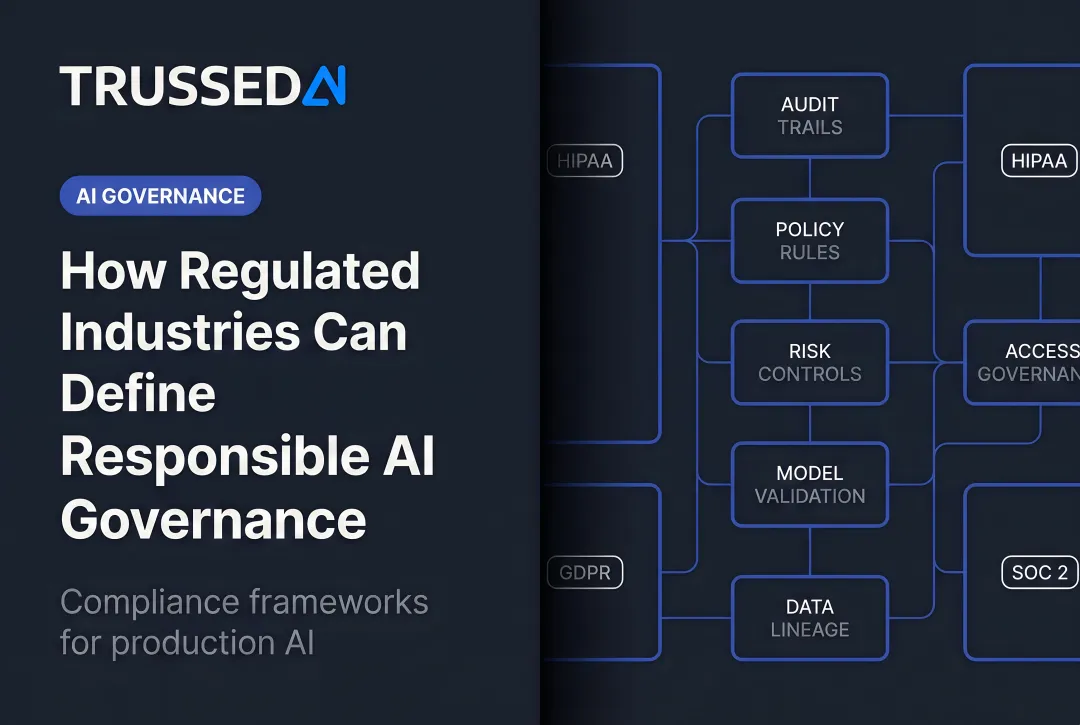

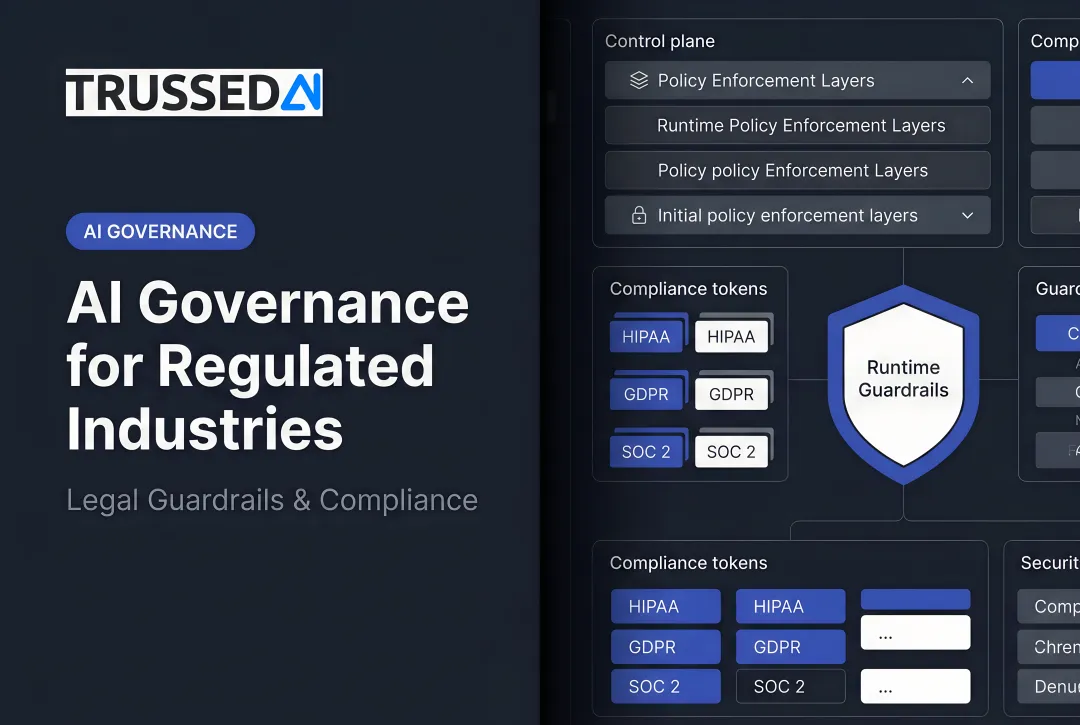
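As a rough illustration of the mapping step, the sketch below tags each use case with the frameworks its data types trigger and surfaces data types nobody has mapped yet. The lookup table and the `UseCase` structure are assumptions made for the example; which frameworks actually apply is a legal determination, not a dictionary lookup.

```python
from dataclasses import dataclass, field

# Illustrative table only (assumption): real mappings come from legal review.
FRAMEWORKS_BY_DATA_TYPE = {
    "phi": {"HIPAA"},
    "student_records": {"FERPA"},
    "credit_decision": {"ECOA", "SR 11-7"},
    "financial_reporting": {"SOX"},
}


@dataclass
class UseCase:
    name: str
    data_types: list
    frameworks: set = field(default_factory=set)


def map_frameworks(use_case: UseCase) -> UseCase:
    """Attach every framework triggered by the data the use case touches."""
    for data_type in use_case.data_types:
        use_case.frameworks |= FRAMEWORKS_BY_DATA_TYPE.get(data_type, set())
    return use_case


def unmapped_data_types(use_case: UseCase) -> list:
    """Data types with no entry in the table; these need review before build."""
    return [d for d in use_case.data_types if d not in FRAMEWORKS_BY_DATA_TYPE]


prior_auth = map_frameworks(UseCase("prior-auth assistant", ["phi"]))
lending = map_frameworks(UseCase("loan underwriting", ["credit_decision", "biometric"]))
```

Here `unmapped_data_types(lending)` returns `["biometric"]`, which is the kind of gap worth catching before deployment rather than during an audit.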

Policy Encoding and Governance by Design

Translate regulatory requirements into enforceable machine-readable policies, not just compliance documents. Governance must be embedded into system architecture, not layered on after deployment.

Trussed AI's control plane enables organizations to define policies once and enforce them continuously across models, agents, and tools, turning static policies into real-time control.
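To make "machine-readable policy" concrete, here is a minimal sketch in which each policy is a small object with an ID, a description, and a check that can be evaluated against a request. It is not Trussed AI's policy format or any standard schema; the policy IDs, the request fields (`prompt`, `model`), and the approved model names are all hypothetical.

```python
import re
from dataclasses import dataclass
from typing import Callable

SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
APPROVED_MODELS = {"model-a", "model-b"}  # hypothetical model inventory


@dataclass(frozen=True)
class Policy:
    policy_id: str
    description: str
    violates: Callable[[dict], bool]  # True when the request breaks the rule


POLICIES = [
    Policy(
        "no-ssn-in-prompt",
        "Prompts must not contain US Social Security numbers",
        lambda req: bool(SSN_PATTERN.search(req.get("prompt", ""))),
    ),
    Policy(
        "approved-model-only",
        "Only inventoried models may be called",
        lambda req: req.get("model") not in APPROVED_MODELS,
    ),
]


def evaluate(request: dict) -> list:
    """Return the IDs of every violated policy; an empty list means allowed."""
    return [p.policy_id for p in POLICIES if p.violates(request)]
```

Because the rules live in data rather than in each application, the same list can be evaluated wherever requests pass through, which is the "define once, enforce everywhere" idea in miniature.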

Continuous Monitoring and Drift Detection Post-Deployment

Production AI requires ongoing monitoring for model drift, output anomalies, and policy violations. A single post-deployment review is insufficient. Compliance requires continuous, automated oversight.

In Anti-Money Laundering systems, concept drift and data drift lead to higher false-positive rates and missed suspicious activity, directly increasing compliance exposure.
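A common way to quantify data drift on a single feature is the Population Stability Index (PSI), which compares how a production sample is distributed across bins derived from a baseline sample. The sketch below is a plain-Python version under simple assumptions (equal-width bins, a tiny floor for empty bins); the 0.1 and 0.25 thresholds often quoted for PSI are rules of thumb, not regulatory requirements.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a production sample of one feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins
    if width == 0:
        width = 1.0  # degenerate baseline: avoid division by zero

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # Floor at a tiny value so empty bins do not produce log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]          # scores spread over 0.00 to 0.99
shifted = [0.5 + i / 200 for i in range(100)]     # scores bunched in the upper half

stable_psi = population_stability_index(baseline, baseline)
drift_psi = population_stability_index(baseline, shifted)
ALERT_THRESHOLD = 0.25  # rule-of-thumb cutoff (assumption)
needs_review = drift_psi > ALERT_THRESHOLD
```

Running a check like this on a schedule, and logging the result, turns "ongoing monitoring" into something an auditor can inspect.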

Human Review for High-Stakes Decisions

Monitoring catches drift and anomalies, but some decisions still require a human call. In regulated industries, AI should support human judgment on decisions that carry significant regulatory or ethical weight, not substitute for it. Define which use cases require mandatory human review, and build escalation paths and override mechanisms into the architecture before deployment.
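The escalation and override logic can be sketched as a small routing function plus an override record that refuses to exist without a documented reason. The use-case names, the confidence floor, and the field names below are hypothetical; the point is only that review rules and override controls are code paths, not conventions.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO = "auto"
    HUMAN_REVIEW = "human_review"


# Hypothetical configuration, set by compliance owners rather than developers.
MANDATORY_REVIEW_USE_CASES = {"credit_denial", "claim_denial"}
CONFIDENCE_FLOOR = 0.90


@dataclass
class Decision:
    use_case: str
    model_output: str
    confidence: float


@dataclass
class Override:
    reviewer: str
    original_output: str
    final_output: str
    reason: str


def route(decision: Decision) -> Route:
    """Send mandatory-review use cases and low-confidence outputs to a human."""
    if decision.use_case in MANDATORY_REVIEW_USE_CASES:
        return Route.HUMAN_REVIEW
    if decision.confidence < CONFIDENCE_FLOOR:
        return Route.HUMAN_REVIEW
    return Route.AUTO


def apply_override(decision: Decision, reviewer: str,
                   final_output: str, reason: str) -> Override:
    """Record a human override; an empty reason is rejected."""
    if not reason.strip():
        raise ValueError("An override must include a documented reason")
    return Override(reviewer, decision.model_output, final_output, reason)
```

Keeping the original model output alongside the reviewer's final call means every override leaves a record of what changed, who changed it, and why.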

Cross-Functional Ownership

Successful AI operationalization requires clear accountability across data science, IT, legal, compliance, and operations, not just the AI team. Define governance ownership before deployment, not after an incident.

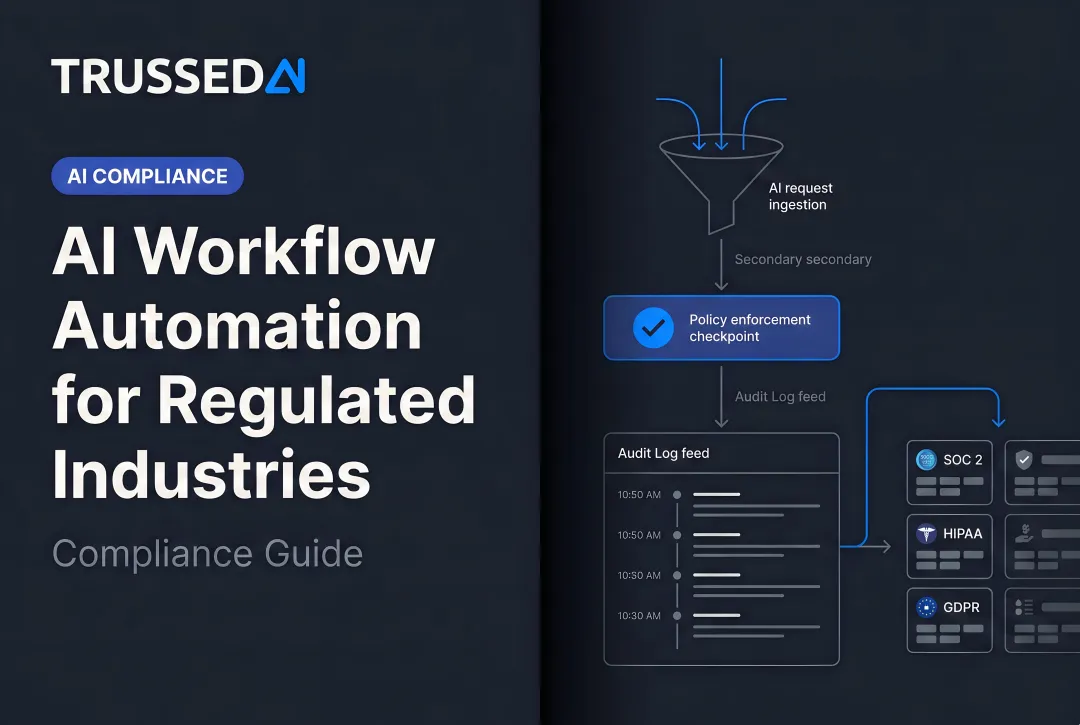

Runtime Governance: The Infrastructure Layer That Enforces Compliance in Production

Runtime governance enforces compliance controls as AI systems operate, intercepting requests, enforcing data handling rules, logging decisions, and flagging violations in real time rather than after the fact. This is the critical infrastructure gap that separates organizations with compliance documentation from organizations with actual compliance control.

What Production-Ready Runtime Governance Must Do:

- Enforce policies across AI apps, agents, and developer tools without requiring code changes in each application

- Route traffic intelligently to maintain SLAs

- Generate audit evidence automatically as a byproduct of every governed interaction

- Monitor compliance violations continuously rather than through periodic reviews

Trussed AI's control plane does this at the infrastructure layer, enforcing governance at runtime through drop-in proxy integration and automatic audit trail generation, so compliance evidence accumulates without manual effort.
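The intercept, enforce, and log pattern can be shown with a small in-process wrapper around a model call. This is a simplified stand-in for illustration, not a description of how Trussed AI's proxy works: a real deployment would sit at the network layer, and the email pattern, blocked terms, and log fields here are assumptions.

```python
import re
from datetime import datetime, timezone

EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
BLOCKED_MESSAGE = "[request blocked by policy]"


def governed(model_call, audit_log, blocked_terms=()):
    """Wrap a model call so every request is checked, redacted, and logged."""

    def wrapper(prompt: str) -> str:
        record = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "action": "allowed",
            "redactions": 0,
        }
        # Block before the model ever sees the request.
        if any(term in prompt.lower() for term in blocked_terms):
            record["action"] = "blocked"
            audit_log.append(record)
            return BLOCKED_MESSAGE
        # Redact email addresses, then forward the cleaned prompt.
        redacted, count = EMAIL_PATTERN.subn("[REDACTED_EMAIL]", prompt)
        record["redactions"] = count
        response = model_call(redacted)
        audit_log.append(record)
        return response

    return wrapper


def fake_model(prompt: str) -> str:
    # Stand-in for a real model client (assumption for the example).
    return "echo: " + prompt


log = []
ask = governed(fake_model, log, blocked_terms=("ssn",))
first = ask("Contact jane.doe@example.com about the claim")
second = ask("What is the applicant's SSN?")
```

The calling code only ever sees `ask`, so the application itself does not change when a policy is added or tightened, and the log fills in on every call whether or not anyone remembers to write to it.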

The Operational Business Case:

Manual governance at scale is not viable. California's regulatory impact assessment for automated decision-making technology estimated first-year compliance costs for covered businesses between $447 million and $1.78 billion, requiring hundreds of hours per organization.

Automating governance through infrastructure rather than process reduces both compliance violations and the manual workload required to demonstrate compliance. Trussed AI's platform benchmarks reflect this: organizations using the control plane have reported a 50% reduction in manual governance workload and a 50% improvement in regulatory compliance posture.

Real-World Enforcement:

In July 2025, the Massachusetts Attorney General announced a $2.5 million settlement with student loan lender Earnest Operations LLC. The enforcement action alleged the company used algorithmic models that produced disparate impacts on protected groups, failed to test models for fair lending risks, allowed underwriters to override models without controls, and provided opaque adverse action notices.

This case makes clear that regulators will apply existing consumer protection law to AI systems, and that absence of controls, not just intent, determines liability. Organizations without runtime audit trails and override logging have no evidence to demonstrate compliance when enforcement comes.

Frequently Asked Questions

What is AI operationalization?

AI operationalization is the process of moving AI systems from pilot or development into reliable, governed production environments. It covers deployment plus the ongoing monitoring, policy enforcement, and audit infrastructure needed to keep AI systems compliant and performant at scale.

How is AI used in regulated industries?

Key use cases span multiple sectors, each with its own compliance obligations:

- Healthcare: clinical decision support, prior authorization

- Financial services: credit decisioning, fraud detection

- Insurance: underwriting and claims automation

- Higher education: advising and research assistance

What are the approaches to AI regulation in the U.S.?

U.S. AI regulation is sector-specific, not unified. HIPAA and FDA guidance govern healthcare; SR 11-7 and fair lending rules govern financial services; the NIST AI RMF provides a voluntary cross-sector reference. Enterprises operating across sectors must navigate multiple overlapping requirements simultaneously.

What is the difference between AI governance and AI operationalization?

AI governance refers to the policies, frameworks, and documentation an organization establishes to guide AI use. AI operationalization is the execution layer that puts AI into production with those policies actively enforced at runtime. Many organizations have governance frameworks but lack the infrastructure to operationalize them consistently.

What compliance frameworks apply to AI in U.S. regulated industries?

Key frameworks by sector: HIPAA and FDA guidance (healthcare), SR 11-7 and SOX (financial services), NAIC model bulletins (insurance), FERPA (education), and the NIST AI RMF as a cross-sector reference. Requirements are evolving and vary by use case and deployment context.

How do enterprises maintain audit readiness for AI systems in production?

Audit readiness requires continuous, automated logging of model decisions, data lineage, policy enforcement events, and system changes, not manual, retrospective documentation. Governance infrastructure that generates audit evidence as a byproduct of operations is the most scalable approach for regulated enterprises.