Introduction

Healthcare organizations are deploying AI at unprecedented speed - 71% of U.S. hospitals now use predictive AI integrated into their electronic health records, up from 66% in 2023. These systems influence diagnosis, clinical decision support, administrative workflows, and patient-facing interactions. Yet only 29% of hospitals have implemented and enforced policies covering AI model inventory, lineage, and sign-offs.

That governance gap carries real consequences. Regulators are already moving: the DOJ's $1.43 million penalty against Troy Health for AI-driven Medicare fraud and the Texas Attorney General's settlement with Pieces Technologies over deceptive hallucination metrics both signal that enforcement is no longer hypothetical. Shadow AI compounds the risk - 71% of healthcare workers use personal AI accounts for work, and 81% of data policy violations involve Protected Health Information (PHI).

This article covers the structural elements of a functioning AI governance program in healthcare: what each element does, and what breaks when one is missing.

TL;DR

- Healthcare AI governance combines people, policies, processes, and technology to keep AI deployments safe and HIPAA-compliant

- Core elements include governance committees, AI use policies, PHI protection controls, lifecycle management, and ethical oversight

- Without a formal program, organizations expose themselves to HIPAA violations, algorithmic bias, and preventable patient safety failures

- Policies only work when enforced at runtime - documentation alone doesn't prevent violations

- Governance programs need scheduled reviews tied to regulatory updates and new AI deployments - not a one-time setup

What Is an AI Governance Program in Healthcare?

An AI governance program is a formalized, organization-wide system of rules, processes, roles, and technical controls that governs how AI is selected, approved, deployed, monitored, and retired within a healthcare institution. This covers both internally developed and third-party AI tools.

How It Differs from General IT Governance

AI governance addresses risks unique to algorithmic systems that general data governance and IT security programs were not designed to handle:

- Algorithmic bias can produce unequal care delivery across demographic groups

- Model drift occurs as clinical practice and patient populations change, eroding AI performance over time

- Lack of explainability - clinicians can't trace or validate AI recommendations they can't interpret

- Autonomous decision-making that directly affects clinical outcomes and patient safety

Governance as an Operational Control System

Effective AI governance integrates with clinical workflows, procurement processes, vendor relationships, and existing governance bodies. It enforces policies at the point of execution: not as a checkbox exercise, but as a live control mechanism embedded in production AI systems.

Static policy documents don't stop a biased model from influencing a care decision. Operational governance does.

Why Healthcare AI Governance Cannot Be Left to Chance

Healthcare organizations face specific, quantifiable risks without formal governance structures in place.

Common Governance Failures

Without centralized oversight, organizations typically experience:

- Inconsistent AI adoption practices across departments

- Ungoverned use of third-party AI tools accessing PHI without proper evaluation or Business Associate Agreements

- Regulatory violations under HIPAA and emerging AI-specific laws

- Patient safety risks from unchecked algorithmic bias or model degradation

Documented Harms

These aren't hypothetical risks; published research shows exactly how they materialize.

A 2019 study published in Science by Obermeyer et al. found that a widely used commercial algorithm exhibited significant racial bias by using healthcare costs as a proxy for health needs, falsely concluding that Black patients were healthier than equally sick White patients.

A 2021 study on chest radiograph AI found that algorithms consistently underdiagnosed underserved patient populations, with underdiagnosis rates highest for intersectional subgroups like Hispanic female patients.

The Regulatory Burden

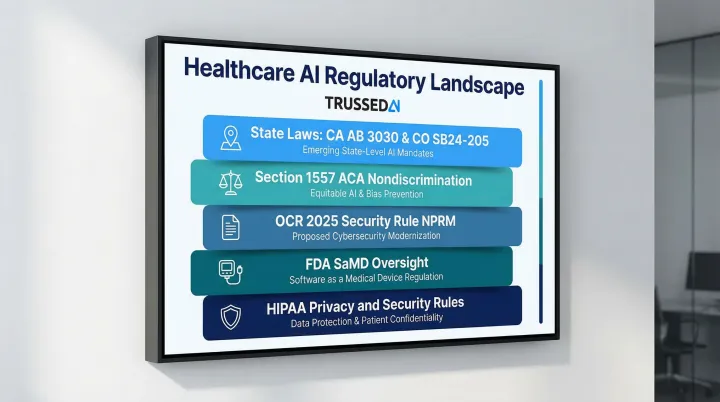

Healthcare organizations operate within a layered compliance environment:

- HIPAA Privacy and Security Rules requiring protection of electronic PHI in AI systems

- FDA oversight of AI-enabled medical devices through Software as a Medical Device (SaMD) frameworks

- OCR's 2025 Security Rule NPRM, which would explicitly require AI to be included in HIPAA risk analyses

- Section 1557 of the Affordable Care Act requiring organizations to identify and mitigate discrimination risks when using patient care decision support tools

- State-level AI regulations including California's AB 3030 (requiring disclaimers for AI-generated patient communications) and Colorado's SB24-205 (requiring impact assessments for high-risk AI systems)

Each of these frameworks requires documented processes, and gaps in governance leave organizations exposed across all of them simultaneously.

Element 1: Governance Structure and Accountability

The Need for a Dedicated AI Governance Committee

Healthcare organizations require a formal AI governance committee with clearly defined authority, scope, and decision-making power over AI adoption. In 2024, 66% of hospitals reported having a specific committee or task force for predictive AI, yet 74% indicated that multiple entities were accountable for evaluating AI, with a quarter citing four separate accountable entities. That fragmentation is where governance gaps form.

Committee Composition

An effective governance committee must include:

- Clinical representatives (physicians, nurses, CMIO)

- IT and informatics leaders who understand technical architecture

- Legal and compliance officers who interpret regulatory requirements

- Ethics representatives who evaluate fairness and patient impact

- Data privacy specialists who ensure PHI protection

- Operations leaders who understand workflow integration

- Patient representatives who bring lived experience perspectives

The AMA recommends a minimum viable committee of a clinical champion, data scientist or statistician, and administrative leader for quality. The Joint Commission and Coalition for Health AI (CHAI) add that committees should reflect the populations they impact.

Governance vs. Operational Responsibilities

The governance committee's responsibilities differ from individual department responsibilities:

| Governance Committee | Department/Product Teams |
|---|---|
| Overarching oversight: safety, equity, regulatory compliance, policy alignment | Feasibility, workflow integration, budgeting, product ownership |
| Risk classification and approval authority | Implementation and day-to-day operation |
| Monitoring and audit review | Performance tracking and user support |

When these roles blur, neither side owns accountability clearly - and that ambiguity is where compliance failures take root.

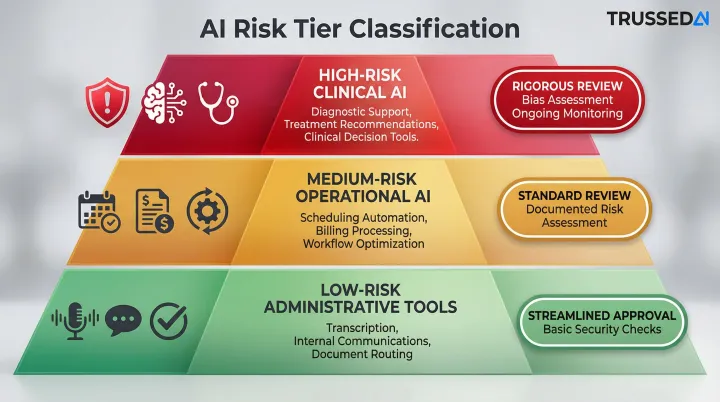

Tiered Risk Oversight Model

Not all AI tools require the same level of scrutiny. Governance structures must define risk tiers with corresponding approval requirements:

- High-risk clinical AI (diagnostic support, treatment recommendations): Rigorous review including clinical evidence evaluation, bias assessment, and ongoing monitoring

- Medium-risk operational AI (scheduling optimization, billing automation): Standard review with documented risk assessment

- Low-risk administrative tools (meeting transcription, internal communications): Streamlined approval with basic security checks
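The tier-to-review mapping above can be written down as data, so that an approval workflow can check which reviews are still outstanding. The following is a minimal sketch, not a prescribed implementation: the step names, the `classify` inputs, and the rule that clinical influence always outranks operational use are illustrative assumptions.

```python
from enum import Enum
from typing import List, Set


class RiskTier(Enum):
    HIGH = "high"      # clinical AI: diagnostic support, treatment recommendations
    MEDIUM = "medium"  # operational AI: scheduling optimization, billing automation
    LOW = "low"        # administrative tools: transcription, internal communications


# Review steps per tier, following the list above. Step names are hypothetical.
REQUIRED_REVIEWS = {
    RiskTier.HIGH: ["clinical_evidence_evaluation", "bias_assessment", "ongoing_monitoring"],
    RiskTier.MEDIUM: ["documented_risk_assessment"],
    RiskTier.LOW: ["basic_security_check"],
}


def classify(influences_clinical_decisions: bool, affects_operations: bool) -> RiskTier:
    """Assign a tier. Assumption: clinical influence always outranks operational use."""
    if influences_clinical_decisions:
        return RiskTier.HIGH
    if affects_operations:
        return RiskTier.MEDIUM
    return RiskTier.LOW


def outstanding_reviews(tier: RiskTier, completed: Set[str]) -> List[str]:
    """Return the reviews still required before the tool can be approved."""
    return [step for step in REQUIRED_REVIEWS[tier] if step not in completed]
```

For example, `outstanding_reviews(RiskTier.HIGH, {"bias_assessment"})` returns the two remaining high-risk steps, which gives the committee a concrete approval gate rather than a judgment call per tool.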

Risk tiering only works when the structure supporting it is formalized. At minimum, governance programs require:

- Executive sponsorship with clear authority

- Documented roles and responsibilities

- Formal committee charter

- Defined processes for membership review

- Escalation paths when monitoring flags concerns

Without these elements, the committee becomes advisory-only with no accountability mechanism.

Element 2: AI Use Policies, Risk Classification, and Regulatory Compliance

The Foundational AI Use Policy

The AI use policy defines:

- Scope of AI covered, including generative AI and machine learning

- Which tools are authorized for use within the organization

- Permitted and prohibited uses, including explicit prohibitions on entering PHI into publicly available AI tools

- Approval requirements before deploying any new AI tool

Stanford Medicine's policy prohibits the use of patient-identifying information or PHI in public AI tools. Johns Hopkins University guidelines explicitly prohibit entering nonpublic proprietary data, including clinical data, into third-party AI tools not approved by the institution.

Risk Classification Process

Risk classification evaluates and scores AI tools before deployment, and those scores determine which tools proceed to formal intake. Organizations should assess:

- Direct patient contact or clinical decision influence

- Data types accessed (PHI, sensitive health information)

- Regulatory status (FDA clearance, CE marking)

- Potential for bias or harm across patient subgroups

- Explainability and transparency of outputs

The International Medical Device Regulators Forum (IMDRF) categorizes Software as a Medical Device into four risk categories (I to IV) based on the state of the healthcare situation (Critical, Serious, Non-serious) and the significance of the information provided (Treat/Diagnose, Drive clinical management, Inform clinical management).
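The IMDRF categorization is a two-factor lookup, which makes it easy to encode in an intake form. The sketch below reproduces the category matrix from the IMDRF SaMD risk categorization framework as commonly published. The key strings and function name are assumptions for illustration, and the table should be checked against the current IMDRF document before it is relied on.

```python
# (state of healthcare situation, significance of information) -> IMDRF category
IMDRF_CATEGORY = {
    ("critical", "treat_or_diagnose"): "IV",
    ("critical", "drive_clinical_management"): "III",
    ("critical", "inform_clinical_management"): "II",
    ("serious", "treat_or_diagnose"): "III",
    ("serious", "drive_clinical_management"): "II",
    ("serious", "inform_clinical_management"): "I",
    ("non_serious", "treat_or_diagnose"): "II",
    ("non_serious", "drive_clinical_management"): "I",
    ("non_serious", "inform_clinical_management"): "I",
}


def imdrf_category(situation: str, significance: str) -> str:
    """Look up the SaMD category; raise if either factor is not a known value."""
    key = (situation.lower(), significance.lower())
    if key not in IMDRF_CATEGORY:
        raise ValueError(f"unknown situation/significance pair: {key}")
    return IMDRF_CATEGORY[key]
```

A tool that drives clinical management in a serious situation would come back as category II, which an organization could then map onto its own internal risk tiers.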

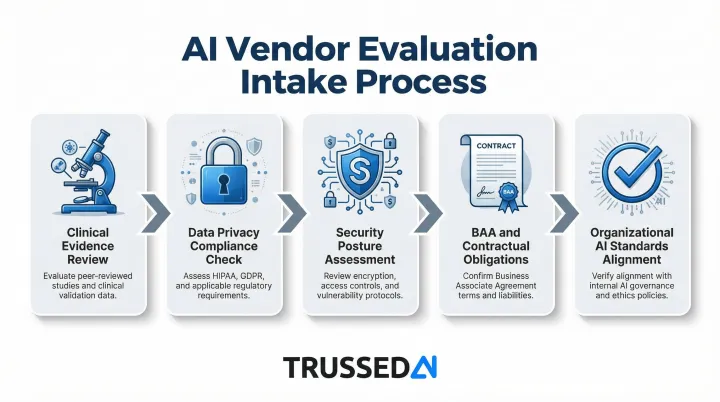

Formal Intake and Vendor Evaluation

Tools that clear risk classification then enter structured vendor evaluation covering:

- Clinical evidence demonstrating safety and effectiveness

- Data privacy compliance including HIPAA requirements

- Security posture and vulnerability management

- Contractual obligations including Business Associate Agreements for PHI access

- Alignment with organizational AI standards and risk tolerance

Regulatory Alignment

This intake process doesn't exist in isolation: AI use policies must map to the broader regulatory landscape:

- HIPAA Privacy and Security Rules

- Relevant state AI laws (California AB 3030, Colorado SB24-205)

- FDA guidance on AI-enabled medical devices

- NIST AI Risk Management Framework

- OCR's Section 1557 nondiscrimination requirements

Organizations should document this mapping to demonstrate compliance readiness during audits or regulatory inquiries.

Generative AI-Specific Policies

Generative AI tools introduce additional risks beyond standard clinical software - including data exposure, hallucination, and non-deterministic outputs. Policies must explicitly address:

- Prohibition of PHI entry into public LLM endpoints. OpenAI does not offer a Business Associate Agreement for ChatGPT's Free, Plus, Team, or Enterprise tiers, making these tiers non-compliant for clinical use.

- Requirements for human review of AI-generated clinical content

- Disclosure obligations when AI generates patient communications

- Validation processes for AI-generated clinical documentation

Element 3: Data Privacy, PHI Protection, and Security Controls

HIPAA Requirements for AI Systems

AI systems handling PHI are subject to HIPAA's Privacy and Security Rules. OCR's 2025 Security Rule NPRM explicitly states that electronic PHI in AI training data, prediction models, and algorithm outputs is protected by HIPAA. Regulated entities must include AI tools in their formal risk analyses and risk management activities, not treat them as a separate category.

Data Minimization and De-Identification

AI governance must include specific controls for how PHI is:

- Ingested under the minimum necessary standard - limit what enters the model

- Processed during training, fine-tuning, or inference with documented access controls

- Stored with encryption at rest and role-based access restrictions

- Shared with vendors only under executed Business Associate Agreements

- De-identified using HIPAA Safe Harbor or Expert Determination methods before research use

Organizations should implement technical safeguards to prevent unintended PHI exposure in model training or outputs.
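One example of such a safeguard is a redaction step that runs before free text reaches a model. The sketch below is deliberately narrow: it masks a handful of easily patterned identifiers with regular expressions. The patterns, labels, and the `MRN` format are assumptions, and this alone does not satisfy HIPAA Safe Harbor, which covers 18 identifier categories including names and addresses that regexes cannot reliably catch.

```python
import re
from typing import Dict, Tuple

# Illustrative patterns only; real deployments need a far broader identifier set.
PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-. ]\d{3}[-. ]\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "MRN": re.compile(r"\bMRN[:# ]*\d{6,10}\b", re.IGNORECASE),  # hypothetical MRN format
}


def redact(text: str) -> Tuple[str, Dict[str, int]]:
    """Replace matched identifiers with a label and count what was masked."""
    counts: Dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts


note = "Pt MRN: 00123456, SSN 123-45-6789, call 555-123-4567 or jane.doe@example.org."
clean, counts = redact(note)
print(clean)
```

Returning the counts alongside the cleaned text lets the same step feed an audit trail: a nonzero count is evidence that someone attempted to send identifiers to a model.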

AI-Specific Security Controls

AI systems face attack vectors that require dedicated controls beyond standard cybersecurity frameworks:

- Prompt injection - adversarial inputs that redirect or manipulate model behavior (demonstrated in a 2025 JAMA Network Open study)

- Model poisoning through corrupted training datasets (documented in a 2025 Nature Medicine study)

- Training data leakage triggered by crafted adversarial inputs

- Unauthorized access to model outputs that include or infer PHI

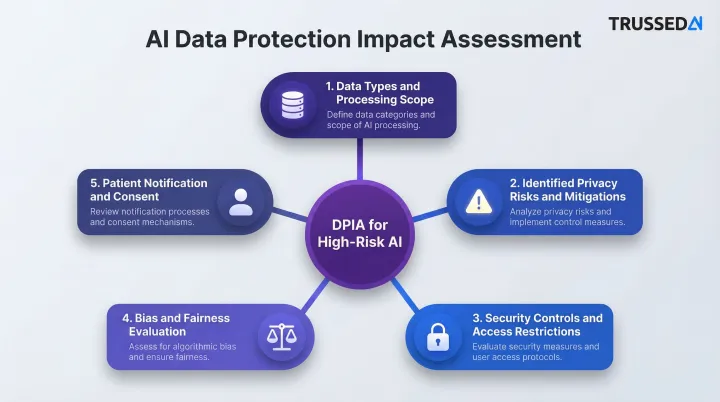

Data Protection Impact Assessments

High-risk AI deployments require Data Protection Impact Assessments (DPIAs) or equivalent AI-specific risk assessments. These assessments should cover:

- Data types accessed and the scope of processing activities

- Privacy risks identified, with documented mitigation measures

- Security controls and access restrictions in place

- Bias and fairness evaluation across patient subpopulations

- Patient notification and consent considerations

The NIST AI Risk Management Framework provides a structured approach (Govern, Map, Measure, Manage) to evaluate AI risks throughout the system lifecycle.

Business Associate Agreements for AI Vendors

Risk assessments and DPIAs only go so far - vendor contracts must carry equal weight. HHS OCR cloud computing guidance clarifies that any Cloud Service Provider creating, receiving, maintaining, or transmitting ePHI on behalf of a covered entity qualifies as a business associate and requires a BAA. That agreement must contractually require the vendor to safeguard ePHI, comply with the Security Rule, and, critically for AI, address how model outputs, training pipelines, and inference logs containing patient data are handled.

Element 4: AI Lifecycle Management and Continuous Monitoring

Centralized AI Inventory

Organizations need a centralized AI inventory or registry that catalogs every AI tool deployed across the organization, including vendor-provided tools embedded in EHR systems. In 2024, 80% of hospitals used predictive AI sourced from their EHR developer, compared to 52% using third-party developed AI.

The inventory should document for each AI tool:

- Purpose and use case

- Risk tier classification

- Data accessed (PHI, clinical data types)

- Approval date and approving authority

- Oversight owner and responsible department

- Performance benchmarks and monitoring requirements
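As a rough illustration, the fields above map onto a simple record type. This is a sketch under assumptions: the class name, field names, and the `gaps` completeness check are hypothetical, and a real registry would live in a database or governance platform rather than in code.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Dict, List, Optional


@dataclass
class AIInventoryRecord:
    name: str
    purpose: str                          # purpose and use case
    risk_tier: str                        # e.g. "high", "medium", "low"
    data_accessed: List[str]              # e.g. ["PHI", "imaging"]
    approval_date: Optional[date]
    approving_authority: Optional[str]
    oversight_owner: str
    responsible_department: str
    performance_benchmarks: Dict[str, float] = field(default_factory=dict)

    def gaps(self) -> List[str]:
        """List inventory fields that are missing and should block sign-off."""
        missing = []
        if self.approval_date is None or not self.approving_authority:
            missing.append("approval")
        if not self.oversight_owner:
            missing.append("oversight_owner")
        if not self.performance_benchmarks:
            missing.append("performance_benchmarks")
        return missing


# Hypothetical entry for an EHR-embedded tool that was never formally approved.
record = AIInventoryRecord(
    name="sepsis-risk-score",
    purpose="Early warning for inpatient sepsis",
    risk_tier="high",
    data_accessed=["PHI", "vitals", "labs"],
    approval_date=None,
    approving_authority=None,
    oversight_owner="CMIO office",
    responsible_department="Critical Care",
)
print(record.gaps())
```

Running a check like `gaps()` across the whole inventory is one way to surface vendor-embedded tools that entered production without approval or benchmarks on file.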

Continuous Monitoring Requirements

AI models must be tracked post-deployment for:

- Model drift: performance degradation as clinical practice and patient populations shift over time

- Disparate impact across patient subgroups (bias emergence) that wasn't present at deployment

- Scope creep or unapproved applications that push AI beyond its approved use case

A 2025 study in JAMIA observed "fairness drift" in surgical models, noting that algorithmic fairness can change over time due to temporal changes in clinical practice and patient populations. The FDA warns that changes to AI systems, such as data drift, can lead to performance degradation, bias, or reduced reliability, directly impacting patient safety.
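A simple way to operationalize this is to compare a recent performance window against the benchmark recorded at approval, both overall and per subgroup. The sketch below assumes a single higher-is-better metric (for example AUROC or sensitivity) and a fixed tolerance of 0.05. The metric, the subgroup names, and the threshold are all illustrative assumptions, not recommended values.

```python
from typing import Dict


def drift_alerts(
    baseline: Dict[str, float],
    current: Dict[str, float],
    tolerance: float = 0.05,
) -> Dict[str, str]:
    """Flag groups whose metric fell more than `tolerance` below baseline,
    and groups with no recent measurement at all."""
    alerts: Dict[str, str] = {}
    for group, base_score in baseline.items():
        score = current.get(group)
        if score is None:
            alerts[group] = "no recent data"
        elif base_score - score > tolerance:
            alerts[group] = f"dropped {base_score - score:.2f} below baseline"
    return alerts


# Hypothetical numbers: overall performance looks stable while one subgroup degrades.
baseline = {"overall": 0.88, "age_65_plus": 0.86, "female": 0.87}
current = {"overall": 0.86, "age_65_plus": 0.78}
alerts = drift_alerts(baseline, current)
print(alerts)
```

The example is chosen to show why subgroup tracking matters: the overall score stays within tolerance, so a monitor that only watched the aggregate would miss the degradation in one population.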

Operationalizing Monitoring at Scale

Manual review processes cannot scale across multiple AI tools, models, and workflows. Organizations need governance platforms that enforce policies in real time, acting as a control plane between AI systems and the organization's data and workflows.

One architecture that addresses this: a drop-in proxy sitting in the flow of AI interactions, enforcing governance policies at runtime without requiring application code changes. Trussed AI uses this model: every AI interaction is evaluated against configured policies before execution, with automated audit trail generation, compliance violation flagging, and real-time enforcement. Governance travels with the AI system regardless of which model, tool, or environment it operates in.
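To make the pattern concrete, here is a generic sketch of a policy gate that sits between a caller and a model. It is not Trussed AI's API or implementation. The request shape, the two example policies, the approved-tool name, and the in-memory audit log are all hypothetical.

```python
import re
from datetime import datetime, timezone
from typing import Callable, Dict, List, Optional

# A policy inspects a request and returns a violation message, or None if it passes.
Policy = Callable[[Dict[str, str]], Optional[str]]


def no_ssn_in_prompt(request: Dict[str, str]) -> Optional[str]:
    if re.search(r"\b\d{3}-\d{2}-\d{4}\b", request["prompt"]):
        return "prompt contains an SSN-like pattern"
    return None


def approved_tool_only(request: Dict[str, str]) -> Optional[str]:
    approved = {"discharge-summary-assistant"}  # hypothetical inventory entry
    if request["tool"] not in approved:
        return f"tool {request['tool']!r} is not in the approved inventory"
    return None


def governed_call(
    request: Dict[str, str],
    policies: List[Policy],
    model_fn: Callable[[str], str],
    audit_log: List[dict],
) -> Optional[str]:
    """Evaluate every policy, record the decision, and forward only clean requests."""
    violations = [msg for policy in policies if (msg := policy(request))]
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "tool": request["tool"],
        "user": request["user"],
        "allowed": not violations,
        "violations": violations,
    })
    if violations:
        return None  # blocked before the model ever sees the prompt
    return model_fn(request["prompt"])
```

The point of the design is that the audit entry is written whether or not the call is allowed, so the log doubles as evidence of enforcement rather than just a record of successful requests.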

Defined Escalation Paths

When monitoring flags a concern - performance degradation, bias emergence, or policy violation - organizations need documented escalation processes:

- Who is notified and within what timeframe

- What investigation steps are triggered

- What authority can suspend or modify AI tool operation

- How findings are documented and reported to the governance committee

Element 5: Ethical AI, Algorithmic Transparency, and Bias Mitigation

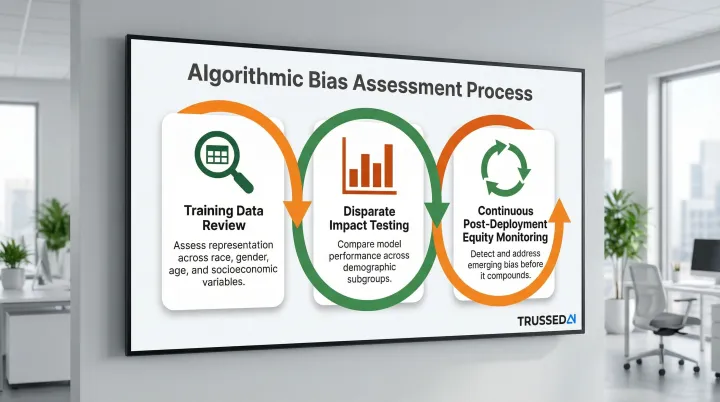

Algorithmic Bias Assessment

Healthcare organizations have an ethical obligation to assess and mitigate algorithmic bias, particularly bias that could result in unequal access to care, misdiagnosis, or underdiagnosis for specific demographic groups.

A bias assessment process should include:

- Review training data for representation across race, gender, age, and socioeconomic variables

- Test for disparate impact by comparing model performance across demographic subgroups

- Monitor equity continuously post-deployment to catch emerging bias before it compounds
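The disparate impact test in the second step can be sketched as a per-subgroup false negative rate, which corresponds to the underdiagnosis measure discussed earlier. This is a minimal example under assumptions: the record format, the choice of false negative rate as the fairness metric, and the 0.1 gap threshold are illustrative, and real assessments typically use several metrics with confidence intervals.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def false_negative_rates(records: Iterable[Tuple[str, int, int]]) -> Dict[str, float]:
    """records: (subgroup, y_true, y_pred), where 1 means the condition is present.
    Returns the share of true positives the model missed, per subgroup."""
    positives: Dict[str, int] = defaultdict(int)
    misses: Dict[str, int] = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:
            positives[group] += 1
            if y_pred == 0:
                misses[group] += 1
    return {group: misses[group] / positives[group] for group in positives}


def disparity_flags(rates: Dict[str, float], max_gap: float = 0.1) -> List[str]:
    """Flag subgroups whose miss rate exceeds the best subgroup's by more than max_gap."""
    best = min(rates.values())
    return sorted(group for group, rate in rates.items() if rate - best > max_gap)


# Hypothetical validation set: group B's true cases are missed far more often.
records = [
    ("A", 1, 1), ("A", 1, 1), ("A", 1, 1), ("A", 1, 0), ("A", 0, 0),
    ("B", 1, 0), ("B", 1, 0), ("B", 1, 0), ("B", 1, 1), ("B", 0, 0),
]
rates = false_negative_rates(records)
print(rates, disparity_flags(rates))
```

Running the same function on post-deployment data at a regular cadence covers the third step as well, since emerging bias shows up as a widening gap between subgroups.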

Explainability Requirements

Healthcare AI governance programs should require that AI tools used in clinical decision support provide interpretable outputs. Clinicians must be able to understand how the AI reached a recommendation; unexplainable outputs should be flagged as high-risk, requiring additional clinical oversight.

The Coalition for Health AI (CHAI) provides an Assurance Standards Guide that serves as a practical playbook for AI development and deployment in healthcare, with actionable guidance on ethics, quality assurance, and bias assessment.

Transparency Obligations

Governance programs should define:

- When and how to inform patients that AI is being used in their care

- Documentation standards for AI-assisted clinical decisions

- Consent requirements for AI-driven use of patient data

- Clinician rights to override AI recommendations without penalty

Several of these obligations are now codified in law. Two mandates with direct compliance implications:

- California AB 3030 requires health facilities using generative AI for patient communications to include a disclosure that the content was AI-generated, along with instructions for reaching a human provider.

- HHS OCR's Section 1557 final rule requires regulated organizations to make reasonable efforts to identify and mitigate discrimination risk stemming from patient care decision support tools.

Common Gaps and Mistakes to Avoid When Building Your Program

Most healthcare organizations don't fail at governance because they ignored it. They fail because they built it on paper instead of in production. Three gaps appear consistently across programs that struggle.

Treating Governance as Documentation Rather Than Operational Control

Organizations that create policies but have no mechanism to enforce them at runtime (no monitoring, no automated compliance checks, no audit trails) create the appearance of governance without the reality of it.

The numbers back this up: while 79% of hospitals using predictive AI conducted post-implementation monitoring, only 29% reported having implemented and enforced policies covering AI model inventory, lineage, and sign-offs.

Technical controls that enforce policies in real time need to sit inside production systems - not in separate documents that staff may or may not consult.

Incomplete Vendor Oversight

Many healthcare organizations assume third-party AI tools come pre-governed. That assumption creates real compliance exposure. Vendor tools accessing PHI or influencing clinical decisions must still be:

- Evaluated against the organization's risk classification criteria

- Covered under appropriate Business Associate Agreements

- Included in ongoing monitoring and audit processes

- Subject to the same governance standards as internally developed tools

OCR's settlement with MMG Fusion, a $10,000 payment plus a three-year corrective action plan after a breach exposing the PHI of 15 million individuals, shows that organizations face enforcement when they fail to conduct accurate and thorough risk analyses of the vendors and information systems handling their ePHI.

Absence of a Decommissioning Process

AI tools that underperform, develop bias, or fall out of alignment with organizational standards need a documented retirement process. Without one, outdated or unsafe tools stay in production by default - accumulating risk with each passing month.

A decommissioning process should define:

- Triggers for retirement (performance thresholds, bias detection, regulatory changes)

- Approval authority for decommissioning decisions

- Communication plans for affected users and departments

- Data retention and disposal requirements

- Documentation standards for audit trails

Conclusion

An effective AI governance program in healthcare is built on five interconnected elements: governance structure and accountability, AI use policies and risk classification, data privacy and PHI controls, lifecycle management and monitoring, and ethical AI and transparency. Weaknesses in any single element create systemic risk across the program.

Governance is an ongoing operational discipline - one that must evolve alongside the AI systems it oversees. As capabilities expand (from predictive models to autonomous agents) and regulatory requirements shift, programs built on static policies will fall behind. Organizations need real-time, enforceable controls that keep pace with production AI systems as they run.

The gap between AI adoption and governance maturity is closing under regulatory pressure. The DOJ's enforcement actions, OCR's proposed Security Rule update, and state-level AI laws make clear that healthcare organizations can no longer treat governance as optional. The question has shifted from whether to formalize AI governance to how quickly organizations can operationalize it - before exposure becomes a reportable incident. Platforms like Trussed AI exist precisely to close that gap, turning written policies into runtime controls that enforce themselves across every governed AI interaction.

Frequently Asked Questions

What should be included in an AI governance policy for healthcare?

At minimum, an AI governance policy should define permitted and prohibited AI uses, establish risk classification criteria, identify authorized tools, set data privacy requirements for PHI handling, and specify accountability and oversight responsibilities, including who approves new AI tools and who monitors ongoing performance.

How does an AI governance policy ensure HIPAA compliance in healthcare?

An AI governance policy supports HIPAA compliance by establishing controls for how AI systems access, process, and store PHI. Key controls include Business Associate Agreements with AI vendors, data minimization requirements, de-identification standards, and audit trail requirements that produce documentation for regulatory review.

What are the key principles of AI governance policy in healthcare?

Major frameworks - HIMSS, NIST, and AMA - converge on six core principles:

- Safety and patient protection

- Accountability and defined oversight

- Transparency and explainability

- Fairness and non-discrimination

- Privacy and data security

- Continuous monitoring

Each principle must be backed by concrete policies and controls to have any real effect.

How do the pillars of clinical governance relate to AI governance policy in healthcare?

Clinical governance (quality, safety, accountability, patient experience) and AI governance share the same foundational goals - both aim to ensure that clinical tools perform safely and equitably. AI governance extends clinical governance principles to address risks specific to algorithmic systems, such as model drift, bias, and lack of transparency.

Who should be on an AI governance committee in a healthcare organization?

An effective AI governance committee should include clinical representatives, IT and informatics leads, legal and compliance officers, ethics and patient advocacy representatives, data privacy specialists, and operational leaders. Define clear roles for each member, and build in a formal process for reviewing committee composition as your AI portfolio expands.