Introduction

Half of executives say translating AI principles into operational processes is their biggest barrier, even as 61% of organizations claim to be at a "strategic" or "embedded" stage of Responsible AI. AI now runs products, internal workflows, autonomous agents, and customer-facing systems. The governance structures meant to control that exposure haven't kept pace.

This guide covers:

- The definitions and distinctions between AI ethics, policy, and governance

- The five core ethical principles enterprises need to operationalize

- Major regulatory frameworks every organization should know

- A step-by-step approach to building an internal governance framework

- Why runtime enforcement, not just documented policy, is where governance succeeds or fails

TLDR

- AI ethics defines values; AI policy translates those values into rules; AI governance enforces them operationally

- Five ethical principles underpin responsible AI: fairness, transparency, accountability, privacy, and security

- EU AI Act, NIST AI RMF, and ISO/IEC 42001 are the three most consequential frameworks enterprises must understand

- Effective governance requires an AI inventory, assigned accountability, implemented controls, and continuous monitoring; policy documents alone aren't enough

- The runtime gap between written policy and production enforcement is the primary governance failure enterprises face, and agentic AI is making it worse by multiplying autonomous decision points

What Is AI Ethics, Policy and Governance: Why Each Distinction Matters

AI ethics, policy, and governance are often used interchangeably, but they represent distinct layers, each with a different job:

- AI ethics: The philosophical foundation defining what AI should and shouldn't do

- AI policy: The formalized rules an organization or government sets to operationalize those values

- AI governance: The ongoing system of structures, roles, controls, and processes that ensures policy is actually enforced across the AI lifecycle

Conflating these terms causes organizations to treat a policy document as a governance program. A policy sitting in a shared drive cannot prevent a biased model output, catch a prompt injection attack, or generate an audit trail. Governance requires operational infrastructure, not just written principles.

Why AI Governance Is a Board-Level Concern

AI and technology risks now top the list of executive concerns. Nearly 43% of respondents in The Conference Board's 2026 C-Suite Outlook Survey named AI and technology as an investment priority for 2026, outpacing any other category. A January 2026 survey of 513 financial-sector C-suite executives showed a sharp shift: executives who once focused on classic financial misconduct now rank AI-related conduct as the top risk.

Governance maturity hasn't kept pace. Deloitte's 2026 State of AI report found that while worker access to AI rose by 50% in 2025, only one in five companies has a mature model for governing autonomous AI agents. Organizations are deploying faster than they can control, and regulators are starting to notice.

What's at Stake Without Governance

Without governance, enterprises face:

- Bias in automated decisions: The EEOC settled its first AI employment discrimination lawsuit against iTutorGroup for $365,000 after the company's software automatically rejected female applicants over 55 and male applicants over 60

- Regulatory penalties: Massachusetts AG reached a $2.5 million settlement with Earnest Operations LLC for AI underwriting models that produced unlawful disparate impact based on race and immigration status

- Data breaches and privacy violations: The French DPA fined Clearview AI €20 million for unlawful processing of personal data; the Dutch DPA followed with a €30.5 million fine

- Reputational damage: 233 incidents of ethical AI misuse were reported to the AI Incident Database in 2024, a 56.4% increase from 2023

- Liability exposure: In May 2025, a federal court granted conditional class certification in Mobley v. Workday, alleging the company's AI screening system disproportionately rejected applicants based on race, age, and disability

For regulated industries like healthcare, insurance, and financial services, these risks carry direct business consequences: lost contracts, regulatory scrutiny, and operational disruption.

The Five Core Ethical Principles of Responsible AI

Fairness and Bias Mitigation

AI models trained on historical data can systematically reproduce and amplify discrimination, including in hiring, lending, healthcare triage, and law enforcement. A 2019 study published in Science found significant racial bias in a widely used commercial prediction algorithm: because it predicted health care costs rather than illness, Black patients were considerably sicker than White patients at the same risk score.

Bias can enter at multiple lifecycle stages:

- Data collection: Unrepresentative or historically biased datasets

- Feature selection: Choosing variables that correlate with protected characteristics

- Labeling: Human bias in training data annotation

- Model design: Algorithms optimized for accuracy without fairness constraints

- User training: Operators who over-rely on biased outputs

Governance must require diverse training data, algorithmic audits for disparate impact, and fairness-aware design. Organizations deploying AI in high-stakes domains must implement pre-deployment bias testing and continuous demographic outcome monitoring.
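One way to make "algorithmic audits for disparate impact" concrete is to compare favorable-outcome rates across groups. The sketch below is a minimal illustration, not a legal test: the function names, the sample data, and the 0.8 cutoff (borrowed from the four-fifths rule of thumb used in US employment contexts) are all assumptions chosen for the example.

```python
from collections import Counter

def selection_rates(records):
    """Return {group: favorable-outcome rate} from (group, favorable) pairs."""
    totals, favorable = Counter(), Counter()
    for group, outcome in records:
        totals[group] += 1
        if outcome:
            favorable[group] += 1
    return {g: favorable[g] / totals[g] for g in totals}

def disparate_impact_ratios(records, reference_group):
    """Ratio of each group's selection rate to the reference group's rate."""
    rates = selection_rates(records)
    ref_rate = rates[reference_group]
    if ref_rate == 0:
        raise ValueError("reference group has no favorable outcomes")
    return {g: rate / ref_rate for g, rate in rates.items()}

def flag_groups(records, reference_group, threshold=0.8):
    """Groups whose ratio falls below the (assumed) screening threshold."""
    ratios = disparate_impact_ratios(records, reference_group)
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)

# Hypothetical audit sample: group A approved 5 of 10, group B approved 3 of 10.
sample = [("A", True)] * 5 + [("A", False)] * 5 + [("B", True)] * 3 + [("B", False)] * 7
print(flag_groups(sample, reference_group="A"))
```

A flagged group is a prompt for investigation, not proof of discrimination. The threshold and the choice of reference group should be set with legal and compliance input.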

Transparency and Explainability

AI systems can reach correct conclusions through reasoning that no human can inspect. This is the "black box" problem. NIST IR 8367 distinguishes explainability (can the system articulate why it made a decision?) from interpretability (can a human understand the model's internal logic?).

High-stakes domains such as credit decisions, medical diagnoses, and parole recommendations require meaningful explainability, not just output disclosure. CFPB Circular 2022-03 explicitly states there is no "AI exemption" for Equal Credit Opportunity Act (ECOA) adverse action notices; creditors cannot rely on broad checklist reasons if they don't reflect the complex algorithm's actual logic.

Governance must:

- Map all AI model features to corresponding adverse action reasons

- Restrict use of "black-box" models in regulated decision-making unless outputs can be translated into specific, human-interpretable factors

- Document how model outputs connect to business decisions
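The feature-to-reason mapping in the list above can be sketched as a lookup that refuses to produce a notice when any model feature lacks a mapped reason. Everything here is hypothetical: the feature names, the reason wording, and the assumption that some upstream explainability method has already produced a signed contribution per feature.

```python
# Hypothetical mapping from model features to adverse action reasons.
REASON_CODES = {
    "debt_to_income": "Debt-to-income ratio too high",
    "recent_delinquencies": "Recent delinquency on an account",
    "credit_history_months": "Length of credit history too short",
}

def adverse_action_reasons(contributions, top_n=2):
    """Return reasons for the features that lowered the score the most.

    contributions: {feature: signed contribution}, negative values lower the score.
    Raises KeyError if any feature has no mapped reason, so gaps surface early.
    """
    unmapped = sorted(f for f in contributions if f not in REASON_CODES)
    if unmapped:
        raise KeyError(f"features without an adverse action reason: {unmapped}")
    # Sort ascending so the most negative contributions come first.
    negative = sorted((value, feature) for feature, value in contributions.items() if value < 0)
    return [REASON_CODES[feature] for _, feature in negative[:top_n]]

example = {"debt_to_income": -0.30, "recent_delinquencies": -0.10, "credit_history_months": 0.05}
print(adverse_action_reasons(example))
```

Failing loudly on an unmapped feature is a deliberate choice in this sketch: a new feature cannot reach production without someone deciding how it will be explained to an applicant.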

Accountability and Human Oversight

As AI moves from assistive to autonomous (agentic) systems, determining who is responsible for an AI-driven outcome becomes genuinely difficult. The EU AI Act (Article 14) mandates that high-risk AI systems be designed to allow effective oversight by natural persons, including the ability to "intervene in the operation of the high-risk AI system or interrupt the system through a 'stop' button or a similar procedure."

EU AI Act Biometric Systems Requirement: "No action or decision is taken by the deployer on the basis of the identification resulting from the system unless that identification has been separately verified and confirmed by at least two natural persons."

Effective governance programs must:

- Designate accountable humans at each stage of the AI lifecycle

- Specify when human review is mandatory before acting on AI outputs

- Establish escalation paths for disputed outputs or system failures

- Define clear lines of responsibility when AI agents operate across multiple tools and systems

Privacy and Data Stewardship

AI systems are data-hungry, and governance must address the full data lifecycle, including lawful collection, purpose limitation, retention periods, and secure disposal. Combining datasets can create new privacy exposures even when each individual dataset is compliant on its own.

GDPR Article 22 grants data subjects the right not to be subject to a decision based solely on automated processing that produces legal effects or similarly significant impacts. The European Data Protection Board's Opinion 28/2024 goes further, stating that "AI models trained on personal data cannot, in all cases, be considered anonymous."

HIPAA provides two methods for de-identifying protected health information: Expert Determination and Safe Harbor. The Safe Harbor method requires removal of 18 specific identifiers, including names, geographic subdivisions smaller than a state, and date elements directly tied to an individual (year excepted), before data can be used in AI workflows.

Practically, this means governance controls must enforce purpose limitations at the point of data access, not just at collection, and ensure disposal protocols are triggered automatically when retention periods expire.
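A minimal sketch of that access-time check follows. The `Dataset` fields, purpose labels, and return messages are assumptions for illustration; a real deployment would pull them from a data catalog and trigger disposal through its own workflow.

```python
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass(frozen=True)
class Dataset:
    name: str
    allowed_purposes: frozenset  # purposes declared at collection time
    collected_on: date
    retention_days: int

def can_access(dataset, purpose, today):
    """Check retention and purpose at the moment of access, not just at collection."""
    expires_on = dataset.collected_on + timedelta(days=dataset.retention_days)
    if today > expires_on:
        return False, "retention period expired; dataset should be queued for disposal"
    if purpose not in dataset.allowed_purposes:
        return False, f"purpose '{purpose}' was not declared for this dataset"
    return True, "ok"

# Hypothetical dataset collected for claims processing with one year of retention.
claims = Dataset("claims_2024", frozenset({"claims_processing"}), date(2024, 1, 1), 365)
print(can_access(claims, "model_training", date(2024, 6, 1)))
```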

Security and Adversarial Robustness

AI systems face unique threats beyond standard cybersecurity:

- Prompt injection: Adversaries craft inputs that cause large language models to ignore intended instructions and execute unintended actions. The 2025 EchoLeak attack (CVE-2025-32711, CVSS 9.3) demonstrated zero-click, remote data exfiltration in Microsoft 365 Copilot via a crafted email

- Data poisoning: Adversaries control a subset of training data by inserting or modifying training samples

- Adversarial examples: Inputs designed to cause a model to make an incorrect prediction

- Model inversion attacks: Attempts to reconstruct training data from model outputs

- Membership inference: Determining whether a data sample was part of the training set

Security controls must cover each layer of the AI stack, including data, framework, model, and API surface. Critically, incident response protocols need to address AI-specific failure modes, not just adapt existing cybersecurity playbooks that weren't designed for model behavior.

The Global AI Regulatory Landscape

AI regulation is fragmented across jurisdictions, inconsistent in approach, and moving faster than most enterprises' legal and compliance functions can track. In the United States alone, states have enacted over 480 AI-related bills, creating a patchwork compliance landscape. Organizations operating across jurisdictions must manage a compliance matrix, not a single standard.

The EU AI Act: The World's First Comprehensive AI Law

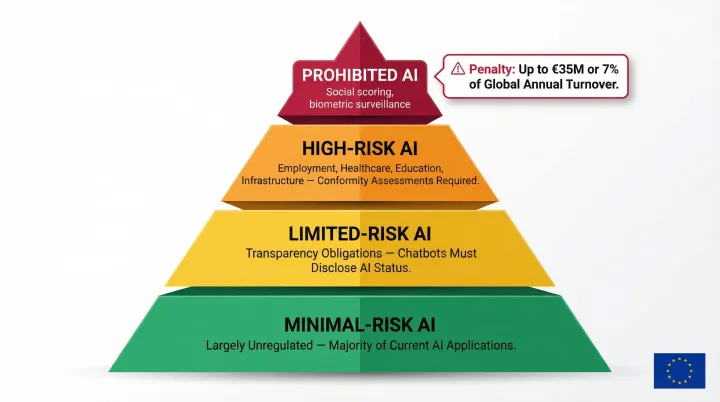

The EU AI Act entered into force on August 1, 2024, and will be fully applicable on August 2, 2026, with some exceptions (prohibited practices applied from February 2, 2025). The Act classifies AI into four risk tiers:

Risk Tier Structure:

- Prohibited AI: Social scoring, certain biometric surveillance

- High-risk AI: Employment, education, critical infrastructure, healthcare, subject to conformity assessments, technical documentation, human oversight, and incident reporting

- Limited-risk AI: Transparency disclosure obligations (e.g., chatbots must disclose they are AI)

- Minimal-risk AI: Largely unregulated

High-Risk Domains (Annex III):

- Biometrics

- Critical infrastructure

- Education and vocational training

- Employment, workers' management, and access to self-employment

- Access to and enjoyment of essential private services and essential public services and benefits

- Law enforcement

- Migration, asylum, and border control management

- Administration of justice and democratic processes

Extraterritorial Scope:

Article 2 states the Regulation applies to providers placing AI systems on the market in the Union, irrespective of whether they are established in the Union or a third country. Any organization serving EU customers must comply, regardless of where they are headquartered.

Penalties:

Non-compliance with prohibited practices can result in fines up to €35,000,000 or 7% of total worldwide annual turnover, whichever is higher.
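The "whichever is higher" rule is simple arithmetic, and a worked example shows where the percentage starts to dominate. The function name and turnover figures below are illustrative; the sketch covers only the prohibited-practices ceiling quoted above and ignores any other penalty tiers or special cases.

```python
FIXED_CAP_EUR = 35_000_000
TURNOVER_PERCENT = 7

def max_prohibited_practice_fine(worldwide_turnover_eur):
    """Upper bound of the fine: the higher of the fixed cap and 7% of turnover."""
    percent_based = worldwide_turnover_eur * TURNOVER_PERCENT // 100
    return max(FIXED_CAP_EUR, percent_based)

# The two amounts are equal at EUR 500 million turnover; above that, 7% is higher.
for turnover in (200_000_000, 500_000_000, 1_000_000_000):
    print(turnover, max_prohibited_practice_fine(turnover))
```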

The NIST AI Risk Management Framework and ISO/IEC 42001

NIST AI RMF is a voluntary US framework built around four core functions:

- GOVERN: Cultivates a culture of risk management and outlines processes that anticipate, identify, and manage risks

- MAP: Establishes the context to frame risks related to an AI system

- MEASURE: Employs tools and methodologies to analyze, assess, benchmark, and monitor AI risk and related impacts

- MANAGE: Entails allocating risk resources to mapped and measured risks on a regular basis

OMB Memorandum M-24-10 requires CFO Act agencies to implement minimum risk management practices for safety-impacting and rights-impacting AI by December 1, 2024, encouraging the use of the NIST AI RMF.

ISO/IEC 42001 is the first certifiable international management system standard for AI governance. Published in December 2023, ISO/IEC 42001 specifies requirements for establishing, implementing, maintaining, and continually improving an Artificial Intelligence Management System (AIMS).

Many enterprise procurement processes and regulated industries now require or strongly prefer ISO/IEC 42001 certification as evidence of governance maturity. Industry observers noted a sharp rise in ISO 42001 requirements appearing in enterprise RFPs across UK, EU, and US procurement cycles through 2024.

The United States: Executive Action, State Legislation, and the Deregulation Pivot

President Biden's Executive Order 14110 (October 2023) directed agencies to establish guidelines for AI safety, security, and testing, including AI red-teaming for generative AI. On January 20, 2025, President Trump's Executive Order 14148 revoked EO 14110 outright. Three days later, on January 23, 2025, he signed Executive Order 14179, "Removing Barriers to American Leadership in Artificial Intelligence," directing the review and potential rescission of actions taken under EO 14110.

The Trump administration's "America's AI Action Plan" emphasizes removing red tape and accelerating AI adoption in government and the military. This federal deregulatory shift has pushed governance responsibility further onto the private sector.

While federal mandates recede, states are filling the void:

- Texas HB 149 (effective January 2026) requires health care providers to disclose to patients when AI systems are used in diagnosis or treatment

- Colorado AI Act imposes strict obligations on AI system developers and deployers

- California is considering multiple AI-related bills

For enterprises in regulated industries, this means governance obligations are now largely self-defined, built from a combination of state mandates, international frameworks like the EU AI Act, and internal risk tolerance. Organizations that wait for federal clarity before acting are already behind.

How to Build an Enterprise AI Governance Framework

An effective enterprise AI governance framework is a layered system of principles, inventories, roles, controls, and monitoring, not a single policy document. It must cover the full AI lifecycle: procurement, development, deployment, operation, and decommissioning.

Step 1: Define Governing Principles and Risk Tolerance

Articulate the organization's core AI values and the outer limits of acceptable risk. Risk tolerance will differ by use case. A chatbot handling marketing content operates under different governance requirements than a model making credit decisions or medical triage recommendations.

Governance principles must be written in operational terms, not aspirational language. Instead of "We value fairness," write "All hiring and lending models must undergo quarterly bias audits measuring disparate impact across protected demographics, with results reviewed by the AI Ethics Committee."

Step 2: Build an AI Inventory and Classify Systems by Risk

Organizations must identify every AI system in use, including shadow AI, third-party tools, and developer-facing models, before they can govern them. Map each system against a risk classification using EU AI Act tiers or internal risk ratings.

Risk classification criteria:

- Domain (hiring, lending, healthcare, marketing)

- Decision authority (advisory vs. automated action)

- Data sensitivity (public, internal, PII, PHI)

- Regulatory scope (GDPR, HIPAA, ECOA)

Identify which systems require the most stringent oversight, human review requirements, and documentation obligations.
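The four criteria above can be turned into a first-pass internal rating. This is a sketch under stated assumptions: the weights, cutoffs, and domain lists are invented for illustration, and the result is an internal triage label, not a legal classification under the EU AI Act.

```python
from dataclasses import dataclass

# Assumed groupings for illustration; each organization should define its own.
HIGH_STAKES_DOMAINS = {"hiring", "lending", "healthcare"}
SENSITIVE_DATA = {"PII", "PHI"}

@dataclass(frozen=True)
class AISystem:
    name: str
    domain: str
    automated_action: bool   # True if the system acts without human sign-off
    data_sensitivity: str    # "public", "internal", "PII", or "PHI"
    regulations: tuple = ()  # e.g. ("GDPR", "ECOA")

def classify(system):
    """Score a system on the four criteria and map the score to a tier."""
    score = 0
    if system.domain in HIGH_STAKES_DOMAINS:
        score += 2
    if system.automated_action:
        score += 1
    if system.data_sensitivity in SENSITIVE_DATA:
        score += 1
    if system.regulations:
        score += 1
    if score >= 4:
        return "high"
    if score >= 2:
        return "medium"
    return "low"

inventory = [
    AISystem("credit-scoring-model", "lending", True, "PII", ("ECOA",)),
    AISystem("support-assistant", "customer_support", False, "PII", ("GDPR",)),
    AISystem("marketing-copy-bot", "marketing", False, "public"),
]
for system in inventory:
    print(system.name, classify(system))
```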

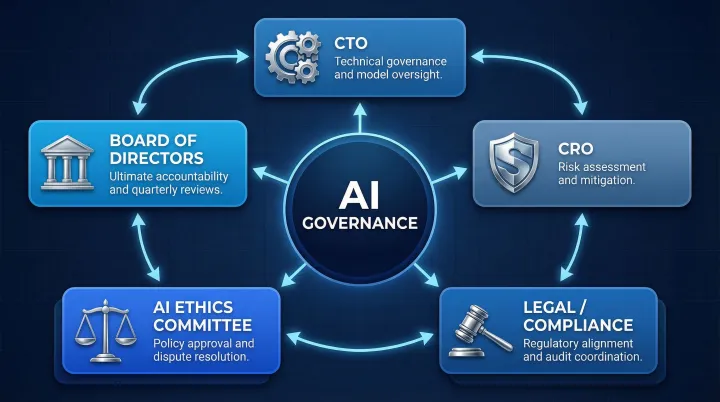

Step 3: Assign Accountability Across the AI Lifecycle

Define the cross-functional roles required:

- CTO: Technical governance and model oversight

- CRO: Risk assessment and mitigation

- Legal/Compliance: Regulatory alignment and audit coordination

- AI Ethics and Compliance Committee: Policy approval and dispute resolution

- Board-level oversight: Ultimate accountability and quarterly governance reviews

Accountability must be assigned at each lifecycle stage, not just at deployment, with clear escalation paths and designated responsible parties for each governed AI system.

Step 4: Implement Controls, Audit Trails, and Compliance Evidence

Operational controls required:

- Regular bias audits and fairness assessments

- Explainability documentation for high-risk applications

- Human-in-the-loop requirements for critical decisions

- Data governance protocols (collection, retention, disposal)

- Incident response procedures specific to AI failures

- Automatically generated audit trails

Governance evidence should be a byproduct of every governed interaction, not something reconstructed after the fact for auditors. Every policy evaluation should be logged with results, model version, timestamp, and data lineage.
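A log entry carrying those four fields might look like the sketch below. The field names and the hash chaining are assumptions: chaining each record to the previous one is one optional way to make after-the-fact edits detectable, not a requirement stated by any framework cited here.

```python
import hashlib
import json
from datetime import datetime, timezone

def build_audit_record(policy_id, result, model_version, data_lineage, prev_hash=""):
    """Create one audit entry and seal it with a SHA-256 hash of its contents."""
    record = {
        "policy_id": policy_id,
        "result": result,
        "model_version": model_version,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "data_lineage": list(data_lineage),
        "prev_hash": prev_hash,  # links this entry to the one before it
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["hash"] = hashlib.sha256(payload).hexdigest()
    return record

def verify_chain(records):
    """Return True if every record is unmodified and correctly linked."""
    prev_hash = ""
    for record in records:
        body = {k: v for k, v in record.items() if k != "hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        if record["prev_hash"] != prev_hash:
            return False
        if hashlib.sha256(payload).hexdigest() != record["hash"]:
            return False
        prev_hash = record["hash"]
    return True

# Hypothetical policy evaluations for one model version.
first = build_audit_record("pii-redaction", "pass", "model-v1.2", ["crm_export_2025_01"])
second = build_audit_record("bias-check", "fail", "model-v1.2", ["applications_q1"], first["hash"])
print(verify_chain([first, second]))
```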

Step 5: Monitor Continuously and Iterate

Controls alone aren't enough. Models drift, data distributions shift, and new regulations emerge. Governance programs must keep pace. That means:

- Schedule quarterly governance reviews

- Monitor model performance and compliance metrics in production

- Conduct annual third-party audits

- Update policies, retrain or retire models, and adapt to new regulatory requirements

ISO/IEC 42001 Clause 9.3 requires top management to review the organization's AI management system at planned intervals to ensure its continuing suitability, adequacy, and effectiveness. In practice, that means governance reviews should be standing agenda items, not reactive responses to incidents or audits.
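Monitoring for shifting data distributions needs a concrete metric. The Population Stability Index (PSI) is one common choice, swapped in here as an example; the source does not prescribe a metric. The bin counts and the 0.2 alert level are assumptions to be tuned per model.

```python
import math

DRIFT_ALERT_THRESHOLD = 0.2  # assumed rule-of-thumb alert level, not a standard

def population_stability_index(expected_counts, actual_counts, eps=1e-6):
    """PSI = sum over bins of (actual% - expected%) * ln(actual% / expected%)."""
    if len(expected_counts) != len(actual_counts):
        raise ValueError("both distributions must use the same bins")
    expected_total = sum(expected_counts)
    actual_total = sum(actual_counts)
    psi = 0.0
    for expected, actual in zip(expected_counts, actual_counts):
        # Floor each share at eps so empty bins do not cause log(0) or division by zero.
        expected_share = max(expected / expected_total, eps)
        actual_share = max(actual / actual_total, eps)
        psi += (actual_share - expected_share) * math.log(actual_share / expected_share)
    return psi

# Hypothetical score distribution at training time vs. in production, four bins each.
baseline = [25, 25, 25, 25]
production = [10, 20, 30, 40]
psi = population_stability_index(baseline, production)
print(round(psi, 4), psi > DRIFT_ALERT_THRESHOLD)
```

A check like this can run on a schedule, with breaches routed to the accountable owner for the affected system.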

From Policy Documents to Production: The Runtime Governance Gap

The most common failure mode in enterprise AI governance is the gap between a well-crafted policy and actual enforcement. Most organizations have governance documents; far fewer have governance infrastructure. Policies written in Word documents cannot catch a prompt injection attack, prevent a model from producing a biased output, or generate an audit trail automatically.

This gap widens as organizations scale AI to multiple models, agents, and workflows operating simultaneously, because each new system adds decision points that a written policy cannot observe.

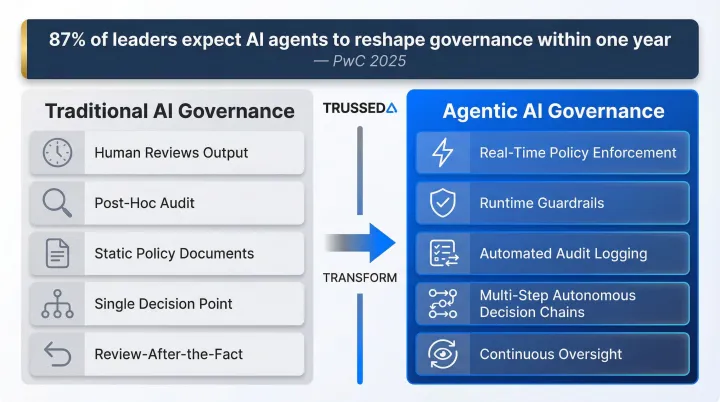

Why Agentic AI Makes This Gap Critical

Autonomous AI agents make sequences of decisions with minimal human intervention, often across multiple tools and APIs. Traditional governance assumes a human reviews outputs. Agentic systems may act before any human sees the result.

PwC's 2025 survey found that 87% of leaders expect AI agents to reshape governance within the next year, requiring a shift from static controls to continuous oversight. McKinsey's 2026 AI Trust Maturity Survey notes that in the age of agentic AI, organizations must contend with systems doing the wrong thing, such as taking unintended actions or operating beyond appropriate guardrails.

Governance must move from review-after-the-fact to enforcement-at-runtime, with real-time policy checks, guardrails, and audit logging embedded in the AI infrastructure layer.
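Enforcement at runtime can be sketched as a gate that every agent tool call must pass before it executes, with the decision logged either way. The policy structure, agent and tool names, and data classes below are hypothetical, and this is not a description of any particular product's implementation.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ToolCall:
    agent: str
    tool: str
    data_classes: frozenset  # classes of data the call would touch

# Hypothetical policy: which tools and data classes each agent may use.
POLICY = {
    "support-agent": {
        "allowed_tools": {"search_kb", "create_ticket"},
        "allowed_data": {"public", "internal"},
    },
}

def authorize(call, policy=POLICY):
    """Return (allowed, reason) for a proposed tool call."""
    rules = policy.get(call.agent)
    if rules is None:
        return False, "unknown agent"
    if call.tool not in rules["allowed_tools"]:
        return False, f"tool '{call.tool}' not permitted"
    blocked = call.data_classes - rules["allowed_data"]
    if blocked:
        return False, f"data classes not permitted: {sorted(blocked)}"
    return True, "allowed"

def execute(call, tool_fn, audit_log):
    """Check policy first, log the decision, and only then run the tool."""
    allowed, reason = authorize(call)
    audit_log.append({"agent": call.agent, "tool": call.tool, "allowed": allowed, "reason": reason})
    if not allowed:
        raise PermissionError(reason)
    return tool_fn()

log = []
ok_call = ToolCall("support-agent", "search_kb", frozenset({"public"}))
print(execute(ok_call, lambda: "3 articles found", log))
```

Because the log entry is written before the allow/deny branch, denied attempts leave the same evidence trail as successful ones.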

Treating Governance as Infrastructure, Not Paperwork

The practical answer is a control plane that enforces policies at the point of AI execution, across models, agents, developer tools, and production applications, with continuous compliance monitoring, intelligent routing, and automatically maintained audit trails.

Trussed AI is built around this model: a drop-in AI control plane that turns static governance policies into real-time enforcement across the enterprise AI stack, designed for regulated industries where compliance violations carry direct business consequences. The platform sits as a proxy layer in the flow of AI interactions, providing real-time visibility and control without requiring changes to existing application code.

Frequently Asked Questions

What is the difference between AI ethics, AI policy, and AI governance?

AI ethics is the values foundation: what AI should and shouldn't do. AI policy translates those values into formal rules and requirements. AI governance is the operational system that enforces those rules across the AI lifecycle through structures, roles, controls, and processes. Organizations need all three, not just one.

What are the five core ethical principles of responsible AI?

The five principles are:

- Fairness: bias identification and mitigation

- Transparency: explainability of AI decisions

- Accountability: human oversight and clear ownership

- Privacy: responsible data stewardship

- Security: robustness against adversarial threats

These principles underpin frameworks like the EU AI Act, NIST AI RMF, and ISO/IEC 42001.

How does the EU AI Act's risk classification system work?

The EU AI Act classifies AI systems into four tiers:

- Prohibited: social scoring, certain biometric surveillance

- High-risk: employment, education, healthcare, critical infrastructure (conformity assessments and human oversight required)

- Limited-risk: transparency obligations, such as identifying chatbots to users

- Minimal-risk: largely unregulated

It applies to any organization serving EU markets, regardless of where they're based.

What is the NIST AI Risk Management Framework and do I have to use it?

NIST AI RMF is a voluntary US framework organized around four functions: Govern, Map, Measure, and Manage. While voluntary, it's now the baseline expectation for US federal agencies and is widely adopted across regulated industries. ISO/IEC 42001 is a related certifiable standard that many enterprises now require in procurement processes.

How does AI governance need to change for agentic AI systems?

Agentic AI operates autonomously across multi-step decision chains, making post-hoc review insufficient. Governance must shift to runtime enforcement with real-time policy checks, guardrails, and automatic audit logging embedded at the infrastructure layer. Every tool call, data access, and workflow trigger must be authorized against policy before execution occurs.

Who should be responsible for AI governance in an enterprise organization?

AI governance is a shared cross-functional responsibility. The CTO handles technical governance, the CRO manages risk assessment, Legal/Compliance ensures regulatory alignment, and an AI Ethics and Compliance Committee approves policy and resolves disputes. The board retains ultimate accountability and should receive regular governance updates as a standing agenda item.