According to a 2025 McKinsey Global Survey, 78% of organizations use AI in at least one business function, yet only 28% report that their CEO oversees AI governance. Meanwhile, a 2025 KPMG survey found that 44% of U.S. workers use AI tools without proper authorization, highlighting the massive gap between policy existence and technical enforcement.

This guide breaks down the core components every AI governance policy needs, real-world examples from governments, enterprises, and regulated industries, a step-by-step framework for building one, and an explanation of how policies move from paper to operational enforcement.

TL;DR

- An AI governance policy defines how organizations develop, deploy, monitor, and retire AI systems, covering acceptable use, accountability, and compliance

- Effective policies address 8-10 components, including scope, principles, roles, acceptable use, data privacy, risk management, and enforcement

- The strongest real-world policies share three traits: explicit scope, named accountability, and built-in review cycles

- Building a policy requires cross-functional input from legal, IT, compliance, and HR, aligned to frameworks like NIST AI RMF, ISO/IEC 42001, or the EU AI Act

- Written policies are only the first step. Enforcing them at runtime across models, agents, and workflows is where governance actually takes effect

What Is an AI Governance Policy and Why It Matters Now

An AI governance policy is a formal organizational document establishing how AI systems are developed, procured, deployed, monitored, and retired. It includes rules for acceptable use, data handling, human oversight, accountability structures, and compliance with applicable regulations.

The gap between enterprise AI adoption and governance readiness is widening. While 78% of organizations use AI in at least one business function, only 28% have CEO-level oversight of AI governance. Meanwhile, 44% of U.S. workers admit using AI tools without authorization, and 46% have uploaded sensitive company information to public platforms.

Regulatory pressure is accelerating across jurisdictions:

- EU AI Act: Prohibited practices took effect February 2025; General-Purpose AI obligations apply August 2025

- California AB 2013: Requires generative AI training-data transparency by January 1, 2026

- Colorado SB24-205: Mandates reasonable care to avoid algorithmic discrimination, effective June 2026

Usage Policy vs. Governance Framework

An AI usage policy governs employee rules for using AI tools. A broader AI governance framework covers the full AI lifecycle, risk classification, oversight structures, and organizational accountability. The NIST AI Risk Management Framework defines governance as a cross-cutting capability that cultivates risk management culture, outlines processes, and aligns technical aspects with organizational values.

This guide covers both: the employee-facing usage rules organizations need today, and the comprehensive governance frameworks that keep AI deployment defensible as regulations tighten.

What Should an AI Governance Policy Include?

Regardless of organization size or industry, effective AI governance policies consistently cover 8-10 core components, structured into three layers: foundation, operations, and control.

Foundational Elements

Purpose, Scope, and Definitions

Precise scope language determines who the policy applies to, which AI systems it covers, and across which geographies and use cases. Clear definitions of "AI," "generative AI," "automated decision-making," and "AI output" prevent ambiguity during enforcement.

Scope that is too narrow creates gaps; scope that is too broad creates confusion. Effective policies define boundaries explicitly, such as:

- Employee-facing tools only vs. customer-facing AI systems

- Internal applications vs. third-party integrations

- Production deployments vs. developer and testing environments

AI Principles and Ethical Guidelines

Core ethical principles anchor the policy: fairness, transparency, accountability, human oversight, and non-discrimination. These should align with the NIST AI RMF's trustworthy AI characteristics:

- Valid and reliable

- Safe, secure, and resilient

- Accountable and transparent

- Explainable and interpretable

- Privacy-enhanced

- Fair with harmful bias managed

Every operational rule in the policy should trace back to one or more of these principles, creating a clear line from values to controls.

Operational Elements

Governance Roles and Accountability

Every AI governance policy needs a named oversight structure, typically an AI governance committee with representation from legal, IT/security, compliance, and business leadership. Define:

- Who approves new AI tools

- Who owns risk assessments

- Who manages incidents

- Who enforces policy violations

Without named accountability, policies become unenforceable. The NIST AI RMF Playbook recommends establishing interdisciplinary teams reflecting demographic diversity, broad domain expertise, and disciplines outside AI practice (law, sociology, psychology).

Acceptable Use, Data Privacy, and IP Ownership

Once roles are established, the policy needs to define what AI use is actually permitted. Specify acceptable and prohibited use cases, such as a ban on using AI in high-stakes decisions without human oversight. Then cover data requirements:

- Anonymization and encryption

- Access control

- Consent management

- Data classification tiers governing what information can be input to AI systems
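As a minimal sketch of how data classification tiers might gate what can be entered into an AI tool, the snippet below compares a data tier against a per-tool ceiling. The tier names, the tool categories, and the `may_submit` helper are all hypothetical and chosen for illustration; a real policy would define its own tiers and approved tools.

```python
from enum import IntEnum


class DataTier(IntEnum):
    """Illustrative tiers, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical ceiling per tool category: the most sensitive tier each may receive.
MAX_TIER_BY_TOOL = {
    "public_chatbot": DataTier.PUBLIC,
    "enterprise_assistant": DataTier.INTERNAL,
    "self_hosted_model": DataTier.CONFIDENTIAL,
}


def may_submit(tool: str, data_tier: DataTier) -> bool:
    """Return True if data of this tier may be entered into the given tool."""
    ceiling = MAX_TIER_BY_TOOL.get(tool)
    if ceiling is None:
        return False  # tools missing from the approved list are denied by default
    return data_tier <= ceiling
```

Denying unknown tools by default is a design choice in this sketch: it treats anything outside the approved list as shadow AI rather than guessing at a safe tier.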

Address intellectual property separately: who owns AI-generated outputs, and how to handle copyright risk from generative AI tools. Enterprises using third-party models face particular exposure here, as vendor agreements don't always transfer IP rights cleanly.

Compliance and Control Elements

Risk Management and Regulatory Alignment

Implement AI risk tiering (low, medium, high-risk systems) and require impact assessments before deployment. The EU AI Act classifies AI into unacceptable risk (prohibited practices like social scoring), high-risk (biometrics, critical infrastructure, employment), limited risk (transparency obligations), and minimal risk.

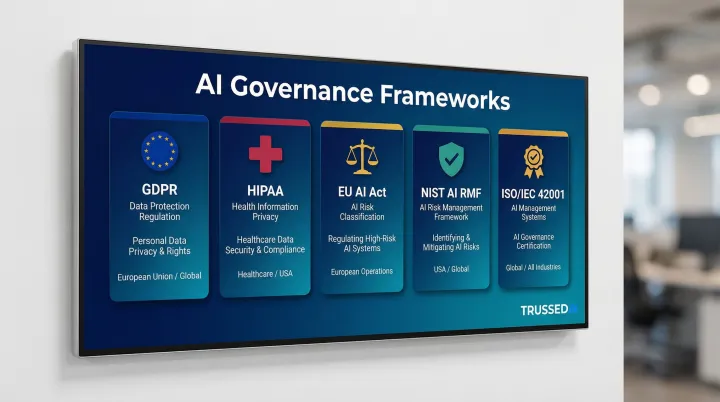
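The tiering described above could be expressed as a simple classification step followed by a deployment gate. This is only a sketch under stated assumptions: the use-case labels are taken from the examples named in the text, the `AISystem` fields and function names are hypothetical, and the rule that only high-risk systems need a completed impact assessment is an assumption of this example, not a reading of the EU AI Act.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Illustrative labels only; real classification needs legal review.
PROHIBITED_USES = {"social_scoring"}
HIGH_RISK_DOMAINS = {"biometrics", "critical_infrastructure", "employment"}


@dataclass(frozen=True)
class AISystem:
    name: str
    use_case: str
    interacts_with_people: bool = False  # assumed proxy for transparency duties


def classify(system: AISystem) -> RiskTier:
    """Assign a tier by checking the most severe categories first."""
    if system.use_case in PROHIBITED_USES:
        return RiskTier.UNACCEPTABLE
    if system.use_case in HIGH_RISK_DOMAINS:
        return RiskTier.HIGH
    if system.interacts_with_people:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


def deployment_gate(system: AISystem, assessment_done: bool) -> str:
    """Decide whether a system may deploy (assumption: only HIGH needs an assessment)."""
    tier = classify(system)
    if tier is RiskTier.UNACCEPTABLE:
        return "blocked"
    if tier is RiskTier.HIGH and not assessment_done:
        return "needs_impact_assessment"
    return "approved"
```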

Identify key regulations and frameworks the policy should align to based on organizational context:

- GDPR for data protection

- HIPAA for healthcare

- EU AI Act for European operations

- NIST AI RMF for risk management

- ISO/IEC 42001 for AI management systems

Alignment requirements differ by industry and geography. Financial institutions must comply with model risk management guidance (Federal Reserve SR 11-7 / OCC Bulletin 2011-12), while healthcare organizations must meet FDA Clinical Decision Support requirements.

Monitoring, Auditing, Enforcement, and Review Cadence

Define how the organization will verify compliance:

- Audit schedules

- Incident response procedures

- Violation consequences (up to and including termination)

Establish a defined review cycle, typically annual at minimum, with additional reviews triggered by regulatory changes or new system deployments. The City of San José recommends quarterly reviews due to rapid technology and legal evolution. ISO/IEC 42001 mandates continuous Plan-Do-Check-Act (PDCA) cycles for ongoing monitoring.

AI Governance Policy Examples Across Organizations

Government and Public Sector

City of San José

The City of San José's Generative AI Guidelines (updated April 2025) provide a practical municipal blueprint:

- Public records exposure: Information entered into Generative AI systems may be subject to Public Records Act requests

- Citation requirements: Users must cite AI-generated images, video, and audio to maintain public trust

- Work-specific accounts: Staff create accounts specifically for City use to separate public records from personal records

- Quarterly review cycle: Staff refer to the document quarterly as guidance changes rapidly

State of Ohio

Ohio's AI Model Policy (2026 deadline) includes:

- Formal AI Council to authorize Generative AI

- Procurement vetting process for new AI tools

- Workforce training requirements

- Data governance oversight by Chief Data Officer council

These public sector examples share a common architecture: bounded scope, named human accountability, and a documented process for keeping the policy current as regulations shift.

Enterprise and Corporate Policies

Enterprise policies address commercial risk vectors that public sector frameworks rarely prioritize: IP liability, vendor compliance, and cost attribution. Strong enterprise AI governance policies include:

- AI risk classification matrix defining low, medium, and high-risk systems

- Formal tool approval and procurement workflow preventing shadow AI

- Data classification tiers governing what information can be input to AI systems

- AI audit checklists for ongoing compliance verification

Enterprise policies also implement cost governance, tracking spending across teams, models, and applications with real-time attribution.
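Cost attribution of this kind can be sketched as a roll-up of usage records by team and model. The record fields (`team`, `model`, `tokens`) and the per-1,000-token price table are assumptions made for this example; actual billing data will look different.

```python
from collections import defaultdict


def attribute_costs(usage_records, price_per_1k_tokens):
    """Sum estimated spend per (team, model) pair from raw usage records."""
    totals = defaultdict(float)
    for rec in usage_records:
        # Cost = tokens used, in thousands, times the model's assumed unit price.
        cost = rec["tokens"] / 1000 * price_per_1k_tokens[rec["model"]]
        totals[(rec["team"], rec["model"])] += cost
    return dict(totals)
```

Keying the totals by both team and model keeps the same data usable for per-team budgets and per-model comparisons.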

Healthcare and Financial Services

Regulated industries layer sector-specific requirements on top of general AI governance components.

Healthcare Requirements:

- HIPAA compliance for patient data

- Human oversight requirements for clinical decision support tools

- Documentation standards for AI-assisted diagnoses

- Independent review of AI recommendations (per FDA Clinical Decision Support Guidance, 2026)

- Source attribute disclosure for predictive tools, including training data details and external validation (per ONC HTI-1 Final Rule, 2024)

Financial Services Requirements:

- Explainability requirements for credit or underwriting models

- Bias testing documentation

- Alignment with consumer protection regulations

- Board oversight and model documentation accessible to non-technical reviewers (per Federal Reserve SR 11-7 / OCC Bulletin 2011-12)

- Explainability vs. performance trade-off management for LLMs in critical applications (per Basel Committee guidance, 2025)

Education Institutions

Governance requirements extend beyond commercial industries into educational settings, where student welfare and equity raise the stakes. Taliaferro County Schools' AI policy demonstrates how educational organizations handle governance:

- Explicit restrictions on AI for high-stakes decisions (grading, discipline) without human oversight

- Bias and fairness scrutiny for tools used across student populations

- Separate guidelines for educators vs. students

- Privacy protections tied to FERPA and COPPA

- Emphasis on the irreplaceability of human interaction alongside AI

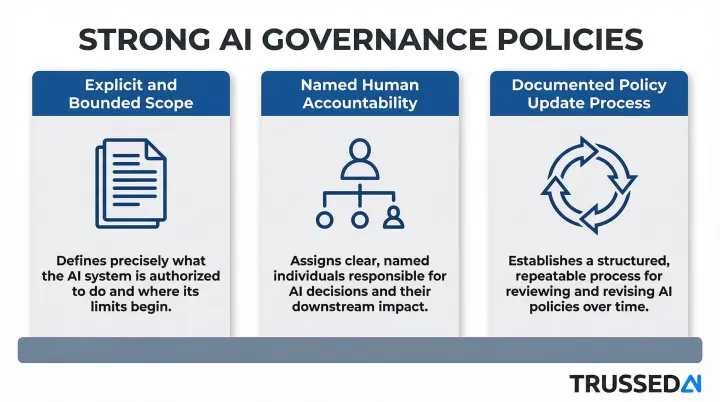

Key Patterns Across Strong Examples

Three elements consistently appear across effective AI governance policies:

- Explicit and bounded scope definitions preventing ambiguity about what the policy covers

- Named human accountability at each governance layer

- Documented process for updating the policy as technology and regulations evolve

How to Build Your AI Governance Policy: A Step-by-Step Framework

Assemble Stakeholders and Map Your AI Landscape

Before drafting a single policy clause, convene cross-functional stakeholders: legal, compliance, IT/security, HR, risk management, and business unit representatives from every team deploying AI tools.

Conduct a complete AI inventory, cataloging all tools currently in use, including shadow AI adopted without formal approval. Classify each by risk level and business function. The NIST AI RMF is voluntary, but its GOVERN 1.6 subcategory calls for organizations to inventory AI systems, tracking attributes such as data dictionaries and incident response plans, with particular emphasis on high-risk models.

That inventory becomes the foundation for your policy's scope and risk tier structure. Without it, you're drafting in the dark.
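One way to picture the inventory is as a list of structured entries that can be summarized by risk level, with unapproved tools surfaced separately. The field names and the `summarize` helper below are hypothetical; they show the shape of the data, not a prescribed schema.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class InventoryEntry:
    tool: str
    business_function: str
    risk_level: str  # assumed values: "low", "medium", "high"
    approved: bool   # False marks shadow AI adopted without formal approval


def summarize(entries):
    """Count tools per risk level and list the unapproved ones."""
    by_risk = Counter(e.risk_level for e in entries)
    shadow = sorted(e.tool for e in entries if not e.approved)
    return {"by_risk": dict(by_risk), "shadow_ai": shadow}
```

A summary like this gives the stakeholder group a first view of where the risk tiers will need the most policy detail.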

Draft Policy Components and Align to Regulations

With your AI inventory complete, map each cataloged system to the policy components it requires, including data governance rules, access controls, incident response protocols, and accountability assignments.

Templates are useful starting points:

- Responsible AI Institute: Publishes an AI Policy Template aligned with ISO/IEC 42001 and NIST AI RMF

- UK DSIT: AI Assurance Framework provides guidance on using global technical standards to build internal governance

Every organization must customize for specific use cases, jurisdictions, and regulatory obligations. Require legal review before finalizing to ensure alignment with applicable laws.

Train Employees and Establish Reporting Mechanisms

Policy adoption requires more than distribution. Employees at all levels need role-appropriate training on what the policy requires, how to spot violations, and how to report concerns without fear of retaliation.

Effective programs layer multiple formats:

- Onboarding modules that introduce policy requirements from day one

- Ongoing updates as policies evolve with new regulations or AI use cases

- Webinars or briefings when significant changes take effect

- Anonymous reporting channels so employees can flag violations safely

Build In Enforcement and Review Cycles

Enforcement is what separates a living policy from a document that sits in a shared drive. Build accountability through:

- Regular audits

- Incident response workflows

- Defined consequences for violations

- A governance committee empowered to act

Establish a review schedule: at minimum an annual review, plus event-triggered reviews after significant regulatory changes or AI system expansions. Assign clear ownership for keeping the policy current.
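The combination of a fixed interval and event triggers can be sketched as a single check. The 365-day interval, the trigger names, and the `review_due` function are assumptions for illustration; an organization on a quarterly cadence would shorten the interval.

```python
from datetime import date, timedelta

REVIEW_INTERVAL = timedelta(days=365)  # assumed annual minimum
TRIGGER_EVENTS = {"regulatory_change", "system_expansion"}  # hypothetical labels


def review_due(last_review: date, today: date, events) -> bool:
    """Return True if the interval has elapsed or a triggering event occurred."""
    if set(events) & TRIGGER_EVENTS:
        return True  # event-triggered review, regardless of elapsed time
    return today - last_review >= REVIEW_INTERVAL
```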

From Written Policy to Operational Enforcement

Most organizations invest significant effort writing governance policies but struggle to enforce them consistently in practice. The problem compounds as AI systems expand beyond employee-facing chatbots to autonomous agents, multi-model pipelines, and developer toolchains where manual oversight cannot scale.

According to Deloitte's 2024 AI governance survey, 70% of organizations have AI governance policies, yet 84% of executives are dissatisfied with their AI adoption pace. That combination suggests static governance frameworks can add overhead without enabling secure deployment.

The Agentic AI Blind Spot

As organizations move toward autonomous AI agents, manual oversight is failing. Deloitte's 2026 State of AI report notes that only one in five companies has a mature model for governance of autonomous AI agents.

The NIST Generative AI Profile (2024) warns that generative AI (GAI) systems and multi-model pipelines require continuous monitoring, incident tracking, and "circuit breakers" (protocols to deactivate systems when necessary) because, unlike traditional AI systems, their behavior over time is not yet well understood.

What Operational Enforcement Requires

Closing the gap between written policy and live AI systems requires three things:

- Real-time policy checks on every AI interaction, not periodic spot audits

- Automated guardrails that block violations before they occur, not flags after the fact

- Audit trails generated automatically as a byproduct of governed interactions, not assembled manually ahead of a compliance review
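The three requirements above can be sketched together in one wrapper: it checks each prompt before the model is called, blocks on a violation, and writes an audit record either way. Everything here is hypothetical, including the single SSN-shaped pattern rule, the in-memory `audit_log`, and the `governed_call` name; it does not represent any particular product's API.

```python
import json
import re
from datetime import datetime, timezone

# Hypothetical rule: block prompts containing text shaped like a US SSN.
BLOCK_PATTERNS = {"ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b")}

audit_log = []  # stand-in for a durable, append-only audit store


def governed_call(user, prompt, model_fn):
    """Check policy before the model call and record the decision."""
    violations = [name for name, pat in BLOCK_PATTERNS.items() if pat.search(prompt)]
    allowed = not violations
    # The audit record is a byproduct of every interaction, allowed or blocked.
    audit_log.append(json.dumps({
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "allowed": allowed,
        "violations": violations,
    }))
    if not allowed:
        return None  # the guardrail blocks before the model ever sees the prompt
    return model_fn(prompt)
```

The audit record deliberately omits the prompt text in this sketch so the log itself does not become a second copy of the sensitive data.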

Platforms like Trussed AI are built specifically for this enforcement layer, applying policy at runtime across models, agents, and developer environments via a drop-in proxy that requires no changes to existing application code.

For autonomous agents specifically, governance must operate at the execution layer, evaluating policy before every tool call, data access, and workflow trigger. This differs from standard LLM governance, which typically focuses on filtering outputs after generation. With agents, the risk isn't what the model says. It's the downstream actions the agent takes, including tool calls, data writes, and workflow triggers that may be difficult or impossible to reverse.
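A minimal sketch of execution-layer governance, assuming a hypothetical set of irreversible tools that need human approval: each tool is wrapped so the policy check runs before the action, not after the output. The tool names, `PolicyViolation`, and `guarded` are illustrative and not drawn from any agent framework.

```python
from typing import Any, Callable


class PolicyViolation(Exception):
    """Raised when a tool call is refused by policy."""


# Hypothetical list of actions that are difficult or impossible to reverse.
IRREVERSIBLE_TOOLS = {"delete_record", "send_payment"}


def guarded(tool_name: str, tool_fn: Callable[..., Any],
            approved_by_human: bool = False) -> Callable[..., Any]:
    """Wrap a tool so policy is evaluated before every call."""
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        if tool_name in IRREVERSIBLE_TOOLS and not approved_by_human:
            raise PolicyViolation(f"{tool_name} requires human approval")
        return tool_fn(*args, **kwargs)
    return wrapper
```

Because the check sits in the wrapper, the underlying tool function never runs when the policy refuses the call, which is the property that matters for irreversible actions.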

Frequently Asked Questions

What should be included in an AI governance policy?

Core components include purpose and scope, ethical principles, governance roles, acceptable use guidelines, data privacy and IP rules, risk management procedures, monitoring and auditing mechanisms, and a defined policy review cadence. These elements should align with frameworks like NIST AI RMF or ISO/IEC 42001.

How is an AI governance policy different from an AI usage policy?

An AI usage policy governs how employees interact with AI tools day-to-day. An AI governance policy is broader: it covers the full AI lifecycle, organizational accountability structures, risk classification, regulatory compliance, and technical controls across development, deployment, and retirement.

How often should an AI governance policy be reviewed?

Most organizations review AI governance policies at least annually, with additional reviews triggered by significant regulatory changes (such as new AI laws), major new AI system deployments, or reportable incidents. The City of San José recommends quarterly reviews due to rapid technology evolution.

What regulations should an AI governance policy address?

Key frameworks and regulations include NIST AI RMF, ISO/IEC 42001, the EU AI Act, GDPR, and HIPAA for healthcare. Applicable requirements vary by industry, geography, and the risk level of AI systems in use. Financial institutions must also align with model risk management guidance (Federal Reserve SR 11-7 / OCC Bulletin 2011-12) and Basel Committee guidance.

How do you enforce an AI governance policy at scale?

Enforcement requires organizational mechanisms, including audits, training, incident response, and named accountability, paired with technical controls that apply policy rules consistently at runtime across all AI systems. Manual oversight alone cannot scale to autonomous agents and multi-model pipelines.

Can small organizations use enterprise AI governance policy templates?

Enterprise templates are valid starting points, but smaller organizations should scale them to their maturity level, prioritizing scope, ethical principles, acceptable use, and basic risk management before building out complex committee structures.