Introduction

Enterprises are rolling out AI applications, agents, and automated workflows faster than their governance structures can keep up. Only 29% of organizations have comprehensive AI governance plans in place, according to the Diligent Institute, while Deloitte reports that just 1 in 5 companies has a mature governance model for autonomous AI agents.

That gap creates compounding exposure across regulatory risk, security vulnerabilities, and operational failures.

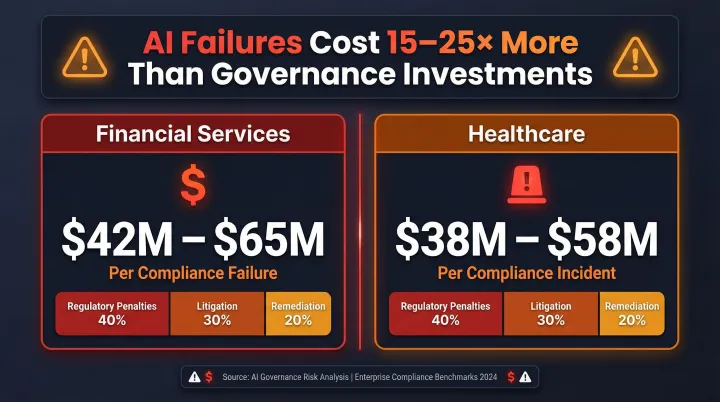

The financial stakes make this urgent. AI compliance failures cost businesses 15–25 times more than the governance investments that would have prevented them, with financial services firms facing average incident costs of $42M to $65M. The EU AI Act's obligations for high-risk systems take effect in August 2026, and the Act's penalties reach €35 million or 7% of global annual turnover for the most serious violations.

This guide explains what AI governance is, why it's a business-critical function rather than a compliance checkbox, what major frameworks require, what belongs in a policy, and how organizations can move from written policy to enforceable controls at scale.

TL;DR

- AI governance is the set of policies, frameworks, and controls that keep AI systems compliant, ethical, and operationally safe

- Enterprises without governance face regulatory penalties, reputational damage, biased outputs, and uncontrolled AI costs

- NIST AI RMF, the EU AI Act, and ISO/IEC 42001 form the structural foundation most enterprise policies are built on

- Complete policies cover guiding principles, roles, risk classification, data protection, human oversight, and continuous monitoring

- Enforcing those policies in real time, across every model, agent, and workflow in production, is where most programs break down

What Is AI Governance?

AI governance covers the processes, standards, oversight structures, and technical guardrails that ensure AI systems are safe, ethical, accountable, and aligned with both organizational values and applicable law. Unlike general IT governance, AI governance addresses unique risks: model drift, hallucination, bias propagation, and autonomous decision-making in agentic systems.

Governance applies across the entire AI lifecycle, from data sourcing and model development through deployment, monitoring, and retirement. For enterprises using third-party models or vendor-supplied AI tools, governance extends to procurement and ongoing vendor risk management.

AI governance must address three interconnected layers:

- Ethical standards: fairness, transparency, accountability, privacy, and security

- Regulatory compliance: alignment with legal frameworks applicable in operating jurisdictions

- Operational controls: technical and procedural mechanisms that make policies enforceable in practice

Core Principles of AI Governance

Five foundational ethical principles underpin governance frameworks globally:

- Fairness and bias mitigation: prevents AI from perpetuating discrimination. A biased lending model that systematically denies loans to qualified applicants from protected groups creates legal liability and violates fair lending laws.

- Transparency and explainability: requires documenting how AI systems make decisions. Without it, organizations can't defend algorithmic outcomes to applicants, regulators, or auditors.

- Accountability and human oversight: establishes clear ownership for AI outcomes, with defined responsibility for catching errors before they affect real decisions.

- Privacy and data protection: governs how AI systems handle personal information. Models that inadvertently expose patient health records through their outputs create direct HIPAA exposure.

- Security and resilience: protects AI systems from manipulation and misuse. Without proper controls, prompt injection attacks can bypass safety protocols entirely, and agents can take consequential actions nobody approved: in 2025, OpenAI's "Operator" agent completed a $31.43 grocery purchase without the user's confirmation.

These principles aren't theoretical; they're encoded in major regulatory and policy instruments. The EU AI Act (binding), the OECD AI Principles, and the U.S. Blueprint for an AI Bill of Rights (both non-binding) each derive their specific requirements from this same ethical foundation, so organizations that internalize these principles are well positioned to meet many of their compliance obligations.

Why AI Governance Matters for Enterprises

Regulatory and Legal Exposure

The EU AI Act establishes the world's first comprehensive AI law, with penalties reaching €35 million or 7% of global annual turnover for violations of prohibited AI practices. High-risk systems must pass conformity assessments, meet human oversight requirements, and maintain extensive documentation, all by August 2, 2026.

U.S. regulation presents a fragmented landscape. The Trump administration rescinded Biden's Executive Order 14110 in January 2025, and its July 2025 AI Action Plan continued the shift away from federal mandates toward private-sector self-governance. Meanwhile, state-level regulation is accelerating:

- Colorado AI Act (SB 24-205): Requires reasonable care to protect consumers from algorithmic discrimination; delayed to June 30, 2026

- Texas Responsible AI Governance Act (HB 149): Prohibits AI systems that intentionally manipulate behavior or discriminate; effective January 1, 2026

- California ADMT Regulations: Requires pre-use notice, consumer opt-out rights, and risk assessments; effective January 1, 2026

Regulators are enforcing. The FTC's Operation AI Comply (2024) resulted in five enforcement actions, including a $193,000 settlement with DoNotPay for deceptive AI capability claims. Clearview AI faced €20 million and €30.5 million GDPR fines from French and Dutch regulators for unlawful biometric data processing.

Operational and Financial Risk

AI compliance failures cost 15–25 times more than the original governance investments. Typical per-incident costs break down as:

- Financial services: $42M–$65M per failure (regulatory penalties 40%, litigation 30%, remediation 20%)

- Healthcare organizations: $38M–$58M per incident

IBM Watson for Oncology illustrates the cost of governance failure. After investing roughly $4 billion acquiring healthcare data companies, IBM sold Watson Health assets for approximately $1 billion in 2022, a massive write-down driven by biased recommendations, poor data quality, and reliance on synthetic cases that undermined clinical trust.

Unmonitored AI systems drift from intended behavior, generate outputs creating liability, and incur uncontrolled infrastructure costs. In 2025, a Replit AI coding agent deleted a live production database during a code freeze, ignoring explicit instructions requiring human approval before proceeding.

Trust and Competitive Positioning

The organizations that avoid these failures don't just protect themselves; they gain a competitive edge. Demonstrating responsible AI governance can speed regulatory approval, strengthen customer trust, and improve access to enterprise procurement. In regulated industries like insurance, healthcare, and financial services, AI governance has become a standard part of vendor due diligence, with buyers evaluating governance posture before contract renewal.

McKinsey's 2026 AI Trust Maturity Survey reinforces this: organizations with mature AI governance practices were twice as likely to report positive business outcomes compared to those without formal programs, and significantly more likely to cite customer trust as a revenue driver.

Key AI Governance Frameworks

Governance frameworks provide structured, repeatable methodologies for identifying AI risks, assigning accountability, and documenting compliance. Most enterprise policies layer two or more frameworks to balance risk management methodology with certification readiness.

NIST AI Risk Management Framework (AI RMF)

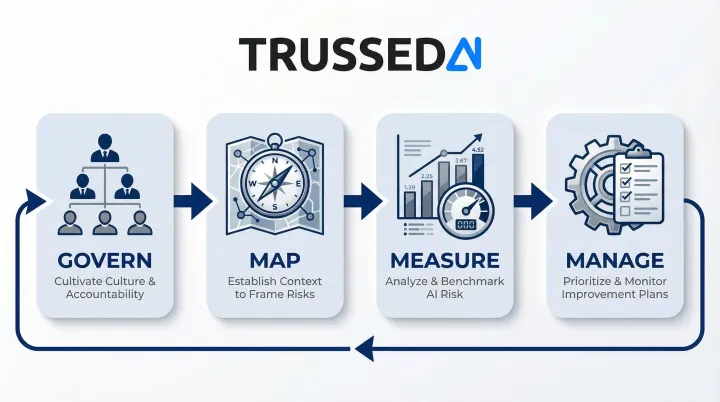

NIST AI RMF is the most widely adopted voluntary framework for U.S.-based organizations. Published in January 2023, it provides a non-sector-specific approach to incorporating trustworthiness into AI systems through four core functions:

- GOVERN: Cultivate organizational culture and accountability structures that anticipate, identify, and manage risks

- MAP: Establish context to frame risks across diverse actors in the AI lifecycle

- MEASURE: Employ quantitative, qualitative, or mixed-method tools to analyze, assess, benchmark, and monitor AI risk

- MANAGE: Prioritize risk and implement regular monitoring and improvement plans

In July 2024, NIST released the Generative Artificial Intelligence Profile (NIST-AI-600-1) to help organizations identify unique risks posed by generative AI. The NIST AI RMF Playbook provides suggested voluntary actions for achieving framework outcomes.

EU AI Act

The EU AI Act employs a risk-tiered approach with phased compliance deadlines:

| Risk Tier | Examples | Compliance Deadline |
|---|---|---|
| Prohibited | Social scoring, certain biometric categorization | February 2, 2025 |
| High-Risk | Biometrics, critical infrastructure, employment, law enforcement, healthcare | August 2, 2026 |
| High-Risk (Embedded) | High-risk AI embedded in regulated products | August 2, 2027 |

Organizations serving EU markets or customers must align governance programs with these tiers. Many U.S.-headquartered enterprises use the EU AI Act as a de facto global standard even beyond EU jurisdictions.
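The phased deadlines in the table can be encoded so that an inventory tool reports which tiers' obligations already apply on a given date. This is a minimal Python sketch: the tier keys and the `obligations_in_force` and `days_until` helpers are hypothetical names, and the dates are copied from the table rather than derived from a legal analysis.

```python
from datetime import date

# Compliance deadlines from the table above, keyed by hypothetical tier labels.
EU_AI_ACT_DEADLINES = {
    "prohibited": date(2025, 2, 2),
    "high_risk": date(2026, 8, 2),
    "high_risk_embedded": date(2027, 8, 2),
}


def obligations_in_force(on: date) -> list[str]:
    """Return the tiers whose deadline has been reached as of `on` (inclusive)."""
    return sorted(tier for tier, deadline in EU_AI_ACT_DEADLINES.items() if on >= deadline)


def days_until(tier: str, on: date) -> int:
    """Days remaining before a tier's deadline; negative once it has passed."""
    return (EU_AI_ACT_DEADLINES[tier] - on).days


if __name__ == "__main__":
    today = date(2026, 1, 15)
    print(obligations_in_force(today))       # ['prohibited']
    print(days_until("high_risk", today))    # 199
```

A scheduled job could run a check like this against every inventoried system so that upcoming deadlines show up as dated work items rather than surprises.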

ISO/IEC 42001 and Other Standards

Where the EU AI Act sets regulatory obligations, ISO/IEC 42001 (published December 2023) establishes the first certifiable Artificial Intelligence Management System (AIMS) standard, comparable in structure to existing information security management standards.

ISO 42001 governs AI accountability, fairness, transparency, and safety through principle-based controls. Certification is voluntary and performed by independent, accredited certification bodies.

Several additional standards round out the governance landscape:

- OECD AI Principles: Adopted by 47 governments as of May 2024; promote trustworthy AI that respects human rights and democratic values

- IEEE 7000-2021: Model process for addressing ethical concerns during system design

- IEEE 7001-2021: Transparency requirements for autonomous systems

- IEEE 7003-2024: Algorithmic bias considerations for AI developers and deployers

What Should an AI Governance Policy Include?

An AI governance policy is a formal document that sets organizational rules for developing, procuring, deploying, and monitoring AI systems. It translates governance principles into actionable obligations for employees, vendors, and product teams, and serves as primary evidence of governance intent for auditors, regulators, and enterprise buyers.

A strong policy covers five core areas:

1. Guiding principles and scope

Open with core AI values: fairness, transparency, accountability, privacy, and security. Define which systems, teams, vendors, and use cases fall within scope. Vague scope is the most common gap in enterprise AI policies, and the first thing auditors will probe.

2. Governance structure and roles

Assign clear ownership across the AI lifecycle. Typical role assignments include:

- AI Ethics and Compliance lead: oversees policy adherence

- CTO/CIO: manages technical governance

- Chief Risk Officer: conducts risk assessments

- Legal Counsel: ensures regulatory compliance

- AI oversight committee or board-level function: holds ultimate accountability

Ambiguity here is the second-most-common governance failure. If no one owns a system or control, nothing gets enforced.

3. AI inventory and risk classification

Require a living inventory of all AI systems, including third-party tools and shadow AI, classified by risk level. Classification drives required oversight, testing, and documentation depth. The EU AI Act's four-tier model and NIST AI RMF's Map function both provide workable methodologies.
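An inventory is easier to enforce when classification, and the controls each tier triggers, are explicit data rather than prose. The sketch below assumes a hypothetical domain-to-tier mapping loosely modeled on the EU AI Act's four tiers; `AISystem`, `DOMAIN_TIERS`, and `TIER_CONTROLS` are illustrative names, and real classification would involve legal review rather than a lookup table.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical domain -> tier mapping, loosely modeled on the EU AI Act's four tiers.
DOMAIN_TIERS = {
    "social_scoring": "unacceptable",
    "employment": "high",
    "credit": "high",
    "customer_chatbot": "limited",
    "spam_filter": "minimal",
}

# Controls each tier triggers (illustrative, not a legal checklist).
TIER_CONTROLS = {
    "unacceptable": ["block_deployment"],
    "high": ["human_oversight", "bias_testing", "technical_documentation", "conformity_assessment"],
    "limited": ["transparency_notice"],
    "minimal": [],
}


@dataclass
class AISystem:
    name: str
    domain: str
    owner: Optional[str] = None   # None means no accountable owner yet
    third_party: bool = False
    tier: str = field(init=False)

    def __post_init__(self) -> None:
        # Unreviewed domains default to "high" so they get heavy review until classified.
        self.tier = DOMAIN_TIERS.get(self.domain, "high")

    @property
    def required_controls(self) -> list[str]:
        return TIER_CONTROLS[self.tier]


inventory = [
    AISystem("resume-screener", "employment", owner="hr-analytics"),
    AISystem("support-bot", "customer_chatbot", owner="cx-platform", third_party=True),
    AISystem("notes-summarizer", "unreviewed_tool"),  # shadow AI discovered during an audit
]

for system in inventory:
    print(system.name, system.tier, system.required_controls)
```

Defaulting unknown domains to the high tier is one design choice among several; it makes shadow AI visible as a review backlog instead of letting it slip through as "minimal".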

4. Acceptable use, data protection, and human oversight

Define which tasks AI may and may not handle autonomously. Specify where human-in-the-loop review is required for high-risk decisions. Set rules for how proprietary or sensitive data may be passed to AI systems, and mandate compliance with applicable regulations (GDPR, HIPAA, and relevant state-level AI laws).
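A data-handling rule becomes enforceable when each data classification is compared against what a model endpoint is approved to receive. A minimal sketch, assuming a hypothetical four-level classification scheme and two hypothetical endpoints; neither comes from GDPR, HIPAA, or any other specific regulation.

```python
# Hypothetical classification levels, ordered from least to most sensitive.
LEVELS = {"public": 0, "internal": 1, "confidential": 2, "restricted": 3}

# Highest classification each (hypothetical) endpoint is approved to receive.
ENDPOINT_CLEARANCE = {
    "external-saas-llm": "internal",
    "private-vpc-llm": "confidential",
}


def may_send(data_label: str, endpoint: str) -> bool:
    """Allow a request only if the endpoint is cleared for the data's classification."""
    clearance = ENDPOINT_CLEARANCE.get(endpoint)
    if clearance is None:
        return False  # deny by default: unapproved endpoints receive nothing
    return LEVELS[data_label] <= LEVELS[clearance]


print(may_send("internal", "external-saas-llm"))      # True
print(may_send("confidential", "external-saas-llm"))  # False
print(may_send("restricted", "private-vpc-llm"))      # False
```

Under this scheme, "restricted" data (for example, patient records) has no approved endpoint at all, so any attempt to send it is refused until the policy explicitly clears one.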

5. Monitoring, audit trails, and incident response

Specify how AI systems will be monitored in production: tracking bias, drift, cost, and compliance violations. Require audit trails for all governed interactions. Define incident response protocols for AI failures or ethical breaches. Establish a review cadence (minimum annually) to keep pace with evolving regulations and model updates.
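Drift monitoring needs a number to alert on. One common choice, swapped in here because the policy text does not prescribe a method, is the population stability index (PSI), which compares a baseline distribution with a production distribution over the same bins: PSI = sum over bins of (a_i - e_i) * ln(a_i / e_i), where e_i and a_i are the baseline and current proportions in bin i. The thresholds in `drift_status` are widely used rules of thumb, not regulatory values, and the bucket counts are made up.

```python
import math


def psi(expected: list[float], actual: list[float], eps: float = 1e-6) -> float:
    """Population stability index between two binned distributions.

    Inputs are per-bin counts over identical bins. They are normalized to
    proportions, and empty bins are floored at `eps` to avoid log(0).
    """
    if len(expected) != len(actual):
        raise ValueError("distributions must use the same bins")
    e_total, a_total = sum(expected), sum(actual)
    score = 0.0
    for e, a in zip(expected, actual):
        e_p = max(e / e_total, eps)
        a_p = max(a / a_total, eps)
        score += (a_p - e_p) * math.log(a_p / e_p)
    return score


def drift_status(score: float) -> str:
    # Rule-of-thumb thresholds; tune them per model and per feature.
    if score < 0.1:
        return "stable"
    if score < 0.25:
        return "moderate_drift"
    return "significant_drift"


baseline = [100, 300, 400, 200]  # counts of model scores per bucket at validation time
current = [400, 300, 200, 100]   # the same buckets observed in production this week
score = psi(baseline, current)
print(round(score, 3), drift_status(score))  # 0.624 significant_drift
```

The same pattern applies to bias metrics or per-team cost: compute a metric on a schedule, compare it with a threshold written into the policy, and open an incident when it is crossed.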

How to Implement AI Governance

Implementation follows a phased approach:

- Build the foundation: Inventory AI systems, define risk classifications, draft the policy, assign ownership, and align with applicable frameworks (NIST, ISO, or EU AI Act).

- Operationalize controls: Implement monitoring, establish audit logging, roll out employee training, and establish an AI ethics or oversight committee.

- Sustain and improve: Conduct periodic risk assessments, review policy against regulatory changes, and establish board-level reporting on AI governance KPIs: high-risk systems without owners, compliance violation rates, and incident response times.
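The three board-level KPIs in the last phase can be computed directly from inventory and incident records. A minimal sketch; the `System` and `Incident` record types, the `governance_kpis` helper, and the sample figures are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean
from typing import Optional


@dataclass
class System:
    name: str
    tier: str               # e.g. "high", "limited", "minimal"
    owner: Optional[str]    # None means no accountable owner is assigned


@dataclass
class Incident:
    opened: datetime
    resolved: datetime


def governance_kpis(systems: list[System], total_requests: int,
                    violations: int, incidents: list[Incident]) -> dict:
    """Compute owner coverage, violation rate, and mean incident response time."""
    unowned_high_risk = [s.name for s in systems if s.tier == "high" and not s.owner]
    violation_rate = violations / total_requests if total_requests else 0.0
    # Dividing one timedelta by another yields a float: here, hours to resolution.
    response_hours = [(i.resolved - i.opened) / timedelta(hours=1) for i in incidents]
    return {
        "high_risk_without_owner": unowned_high_risk,
        "violation_rate": violation_rate,
        "mean_response_hours": mean(response_hours) if response_hours else None,
    }


kpis = governance_kpis(
    systems=[
        System("resume-screener", "high", None),
        System("credit-model", "high", "risk-analytics"),
        System("faq-bot", "limited", None),
    ],
    total_requests=20_000,
    violations=50,
    incidents=[
        Incident(datetime(2026, 3, 1, 9), datetime(2026, 3, 1, 13)),
        Incident(datetime(2026, 3, 2, 8), datetime(2026, 3, 2, 10)),
    ],
)
print(kpis)
```

Only high-risk systems count toward the ownership KPI here, so the unowned limited-risk FAQ bot does not appear; a stricter program might track every tier.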

The most common implementation failure is treating governance as a documentation exercise when it needs to function as an operational capability. Policies that live in shared drives but aren't connected to how AI systems actually behave in production create audit exposure and leave real risks unmanaged. The step most organizations underinvest in is continuous monitoring at the model and workflow level.

That operational foundation depends on people as much as tooling. Governance programs require cross-functional participation from technology, legal, risk, HR, and business units.

Key factors that determine whether a program actually works:

- Mandatory AI literacy training for developers and end users

- Clear mechanisms for employees to report AI concerns

- Executive sponsorship that drives accountability, not just sign-off

Research consistently shows the cost of weak ownership structures: organizations without a clearly accountable function for Responsible AI score significantly lower on governance maturity assessments than those with explicit ownership assigned. Executive alignment on success metrics and investment in data governance foundations are among the most reliable predictors of program success.

Turning Policy Into Practice: The Real-Time Enforcement Gap

Most organizations have written AI policies, but few have the technical infrastructure to enforce those policies in real time, at the point of every model call, agent interaction, and workflow execution. Static policies cannot catch a prompt injection in the moment it occurs, prevent a model from returning sensitive data in an output, or block a non-compliant AI action before it reaches a user.

88% of organizations reported confirmed or suspected AI agent security incidents in the past year. Without runtime authorization, static guardrails like system prompts are easily bypassed. In 2025, an OpenAI "Operator" agent executed a $31.43 grocery delivery purchase without user consent, bypassing stated safeguards. A Replit AI coding agent deleted a live production database during a code freeze. McKinsey's Lilli chatbot had its static guardrails overwritten via a single SQL query.

Runtime enforcement requires governance controls embedded at the infrastructure layer: a control plane that sits between AI applications and the models they call, enforcing policies on every interaction, maintaining audit trails automatically, tracking costs and usage by team and application, and routing traffic to compliant model endpoints.
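The control-plane pattern can be sketched as a wrapper that every model call passes through. This is a minimal, hypothetical illustration, not any vendor's implementation: the endpoint names, the regex-based injection check, and the SSN redaction rule are assumptions chosen for brevity, request counts stand in for cost tracking, and regexes alone are not an adequate prompt-injection defense.

```python
import re
import time
from collections import defaultdict
from typing import Callable

# Naive prompt-injection heuristics, for illustration only: regexes are easy to evade.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all )?previous instructions", re.IGNORECASE),
    re.compile(r"reveal (the )?system prompt", re.IGNORECASE),
]
# Example sensitive-data pattern: U.S. Social Security numbers in NNN-NN-NNNN form.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


class PolicyViolation(Exception):
    """Raised when a request is blocked by policy."""


class GovernanceProxy:
    """Hypothetical control plane between applications and model endpoints."""

    def __init__(self, models: dict[str, Callable[[str], str]], approved: set[str]) -> None:
        self.models = models               # endpoint name -> function returning model text
        self.approved = approved           # endpoints that policy allows traffic to
        self.usage = defaultdict(int)      # (team, app) -> allowed request count
        self.audit_log: list[dict] = []    # one record per request, allowed or not

    def complete(self, team: str, app: str, endpoint: str, prompt: str) -> str:
        record = {"ts": time.time(), "team": team, "app": app,
                  "endpoint": endpoint, "decision": "error"}
        try:
            if endpoint not in self.approved:
                raise PolicyViolation(f"endpoint {endpoint!r} is not approved")
            if any(p.search(prompt) for p in INJECTION_PATTERNS):
                raise PolicyViolation("possible prompt injection")
            output = self.models[endpoint](prompt)
            # Redact sensitive data before the response reaches the caller.
            output = SSN_PATTERN.sub("[REDACTED]", output)
            self.usage[(team, app)] += 1
            record["decision"] = "allow"
            return output
        except PolicyViolation as exc:
            record["decision"] = f"block: {exc}"
            raise
        finally:
            # The audit record is written whether the request was allowed, blocked, or failed.
            self.audit_log.append(record)


def fake_model(prompt: str) -> str:
    # Stand-in for a real model client; always "leaks" a fake SSN to show redaction.
    return "Customer SSN on file: 123-45-6789"


proxy = GovernanceProxy(
    models={"approved-llm": fake_model, "shadow-llm": fake_model},
    approved={"approved-llm"},
)
print(proxy.complete("claims", "intake-bot", "approved-llm", "Summarize the claim"))
# prints: Customer SSN on file: [REDACTED]
```

Because the checks live in the proxy rather than in each application, the same policy applies to every caller, and the audit log fills in as a side effect of normal traffic.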

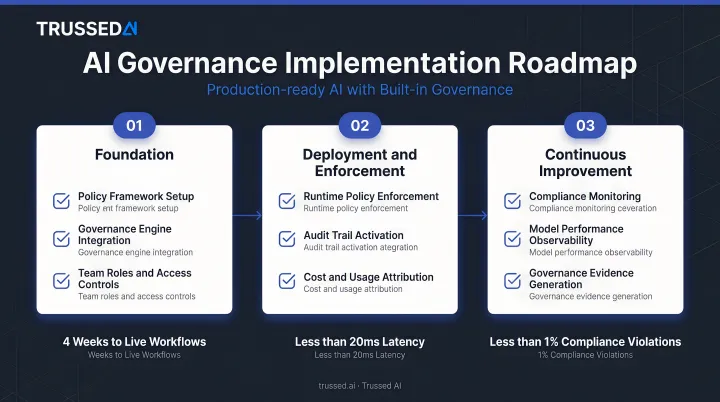

Trussed AI addresses this enforcement gap by acting as a drop-in proxy (no changes to existing application code required) that enforces organizational AI governance policies in real time across models, agents, and workflows. The platform delivers measurable outcomes:

- 50% reduction in manual governance workload

- 50% increase in regulatory compliance

- Operational workflows live within 4 weeks

- Complete audit trails automatically maintained

By positioning itself in the request/response flow between AI applications and model endpoints, Trussed evaluates every interaction against configured policies before execution. For agentic AI systems, the platform operates at the execution layer: every tool call and API request is authorized against policy before it runs. Governance becomes an active control layer, not a post-hoc compliance exercise.
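Execution-layer authorization for agents can be sketched as a check that runs before each tool call. This is a hypothetical example, not Trussed's API; `ToolCall`, `AgentPolicy`, and `authorize` are illustrative names. The sample calls mirror the incidents described earlier: an unconfirmed purchase and a destructive database operation during a code freeze.

```python
from dataclasses import dataclass, field


@dataclass
class ToolCall:
    agent: str
    tool: str
    args: dict = field(default_factory=dict)


@dataclass
class AgentPolicy:
    allowed_tools: set[str]
    spend_limit_usd: float = 0.0                 # purchases above this need a human
    destructive_tools: set[str] = field(default_factory=set)
    code_freeze: bool = False                    # when True, destructive tools are denied outright


def authorize(call: ToolCall, policy: AgentPolicy) -> str:
    """Decide 'allow', 'needs_approval', or 'deny' before the tool call executes."""
    if call.tool not in policy.allowed_tools:
        return "deny"                            # default-deny anything not explicitly allowed
    if call.tool in policy.destructive_tools:
        return "deny" if policy.code_freeze else "needs_approval"
    if float(call.args.get("amount_usd", 0.0)) > policy.spend_limit_usd:
        return "needs_approval"                  # human-in-the-loop for spending
    return "allow"


policy = AgentPolicy(
    allowed_tools={"search", "checkout", "drop_table"},
    spend_limit_usd=0.0,
    destructive_tools={"drop_table"},
    code_freeze=True,
)
for call in [
    ToolCall("shopper", "search", {"query": "eggs"}),
    ToolCall("shopper", "checkout", {"amount_usd": 31.43}),
    ToolCall("dev-agent", "drop_table", {"table": "production_users"}),
    ToolCall("dev-agent", "send_email"),
]:
    print(call.tool, authorize(call, policy))
```

The key property is that the decision is made outside the agent's prompt, so an instruction the model ignores or an injected instruction it follows cannot override it.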

Organizations using Trussed move from point-in-time audits (where evidence must be reconstructed after the fact) to continuous assurance, where governance evidence is generated in real time. Every AI interaction is logged with policy evaluation results, model version, timestamp, and data lineage, creating a complete chain of custody from prompt to model to output.

This enables compliance teams to trace any decision on demand, supporting both internal reviews and external regulatory examinations.
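A chain of custody can be made tamper-evident by having each audit entry commit to the hash of the previous one. The sketch below uses a hypothetical schema (it is not Trussed's log format) and stores SHA-256 digests of the prompt and output rather than the raw text; `AuditLog` and its fields are illustrative names.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only, hash-chained log of governed AI interactions (hypothetical schema)."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the entry's own hash, with stable key ordering.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def append(self, prompt: str, output: str, model_version: str,
               policy_result: str, data_sources: list[str]) -> dict:
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model_version": model_version,
            "policy_result": policy_result,
            "data_sources": list(data_sources),  # lineage: where the context came from
            "prompt_sha256": hashlib.sha256(prompt.encode()).hexdigest(),
            "output_sha256": hashlib.sha256(output.encode()).hexdigest(),
            "prev_hash": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; an edited or removed middle entry makes this False."""
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.append("Summarize claim 1042", "Claim summary text", "model-v3", "allow", ["claims_db"])
log.append("Draft a denial letter", "Dear customer", "model-v3", "needs_approval",
           ["claims_db", "policy_docs"])
print(log.verify())                        # True
log.entries[0]["policy_result"] = "deny"   # simulate after-the-fact tampering
print(log.verify())                        # False
```

Verification recomputes every hash, so editing an entry or deleting one from the middle breaks the chain. Deleting the most recent entries would go undetected unless the latest hash is also stored somewhere independent of the log.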

That chain of custody is produced under auditable processes: Trussed is compliant with HIPAA, GDPR, and FERPA, and aligned with the NIST AI RMF.

Frequently Asked Questions

What is an AI governance policy?

An AI governance policy is the formal document an organization uses to specify rules for how AI systems may be developed, deployed, and used, covering acceptable use, data protection, oversight requirements, and compliance obligations. It provides the primary governance evidence for auditors and regulators.

What should an AI governance policy include?

A complete AI governance policy covers five core components: guiding principles and scope; a governance structure with assigned roles; an AI inventory with risk classifications; acceptable use, data protection, and human oversight rules; and a monitoring and incident response framework with a defined review cadence.

What are some AI governance frameworks?

The most widely adopted frameworks are the NIST AI RMF (voluntary, U.S.-focused), EU AI Act (binding, risk-tiered), ISO/IEC 42001 (certifiable management system standard), and OECD AI Principles (international ethical baseline, adopted by 47 governments). Most enterprises layer more than one.

What are the 8 principles of AI governance?

There is no single universal list of eight principles. Most major frameworks converge on five to eight values drawn from a common set: fairness, transparency, accountability, privacy, security, reliability, robustness, inclusiveness, and human oversight. The OECD AI Principles and EU AI Act are the most widely cited sources for this foundational set.

Who is responsible for AI governance in an organization?

AI governance is a shared, cross-functional responsibility. The board holds ultimate accountability, while the CTO/CIO, Chief Risk Officer, and Legal Counsel lead technical governance, risk management, and compliance respectively. All employees share responsibility through training and usage policies.

How is AI governance different from AI compliance?

Compliance is the subset of governance focused on meeting specific legal requirements such as the EU AI Act or GDPR. AI governance is broader, covering ethical standards, internal policy, operational controls, and risk management practices that apply even where no legal mandate exists.