Introduction

Enterprises face a critical tension: GenAI adoption is accelerating across every business function, but governance has not kept pace. Organizations are deploying AI applications, agents, and workflows faster than their risk controls, policies, or compliance infrastructure can manage. While 88% of organizations now use AI in business operations, only 25% have comprehensive AI governance in place.

The consequences of that gap are tangible: regulatory exposure, operational failures from model drift and hallucinations, and direct legal liability. These aren't theoretical risks.

Air Canada was found liable for negligent misrepresentation after its AI chatbot provided incorrect information about bereavement fares. Earnest Operations LLC reached a $2.5 million settlement over allegations that its AI underwriting models produced disparate harm against protected groups. In both cases, the underlying technology worked; governance didn't.

If your organization is scaling AI and governance hasn't kept up, this guide is the starting point. It covers what responsible GenAI governance looks like in practice, the five pillars every enterprise deployment needs, the global regulatory frameworks shaping compliance requirements, and how to move beyond static policy documents to enforcement that works at runtime.

TLDR

- GenAI governance is operational infrastructure - not an optional policy exercise - for any enterprise deploying at scale

- Effective governance rests on five interdependent pillars: ethics and accountability, transparency, data governance, risk management, and lifecycle monitoring

- Key compliance anchors: NIST AI RMF (risk assessment), ISO/IEC 42001 (management systems), and the EU AI Act (legal obligations)

- The critical gap most enterprises miss: governance only works when enforced at runtime, not just written into policy documents

- Regulated industries face stricter obligations and need governance that generates audit-ready evidence automatically

Why Enterprise GenAI Governance Can't Wait

The governance maturity gap is substantial and measurable. While over 70% of organizations have generative or predictive AI in production, few are measuring its financial impact or investing for long-term transformation. More concerning, 82% of AI policy violations are discovered after the fact, not prevented in real time.

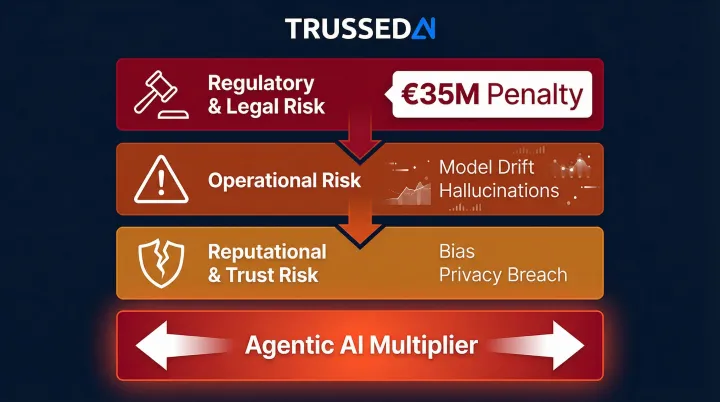

Three categories of risk emerge without governance:

Regulatory and Legal Risk: As AI laws like the EU AI Act and state-level regulations come into force, organizations without documented risk controls face substantial liability. For prohibited practices, the EU AI Act carries maximum penalties of up to €35 million or 7% of global annual turnover, whichever is higher. Companies that deployed AI without enterprise governance frameworks lost an average of $4.4 million per incident in 2025.

Operational Risk: Model drift, hallucinations, and unpredictable agentic behavior create production failures. Samsung experienced this firsthand when an engineer accidentally leaked sensitive internal source code by uploading it to ChatGPT, leading the company to ban AI chatbots across its workforce.

Reputational and Trust Risk: Biased outputs or privacy violations reaching customers erode trust and brand value. The exposure grows in direct proportion to how many customer interactions and business-critical decisions run through AI systems, meaning the longer governance is deferred, the larger the surface area for failure.

Each of these risks intensifies with agentic AI, where the attack surface expands from outputs to actions. 50% of organizations are piloting agentic AI, while 24% have it in production, yet only one in five companies has a mature governance model for autonomous AI agents. Agents that call APIs, modify data, and make decisions without human review don't just produce wrong answers; they take wrong actions, with consequences that can't always be reversed.

The Five Pillars of a Responsible GenAI Governance Framework

These five pillars are not independent checklists but interdependent controls that must work together across the full AI lifecycle - from model selection and data sourcing through deployment and decommissioning.

Ethics, Fairness, and Accountability

Fairness requires actively defining and testing for equitable outcomes across protected groups, not just assuming neutrality. NIST Special Publication 1270 identifies three major categories of AI bias: systemic, computational and statistical, and human-cognitive. Organizations must test for bias across all three dimensions.
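One way to turn "testing for equitable outcomes" into a repeatable check is to compare favorable-outcome rates across groups. The sketch below is an illustration, not a method prescribed by NIST SP 1270: it computes a disparate impact ratio, and the 0.8 review threshold is an assumption borrowed from the four-fifths rule of thumb used in US employment-selection guidance. The function names and sample data are hypothetical.

```python
from __future__ import annotations

# Assumption: ratios below 0.8 are flagged for human review (four-fifths heuristic).
REVIEW_THRESHOLD = 0.8


def selection_rates(outcomes: dict[str, list[int]]) -> dict[str, float]:
    """Share of favorable decisions (1) per group; 0 marks an unfavorable one."""
    return {
        group: sum(decisions) / len(decisions)
        for group, decisions in outcomes.items()
    }


def disparate_impact_ratio(outcomes: dict[str, list[int]]) -> float:
    """Lowest group selection rate divided by the highest."""
    rates = selection_rates(outcomes)
    highest = max(rates.values())
    if highest == 0:
        # Nobody received a favorable outcome, so there is no gap to measure.
        return 1.0
    return min(rates.values()) / highest


# Made-up decisions for two groups, for illustration only.
sample = {"group_a": [1, 1, 1, 0], "group_b": [1, 0, 0, 0]}
needs_review = disparate_impact_ratio(sample) < REVIEW_THRESHOLD
```

A single ratio like this only addresses the statistical category of bias. Systemic and human-cognitive bias need process reviews that a metric cannot replace.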

Accountability means assigning specific, named human owners to each AI system so there is always a responsible party when something goes wrong. The fundamental principle: AI cannot be held accountable; only people can. Organizations that assign clear ownership for Responsible AI exhibit the highest average maturity levels (2.6), while those without clearly accountable functions lag behind materially (1.8).

NIST also flags team diversity - across experience, expertise, and backgrounds - as a direct risk mitigant for the people building and deploying these systems. That human dimension carries directly into how transparent those systems need to be.

Transparency and Explainability

Transparency operates on two dimensions. Internal transparency requires documenting model architecture, training data sources, known limitations, and decision logic so teams can audit and reproduce results. This enables technical teams to understand how systems reach conclusions and identify failure modes before they surface in production.

External transparency means communicating to users and regulators what the AI system does, what data it uses, and what safeguards are in place. The EU AI Act Article 13 requires that high-risk AI systems be designed to ensure their operation is sufficiently transparent to enable deployers to interpret a system's output and use it appropriately.

The NIST AI RMF distinguishes explainability (representation of the mechanisms underlying AI systems' operation) from interpretability (the meaning of AI systems' output in the context of their designed functional purposes). Both are necessary for complete transparency.

Data Governance and Privacy

AI governance is only as strong as the data foundation it sits on. Poor data quality, unlabeled sensitive data, and unauthorized data reuse in training are primary sources of model failure and compliance violations.

Strong data governance requires:

- Classification of data by sensitivity level and regulatory requirements

- Lineage tracking to understand data origins, transformations, and usage

- Purpose limitation ensuring data is only used for authorized purposes

- PII protection aligned with GDPR, HIPAA, or other applicable regulations

Organizations must configure data residency and retention policies to meet evolving regulatory requirements as data crosses jurisdictional boundaries in cloud and multi-model deployments.
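The classification, purpose-limitation, and residency requirements above can be expressed as a pre-flight check that runs before any data reaches a model. This is a minimal sketch under stated assumptions: the sensitivity levels, the `DataAsset` and `ModelEndpoint` types, and the region strings are all hypothetical and would need to be mapped to an organization's own data catalog.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import IntEnum


class Sensitivity(IntEnum):
    # Hypothetical four-level scheme; higher means more restricted.
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3  # e.g. PII or PHI


@dataclass(frozen=True)
class DataAsset:
    name: str
    sensitivity: Sensitivity
    allowed_purposes: frozenset[str]  # purposes the data was collected for
    region: str                       # where the data must remain


@dataclass(frozen=True)
class ModelEndpoint:
    name: str
    max_sensitivity: Sensitivity  # highest level this endpoint is cleared for
    region: str                   # where inference runs


def check_data_use(asset: DataAsset, endpoint: ModelEndpoint, purpose: str) -> list[str]:
    """Return the list of violated rules; an empty list means the use is allowed."""
    violations = []
    if asset.sensitivity > endpoint.max_sensitivity:
        violations.append("sensitivity")
    if purpose not in asset.allowed_purposes:
        violations.append("purpose")
    if asset.region != endpoint.region:
        violations.append("residency")
    return violations
```

Returning every violated rule, rather than stopping at the first, gives reviewers the full picture in a single log entry.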

Risk Management and Security

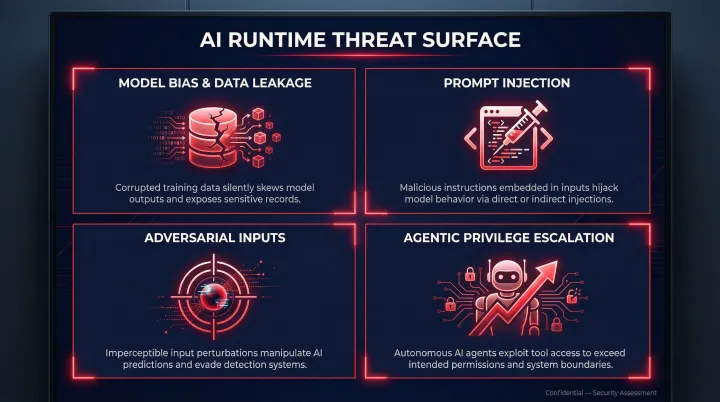

AI risk management is a continuous, structured process, not a one-time assessment. The threat surface spans multiple vectors:

- Model bias and data leakage from training data or inference outputs

- Prompt injection, where user prompts alter LLM behavior in unintended ways, including indirect injections via websites or external files

- Adversarial inputs designed to manipulate model outputs at runtime

- Agentic privilege escalation, where autonomous agents accumulate unintended permissions through policy drift or malicious manipulation

Security (protecting the AI stack from threats) and governance (deciding how AI is used and by whom) are complementary disciplines, not separate programs. Real-world incidents underscore why: a previously unknown vulnerability in OpenAI's ChatGPT allowed sensitive conversation data to be exfiltrated without user knowledge via a hidden DNS-based communication path.

Lifecycle Oversight and Continuous Monitoring

Governance must span the entire AI lifecycle from initial design through production operation to eventual retirement. Continuous monitoring must track model performance, fairness indicators, policy compliance, and data drift, with automated alerts and structured incident review processes to catch problems before regulators do.

Under the EU AI Act, post-market monitoring is a legal obligation, binding providers and deployers to continuous oversight long after the system has cleared its conformity assessment. Most enforcement risk sits in production because models drift and conditions change. Governance with no post-deployment monitoring cannot catch the problems regulators will eventually find.
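As an illustration of what an automated data-drift alert might look like, the sketch below computes the Population Stability Index (PSI) between a baseline sample and a production sample of one numeric feature. PSI is one common drift statistic, not something the EU AI Act or NIST mandates, and the 0.2 alert threshold is a widely used rule of thumb adopted here as an assumption.

```python
from __future__ import annotations

import math

ALERT_THRESHOLD = 0.2  # assumption: common rule-of-thumb cutoff for significant drift


def psi(expected: list[float], actual: list[float], bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index of `actual` against the `expected` baseline."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    width = ((hi - lo) / bins) or 1.0  # avoid a zero-width bin for constant data

    def fractions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm below stays defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = fractions(expected), fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_alert(expected: list[float], actual: list[float]) -> bool:
    return psi(expected, actual) > ALERT_THRESHOLD
```

In practice a check like this would run on a schedule per feature and feed the structured incident review process, alongside separate checks for performance and fairness indicators.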

Key Global AI Governance Frameworks Every Enterprise Should Know

Multiple international standards and regulatory frameworks now exist, and no single one is universal. Which ones apply depends on geography, industry, and AI use case risk level.

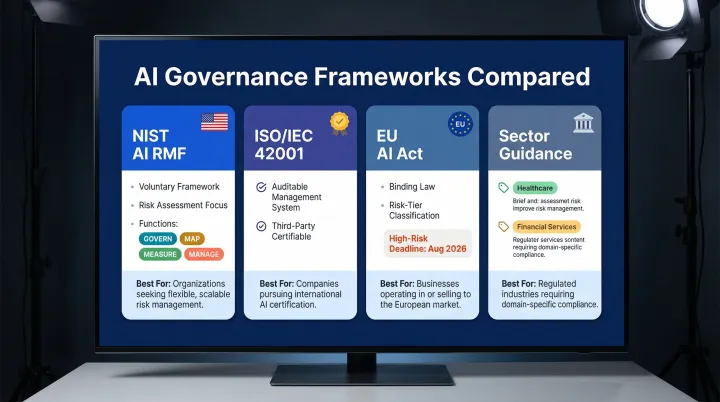

NIST AI Risk Management Framework

The NIST AI RMF 1.0, published in 2023, is voluntary and structured around four core functions: GOVERN, MAP, MEASURE, and MANAGE. It articulates the characteristics of trustworthy AI systems as: valid and reliable, safe, secure and resilient, accountable and transparent, explainable and interpretable, privacy-enhanced, and fair with harmful bias managed.

NIST AI RMF suits organizations starting their governance journey, particularly US-based ones. It's flexible, non-certifiable, and gives teams a structured starting point for risk assessment and policy planning.

ISO/IEC 42001

ISO/IEC 42001, published in 2023, is the world's first AI management system standard with a formal certification pathway. It provides a structured way to manage risks and opportunities associated with AI through an auditable management system.

ISO/IEC 42001 suits organizations that need demonstrable compliance credentials and formal third-party certification, particularly when customers or regulators require documented evidence of governance maturity.

EU AI Act

The EU AI Act (Regulation 2024/1689) is binding law that classifies AI systems by risk tier and mandates specific controls for high-risk applications. Prohibitions apply from February 2025, with high-risk AI rules applying from August 2026.

The Act is relevant for any enterprise with EU operations or customers. AI systems in the Act's Annex III use-case areas that perform profiling of natural persons are always considered high-risk, triggering substantial compliance obligations.

Complementary Standards and Sector Guidance

OECD AI Principles and UNESCO AI Ethics Recommendations provide high-level value commitments that underpin technical frameworks. NIST has published crosswalk documents mapping its framework to ISO/IEC 42001 and OECD principles, enabling enterprises to align multiple standards without duplicating effort.

Regulated industries should also map to sector-specific guidance. Key resources include:

- Healthcare: The Joint Commission and Coalition for Health AI's "Responsible Use of AI in Healthcare" (September 2025) provides non-binding guidance for clinical and health system contexts.

- Financial Services: The OCC's "Model Risk Management" booklet offers supervisory-aligned guidance for banks and financial institutions.

Practical Starting Point

With several overlapping frameworks in play, the question isn't which one is "right" but where to start. Organizations new to formal AI governance should begin with NIST AI RMF for risk assessment and policy planning, then layer in ISO/IEC 42001 controls if industry requirements or customer expectations call for third-party certification.

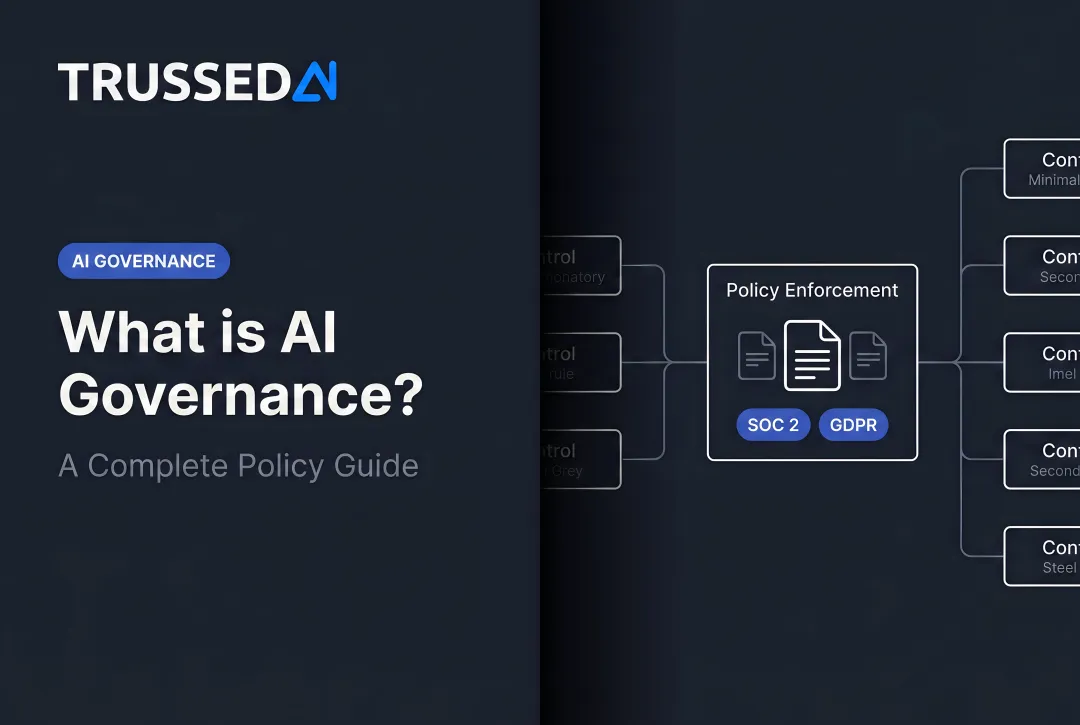

From Static Governance Policies to Real-Time Enforcement

Well-intentioned policy documents, ethics principles, and framework assessments do not automatically prevent a model from generating a harmful output, a developer from bypassing a data access control, or an agent from taking an unauthorized action in production. 50% of executives cite translating Responsible AI principles into operational processes as their biggest barrier to progress.

Governance only works when it is enforced at the point of execution, not just documented in a handbook.

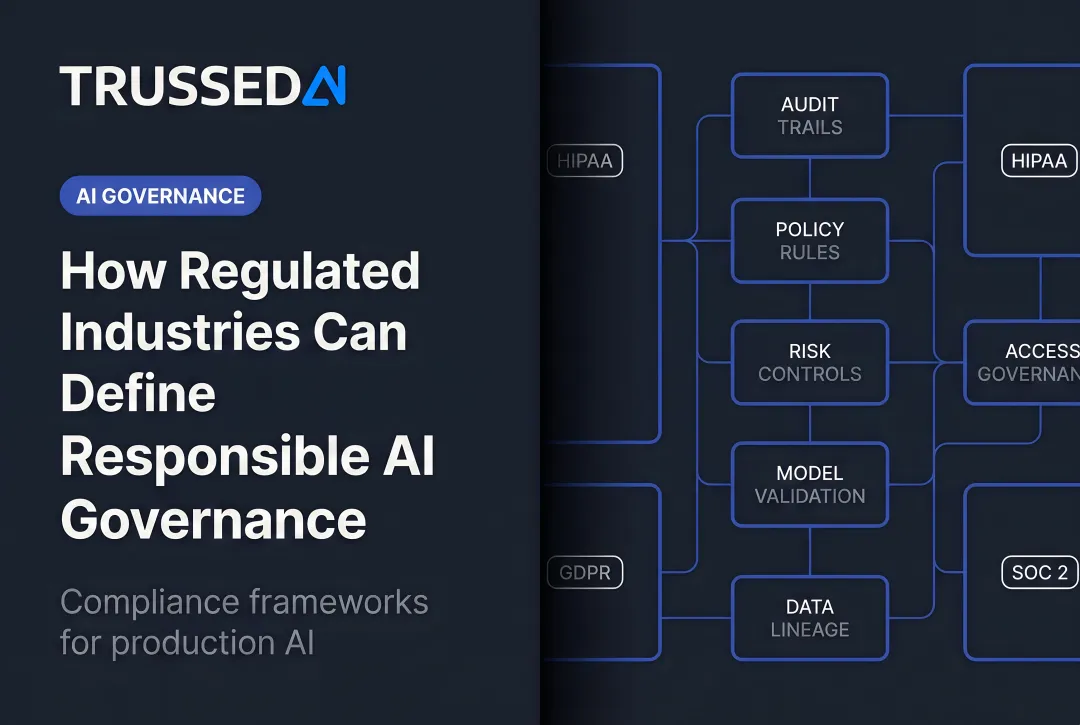

Runtime Governance Enforcement

Runtime governance means policies are evaluated in real time for every interaction, not reviewed periodically in audits after the fact. That includes:

- Which models can be used for which use cases

- What data can be passed to which models

- What content filters and output screens apply

- Which actions agents are permitted to take in production

This requires technical infrastructure that sits between applications, agents, and AI models, enforcing policies at runtime, maintaining complete audit trails, and generating compliance evidence as a byproduct of normal operations.

Trussed AI's control plane delivers this at sub-20ms latency with drop-in proxy integration that requires zero changes to application code, turning governance from a manual, retrospective activity into an automated, continuous one.
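To make the runtime policy questions above concrete, here is a minimal sketch of a policy check evaluated before a request is forwarded to a model. It is not Trussed AI's or any other vendor's API: the `Request` shape, the policy table, and every model, label, and action name are hypothetical. Output screening is omitted to keep the example short.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Request:
    use_case: str
    model: str
    data_labels: frozenset      # classifications attached to the prompt data
    action: Optional[str] = None  # tool or API call an agent wants to make


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reasons: tuple


# Hypothetical policy table: one entry per approved use case.
POLICY = {
    "support_chat": {
        "models": {"model-a"},
        "blocked_labels": {"pii", "source_code"},
        "actions": {"lookup_order"},
    },
}


def evaluate(req: Request, policy: dict = POLICY) -> Decision:
    """Default-deny evaluation: unknown use cases are rejected outright."""
    rules = policy.get(req.use_case)
    if rules is None:
        return Decision(False, ("unknown use case",))
    reasons = []
    if req.model not in rules["models"]:
        reasons.append(f"model {req.model!r} not approved for {req.use_case!r}")
    blocked = req.data_labels & rules["blocked_labels"]
    if blocked:
        reasons.append(f"blocked data labels: {sorted(blocked)}")
    if req.action is not None and req.action not in rules["actions"]:
        reasons.append(f"action {req.action!r} not permitted")
    return Decision(not reasons, tuple(reasons))
```

The design choice worth noting is default deny: anything the policy table does not explicitly allow is rejected, and every rejection carries its reasons so the decision can be written straight into an audit record.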

The Audit Trail Problem

Regulators and internal auditors increasingly expect enterprises to demonstrate governance compliance with evidence, not assertions. Organizations that rely on periodic manual audits face both higher workload and higher risk of gaps.

Governance infrastructure that generates structured, tamper-evident logs of every AI interaction as a side effect of normal operation addresses this without creating additional overhead. Every policy evaluation, model version, timestamp, and data lineage is captured automatically, enabling organizations to trace any decision on demand with complete chain of custody from prompt to model to output to action.
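One common way to make a log tamper-evident is a hash chain, where each entry's hash covers the previous entry's hash. The sketch below is an assumption about how such a log could be built, not a description of any specific product. The record fields are hypothetical.

```python
from __future__ import annotations

import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(prev_hash: str, record: dict) -> str:
    # Canonical JSON so the same record always hashes to the same value.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list, record: dict) -> dict:
    """Append a record, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"record": record, "prev_hash": prev_hash, "hash": _digest(prev_hash, record)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited, removed, or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this only shows that entries are internally consistent. To detect wholesale replacement or truncation of the log, the most recent hash also needs to be stored somewhere the log writer cannot modify.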

Cost and Velocity Dimension

That audit efficiency also has a direct cost implication. Ungoverned AI deployments accumulate technical and compliance debt that becomes increasingly expensive to address as deployments scale. Organizations that embed governance from the start reduce compliance costs and accelerate new AI use cases, because teams can ship with confidence rather than waiting for manual reviews.

Building Your Enterprise AI Governance Roadmap

Organizational Foundations First

The first governance investments should be structural: assigning clear AI ownership (whether a dedicated AI governance board, a CAIO role, or a cross-functional committee), defining risk tolerance and acceptable use policies, and inventorying all AI systems and use cases currently in production or development.

Governance programs that skip this step and jump straight to tooling typically fail: no one has authority to enforce the policies the tools are meant to implement. Research shows AI is actively reinforcing functional silos, with departments retreating into their own AI-powered worlds as performance suffers. Clear ownership and cross-functional coordination aren't optional extras; they're what make enforcement possible.

Risk-Tiered Approach

Not all AI systems require the same level of governance overhead. Categorize use cases by risk level:

- Low-risk productivity tools (internal summarization, draft generation)

- Medium-risk customer-facing applications (chatbots, recommendation engines)

- High-risk decision-making systems (credit decisions, healthcare diagnostics, employment screening)

Allocate governance controls to match that risk profile: harden oversight on high-risk, high-impact systems first, then scale controls across the rest of the portfolio.
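The three tiers above can be encoded so that every inventoried use case gets a tier and a control set automatically. This is a sketch under stated assumptions: the classification rule, the list of high-risk domains, and the control names are illustrative choices, not requirements taken from any framework.

```python
from __future__ import annotations

from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Assumption: domains where AI decisions materially affect individuals.
HIGH_RISK_DOMAINS = {"credit", "healthcare", "employment"}

# Illustrative controls; each tier inherits everything from the tier below it.
_LOW = frozenset({"usage_logging", "acceptable_use_policy"})
_MEDIUM = _LOW | {"output_filtering", "pre_release_review"}
_HIGH = _MEDIUM | {"human_in_the_loop", "bias_testing", "full_audit_trail"}

CONTROLS = {RiskTier.LOW: _LOW, RiskTier.MEDIUM: _MEDIUM, RiskTier.HIGH: _HIGH}


def classify(customer_facing: bool, domain: str) -> RiskTier:
    """Decision-making in a high-risk domain outranks every other attribute."""
    if domain in HIGH_RISK_DOMAINS:
        return RiskTier.HIGH
    if customer_facing:
        return RiskTier.MEDIUM
    return RiskTier.LOW


def required_controls(customer_facing: bool, domain: str) -> frozenset:
    return CONTROLS[classify(customer_facing, domain)]
```

Keeping the tiers cumulative means a system that moves up a tier never loses a control it already had.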

Maturity Progression

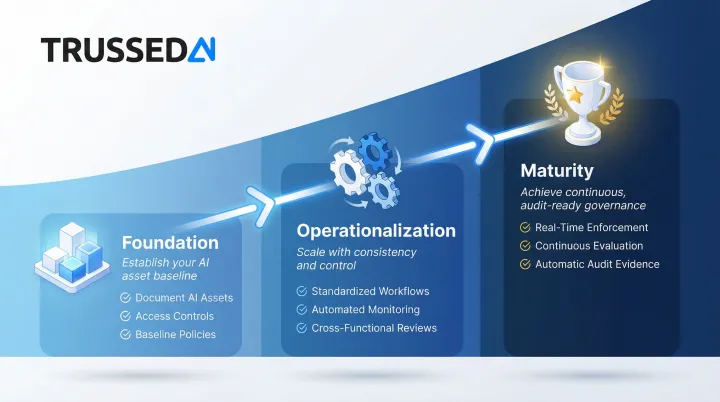

Plan for a three-phase approach rather than a one-time implementation:

Phase 1 - Foundation: Document AI assets, establish access controls, define baseline policies. The goal here is visibility and basic risk controls before anything else.

Phase 2 - Operationalization: Implement standardized workflows, automate monitoring, introduce cross-functional governance reviews. Governance gets embedded into development and deployment processes rather than bolted on afterward.

Phase 3 - Maturity: Continuous model evaluation, reproducible pipelines, real-time enforcement, and automatic audit evidence generation. At this stage, governance runs as infrastructure, producing compliance evidence as a byproduct of normal operations.

Each phase has predictable failure modes worth anticipating:

- Treating governance as a single team's responsibility rather than an organization-wide function

- Underinvesting in data quality, which undermines model reliability regardless of policy strength

- Writing policies that exist on paper without technical enforcement to back them up

Governance Metrics

Define metrics that give governance programs measurable targets:

- Reduction in compliance violations

- Mean time to detect and remediate model issues

- Documentation completeness rates

- Shortened review cycles for new AI use cases

- Audit readiness scores

Organizations should monitor post-implementation metrics including fairness, accuracy, transparency, robustness, bias, compliance, and security to ensure governance delivers measurable outcomes.
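Metrics such as mean time to detect and remediate only work as targets if they are computed the same way every time. The sketch below shows one possible definition, assuming each incident record carries three timestamps. The `Incident` type and its field names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Incident:
    occurred: datetime    # when the model issue began
    detected: datetime    # when monitoring or a person noticed it
    remediated: datetime  # when the fix was in production


def _mean(deltas: list[timedelta]) -> timedelta:
    if not deltas:
        raise ValueError("no incidents to average")
    return sum(deltas, timedelta()) / len(deltas)


def mean_time_to_detect(incidents: list[Incident]) -> timedelta:
    return _mean([i.detected - i.occurred for i in incidents])


def mean_time_to_remediate(incidents: list[Incident]) -> timedelta:
    # Measured from detection, so slow detection does not inflate this figure.
    return _mean([i.remediated - i.detected for i in incidents])
```

Tracking the two figures separately shows whether improvement effort should go into monitoring coverage or into the remediation workflow.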

Frequently Asked Questions

What is the difference between AI governance and AI security?

AI governance defines how decisions are made about AI development, use, and oversight, including policies, accountability structures, and ethical standards. AI security protects the AI stack, data, and infrastructure from threats and unauthorized access. Both are required for responsible deployment; neither alone is sufficient.

Which AI governance framework should my enterprise start with: NIST AI RMF or ISO/IEC 42001?

NIST AI RMF is the better starting point for organizations new to governance because it is flexible, voluntary, and focused on risk assessment without requiring certification. ISO/IEC 42001 is better suited for organizations that need a formal, auditable management system with a certification pathway. Many enterprises use both in combination: NIST for risk methodology and ISO/IEC 42001 for certifiable management systems.

What are the biggest risks of deploying GenAI without a governance framework?

Three primary risk categories emerge: regulatory and legal liability as AI laws tighten globally, operational failures from model drift, hallucinations, and agentic misbehavior in production, and reputational damage from biased or harmful outputs reaching customers or regulators. According to recent industry estimates, organizations without governance frameworks lost an average of $4.4 million per incident in 2025.

How should governance frameworks account for agentic AI systems?

Agentic AI (systems that autonomously take actions, call APIs, and make decisions without human review) requires governance controls beyond content filtering. Organizations need permission boundaries, runtime enforcement before execution occurs, and audit trails covering every agent action, including agent-to-agent communication, shared memory access, and inter-system handoffs.

How do regulated industries like healthcare and financial services approach AI governance differently?

Regulated industries operate under binding compliance obligations (HIPAA, SOX, state AI laws, financial regulator guidance) that go beyond general governance best practices, requiring mandatory human-in-the-loop checkpoints for high-stakes decisions and audit trails that satisfy external examiners. Critically, organizations must generate audit-ready evidence automatically, not reconstruct it after a regulatory inquiry arrives.

How can an enterprise enforce AI governance policies in real time rather than just on paper?

Runtime enforcement requires a governance layer (often called an AI control plane or AI gateway) that evaluates and enforces policies at the point of every model interaction, agent action, or API call, rather than relying on periodic audits or developer self-attestation. A governance layer can generate compliance evidence automatically as a byproduct of this enforcement, creating continuous assurance rather than point-in-time audits.