The old CCM model alerts after a violation happens. AI systems need enforcement at the moment they act. This article explains why AI systems require a fundamentally different approach to continuous control monitoring, what AI-native CCM capabilities look like, and what the future holds for organizations in regulated industries.

TLDR

- AI-powered CCM shifts focus from static controls to dynamic model behavior, agent decisions, and real-time outputs

- Each inference, agent action, and workflow step can trigger a compliance violation. Monitoring must match that pace

- Runtime enforcement is where CCM is headed: blocking non-compliant AI behavior before exposure occurs

- Building AI-native governance now positions organizations to meet the EU AI Act, NIST AI RMF, and future mandates

What Is Continuous Control Monitoring?

Continuous Control Monitoring (CCM) is the ongoing, automated process of testing, validating, and enforcing an organization's controls in real or near-real time. Both major authorities align on the definition: ISACA describes it as enabling "near continuous (or at least high-frequency) monitoring of control operating effectiveness," while Gartner defines CCM software as platforms that "automatically and continuously test and verify the effectiveness of an organization's internal controls in real or near-real time."

Core components include:

- Control objectives aligned to business risk

- Automated testing and validation

- Real-time alerting and notifications

- Automated evidence generation

- Remediation workflows and tracking

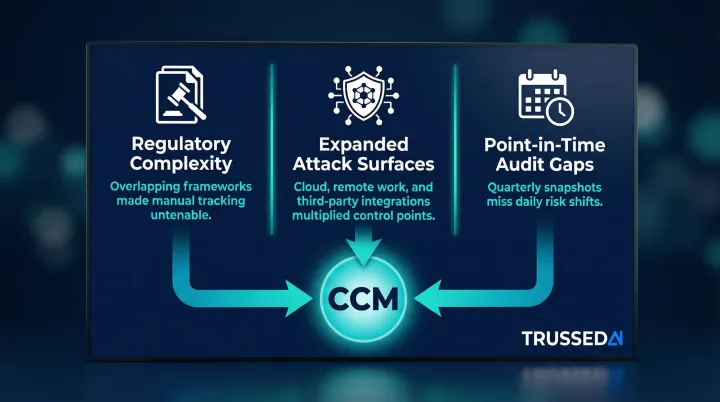

Traditional CCM has focused primarily on IT security controls: access management, patch status, configuration changes, and infrastructure monitoring. Three converging pressures pushed organizations toward continuous monitoring.

Why CCM Emerged

- Regulatory complexity multiplied. Compliance frameworks proliferated across industries with overlapping but distinct requirements, making manual tracking untenable.

- Attack surfaces expanded. Cloud adoption, remote work, and third-party integrations created far more control points than periodic audits could cover.

- Point-in-time audits fell short. Quarterly or annual reviews capture a single snapshot while the underlying risk environment shifts daily.

Major frameworks now explicitly require continuous monitoring:

- NIST SP 800-53 Rev. 5 Control CA-7 mandates "ongoing monitoring of system and organization-defined metrics"

- Information security management standards require organizations to determine "what needs to be monitored and measured" to evaluate security management system performance

- Audit attestation standards verify control effectiveness over sustained periods, requiring ongoing evidence rather than point-in-time snapshots

Why AI Systems Demand a New Kind of CCM

Traditional controls have binary states: patched or unpatched, encrypted or not encrypted, access granted or denied. AI outputs are probabilistic and context-dependent. A model can be technically "deployed correctly" while still producing outputs that violate data privacy policies, generate biased decisions, or expose sensitive information, and none of that shows up in traditional CCM dashboards.

The Governance Gap Created by Rapid AI Adoption

Enterprise AI adoption is outpacing governance maturity. While 88% of organizations report regular AI use in at least one business function, the majority remain in piloting stages. The governance gap is severe: 63% of breached organizations either lack an AI governance policy or are still developing one, and only 34% perform regular audits for unsanctioned AI.

This gap reflects the founding insight behind Trussed AI: organizations are deploying AI across products, agents, and internal tools faster than existing governance frameworks can manage.

The Expanded Compliance Surface AI Agents Create

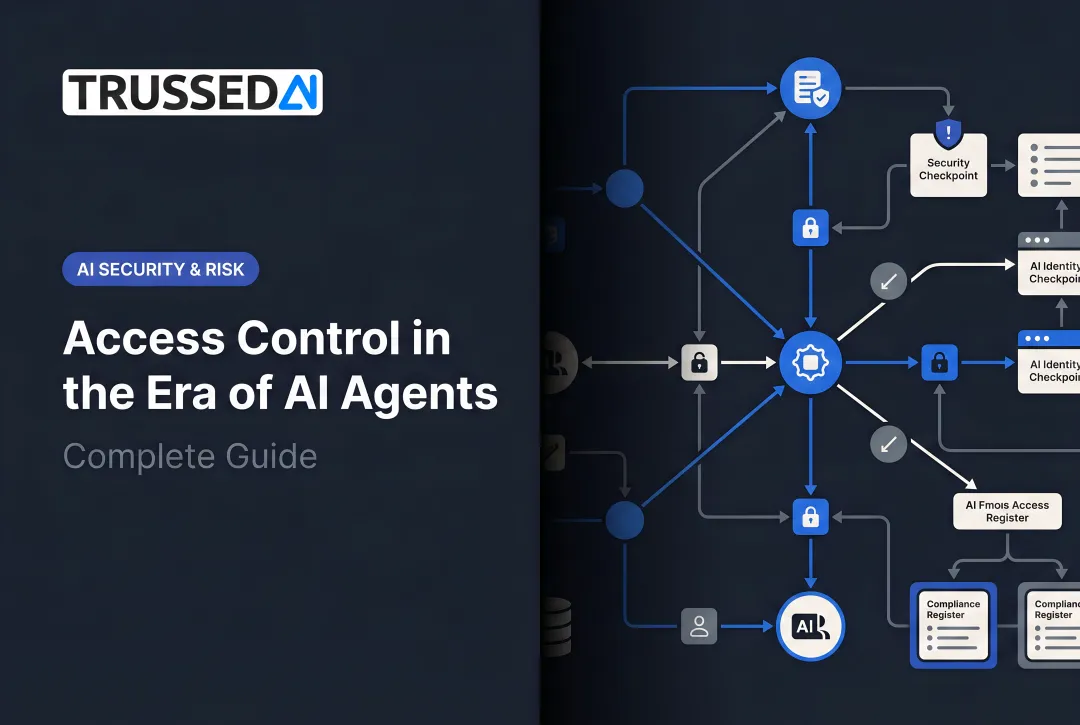

Unlike static applications, AI agents take multi-step actions autonomously, querying databases, calling APIs, generating customer communications, and making routing decisions. Each step is a potential compliance event.

Consider a healthcare AI agent summarizing patient data:

The agent receives a query, retrieves records from the EHR system, synthesizes information across multiple visits, and returns a summary to the clinician. At each step, including data retrieval, synthesis, and output generation, it could inadvertently surface Protected Health Information (PHI) in ways that violate HIPAA.

Traditional CCM tools monitor whether the EHR database has proper access controls. They don't watch what the agent does with that data at the inference layer.
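To make the inference-layer gap concrete, here is a minimal sketch of checking the text at each step of the healthcare agent's workflow. The pattern names, the `check_step` and `check_workflow` helpers, and the sample strings are all hypothetical, and two regular expressions are nowhere near sufficient for real PHI detection; the point is only that the check runs on what the agent produces, not on the database's access controls.

```python
import re
from dataclasses import dataclass

# Hypothetical, illustrative patterns only. Real PHI detection needs far
# more than a couple of regular expressions.
PHI_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "mrn": re.compile(r"\bMRN[:\s]*\d{6,10}\b", re.IGNORECASE),
}


@dataclass
class StepCheck:
    step: str        # workflow step name, e.g. "retrieval"
    findings: list   # names of the patterns that matched


def check_step(step: str, text: str) -> StepCheck:
    """Scan the text produced at one workflow step for PHI-like patterns."""
    findings = [name for name, pattern in PHI_PATTERNS.items() if pattern.search(text)]
    return StepCheck(step=step, findings=findings)


def check_workflow(steps: dict) -> list:
    """Check every step of the agent's workflow, in order."""
    return [check_step(name, text) for name, text in steps.items()]


results = check_workflow({
    "retrieval": "Visit notes for MRN: 00123456, follow-up in 2 weeks",
    "synthesis": "Patient has had three visits for hypertension",
    "output": "Summary: stable; SSN on file 123-45-6789",
})
flagged = [r.step for r in results if r.findings]
print(flagged)
```

In this sketch the retrieval and output steps are flagged while synthesis passes, which is the kind of per-step signal an access-control check on the EHR database would never produce.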

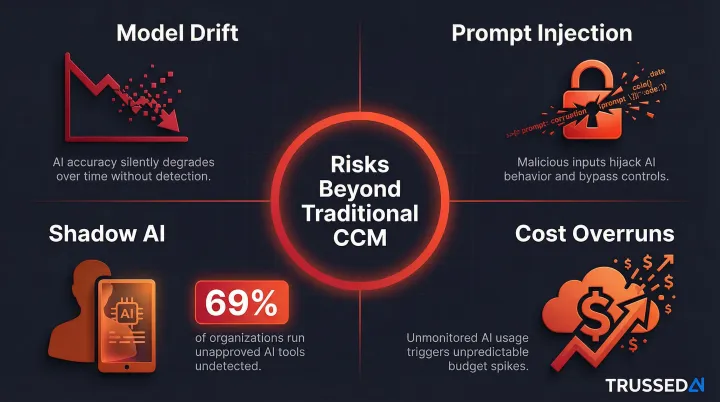

New Risk Categories Unique to AI

AI systems introduce risk categories that traditional IT controls cannot address:

- Model drift: outputs shift as models are updated or fine-tuned, degrading performance or introducing bias over time

- Prompt injection: adversarial inputs manipulate model behavior to bypass controls or extract sensitive information

- Shadow AI: 69% of organizations suspect or have confirmed employees using prohibited public GenAI, and one in five reported breaches tied to shadow AI

- Cost overruns: uncontrolled inference usage drives unpredictable cloud bills as teams experiment with models

These risks require monitoring that operates at the AI inference layer, not the network or infrastructure layer.

Trussed AI addresses this by enforcing policies at the inference layer in real time, detecting model drift, blocking prompt injection attempts, flagging unauthorized model usage, and attributing inference costs to teams and applications, so each of these risk categories has an active control rather than a retrospective alert.

Regulatory Pressure Building Around AI

Regulators are beginning to expect the same evidence-based compliance documentation for AI systems that they've long required for financial controls.

EU AI Act: Regulation (EU) 2024/1689 imposes strict lifecycle monitoring obligations for high-risk AI systems, with general prohibitions applying from February 2025 and high-risk obligations from August 2026. Article 12 mandates that systems "technically allow for the automatic recording of events (logs) over the lifetime of the system." Article 72 requires providers to establish systems that "actively and systematically collect, document and analyse relevant data" on system performance.

NIST AI RMF 1.0: The NIST AI Risk Management Framework emphasizes continuous monitoring across its core functions, requiring "ongoing monitoring and periodic review of the risk management process" (GOVERN 1.5) and mandating that "functionality and behavior of the AI system and its components are monitored when in production" (MEASURE 2.4).

Sector-Specific Mandates: The Federal Reserve's SR 11-7 guidance requires ongoing monitoring to "confirm that the model is appropriately implemented and is being used and performing as intended." The FDA's Predetermined Change Control Plans require that "deployed models are monitored for performance and re-training risks are managed."

For regulated industries, AI-specific CCM is no longer a best practice. It's an audit requirement.

How AI Transforms CCM: From Reactive Alerts to Runtime Enforcement

Traditional CCM tells you a control failed after the fact. AI-native CCM enforces policies at the moment of AI interaction, blocking a response that would violate a data handling policy, rerouting a request to a compliant model, or flagging an agent action before it executes. Think of it as the difference between a smoke alarm and a fire suppression system: one notifies, the other acts.
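The three enforcement outcomes described above (block, reroute, flag) can be sketched as a single decision function. This is an illustrative sketch under stated assumptions, not a description of any vendor's implementation: the `Request` fields, the `APPROVED_MODELS_FOR_SENSITIVE` set, and the order in which the rules are checked are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    BLOCK = "block"
    REROUTE = "reroute"
    FLAG = "flag"


@dataclass
class Request:
    model: str
    contains_sensitive_data: bool
    is_agent_action: bool = False
    high_risk: bool = False


# Hypothetical allow-list of models cleared to handle sensitive data.
APPROVED_MODELS_FOR_SENSITIVE = {"internal-compliant-model"}


def enforce(req: Request, response_violates_policy: bool = False) -> Action:
    """Decide what happens to one AI interaction before it completes."""
    # A response that breaks a data handling policy never reaches the user.
    if response_violates_policy:
        return Action.BLOCK
    # Sensitive data bound for an unapproved model is sent to a compliant one.
    if req.contains_sensitive_data and req.model not in APPROVED_MODELS_FOR_SENSITIVE:
        return Action.REROUTE
    # High-risk agent actions are held for review before they execute.
    if req.is_agent_action and req.high_risk:
        return Action.FLAG
    return Action.ALLOW


print(enforce(Request(model="public-llm", contains_sensitive_data=True)))
```

The decision is returned before the interaction completes, which is what separates enforcement from alerting: an alerting system would log the same conditions after the response had already been delivered.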

Automated Evidence Generation as a Structural Advantage

When every AI interaction passes through a governed control layer, the governed layer produces audit trails automatically, with no manual assembly required before an audit. Each prompt, response, policy decision, and exception becomes a timestamped, structured record.
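As a rough illustration of what a timestamped, structured record might look like, the sketch below builds one record per interaction and links each record to the previous one by hash so that later edits are detectable. The field names, the choice to store content hashes rather than raw text, and the hash chaining are assumptions made for this example, not a documented format.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def make_audit_record(prompt: str, response: str, policy_decision: str,
                      exception: Optional[str] = None, prev_hash: str = "") -> dict:
    """Build one structured audit record for a governed interaction."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        # Store hashes instead of raw text so the log itself holds no sensitive data.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "response_sha256": hashlib.sha256(response.encode("utf-8")).hexdigest(),
        "policy_decision": policy_decision,
        "exception": exception,
        "prev_hash": prev_hash,
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["record_hash"] = hashlib.sha256(payload).hexdigest()
    return record


def verify_record(record: dict) -> bool:
    """Recompute the hash to confirm the record has not been altered."""
    body = {k: v for k, v in record.items() if k != "record_hash"}
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest() == record["record_hash"]


first = make_audit_record("Summarize visit history", "Summary text", "block",
                          exception="phi_detected")
second = make_audit_record("List open claims", "Claims text", "allow",
                           prev_hash=first["record_hash"])
print(json.dumps(second, indent=2))
```

Because each record is produced at the moment of the interaction, assembling evidence for an audit becomes a query over existing records rather than a reconstruction exercise.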

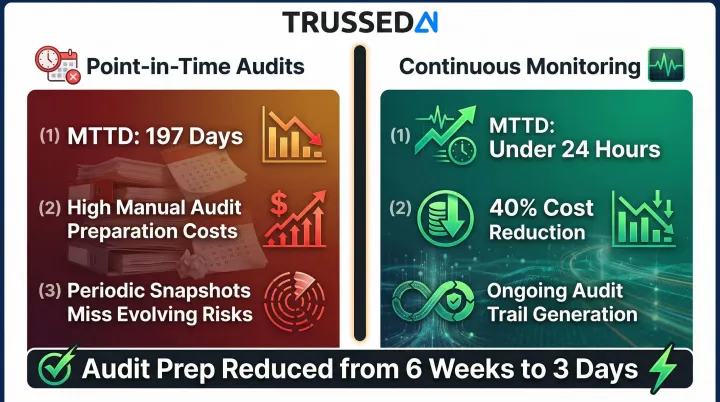

Trussed AI's platform generates governance evidence as a byproduct of every governed interaction. Organizations demonstrate compliance continuously rather than scrambling at audit time. Implementing continuous monitoring reduces audit preparation time from 6 weeks to 3 days.

Machine Learning Enhances Anomaly Detection

AI-powered monitoring establishes behavioral baselines for models and agents, then flags deviations invisible to rule-based systems:

- A model suddenly generating outputs with PII patterns

- An agent accessing data outside its normal scope

- A cost spike indicating runaway inference

- Output quality degradation suggesting model drift

Rule-based thresholds miss these signals entirely, because the signals emerge across thousands of interactions rather than in individual events.
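The baseline-then-deviation idea can be illustrated with a deliberately simple statistical stand-in. The sketch below uses a z-score against an initial baseline window rather than a trained model, and the metric (a daily share of outputs containing PII-like patterns), the window size, and the threshold are all hypothetical values chosen for the example.

```python
from statistics import mean, stdev


def find_deviations(values, baseline_size=7, threshold=3.0):
    """Return indexes of values that deviate sharply from the baseline window.

    The first `baseline_size` values define normal behavior; each later
    value is scored by how many standard deviations it sits from the mean.
    """
    baseline = values[:baseline_size]
    mu = mean(baseline)
    sigma = stdev(baseline)
    flagged = []
    for i, value in enumerate(values[baseline_size:], start=baseline_size):
        if sigma > 0 and abs(value - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Hypothetical daily share of model outputs containing PII-like patterns.
daily_pii_rate = [0.010, 0.012, 0.011, 0.009, 0.010, 0.011, 0.012,
                  0.011, 0.035, 0.010]
print(find_deviations(daily_pii_rate))
```

Only the day with the jump to 0.035 is flagged. The baseline here is aggregated over many interactions, so the deviation is visible even though no single output would have tripped a per-event rule; a production system would likely use richer features and a learned baseline.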

Predictive Risk Capabilities

AI systems analyzing historical control data can forecast which controls are likely to degrade and which workflows are approaching policy thresholds. Compliance gaps surface before they become violations, not after.

That predictive posture also changes how human reviewers spend their time. Rather than investigating past failures, teams are directed toward emerging risks while they're still manageable.

The Human-in-the-Loop Requirement

Effective AI-powered CCM focuses human attention rather than replacing it. Automated enforcement handles routine policy application at speed and scale, while escalation workflows surface genuine exceptions requiring human judgment. Key performance benchmarks include:

- Inline enforcement decisions: under 20ms latency

- Compliance violation rate: below 1% in production deployments

- Governance workload reduction: up to 50% less manual overhead

This structure preserves the human-in-the-loop principle regulators require without creating operational bottlenecks.

AI-Powered CCM in Practice: Key Use Cases

Regulated Industry AI Compliance

Healthcare, insurance, and financial services require monitoring of AI outputs for PHI exposure, discriminatory patterns, and regulatory violations. 71% of U.S. hospitals reported using predictive AI in 2024, but entering PHI into tools like ChatGPT without a Business Associate Agreement constitutes unauthorized disclosure under HIPAA.

Financial services face similar scrutiny. Approximately 70% of financial services firms use AI for credit scoring and fraud detection, with regulators tightening expectations on how those outputs are explained and governed:

- The CFPB requires lenders to provide specific behavioral details for credit denials, rejecting generic explanations

- The SEC has penalized firms for "AI washing," resulting in $400,000 in civil penalties in 2024

Monitoring model outputs in production, not just reviewing configurations at deployment, is what regulators now expect. Trussed AI addresses this by intercepting AI outputs before they reach end users, applying data handling rules and bias filters at inference time, and generating the structured audit evidence that regulators in healthcare and financial services require without adding manual compliance overhead.

AI Agent and Agentic Workflow Governance

By 2023, 35% of organizations had adopted AI agents, and Gartner predicts that 40% of enterprise applications will feature task-specific AI agents by 2026. As organizations deploy multi-agent systems that take autonomous actions, CCM must track decision chains across entire workflows.
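One way to picture tracking a decision chain across a workflow is to give every agent step a shared trace ID and a pointer to the step that triggered it. The `DecisionChain` class, its field names, and the sample agents below are hypothetical and meant only as a sketch of the idea.

```python
import uuid
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AgentStep:
    trace_id: str                   # shared by every step in one workflow
    step_id: str
    parent_step_id: Optional[str]   # the step that triggered this one
    agent: str
    action: str
    allowed: bool                   # outcome of the policy check for this step
    timestamp: str


class DecisionChain:
    """Collects the steps of one multi-agent workflow under a single trace."""

    def __init__(self):
        self.trace_id = uuid.uuid4().hex
        self.steps = []

    def record(self, agent, action, allowed, parent=None) -> AgentStep:
        step = AgentStep(
            trace_id=self.trace_id,
            step_id=uuid.uuid4().hex,
            parent_step_id=parent.step_id if parent else None,
            agent=agent,
            action=action,
            allowed=allowed,
            timestamp=datetime.now(timezone.utc).isoformat(),
        )
        self.steps.append(step)
        return step

    def lineage(self, step: AgentStep) -> list:
        """Walk parent links back to the workflow's first step."""
        by_id = {s.step_id: s for s in self.steps}
        path = [step]
        while path[-1].parent_step_id is not None:
            path.append(by_id[path[-1].parent_step_id])
        return list(reversed(path))


chain = DecisionChain()
plan = chain.record("planner", "route_request", allowed=True)
fetch = chain.record("retriever", "query_database", allowed=True, parent=plan)
send = chain.record("writer", "send_customer_email", allowed=False, parent=fetch)
print([s.agent for s in chain.lineage(send)])
```

When a step is denied, the lineage shows which upstream agents and actions led to it, which is the information a reviewer needs to judge the whole workflow rather than a single call.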

"Control effectiveness" in agentic systems means monitoring an agent that can browse, retrieve, generate, and act, ensuring governance applies across agent-to-agent communication, shared memory access, and inter-system handoffs. Trussed AI addresses this by providing a unified control plane that tracks decision chains across multi-agent workflows, enforcing policies at each step and producing a timestamped record of every agent action for audit review.

Internal Developer Tool and Shadow AI Governance

Many compliance teams focus on customer-facing AI while overlooking AI tools engineers use internally, such as code assistants and LLM-based dev tools. The exposure is significant:

- 41% of employees have used AI in ways that contravene policies

- 38% acknowledge sharing sensitive work information with AI tools without permission

CCM that extends into developer environments catches data leakage, IP exposure, and policy violations at the source, before they reach production. Trussed AI addresses this by extending its proxy-based governance layer to internal developer tools, applying the same runtime controls to code assistants and LLM-based dev environments that it applies to production-facing AI applications.

Building an AI-Ready CCM Program

Start with a Control Inventory for AI Systems

Before monitoring can begin, organizations need visibility into what AI systems they're actually running: models, APIs, agents, third-party integrations, and internal developer tools. Many enterprises discover during this process that they have significantly more AI surface area than governance teams were tracking.

Organizations with high levels of shadow AI experienced breach costs averaging $670,000 higher than those with low or no shadow AI.

Define Policy-as-Code

Translate regulatory requirements, internal governance standards, and risk appetite into machine-readable, enforceable rules that can be applied at runtime. Policies that live in documents cannot be executed; policies encoded as rules can be enforced at every AI interaction.
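A minimal sketch of the idea, assuming a simple "when these conditions hold, require these values" rule shape: the policy IDs, attribute names, and the `violations` helper are hypothetical, and real policy engines typically offer a much richer rule language.

```python
# Each policy is plain data: when the "when" conditions match an interaction,
# the "require" conditions must also hold.
POLICIES = [
    {
        "id": "phi-internal-only",
        "when": {"data_class": "phi"},
        "require": {"model_hosting": "internal"},
    },
    {
        "id": "eu-data-residency",
        "when": {"user_region": "eu"},
        "require": {"processing_region": "eu"},
    },
]


def matches(conditions: dict, interaction: dict) -> bool:
    """True when every condition key has the expected value."""
    return all(interaction.get(key) == value for key, value in conditions.items())


def violations(interaction: dict, policies=POLICIES) -> list:
    """Return the IDs of all policies this interaction would violate."""
    return [
        policy["id"]
        for policy in policies
        if matches(policy["when"], interaction)
        and not matches(policy["require"], interaction)
    ]


interaction = {
    "data_class": "phi",
    "model_hosting": "external",
    "user_region": "eu",
    "processing_region": "eu",
}
print(violations(interaction))
```

Because the rules are data rather than prose, they can be versioned, reviewed, and tested like any other code, and the same rule set can be evaluated on every interaction.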

Trussed AI's control plane takes this a step further: a drop-in proxy integration enforces governance at runtime across AI apps, agents, and developer tools, with zero changes to application code and production workflows live in about four weeks.

Establish Continuous Improvement Loops

CCM requires ongoing iteration. Define KPIs for AI governance health:

- Policy violation rate

- False positive rate

- Mean time to detect

- Audit readiness score

Review them on a defined cadence and use findings to refine policies and control thresholds. That cadence compounds quickly: organizations implementing continuous monitoring achieve MTTD under 24 hours compared to 197 days for point-in-time audits, with 40% cost reductions driven by eliminating intensive manual audit preparation.
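Three of the KPIs listed above can be computed directly from alert data, as in the sketch below. The `Alert` fields and the specific definitions are assumptions for illustration: violation rate is taken as confirmed violations per interaction, the false positive figure as the share of alerts that turned out not to be violations, and mean time to detect as the average gap between occurrence and detection. Audit readiness score is omitted because it has no single standard formula.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Alert:
    occurred_at: datetime
    detected_at: datetime
    true_violation: bool   # set after human review of the alert


def governance_kpis(total_interactions: int, alerts: list) -> dict:
    """Compute simple governance health metrics for one review period."""
    confirmed = [a for a in alerts if a.true_violation]
    false_positives = len(alerts) - len(confirmed)
    if confirmed:
        total_delay = sum((a.detected_at - a.occurred_at for a in confirmed), timedelta())
        mttd = total_delay / len(confirmed)
    else:
        mttd = None
    return {
        "violation_rate": len(confirmed) / total_interactions,
        "false_positive_share": false_positives / len(alerts) if alerts else 0.0,
        "mean_time_to_detect": mttd,
    }


start = datetime(2025, 1, 1, 9, 0)
alerts = [
    Alert(start, start + timedelta(hours=1), True),
    Alert(start, start + timedelta(hours=2), True),
    Alert(start, start + timedelta(hours=3), True),
    Alert(start, start + timedelta(minutes=5), False),
]
kpis = governance_kpis(total_interactions=1000, alerts=alerts)
print(kpis)
```

Tracking these figures per review period makes it possible to see whether a policy or threshold change actually improved detection or merely shifted the false positive load.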

Frequently Asked Questions

What is continuous control monitoring?

Continuous control monitoring is the ongoing, automated process of verifying that an organization's controls are operating effectively in real or near-real time, rather than relying on periodic point-in-time audits that only capture compliance status at specific moments.

What are examples of continuous monitoring types?

Key types include security control monitoring (access, configuration, patch status), compliance monitoring (policy adherence, regulatory requirements), financial control monitoring (transaction anomalies), and AI system monitoring (model outputs, agent behavior, inference cost tracking).

What role does continuous monitoring play in AI security?

Continuous monitoring detects adversarial inputs like prompt injection, flags anomalous model outputs, enforces data handling policies at inference time, and generates the audit evidence regulators increasingly require for AI systems in high-risk contexts. Trussed AI's platform performs each of these functions at the inference layer, operating as a governed control point between applications and the models they call.

What are the control strategies in AI?

Key AI control strategies include input validation and prompt guardrails, output filtering and policy enforcement, access controls on model APIs, behavioral monitoring for drift detection, and human-in-the-loop escalation for high-risk decisions.

How is AI-powered CCM different from traditional continuous control monitoring?

Traditional CCM monitors static, binary controls (patched or not, encrypted or not), while AI-powered CCM must govern dynamic, probabilistic outputs and autonomous agent actions. This requires runtime enforcement rather than just periodic checks. Trussed AI is built specifically for this gap, providing a drop-in control plane that enforces governance at the moment of every AI interaction rather than reviewing logs after the fact.

How do organizations implement continuous control monitoring for AI systems?

Begin with a full inventory of AI systems in use, then define governance policies as enforceable runtime rules. From there, deploy a monitoring layer at the inference level and treat audit trail generation as an ongoing process, not a pre-audit task. Trussed AI supports each stage of this process, from surfacing shadow AI during the inventory phase to enforcing policy-as-code at runtime and generating audit evidence automatically across every governed interaction.