Introduction

When MiFID II arrived, financial institutions faced a compliance earthquake: 30,000 pages containing between 1.5 million and 1.7 million paragraphs of regulatory text that cost the banking industry over €2.5 billion to implement. Globally, financial institutions now bear $206.1 billion annually in financial crime compliance costs alone. These numbers point to a fundamental problem: regulations are growing faster than compliance teams can process them manually.

Organizations are deploying AI to automate regulatory reporting faster than their governance frameworks can keep up, creating new regulatory exposure even as manual inefficiencies disappear. According to a 2024 industry study, 69% of compliance and IT professionals say AI adoption has outpaced their ability to implement adequate controls.

What follows is a practical look at how AI and machine learning are used in regulatory reporting right now: the real risks, the genuine gains, and what responsible deployment actually requires.

TLDR:

- AI automates data aggregation, regulatory interpretation, report generation, and real-time anomaly detection in compliance workflows

- Machine learning delivers up to 85% reduction in processing time and significantly lower false positive rates

- Critical risks include model explainability, algorithmic bias, vendor liability, and cross-border regulatory fragmentation

- Governance must be enforced at runtime across all AI systems, in developer environments as well as production

- Successful implementation requires data readiness, human oversight, and governance infrastructure from day one

What Is AI-Driven Regulatory Reporting?

Regulatory reporting is the ongoing obligation of regulated institutions (banks, insurers, healthcare organizations) to submit accurate, timely data to oversight bodies. Over the past decade, the volume, complexity, and frequency of these obligations have grown sharply. What once required quarterly submissions now demands near-real-time reporting across multiple jurisdictions with conflicting standards.

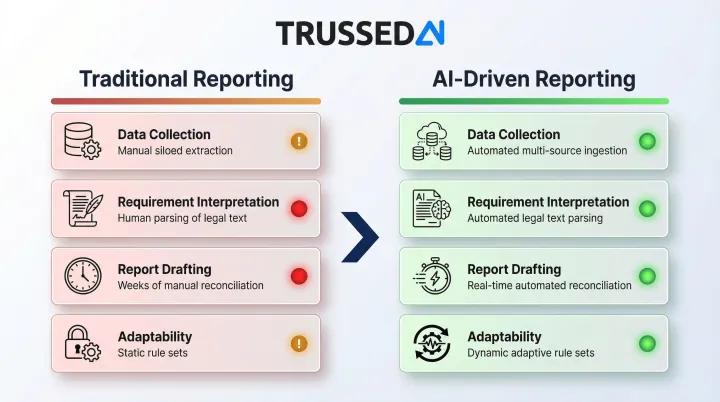

AI-driven regulatory reporting replaces manual, rule-based processes with automated systems that learn and adapt. The two approaches look nothing alike in practice:

| | Traditional | AI-Driven |
|---|---|---|
| Data Collection | Manual extraction from siloed systems | Automated aggregation of structured and unstructured data |
| Requirement Interpretation | Compliance teams parse dense legal texts | NLP reads regulatory documents and extracts actionable obligations |
| Report Drafting | Weeks of manual reconciliation per cycle | Generative AI produces formatted, regulator-ready reports |
| Adaptability | Static rule sets requiring manual updates | Models that learn and adjust to changing requirements |

The result: compliance cycles that once consumed weeks of labor now run in days, or continuously.

Three AI techniques do the heavy lifting:

- NLP: Parses dense regulatory text and converts obligations into machine-executable queries

- Machine learning: Flags anomalies, fraud signals, and threshold breaches in transaction data before they become violations

- Generative AI: Drafts structured compliance narratives and cross-references multiple frameworks simultaneously

Three industries are driving adoption fastest: financial services (AML, KYC, transaction reporting), insurance (Solvency II, IFRS 17), and healthcare (HIPAA, clinical reporting). While regulatory frameworks differ, the pressure is universal: compliance costs are unsustainable without automation.

How AI and ML Are Being Used in Regulatory Reporting

Automated Data Aggregation

Machine learning models pull structured and unstructured data from siloed internal systems, normalize it, and prepare it for reporting. This eliminates the manual reconciliation effort that historically consumed most compliance team time.
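To make the normalization step concrete, here is a minimal sketch of mapping records from two siloed systems onto one reporting schema. The two source systems, their field names (`cust_id`, `amountMinor`, and so on), and the target schema are all hypothetical; a real pipeline would add validation, lineage tracking, and handling for unstructured inputs.

```python
from datetime import date, datetime
from decimal import Decimal


def normalize_core_banking(row: dict) -> dict:
    # Hypothetical core banking export: amounts as decimal strings, dates as DD/MM/YYYY.
    return {
        "customer_id": row["cust_id"].strip().upper(),
        "amount": Decimal(row["amt"]),
        "currency": row["ccy"].upper(),
        "booked_on": datetime.strptime(row["date"], "%d/%m/%Y").date(),
        "source": "core_banking",
    }


def normalize_payments(row: dict) -> dict:
    # Hypothetical payments feed: amounts in minor units (cents), ISO 8601 dates.
    return {
        "customer_id": row["customerId"].strip().upper(),
        "amount": Decimal(row["amountMinor"]) / 100,
        "currency": row["currency"].upper(),
        "booked_on": date.fromisoformat(row["bookedOn"]),
        "source": "payments",
    }


def aggregate(core_rows: list, payment_rows: list) -> list:
    # Merge both feeds into one list with a shared schema, ordered for reporting.
    records = [normalize_core_banking(r) for r in core_rows]
    records += [normalize_payments(r) for r in payment_rows]
    return sorted(records, key=lambda r: (r["customer_id"], r["booked_on"]))
```

Keeping a `source` field on every normalized record is a deliberate choice in this sketch: it preserves enough lineage to trace a reported figure back to the system it came from.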

In one case, PwC deployed intelligent automation for ASC 606 revenue recognition compliance, reducing month-end close time by 85% (from three weeks to three hours) while increasing testing coverage from 25% to nearly 100%.

The efficiency gain is measurable: 80% of customer due diligence (CDD) processes are now automated in leading institutions, freeing compliance teams to focus on exception handling and risk analysis rather than data entry.

Interpreting Regulatory Requirements with NLP

Dense regulatory texts like MiFID II are nearly impossible for humans to process consistently at scale. NLP models ingest these documents and translate reporting requirements into machine-executable queries.

A 2018 proof of concept by the RegTech Council and JWG demonstrated how regulations can be processed using semantic technologies. The project ingested MiFID II's massive document set, using content classification tools to machine-read and semantically enrich paragraphs with regulatory topic tags. This automated the mapping of product governance clauses to functional business activities, proving that natural language regulations can be unpacked into machine-computable formats.
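The idea of enriching paragraphs with topic tags can be illustrated with a deliberately simple sketch. This is not the method used in the proof of concept, which relied on semantic technologies; it swaps in plain phrase matching to show the shape of the output. The topic names and trigger phrases in `TOPIC_KEYWORDS` are invented for illustration and are not drawn from MiFID II or any published ontology.

```python
import re
from dataclasses import dataclass, field
from typing import List

# Hypothetical topic vocabulary: illustrative phrases only.
TOPIC_KEYWORDS = {
    "product_governance": ["target market", "product approval", "distribution strategy"],
    "transaction_reporting": ["transaction report", "competent authority", "trading venue"],
    "record_keeping": ["retain records", "record keeping", "records shall be kept"],
}


@dataclass
class TaggedParagraph:
    paragraph_id: str
    text: str
    topics: List[str] = field(default_factory=list)


def tag_paragraph(paragraph_id: str, text: str) -> TaggedParagraph:
    # Lowercase and collapse whitespace so phrases match across line breaks.
    normalized = re.sub(r"\s+", " ", text.lower())
    topics = sorted(
        topic
        for topic, phrases in TOPIC_KEYWORDS.items()
        if any(phrase in normalized for phrase in phrases)
    )
    return TaggedParagraph(paragraph_id, text, topics)
```

Once each paragraph carries tags like these, mapping clauses to business activities becomes a lookup on the tags rather than a fresh reading of the legal text.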

Critical dependency: Standardized industry definitions are essential. The Financial Industry Business Ontology (FIBO), maintained by the EDM Council, provides a formal, machine-readable vocabulary for financial contracts and concepts. Without these ontologies, ambiguity propagates from interpretation into output, creating compliance risk rather than reducing it.

Automated Report Generation with Generative AI

Generative AI transforms raw compliance data into structured, regulator-ready reports: formatted outputs with narrative summaries that cross-reference multiple regulatory frameworks at once. A single report can satisfy both AML and KYC requirements, cutting the effort required for separate filings.

Financial institutions are increasingly using GenAI to synthesize diverse data sources into financial crime reports, compressing submission timelines from weeks to days.

Real-Time Monitoring and Anomaly Detection

Machine learning models continuously scan transactions and operational data for anomalies or threshold breaches, surfacing potential violations in near-real-time instead of during periodic manual reviews.

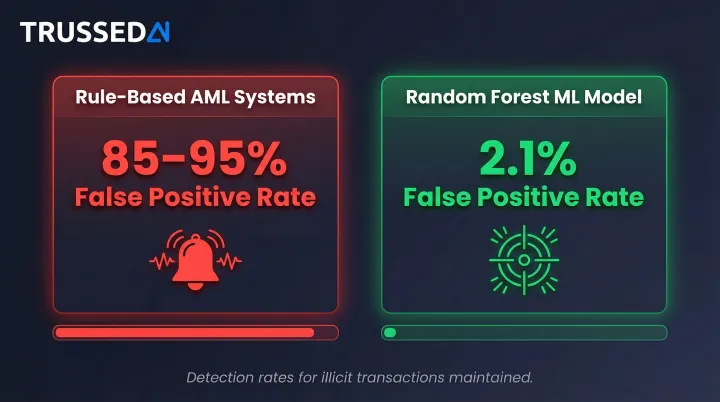

The impact on alert quality is substantial. Traditional rule-based AML systems generate false positive rates between 85% and 95%, overwhelming analysts with noise. By contrast:

- Rule-based systems: 85–95% false positive rate

- Random Forest ML model: 2.1% false positive rate, with maintained detection rates for potentially illicit transactions

That reduction frees analysts to investigate genuine risks rather than triaging irrelevant alerts.
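When comparing figures like these, it helps to pin down which ratio is being measured. The sketch below computes two common definitions from the same confusion counts: the share of alerts that turn out to be false, and the false positive rate over all legitimate transactions. The article's figures do not state which definition each one uses, so the counts here are invented purely to show how far the two can diverge.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    true_positives: int   # alerts on genuinely suspicious activity
    false_positives: int  # alerts on legitimate activity
    true_negatives: int   # legitimate activity correctly left alone
    false_negatives: int  # suspicious activity that was missed


def false_alert_share(c: ConfusionCounts) -> float:
    # Share of raised alerts that were false: FP / (FP + TP).
    alerts = c.false_positives + c.true_positives
    return c.false_positives / alerts if alerts else 0.0


def false_positive_rate(c: ConfusionCounts) -> float:
    # Share of legitimate activity that was flagged: FP / (FP + TN).
    legitimate = c.false_positives + c.true_negatives
    return c.false_positives / legitimate if legitimate else 0.0


def detection_rate(c: ConfusionCounts) -> float:
    # Share of suspicious activity that was caught: TP / (TP + FN).
    suspicious = c.true_positives + c.false_negatives
    return c.true_positives / suspicious if suspicious else 0.0
```

With 50 true positives, 950 false positives, 98,990 true negatives, and 10 false negatives, `false_alert_share` is 0.95 while `false_positive_rate` is under 0.01. The same system can look noisy or precise depending on the denominator, so a benchmark should fix one definition and report the detection rate next to it.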

The human-in-the-loop principle: ML flags issues and surfaces insights, but human compliance officers retain accountability for consequential decisions. This design principle is endorsed by regulators including the SEC and Bank of England: algorithms assist, humans decide.

Key Benefits of AI-Powered Regulatory Reporting

Efficiency and Cost Reduction

Automating data aggregation, interpretation, and report drafting frees compliance teams from repetitive manual tasks. With global financial crime compliance costs totaling $206.1 billion annually, the potential savings are substantial. Customer due diligence alone accounts for 66% of this spend, so automation directly addresses the largest cost center.

Accuracy and Consistency

AI enforces uniform interpretation of reporting requirements across teams and jurisdictions. When different analysts handle the same regulation independently, discrepancies arise, and across multiple regulatory regimes, those discrepancies become costly. Applying one model-driven interpretation removes this analyst-to-analyst variability, which is especially valuable for institutions managing compliance across borders.

Audit-Readiness and Traceability

Well-designed AI systems generate structured evidence of every governed interaction as a byproduct of normal operation. This replaces the retroactive scramble to reconstruct decision trails when regulators come knocking.

Key capabilities this enables:

- Instant retrieval of decision records tied to specific regulatory requirements

- Timestamped evidence trails covering every interpretation and action taken

- Audit responses that take hours rather than weeks to prepare

Critical Challenges: What Can Go Wrong

Model Explainability ("Black Box" Risk)

Many machine learning models, especially deep learning approaches, cannot easily articulate why they reached a particular output. This is a fundamental problem in regulated environments where every compliance action must be evidenced and defensible.

The NIST AI Risk Management Framework emphasizes that explainable AI systems are essential for debugging, monitoring, and governance. Regulators require that models applied to significant decisions be supported by a thorough understanding of how conclusions are reached. Explainable AI (XAI) is the evolving discipline addressing this gap, but firms deploying models today cannot wait for the field to mature. They need documentation and audit trails now, even for systems that lack full interpretability.

Data Bias and Integrity

Biased or incomplete training data propagates through ML models into biased outputs. This includes the risk of demographic bias in customer risk scoring, which can lead to financial exclusion of minority groups.

The European Banking Authority warns that due to the randomness associated with GenAI outputs, banks may face limited capabilities in explaining the logic behind biased decisions. Data review, source verification, and ongoing quality benchmarking are required steps before deploying AI in regulatory contexts, not optional safeguards to revisit later.

Cross-Border Regulatory Fragmentation

There is no universal standard for regulatory reporting across jurisdictions. AI models trained on one regulatory regime may produce inconsistent or non-compliant outputs when applied to another. This requires ongoing model updates and jurisdiction-specific fine-tuning, creating maintenance overhead that organizations often underestimate.

Vendor and Outsourcing Risk

Firms increasingly rely on third-party AI vendors for compliance tooling, but regulatory obligation does not transfer to the vendor. FINRA is explicit: institutions remain fully responsible for the accuracy and compliance of all outputs, including those generated by external AI systems.

In practice, this means firms must:

- Audit vendor tools before deployment and on a recurring basis

- Contractually require notification of any model changes

- Maintain internal records of vendor AI behavior and outputs

The EU's ESMA reinforces this position, mandating that investment firms' decisions remain the responsibility of management bodies, regardless of whether those decisions are executed by people or AI-based tools.

Regulatory Uncertainty Around AI Itself

AI use in regulated industries is itself subject to increasing regulatory scrutiny. Governance bodies like FINRA, the SEC, and EU regulators have published guidance requiring firms to supervise AI models under existing compliance frameworks. This means firms must govern their AI tools as rigorously as any other compliance system, adding another layer of complexity.

Governing the AI You Use for Regulatory Reporting

AI governance is not optional when AI generates compliance outputs. As organizations deploy AI agents and workflows to produce regulatory reports, those systems must operate within enforced policy boundaries. Static policies written in documents are insufficient: governance must be applied at runtime, enforcing rules as each AI interaction occurs.

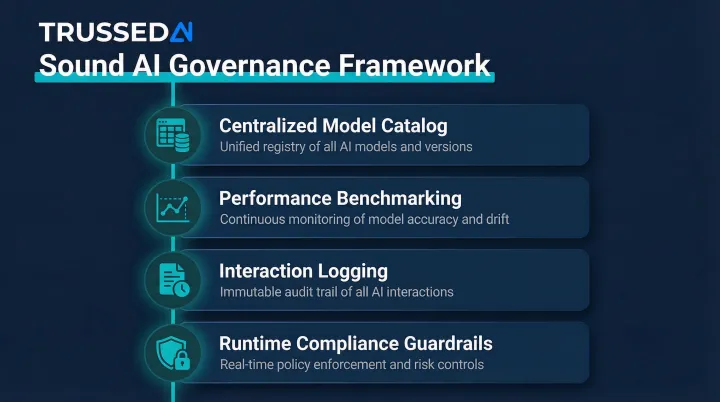

Components of a sound AI governance framework:

- Maintain a centralized catalog of all AI models, documenting their purpose, inputs, outputs, and validation status

- Track model outputs against performance benchmarks to detect drift, bias, and accuracy degradation

- Log every AI interaction with policy evaluation results, model version, timestamp, and data lineage

- Apply compliance guardrails at the point of execution across all AI apps and agents, including developer environments where models are first tested, not just production systems
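The logging and runtime-enforcement components above can be sketched as a thin wrapper around a model call. This is a generic illustration under stated assumptions, not any vendor's API: `governed_call`, `evaluate_policy`, the `no-raw-identifiers-v1` policy ID, and the blocked-term list are all hypothetical names chosen for the example.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable, List, Optional, Tuple

# Hypothetical policy: block prompts that mention raw personal identifiers.
BLOCKED_TERMS = ("ssn", "passport number")


@dataclass(frozen=True)
class AuditRecord:
    timestamp: str
    model_version: str
    policy_id: str
    policy_passed: bool
    input_sources: Tuple[str, ...]  # data lineage: where the inputs came from
    prompt_sha256: str              # hash instead of raw text to limit data exposure


def evaluate_policy(prompt: str) -> bool:
    lowered = prompt.lower()
    return not any(term in lowered for term in BLOCKED_TERMS)


def governed_call(
    prompt: str,
    model_version: str,
    input_sources: List[str],
    model_fn: Callable[[str], str],
    audit_log: List[AuditRecord],
) -> Optional[str]:
    passed = evaluate_policy(prompt)
    # Record the interaction whether or not it is allowed to proceed.
    audit_log.append(
        AuditRecord(
            timestamp=datetime.now(timezone.utc).isoformat(),
            model_version=model_version,
            policy_id="no-raw-identifiers-v1",
            policy_passed=passed,
            input_sources=tuple(input_sources),
            prompt_sha256=hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        )
    )
    if not passed:
        return None  # blocked before the model is ever invoked
    return model_fn(prompt)
```

Writing the audit record before the policy decision takes effect means blocked requests leave evidence too, which is usually what an auditor wants to see.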

Meeting these requirements in practice means enforcing governance at the point of execution, not as a post-hoc review. Trussed AI's control plane does this through a drop-in proxy architecture: organizations apply compliance guardrails across AI apps, agents, and workflows without rewriting application code. Every governed interaction automatically generates audit-ready evidence, with a reported compliance violation rate below 1% across production deployments.

One often-overlooked consideration: the governance platform itself must meet the same compliance bar it enforces. Trussed AI is built to be auditable directly and does not introduce new compliance exposure into the stack.

Implementation Considerations: Where to Start

Begin with a Data Readiness Assessment

Before deploying AI for regulatory reporting, audit the quality, completeness, and consistency of underlying data. The OCC Comptroller's Handbook notes that model output quality depends entirely on input data quality: errors in inputs lead to inaccurate outputs.

Key steps:

- Identify data silos and integration gaps

- Check for bias in historical datasets

- Establish whether standardized definitions exist for key reporting terms

- Map internal data to industry ontologies like FIBO

Prioritize Explainability and Human Oversight in Model Selection

Evaluate AI tools not just on accuracy benchmarks but on their ability to surface the reasoning behind outputs. Beyond accuracy, build in:

- Fallback processes for when models fail or produce unexpected results

- Clear thresholds defining when human review is mandatory before submission

- Documentation of model limitations accessible to compliance reviewers
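One way to make the fallback and review-threshold requirements above explicit is to encode them as a routing rule that runs before anything is submitted. The sketch below is an assumption-laden example: the threshold values, the `ModelOutput` fields, and the three routes are hypothetical and would need to be set by each firm's own risk appetite and regulatory obligations.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Route(Enum):
    PROCEED = "proceed_to_standard_signoff"
    HUMAN_REVIEW = "mandatory_human_review"
    FALLBACK = "fallback_manual_process"


@dataclass(frozen=True)
class ModelOutput:
    confidence: Optional[float]  # None means the model failed to produce a result
    amount_eur: float            # materiality of the reported item
    has_explanation: bool        # whether reasoning was surfaced for reviewers


# Hypothetical thresholds, for illustration only.
MIN_CONFIDENCE = 0.90
MAX_UNREVIEWED_AMOUNT_EUR = 10_000.0


def route(output: ModelOutput) -> Route:
    if output.confidence is None:
        return Route.FALLBACK
    needs_review = (
        output.confidence < MIN_CONFIDENCE
        or output.amount_eur > MAX_UNREVIEWED_AMOUNT_EUR
        or not output.has_explanation
    )
    return Route.HUMAN_REVIEW if needs_review else Route.PROCEED
```

Keeping the thresholds as named constants rather than burying them in model code makes them easy to document, review, and change under normal change control.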

FINRA expects firms to maintain ongoing monitoring of AI prompts and outputs, including human-in-the-loop review to check for errors or bias. That expectation applies regardless of how mature or accurate the underlying model is.

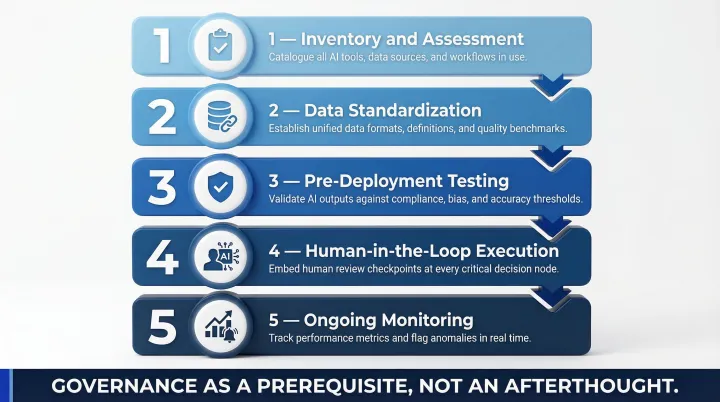

Plan for Governance Before Scaling

Deploy AI for regulatory reporting without parallel governance infrastructure and you accumulate compliance debt faster than you can manage it. Set up monitoring, audit trails, and policy enforcement frameworks before scaling: governance is a prerequisite, not a phase-two initiative.

A structured implementation roadmap ensures AI adoption scales safely:

- Inventory and assessment: Catalog all existing and planned AI models

- Data standardization: Map internal data to industry ontologies

- Pre-deployment testing: Conduct adversarial testing and evaluate explainability

- Human-in-the-loop execution: Deploy models alongside human reviewers for critical decisions

- Ongoing monitoring: Track model drift, false positive rates, and algorithmic bias
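For the monitoring step, one widely used drift measure is the Population Stability Index (PSI), which compares how a score or feature is distributed across bins at validation time versus in production. The sketch below is a minimal standard-library version; the bin counts are supplied by the caller, and the `eps` floor for empty bins is an assumption of this example rather than part of the definition.

```python
import math
from typing import Sequence


def population_stability_index(
    expected_counts: Sequence[int],
    actual_counts: Sequence[int],
    eps: float = 1e-6,
) -> float:
    """PSI = sum over bins of (actual - expected) * ln(actual / expected), on bin shares."""
    if len(expected_counts) != len(actual_counts):
        raise ValueError("Both distributions must use the same bins")
    expected_total = sum(expected_counts)
    actual_total = sum(actual_counts)
    if expected_total == 0 or actual_total == 0:
        raise ValueError("Each distribution needs at least one observation")

    psi = 0.0
    for expected, actual in zip(expected_counts, actual_counts):
        # Floor each share at eps so empty bins do not produce log(0) or division by zero.
        expected_share = max(expected / expected_total, eps)
        actual_share = max(actual / actual_total, eps)
        psi += (actual_share - expected_share) * math.log(actual_share / expected_share)
    return psi
```

Identical distributions give a PSI of zero, and the value grows as production data moves away from the baseline. A commonly cited rule of thumb treats values above roughly 0.25 as a significant shift, but that is an industry convention rather than a regulatory threshold, so the alerting level should be set and documented per model.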

Getting the sequence right matters. Teams that treat governance as a parallel track, not an afterthought, typically see compliance violations stay below 1% and reach operational deployment in weeks rather than quarters.

Frequently Asked Questions

How can AI be used in regulatory reporting?

AI handles several core functions in regulatory reporting:

- Aggregates data automatically from multiple source systems

- Uses NLP to interpret and map regulatory requirements

- Generates structured reports via generative AI

- Monitors transactions in real time for anomalies and threshold breaches

Is AI being regulated in the US?

While there is no single federal AI law, regulators like the SEC, FINRA, and federal banking agencies have issued guidance requiring firms to supervise AI tools under existing compliance and supervisory frameworks. The EU AI Act has established formal risk-based requirements that affect US firms operating in European markets.

What are the biggest challenges of implementing AI in regulatory reporting?

The top three challenges are model explainability requirements in regulated environments, data bias and quality issues in training datasets, and the ongoing need to update models as regulations change across jurisdictions.

What is explainable AI (XAI) and why does it matter for regulatory reporting?

XAI refers to techniques that make machine learning model decisions interpretable and auditable. In regulatory contexts, this is essential because compliance actions must be documented and defensible to regulators. Without it, organizations cannot justify why their AI systems made specific decisions.

How does machine learning reduce false positives in compliance monitoring?

ML models learn from historical case data to better distinguish genuine risk signals from routine activity, continuously refining detection thresholds. Rule-based systems apply static criteria that generate high false positive rates because they cannot adapt to new patterns. In the comparison cited above, ML reduced false positives from 85–95% to as low as 2.1% while maintaining detection rates.

What governance framework is needed for AI-driven regulatory reporting?

Essential components include a model inventory with risk ratings, real-time policy enforcement across AI systems, automated audit trail generation, human-in-the-loop oversight for consequential decisions, and ongoing performance monitoring against compliance benchmarks. Governance must be enforced at runtime, not just documented in policies.