Introduction

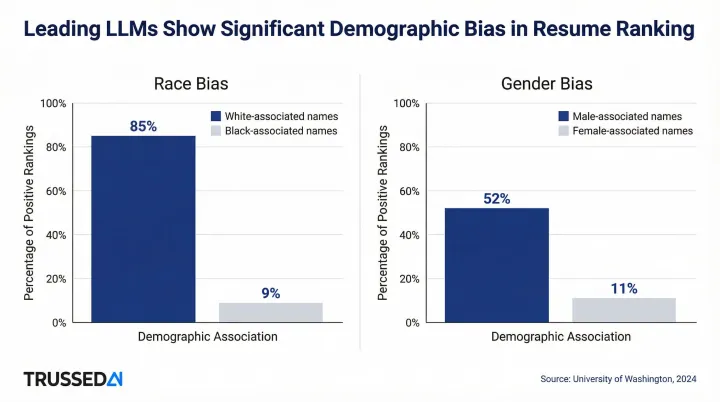

AI has spread across HR functions, including recruiting, performance management, and workforce analytics, faster than governance frameworks have kept pace. According to SHRM's 2025 Talent Trends report, 51% of organizations now use AI to support recruiting, up from 26% a year earlier. That rapid adoption creates measurable compliance and bias risks: a 2024 University of Washington study found that AI models used to rank resumes favored white-associated names 85% of the time and Black-associated names just 9% of the time.

This guide is for HR leaders, CHROs, and enterprise technology decision-makers in regulated industries. It covers not just what AI governance in HR is, but how it works operationally, which is where most published guidance falls short.

The stakes are concrete: AI in HR directly affects hiring, compensation, promotions, and terminations. Unchecked automation in these decisions creates legal liability under frameworks including the EU AI Act, GDPR Article 22, and U.S. state and local employment laws in California, Colorado, Illinois, and New York City.

TLDR

- AI governance in HR covers the policies, controls, and enforcement mechanisms that keep hiring and employment AI systems fair, transparent, and legally compliant

- Without it, AI in high-stakes employment decisions generates legal liability and discriminatory outcomes

- Runtime enforcement matters. Policy documents alone don't stop a model from making a biased call at 2 a.m.

- Top failure modes: shadow AI deployments, governance gaps in third-party vendor tools, and missing audit trails when decisions are disputed

What Is AI Governance in HR?

AI governance in HR is the operational framework that regulates how AI systems are developed, deployed, and monitored within human resources functions. It encompasses policies, technical controls, human oversight checkpoints, and audit mechanisms.

The intended outcome: ensuring AI-driven HR decisions are explainable, consistent, free from unlawful bias, and compliant with applicable employment and data protection regulations, while still delivering the efficiency gains AI promises.

Conflating it with adjacent concepts is where many organizations go wrong. Three terms frequently get used interchangeably, but they describe different layers of control:

- AI ethics in HR: Principle-level guidance on fairness, transparency, and accountability

- HR technology management: System administration, vendor contracts, and tool configuration

- AI governance in HR: The operational oversight layer between an AI system's outputs and the employment decisions those outputs influence

Without that third layer, organizations are left with principles that don't control system behavior, creating audit gaps and regulatory exposure when AI systems adapt or vendor tools contain undisclosed model logic.

Why Enterprises Need AI Governance in HR

Regulatory Pressure Driving Adoption

The EU AI Act classifies certain HR AI systems as high-risk, subjecting them to mandatory human oversight and transparency obligations. Systems used for recruitment and selection, promotion and termination decisions, task allocation, and performance monitoring fall into this category, with most high-risk obligations applying from August 2, 2026. GDPR Article 22 gives data subjects the right not to be subject to decisions based solely on automated processing that produce legal or similarly significant effects on them; Recital 71 cites e-recruiting practices without human intervention as an example.

In the U.S., state-level frameworks create overlapping requirements:

- California: CCPA/CPRA regulations for Automated Decisionmaking Technology require detailed risk assessments, pre-use notice, and opt-out rights (effective April 2026)

- Colorado: SB24-205 requires developers and deployers of high-risk AI systems to use reasonable care to avoid algorithmic discrimination, with mandatory impact assessments (originally effective February 2026, since delayed to June 30, 2026)

- Illinois: HB 3773 prohibits employers from using AI that subjects employees to discrimination based on protected classes and requires notice (effective January 2026)

- New York City: Local Law 144 prohibits employers from using automated employment decision tools unless the tool has had an independent bias audit within the past year, and requires notifying candidates at least 10 business days before use

Organizations without proactive governance face growing litigation exposure. In Mobley v. Workday, a lawsuit alleging that Workday's AI screening tools caused disparate impact based on race, age, and disability, a federal court granted conditional certification of a collective action on the age discrimination (ADEA) claims. The EEOC stated in May 2023 guidance that employers can be held responsible under Title VII if an algorithmic tool causes disparate impact, even when a third-party vendor designed it.

What Breaks Down Without Governance

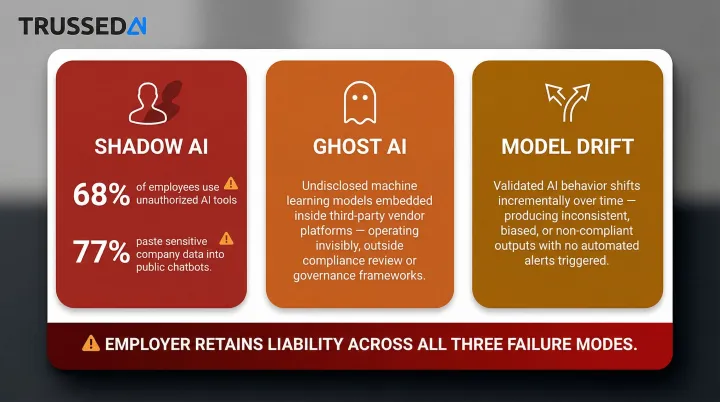

That legal exposure doesn't only come from tools you chose deliberately. Three operational failure modes routinely put employers at risk regardless of vendor contracts:

- Shadow AI: 68% of employees use unauthorized AI at work, with 77% pasting company data into chatbots, exposing sensitive HR records and PII without any oversight

- Ghost AI: Machine-learning models embedded within vendor sourcing platforms operate without employer awareness, making decisions no one internally can explain or audit

- Model drift: Deployed systems shift away from their original validated behavior through continued use and retraining, often without triggering any alert

In all three cases, the employer retains legal accountability even when a third-party vendor supplied the AI. The EEOC's guidance explicitly states that if a vendor is incorrect in its assessment that a tool does not result in adverse impact, the employer may still be liable. Governance isn't just about the tools you know you're running. It's about maintaining control over everything touching your HR decisions.

How Enterprise AI Governance in HR Works

Enterprise AI governance in HR is a continuous control loop, moving from policy definition through runtime enforcement to ongoing audit. Organizations that treat it as a one-time procurement checklist consistently find gaps when regulators come knocking.

Running that loop requires five inputs:

- HR use case inventory (which AI tools touch which employment decisions)

- Risk classification of each tool

- Applicable regulatory requirements by jurisdiction

- Vendor disclosure documentation

- Internal data governance standards

Step 1: Risk Classification and Policy Definition

Governance begins by mapping every AI system touching HR functions, both internal and vendor-supplied, against a risk tier framework:

- High-risk systems (influencing hiring, promotion, or termination) require the most rigorous oversight, including mandatory human decision checkpoints and documented rationale

- Lower-risk systems (administrative automation, scheduling) require lighter controls

Most enterprises undercount high-risk systems because vendors describe ML-driven tools as mere "automation." Organizations should inventory all AI-enabled HR tools and classify them based on their impact on employment decisions, not vendor marketing language.
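The rule above (classify by decision impact, not vendor wording) can be sketched as a function that never reads the marketing label. The tool names, the `HIGH_RISK_DECISIONS` set, and the lower-tier control list are illustrative assumptions.

```python
from dataclasses import dataclass
from enum import Enum

# Decisions that make a tool high-risk, per the tiering rule in Step 1.
HIGH_RISK_DECISIONS = frozenset({"hiring", "promotion", "termination"})


class RiskTier(Enum):
    HIGH = "high"
    LOWER = "lower"


# Controls per tier; the lower-tier list is an illustrative assumption.
REQUIRED_CONTROLS = {
    RiskTier.HIGH: ("human decision checkpoint", "documented rationale"),
    RiskTier.LOWER: ("access controls", "interaction logging"),
}


@dataclass(frozen=True)
class ToolProfile:
    name: str
    marketed_as: str             # vendor wording, e.g. "workflow automation"
    influences: frozenset[str]   # employment decisions the output feeds into


def classify(tool: ToolProfile) -> RiskTier:
    """Tier a tool by the decisions it influences; marketed_as is never read."""
    if tool.influences & HIGH_RISK_DECISIONS:
        return RiskTier.HIGH
    return RiskTier.LOWER
```

A shortlisting tool marketed as "workflow automation" still lands in the high tier, because only `influences` drives the result.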

Step 2: Runtime Policy Enforcement

Policies only govern outcomes if they are enforced at the moment of system execution, not reviewed after the fact. This means implementing technical controls that:

- Intercept AI outputs before they reach decision-makers

- Apply guardrails (e.g., filtering outputs that reference protected characteristics)

- Enforce access and usage rules

- Log every interaction for auditability

Platforms purpose-built as AI control planes, such as Trussed AI, operate as drop-in proxy layers that enforce governance at runtime across models, agents, and workflows without requiring changes to application code. The proxy sits in the flow of every AI interaction, evaluating policies before execution and maintaining oversight as systems scale.

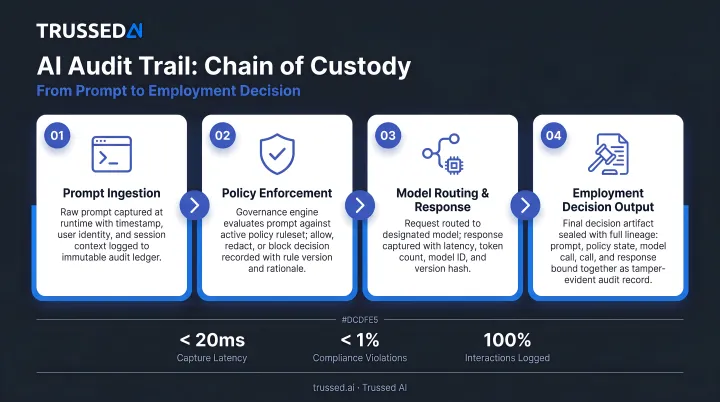

Step 3: Continuous Monitoring and Audit Trail Generation

Runtime enforcement handles the moment of execution. Continuous monitoring handles everything that changes afterward, including model drift, shifting regulations, and expanding vendor tool capabilities. In practice, this means:

- Regularly evaluating model outputs for bias patterns

- Tracking whether human oversight checkpoints are being used as intended

- Generating audit-ready evidence automatically

When regulators or employees contest a decision, documentation exists as a byproduct of governance operations rather than being rebuilt after the fact. Effective platforms log every AI interaction with four elements: policy evaluation results, model version, timestamp, and data lineage. Together, these create a complete chain of custody from prompt to output to action.
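One way to make that chain of custody tamper-evident is a hash chain, where each record stores the hash of the one before it. The sketch below records the four elements named above plus prompt, output, and action; the function names and field layout are assumptions, not a specific platform's log format.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def append_audit_record(log: list[dict], *, policy_results: dict, model_version: str,
                        data_lineage: list[str], prompt: str, output: str,
                        action: str) -> dict:
    """Append a record linking prompt -> output -> action to the previous record."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "policy_results": policy_results,
        "data_lineage": data_lineage,
        "prompt": prompt,
        "output": output,
        "action": action,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    payload = json.dumps(record, sort_keys=True).encode()
    record["hash"] = hashlib.sha256(payload).hexdigest()
    log.append(record)
    return record


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash; editing any earlier record breaks the chain."""
    prev_hash = GENESIS
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if body["prev_hash"] != prev_hash:
            return False
        payload = json.dumps(body, sort_keys=True).encode()
        if hashlib.sha256(payload).hexdigest() != record["hash"]:
            return False
        prev_hash = record["hash"]
    return True
```

Because each hash covers the previous one, quietly changing an old decision (for example, rewriting "advanced" to "rejected") invalidates every later record.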

Where AI Governance Applies Across the HR Lifecycle

Talent Acquisition

Resume screening tools, automated candidate ranking, and AI-generated job descriptions require specific governance controls:

- Bias audits evaluating whether systems produce disparate impact across demographic groups

- Candidate transparency disclosures explaining how AI influences decisions

- Documented human review before offers

AI adoption in recruiting is the most prevalent HR use case, with 51% of organizations using AI for recruiting according to SHRM's 2025 Talent Trends report.
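Bias audits of screening tools commonly start from impact ratios: each group's selection rate divided by the rate of the most-selected group. NYC Local Law 144 audits report impact ratios computed this way, and the EEOC's four-fifths rule of thumb treats a ratio below 0.8 as a signal of possible adverse impact (a screening heuristic, not a legal safe harbor). The group labels and counts below are made up for illustration.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each group to selected / applicants; values are (selected, applicants)."""
    return {group: sel / total for group, (sel, total) in outcomes.items() if total > 0}


def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Each group's selection rate divided by the most-selected group's rate."""
    rates = selection_rates(outcomes)
    top = max(rates.values())
    if top == 0:
        raise ValueError("no one was selected; impact ratios are undefined")
    return {group: rate / top for group, rate in rates.items()}


def flag_adverse_impact(outcomes: dict[str, tuple[int, int]],
                        threshold: float = 0.8) -> list[str]:
    """Groups whose impact ratio falls below the four-fifths threshold."""
    return sorted(g for g, r in impact_ratios(outcomes).items() if r < threshold)


if __name__ == "__main__":
    audit = {"group_a": (48, 100), "group_b": (30, 100), "group_c": (45, 100)}
    print(flag_adverse_impact(audit))  # ['group_b'] (ratio about 0.625)
```

A flagged group is a prompt for investigation and documented remediation, not proof of discrimination on its own.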

Performance Management and Workforce Analytics

AI used for performance scoring, flight-risk prediction, and succession modeling draws on longitudinal employee data that may encode historical inequities. Governance here requires:

- Defined data retention and access policies

- Explainability requirements for manager-facing recommendations

- Regular audits comparing outcomes across demographic groups

The EU AI Act (Article 86) gives affected persons the right to obtain clear and meaningful explanations of the AI system's role in decisions made using high-risk systems, a category that includes performance evaluation tools.
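For a linear scoring model, each weight times its feature value is an exact additive share of the score, which makes manager-facing reason codes straightforward. The sketch below assumes such a model; the feature names and weights are hypothetical, and non-linear models would need a post-hoc attribution method (such as SHAP) instead.

```python
def explain_linear_score(weights: dict[str, float], features: dict[str, float],
                         intercept: float = 0.0, top_k: int = 3
                         ) -> tuple[float, list[tuple[str, float]]]:
    """Return (score, reasons) for score = intercept + sum(w_i * x_i).

    Each w_i * x_i is that feature's exact contribution to the score, so the
    largest terms by magnitude can be shown to a manager as reason codes.
    Missing features are treated as 0.
    """
    contributions = {name: w * features.get(name, 0.0) for name, w in weights.items()}
    score = intercept + sum(contributions.values())
    reasons = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return score, reasons[:top_k]


if __name__ == "__main__":
    # Hypothetical performance-scoring weights and one employee's features.
    weights = {"goals_met_pct": 2.0, "peer_review_avg": 1.5, "projects_delivered": 0.1}
    employee = {"goals_met_pct": 0.9, "peer_review_avg": 4.0, "projects_delivered": 6}
    score, reasons = explain_linear_score(weights, employee, top_k=2)
    print(round(score, 2), reasons)
```

Here the recommendation would ship with `peer_review_avg` and `goals_met_pct` as its two leading reasons, which a manager can check against the underlying records.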

Vendor-Supplied AI Tools

Most enterprises outsource recruiting, screening, and workforce analytics to third-party vendors, but legal liability for AI-driven employment decisions typically remains with the employer. Governance must include:

- Contractual vendor disclosure requirements (what models are used, how outputs are generated, what data is retained)

- Pre-deployment audits

- Ongoing vendor performance reviews

Without these controls, employers carry liability for outcomes they never reviewed. The IAPP notes that employer liability for vendor AI is a critical blind spot in most governance frameworks.

Agentic and Generative AI in HR Workflows

Autonomous agents, including those that conduct initial candidate outreach, draft performance review summaries, or handle benefits queries, behave differently from deterministic AI tools. Their outputs vary across interactions, and that unpredictability is what makes them harder to govern.

Pre-deployment reviews alone aren't enough here. Governance frameworks must extend to real-time behavioral guardrails that activate at every step:

- Policy evaluated before each tool call and data access request

- Workflow triggers reviewed against authorization rules in real time

- Agent actions logged continuously, not just audited after the fact

This ensures agents cannot perform unauthorized actions regardless of what the underlying model produces.
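The pattern described above, evaluating policy before each tool call rather than trusting the model, can be sketched as an allowlist with argument-level checks that sits between the agent and its tools. The agent names, tool names, and rules are hypothetical.

```python
from typing import Any, Callable

# Hypothetical per-agent policy: the tools each agent may call, each paired
# with a predicate over the call's arguments.
POLICY: dict[str, dict[str, Callable[[dict[str, Any]], bool]]] = {
    "benefits_agent": {
        "lookup_plan_summary": lambda args: True,
        "read_employee_record": lambda args: set(args.get("fields", [])) <= {"name", "plan_id"},
    },
    "outreach_agent": {
        "send_candidate_email": lambda args: args.get("template") in {"intro_v2", "followup_v1"},
    },
}

ACTION_LOG: list[tuple[str, str, bool]] = []


def authorize(agent: str, tool: str, args: dict[str, Any]) -> bool:
    """Evaluate policy before a tool runs and log the attempt either way."""
    rule = POLICY.get(agent, {}).get(tool)
    allowed = rule is not None and bool(rule(args))
    ACTION_LOG.append((agent, tool, allowed))
    return allowed


def execute(agent: str, tool: str, args: dict[str, Any],
            tools: dict[str, Callable[..., Any]]) -> Any:
    """Run a model-proposed tool call only if policy allows it."""
    if not authorize(agent, tool, args):
        raise PermissionError(f"{agent} is not authorized to call {tool} with {args}")
    return tools[tool](**args)
```

Whatever call the model proposes, an unlisted tool or a disallowed argument (such as requesting a salary field) is refused before execution and still appears in the log.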

Key Factors That Affect AI Governance Effectiveness in Enterprises

Data Quality and Bias Propagation

The primary input degrading governance outcomes is training data that reflects historical hiring or performance patterns shaped by discrimination. A 2024 University of Washington study of three leading language models used to rank resumes found that they favored white-associated names 85% of the time and Black-associated names 9% of the time, and male-associated names 52% of the time versus female-associated names 11% of the time.

Effective governance frameworks address this at both ends of the pipeline:

- Audit training datasets for protected-class correlations before deployment

- Monitor model outputs continuously for patterns that signal discriminatory behavior

- Document remediation steps taken when bias is detected

Cross-Functional Ownership Gaps

Governance effectiveness breaks down when accountability is fragmented. HR owns the use case, IT owns the system, Legal reviews contracts, and Compliance handles regulations, but no single function owns the continuous governance loop.

High-performing organizations establish a cross-functional AI governance committee with defined ownership for:

- Risk classification

- Vendor oversight

- Incident response

- Policy updates

Regulatory Jurisdiction Complexity

Multinational enterprises face governance requirements that vary by country and U.S. state, with different rules on automated decision-making, employee data rights, and required disclosures. The practical solution is a single framework calibrated to the most stringent applicable rules, whether that's the EU AI Act's transparency mandates, New York City's Local Law 144 bias audit requirements, or state-level privacy statutes. Managing separate compliance tracks per jurisdiction creates gaps. Defaulting to the highest standard eliminates them.

Common Pitfalls and Misconceptions About AI Governance in HR

Treating Governance as a Policy Document Exercise

The most common failure is equating governance with having an AI use policy or ethics statement. Both set principles but do not control system behavior. Real governance requires technical enforcement mechanisms that operate at the system level, not just at the awareness level.

When policy is not operationalized, adoption of unapproved tools and data exposure follows. Organizations need runtime enforcement that intercepts AI outputs and applies guardrails automatically, not static documents that employees may or may not follow.

Assuming Vendor Responsibility Covers Enterprise Liability

Enterprises routinely mistake vendor AI governance claims, such as certifications or "responsible AI" branding, for a transfer of compliance responsibility. Legal frameworks in most jurisdictions hold the employing organization accountable for employment decisions regardless of whether a third-party AI system generated the recommendation.

Littler Mendelson and IAPP analyses confirm that employers retain responsibility for decisions executed by third-party HR AI vendors. If a vendor's tool results in adverse impact, the employer may still be liable.

Conflating Governance with One-Time Audits or Pre-Deployment Reviews

AI systems evolve through continued use, model retraining, and vendor updates. A governance posture that passes a tool at deployment but never re-evaluates it will fail when the model drifts or the regulatory environment changes.

Effective governance is continuous, not episodic. Ongoing monitoring must track:

- Model performance shifts that emerge after deployment or retraining

- Bias patterns that develop as the tool encounters new data distributions

- Policy violation rates against current regulatory requirements

- Vendor update logs that may alter model behavior without notice
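For the first two bullets, a common drift signal is the Population Stability Index (PSI), which compares the distribution of model scores in a baseline period with a recent one: PSI = Σ (qᵢ − pᵢ) ln(qᵢ / pᵢ) over score bins, where pᵢ and qᵢ are the baseline and current shares in bin i. The bin edges and sample scores below are made up, and the often-quoted thresholds (below 0.1 stable, above 0.25 significant shift) are industry rules of thumb rather than regulatory limits.

```python
import math
from bisect import bisect_right


def psi(baseline: list[float], current: list[float], edges: list[float],
        eps: float = 1e-6) -> float:
    """Population Stability Index of `current` scores against `baseline`.

    Scores are bucketed by `edges` (len(edges) - 1 bins); values outside the
    range are clamped into the first or last bin. eps avoids log(0).
    """
    n_bins = len(edges) - 1

    def proportions(sample: list[float]) -> list[float]:
        counts = [0] * n_bins
        for x in sample:
            idx = min(max(bisect_right(edges, x) - 1, 0), n_bins - 1)
            counts[idx] += 1
        return [max(c / len(sample), eps) for c in counts]

    p, q = proportions(baseline), proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))
```

Shifting a baseline spread evenly across four bins to a 10/20/30/40 split gives a PSI of about 0.23, in the "moderate shift" band under that convention; tracking the vendor's model version alongside PSI helps tie a jump to an unannounced update.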

Conclusion

AI governance in HR is not an optional risk management exercise. It is the operational layer that determines whether AI adoption in HR creates value or liability. Enterprises that treat it as a living control system rather than a documentation checklist achieve faster, safer deployment and are better positioned for regulatory scrutiny.

HR leaders in regulated industries should prioritize a governance architecture built around three capabilities:

- Runtime policy enforcement across models, workflows, and vendor-supplied tools

- Automated audit trail generation that produces compliance evidence as a byproduct of normal operations

- Extended oversight to third-party AI vendors, not just internally built systems

The cost of ungoverned AI in HR shows up in litigation, eroded employee trust, and regulatory penalties. With the EU AI Act's high-risk obligations applying from August 2026 and U.S. state and local laws already in force or taking effect, the window for reactive governance is closing.

Platforms like Trussed AI are built specifically for this challenge, enforcing governance at runtime across the full AI stack, so enterprises can deploy HR AI systems confidently without sacrificing speed or compliance posture.

Frequently Asked Questions

What is the difference between AI governance and AI compliance in HR?

Compliance is meeting specific regulatory requirements (e.g., EU AI Act, GDPR), while governance is the broader operational framework, including controls, oversight structures, and enforcement mechanisms, that makes compliance achievable and sustainable. In practice, governance is what you build; compliance is what you demonstrate.

Who is responsible for AI governance in HR: HR, IT, or Legal?

Effective AI governance in HR requires shared ownership across HR, IT, Legal, and Compliance. Accountability gaps between these functions are among the most common reasons governance fails, which is why high-performing organizations establish cross-functional governance committees with clearly assigned roles.

How do enterprises govern AI embedded in third-party HR vendor tools?

Governance requires contractual disclosure requirements, pre-deployment audits of vendor AI logic, and ongoing vendor performance reviews. Employer liability for AI-influenced employment decisions stays with the hiring organization regardless of vendor involvement. Governance responsibility cannot be delegated.

What are the biggest regulatory risks of ungoverned AI in HR?

The primary risks include discriminatory outcomes in hiring or promotion attributable to biased models, violations of automated decision-making rights under GDPR or U.S. state AI laws, and increasing litigation exposure from candidates challenging AI-screened applications. The EU AI Act sets fines of up to €35 million or 7% of global annual turnover for prohibited AI practices, and up to €15 million or 3% for violations of most other obligations, including those for high-risk systems.

How can enterprises detect shadow AI or ghost AI operating in HR systems?

Detection requires a formal AI inventory process covering vendor tool disclosure audits and procurement governance that mandates AI disclosure at evaluation. HR teams should also monitor hiring outcome data for unexplained pattern shifts, a common signal of undisclosed model activity. Track all AI-enabled tools, not just those explicitly marketed as "AI."

What does an audit-ready AI governance trail look like in HR?

An audit-ready trail is a time-stamped, automatically generated log of AI interactions, recording the policies applied, human review actions taken, model version, and data lineage at each decision point. It should be produced by the governance control layer itself, not assembled manually after the fact.