Introduction

According to an August 2025 HFMA report, 88% of health systems are using AI in some form, yet only 18% have mature governance structures in place, a 70-point gap. Financial services aren't far behind: 59% of finance leaders report active AI use while still struggling with model risk management and explainability.

The consequences are concrete. In healthcare, ungoverned AI threatens patient safety and regulatory compliance. In financial services, it introduces lending bias, creates fraud vulnerabilities, and invites regulatory action.

Both sectors are now under active enforcement pressure: the CFPB has explicitly rejected the "black box" defense for credit denials, and the ONC's HTI-1 rule mandates algorithmic transparency across health IT systems.

This guide breaks down the governance frameworks, regulatory requirements, and practical controls that healthcare and financial services organizations need to deploy AI responsibly at scale.

TLDR

- AI governance is the combination of frameworks, policies, and runtime controls that keep AI systems compliant, auditable, and operationally accountable

- Healthcare compliance centers on HIPAA, FDA device oversight, and ONC transparency mandates; financial services compliance centers on SR 11-7, FINRA oversight, and DORA resilience requirements

- The most common failure: treating governance as documentation rather than operational control enforced at runtime

- Best practices share five pillars: risk classification, data governance, transparency, continuous monitoring, and human oversight

- Organizations that build governance infrastructure before scaling AI generate audit evidence automatically and cut compliance violations significantly

Why AI Governance in Regulated Industries Is Non-Negotiable

AI governance is the set of frameworks, policies, and operational controls that guide how AI systems are designed, deployed, monitored, and decommissioned. In regulated industries, the absence of governance isn't just a compliance gap; it's an operational liability.

In healthcare, AI errors affect clinical decisions and patient safety directly. A 2025 study of 691 FDA-cleared AI/ML devices found that 5.8% were recalled, for a total of 113 recalls, primarily for software issues. The same study reported 489 adverse events, including one death.

Financial services carry equally serious exposure. Algorithmic failures introduce bias in lending, enable fraud, and trigger market instability. The DOJ's Combating Redlining Initiative has secured over $137 million in settlements against discriminatory lending practices.

Both sectors face aggressive regulatory enforcement. The CFPB's Circular 2023-03 explicitly states that creditors cannot use complex or opaque technology as a defense for violating equal credit opportunity requirements. Meanwhile, the ONC's HTI-1 rule requires 31 source attributes for predictive algorithms in certified health IT.

These regulatory requirements make one thing clear: enforcement isn't hypothetical. Yet most organizations are deploying AI faster than their governance infrastructure can track. When regulators ask how a specific AI decision was governed, most organizations need weeks to reconstruct the answer manually, which means audit readiness is reactive, not built in.

The Core Pillars of AI Governance for Regulated Industries

Effective AI governance rests on five interconnected pillars. Each addresses a distinct risk surface, but together they create a cohesive control framework.

Risk Classification and Inventory

Governance begins with visibility. Organizations must catalog all AI systems in use, including third-party vendor tools and shadow AI deployed without IT oversight, and classify them by risk level.

This inventory is the precondition for all governance work: you cannot govern what you cannot see. Risk tiers typically break down as:

- High risk: Clinical decision support, fraud detection, autonomous agents

- Medium risk: Predictive analytics, customer-facing recommendations

- Lower risk: Administrative automation, internal productivity tools

The classification determines which controls apply, how frequently systems are reviewed, and what level of human oversight is required.
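The tiering described above can be sketched as a small lookup. This is a minimal illustration, not a prescribed scheme: the category names, the `AISystem` fields, and the review intervals are all assumptions chosen for the example, and a real program would set them from its own regulatory mapping.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    HIGH = "high"
    MEDIUM = "medium"
    LOWER = "lower"


# Hypothetical category names that mirror the three tiers listed above.
TIER_BY_CATEGORY = {
    "clinical_decision_support": RiskTier.HIGH,
    "fraud_detection": RiskTier.HIGH,
    "autonomous_agent": RiskTier.HIGH,
    "predictive_analytics": RiskTier.MEDIUM,
    "customer_recommendation": RiskTier.MEDIUM,
    "administrative_automation": RiskTier.LOWER,
    "internal_productivity": RiskTier.LOWER,
}

# Illustrative controls per tier. The intervals are placeholders, not regulatory values.
CONTROLS_BY_TIER = {
    RiskTier.HIGH: {"review_interval_days": 30, "human_review_required": True},
    RiskTier.MEDIUM: {"review_interval_days": 90, "human_review_required": False},
    RiskTier.LOWER: {"review_interval_days": 180, "human_review_required": False},
}


@dataclass(frozen=True)
class AISystem:
    name: str
    category: str
    vendor_supplied: bool = False


def classify(system: AISystem) -> RiskTier:
    # Unknown categories default to HIGH so unclassified or shadow AI is not under-governed.
    return TIER_BY_CATEGORY.get(system.category, RiskTier.HIGH)


def controls_for(system: AISystem) -> dict:
    # The tier, not the individual system, decides which controls apply.
    return CONTROLS_BY_TIER[classify(system)]
```

Defaulting unknown categories to the highest tier is a design choice in this sketch: a newly discovered tool stays under the strictest controls until someone classifies it explicitly.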

Data Governance and Bias Management

AI models are only as trustworthy as their training data. Governance must enforce standards for:

- Data completeness and representativeness across demographic groups

- Provenance tracking to understand data sources and transformations

- Bias detection mechanisms to identify discriminatory patterns

In healthcare, biased training data leads to unequal diagnoses. The landmark 2019 Science study by Obermeyer et al. demonstrated that a widely used commercial algorithm falsely concluded Black patients were healthier than equally sick White patients because it used health costs as a proxy for health needs.

In financial services, biased credit models constitute fair lending violations. A 2024 CFPB pilot study revealed significant disparities in how lenders treat Black and white small business owners, with Black entrepreneurs receiving less encouragement to apply.

Transparency and Explainability

Detecting bias in training data is only half the equation. Regulators also require organizations to show their work, and that obligation is codified. The ONC HTI-1 rule requires developers to provide 31 source attributes for predictive Decision Support Interventions, enabling clinical users to assess algorithms for fairness, appropriateness, validity, effectiveness, and safety.

Financial regulators require model explainability for credit decisions. The CFPB explicitly rejected the "black box" defense, stating that creditors cannot rely on generic sample reasons if they don't specifically explain the denial.

Good documentation includes:

- Data lineage and feature selection rationale

- Validation methodology and performance metrics

- Known limitations and failure modes

- Demographic performance breakdowns

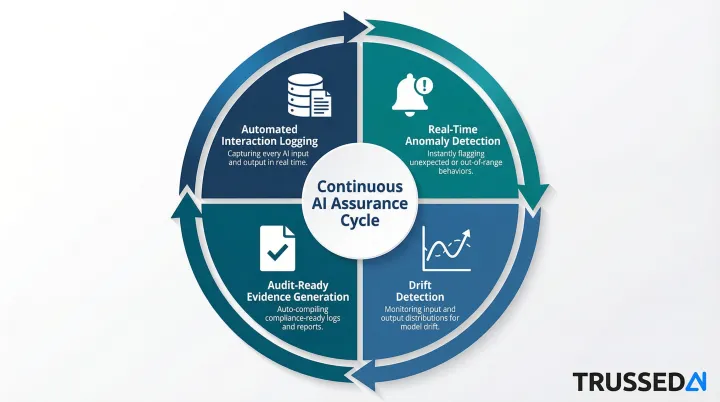

Continuous Monitoring and Audit Trails

AI systems degrade over time through model drift. Governance frameworks must require continuous monitoring against predefined KPIs, not just a one-time check at deployment.

Effective monitoring includes:

- Automated logging of every AI interaction

- Real-time anomaly detection against baseline performance

- Drift detection across input distributions and output patterns

- Audit-ready evidence generation without manual effort

This shifts organizations from point-in-time audits to continuous assurance, where governance evidence is captured automatically as a byproduct of every governed interaction.
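One common way to quantify drift across input distributions is the Population Stability Index (PSI), which compares a baseline sample with a recent sample bin by bin. The sketch below is an illustration under stated assumptions: the choice of PSI, the equal-width binning, and the 0.25 alert threshold (a widely used rule of thumb) are not taken from this guide, and `psi` and `DRIFT_THRESHOLD` are hypothetical names.

```python
import math
from typing import List, Sequence

# Rule-of-thumb alert level, assumed for illustration only.
DRIFT_THRESHOLD = 0.25


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a recent sample."""
    lo, hi = min(expected), max(expected)
    # Fall back to a width of 1.0 when every baseline value is identical.
    width = (hi - lo) / bins or 1.0

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp values outside the baseline range into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        eps = 1e-6  # floor so empty bins do not produce log(0)
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drifted(expected: Sequence[float], actual: Sequence[float]) -> bool:
    return psi(expected, actual) > DRIFT_THRESHOLD
```

A check like this would typically run on a schedule per feature and per model output, with each result written to the same log that holds the other governance evidence.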

Human Oversight and Accountability

Governance must define where human review is mandatory. High-stakes decisions in clinical care, loan approvals, and fraud detection require human checkpoints.

A cross-functional AI governance committee should include:

- Compliance and legal representatives

- Clinical or domain experts

- Data scientists and model developers

- Risk management professionals

Critically, this committee needs formal authority: the power to approve, monitor, and decommission AI systems. Advisory-only roles create accountability gaps that regulators will scrutinize.

AI Governance Best Practices in Healthcare

Healthcare AI operates under layered regulatory oversight from the FDA (AI-enabled medical devices), ONC (HTI-1 transparency rule), HHS/OCR (HIPAA), and state-level laws. This creates a complex compliance surface that governance frameworks must map explicitly and address systematically across four core areas.

Align to Recognized Frameworks

Healthcare organizations should anchor governance to established standards:

- NIST AI Risk Management Framework for operational trustworthiness criteria

- ISO/IEC 42001 as a maturity and audit-readiness target

- CHAI's Responsible Use of AI guidance for healthcare-specific implementation

Using these frameworks signals governance maturity to regulators and partner vendors.

Implement Pre-Deployment Clinical Validation

Validate AI tools on your own patient population before clinical deployment, not just on vendor-reported benchmarks. This includes:

- Red-teaming generative AI applications to surface biased outputs across demographic subgroups

- Ethics review with multidisciplinary stakeholders before high-risk AI goes live

- Performance testing across patient cohorts to identify disparate impact

Centralize Compliance Reporting

Fragmented compliance tracking creates gaps and redundancy. Build a centralized AI compliance reporting system that tracks:

- Regulatory updates and their applicability to deployed systems

- Incident logs and root cause analysis

- Performance KPIs across all AI applications

- Audit findings and remediation status

Centralized tracking cuts response time when regulations change and gives boards a single, defensible view of AI risk exposure. It also creates the foundation for governing newer, higher-risk AI categories, particularly generative and agentic systems.

Govern Generative AI and Agentic AI Separately

Generative AI and agentic AI introduce risks beyond traditional ML controls: hallucination, prompt injection, and autonomous action chains.

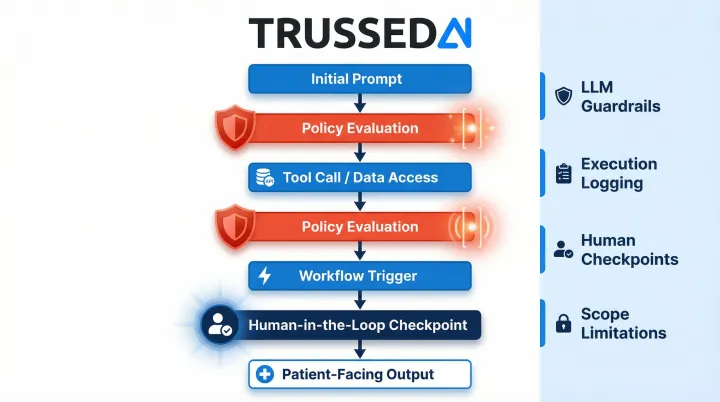

Governance must include:

- Specific guardrails for LLM outputs in clinical contexts

- Logging of all agentic task executions

- Human-in-the-loop checkpoints for patient-facing outputs

- Scope limitations on autonomous agent actions

Agentic systems executing multi-step workflows require policy evaluation at every tool call, data access, and workflow trigger, not just at the initial prompt. Runtime governance platforms like Trussed AI enforce these controls continuously, generating audit evidence as a byproduct of each governed interaction rather than as a separate compliance task.

AI Governance Best Practices in Financial Services

Financial services AI governance sits atop a layered regulatory stack: SR 11-7 model risk guidance, FINRA oversight, the EU's DORA regulation, and the emerging U.S. Treasury Financial Services AI RMF. Together, these frameworks create a distinct compliance surface from healthcare, one that places heavy emphasis on model validation, audit trails, and fair lending compliance.

Apply Model Risk Management Principles to All AI

Extend traditional model risk management practices to all AI systems, including machine learning and generative AI, not just quantitative risk models.

Each AI application should have:

- A designated model owner accountable for performance

- Independent validation records

- Defined performance thresholds that trigger review

- Documentation of model development and testing

Enforce Fairness and Fair Lending Compliance

Algorithmic bias is a legal violation under ECOA, the Fair Housing Act, and CFPB guidance. Governance must include:

- Disparate impact testing across protected classes for any AI used in credit decisions

- Documentation of fairness testing methodology and results

- Regular re-validation as models are updated or data distributions change

- Results available for regulatory examination on demand
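Disparate impact testing is often screened with an adverse impact ratio: each group's approval rate divided by the approval rate of a reference group. The sketch below assumes that approach. The 0.8 cutoff is the "four-fifths" heuristic borrowed from employment selection guidance, used here only as an example screening threshold and not as a legal test for fair lending, and the function names and group labels are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# Screening heuristic assumed for illustration; not a threshold stated in this guide.
FOUR_FIFTHS = 0.8


def approval_rates(decisions: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Approval rate per group from (group, was_approved) pairs."""
    totals: Dict[str, int] = defaultdict(int)
    approved: Dict[str, int] = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        approved[group] += int(was_approved)
    return {g: approved[g] / totals[g] for g in totals}


def adverse_impact_ratios(
    decisions: Iterable[Tuple[str, bool]], reference_group: str
) -> Dict[str, float]:
    """Each group's approval rate relative to the reference group's rate."""
    rates = approval_rates(decisions)
    ref = rates[reference_group]
    if ref == 0:
        raise ValueError("reference group has no approvals; ratio is undefined")
    return {g: r / ref for g, r in rates.items() if g != reference_group}


def flagged_groups(
    decisions: Iterable[Tuple[str, bool]], reference_group: str
) -> List[str]:
    ratios = adverse_impact_ratios(decisions, reference_group)
    return sorted(g for g, ratio in ratios.items() if ratio < FOUR_FIFTHS)
```

A flagged ratio is a prompt for deeper statistical and legal review rather than a finding in itself. Storing the inputs, ratios, and methodology alongside each model version is what makes the results available on demand.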

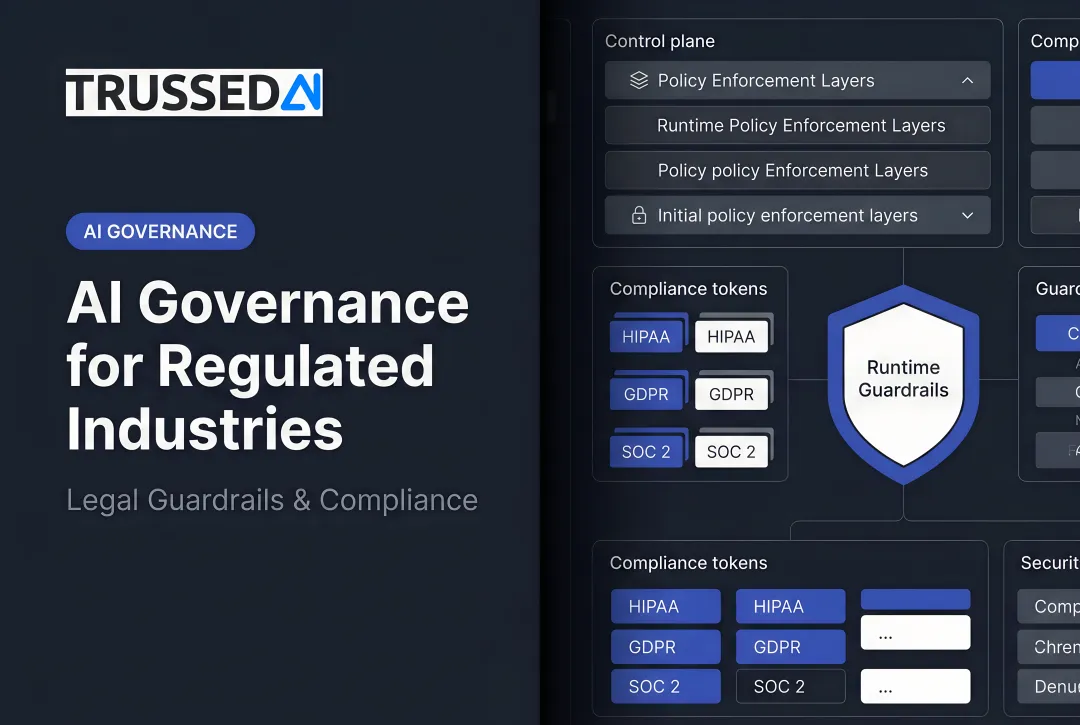

Build Real-Time Policy Enforcement into AI Infrastructure

Static policy documents fail when AI systems make decisions at machine speed. Financial institutions need governance controls enforced at runtime: every AI interaction checked against defined policies, logged, and flagged for exceptions before execution.

This matters most for agentic AI systems executing multi-step financial workflows. Platforms like Trussed AI enforce these controls as a drop-in proxy layer, evaluating policy before every tool call and API request, with no changes to existing application code required.
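The pattern of checking, logging, and blocking before execution can be illustrated with a small in-process wrapper. This is a generic sketch and does not represent Trussed AI's API or any proxy product: `governed`, `PolicyViolation`, `transfer_limit`, the in-memory `AUDIT_LOG`, and the 10,000 limit are all hypothetical names and values invented for the example.

```python
import functools
from typing import Any, Callable, Dict, List

# A policy takes the action name and call arguments and returns True when allowed.
Policy = Callable[[str, Dict[str, Any]], bool]

# Stand-in for a durable, append-only log store.
AUDIT_LOG: List[Dict[str, Any]] = []


class PolicyViolation(Exception):
    """Raised when at least one policy rejects a call."""


def governed(action: str, policies: List[Policy]):
    """Decorator that evaluates every policy before the wrapped call executes."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(**kwargs):
            failed = [p.__name__ for p in policies if not p(action, kwargs)]
            # Log allowed and blocked calls alike so the trail is complete.
            AUDIT_LOG.append({"action": action, "args": kwargs, "failed_policies": failed})
            if failed:
                raise PolicyViolation(f"{action} blocked by: {', '.join(failed)}")
            return func(**kwargs)
        return wrapper
    return decorator


def transfer_limit(action: str, args: Dict[str, Any]) -> bool:
    # Hypothetical rule: wire transfers above 10,000 are blocked pending review.
    return action != "wire_transfer" or args.get("amount", 0) <= 10_000


@governed("wire_transfer", policies=[transfer_limit])
def wire_transfer(*, account: str, amount: float) -> str:
    return f"sent {amount} to {account}"
```

Calling `wire_transfer(account="acct-1", amount=50_000)` raises `PolicyViolation` before the function body runs, and the blocked attempt is still recorded. A proxy-based deployment would apply the same check outside the application process instead of through a decorator.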

Maintain Continuous Audit-Ready Evidence

Financial regulators expect organizations to produce complete, verifiable audit trails for AI-driven decisions on demand. Governance infrastructure should automate evidence generation, capturing:

- Model inputs, outputs, and versions

- Policy checks and evaluation results

- Human override logs and approval workflows

- Complete decision chain of custody

This turns audit preparation into a continuous byproduct of governed operations, not a scramble when regulators come calling.
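One way to make such evidence verifiable is to chain records by hash, so that any later edit to an earlier record is detectable. The sketch below assumes that technique. The record fields and the function names `append_record` and `verify` are illustrative, and a production system would also need durable storage, timestamps from a trusted clock, and access controls.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder hash that precedes the first record


def _digest(prev_hash: str, payload: Dict[str, Any]) -> str:
    # Canonical JSON (sorted keys) so the same payload always hashes the same way.
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_record(chain: List[Dict[str, Any]], payload: Dict[str, Any]) -> Dict[str, Any]:
    """Append a decision record linked to the hash of the previous record."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    record = {"prev": prev_hash, "payload": payload, "hash": _digest(prev_hash, payload)}
    chain.append(record)
    return record


def verify(chain: List[Dict[str, Any]]) -> bool:
    """Recompute every hash and link; False means a record was altered or reordered."""
    prev = GENESIS
    for rec in chain:
        if rec["prev"] != prev or _digest(rec["prev"], rec["payload"]) != rec["hash"]:
            return False
        prev = rec["hash"]
    return True
```

Each payload would carry the items listed above, for example `{"model_version": "credit-v3", "input_ref": "app-1182", "output": "deny", "policy_checks": ["fair_lending:pass"], "human_override": None}`, where every value shown is made up for the example.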

How to Build an AI Governance Framework That Scales

The most common pitfall: building governance as a one-time policy project rather than operational infrastructure. Effective governance must be embedded into the AI deployment pipeline, from procurement through production monitoring, not bolted on afterward.

Practical starting sequence:

- Inventory all AI in use including vendor-embedded and shadow AI

- Classify systems by risk level based on decision impact and regulatory exposure

- Map regulatory obligations specific to your sector

- Select a framework anchor (NIST AI RMF for operational trustworthiness, ISO/IEC 42001 for management system maturity)

- Implement runtime enforcement and automated audit logging. Platforms like Trussed AI enforce governance policies at runtime across AI apps, agents, and developer tools, generating audit trails automatically as a byproduct of every governed interaction

That last step becomes especially important as organizations move beyond single-model tools into multi-agent workflows. Agentic AI introduces governance challenges that static policies can't address: autonomous action chains, inter-agent communication, and decisions made without human review at every step.

Your framework needs to handle:

- Agent action boundaries: restricting what each agent can do based on role and context

- Inter-agent authorization: validating permissions as tasks pass between agents in a workflow

- Tool call enforcement: authorizing every external call against policy before it executes

- Escalation controls: defining when autonomous decisions require human-in-the-loop review

Any framework you build today should be designed with this in mind. Runtime enforcement isn't optional at scale; it's the mechanism that keeps agentic systems from operating outside defined boundaries.
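The four requirements above can be expressed as a small authorization model. The sketch below is one possible shape under stated assumptions, not a reference design: `AgentPolicy`, `Decision`, the role names, and the tool names are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    ESCALATE = "escalate"  # pause and route to a human reviewer


@dataclass(frozen=True)
class AgentPolicy:
    role: str
    allowed_tools: FrozenSet[str]                  # agent action boundaries
    may_delegate_to: FrozenSet[str] = frozenset()  # roles this agent may hand tasks to
    escalate_tools: FrozenSet[str] = frozenset()   # allowed, but only with human review


def authorize_tool_call(policy: AgentPolicy, tool: str) -> Decision:
    """Tool call enforcement: decide before the external call executes."""
    if tool not in policy.allowed_tools:
        return Decision.DENY
    if tool in policy.escalate_tools:
        return Decision.ESCALATE
    return Decision.ALLOW


def authorize_handoff(sender: AgentPolicy, receiver: AgentPolicy, tool: str) -> Decision:
    """Inter-agent authorization: the handoff and the receiver's tool use must both pass."""
    if receiver.role not in sender.may_delegate_to:
        return Decision.DENY
    return authorize_tool_call(receiver, tool)


intake = AgentPolicy(
    role="intake",
    allowed_tools=frozenset({"read_application"}),
    may_delegate_to=frozenset({"underwriter"}),
)
underwriter = AgentPolicy(
    role="underwriter",
    allowed_tools=frozenset({"score_credit", "issue_decision"}),
    escalate_tools=frozenset({"issue_decision"}),
)
```

In this example the intake agent can hand work to the underwriter, which may score credit on its own but must escalate before issuing a decision. Denials are evaluated first, so an escalation can never widen an agent's boundaries.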

Conclusion

In healthcare and financial services, governance is the infrastructure that makes AI adoption faster, lower-risk, and durable. Organizations that invest in governance early move faster, face fewer regulatory surprises, and earn greater trust from patients, customers, and regulators.

The question is not whether to build governance, but whether your current posture is operational or aspirational. Are your policies enforced at runtime with continuous monitoring, or are they documented without enforcement?

That gap, between documented policy and enforced policy, is where regulatory exposure lives. Trussed AI's enterprise control plane addresses it directly for regulated industries, converting static policies into runtime controls that activate at every AI interaction.

Ready to assess your governance readiness? Talk to a Trussed Advisor to explore how runtime governance can transform your AI operations.

Frequently Asked Questions

What is AI governance and why does it matter specifically for healthcare and financial services?

AI governance is the set of frameworks, policies, and operational controls guiding responsible AI deployment. Healthcare and financial services require sector-specific governance because AI errors carry direct regulatory consequences, patient safety risks, and financial liability, stakes that demand enforceable controls, not just documentation.

What regulatory frameworks apply to AI governance in healthcare?

Healthcare AI is governed by FDA oversight of AI-enabled medical devices, ONC HTI-1 transparency requirements, HIPAA data privacy obligations, and emerging state-level AI laws in Colorado, Utah, and California. NIST AI RMF and ISO/IEC 42001 provide the operational structure for implementing those requirements consistently.

How does AI governance in financial services differ from healthcare?

Financial services governance is anchored in model risk management (SR 11-7), fair lending law (ECOA, CFPB), and operational resilience requirements (DORA), with stronger emphasis on model validation and disparate impact testing. Healthcare focuses more heavily on patient safety and clinical data transparency.

What is the biggest mistake organizations make when building AI governance programs?

Treating governance as a documentation exercise. Static policies not enforced at runtime fail to catch violations as they occur and cannot generate the continuous audit evidence regulators expect.

What does "runtime AI governance" mean and why does it matter?

Runtime governance means policies are enforced at the moment AI makes a decision, not reviewed after the fact. Every model output is checked against defined rules, logged, and flagged for exceptions in real time.

How should organizations govern agentic AI systems differently from traditional AI models?

Agentic AI introduces autonomous multi-step action chains that require governance controls on task scope, inter-agent permissions, human escalation triggers, and full execution logging, capabilities that single-model governance frameworks aren't built to handle.