Introduction

Enterprises are deploying AI across products, agents, and workflows faster than governance infrastructure can absorb. Organizations are putting 11x more AI models into production year over year, and the average organization is registering 261% more models over the same period.

Yet despite 90% of organizations expanding privacy programs due to AI, only 12% describe their AI governance committees as "mature and proactive". That gap creates compounding exposure in regulatory risk, operational fragility, and rising costs.

Those model counts make scale the defining variable. A governance gap manageable at 5 AI systems becomes a material liability at 500, especially in healthcare, insurance, and financial services, where audit requirements are strict and tolerance for explainability gaps is near zero.

This post breaks down 10 specific governance challenges that surface or intensify at scale in 2026, moving from organizational to technical to operational dimensions. Taken alone, each is solvable. Taken together, under deployment pressure, they compound faster than most governance teams can respond.

TL;DR

- AI governance failures at scale emerge from fragmented ownership, invisible usage, and policies that exist on paper but are never enforced at runtime

- Only 12% of organizations describe their governance committees as mature; 67% report 101–250 proposed AI use cases, yet 94% have fewer than 25 in production

- The 10 challenges cover the full production lifecycle, from accountability and shadow AI to agentic systems, regulatory complexity, and cost control

- Solving them requires continuous, runtime-enforced governance, built into AI infrastructure rather than bolted on as a compliance checklist

Why AI Governance at Scale Is a Different Problem in 2026

AI governance at scale means governing not just a handful of approved models but dozens of AI applications, agents, developer tools, vendor integrations, and automated workflows across multiple teams and geographies. Most organizations were built to ship software, not to govern continuously adaptive, non-deterministic systems. Governance processes designed for predictable SaaS deployments break down when applied to probabilistic AI outputs that change with data drift, model updates, and user behavior.

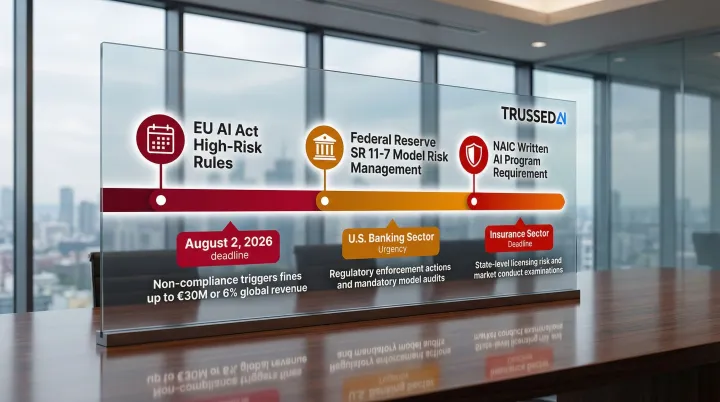

Three converging forces have expanded the governance surface faster than most enterprises' tooling and operating models can handle: the rise of agentic AI, widespread foundation model access, and tightening regulatory requirements. The compliance pressure alone is now concrete and dated:

- EU AI Act high-risk system rules take effect August 2, 2026

- Federal Reserve SR 11-7 sets supervisory expectations for active model risk management at U.S. banks

- NAIC requires written AI programs for insurers operating in member states

Organizations that haven't aligned their governance infrastructure to these timelines aren't just facing audit risk. They're facing operational risk. Regulators can require a system to be pulled from production until compliance is demonstrated.

The Top 10 AI Governance Challenges at Scale in 2026

These challenges are drawn from structural patterns seen across large enterprises and regulated industries. Each is individually manageable, but they cascade and compound as AI deployment scales.

Challenge 1: Fragmented Ownership and Accountability

AI governance responsibility is typically split across CISO, Legal, Compliance, HR, and product teams, with each function owning a slice but no single authority owning enforcement outcomes. AI governance committees are dominated by IT/Technology (57%) and Cybersecurity (42%), while Legal/Risk/Compliance (35%) and Product (8%) lag significantly.

When an incident occurs: Reconstructing who was responsible becomes forensic archaeology rather than a built-in system property. 20% of digital trust professionals do not know who would be ultimately accountable if an AI system caused harm, and 56% indicate they do not know how quickly they could halt an AI system due to a security incident.

The larger the AI estate, the worse this problem gets. Policies are written but never enforced, and risk assessments happen in silos.

Challenge 2: Adoption Velocity Outrunning Governance Capacity

AI deployment cycles are measured in weeks while governance review cycles are measured in months. Risk committees have no mechanism to approve AI deployments fast enough, so employees fill the gap on their own terms. This is a tooling and process architecture problem, not a people problem.

Without automated audit trails, intent-based enforcement, and runtime guardrails, governance will always lag adoption regardless of team size. Gartner emphasizes that point-in-time audits are insufficient. Continuous monitoring and automated policy enforcement at runtime are critical as AI systems make autonomous decisions.

Organizations using AI governance tools get over 12x more AI projects into production than those without. When governance operates at deployment speed, it enables faster production releases, not fewer.

Challenge 3: Shadow AI as the Largest Unmanaged Risk Surface

Shadow AI refers to employees' use of unapproved AI tools, including browser-based tools, IDE-embedded copilots, desktop apps, and personal accounts, most of which are invisible to IT. 47% of genAI users rely on personal apps, and 89% of logins to AI SaaS applications bypass organizational Single Sign-On (SSO).

Browser extensions as a side door: 20% of enterprise users have GenAI browser extensions installed, creating persistent data leakage vectors. The average organization sees 8.2 GB of data uploaded to SaaS AI apps per month.

Legacy DLP and CASB tools were not built for conversational AI. They lack visibility into bidirectional AI traffic intent and have no way to detect multi-turn cumulative data leakage. Network-level discovery is a prerequisite for any real governance program.
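
To make "multi-turn cumulative data leakage" concrete, here is a minimal Python sketch. It assumes a hypothetical `ConversationLeakTracker`, two toy regex detectors, and arbitrary limits; none of these come from a real product, and a production system would use proper classifiers rather than regexes. The point is only that no single turn crosses the per-turn limit, yet the conversation as a whole does.

```python
import re
from collections import defaultdict

# Hypothetical detectors for illustration only.
PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

class ConversationLeakTracker:
    """Accumulates distinct sensitive values per conversation across turns."""

    def __init__(self, per_turn_limit=2, cumulative_limit=3):
        self.per_turn_limit = per_turn_limit        # assumed threshold
        self.cumulative_limit = cumulative_limit    # assumed threshold
        self.seen = defaultdict(set)  # conversation_id -> {(kind, value)}

    def check_turn(self, conversation_id, text):
        found = {(kind, value)
                 for kind, rx in PATTERNS.items()
                 for value in rx.findall(text)}
        self.seen[conversation_id] |= found
        return {
            "turn_violation": len(found) > self.per_turn_limit,
            "cumulative_violation":
                len(self.seen[conversation_id]) > self.cumulative_limit,
        }

tracker = ConversationLeakTracker()
turns = [
    "Summarize the ticket from ana@example.com",
    "Also include bob@example.com and SSN 123-45-6789",
    "Add carol@example.com to the summary",
]
results = [tracker.check_turn("conv-1", t) for t in turns]
```

A per-request keyword filter would pass all three turns. The tracker flags the third because it keeps state across the conversation.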

Challenge 4: Agentic AI Operating Beyond Traditional Guardrails

Agentic AI is a governance category of its own. Agents can autonomously call APIs, query databases, execute multi-step workflows, and make decisions using inherited credentials, with minimal human oversight. This creates an identity and authorization problem traditional IAM systems were not built for.

Gartner predicts 40% of enterprise applications will include integrated task-specific agents by 2026, up from less than 5% in 2025. Yet only 21% of companies currently have a mature governance model for autonomous agents.

Credential sprawl: Machine identities now outnumber human identities by 82 to 1. 50% of organizations have reported breaches linked to compromised machine identities (API keys, TLS certificates). 1 in 8 enterprise security breaches now involves an agentic system, with 78% of breached agents having significantly broader permission scopes than their designated function required.

Agents require tokens and API keys across many systems, which multiply rapidly and become over-privileged or stale without tight lifecycle management. The same governance policies applied to human employees must extend to machine actors.
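
One way to picture lifecycle management for agent credentials is a periodic audit that compares what each credential is granted against what the agent's function needs. The sketch below is illustrative: `AgentCredential`, `audit_credential`, the scope names, and the 30-day idle window are all assumptions, not part of any specific IAM product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

@dataclass
class AgentCredential:
    agent: str
    granted_scopes: frozenset
    required_scopes: frozenset   # what the agent's designated function needs
    last_used: datetime

def audit_credential(cred, now, max_idle=timedelta(days=30)):
    """Report scopes beyond what is required and whether the credential is stale."""
    return {
        "agent": cred.agent,
        "excess_scopes": sorted(cred.granted_scopes - cred.required_scopes),
        "stale": now - cred.last_used > max_idle,
    }

now = datetime(2026, 1, 15, tzinfo=timezone.utc)
cred = AgentCredential(
    agent="claims-triage-agent",  # hypothetical agent name
    granted_scopes=frozenset({"claims:read", "claims:write", "payments:write"}),
    required_scopes=frozenset({"claims:read"}),
    last_used=now - timedelta(days=45),
)
finding = audit_credential(cred, now)
```

Here the finding lists two excess scopes and marks the credential stale, which is the kind of signal that should trigger revocation or rotation rather than sit in a quarterly report.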

Challenge 5: Data Governance Gaps Undermining AI Governance

AI governance without data governance is reactive. Organizations that lack data lineage, provenance tracking, and purpose limitation controls have no factual foundation to assess or manage AI risk at scale.

65% of organizations struggle to access relevant, high-quality data efficiently. While 66% report having a data tagging system in place, only 51% describe their system as comprehensive.

As AI systems are retrained, fine-tuned, and repurposed, datasets evolve and are reused in ways that were never audited. Bias or representativeness analyses performed once at launch become stale without embedded continuous monitoring. 77% of organizations identify IP protection of AI datasets as a top concern, and 70% acknowledge risk exposure from using proprietary or customer data in AI training.

Challenge 6: Post-Deployment Drift and Monitoring Failures

AI systems are dynamic. They operate on evolving data distributions, under shifting behavioral conditions, and in changing regulatory environments. Most organizations monitor only technical performance metrics, not distributional shifts, emergent feedback loops, or compliance drift.

Data drift (changes in the statistical properties of model inputs) and concept drift (changes in the relationship between inputs and the outcome being predicted) can cause catastrophic financial and reputational losses if left unmonitored.

Regulatory requirements: Article 72 of the EU AI Act (Article 61 in the original proposal) requires providers of high-risk AI systems to establish and document a proportionate post-market monitoring system to actively collect and analyze performance data throughout the system's lifetime. The NIST AI RMF "MANAGE 4.1" subcategory requires post-deployment AI system monitoring plans, including mechanisms for incident response, recovery, and decommissioning.

Intervention thresholds, such as when to retrain, rollback, or escalate, are rarely defined in advance. They get negotiated ad hoc when anomalies appear, with no systematic link between monitoring signals and governance processes like risk committees or policy updates.
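
Defining thresholds in advance can be as simple as tying a drift metric to a named action. The sketch below uses the Population Stability Index (PSI), one common drift measure, as a stand-in; the post does not prescribe a metric. The 0.10 and 0.25 cut-offs are widely used rules of thumb rather than a standard, and the action names are hypothetical.

```python
import math

def psi(expected, actual, eps=1e-6):
    """Population Stability Index between two binned distributions (proportions)."""
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) on empty bins
        total += (a - e) * math.log(a / e)
    return total

# Assumed thresholds, checked from most to least severe.
ACTIONS = [
    (0.25, "escalate_and_consider_rollback"),
    (0.10, "schedule_retrain_review"),
]

def intervention(score):
    """Map a drift score to a pre-agreed governance action."""
    for threshold, action in ACTIONS:
        if score >= threshold:
            return action
    return "no_action"

baseline = [0.25, 0.25, 0.25, 0.25]   # input distribution at launch
current = [0.10, 0.20, 0.30, 0.40]    # input distribution observed in production
score = psi(baseline, current)
action = intervention(score)
```

With these example distributions the score falls between the two thresholds, so the pre-agreed action is a retrain review. The useful part is less the metric than the fact that the mapping exists before the anomaly does.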

Challenge 7: Third-Party and Vendor AI Extending the Blind Spot

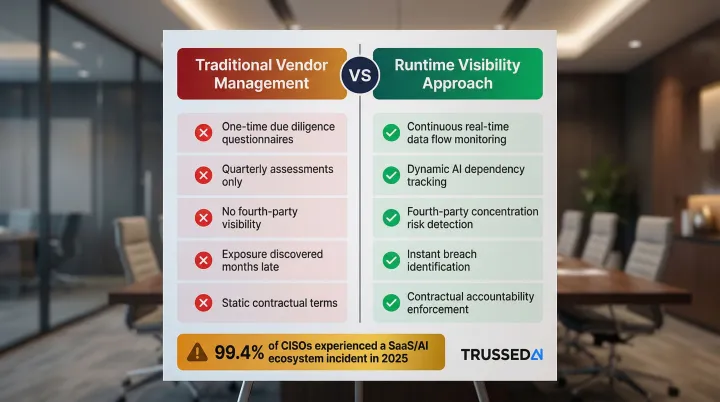

Organizations have no direct visibility into whether vendor-embedded AI models process their data compliantly, whether customer data feeds into model training, or which fourth-party AI services their vendors use. Traditional vendor management programs were not designed to answer these questions continuously.

99.4% of CISOs experienced at least one SaaS or AI ecosystem security incident in 2025, yet only 55% of organizations require contractual terms defining data ownership, responsibility, and liability with AI vendors.

One-time due diligence questionnaires discover exposure months after it occurs. Manual vendor questionnaires have hit a scaling wall, failing to provide visibility into fourth-party concentration risk or dynamic AI dependencies. What enterprises need is real-time runtime visibility into which data flows to vendor AI systems, not quarterly assessments.

Challenge 8: Multi-Jurisdictional Regulatory Complexity

Organizations operating globally face divergent AI regulatory obligations, including EU AI Act risk classification requirements, sector-specific U.S. rules (banking, healthcare, insurance), and evolving data localization requirements, with no single unified compliance framework that satisfies all jurisdictions simultaneously.

Gartner projects that fragmented AI regulation will quadruple and extend to 75% of the world's economies by 2030, driving $1 billion in total compliance spend. AI governance platform spending alone is expected to reach $492 million in 2026.

Compliance monitoring has no path to scale manually when AI systems operate across dozens of markets. Governance must continuously track which regulations apply to which systems and generate evidence of adherence as a byproduct of operation, not as a separate audit exercise.

High-risk systems under the EU AI Act must comply with:

- Article 11: Technical documentation requirements

- Article 12: Automatic recording of events and logs

- Article 13: Transparency and information provision to deployers

- Article 14: Design for effective human oversight
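
Tracking "which regulations apply to which systems" can start as a machine-readable rule table evaluated against an inventory of AI systems. The sketch below is a toy: the `AISystem` fields, the rule predicates, and the example system are assumptions, and real applicability decisions require legal review.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AISystem:
    name: str
    markets: frozenset
    sector: str
    eu_high_risk: bool = False  # result of an EU AI Act risk classification

# Hypothetical rule table: (obligation label, applicability predicate).
RULES = [
    ("EU AI Act Art. 11-14 (high-risk)",
     lambda s: "EU" in s.markets and s.eu_high_risk),
    ("SR 11-7 model risk management",
     lambda s: "US" in s.markets and s.sector == "banking"),
    ("NAIC written AI program",
     lambda s: "US" in s.markets and s.sector == "insurance"),
]

def applicable_obligations(system):
    """Return the labels of every rule whose predicate matches the system."""
    return [label for label, applies in RULES if applies(system)]

underwriting = AISystem(
    name="underwriting-model",  # hypothetical system
    markets=frozenset({"US", "EU"}),
    sector="insurance",
    eu_high_risk=True,
)
obligations = applicable_obligations(underwriting)
```

Keeping the table in code means a change in markets or classification re-evaluates obligations automatically instead of waiting for the next manual review.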

Challenge 9: Auditability and Explainability at Scale

Regulators, enterprise customers, and internal risk committees increasingly demand proof of how AI decisions were made, but most organizations lack the infrastructure to produce this evidence on demand. Governance activity is episodic, not embedded into every AI interaction.

Organizations that perform regular audits and assessments of AI system performance and compliance are over three times more likely to achieve high GenAI business value than those that do not.

Production AI systems handling millions of interactions require automated, continuous audit trail generation, not manually reconstructed logs, to hold up under regulatory scrutiny or litigation. U.S. federal agencies (CFPB, DOJ) continue to scrutinize algorithmic decision-making under the Equal Credit Opportunity Act (ECOA), emphasizing that creditors must be able to explain adverse actions and may not rely on "broad buckets" for automated credit denials.
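
One common building block for audit trails that hold up under scrutiny is a hash-chained, append-only log, where each record's hash covers the previous record's hash so later edits are detectable. The sketch below is a minimal illustration using only the Python standard library; the record fields and the `credit-model` example are hypothetical, and a real system would also need durable storage and access controls.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first record

def _digest(event, prev_hash):
    payload = json.dumps({"event": event, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode()).hexdigest()

def append_record(log, event):
    """Append an event whose hash covers the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    record = {"event": event, "prev_hash": prev_hash,
              "hash": _digest(event, prev_hash)}
    log.append(record)
    return record

def verify_chain(log):
    """Recompute every hash; any edited or reordered record breaks the chain."""
    prev_hash = GENESIS
    for record in log:
        if record["prev_hash"] != prev_hash:
            return False
        if record["hash"] != _digest(record["event"], prev_hash):
            return False
        prev_hash = record["hash"]
    return True

log = []
append_record(log, {"system": "credit-model", "decision": "deny",
                    "reasons": ["debt_to_income"]})
append_record(log, {"system": "credit-model", "decision": "approve",
                    "reasons": []})
intact = verify_chain(log)
```

Recording specific reasons alongside each decision, rather than a generic category, is what lets the same log support adverse-action explanations later.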

Challenge 10: AI Cost Governance and Operational Sprawl

As AI deployments multiply across teams, models, and applications, compute and token costs become opaque. Organizations struggle to attribute spending to specific use cases, teams, or outcomes, making it impossible to decide which AI investments to scale or retire.
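
Attribution becomes tractable once every request carries team and use-case tags. The sketch below assumes usage records already tagged that way and uses made-up model names and per-1K-token prices; substitute your provider's actual rates.

```python
from collections import defaultdict

# Hypothetical prices in USD per 1,000 tokens.
PRICE_PER_1K = {"model-small": 0.0005, "model-large": 0.01}

def attribute_costs(usage_records):
    """Sum estimated cost per (team, use_case) from tagged usage records."""
    totals = defaultdict(float)
    for r in usage_records:
        cost = r["tokens"] / 1000 * PRICE_PER_1K[r["model"]]
        totals[(r["team"], r["use_case"])] += cost
    return dict(totals)

records = [
    {"team": "claims", "use_case": "triage", "model": "model-large", "tokens": 200_000},
    {"team": "claims", "use_case": "triage", "model": "model-small", "tokens": 1_000_000},
    {"team": "support", "use_case": "chat", "model": "model-small", "tokens": 400_000},
]
costs = attribute_costs(records)
```

Untagged requests cannot be attributed after the fact, which is why tagging has to be enforced at the point where requests are made.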

Gartner predicts a 10x increase in AI agent usage by 2027. Because agents run continuously, generate API calls, and consume compute tokens around the clock, they require strict economic tracking to prevent runaway cloud infrastructure costs.

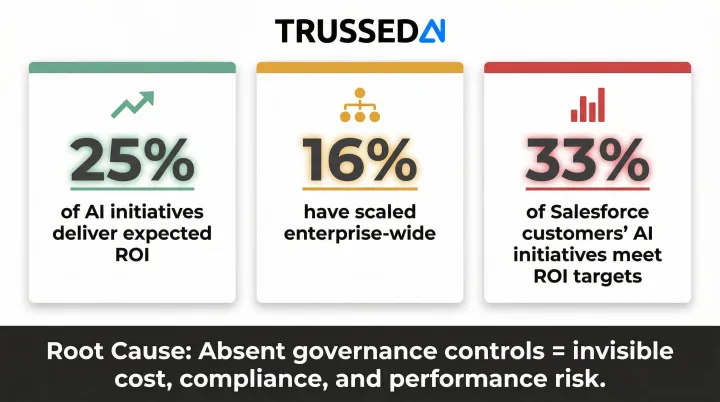

Only around 25% of AI initiatives deliver expected ROI, and just 16% have scaled enterprise-wide. Similarly, only 33% of Salesforce customers' AI initiatives are meeting ROI targets.

Uncontrolled AI cost sprawl signals absent governance controls. The same lack of visibility that makes costs unattributable also means compliance, security, and performance risks are similarly invisible.

From Static Policies to Runtime Control: How Enterprises Are Closing the Gap

The organizations making progress on these 10 challenges share a common architectural move: they stop treating governance as a pre-launch checklist and start enforcing it as a continuous runtime capability, where every AI interaction is governed, logged, and attributable.

In practice, this means a unified AI control plane sitting between applications and models, enforcing policies in real time, routing requests intelligently, generating audit evidence automatically, and providing full visibility across the AI stack, from developer environments to production systems, without requiring changes to application code.
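
As a rough mental model of that control-plane pattern, the sketch below wraps a model call so that every request is policy-checked and logged before it reaches the model. It is a generic illustration, not Trussed AI's actual interface: `make_governed_client`, `PolicyViolation`, the `no_ssn` policy, and the `fake_model` stand-in are all hypothetical.

```python
from typing import Callable

class PolicyViolation(Exception):
    """Raised when a request is blocked by a runtime policy."""

def make_governed_client(model_call: Callable[[str], str], policies, audit_log):
    """Wrap a model call so every request is policy-checked and logged."""
    def governed(prompt: str, user: str) -> str:
        for name, check in policies:
            if not check(prompt, user):
                audit_log.append({"user": user, "outcome": "blocked", "policy": name})
                raise PolicyViolation(name)
        response = model_call(prompt)
        audit_log.append({"user": user, "outcome": "allowed", "policy": None})
        return response
    return governed

# Stand-in for a real model client; purely illustrative.
def fake_model(prompt: str) -> str:
    return f"echo: {prompt}"

# Toy policy: block prompts that mention an SSN.
policies = [("no_ssn", lambda prompt, user: "ssn" not in prompt.lower())]
audit_log = []
ask = make_governed_client(fake_model, policies, audit_log)
```

Because the check and the log entry live in the wrapper, the calling application does not need its own governance logic, and the audit evidence is produced whether a request is allowed or blocked.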

Trussed AI was built specifically to address this gap. Enterprises in regulated industries, including insurance, healthcare, and financial services, use Trussed AI's control plane to enforce governance at runtime across AI apps, agents, and developer tools.

The platform integrates as a drop-in proxy with no changes to existing application code. Supported integration paths include:

- Python, TypeScript, and Go SDKs

- REST API

- Public cloud APIs via AWS and Google Cloud partnerships

Organizations using this approach report cutting manual governance workload by 50%, with audit-ready compliance evidence generated automatically as a byproduct of every governed interaction.

Deployment is flexible: teams can run self-managed instances in their own cloud or data center, or opt for Trussed-operated dedicated instances, both options fitting within existing AWS and Google Cloud environments without new infrastructure overhead.

Conclusion

The 10 challenges covered are not isolated technical problems. They form a cascade. Fragmented ownership enables velocity gaps, velocity gaps create shadow AI, invisible usage blocks auditability, and missing audit trails make regulatory compliance a guessing game at scale.

That cascade has a cost. Enterprises in regulated industries cannot afford to wait for governance maturity to catch up organically. The window to build governance infrastructure is the same window in which AI is being adopted. Retrofitting controls after the fact is far more expensive than building them in now, both in engineering effort and in regulatory exposure. Organizations that treat governance as infrastructure are the ones that scale AI without compounding the risks they already carry.

If your organization is deploying AI across teams, models, and workflows, Trussed AI's enterprise control plane enforces policy in real time, so you're not discovering compliance gaps in an audit six months after the fact.

Frequently Asked Questions

What is one of the challenges of scaling AI programs?

Governance infrastructure, including accountability structures, monitoring, and policy enforcement, does not scale at the same pace as AI deployment. As the AI estate grows, the gap between written policies and runtime enforcement widens, creating compounding risk that worsens with speed and scale.

What are the challenges of AI governance?

Key challenges include fragmented ownership, the gap between written policies and runtime enforcement, auditability failures, and governing systems that change after deployment. Each challenge compounds as organizations move from a handful of AI systems to hundreds.

Will data governance be replaced by AI?

No. AI can automate many data governance tasks like classification, lineage tracking, and anomaly detection, but it does not replace the organizational structures, accountability decisions, and regulatory obligations that constitute data governance. It handles the repetitive work so governance teams can focus on the decisions that require human judgment.

What are the challenges of conversational AI?

Conversational AI is bidirectional and unpredictable in output, which makes legacy keyword-based security tools unable to detect intent-based risks. Key challenges include multi-turn data leakage, prompt injection, and policy violations that accumulate across a conversation rather than triggering on a single request.

How is AI governance different in regulated industries?

Regulated industries face stricter auditability requirements, sector-specific rules (HIPAA, financial services regulations), and less tolerance for explainability gaps. Governance must produce verifiable evidence continuously and enforce controls in real time, not just exist as written policy.

What is the difference between AI governance and AI compliance?

Compliance is a subset of governance focused on meeting specific regulatory obligations. Governance is the broader operating model, including accountability structures, risk management, monitoring, and enforcement, that makes compliance repeatable and enforceable as the AI estate expands.