Introduction

Enterprises are deploying AI at unprecedented scale - autonomous agents, large language models, generative workflows - but governance frameworks have not kept pace. This creates real regulatory and security exposure, particularly in healthcare, insurance, and financial Solution.

According to IBM's 2025 Cost of a Data Breach report, 13% of organizations experienced an AI-related security incident, and 97% of those breached organizations lacked proper AI access controls. The proliferation of "Shadow AI" adds an average of $670,000 to total breach costs. Attackers are weaponizing AI too: CrowdStrike reports an 89% year-over-year increase in AI-enabled attacks, driving the average eCrime breakout time down to just 29 minutes.

NIST has responded with two interconnected developments: the updated Cybersecurity Framework 2.0 (now with a Govern function) and the new Cyber AI Profile (NIST IR 8596), which layers AI-specific cybersecurity priorities onto the existing framework. The Profile addresses how to secure AI systems, use AI for defense, and defend against AI-enabled attacks.

This guide covers the Cyber AI Profile in practical terms: the risk categories it defines, how it maps to CSF 2.0, and the specific controls enterprise AI teams need to implement to stay compliant in regulated environments.

TLDR

- NIST IR 8596 extends CSF 2.0 with three AI-specific focus areas: Secure, Defend, and Thwart - each mapped to the six CSF functions

- Traditional security programs miss AI-specific risks like prompt injection, data poisoning, model drift, and adversarial inputs without deliberate AI controls

- The Profile layers AI-specific priorities across all six CSF 2.0 functions with proposed priority levels to guide implementation

- Compliance is voluntary but treated as a de facto benchmark by regulators in healthcare, insurance, and financial Solution

- The biggest gap for most enterprises is runtime enforcement - continuously monitoring, governing, and auditing AI behavior in production

What Is the NIST Cyber AI Profile?

The Cyber AI Profile (NIST IR 8596, published December 2025 as a preliminary draft) is a Community Profile built on top of NIST CSF 2.0. It doesn't replace existing guidance but surfaces the highest-priority cybersecurity outcomes for organizations deploying or using AI systems.

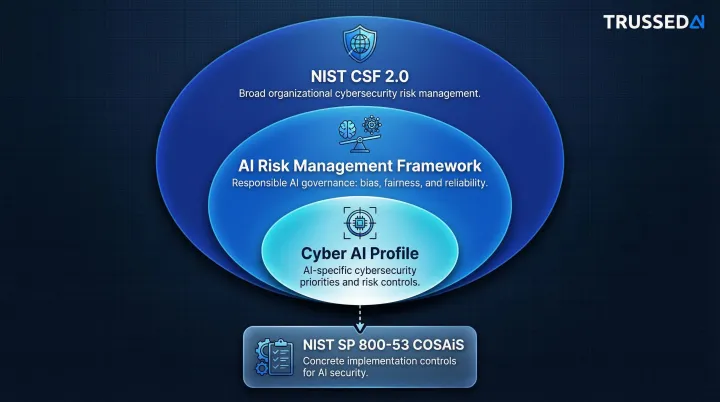

The Profile doesn't stand alone - it draws from two other NIST frameworks, each covering a distinct layer of risk:

- NIST CSF 2.0 covers broad cybersecurity risk management across all technology environments

- AI Risk Management Framework (AI RMF) addresses responsible AI use: bias, fairness, and reliability

- Cyber AI Profile sits at the intersection of both, with prioritized subcategories specific to AI deployments

A fourth document goes one level deeper: NIST's Control Overlays for Securing AI Systems (COSAiS) is a proposed overlays effort and concept paper - not a finalized SP 800-53 publication - that is developing implementation-focused guidance for applying specific security controls to AI environments, with the goal of translating the Profile's outcomes into concrete steps.

Who should use it:

The Profile is intended for any organization using, building, or procuring AI technologies - from standalone chatbots and document summarizers to AI-enabled enterprise workflows and autonomous agentic systems - regardless of maturity stage.

The Three AI Focus Areas: Secure, Defend, and Thwart

The Cyber AI Profile organizes all its guidance around three Focus Areas, each addressing a different angle of AI-related cybersecurity risk. These areas are interdependent - securing AI systems enables better defense, and understanding adversarial AI use informs how to build resilience.

Secure: Protecting Your AI Systems

The Secure focus area addresses cybersecurity challenges introduced when integrating AI into an organization's environment - covering the AI systems themselves (models, agents, prompts, training data), their supply chains, and the infrastructure they depend on.

Key point: AI vulnerabilities are more contextual, dynamic, and opaque than traditional software bugs, making them harder to identify, verify, and remediate. NIST explicitly notes that AI systems require different security approaches because their behavior changes based on inputs, training data, and environmental context - not just code logic.

Defend: Using AI to Strengthen Cybersecurity

The Defend focus area identifies opportunities to use AI to enhance cybersecurity operations - from anomaly detection and threat correlation to automated incident triage, compliance checks, and adversarial training simulations.

Critical caveat: AI defense capabilities must be continuously evaluated for maturity before being trusted for autonomous defensive action. Human-in-the-loop validation remains essential - deploying AI security tools without ongoing verification creates gaps that adversaries can exploit.

Thwart: Defending Against AI-Enabled Attacks

The Thwart focus area addresses how adversaries are using AI to conduct faster, more scalable, and harder-to-detect attacks. Threat types now include:

- Spear-phishing campaigns generated and personalized at scale

- Deepfake-based social engineering targeting executives and employees

- Malware that evades signature-based detection by generating novel code at runtime

- Autonomous AI agents capable of executing multi-stage attack campaigns without human direction

What differentiates these attacks is their speed, scale, and adaptability, which compresses the window for human response. Mandiant identified adaptive malware such as PROMPTFLUX and PROMPTSTEAL, which query large language models mid-execution for "just-in-time" code generation to evade static defenses.

How the Six CSF 2.0 Functions Apply to AI Systems

The Cyber AI Profile maps its AI-specific considerations onto all six CSF 2.0 core functions, with each Subcategory assigned a proposed priority (1 = High, 2 = Moderate, 3 = Foundational). What makes these considerations distinct is that standard CSF guidance doesn't account for AI-specific attack surfaces like model poisoning, agent autonomy, or inference-layer vulnerabilities - the items below do.

Starting with governance and working through to recovery, here's what changes when AI is in scope:

Govern

AI governance requires explicit updates to risk frameworks - existing policies rarely account for how AI systems behave, fail, or get compromised:

- Integrate AI-specific risks into formal risk appetite statements - don't assume existing frameworks cover AI adequately

- Apply supply chain oversight to training data provenance with the same rigor as software dependencies, including third-party model verification

- Assign named human accountability for decisions made by self-directed AI systems

- Increase policy review frequency - the AI threat landscape evolves faster than traditional software cycles

Identify

Standard asset inventories miss most of the AI attack surface. Identification requirements expand significantly:

- Inventory AI models, APIs, keys, agents, and their integrations and permissions - AI-specific components sit outside traditional IT asset management

- Track adversarial inputs, data poisoning, model drift, and concept drift as distinct vulnerability classes - these don't map to software CVEs

- Build AI-specific procedures into incident playbooks, including how to disable model autonomy and restore validated model versions

Protect

Protection controls must treat AI systems as distinct actors - not just as applications running on traditional infrastructure:

- Issue unique identities and credentials to AI systems and agents to enable traceability of AI-initiated actions

- Apply least-privilege access to AI agents specifically - limit what systems can access and execute, not only what users can do

- Log inference inputs and outputs at the model level - application logs don't capture the decisions driving AI behavior

- Restrict agents from executing arbitrary code to prevent unauthorized commands or unintended system access

- Maintain protected backups of model versions and clean training datasets to enable rapid recovery from poisoning or compromise

Detect

Standard application monitoring doesn't surface AI-specific indicators of compromise. Detection requires separate baselines and new monitoring categories:

- Track AI-generated network traffic independently from human-generated traffic - AI systems produce distinct patterns that require their own baselines

- Monitor for adversarial input patterns and anomalous model outputs, not just application-layer errors

- Create dedicated monitoring procedures for agent-initiated actions - traditional user activity monitoring leaves autonomous behavior invisible

- Flag unexpected changes in model output distributions as potential indicators of drift or tampering

Respond

AI incidents don't fit neatly into traditional triage categories. Response procedures need dedicated tracks:

- Create separate incident categories for adversarial attacks, data poisoning, and model compromise - each requires different triage and containment steps

- Preserve AI-specific forensic artifacts during investigation: model logs, inference tables, input/output chains, and training data provenance

- Explicitly check for adversary AI usage - incident analysis should include identifying AI-generated attack content or automated attack patterns

Recover

Recovery scope for AI incidents varies dramatically depending on what was compromised. Clean restoration requires validation steps that don't exist in traditional recovery playbooks:

- Validate that restored model versions and training datasets are free of residual poisoning or drift - don't assume backups are clean

- Evaluate how AI-driven defense tools performed during the incident and use findings to improve them

- Scope recovery complexity upfront: remediation can range from credential rotation to full model retraining, depending on the nature of the compromise

Key AI-Specific Cybersecurity Risks the Profile Targets

AI introduces novel attack surfaces that traditional cybersecurity programs don't cover:

Prompt injection - Manipulating LLM behavior through crafted inputs that override intended instructions

Data poisoning - Corrupting training data to alter model behavior in subtle, hard-to-detect ways

Model inversion - Extracting sensitive training data by analyzing model responses

Adversarial inputs - Causing misclassification or incorrect decisions through carefully crafted inputs

Excessive agency - AI agents taking unauthorized or unintended actions beyond their intended scope

Each of these vectors also extends into dependencies you don't control directly. According to IBM, supply chain compromises accounted for 30% of AI security incidents - a share that grows as organizations rely on third-party models and APIs.

AI Supply Chains Carry Risks Traditional Audits Miss

Unlike traditional software supply chains (hardware + code), AI supply chains include training data, pre-trained model weights, fine-tuning datasets, and external APIs - each a potential vector for introducing vulnerabilities. The NIST Profile explicitly calls for treating data provenance with the same rigor as software provenance.

Attacks Execute Faster Than Human Response Windows Allow

AI-enabled attacks operate at machine speed: scanning for vulnerabilities, crafting personalized phishing content, and executing multi-stage lateral movement in the time it takes a human analyst to triage an alert. This compresses detection and response windows significantly, making continuous, automated monitoring a core operational requirement.

A Practical NIST Compliance Checklist for AI Platform Teams

Step 1 - Inventory your AI assets

List all AI models (in-house and third-party), agents, APIs, keys, datasets, and their permissions and integrations. This is a prerequisite for every other NIST compliance activity.

Include:

- Data lineage and training data provenance, not just software components

- Model versions and their deployment locations

- API keys and their associated permissions

- Agent capabilities and integration points

Step 2 - Establish AI-specific governance policies

Update your risk appetite statements, access control policies, and incident response plans to include AI-specific scenarios (model compromise, data poisoning, adversarial input).

Key actions:

- Assign human accountability for AI system actions

- Create a review cadence that matches the pace of AI capability changes

- Define acceptable AI risk levels explicitly

- Document AI-specific incident categories and response procedures

Step 3 , Implement runtime monitoring and policy enforcement

Unlike traditional applications, AI systems require monitoring at the inference level - logging every model input, output, and action to detect adversarial patterns in real time.

Key actions:

- Log all model inputs, outputs, and agent actions for continuous anomaly detection

- Enforce governance policies automatically at inference time, not just at deployment

- Generate audit evidence as a standard output of every governed interaction

- Extend monitoring coverage to developer environments, not just production systems

Trussed AI's control plane handles this at the infrastructure layer, enforcing policies across models, agents, and developer environments with no application code changes required. This approach satisfies NIST's continuous oversight requirements while keeping compliance overhead low.

Step 4 , Protect data at every stage

Essential protections:

- Encrypt training data and model artifacts at rest and in transit

- Maintain versioned, regularly tested backups of clean training datasets and validated model checkpoints

- Implement output filtering to prevent sensitive data leakage through AI responses

- Apply least-privilege access to training data and model weights

Step 5 , Build and test AI-specific incident response

Define AI-specific incident categories and response playbooks that include disabling model autonomy, rolling back to a prior validated model version, and preserving AI forensic artifacts. Once playbooks are documented, validate them regularly. Conduct tabletop exercises that simulate:

- Prompt injection attacks

- Supply chain poisoning scenarios

- Deepfake-based social engineering against personnel

- Model compromise and data exfiltration

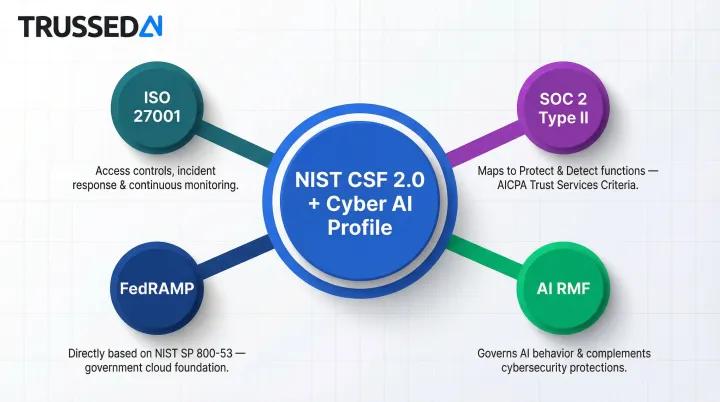

How NIST Aligns with Security Standards and the AI RMF

NIST CSF 2.0 and NIST SP 800-53 serve as a universal baseline that maps directly to other major frameworks. NIST provides official mapping tables that offer a general indication of SP 800-53 Rev 5 control coverage with respect to ISO/IEC 27001:2022.

Framework Alignment at a Glance

Information security management standards share strong alignment on access controls, incident response, and continuous monitoring

Audit attestation standards , Audit criteria map closely to the CSF's Protect and Detect functions. AICPA provides an official mapping comparing NIST 800-53 requirements to the 2017 Trust Services Criteria

FedRAMP , Directly based on NIST SP 800-53 controls, meaning NIST-aligned AI programs have a strong foundation for government cloud requirements

AI RMF and the Cyber AI Profile: How They Work Together

While the Cyber AI Profile focuses on cybersecurity risks associated with AI, the AI RMF addresses the broader responsible use of AI (trustworthiness, bias, fairness, reliability). NIST intends both to be used together - the AI RMF governs what the AI does, the Cyber AI Profile governs how it is protected.

Practical Mapping Guidance for Regulated Industries

That dual-framework structure translates directly into industry-specific compliance work. Most regulated organizations can map NIST controls to their sector's requirements without starting from scratch:

- Healthcare organizations , Map NIST Protect and Govern controls to HIPAA Security Rule safeguards. HHS has released an official crosswalk mapping HIPAA Security Rule requirements directly to the NIST Cybersecurity Framework

- Financial Solution firms , Map to NYDFS cybersecurity regulations and GLBA guidelines

- Insurance carriers and enterprise AI teams , Align NIST's audit-readiness requirements with automated evidence generation to reduce duplicative compliance work

Frequently Asked Questions

What is the NIST Cyber AI Profile (NIST IR 8596)?

NIST IR 8596 is a voluntary Community Profile published in December 2025 that extends NIST CSF 2.0 with AI-specific cybersecurity priorities, organized around three focus areas (Secure, Defend, Thwart) and mapped across the six CSF core functions. It sits alongside existing frameworks, adding AI-specific guidance without replacing them.

Is NIST compliance mandatory for AI platforms?

NIST frameworks are voluntary for most organizations, but they are widely used as regulatory benchmarks,especially in federal contracting (FedRAMP requires NIST SP 800-53). Regulators in banking, healthcare, and financial Solution increasingly reference NIST when evaluating cybersecurity diligence.

How does the NIST AI RMF differ from the Cybersecurity Framework for AI?

The AI RMF addresses trustworthiness and responsible use of AI broadly - bias, reliability, explainability - while the Cyber AI Profile focuses specifically on cybersecurity risks to and from AI systems. NIST designed both to be used together: the AI RMF governs AI behavior; the Cyber AI Profile protects AI infrastructure.

What are the highest-priority cybersecurity controls for AI systems under NIST?

NIST's Cyber AI Profile assigns High Priority (level 1) to four control areas:

- Inventorying AI assets and data flows

- Assigning unique identities and least-privilege access to AI systems

- Protecting data confidentiality and integrity across every lifecycle stage

- Implementing AI-specific incident response plans, including model rollback and forensic preservation

How do I map NIST CSF 2.0 to other security frameworks for AI deployments?

NIST SP 800-53 is the most direct mapping layer. Its access control, audit logging, incident response, and supply chain risk management controls align closely with the requirements of major security management frameworks - letting organizations build a single control set that satisfies multiple requirements.

How should enterprises in regulated industries start implementing NIST guidance for AI?

Start with an AI asset inventory and gap assessment against the Cyber AI Profile's High Priority (level 1) subcategories, then prioritize runtime monitoring, access controls, and AI-specific incident response. Organizations in insurance, healthcare, or financial Solution should also validate alignment with sector overlays such as HIPAA Security Rule safeguards or NYDFS cybersecurity regulations.