Introduction

When an AI system makes a consequential decision - flagging a loan application, generating a clinical summary, routing a customer escalation - can your organization explain what happened? Most enterprises cannot. They cannot identify who sent the prompt, which model responded, what data was accessed, or whether the output violated policy. That visibility gap is the core problem facing organizations deploying AI at scale.

According to McKinsey's 2025 State of AI report, 88% of organizations regularly use AI in at least one business function, with 62% experimenting with AI agents. Yet Deloitte found that only one in five companies possesses a mature governance model for autonomous systems. This disconnect creates massive operational blind spots and regulatory exposure.

Closing that gap starts with understanding what AI audit logging actually requires and why traditional log management falls short. This guide covers what a capable AI audit logging tool must do and how enterprises can evaluate and implement one.

The stakes are highest in regulated industries - healthcare, insurance, financial services - where an unexplained AI decision carries legal consequences, not just operational ones. The EU AI Act's high-risk system requirements take effect August 2, 2026, with penalties up to €35 million or 7% of global turnover.

TL;DR

- AI audit logs are structured, tamper-resistant records of every AI interaction: prompt, model, data accessed, policy checks run, and output produced

- Traditional log management tools were not designed for probabilistic, multi-step AI systems and miss critical governance data

- Regulations like GDPR, HIPAA, the EU AI Act, and SOX require enterprises to demonstrate AI decision traceability

- Effective tools capture interactions in real time, enforce policies, mask sensitive data, and generate audit-ready evidence automatically

- Agentic and multi-model systems require logging that traces the full decision chain across every model call and tool invocation

What Is AI Audit Logging (And How It Differs from Traditional Logging)

AI audit logging is the automated, continuous capture of structured records for every material interaction with an AI system. It covers inputs (prompts), model identity and parameters, retrieved data sources, policy enforcement outcomes, and outputs. This differs fundamentally from general-purpose application logging, which captures system events but not AI-specific decision context.

Traditional software is deterministic: a log of inputs plus code version is sufficient to reproduce behavior. AI models are probabilistic. The same prompt can yield different outputs across runs, versions, or parameter settings, which means audit logs must capture far more context per interaction to make the record meaningful and reproducible.

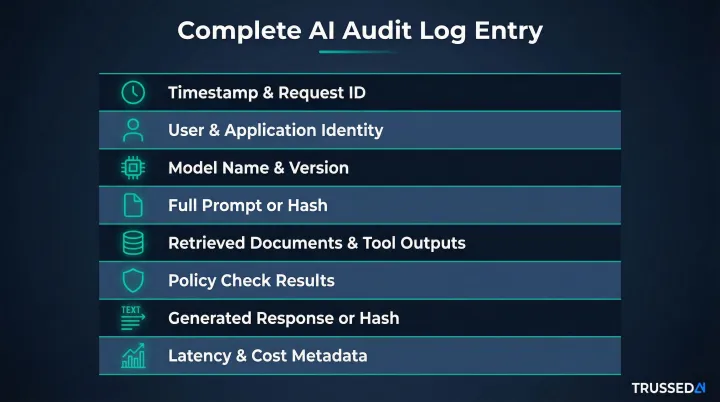

A complete AI audit log entry includes:

- Timestamp and request ID for temporal tracking

- User and application identity for attribution

- Model name and version to track behavior changes

- Full prompt (or cryptographic hash for sensitive content)

- Retrieved documents or tool outputs from RAG systems

- Policy check results (PII detection, content safety, prompt injection screening)

- Generated response (or hash)

- Latency and cost attribution metadata

Without this context, reconstructing what happened during an AI interaction becomes impossible. That's a critical gap when regulators or auditors require a full reconstruction of events.
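As a rough illustration, the fields listed above could be modeled as a single structured record. This is a minimal sketch, not a standard schema: the class name `AuditLogEntry` and every field name are assumptions chosen for the example.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Dict, List


@dataclass
class AuditLogEntry:
    """One record per AI interaction. All field names are illustrative."""

    user_id: str
    application_id: str
    model_name: str
    model_version: str
    prompt_sha256: str      # hash stored instead of raw text for sensitive content
    response_sha256: str
    retrieved_sources: List[str] = field(default_factory=list)
    policy_checks: Dict[str, str] = field(default_factory=dict)
    latency_ms: float = 0.0
    cost_usd: float = 0.0
    request_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        # Sorted keys keep the serialized form stable across runs.
        return json.dumps(asdict(self), sort_keys=True)
```

Generating the request ID and UTC timestamp at construction time means callers cannot forget them, which is one way to keep attribution fields consistent across applications.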

Why Enterprises Cannot Ignore AI Audit Logging

Regulatory exposure is accelerating

Multiple regulations now create direct obligations around AI transparency and traceability:

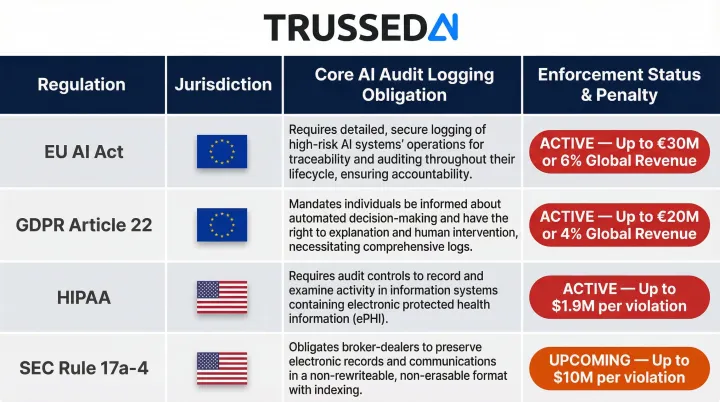

| Regulation | Core Logging Obligation | Enforcement Status |
|---|---|---|
| EU AI Act (Articles 12, 19, 21) | High-risk systems must auto-record events across their lifetime: period of use, reference databases, input data, and human verifiers | Enforcement begins August 2, 2026; penalties up to €35 million or 7% of global turnover |
| GDPR Article 22 | Data subjects have the right to contest decisions made solely by automated processing with legal effects | Active: Budapest Bank fined €650,000 for AI emotion detection; Caixabank fined €6 million for profiling failures |
| HIPAA (45 CFR § 164.312) | Hardware, software, and procedural mechanisms must record and examine activity in systems containing ePHI | 2025 HIPAA Security Rule NPRM mandates real-time, tamper-evident log controls |
| SEC Rule 17a-4 | Broker-dealers must preserve records via WORM format or an audit-trail alternative permitting re-creation of modified records | Compliance date for audit-trail alternative: May 3, 2023 |

Without audit logs, incident response is nearly impossible

When an AI system produces a harmful, biased, or non-compliant output, organizations need to reconstruct exactly what happened: which prompt triggered the output, which model version was running, what data was retrieved, and whether any guardrail fired.

Without that record, teams cannot determine root cause, cannot demonstrate remediation to regulators, and cannot prevent recurrence. Traditional application logs capture system events but miss the probabilistic decision context that makes AI interactions distinct.

These regulatory obligations compound the operational problem - a gap in logging creates both a compliance failure and a forensic dead end simultaneously.

The misuse and insider threat surface is real

AI systems create novel attack vectors. The 2026 MITRE ATLAS OpenClaw investigation demonstrated that exposed AI agents can be hijacked via indirect prompt injection to achieve remote code execution and credential harvesting. Because agents make autonomous decisions across operational systems, attackers can convert features into end-to-end compromise paths in seconds.

RAG-based data exfiltration has also been demonstrated. The "Morris II Worm" demonstrated a zero-click RAG attack where an adversarial self-replicating prompt, once ingested into a RAG database, forces the AI assistant to exfiltrate sensitive user data on future retrievals. Zenity researchers similarly manipulated Microsoft 365 Copilot via RAG poisoning to alter banking details in responses, tricking users into executing fraudulent wire transfers.

Shadow AI compounds the external threat. IDC found that 39% of EMEA employees use free AI tools at work, feeding sensitive corporate data into unmonitored models. Audit logs are the primary detection mechanism for both attack patterns.

Manual governance cannot scale

As organizations deploy dozens or hundreds of AI applications, agents, and developer tools, human-reviewed governance processes break down. Gartner found that only 11% of internal auditors feel very confident in their ability to provide effective oversight and assurance over top AI-related risks.

Audit logging must be automated and machine-queryable to scale with deployment. Without automation, compliance teams face an impossible manual burden reconstructing decisions weeks after they occur.

Audit trails build trust with stakeholders

Boards, regulators, customers, and enterprise procurement teams increasingly require evidence of AI governance as a condition of deployment or purchase. A well-maintained audit trail is a competitive asset, not just a compliance checkbox. Organizations that can demonstrate continuous governance evidence gain faster regulatory approval and stronger customer confidence.

What AI Audit Logs Should Capture

Prompt and context logs

Every input to an AI model - user prompt, system prompt, conversation history, and any injected context - must be recorded. However, storing full prompts creates a secondary data privacy liability. OWASP and NIST recommend tokenization and cryptographic hashing to maintain verifiable audit trails without exposing raw text.

SHA-256 hashing lets enterprises verify that a prompt matches a stored hash without exposing sensitive content in the logging plane. This approach satisfies NIST Privacy Framework 1.1 guidance on de-identification while maintaining forensic usefulness.
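The verification step described above can be sketched with Python's standard library. The function names `hash_prompt` and `matches_stored_hash` are hypothetical; only `hashlib.sha256` and `hmac.compare_digest` are real library calls.

```python
import hashlib
import hmac


def hash_prompt(prompt: str) -> str:
    """Return the hex SHA-256 digest that is stored in place of the raw prompt."""
    return hashlib.sha256(prompt.encode("utf-8")).hexdigest()


def matches_stored_hash(prompt: str, stored_hash: str) -> bool:
    """Check a candidate prompt against a logged digest in constant time."""
    return hmac.compare_digest(hash_prompt(prompt), stored_hash)
```

One caveat worth weighing: an unkeyed hash of a short or predictable prompt can be guessed by hashing candidate strings, so some teams may prefer a keyed construction such as HMAC with a secret held outside the logging plane.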

Model interaction logs

Capture which model processed the request:

- Provider, model name, version, and endpoint

- Parameters used (temperature, max tokens, top-p)

- Performance metadata (latency, token count, cost)

Version tracking is critical because model behavior can change between releases even with identical prompts. Without version data, reproducing a decision becomes impossible.

Data access and retrieval logs

For RAG (Retrieval-Augmented Generation) systems, audit logs must record:

- Which documents or database records were retrieved

- From which source

- With what query

- Whether any retrieved document carried a sensitivity label or access restriction

This is the AI-specific analog to database access logging in traditional systems. When an AI agent accesses a document containing PHI or PII, that access must be logged with the same fidelity as a database query in conventional audit systems.

Policy enforcement logs

Log every guardrail or policy check executed:

- PII detection results

- Prompt injection screening outcomes

- Content safety evaluation scores

- Output toxicity ratings

Each log entry must include the result (pass/fail), the specific rule triggered, and any action taken (block, redact, flag for review). This log type transforms audit data into compliance evidence - it proves policies ran and what they decided.
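A policy enforcement record carrying the result, the rule triggered, and the action taken might look like the sketch below. The names `PolicyCheckResult`, `Action`, and `overall_action` are assumptions for illustration, and the severity ordering (allow, flag, redact, block) is one possible convention rather than a rule from any framework.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Iterable, Optional


class Action(str, Enum):
    ALLOW = "allow"
    FLAG = "flag_for_review"
    REDACT = "redact"
    BLOCK = "block"


# Assumed ordering from least to most restrictive.
SEVERITY = [Action.ALLOW, Action.FLAG, Action.REDACT, Action.BLOCK]


@dataclass(frozen=True)
class PolicyCheckResult:
    check_name: str          # e.g. "pii_detection"
    passed: bool
    rule_id: Optional[str]   # the specific rule that fired, if any
    action: Action


def overall_action(results: Iterable[PolicyCheckResult]) -> Action:
    """Return the most restrictive action across all checks for one interaction."""
    return max(
        (r.action for r in results), key=SEVERITY.index, default=Action.ALLOW
    )
```

Keeping each check as its own immutable record preserves the evidence that a given policy ran, even when a different check determined the final outcome.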

Trussed AI's control plane generates governance evidence as a byproduct of every enforced interaction via a drop-in proxy, with no application code changes required. This makes it practical to instrument every AI touchpoint, from developer tools to production agents.

Agent action and tool call logs (agentic systems)

Policy enforcement logs cover individual interactions, but agentic systems introduce a different challenge: sequences of interdependent actions across APIs, files, databases, and sub-agents. Each action must be logged with the same fidelity as a model call.

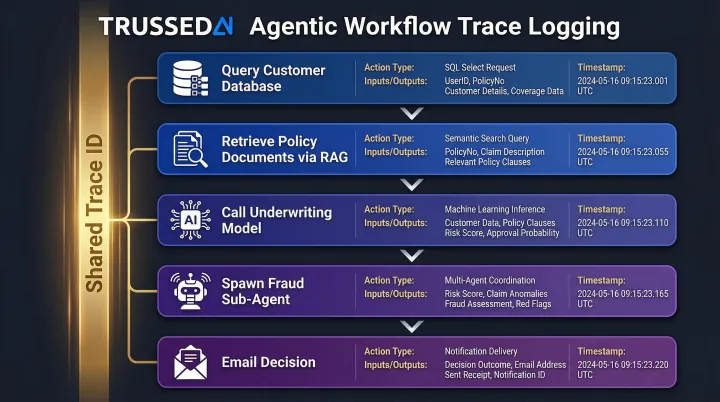

The audit trail for an agentic workflow must be a causally linked chain - not a flat list of events. Investigators need to follow the decision path end to end. For example, a claims processing agent might:

- Query a customer database (tool call)

- Retrieve policy documents (RAG retrieval)

- Call an underwriting model (model interaction)

- Email a decision (tool call)

Each step must carry:

- A shared trace ID and parent step reference

- The action type with inputs and outputs

- A timestamp

Without this causal chain, answering "which tool call caused the agent to access data it shouldn't have?" becomes untraceable during an investigation.

Key Capabilities to Evaluate in AI Audit Logging Tools

Real-time capture with minimal latency impact

Audit logging must happen synchronously with every AI interaction. Post-hoc batch logging creates gaps that can miss the very events regulators care about. Evaluate tools on whether logging is in the critical path with negligible performance impact.

Enterprise-grade platforms should target sub-20ms overhead so governance does not degrade user experience. Trussed AI's control plane enforces policies and captures audit data in real time, operating as a proxy layer that sits between applications and AI models without requiring code changes.

Immutability and tamper resistance

Logs must be trustworthy in regulatory or legal contexts. Technical requirements include:

- Append-only storage

- Cryptographic signing or hashing of log entries

- Integration with WORM (write-once-read-many) storage or equivalent controls

SEC Rule 17a-4 now allows an "audit-trail alternative" that permits re-creation of modified records, provided the system time-stamps all modifications and tracks the chain of custody. The 2025 HIPAA Security Rule NPRM mandates real-time, tamper-evident logs.

Logs stored in mutable databases or local file systems are insufficient for compliance use cases. Enterprises must architect storage to meet these regulatory standards before the first production deployment.
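One common way to make an append-only log tamper-evident is a hash chain, where each entry's hash covers the previous entry's hash. The sketch below is a simplified in-memory illustration with hypothetical names (`append_entry`, `verify_chain`); it shows detection of modification only and does not replace WORM storage or signing.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, record: Dict) -> str:
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(chain: List[Dict], record: Dict) -> Dict:
    """Append a record, linking it to the hash of the entry before it."""
    prev_hash = chain[-1]["entry_hash"] if chain else GENESIS_HASH
    entry = {
        "record": record,
        "prev_hash": prev_hash,
        "entry_hash": _entry_hash(prev_hash, record),
    }
    chain.append(entry)
    return entry


def verify_chain(chain: List[Dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in chain:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["entry_hash"] != _entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["entry_hash"]
    return True
```

Because every hash depends on all earlier entries, altering one record invalidates it and everything after it, which is what lets an auditor detect changes after the fact.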

Sensitive data masking and privacy controls

Storing every prompt and response creates a secondary data privacy liability. Evaluate tools on their ability to detect and mask PII, PHI, and other regulated data within logs automatically, using tokenization or hashing while preserving enough metadata for forensic usefulness.

This is especially critical in healthcare and financial services deployments, where capturing full prompts could violate HIPAA or GDPR data minimization principles. OWASP's Top 10 for LLM Applications (2025) recommends implementing tokenization and redaction to sanitize sensitive information before processing.
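To make the masking idea concrete, here is a deliberately small sketch that replaces matches with a labeled token and a truncated hash. The two regular expressions and the `mask_pii` function are assumptions for the example; real PII and PHI detection needs far broader coverage than two patterns.

```python
import hashlib
import re
from typing import List, Tuple

# Illustrative patterns only: email addresses and US SSN-style numbers.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def mask_pii(text: str) -> Tuple[str, List[str]]:
    """Replace each match with [LABEL:short-hash] and report what was found."""
    findings: List[str] = []
    for label, pattern in PATTERNS.items():

        def _replace(match: "re.Match[str]", label: str = label) -> str:
            digest = hashlib.sha256(match.group(0).encode("utf-8")).hexdigest()
            findings.append(label)
            # The short digest lets investigators correlate repeated values
            # without the raw value appearing in the log.
            return f"[{label}:{digest[:8]}]"

        text = pattern.sub(_replace, text)
    return text, findings
```

The returned list of labels can be stored as metadata alongside the masked text, so the log still records that sensitive data was present and of what kind.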

Native policy enforcement integration

Distinguish between tools that are purely passive loggers (record after the fact) versus tools that integrate logging with real-time policy enforcement (log the policy decision at the moment it fires).

Integrated policy logging creates a single, authoritative record of what the policy decided and when, rather than forcing teams to reconstruct compliance posture from raw interaction data after the fact.

Trussed AI's control plane generates governance evidence as a direct byproduct of every enforced interaction. The platform operates as a drop-in proxy that requires no application code changes, making it practical to instrument every AI touchpoint, including developer tools and production agents.

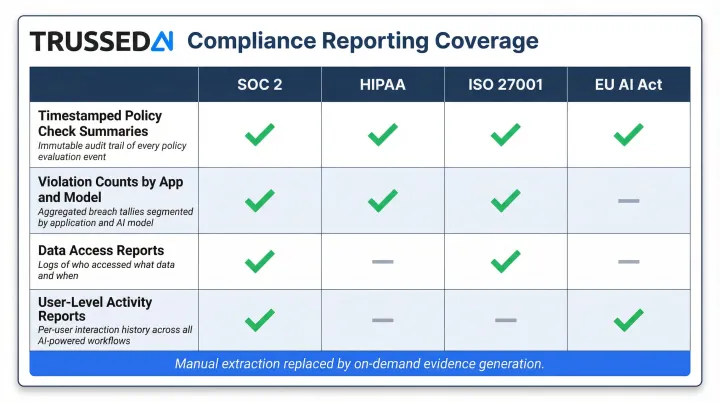

Compliance reporting and audit-ready evidence generation

That policy-level evidence only has value if it can be surfaced quickly. Evaluate whether tools automatically produce the artifacts auditors and regulators require:

- Timestamped policy check summaries

- Violation counts by application and model

- Data access reports

- User-level activity reports

Look for pre-built report templates aligned to specific frameworks (HIPAA, EU AI Act, NIST AI RMF). Manual extraction and formatting of audit data is time-consuming and error-prone. Automated compliance reporting cuts what previously took compliance teams weeks of manual extraction down to on-demand evidence generation.
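A report such as "violation counts by application and model" reduces to a simple aggregation once logs are structured. The sketch below assumes a hypothetical entry shape in which each record carries `application_id`, `model_name`, and a list of `policy_checks` with a boolean `passed` field.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def violation_counts(entries: Iterable[Dict]) -> "Counter[Tuple[str, str]]":
    """Count failed policy checks per (application, model) pair."""
    counts: "Counter[Tuple[str, str]]" = Counter()
    for entry in entries:
        key = (entry["application_id"], entry["model_name"])
        for check in entry.get("policy_checks", []):
            if not check["passed"]:
                counts[key] += 1
    return counts
```

The same pattern extends to per-user or per-rule breakdowns by changing the grouping key.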

Multi-tenant and cross-model coverage

Enterprise AI environments include dozens of models (proprietary, open-source, fine-tuned), multiple deployment environments (cloud, on-prem, hybrid), and multiple teams or business units.

Audit logging tools must support:

- Multi-tenant log schemas with consistent attribution fields (tenant ID, application ID, model ID, user ID)

- Cross-model provider support without requiring provider-specific instrumentation

- Unified governance policies that apply regardless of which model or environment is in use

Without this coverage, enterprises face fragmented audit trails that create compliance gaps and make incident investigation nearly impossible.

AI Audit Logging for Agentic and Multi-Model Systems

Agentic systems raise the logging challenge significantly. A single user request can trigger a chain of model calls, tool invocations, memory reads and writes, and sub-agent spawns, each capable of accessing sensitive data or producing a policy-relevant output.

Example: A claims processing agent receives a customer inquiry. It:

- Queries a customer database to retrieve policy details

- Calls an underwriting model to assess risk

- Retrieves historical claims data from a RAG system

- Spawns a sub-agent to verify fraud indicators

- Emails the decision to the customer

Flat logging of only the first prompt and final response misses the majority of the decision surface. Investigators cannot determine which step accessed restricted data or which model call produced a policy violation.

End-to-end trace logging for agentic systems

Each step in the agent chain must carry:

- A shared trace ID that links all steps in the workflow

- A parent step reference to establish causal relationships

- The action type (model call, tool call, memory access)

- The inputs and outputs for that step

- A timestamp

This causal chain structure makes it possible to answer questions like "which tool call caused the agent to access data it wasn't supposed to?" during investigations.
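Given steps that carry a trace ID and a parent step reference, the causal path to any step can be reconstructed by walking parent links back to the root. This is a minimal sketch; the `Step` class and `causal_path` function are hypothetical names, and the step IDs are made up for the example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Step:
    trace_id: str
    step_id: str
    parent_step_id: Optional[str]   # None for the root step
    action_type: str                # "model_call", "tool_call", "memory_access", ...
    timestamp: str


def causal_path(steps: List[Step], step_id: str) -> List[Step]:
    """Return the chain of steps from the root down to `step_id`."""
    by_id = {s.step_id: s for s in steps}
    path: List[Step] = []
    seen = set()
    current = by_id.get(step_id)
    # The `seen` set guards against malformed logs with cyclic parent links.
    while current is not None and current.step_id not in seen:
        seen.add(current.step_id)
        path.append(current)
        parent_id = current.parent_step_id
        current = by_id.get(parent_id) if parent_id else None
    return list(reversed(path))
```

For the claims processing example, calling `causal_path` on the step that touched restricted data would return every upstream action that led to it, in order.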

Two emerging standards define how this structure should be implemented. The OpenTelemetry GenAI SIG is standardizing AI telemetry, defining span operations like invoke_agent, create_agent, and execute_tool. These systems use the W3C Trace Context standard to assign a unique trace ID that persists across all services, letting downstream services continue the trace rather than restart it.
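W3C Trace Context carries this information in a `traceparent` header of the form `version-traceid-parentid-flags`, with a 32-character hex trace ID and a 16-character hex parent ID. The sketch below shows how a downstream component might continue an incoming trace rather than start a new one. The helper names are hypothetical, and in practice an OpenTelemetry SDK would normally handle propagation for you.

```python
import re
import secrets

# Version 00 of the traceparent header: 00-<trace-id>-<parent-id>-<flags>
TRACEPARENT_RE = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")


def new_traceparent(sampled: bool = True) -> str:
    """Start a fresh trace with random trace and span IDs."""
    flags = "01" if sampled else "00"
    return f"00-{secrets.token_hex(16)}-{secrets.token_hex(8)}-{flags}"


def child_traceparent(incoming: str) -> str:
    """Keep the incoming trace ID and flags, but mint a new span ID."""
    match = TRACEPARENT_RE.match(incoming)
    if match is None:
        return new_traceparent()
    trace_id, parent_id, flags = match.groups()
    # All-zero IDs are invalid under the spec, so restart in that case.
    if set(trace_id) == {"0"} or set(parent_id) == {"0"}:
        return new_traceparent()
    return f"00-{trace_id}-{secrets.token_hex(8)}-{flags}"
```

Each model call or tool invocation would log the trace ID from this header, which is what ties the individual audit records into a single chain.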

Regulatory scrutiny of agentic systems

The technical requirements above don't exist in isolation; regulators are catching up fast. Under the EU AI Act's high-risk system requirements and NIST AI RMF guidance, agentic systems will face the sharpest compliance scrutiny of any AI deployment type. The NIST AI Agent Standards Initiative, launched in February 2026, is developing industry-led protocols to ensure AI agents operate securely and interoperate across platforms.

Enterprises deploying agents in production today should treat comprehensive trace logging as a baseline requirement, not a future roadmap item. Regulators across jurisdictions increasingly expect organizations to reconstruct any agent decision path on demand, and audit gaps in multi-step workflows are among the first things investigators look for.

Best Practices for Implementing AI Audit Logging

Start from the control plane, not the application layer

Instrumenting individual applications one by one is slow, inconsistent, and creates coverage gaps. The most scalable approach is to deploy logging at the infrastructure layer, via a gateway, proxy, or control plane that intercepts all AI traffic, so that every application is covered automatically without code changes.

This approach ensures consistent log schemas across diverse AI environments. Developers don't need to remember to add logging calls, and governance teams don't need to chase down every new AI application to ensure it's instrumented.
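The interception idea can be shown in miniature with an in-process wrapper: every call passes through one function that records an audit entry, whether the call succeeds or fails. This is only a stand-in for a network gateway or proxy; `with_audit_logging`, the record fields, and the list-based `sink` are all assumptions for the example.

```python
import hashlib
import time
import uuid
from typing import Callable, Dict, List


def sha256_hex(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def with_audit_logging(
    call_model: Callable[[str], str],
    model_name: str,
    sink: List[Dict],
) -> Callable[[str, str], str]:
    """Wrap any model client so every call leaves an audit record in `sink`."""

    def logged_call(user_id: str, prompt: str) -> str:
        record: Dict = {
            "request_id": str(uuid.uuid4()),
            "user_id": user_id,
            "model_name": model_name,
            "prompt_sha256": sha256_hex(prompt),
            "status": "error",  # overwritten below if the call succeeds
        }
        started = time.perf_counter()
        try:
            response = call_model(prompt)
            record["response_sha256"] = sha256_hex(response)
            record["status"] = "ok"
            return response
        finally:
            # Runs on success and on exceptions, so failures are logged too.
            record["latency_ms"] = (time.perf_counter() - started) * 1000
            sink.append(record)

    return logged_call
```

Because the wrapper owns the schema, every application routed through it produces identical fields, which is the consistency benefit described above.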

Once logging is standardized at the infrastructure layer, the next decision is how long to keep those logs - and who can access them.

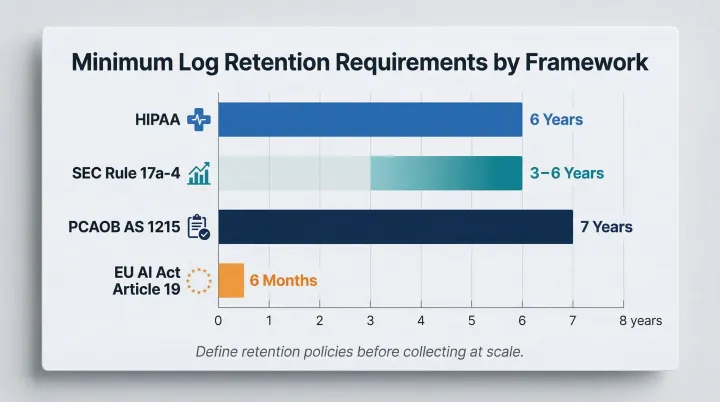

Define log retention and access policies before you collect

Audit logs containing AI interaction data have regulatory retention requirements that vary by framework:

- HIPAA: 6 years for documentation

- SEC Rule 17a-4: 3 to 6 years depending on record type

- PCAOB AS 1215: 7 years

- EU AI Act: At least 6 months (Article 19)

Teams should define retention periods, access controls (who can query logs and under what conditions), and data classification policies for log content before collecting at scale. Storing logs without governance policies creates a separate compliance liability.
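When several frameworks apply to the same logs, the longest applicable period governs. The sketch below encodes the retention figures listed above as a lookup table; the dictionary keys are made-up identifiers, years are approximated as 365 days, six months as 183 days, and the SEC figure uses the upper bound of its 3 to 6 year range. Treat it as an illustration, not legal guidance.

```python
from datetime import date, timedelta
from typing import Iterable

# Minimum retention in days, taken from the list above (approximate conversions).
RETENTION_DAYS = {
    "HIPAA": 6 * 365,
    "SEC_17A_4": 6 * 365,       # upper bound of the 3 to 6 year range
    "PCAOB_AS_1215": 7 * 365,
    "EU_AI_ACT": 183,           # "at least 6 months"
}


def required_retention_days(frameworks: Iterable[str]) -> int:
    """The strictest (longest) retention period among the applicable frameworks."""
    return max(RETENTION_DAYS[name] for name in frameworks)


def earliest_deletion_date(created: date, frameworks: Iterable[str]) -> date:
    """First date on which a log created on `created` may be deleted."""
    return created + timedelta(days=required_retention_days(frameworks))
```

Raising a `KeyError` on an unknown framework name is intentional here: silently defaulting to a short retention period would be the riskier failure mode.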

With retention policies in place, the final step is routing those logs into the tools your security and compliance teams already use.

Integrate logs into existing security and compliance workflows

AI audit logs should feed into existing SIEM tools (Splunk, Elastic, Microsoft Sentinel) and GRC platforms so that AI-related events appear alongside other security and compliance telemetry.

NIST IR 8587 explicitly requires that token and assertion usage data integrate with SIEM and User and Entity Behavior Analytics (UEBA) systems. Without this integration, AI governance becomes a separate dashboard that compliance teams must monitor in isolation.

The practical payoff: AI anomalies trigger the same alerting and incident response workflows as any other security event, with no parallel processes and no gaps in coverage.
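Most SIEM pipelines can ingest one JSON object per line, so a simple starting point is a JSON formatter on Python's standard `logging` module. This is a sketch under assumptions: the `JsonFormatter` class, the `audit` attribute name, and the logger name `ai_audit` are invented here, and the actual transport to Splunk, Elastic, or Sentinel is deployment-specific and left out.

```python
import json
import logging
from datetime import datetime, timezone
from typing import IO, Optional


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON line."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "timestamp": datetime.fromtimestamp(
                record.created, timezone.utc
            ).isoformat(),
            "level": record.levelname,
            "event": record.getMessage(),
        }
        # Structured fields passed via `extra={"audit": {...}}` land on the record.
        payload.update(getattr(record, "audit", {}))
        return json.dumps(payload, sort_keys=True)


def build_audit_logger(stream: Optional[IO[str]] = None) -> logging.Logger:
    """Create a logger whose output a log forwarder could tail or collect."""
    logger = logging.getLogger("ai_audit")
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    return logger
```

A call such as `build_audit_logger().warning("policy_violation", extra={"audit": {"trace_id": "t1", "rule_id": "PII-001"}})` would then emit one JSON line containing the event name plus the audit fields.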

Frequently Asked Questions

What are some of the steps of auditing an AI model?

Key steps include:

- Define the model's scope and risk tier

- Collect interaction logs (prompts, outputs, data accessed, policy decisions)

- Evaluate outputs against compliance policies and fairness criteria

- Review the audit trail for violations or anomalies

- Document findings with remediation actions

Can you use AI for audits?

Yes, AI can assist the audit process itself by automatically analyzing large volumes of log data, flagging anomalies, classifying policy violations, and generating compliance summaries. However, human oversight of AI-assisted audit findings remains essential, particularly in regulated industries.

What is an AI audit trail?

An AI audit trail is a chronological, tamper-resistant record of every interaction with an AI system. It captures who made the request, which model responded, what data was accessed, what policies were enforced, and what output was produced, giving organizations a complete basis to reconstruct any AI decision after the fact.

What is the standard for AI audit?

No single universal standard exists yet. The EU AI Act, NIST AI RMF, ISO/IEC 42001, and domain-specific regulations like HIPAA and SOX each create audit and traceability requirements for AI systems. Enterprises should map their logging practices to the frameworks most relevant to their industry.

How is AI audit logging different from traditional log management?

Traditional log management captures deterministic system events such as API calls, database queries, and errors. AI audit logging must also capture probabilistic model behavior, policy enforcement outcomes, retrieved data context, and agent action chains. That additional context has no equivalent in conventional software and requires purpose-built tooling to record meaningfully.

What data should be captured in an AI audit log?

Core fields include:

- User and application identity

- Model name and version

- Prompt or prompt hash (for sensitive content)

- Retrieved documents or tool outputs

- Policy check results

- Generated response or hash, latency, token count, and cost attribution

Agentic systems also require a trace ID linking all steps in a multi-model chain.