Introduction

Organizations are deploying AI faster than governance can keep pace. Teams across enterprises are integrating generative AI tools into daily workflows, autonomous agents are executing decisions without human oversight, and most companies have either no formal AI policy or a PDF document buried in a shared drive that no one enforces.

The consequences are measurable. Companies without enterprise AI governance frameworks lost an average of $4.4 million per incident in 2025. Meanwhile, 78% of employees admit to using AI tools their employer never approved, and 11% of what they paste into tools like ChatGPT is confidential.

Enforcement is already happening under existing law. In 2025, the Massachusetts Attorney General reached a $2.5 million settlement with Earnest Operations over algorithmic lending bias. The case was brought not under new AI legislation but under long-standing consumer protection and fair lending laws.

That gap between documentation and enforcement is exactly what this guide addresses. It covers what a responsible AI policy is, what it must contain, how to build one, and how to ensure it actually runs in production, not just on paper.

TLDR:

- A responsible AI policy translates ethical principles into operational rules teams can follow

- Core principles include fairness, transparency, accountability, privacy, and human oversight

- Policies must cover data governance, testing protocols, prohibited uses, and incident response

- Building the policy requires cross-functional input and clear ownership

- Enforcement requires runtime controls: static PDFs cannot govern dynamic AI systems

What Is a Responsible AI Policy?

A responsible AI policy is a formal document that sets requirements, prohibitions, and principles governing how an organization develops, deploys, and manages AI systems. It converts ethical values like fairness and transparency into operational rules every team can follow.

The NIST AI Risk Management Framework defines the "GOVERN" function as cultivating a culture of risk management and outlining processes, documents, and organizational schemes that anticipate, identify, and manage risks. ISO/IEC 42001 takes this further by providing a certifiable management system standard that mandates documented policies and procedures within a Plan-Do-Check-Act cycle.

What a Responsible AI Policy Is NOT

A responsible AI policy is not simply aspirational principles posted on a company intranet, nor is it a one-time compliance checkbox. The distinction matters:

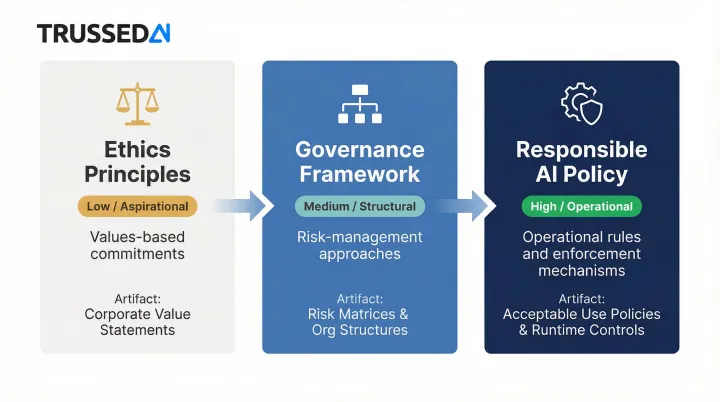

| Concept | Definition | Enterprise Artifact | Enforceability |
|---|---|---|---|
| Ethics Principles | High-level, values-based commitments | Corporate value statements | Low (Aspirational) |
| Governance Framework | Structured risk-management approaches | Risk matrices, organizational structures | Medium (Structural) |
| Responsible AI Policy | Organization-specific operational rules and enforcement mechanisms | Acceptable use policies, CI/CD gates, runtime controls | High (Operational) |

A policy document sitting in a shared drive doesn't stop a model from producing biased outputs, leaking sensitive data, or crossing regulatory boundaries at runtime. Enforcement requires operational controls, not just documented intent.

Relationship to AI Governance

AI governance is the overall strategic framework for managing AI risk. The responsible AI policy is the operational core that makes it actionable, providing the specific procedures, controls, and accountability mechanisms that turn governance strategy into daily practice.

The Core Principles Your Responsible AI Policy Must Cover

Five foundational principles anchor any responsible AI policy, grounded in global standards including NIST AI RMF and ISO/IEC 42001. The absence of any one creates specific, identifiable risk exposure.

Fairness and Non-Discrimination

AI systems trained on historical data can encode and amplify existing biases, leading to discriminatory outcomes in high-stakes domains. The consequences are documented and severe:

- A widely used healthcare triage algorithm exhibited significant racial bias: Black patients were considerably sicker than White patients assigned the same risk score

- ProPublica's analysis of the COMPAS recidivism algorithm found that Black defendants who did not reoffend were misclassified as higher risk nearly twice as often as White defendants who did not reoffend (45% vs. 23%)

- Amazon scrapped an AI recruiting tool after discovering it penalized resumes containing the word "women's." The model had been trained on male-dominated hiring data.

Your policy must mandate:

- Diverse, representative training data across protected classes

- Regular bias audits before and after deployment

- Defined fairness criteria aligned with regulatory requirements

- Documentation of dataset composition and known limitations

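Article 10 of the EU AI Act sets requirements for the training, validation, and testing datasets of high-risk systems. They must be relevant, sufficiently representative, and, to the best extent possible, free of errors and complete. They must also be examined for possible biases that could lead to discrimination.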
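One common way to turn "defined fairness criteria" into a repeatable audit is the disparate impact ratio. For each group, divide its favorable-outcome rate by the highest group's rate. The four-fifths (0.8) rule from US employment-selection guidance then serves as a screening threshold.

The sketch below assumes outcomes arrive as (group, favorable) pairs. The function names are hypothetical, and the 0.8 threshold is one heuristic among several fairness metrics, not a universal legal test.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """Share of favorable outcomes per group.

    `outcomes` is an iterable of (group, favorable) pairs, where `favorable`
    is True when the model approved or selected the person.
    """
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, ok in outcomes:
        totals[group] += 1
        if ok:
            favorable[group] += 1
    return {g: favorable[g] / totals[g] for g in totals}


def disparate_impact_ratios(outcomes):
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values())
    # If no group received a favorable outcome, there is no disparity to measure.
    return {g: (r / best if best > 0 else 1.0) for g, r in rates.items()}


def bias_audit(outcomes, threshold=0.8):
    """Return the groups whose ratio falls below the threshold (four-fifths rule)."""
    ratios = disparate_impact_ratios(outcomes)
    return {g: r for g, r in ratios.items() if r < threshold}


# Example: group A approved 60 of 100, group B approved 40 of 100.
data = ([("A", True)] * 60 + [("A", False)] * 40
        + [("B", True)] * 40 + [("B", False)] * 60)
print(bias_audit(data))  # B's ratio is 0.40 / 0.60, about 0.67, so B is flagged
```

Running the audit both before deployment and on production decision logs gives the "before and after" coverage the policy asks for.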

Transparency and Explainability

These are distinct concepts. Transparency answers "what happened" in the system: what data was used, which model version ran, what decision was made. Explainability answers "how" a specific decision was reached, such as why this applicant was rejected or why this loan was denied.

Under GDPR Article 22, data subjects have the right not to be subject to decisions based solely on automated processing that produce legal or similarly significant effects. Articles 13 to 15 require controllers to provide "meaningful information about the logic involved." Yet only 17% of organizations report actively working to mitigate explainability risks.

Your policy must require:

- Documentation of model logic and decision pathways

- Explainable AI (XAI) techniques for decisions affecting people

- Model cards or equivalent documentation for all production systems

- Clear disclosure when AI is making or influencing decisions

Accountability and Governance

When an AI system causes harm, accountability must be assigned to a specific human or team, not distributed across the organization until it disappears. The NIST AI RMF calls for roles, responsibilities, and lines of communication for managing AI risks to be documented and clear. It also calls for executive leadership to take responsibility for decisions about risks associated with AI system development and deployment.

Your policy must map:

- Clear ownership for every AI system's development, deployment, and ongoing performance

- Accountability structures for third-party and vendor-supplied models

- Escalation paths when systems fail or produce harmful outputs

- Executive sponsorship for organizational AI risk management

Privacy and Data Protection

AI systems are data-intensive by design, and that creates real exposure. In 2023, Samsung banned generative AI tools after employees uploaded sensitive source code and internal meeting recordings to ChatGPT. Generative AI tools now account for 32% of all unauthorized data movement across enterprises.

Your policy must enforce:

- Strict data collection, storage, use, and retention standards

- Specific requirements around PII, consent, and data minimization

- Protection against data leakage from generative AI models

- Controls preventing confidential data from entering unapproved AI systems
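To make the last two controls concrete, here is a minimal sketch of a prompt guard. It blocks prompts bound for unapproved destinations and redacts a few PII patterns before a prompt leaves the organization.

The pattern set, the destination names, and the allow/modify/block return shape are all illustrative assumptions. Regexes alone catch only a fraction of confidential data, so treat this as the shape of a control rather than a DLP product.

```python
import re

# Illustrative patterns only: a production DLP control needs far broader
# coverage (names, addresses, credentials, document classifiers, and so on).
REDACTION_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(prompt):
    """Replace each match with a typed placeholder and report what was found."""
    findings = []
    for label, pattern in REDACTION_PATTERNS.items():
        prompt, count = pattern.subn(f"[{label}]", prompt)
        if count:
            findings.append((label, count))
    return prompt, findings


def guard_prompt(prompt, destination, approved_destinations):
    """Block unapproved AI tools outright; redact PII for approved ones."""
    if destination not in approved_destinations:
        return {"action": "block", "reason": f"{destination} is not an approved AI tool"}
    cleaned, findings = redact(prompt)
    return {"action": "modify" if findings else "allow",
            "prompt": cleaned,
            "findings": findings}


# Hypothetical destination names.
print(guard_prompt("Email jane.doe@example.com, SSN 123-45-6789",
                   "internal-llm", {"internal-llm"}))
```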

Human Oversight and Control

Agentic AI systems execute decisions faster than any manual review process. The EU AI Act Article 14 mandates that high-risk AI systems be designed for effective human oversight, with the ability to intervene or interrupt operations when risks to health, safety, or fundamental rights arise.

Your policy must define:

- Which decisions require mandatory human review

- What triggers an override or intervention

- How escalation paths work when AI systems exceed authority

- Graduated autonomy levels for different AI system types
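Graduated autonomy can be expressed as a small routing function that every agent action passes through. The sketch rests on three assumptions:

- Four hypothetical autonomy tiers.
- A set of decision domains the policy reserves for humans.
- A per-system spend limit standing in for "delegated authority."

Real policies would define their own tiers and triggers.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    """Hypothetical autonomy tiers; a higher value means less human involvement."""
    SUGGEST_ONLY = 0      # AI drafts, a human takes every action
    HUMAN_APPROVAL = 1    # AI acts only after explicit human sign-off
    ACT_AND_NOTIFY = 2    # AI acts; the owner is notified and can reverse it
    FULLY_AUTONOMOUS = 3  # AI acts within its mandate without review


# Decisions the policy reserves for humans regardless of a system's tier.
MANDATORY_REVIEW = {"employment", "credit", "medical"}


def route(action_domain, amount, system_tier, spend_limit):
    """Decide how an agent's proposed action should be handled."""
    if action_domain in MANDATORY_REVIEW:
        return "escalate: mandatory human review"
    if amount > spend_limit:
        return "escalate: exceeds delegated authority"
    if system_tier == Autonomy.SUGGEST_ONLY:
        return "return draft to human"
    if system_tier == Autonomy.HUMAN_APPROVAL:
        return "queue for human approval"
    if system_tier == Autonomy.ACT_AND_NOTIFY:
        return "execute and notify owner"
    return "execute"


print(route("procurement", 5000, Autonomy.FULLY_AUTONOMOUS, spend_limit=1000))
```

The domain and authority checks run before the tier check on purpose. Even a fully autonomous agent cannot bypass the decisions the policy reserves for humans.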

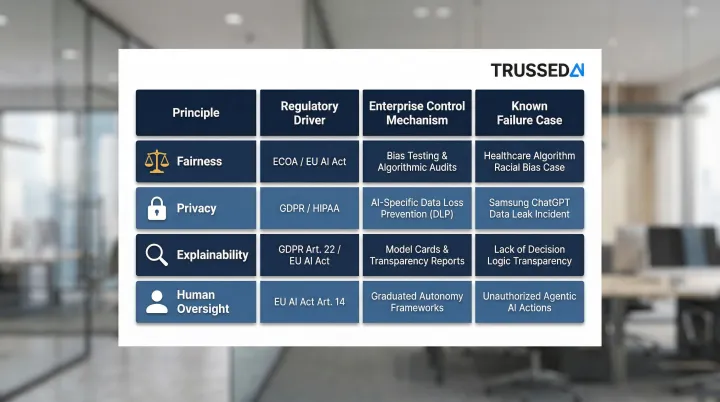

| Core Principle | Regulatory Driver | Enterprise Control Mechanism | Known Failure Case |
|---|---|---|---|
| Fairness | ECOA, EU AI Act | Pre-deployment bias testing, dataset representativeness checks | Healthcare triage algorithm under-identifying Black patients |
| Privacy | GDPR, HIPAA | AI-specific DLP, prompt redaction, blocking PHI uploads | Samsung engineers leaking proprietary source code to ChatGPT |
| Explainability | GDPR (Art. 22), EU AI Act | Feature importance analysis, Model Cards | Lack of meaningful information about logic involved |
| Human Oversight | EU AI Act (Art. 14) | Graduated autonomy, exception-based human escalation triggers | Autonomous agents executing unauthorized actions |

What Your Responsible AI Policy Must Include

Beyond principles, a policy must contain specific functional components that address the full AI lifecycle: data sourcing, model development, deployment, and eventual retirement.

Data Governance and Quality Standards

Without clear data standards, even well-designed models can produce unreliable or discriminatory outputs. Define requirements for data collection, labeling, quality checks, and access controls, and prohibit the use of unvetted datasets for any model operating in regulated or high-stakes contexts.

Specific requirements:

- Approved data sources and vetting procedures

- Data quality thresholds and validation protocols

- Access controls limiting who can use what data

- Retention and deletion schedules aligned with regulations

- Data lineage tracking from collection through model training
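Several of these requirements can be checked mechanically before a dataset is used for training. In this sketch, the `Dataset` record, the approved-source list, and the 0.95 quality threshold are hypothetical placeholders. The lineage check simply confirms that every upstream dataset a record names is itself registered and reviewable.

```python
from dataclasses import dataclass, field

APPROVED_SOURCES = {"crm_export", "claims_warehouse"}  # hypothetical source names
MIN_QUALITY = 0.95                                     # hypothetical threshold


@dataclass
class Dataset:
    name: str
    source: str
    vetted: bool
    quality_score: float                          # share of records passing validation
    lineage: list = field(default_factory=list)   # names of upstream datasets


def check_training_data(datasets, high_stakes):
    """Return a list of policy violations for a model's training datasets."""
    registered = {d.name for d in datasets}
    problems = []
    for d in datasets:
        if d.source not in APPROVED_SOURCES:
            problems.append(f"{d.name}: unapproved source '{d.source}'")
        if high_stakes and not d.vetted:
            problems.append(f"{d.name}: unvetted dataset in a high-stakes model")
        if d.quality_score < MIN_QUALITY:
            problems.append(f"{d.name}: quality {d.quality_score:.2f} below {MIN_QUALITY}")
        for parent in d.lineage:
            if parent not in registered:
                problems.append(f"{d.name}: lineage references unregistered dataset '{parent}'")
    return problems


raw = Dataset("claims_raw", "claims_warehouse", True, 0.99)
features = Dataset("claims_features", "claims_warehouse", True, 0.97, ["claims_raw"])
print(check_training_data([raw, features], high_stakes=True))  # no violations
```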

Algorithm Design and Testing Protocols

Models deployed without structured validation are a liability. Every AI system should complete a defined development and testing lifecycle before it touches production.

Mandatory checkpoints:

- Fairness assessments across demographic groups

- Adversarial testing for robustness and security

- Bias evaluations using established metrics

- Documented performance benchmarks

- Model cards or equivalent documentation

Model cards, standardized documentation introduced by Mitchell et al. (2019), capture performance benchmarks across demographic conditions, intended use contexts, and evaluation procedures, giving reviewers a consistent basis for approval decisions.
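In a CI/CD pipeline, the checkpoints and the model card can feed a single deployment gate. The sketch below makes three assumptions:

- It uses a simplified subset of model-card fields; Mitchell et al. describe more sections.
- It relies on a hypothetical list of required checkpoint names.
- It applies an assumed minimum per-subgroup score.

The gate refuses approval if any checkpoint is missing, the intended use is blank, or any subgroup falls below the bar.

```python
from dataclasses import dataclass, field

# Hypothetical checkpoint identifiers mirroring the list above.
REQUIRED_CHECKPOINTS = (
    "fairness_assessment",
    "adversarial_testing",
    "bias_evaluation",
    "performance_benchmarks",
)


@dataclass
class ModelCard:
    model_name: str
    version: str
    intended_use: str
    out_of_scope_uses: list
    subgroup_metrics: dict        # metric name -> {subgroup: value}
    known_limitations: list
    checkpoints_passed: set = field(default_factory=set)


def deployment_gate(card, min_subgroup_score):
    """Return (approved, reasons); approval requires an empty list of reasons."""
    reasons = []
    missing = [c for c in REQUIRED_CHECKPOINTS if c not in card.checkpoints_passed]
    if missing:
        reasons.append("missing checkpoints: " + ", ".join(missing))
    if not card.intended_use.strip():
        reasons.append("intended use is undocumented")
    for metric, by_group in card.subgroup_metrics.items():
        for group, value in by_group.items():
            if value < min_subgroup_score:
                reasons.append(f"{metric} for {group} is {value:.2f}, below {min_subgroup_score:.2f}")
    return (not reasons, reasons)


card = ModelCard("ticket-router", "1.2.0", "Route support tickets", ["medical triage"],
                 {"accuracy": {"group_a": 0.91, "group_b": 0.84}}, ["English only"],
                 set(REQUIRED_CHECKPOINTS))
print(deployment_gate(card, min_subgroup_score=0.85))
```

A CI job would fail the build whenever the first element of the result is `False` and print the reasons for reviewers.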

Acceptable and Prohibited Use Definitions

Ambiguity about permitted use cases leads to inconsistent decisions and avoidable exposure. The policy should clearly categorize AI use cases: approved, conditionally approved (requiring additional review), and outright prohibited.

Examples of prohibited uses:

- Using AI for surveillance without consent

- Using unapproved external generative AI tools with company data

- Deploying AI as sole decision-maker for employment or credit outcomes

- Social scoring or manipulative behavioral techniques

Article 5 of the EU AI Act explicitly prohibits AI systems that deploy subliminal, manipulative, or deceptive techniques to materially distort behavior. It also prohibits social scoring.
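The three-way categorization maps naturally onto a lookup that defaults to "deny" for anything not yet reviewed. The register entries and domain names below are hypothetical. The one hard-coded rule mirrors the prohibited use above: AI acting as the sole decision-maker for employment or credit outcomes.

```python
from enum import Enum


class Category(Enum):
    APPROVED = "approved"
    CONDITIONAL = "conditionally approved"
    PROHIBITED = "prohibited"


# Hypothetical register; in practice the governance committee maintains it.
USE_CASE_REGISTER = {
    "code_completion_internal": Category.APPROVED,
    "customer_email_drafting": Category.CONDITIONAL,
    "resume_screening_assist": Category.CONDITIONAL,
    "social_scoring": Category.PROHIBITED,
    "covert_surveillance": Category.PROHIBITED,
}

SOLE_DECISION_PROHIBITED_DOMAINS = {"employment", "credit"}


def decide(use_case, domain, sole_decision_maker, review_signed_off):
    """Return allow, hold, or deny for a requested AI use."""
    if sole_decision_maker and domain in SOLE_DECISION_PROHIBITED_DOMAINS:
        return "deny: AI cannot be the sole decision-maker here"
    category = USE_CASE_REGISTER.get(use_case)
    if category is None:
        return "deny: unregistered use case, submit for review"
    if category is Category.PROHIBITED:
        return "deny: prohibited use"
    if category is Category.CONDITIONAL and not review_signed_off:
        return "hold: additional review required"
    return "allow"


print(decide("resume_screening_assist", "employment",
             sole_decision_maker=False, review_signed_off=False))
```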

Incident Reporting and Response

Define what constitutes an AI incident, who must be notified, in what timeframe, and how the organization documents lessons learned and remediates systems.

AI incidents include:

- Unexpected or erroneous outputs

- Bias discovery in production systems

- Security breaches or data leakage

- Compliance violations

- Harm to individuals or groups

The EU AI Act defines a "serious incident" as an event leading to death, serious harm to health, serious disruption of critical infrastructure, or infringement of fundamental rights. Providers must report serious incidents to market surveillance authorities no later than 15 days after becoming aware of them. Shorter limits apply in two cases: 10 days in the event of a person's death, and 2 days for a widespread infringement or a serious incident involving critical infrastructure.
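Because the deadline depends on the type of incident, incident-response tooling can compute it at triage time. This sketch encodes the outer limits set by Article 73:

- 15 days by default.
- 10 days where a person has died.
- 2 days for a widespread infringement or a serious incident involving critical infrastructure.

The Act also expects reports immediately once a causal link is established, so these dates are a ceiling, not a target. The category names are hypothetical, and days are counted naively from the awareness date.

```python
from datetime import date, timedelta

# Outer reporting limits (days after the provider becomes aware), per Article 73.
DEADLINE_DAYS = {
    "widespread_infringement": 2,
    "critical_infrastructure": 2,
    "death": 10,
    "other_serious": 15,
}


def report_deadline(incident_type, aware_on):
    """Latest date a serious-incident report may reach the authority."""
    try:
        days = DEADLINE_DAYS[incident_type]
    except KeyError:
        raise ValueError(f"unknown incident type: {incident_type}") from None
    return aware_on + timedelta(days=days)


def strictest_deadline(incident_types, aware_on):
    """An incident can fit several categories; the earliest deadline applies."""
    return min(report_deadline(t, aware_on) for t in incident_types)


print(strictest_deadline(["death", "critical_infrastructure"], date(2026, 3, 1)))
```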

Compliance Alignment

Identify which regulatory frameworks the policy must align with based on the organization's industry and geography.

Common frameworks:

- EU AI Act: High-risk AI systems in EU markets

- NIST AI RMF: Voluntary US framework usable by organizations in any sector and a common reference for federal agencies and contractors

- ISO/IEC 42001: Certifiable AI management systems

- GDPR: Personal data processing in EU

- HIPAA: Healthcare data in US

- Sector-specific rules: Financial services, insurance, education

Organizations operating across multiple jurisdictions should identify overlapping requirements early and align their policy to the most stringent applicable standard. This approach often satisfies most of the other requirements as well.

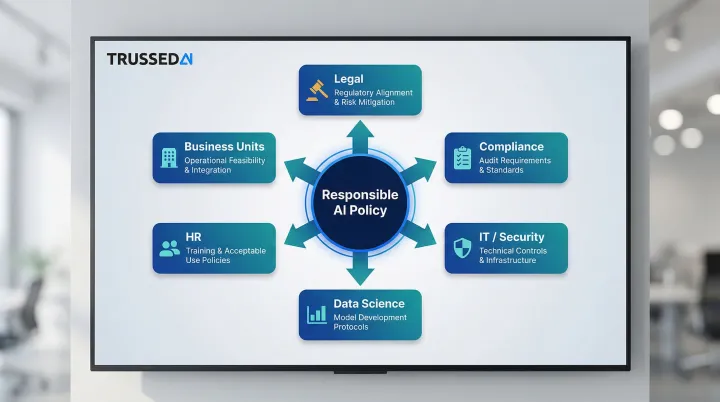

How to Build Your Responsible AI Policy: A Step-by-Step Approach

Building a responsible AI policy takes coordination across legal, compliance, data science, HR, and operations. No single team owns it, and no single team can enforce it alone.

Step 1: Inventory Your AI Systems and Risks

Before writing policy, map every AI system currently in use or in development: what it does, who uses it, what data it touches, and what could go wrong.

The scope of unmanaged AI use is broader than most organizations expect. 78% of employees admit to using AI tools not approved by their employer, and 71.6% of generative AI access happens via non-corporate accounts, bypassing traditional identity management entirely. You cannot govern what you cannot see.

Inventory requirements:

- Catalog all AI systems, including developer tools and third-party integrations

- Document data sources, model types, and decision authority

- Identify high-risk systems requiring stricter controls

- Discover shadow AI through network monitoring and employee surveys

The NIST AI RMF calls for mechanisms to inventory AI systems, resourced according to organizational risk priorities.
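An inventory is more useful when each entry carries enough attributes to derive a risk tier and flag gaps automatically. The fields and the tiering rule below are hypothetical examples of what resourcing "according to organizational risk priorities" might look like in practice. Adjust them to your own risk taxonomy.

```python
from dataclasses import dataclass


@dataclass
class AISystem:
    name: str
    owner: str                   # accountable team or person; empty if unknown
    approved: bool               # False for shadow AI found through discovery
    touches_personal_data: bool
    decision_authority: str      # "advisory", "human_in_loop", or "autonomous"
    high_stakes_domain: bool     # e.g. employment, credit, health


def risk_tier(system):
    """Hypothetical tiering rule; tune the criteria to your risk appetite."""
    if system.high_stakes_domain and system.decision_authority != "advisory":
        return "high"
    if system.touches_personal_data or system.decision_authority == "autonomous":
        return "medium"
    return "low"


def inventory_report(systems):
    """Group systems by tier and list gaps that need follow-up."""
    report = {"high": [], "medium": [], "low": [], "gaps": []}
    for s in systems:
        report[risk_tier(s)].append(s.name)
        if not s.approved:
            report["gaps"].append(f"{s.name}: unapproved (shadow AI)")
        if not s.owner:
            report["gaps"].append(f"{s.name}: no accountable owner")
    return report


systems = [
    AISystem("loan-scorer", "credit-risk", True, True, "human_in_loop", True),
    AISystem("chat-assistant", "", False, True, "advisory", False),
    AISystem("log-summarizer", "platform", True, False, "advisory", False),
]
print(inventory_report(systems))
```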

Step 2: Define Principles, Objectives, and Scope

Establish what values will guide AI use and what the policy will cover. Scope gaps are a leading cause of policy failure.

Scope must include:

- All employees, contractors, and third-party integrations

- Developer tools and environments

- Autonomous agents and multi-agent systems

- Vendor-supplied models and APIs

- Shadow AI and unapproved tools

Clear scope prevents loopholes where teams claim certain AI systems "don't count" under the policy.

Step 3: Draft, Review, and Assign Ownership

Bring stakeholders together to create the policy, assign each section a named owner, and build in legal review for regulatory alignment.

Cross-functional drafting process:

- Legal: Regulatory alignment and risk mitigation

- Compliance: Audit requirements and evidence standards

- IT/Security: Technical controls and infrastructure

- Data Science: Model development and testing protocols

- HR: Employee training and acceptable use

- Business Units: Operational feasibility and use case validation

A governance committee or AI ethics board should serve as the ongoing steward, not just a one-time drafting team. This committee reviews policy effectiveness, approves exceptions, and updates the policy as regulations and technology evolve.

Step 4: Train, Communicate, and Enable the Organization

Even a well-drafted policy fails if employees never see it. Rollout is its own workstream, distinct from drafting.

Implementation requirements:

- Role-based training for developers, business users, and executives

- Real-world scenario exercises demonstrating policy application

- Accessible language, not just legal text

- Clear channels for employees to raise concerns or flag violations

- Regular refreshers as the policy evolves

Only 41% of companies with an AI strategy make their AI policies accessible to employees or require acknowledgment. That gap, between a signed document and actual employee awareness, is where most governance programs break down.

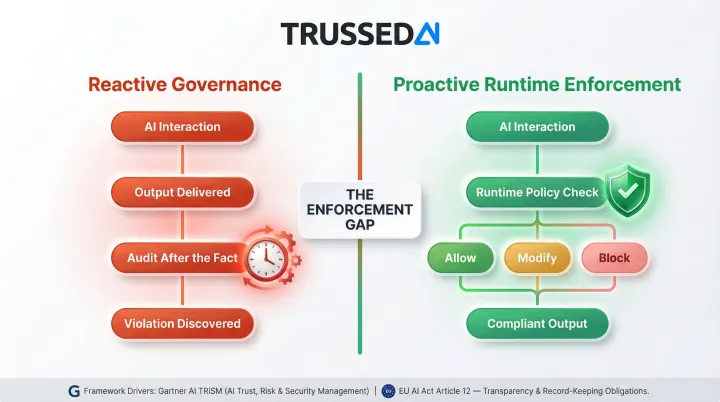

Why Writing the Policy Is Just the First Step: The Enforcement Gap

Most organizations treat responsible AI policy as a documentation exercise. They write the principles, get legal sign-off, publish the PDF, and assume the work is done. But a document in a shared drive doesn't stop a model from producing biased outputs, leaking sensitive data, or violating a regulatory boundary at runtime.

The Enforcement Gap in Practice

In production environments, especially those using generative AI and autonomous agents, AI interactions happen at millisecond speed, across dozens of applications, without any human in the loop. Static policies cannot keep pace with dynamic AI behavior.

97% of organizations that suffered an AI-related breach lacked proper AI access controls, which suggests that the weakest link is enforcement rather than policy. As organizations deploy agentic AI, manual review of every interaction becomes impractical. Agentic AI systems don't just execute instructions: they decide what actions to take, delegate authority, and operate continuously, which blurs questions of intent and responsibility.

What Real Enforcement Requires

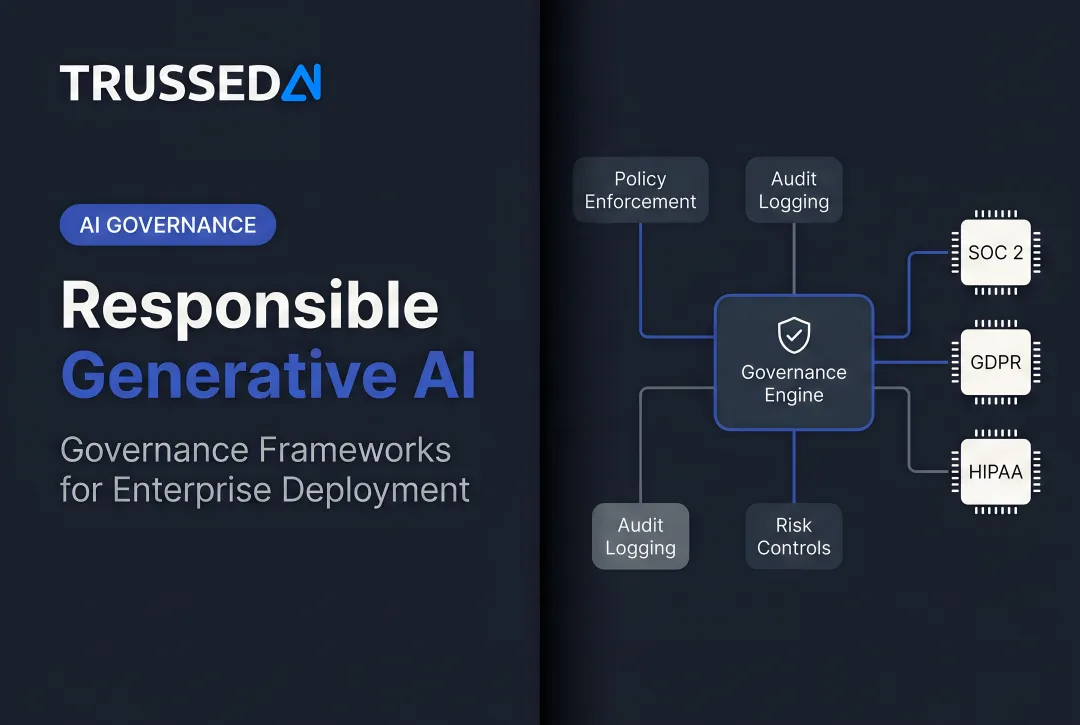

Real enforcement requires runtime policy controls that intercept AI interactions as they happen, check them against defined rules, and either allow, modify, or block outputs before they reach users or downstream systems. This moves governance from reactive (audit after the fact) to proactive (enforce in real time).
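The intercept, check, and allow/modify/block loop described here can be sketched as a chain of rules applied to each interaction. The two rules are hypothetical stand-ins: a pattern that resembles an API key and a simple email mask.

The design choice worth noting is that any single block ends the interaction, while modifications accumulate. This illustrates the control pattern, not how any particular product implements it.

```python
import re
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

# A rule inspects text and returns (action, possibly rewritten text).
Rule = Callable[[str], Tuple[str, str]]


@dataclass
class Decision:
    action: str                 # "allow", "modify", or "block"
    text: str
    rule: Optional[str] = None  # name of the last rule that modified or blocked


def block_secrets(text):
    # Hypothetical rule: block anything that looks like an "sk-" style API key.
    if re.search(r"\bsk-[A-Za-z0-9]{20,}\b", text):
        return "block", text
    return "allow", text


def mask_emails(text):
    # Hypothetical rule: replace email addresses with a placeholder.
    masked = re.sub(r"[\w.+-]+@[\w-]+\.[\w.]+", "[EMAIL]", text)
    return ("modify" if masked != text else "allow"), masked


def enforce(text: str, rules: List[Rule]) -> Decision:
    """Run rules in order; the first block stops the interaction immediately."""
    decision = Decision("allow", text)
    for rule in rules:
        action, new_text = rule(decision.text)
        if action == "block":
            return Decision("block", "", rule.__name__)
        if action == "modify":
            decision = Decision("modify", new_text, rule.__name__)
    return decision


print(enforce("Reply to bob@corp.io today", [block_secrets, mask_emails]))
```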

Two major frameworks reinforce this requirement:

- Gartner's AI TRiSM framework emphasizes runtime inspection and enforcement focused on real-time AI interactions, models, and applications.

- EU AI Act Article 12 mandates that high-risk AI systems automatically record events over the system's lifetime to ensure traceability and facilitate post-market monitoring.
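Automatic event recording can be as simple as an append-only log written on every governed interaction. The sketch below adds hash chaining: each record includes the hash of the one before it, so editing or deleting an earlier record is detectable. Article 12 does not prescribe this technique, and the field names are assumptions.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


class AuditLog:
    """Append-only event log in which each record embeds the previous record's hash."""

    def __init__(self):
        self.records = []

    def append(self, system_id, event, details):
        """Record one event; `details` must be JSON-serializable."""
        prev_hash = self.records[-1]["hash"] if self.records else GENESIS_HASH
        record = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "system_id": system_id,
            "event": event,
            "details": details,
            "prev_hash": prev_hash,
        }
        payload = json.dumps(record, sort_keys=True).encode()
        record["hash"] = hashlib.sha256(payload).hexdigest()
        self.records.append(record)
        return record

    def verify(self):
        """Recompute every hash; an edited, removed, or reordered record breaks the chain."""
        prev_hash = GENESIS_HASH
        for record in self.records:
            body = {k: v for k, v in record.items() if k != "hash"}
            if body["prev_hash"] != prev_hash:
                return False
            payload = json.dumps(body, sort_keys=True).encode()
            if hashlib.sha256(payload).hexdigest() != record["hash"]:
                return False
            prev_hash = record["hash"]
        return True


log = AuditLog()
log.append("loan-scorer", "decision", {"applicant": "a1", "outcome": "refer"})
log.append("loan-scorer", "human_override", {"by": "analyst-7"})
print(log.verify())
```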

Infrastructure as Governance

Meeting these requirements demands infrastructure designed for enforcement, not documentation. Trussed AI functions as a control plane that enforces governance policies at runtime across AI apps, agents, and developer tools. Audit-ready evidence is generated automatically as a byproduct of every governed interaction, turning static policies into controls that operate with less than 20ms latency at production scale.

How to Maintain and Evolve Your Responsible AI Policy

An AI policy is a living document. The regulatory landscape, AI capabilities, and your organization's AI footprint are all shifting continuously. The EU AI Act Article 72 requires providers to establish a post-market monitoring system, actively collecting, documenting, and analyzing performance data on high-risk AI systems across their entire operational lifetime.

Policy Review Cycles

At minimum, conduct annual policy reviews. More frequent reviews are necessary in fast-moving regulated contexts or after major AI system changes.

Review triggers:

- New AI system deployments or significant capability changes

- Regulatory changes in operating jurisdictions

- Significant AI incidents or near-misses

- Material shifts in organizational AI strategy

- Audit findings or compliance gaps

These triggers align with what the NIST AI RMF GOVERN function requires: planned, ongoing monitoring and periodic review of risk management outcomes, with organizational roles and responsibilities defined in advance.
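A scheduled job can check both conditions, elapsed time and trigger events, and open a review ticket when either fires. The trigger names mirror the list above but are hypothetical identifiers. For contexts that need quarterly reviews, pass a shorter `max_interval_days` (for example, 90).

```python
from datetime import date, timedelta

# Hypothetical identifiers for the review triggers listed above.
REVIEW_TRIGGERS = {
    "new_deployment", "capability_change", "regulatory_change",
    "incident", "near_miss", "strategy_shift", "audit_finding",
}


def review_due(last_review: date, today: date, events, max_interval_days=365):
    """Return the reasons a policy review is due (an empty list if none).

    `events` is a list of (event_date, event_type) pairs; only trigger
    events dated after the last review count.
    """
    reasons = []
    if today - last_review >= timedelta(days=max_interval_days):
        reasons.append("annual review overdue")
    for event_date, event_type in events:
        if event_date > last_review and event_type in REVIEW_TRIGGERS:
            reasons.append(f"{event_type} on {event_date.isoformat()}")
    return reasons


print(review_due(date(2025, 6, 1), date(2025, 9, 1),
                 [(date(2025, 7, 4), "incident"), (date(2025, 8, 1), "team_offsite")]))
```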

Metrics for Policy Effectiveness

Track these signals to evaluate whether your policy is working:

- Model failure rates and performance degradation

- Compliance audit outcomes and findings

- Number of incidents reported and time to resolution

- Bias testing results across protected classes

- Employee training completion and knowledge retention

- Regulatory changes requiring policy updates

- Cost overruns or usage violations
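Some of these signals are simple arithmetic over the incident log. The sketch below assumes each incident is recorded with hypothetical `opened`, `resolved`, and `severity` fields, and it computes counts and time to resolution. It reports the median alongside the mean because a single long-running incident can distort the average.

```python
from datetime import datetime
from statistics import mean, median


def hours_between(start: datetime, end: datetime) -> float:
    """Elapsed time in hours between two timestamps."""
    return (end - start).total_seconds() / 3600


def incident_metrics(incidents):
    """Summarize incidents given as dicts with 'opened', 'resolved' (or None), and 'severity'."""
    resolved = [i for i in incidents if i["resolved"] is not None]
    hours = [hours_between(i["opened"], i["resolved"]) for i in resolved]
    by_severity = {}
    for i in incidents:
        by_severity[i["severity"]] = by_severity.get(i["severity"], 0) + 1
    return {
        "reported": len(incidents),
        "open": len(incidents) - len(resolved),
        "mean_hours_to_resolve": mean(hours) if hours else None,
        "median_hours_to_resolve": median(hours) if hours else None,
        "by_severity": by_severity,
    }


incidents = [
    {"opened": datetime(2025, 1, 1, 9), "resolved": datetime(2025, 1, 1, 13), "severity": "low"},
    {"opened": datetime(2025, 1, 2, 9), "resolved": datetime(2025, 1, 3, 9), "severity": "high"},
    {"opened": datetime(2025, 1, 4, 9), "resolved": None, "severity": "high"},
]
print(incident_metrics(incidents))
```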

The Role of Continuous Monitoring

Continuous monitoring tools reduce the burden of manual policy maintenance. When governance evidence is generated automatically as a byproduct of every AI interaction, rather than assembled manually before each audit, teams spend less time on compliance overhead and more time on improvement.

Trussed AI reports that organizations using its runtime governance platform see a 50% reduction in manual governance workload and a 50% increase in regulatory compliance, with operational workflows live in as little as 4 weeks. That speed comes from having audit-ready evidence already on hand, rather than scrambling to reconstruct compliance decisions when regulators come knocking.

Frequently Asked Questions

What is the difference between a responsible AI policy and an AI governance framework?

Governance is the overarching strategy and structure for managing AI risk across the organization. A responsible AI policy is the operational document within that framework, translating governance principles into specific, enforceable rules and procedures for teams deploying and managing AI systems.

Who should own and maintain a responsible AI policy?

Ownership is inherently cross-functional, typically led by a governance committee with representatives from legal, compliance, IT, data science, and key business units. A designated executive sponsor (such as a CISO or Chief AI Officer) should be accountable for ongoing enforcement and policy evolution.

How often should a responsible AI policy be reviewed and updated?

At least annually, with additional out-of-cycle reviews triggered by major events such as new AI system deployments, regulatory changes, significant AI incidents, or material shifts in the organization's AI strategy. Fast-moving regulated industries may require quarterly reviews.

Does a responsible AI policy need to address generative AI and autonomous AI agents separately?

Yes. Generative AI and agentic systems introduce risks, including data leakage, hallucinations, and autonomous decision-making, that standard policies often don't cover. Explicitly scope these system types and define requirements for human oversight, output validation, and execution-layer controls.

How do you enforce a responsible AI policy across a large organization?

Training and documentation are necessary but not sufficient. Enforcement requires runtime controls embedded into how AI systems operate, with policies checked and applied at the moment of each AI interaction. Automated mechanisms should prevent violations before they occur, not just surface them in after-the-fact audits.

What are the most common mistakes organizations make when building a responsible AI policy?

The most common pitfalls are:

- Treating the policy as a one-time document rather than a living control

- Scoping it too narrowly, leaving out third-party tools and AI agents

- Assigning ownership to a single team rather than a cross-functional body

- Relying on manual reviews instead of runtime enforcement mechanisms