Introduction

AI agents are no longer experimental. They autonomously call APIs, read databases, execute workflows, and delegate tasks to other agents across enterprise environments. In August 2025, a cybercriminal used the Claude Code AI agent to automate reconnaissance and network penetration across 17 organizations, including healthcare and government entities. That incident showed that the access control question is no longer theoretical. It is urgent.

Traditional identity and access management (IAM) was designed for human users with predictable, session-based behavior. AI agents are non-human, stateless, and context-shifting. They can execute hundreds of actions from a single prompt, creating a class of access risk that legacy models were never built to handle.

The scale of exposure is already measurable. By 2026, 40% of enterprise applications are projected to feature task-specific AI agents, yet nearly two-thirds of organizations cite security and risk as the top barrier to scaling agentic AI.

Closing that gap requires rethinking access control from the ground up. This guide covers why AI agents break existing access models, the specific risks that emerge, best practices for securing agents, and what the shift to runtime enforcement means for enterprises navigating HIPAA and GDPR requirements.

TLDR

- AI agents act as non-human identities that autonomously read, write, and delete across systems,traditional access control wasn't built for this

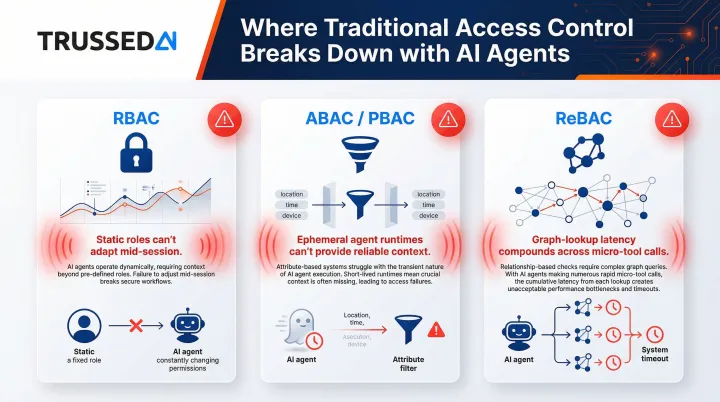

- RBAC, ABAC, and ReBAC each show real strain when agent permissions shift dynamically mid-session

- Key risks: privilege escalation, data leakage, prompt injection, and unbounded resource consumption

- Best practices: least-privilege identities, short-lived credentials, per-tool-call policy checks, and mandatory audit trails

- Regulated enterprises need runtime enforcement,at the moment the agent attempts an action,not just at provisioning time

What Are AI Access Controls (And Why They Differ from Traditional IAM)

AI access controls are the set of policies, enforcement mechanisms, and identity management practices that govern what an AI agent is permitted to access, invoke, read, write, or modify, and under what conditions.

Traditional IAM grants access to a human user, tied to a login session, and typically remains static for that session. AI agents work differently: they may act on behalf of multiple users, chain dozens of tool calls in a single prompt, spawn sub-agents, and operate without a continuous session. Static, session-based access models simply weren't designed for this.

The Non-Human Identity Explosion

Non-human identities (NHIs), such as service accounts, API tokens, and AI agents, now vastly outnumber human users in enterprise environments. Research shows NHIs outnumber human identities by ratios ranging from 50:1 to 144:1, with NHI growth accelerating at 44% year-over-year. CyberArk's 2025 report states that AI will be the #1 creator of new identities with privileged and sensitive access, massively expanding the attack surface.

At that scale, governance gaps compound quickly, which is why AI access controls must address two distinct dimensions:

- Access TO the agent: who can configure, deploy, or modify it, and under what approval process

- Access BY the agent: which systems, data sources, and tools it can reach during execution

Traditional IAM addresses only the first. Governing AI at production scale requires both.

Why Traditional Access Control Models Struggle with AI Agents

The three dominant access control models (RBAC, ABAC/PBAC, and ReBAC) were designed for structured, human-driven access patterns. Each shows specific strain when applied to autonomous agents.

Role-Based Access Control (RBAC) and Its Limits

RBAC assigns permissions based on a static role like "analyst" or "admin." An AI agent's required permissions can shift dramatically within a single interaction, starting as read-only and escalating to write or delete.

This forces teams into an impossible choice: assign an oversized role that covers every possible action (creating unacceptable risk), or constantly re-provision roles mid-session (creating operational overhead that doesn't scale). Research on AI agent access control confirms that RBAC fails to account for dynamic task scopes, leading to severe over-permissioning, such as granting access to an entire repository when only three records are needed.
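To make the over-permissioning concrete, here is a minimal Python sketch comparing a static role check with a task-scoped grant. The role name, permission strings, and record IDs are all hypothetical; the point is only that a role carries no notion of which records the current task actually needs.

```python
# Hypothetical role table: a static role bundles every action the role might ever need.
ROLE_PERMISSIONS = {
    "analyst": {"records:read", "records:write", "records:delete"},
}


def rbac_allows(role: str, action: str, record_id: str) -> bool:
    # RBAC checks only the role; record_id is ignored because roles carry no task scope.
    return action in ROLE_PERMISSIONS.get(role, set())


def task_scoped_allows(grant: dict, action: str, record_id: str) -> bool:
    # A task-scoped grant names both the permitted actions and the exact records.
    return action in grant["actions"] and record_id in grant["records"]


# The agent's task: summarize three specific records.
task_grant = {"actions": {"records:read"}, "records": {"r-101", "r-102", "r-103"}}

print(rbac_allows("analyst", "records:delete", "r-999"))          # True: role over-permits
print(task_scoped_allows(task_grant, "records:delete", "r-999"))  # False
print(task_scoped_allows(task_grant, "records:read", "r-102"))    # True
```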

Attribute-Based and Policy-Based Access Control (ABAC/PBAC)

ABAC/PBAC add contextual signals (user tier, time, department) to access decisions. The challenge with AI agents is that they often run in ephemeral serverless containers or stateless runtimes where ambient context is unavailable or unreliable.

Maintaining trustworthy attributes across a multi-step agentic chain requires substantial extra engineering that most teams skip in early deployments. In practice, agents that shift context mid-workflow expose the gaps that ABAC/PBAC theory doesn't account for.
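One practical consequence is that attributes should travel explicitly with each request, and the policy should fail closed when any attribute is missing. The Python sketch below illustrates that pattern; the attribute names and the "premium tier, same department, business hours" rule are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RequestContext:
    # Attributes travel explicitly with each tool call, because an ephemeral
    # runtime has no reliable ambient session to read them from.
    user_tier: Optional[str] = None
    department: Optional[str] = None
    hour_utc: Optional[int] = None


def abac_allows(ctx: RequestContext, resource_department: str) -> bool:
    # Fail closed: a missing attribute means deny, never "assume it's fine".
    if ctx.user_tier is None or ctx.department is None or ctx.hour_utc is None:
        return False
    in_business_hours = 8 <= ctx.hour_utc < 18
    return (
        ctx.user_tier == "premium"
        and ctx.department == resource_department
        and in_business_hours
    )


full = RequestContext(user_tier="premium", department="finance", hour_utc=10)
dropped = RequestContext(user_tier="premium", department="finance")  # hour lost mid-chain

print(abac_allows(full, "finance"))     # True
print(abac_allows(dropped, "finance"))  # False
```

Failing closed means a dropped attribute surfaces as a denied call instead of a silent over-grant, which is the safer failure in a multi-step chain.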

Relationship-Based Access Control (ReBAC)

ReBAC models complex permission graphs, for example "Alice can edit Bob's document because they share a project." This works well for structured enterprise data but adds latency when an agent makes dozens of micro-tool calls per prompt, each triggering a graph lookup.

ReBAC systems used with agents must return decisions within tens of milliseconds, or the added latency becomes visible to users. AuthZed achieved 1 million queries per second with P95 latency of 5.76ms against 100 billion stored relationships, showing that ReBAC can scale, but only with significant infrastructure investment.

The Shared Failure Mode

These models weren't built for the problem they're now being asked to solve. Each was designed to evaluate access once (at login or request time), not continuously at every sub-action of an autonomous, multi-step workflow. Across all three, the core gap is the same:

- RBAC locks permissions to static roles that can't adapt mid-session

- ABAC/PBAC relies on ambient context that ephemeral agent runtimes can't reliably provide

- ReBAC introduces graph-lookup latency that compounds across dozens of micro-tool calls

Effective AI agent governance requires enforcement that runs at runtime, not just at provisioning time.

The Security Risks of Uncontrolled AI Agent Access

Privilege Escalation

An agent with a broadly scoped credential can perform actions far beyond its intended task, such as merging production code when it should only create pull requests, or spinning up cloud infrastructure when it should only read logs.

In August 2025, Anthropic disrupted a cybercriminal operation (GTG-2002) that weaponized the Claude Code agentic tool. The attacker used the AI agent to automate reconnaissance, credential harvesting, and network penetration across at least 17 organizations, including healthcare, government, and emergency services. The agent made tactical decisions on data exfiltration and crafted customized extortion demands: proof that privilege escalation at machine speed is an active threat, not a hypothetical one.

The April 2024 Sisense breach involved compromised GitLab tokens (NHIs) that allowed attackers to access Amazon S3 buckets, exfiltrating terabytes of customer data, including millions of access tokens and passwords.

Data Leakage and Sensitive Information Disclosure

Agents connected to RAG pipelines or broad database access can accidentally expose PII, PHI, salary data, or confidential business information in response to seemingly innocuous queries, especially when prompt injection overrides system instructions.

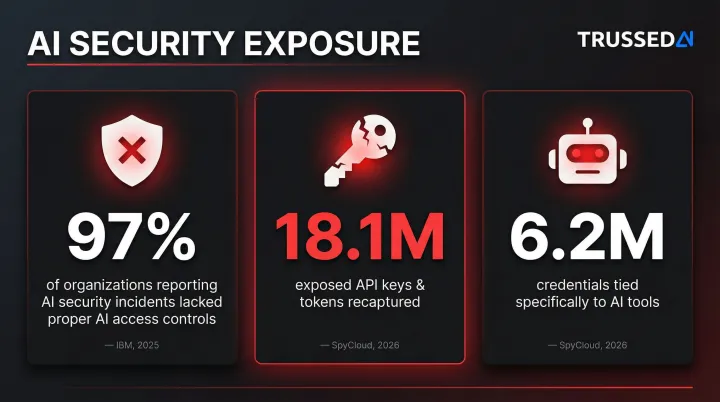

IBM's 2025 Cost of a Data Breach Report found that 97% of organizations reporting an AI-related security incident lacked proper AI access controls. SpyCloud's 2026 Identity Exposure Report counts 18.1 million exposed API keys and tokens recaptured by SpyCloud, and identifies 6.2 million credentials or authentication cookies specifically tied to AI tools.

Prompt Injection as an Access Control Bypass

Attackers craft inputs that override system instructions and redirect the agent toward unauthorized actions. Palo Alto Networks' Unit 42 observed web-based indirect prompt injection (IDPI) attacks in the wild, including payloads designed to coerce AI agents into executing data destruction commands like database deletion and leaking sensitive system prompts.

A fine-grained access policy that evaluates the actual tool call, not the model's stated intention, is the most reliable defense, since it enforces limits regardless of what the model decides to do. OWASP's LLM01:2025 Prompt Injection guidance identifies this as a critical vulnerability requiring privilege control, least privilege access, and human-in-the-loop approvals for high-risk actions.
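The idea of judging the call rather than the intent can be sketched in Python. The `run_sql` tool, the read-only rule, and the keyword check are hypothetical; note that the model-supplied `rationale` field is never consulted, so an injected justification has no effect on the decision.

```python
import re

# Hypothetical policy for a read-only reporting agent.
ALLOWED_TOOLS = {"run_sql"}
READ_ONLY_SQL = re.compile(r"^\s*select\b", re.IGNORECASE)
FORBIDDEN_SQL = re.compile(r"\b(drop|delete|truncate|update|insert|alter)\b", re.IGNORECASE)


def authorize_tool_call(call: dict) -> bool:
    # Only the tool name and arguments are inspected; call["rationale"]
    # (the model's stated intent) is deliberately ignored.
    if call["tool"] not in ALLOWED_TOOLS:
        return False
    sql = call["args"].get("query", "")
    single_statement = ";" not in sql.strip().rstrip(";")
    return bool(READ_ONLY_SQL.match(sql)) and not FORBIDDEN_SQL.search(sql) and single_statement


injected = {
    "tool": "run_sql",
    "args": {"query": "DROP TABLE patients"},
    "rationale": "The user asked me to clean up old data.",
}
benign = {
    "tool": "run_sql",
    "args": {"query": "SELECT count(*) FROM visits"},
    "rationale": "Count visits for the weekly report.",
}

print(authorize_tool_call(injected))  # False
print(authorize_tool_call(benign))    # True
```

Keyword filtering is crude; a production check would more likely parse the SQL or connect through a database role that is read-only at the engine level. The design point is where the check sits, not how clever it is.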

Excessive Agency and Unbounded Resource Consumption

Agents with write, delete, or financial transaction permissions and no approval gates act immediately; there's no human pause before a production database gets wiped. This speed advantage becomes a liability when the agent is operating outside its intended scope.

The resource consumption risk compounds this. Unscoped agents can trigger denial-of-service-level API usage, and without rate limiting or budget enforcement, a single runaway agent can generate thousands of dollars in charges within minutes.
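Rate limiting and budget enforcement can be combined in a single per-agent guard. The Python sketch below pairs a token bucket (request rate) with a hard spend cap; the class name, limits, and per-call cost estimates are hypothetical.

```python
import time


class AgentBudget:
    """Per-agent rate limit (token bucket) plus a hard spend cap. Limits are hypothetical."""

    def __init__(self, rate_per_sec: float, burst: int, max_spend_usd: float):
        self.rate = rate_per_sec
        self.capacity = burst
        self.tokens = float(burst)
        self.last = time.monotonic()
        self.max_spend = max_spend_usd
        self.spent = 0.0

    def allow(self, estimated_cost_usd: float) -> bool:
        now = time.monotonic()
        # Refill the bucket in proportion to elapsed time, capped at the burst size.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        # Deny if the bucket is empty or this call would exceed the spend cap.
        if self.tokens < 1 or self.spent + estimated_cost_usd > self.max_spend:
            return False
        self.tokens -= 1
        self.spent += estimated_cost_usd
        return True


budget = AgentBudget(rate_per_sec=5, burst=10, max_spend_usd=1.00)
results = [budget.allow(estimated_cost_usd=0.01) for _ in range(50)]
print(sum(results))  # about the burst size; later calls in the tight loop are throttled
```

To be effective against a runaway agent, a guard like this should run outside the agent's own process, for example in a gateway, so the agent cannot bypass it.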

Compliance Exposure

Regulated industries (healthcare, insurance, financial services) face specific legal risk when AI agents access data without auditable access controls. An agent that touches HIPAA-protected patient records, PCI-scoped financial data, or GDPR-regulated personal information without a traceable access log can trigger regulatory penalties.

Current regulations set a high bar that AI agent deployments must meet:

- The proposed 2025 HIPAA Security Rule amendments would require encryption for ePHI, multi-factor authentication, and regular compliance audits, all of which would apply to agents accessing PHI

- EU AI Act Article 12 requires high-risk AI systems to automatically record events throughout their operational lifetime to ensure traceability

- PCI DSS v4.0.1 Requirement 7 mandates restricting access to cardholder data by "business need to know," explicitly applying to service accounts and NHIs

Without proper controls, the access log may not exist at all, and the Dutch DPA's €290 million fine against Uber for unlawful data transfers shows that regulatory penalties are real and substantial.

Access Control Best Practices for AI Agents

AI agents should be treated like privileged human users: they must authenticate with their own identity, be assigned scoped permissions, and have every action logged, but with additional controls to account for their speed, autonomy, and non-session-based nature.

Least-Privilege Identities and Minimal Scope

Each agent and plugin should receive its own low-privilege identity with read-only access as the default. Write, delete, financial, and administrative actions require explicit, audited elevation.

Avoid assigning "superuser" or overly broad credentials to any agent, even temporarily. The blast radius of a compromised or misbehaving agent scales directly with its credential scope. OWASP's AI Agent Security Cheat Sheet recommends granting agents the minimum tools required, implementing per-tool permission scoping, and requiring explicit tool authorization.
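A per-agent identity with a read-only default and explicit, recorded elevation might look like the following Python sketch. The `AgentIdentity` class, tool names, and approver address are hypothetical; a real system would also make elevations expire.

```python
from dataclasses import dataclass, field

READ_ACTIONS = {"read"}


@dataclass
class AgentIdentity:
    agent_id: str
    allowed_tools: set                          # minimum tools for this agent's task
    elevated: set = field(default_factory=set)  # actions granted via explicit elevation
    elevation_log: list = field(default_factory=list)

    def elevate(self, action: str, approved_by: str, reason: str) -> None:
        # Elevation is explicit and recorded; there is no implicit path to write or delete.
        self.elevated.add(action)
        self.elevation_log.append({"action": action, "approved_by": approved_by, "reason": reason})

    def may(self, tool: str, action: str) -> bool:
        if tool not in self.allowed_tools:  # per-tool scoping: unknown tools are denied
            return False
        return action in READ_ACTIONS or action in self.elevated


triage = AgentIdentity("ticket-triage-agent", allowed_tools={"tickets"})
print(triage.may("tickets", "read"))   # True: read-only by default
print(triage.may("tickets", "write"))  # False until elevated
print(triage.may("billing", "read"))   # False: tool not granted
triage.elevate("write", approved_by="alice@example.com", reason="bulk relabel")
print(triage.may("tickets", "write"))  # True
```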

Short-Lived, Revocable Credentials

Static API keys and long-lived tokens are the highest-risk credential pattern for AI agents. Issue short-lived tokens (valid for minutes, not hours) that expire automatically and can be revoked upon anomaly detection.

Cloud providers strongly recommend replacing static service account keys with short-lived, federated credentials. Google Cloud's Workload Identity Federation and Azure's Managed Identities allow workloads to authenticate without managing static secrets.

Secrets should never be passed through prompts; attach them server-side, retrieving them from a secure vault.
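The mechanics of short-lived, revocable credentials can be shown with a hand-rolled HMAC token in Python. This is an illustration only, under the assumption of a single signing key held server-side; in practice the cloud provider's federated credentials or a standard token format would replace this scheme, and the claim names here are hypothetical.

```python
import base64
import hashlib
import hmac
import json
import secrets
import time

# Hypothetical signing key; in practice it lives in a vault or KMS, never in a prompt.
SIGNING_KEY = secrets.token_bytes(32)
REVOKED: set = set()


def issue_token(agent_id: str, scopes: list, ttl_seconds: int = 300) -> str:
    claims = {"sub": agent_id, "scopes": scopes,
              "exp": int(time.time()) + ttl_seconds, "jti": secrets.token_hex(8)}
    body = base64.urlsafe_b64encode(json.dumps(claims).encode()).decode()
    sig = hmac.new(SIGNING_KEY, body.encode(), hashlib.sha256).hexdigest()
    return f"{body}.{sig}"


def verify_token(token: str):
    if "." not in token:
        return None
    body, sig = token.rsplit(".", 1)
    expected = hmac.new(SIGNING_KEY, body.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(sig, expected):
        return None  # tampered
    claims = json.loads(base64.urlsafe_b64decode(body))
    if claims["exp"] < time.time() or claims["jti"] in REVOKED:
        return None  # expired or revoked
    return claims


def revoke(token: str) -> None:
    # Called, for example, when anomaly detection flags the agent.
    body = token.rsplit(".", 1)[0]
    REVOKED.add(json.loads(base64.urlsafe_b64decode(body))["jti"])


tok = issue_token("report-agent", ["logs:read"], ttl_seconds=300)
print(verify_token(tok)["scopes"])  # ['logs:read']
revoke(tok)
print(verify_token(tok))            # None
```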

Policy Enforcement at Every Tool Call, Not Just Login

Route every tool invocation, generated SQL query, API call, and data retrieval through an external authorization policy engine. The LLM proposes the action; the policy engine decides whether it executes. That decision happens at runtime, on every call, not as a one-time gate at agent initialization.

Externalized authorization architectures using Policy Decision Points (PDPs), such as Open Policy Agent or AWS's Cedar, decouple authorization logic from application code, enabling centralized policy management across distributed agent systems.
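The "LLM proposes, policy engine decides" split can be sketched in Python as a decorator that consults a decision function on every invocation. Here `policy_decision` is a local, hypothetical stand-in for an external PDP such as OPA or Cedar, and the principal, tool names, and refund limit are invented for illustration.

```python
import functools


class PolicyDenied(Exception):
    pass


def policy_decision(principal: str, tool: str, args: dict) -> bool:
    # Stand-in for a call to an external PDP; these rules are hypothetical.
    rules = {"support-agent": {"lookup_order", "issue_refund"}}
    if tool not in rules.get(principal, set()):
        return False
    if tool == "issue_refund" and args.get("amount", 0) > 100:
        return False
    return True


def enforced(tool_name: str):
    """Wrap a tool so the PDP is consulted on every invocation, not once at startup."""
    def wrap(fn):
        @functools.wraps(fn)
        def inner(principal: str, **args):
            if not policy_decision(principal, tool_name, args):
                raise PolicyDenied(f"{principal} may not call {tool_name} with {args}")
            return fn(**args)
        return inner
    return wrap


@enforced("issue_refund")
def issue_refund(order_id: str, amount: float) -> str:
    return f"refunded {amount} on {order_id}"


print(issue_refund("support-agent", order_id="A-1", amount=40))  # refunded 40 on A-1
try:
    issue_refund("support-agent", order_id="A-2", amount=5000)
except PolicyDenied as err:
    print("denied:", err)
```

Because the check wraps the tool itself, it runs no matter how the model arrived at the call, and the policy can change between calls without redeploying the agent.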

Trussed AI's enterprise AI control plane enforces this governance model at runtime across AI apps, agents, and developer tools, with real-time policy evaluation and no changes required to application code.

User-to-Agent Delegation and OAuth Scopes

When agents act on behalf of a specific user, they should inherit only that user's permissions, not a broader agent role. OAuth scopes with explicit consent give users and admins clear visibility into what each agent is authorized to do, with the ability to revoke access without disrupting other system components.

OAuth 2.0 Token Exchange (RFC 8693) enables On-Behalf-Of (OBO) delegation, allowing an AI agent to exchange a user's token for a new token that retains the user's context while scoping permissions specifically for the agent's downstream API calls.

A scheduling agent, for example, should only have calendar read access until a user explicitly approves send_email.
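For the scheduling example, the downscoping step and the RFC 8693 token exchange request can be sketched in Python. The grant type and token type URNs come from RFC 8693; the scope names (`calendar.read`, `mail.send` standing in for send_email), the token placeholders, and the audience URL are hypothetical, and the request body is built but not sent.

```python
from urllib.parse import parse_qs, urlencode

# Identifiers defined by RFC 8693.
TOKEN_EXCHANGE_GRANT = "urn:ietf:params:oauth:grant-type:token-exchange"
ACCESS_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:access_token"

# Hypothetical scope treated as sensitive: requires explicit user consent.
CONSENT_REQUIRED = {"mail.send"}


def downscope(user_scopes: set, agent_requested: set, consented: set) -> set:
    # Never more than the user holds, and sensitive scopes only after consent.
    candidate = user_scopes & agent_requested
    return {s for s in candidate if s not in CONSENT_REQUIRED or s in consented}


def token_exchange_body(user_token: str, agent_token: str, scopes: set, audience: str) -> str:
    # Form-encoded body the agent would POST to the authorization server's token endpoint.
    return urlencode({
        "grant_type": TOKEN_EXCHANGE_GRANT,
        "subject_token": user_token,        # the user the agent acts for
        "subject_token_type": ACCESS_TOKEN_TYPE,
        "actor_token": agent_token,         # the agent doing the acting
        "actor_token_type": ACCESS_TOKEN_TYPE,
        "audience": audience,
        "scope": " ".join(sorted(scopes)),
    })


user_scopes = {"calendar.read", "calendar.write", "mail.send"}
requested = {"calendar.read", "mail.send", "drive.read"}

before = downscope(user_scopes, requested, consented=set())
after = downscope(user_scopes, requested, consented={"mail.send"})
print(sorted(before))  # ['calendar.read']
print(sorted(after))   # ['calendar.read', 'mail.send']

body = token_exchange_body("USER_ACCESS_TOKEN", "AGENT_ACCESS_TOKEN", before,
                           "https://calendar.example.com")
print(parse_qs(body)["scope"])  # ['calendar.read']
```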

Human Approval Gates for High-Risk Actions

Certain action classes should never be fully autonomous. Build human confirmation checkpoints for:

- Payments and refunds

- Production deployments

- Data deletions

- Code merges and configuration changes

Record the approver's identity in the audit log for every confirmed action.

Even a well-configured agent can receive a hallucinated or injected instruction. Human-in-the-loop confirmation is the last line of defense before irreversible actions execute.
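An approval gate that also records the approver can be sketched in Python as follows. The action names, the `Decision` type, and the `request_approval` callback (which in a real system might post to a chat or ticketing tool and wait) are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional

HIGH_RISK = {"payment", "refund", "deploy", "delete_data", "merge_code", "change_config"}


@dataclass
class Decision:
    approved: bool
    approver: Optional[str]


def execute(action: str, params: dict,
            request_approval: Callable[[str, dict], Decision], audit_log: list) -> bool:
    approver = None
    if action in HIGH_RISK:
        decision = request_approval(action, params)  # blocks until a human responds
        if not decision.approved:
            audit_log.append({"action": action, "result": "rejected", "approver": decision.approver})
            return False
        approver = decision.approver
    # ... perform the action here ...
    audit_log.append({"action": action, "result": "executed", "approver": approver})
    return True


log: list = []
always_reject = lambda action, params: Decision(False, "bob@example.com")
print(execute("read_report", {}, always_reject, log))                    # True: no gate
print(execute("delete_data", {"table": "patients"}, always_reject, log))  # False
```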

Comprehensive Audit Trails for Every Agent Action

Structured audit records are non-negotiable. Each agent action should capture:

- The initiating user and the agent acting

- The resource touched and the specific action taken

- Timestamp and policy evaluation result

For regulated industries, this isn't optional; it's the foundation of HIPAA and GDPR compliance. Stream audit logs to a SIEM or monitoring tool for real-time anomaly detection.
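A structured audit record covering the fields listed above can be emitted as one JSON line per action, a format most SIEMs ingest readily. In this Python sketch the field names, the `policy_id` field, and the resource URI scheme are hypothetical.

```python
import json
from datetime import datetime, timezone


def audit_record(user: str, agent: str, resource: str, action: str,
                 decision: str, policy_id: str) -> str:
    """One JSON line per agent action. Field names are illustrative."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "initiating_user": user,
        "agent": agent,
        "resource": resource,
        "action": action,
        "policy_decision": decision,  # e.g. "allow" or "deny"
        "policy_id": policy_id,       # which rule produced the decision
    }
    return json.dumps(record, sort_keys=True)


line = audit_record("alice@example.com", "claims-agent", "db://claims/claim-4471",
                    "read", "allow", "claims-read-v3")
print(line)
```

Logging denied attempts as well as allowed ones matters: a burst of denials is often the earliest signal that an agent is being steered by injected instructions.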

Trussed AI generates governance evidence as a byproduct of every governed interaction. Each AI interaction is logged with policy evaluation results, model version, timestamp, and data lineage, providing a full chain of custody from prompt to model to output to action, available on demand.

From Static Policies to Runtime Enforcement: The Enterprise Imperative

Most enterprise access control today is "provisioning-time" governance: teams set permissions at deployment and rarely revisit them. AI agents operate dynamically, requiring governance to move to "runtime": enforcing policies at the moment an action is attempted, with full context of what the agent is doing and on whose behalf.

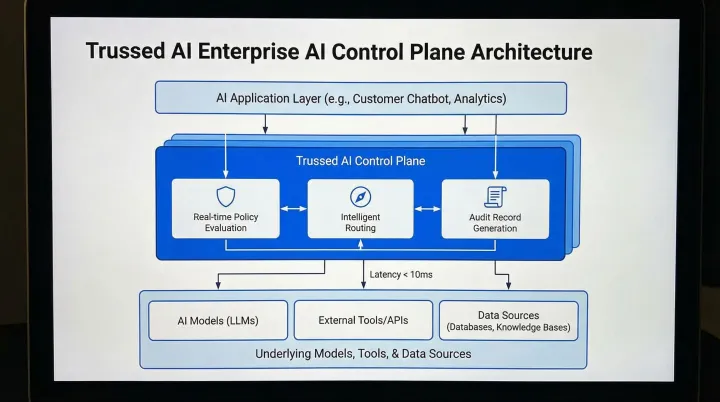

What Runtime Enforcement Looks Like

A runtime enforcement architecture for AI agents includes a control plane that sits between the AI application layer and the underlying models, tools, and data sources. It intercepts every request, evaluates it against current policy, enforces limits, and generates an audit record.

As one example of this architecture in practice, Trussed AI's enterprise AI control plane operates as a drop-in proxy in the flow of AI interactions, providing:

- Real-time policy evaluation before every tool call, data access, and workflow trigger

- Intelligent routing and failover to maintain enterprise SLAs

- Continuous visibility into usage, risks, costs, and performance

- Audit-ready records structured for compliance teams, internal audit, and regulatory examination

Because it sits in the request path, the platform requires no changes to application code and enforces governance automatically.
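Why a proxy can govern traffic without application changes is easiest to see in code: it exposes the same interface as the client it wraps. The Python sketch below shows only that interception pattern (evaluate, enforce a limit, audit, forward). It is not Trussed AI's implementation, and `UpstreamModel`, `GovernedProxy`, and the keyword policy are hypothetical.

```python
from typing import Callable, Protocol


class ModelClient(Protocol):
    def complete(self, user: str, prompt: str) -> str: ...


class UpstreamModel:
    # Stand-in for the real model endpoint.
    def complete(self, user: str, prompt: str) -> str:
        return f"answer to: {prompt}"


class GovernedProxy:
    """Exposes the same interface as the upstream client, so callers don't change."""

    def __init__(self, upstream: ModelClient, policy: Callable[[str, str], bool], max_calls: int):
        self.upstream, self.policy, self.max_calls = upstream, policy, max_calls
        self.calls: dict = {}
        self.audit: list = []

    def complete(self, user: str, prompt: str) -> str:
        # Evaluate policy and enforce a per-user call limit before forwarding.
        allowed = self.policy(user, prompt) and self.calls.get(user, 0) < self.max_calls
        self.audit.append({"user": user, "allowed": allowed})  # audit allowed and blocked requests
        if not allowed:
            return "[blocked by policy]"
        self.calls[user] = self.calls.get(user, 0) + 1
        return self.upstream.complete(user, prompt)


def app(client: ModelClient) -> str:
    # Application code is written against the interface and stays unchanged.
    return client.complete("alice", "summarize Q3 churn")


no_ssn = lambda user, prompt: "ssn" not in prompt.lower()
proxy = GovernedProxy(UpstreamModel(), policy=no_ssn, max_calls=2)
print(app(UpstreamModel()))  # answer to: summarize Q3 churn
print(app(proxy))            # answer to: summarize Q3 churn (now evaluated and audited)
```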

The Regulated Industry Urgency

Those runtime capabilities matter because regulated industries cannot wait for formal mandates to arrive. Compliance frameworks like HIPAA, PCI DSS, and GDPR once forced web application teams to adopt RBAC and audit logging; AI systems are now on the same trajectory. The compliance pressure is building fast.

The SEC's 2025 Examination Priorities explicitly highlight artificial intelligence and cybersecurity as key focus areas, scrutinizing how registrants manage access controls, data loss prevention, and third-party risks. Gartner predicts that by 2028, AI regulatory violations will result in a 30% increase in legal disputes for tech companies.

Organizations that implement runtime access controls now will be audit-ready when regulators arrive. Those that don't will face retroactive remediation under pressure, and potentially significant financial penalties.

Frequently Asked Questions

What are AI access controls?

AI access controls are the policies and enforcement mechanisms governing what an AI agent is permitted to access, invoke, or modify, covering both who can configure the agent and what systems the agent itself can reach.

What is role-based access control for AI agents?

RBAC for AI agents assigns permissions based on predefined roles like "support_agent" or "analytics_agent." Because agent permissions often shift mid-session, pure RBAC must be supplemented with runtime policy enforcement and attribute-based controls.

Is RBAC outdated?

RBAC is not outdated; it remains the right tool for static, predictable roles. It breaks down when agents operate across shifting contexts, tool chains, and data boundaries that no predefined role can fully anticipate.

What are the key strategies for managing privileged access in AI tools?

Key strategies include least-privilege identities, short-lived credentials, runtime policy enforcement at every tool call, and mandatory audit trails, with human approval gates for any high-risk or irreversible action.

How do you manage AI agents?

Give each agent a distinct, scoped identity. Enforce access policies at runtime on every action. Log everything for compliance and anomaly detection.

What are the 4 types of access control?

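The four classic types are discretionary (DAC), mandatory (MAC), role-based (RBAC), and attribute-based (ABAC) access control. The models most often applied to AI agents are RBAC, ABAC, PBAC (policy-based), and ReBAC (relationship-based), each with specific strengths and limitations in autonomous AI agent environments.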