Introduction

Enterprises are caught in a compliance bind: the same AI systems processing personal data at scale are also the ones creating obligations under GDPR and CCPA that human teams struggle to manage manually. Governance infrastructure hasn't kept pace with deployment velocity.

The financial exposure is real. Cumulative GDPR fines have surpassed €7.1 billion as of early 2026, with €1.2 billion issued in 2025 alone. CCPA violations now cost up to $7,988 per intentional violation under 2025 inflation-adjusted penalties.

Compliance failure at this scale is a business-critical risk. Regulators have shifted from complaint-driven enforcement to proactive audits, and the FTC is actively using "algorithmic disgorgement" to force destruction of AI models trained on improperly obtained data.

This guide covers what GDPR and CCPA specifically require from AI systems, the unique compliance gaps AI creates that legacy tools cannot address, and what AI governance tools must actually deliver to keep enterprises compliant in production.

TLDR

- GDPR covers any organization processing EU residents' personal data; CCPA covers for-profit businesses above defined thresholds handling California residents' data

- Both regulations require lawful processing, data minimization, user rights fulfillment, and documented audit trails, all of which AI systems complicate

- AI creates compliance risks beyond traditional data handling: automated decision-making, inability to erase data from trained models, and autonomous agent behavior

- Effective compliance tools cover consent automation, anonymization, user rights fulfillment, audit evidence generation, and real-time policy enforcement

- Compliance can't live only in documentation: it must be enforced at runtime, in production, on every AI interaction

What GDPR and CCPA Actually Require from AI Systems

GDPR's Core AI Obligations

GDPR Article 22 grants individuals the right "not to be subject to a decision based solely on automated processing, including profiling, which produces legal effects concerning him or her or similarly significantly affects him or her."

This prohibition applies unless specific conditions are met: the decision is necessary for entering into or performing a contract, authorized by EU or Member State law, or based on explicit consent, with appropriate safeguards in place.

Key requirements include:

- Lawful basis for processing: Consent is a frequently used basis, but it is the hardest to maintain for AI systems operating at scale

- Data minimization and purpose limitation: AI can only process data that's necessary for clearly defined purposes

- Mandatory DPIAs: Article 35 requires Data Protection Impact Assessments for high-risk AI processing, particularly when deploying new technologies

- Right to human intervention: Individuals can request human review of a solely automated decision that significantly affects them

Maximum penalties reach €20 million or 4% of global annual revenue, whichever is higher.
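
The "whichever is higher" rule can be made concrete with a short calculation. The following is a minimal sketch of the ceiling quoted above; the function name and the example revenue figures are illustrative assumptions, and an actual fine depends on the regulator's assessment rather than on this formula alone.

```python
def gdpr_max_fine_eur(global_annual_revenue_eur: float) -> float:
    """Upper bound quoted above: the higher of EUR 20M or 4% of global annual revenue."""
    flat_cap_eur = 20_000_000
    revenue_share = 0.04
    return max(flat_cap_eur, revenue_share * global_annual_revenue_eur)


# Hypothetical revenue figures, chosen only to show both branches of the rule.
small_company_cap = gdpr_max_fine_eur(100_000_000)    # 4% is EUR 4M, so the EUR 20M figure applies
large_company_cap = gdpr_max_fine_eur(2_000_000_000)  # 4% is EUR 80M, which exceeds EUR 20M
print(small_company_cap, large_company_cap)
```

Under this rule the percentage branch takes over once global annual revenue exceeds €500 million.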

CCPA's AI Obligations and 2026-2027 Expansions

California's framework operates differently. The CCPA grants consumers the right to know what personal data is collected, the right to delete, and the right to opt out of data sales, with a broad definition of "sale" that can capture AI-driven data sharing.

Critical 2026-2027 developments:

- January 2026 audit expansion: The CPPA now mandates annual cybersecurity audits for businesses processing data of 250,000+ consumers, or sensitive data of 50,000+ consumers with over $25M in revenue

- 2027 ADMT rules: Businesses using Automated Decision-Making Technology for significant decisions (employment, lending, housing) must provide pre-use notices and honor opt-out requests

- Penalties: $2,663 per unintentional violation; $7,988 per intentional violation or any violation involving minors (2025 adjusted figures)

Key Regulatory Differences

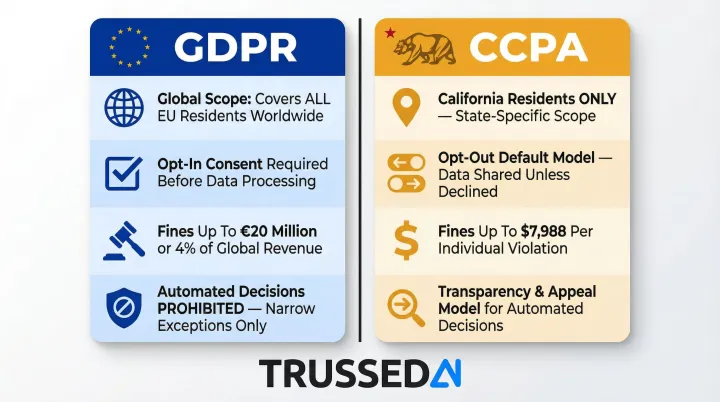

| Framework | Geographic Scope | Consent Model | Maximum Fines | Automated Decisions |
|---|---|---|---|---|
| GDPR | Global (EU residents) | Opt-in required | €20M or 4% of global annual revenue, whichever is higher | Prohibited with narrow exceptions |
| CCPA | California residents | Opt-out default | Up to $7,988 per intentional violation | Transparency + appeal model |

Both frameworks share a common thread: the stricter the decision's impact on individuals, the heavier the compliance burden. That's especially evident in the use cases that draw the most regulatory scrutiny.

High-Risk AI Use Cases

The following AI applications trigger the strictest obligations under both GDPR and CCPA:

- Credit and lending decisions: automated underwriting and loan approvals

- Hiring and HR screening: resume filtering, interview scoring, promotion algorithms

- Healthcare triage or diagnostics: clinical decision support and patient risk scoring

- Behavioral profiling and targeted advertising: personalization engines and ad targeting

- Insurance underwriting: risk assessment and premium calculation

Each scenario requires documented lawful basis, audit trails, and, in most cases, a mechanism for human review before a decision takes effect.

The EU AI Act Layer

Organizations operating in Europe face stacked obligations. The EU AI Act, fully applicable for high-risk systems from August 2026, classifies AI systems used in biometrics, critical infrastructure, employment, credit scoring, and law enforcement as high-risk. These require conformity assessments, technical documentation, and human oversight, adding a third compliance layer on top of existing GDPR requirements.

Why AI Systems Create Compliance Gaps Legacy Tools Cannot Close

The Scale and Speed Problem

AI systems process millions of personal data points per second and make thousands of automated decisions simultaneously. Enterprise structured data is forecast to grow at 49.3% CAGR through 2028, reaching nearly 70 exabytes annually. The volume and velocity at which AI operates make manual compliance review structurally impossible. Compliance must be embedded in the system, not reviewed after the fact.

The Right-to-Erasure Paradox

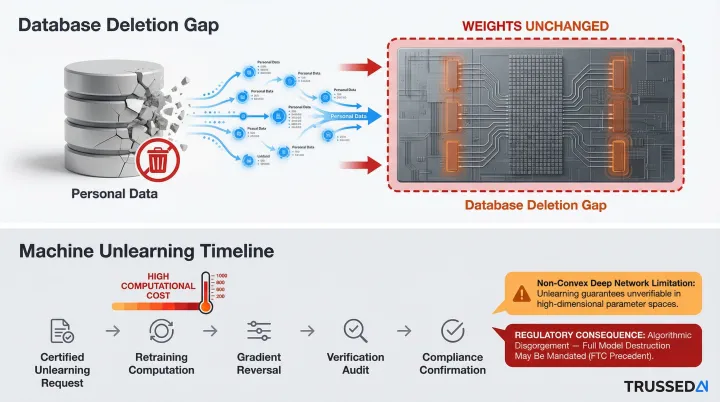

GDPR Article 17 and CCPA both grant individuals the right to have their data deleted. But when personal data has been incorporated into a model's training weights or used to fine-tune a foundation model, true erasure is technically complex or impossible.

Machine learning models act as lossy compressors of training data, implicitly encoding what they observed during training. Deleting a database record does not make a model forget. The two most common technical barriers are:

- Database deletion gaps: Removing source records leaves the model's weights unchanged, preserving the data's influence

- Costly unlearning methods: Certified machine unlearning requires high computational overhead and breaks down with non-convex losses in deep neural networks

Regulators have acted on this tension. The European Data Protection Board notes that if personal data is processed unlawfully to develop an AI model, authorities may order erasure of the specific dataset or, if impossible, the entire AI model itself. The FTC actively uses "algorithmic disgorgement", which forces companies to delete models developed using improperly obtained data.

The practical answer: apply data minimization and consent validation before data enters the training pipeline.

Agentic AI Amplifies Every Compliance Risk

Autonomous AI agents make real-time decisions, call external APIs, access multiple data stores, and take multi-step actions. Each step may process personal data in ways that are dynamic and hard to predict. Unlike traditional software with deterministic data flows, agentic AI creates compliance exposure that cannot be fully anticipated at design time and must be governed at runtime.

Six Capabilities AI Compliance Tools Must Deliver

Consent Management Automation

Consent is not a one-time event: it must be tracked, honored, and enforced at the moment of each data processing operation. AI compliance tools must check and enforce consent status in real time before any personal data is processed, not just at the point of collection.

Manual consent tracking at scale creates both lag and error. DSAR volumes surged 246% between 2021 and 2024, with organizations struggling to maintain accurate consent records across distributed AI systems. Automated consent management ensures that every AI interaction validates consent status before processing, preventing violations before they occur.
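
A check-before-processing gate of the kind described above might look like the following minimal sketch. `ConsentStore`, `ConsentDenied`, `process_with_consent`, and the purpose names are hypothetical, introduced only for illustration; a real deployment would query a consent management system rather than an in-memory dictionary.

```python
class ConsentDenied(Exception):
    """Raised when processing is attempted without current consent."""


class ConsentStore:
    """In-memory stand-in (hypothetical) for a consent management system."""

    def __init__(self):
        self._purposes = {}  # subject_id -> set of purposes currently consented to

    def grant(self, subject_id, purpose):
        self._purposes.setdefault(subject_id, set()).add(purpose)

    def withdraw(self, subject_id, purpose):
        self._purposes.get(subject_id, set()).discard(purpose)

    def allows(self, subject_id, purpose):
        return purpose in self._purposes.get(subject_id, set())


def process_with_consent(store, subject_id, purpose, handler, payload):
    """Check consent at the moment of processing, not only at collection time."""
    if not store.allows(subject_id, purpose):
        raise ConsentDenied(f"no current consent from {subject_id} for {purpose}")
    return handler(payload)


store = ConsentStore()
store.grant("user-123", "personalization")
result = process_with_consent(
    store, "user-123", "personalization", lambda p: p.upper(), "profile text"
)
print(result)
```

Because the check runs on every call, a withdrawal takes effect on the very next processing attempt instead of waiting for a periodic sync.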

Data Minimization and Retention Enforcement

Effective compliance tools must actively prevent AI systems from accessing or storing more data than necessary for the defined processing purpose, not just document the policy. This means:

- Automated enforcement of data access boundaries

- Triggered deletion when retention periods expire

- Controls that stop AI models from ingesting data they have no lawful basis to process

Policy enforcement must happen at the execution layer, not as a post-hoc review. When an AI agent attempts to access a data store or call an API, the system evaluates the request against defined policies before execution.
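
As a rough illustration of evaluating a request against defined policies before execution, the sketch below checks requested fields against a per-purpose allowlist and tests whether a record has outlived its retention period. The `POLICIES` table, the purpose name, the field names, and the 365-day retention period are all assumptions made up for the example.

```python
from datetime import date, timedelta

# Hypothetical policy table: which fields each purpose may read, and for how long.
POLICIES = {
    "claims_triage": {
        "allowed_fields": {"claim_id", "incident_date", "claim_amount"},
        "retention_days": 365,
    },
}


def evaluate_access(purpose, requested_fields):
    """Return (allowed, excess_fields); unknown purposes are denied by default."""
    policy = POLICIES.get(purpose)
    if policy is None:
        return False, set(requested_fields)
    excess = set(requested_fields) - policy["allowed_fields"]
    return (not excess), excess


def is_past_retention(purpose, collected_on, today):
    """True when the record is older than the purpose's retention period."""
    limit = timedelta(days=POLICIES[purpose]["retention_days"])
    return today > collected_on + limit


allowed, excess = evaluate_access("claims_triage", ["claim_id", "home_address"])
print(allowed, excess)
print(is_past_retention("claims_triage", date(2024, 1, 1), date(2025, 6, 1)))
```

Returning the excess fields, not just a yes/no answer, gives the caller something concrete to log or to strip from the request before retrying.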

Anonymization and De-identification

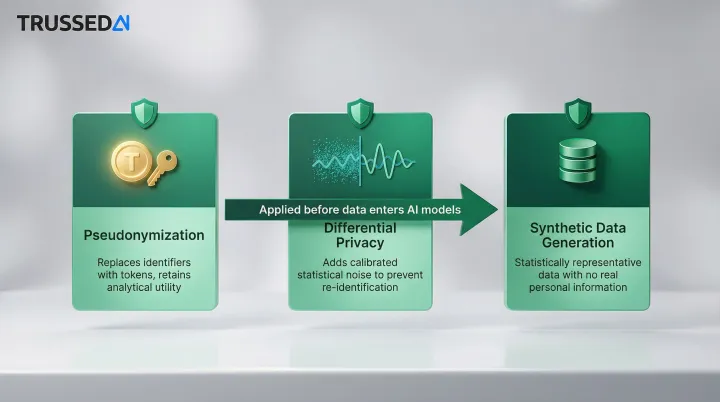

Three techniques matter most for AI compliance:

- Pseudonymization: replaces identifiers with tokens while retaining analytical utility

- Differential privacy: adds calibrated statistical noise to outputs to prevent re-identification

- Synthetic data generation: produces statistically representative data without real personal information

Tools must apply these before personal data enters AI models, particularly training pipelines, to reduce both GDPR and CCPA exposure. This is especially critical given the right-to-erasure challenges once data influences model weights.
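
Two of these techniques are compact enough to sketch. The example below shows keyed pseudonymization with HMAC-SHA256 and a Laplace mechanism for a counting query. It is an illustration under stated assumptions, not a production design: the key, the identifier, and the epsilon value are made up, key management is out of scope, and floating-point Laplace sampling has known weaknesses that hardened differential privacy libraries address.

```python
import hashlib
import hmac
import random


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed token; the same input and key give the same token."""
    return hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponentials with mean `scale`."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def dp_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Noisy count; a counting query has sensitivity 1, so the noise scale is 1/epsilon."""
    return true_count + laplace_noise(1.0 / epsilon, rng)


key = b"example-key-store-in-a-secrets-manager"  # hypothetical key for illustration
token = pseudonymize("jane.doe@example.com", key)
print(token[:16])

rng = random.Random(7)  # seeded only so the sketch is repeatable
print(dp_count(true_count=1000, epsilon=0.5, rng=rng))
```

A smaller epsilon means a larger noise scale, so stronger privacy comes at the cost of less accurate outputs. Note also that pseudonymized data generally remains personal data under GDPR, because anyone holding the key can re-link tokens to individuals.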

Data minimization and anonymization reduce exposure, but they don't eliminate the obligation to respond when individuals exercise their rights.

User Rights Fulfillment

GDPR and CCPA both require organizations to respond to data subject requests (access, deletion, portability, correction), typically within 30–45 days. For AI systems, this means tools must locate personal data across:

- Structured and unstructured data stores

- AI-generated outputs

In some cases, tools must also flag where data has influenced model behavior.

Manual processing costs an average of $1,524 per DSAR, with typical processing taking 3–4 weeks per request. Automated DSAR tools can reduce processing time to 5–7 days and cut staff hours by 80%, numbers that shift the economics at any scale.
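
Deadline tracking is one of the simpler pieces to automate. The sketch below computes a response due date from the 30–45 day windows mentioned above. Treating the GDPR window as a flat 30 calendar days is a simplifying assumption (the regulation speaks of one month), the sketch ignores any permitted extensions, and the function and dictionary names are hypothetical.

```python
from datetime import date, timedelta

# Assumed response windows in calendar days, simplified for illustration.
RESPONSE_WINDOW_DAYS = {"GDPR": 30, "CCPA": 45}


def dsar_due_date(received_on: date, regime: str) -> date:
    """Date by which a response is due under the assumed window."""
    return received_on + timedelta(days=RESPONSE_WINDOW_DAYS[regime])


def days_remaining(received_on: date, regime: str, today: date) -> int:
    """Days left before the due date; negative when the request is overdue."""
    return (dsar_due_date(received_on, regime) - today).days


received = date(2026, 1, 1)
print(dsar_due_date(received, "GDPR"), dsar_due_date(received, "CCPA"))
print(days_remaining(received, "CCPA", today=date(2026, 2, 1)))
```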

Automated Audit Trail and Evidence Generation

Regulators are moving from complaint-driven enforcement to proactive audits. The CPPA's 2026 expanded audit authority requires businesses to conduct annual cybersecurity audits and submit certifications covering 18 specific components, including multi-factor authentication, encryption, zero trust architecture, and audit-log management.

Organizations must produce evidence of compliant behavior at any time, not just after an incident. AI compliance tools should generate complete, tamper-proof logs of every data access, model invocation, processing decision, and policy check. These logs should be produced continuously, as a byproduct of governed operations rather than a separate documentation exercise.

Trussed AI's control plane takes this approach: audit trails are maintained automatically with every governed AI interaction, so compliance evidence is always current without manual overhead.
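
One generic way to make a log resistant to silent edits is hash chaining, where each entry's hash covers the previous entry's hash. The sketch below illustrates that general technique only; it is not a description of any vendor's implementation, the `AuditLog` class and event fields are hypothetical, and strictly speaking it makes tampering detectable rather than impossible.

```python
import hashlib
import json


class AuditLog:
    """Append-only log in which every entry's hash covers the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(prev_hash, event):
        body = json.dumps(event, sort_keys=True)  # stable serialization of the event
        return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()

    def append(self, event):
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry = {
            "event": event,
            "prev_hash": prev_hash,
            "hash": self._digest(prev_hash, event),
        }
        self.entries.append(entry)
        return entry

    def verify(self):
        """Recompute the chain; any edited, removed, or reordered entry breaks it."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            expected = self._digest(prev_hash, entry["event"])
            if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append({"actor": "agent-7", "action": "model_invocation", "policy_check": "passed"})
log.append({"actor": "agent-7", "action": "data_access", "policy_check": "passed"})
print(log.verify())
```

In practice the latest hash would also be anchored somewhere the logging system cannot rewrite, since a chain held in a single place can be recomputed wholesale by whoever controls it.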

Real-Time Policy Enforcement

The defining capability that separates modern AI compliance infrastructure from legacy governance is where enforcement happens: at runtime, not in documentation.

Policies must be enforced at the point of execution (when the model is called, when the agent takes an action, when data is accessed) across every AI application, agent, and workflow. Organizations that rely solely on design-time policies and periodic audits will have compliance gaps that neither their legal team nor their auditors will discover until it's too late.
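
Enforcement at the point of execution can be pictured as a wrapper that every model call must pass through. The following is a minimal sketch of that pattern using a Python decorator; `enforce`, `PolicyViolation`, the example rule, and the request fields are hypothetical, and `call_model` is a placeholder rather than a real model client.

```python
import functools


class PolicyViolation(Exception):
    """Raised when a request is blocked before it reaches the model."""


def enforce(policy_check):
    """Wrap a callable so the policy check runs on every invocation."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(request):
            allowed, reason = policy_check(request)
            if not allowed:
                raise PolicyViolation(reason)
            return func(request)
        return wrapper
    return decorator


def no_unmasked_personal_data(request):
    """Hypothetical rule: prompts flagged as containing PII must be masked first."""
    if request.get("contains_pii") and not request.get("pii_masked"):
        return False, "unmasked personal data in prompt"
    return True, ""


@enforce(no_unmasked_personal_data)
def call_model(request):
    # Placeholder for a real model call.
    return "model response to: " + request["prompt"]


print(call_model({"prompt": "summarize the claim", "contains_pii": False}))
```

Because the check sits in the call path, a blocked request never reaches the model, which is the practical difference between runtime enforcement and a violation found in a later audit.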

What Should Your AI Data Privacy Policy Include

Core Policy Elements

A complete AI data privacy policy starts with knowing what systems you have and how they handle personal data:

- Current inventory of all AI systems and the categories of personal data each accesses

- Documented lawful basis for each AI processing activity

- Disclosure language for automated decision-making, including what decisions AI makes, how to request human review, and how to appeal

- Defined data retention schedules with associated deletion triggers

Research shows that 80% of employees use unapproved AI tools, creating a "shadow AI" crisis that adds $670,000 to average breach costs. Without comprehensive inventory, compliance governance cannot be enforced on systems that are invisible.

Vendor and Employee Governance

The policy must address employee use of third-party AI tools, such as public-facing LLMs like ChatGPT or Copilot, that may inadvertently ingest personal data. This includes:

- Acceptable use policies for external AI services

- Data processing agreement requirements for all AI subprocessors

- What data vendors can use for model training and whether opt-outs are available

- Access controls and approval workflows for new AI tool adoption

Review Cadence Requirement

Those vendor and employee controls only hold if the policy stays current. AI-specific privacy policies need more frequent review than traditional data governance documents: at least quarterly.

Two forces drive that cadence: regulatory timelines (CCPA ADMT rules take effect in 2027, EU AI Act enforcement is phased) and rapid AI capability evolution inside the enterprise. A policy written six months ago may not reflect the tools your teams are using today.

Building Your AI Compliance Action Plan

Start with AI Inventory and Data Mapping

Compliance governance cannot be enforced on systems that are invisible. The first step is identifying every AI application, agent, and workflow in production or development, mapping the personal data each touches, and documenting the regulatory jurisdictions of affected data subjects.
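
An inventory entry can start as a small structured record. The sketch below shows one possible shape, with a helper that maps the jurisdictions of affected data subjects to the regimes discussed in this guide. The `AISystem` fields, the example systems, and the two-entry jurisdiction mapping are illustrative assumptions, not a complete legal mapping.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AISystem:
    name: str
    stage: str                   # "production" or "development"
    data_categories: frozenset   # categories of personal data the system touches
    jurisdictions: frozenset     # where affected data subjects are located


# Simplified assumption: only the two regimes covered in this guide.
REGIME_BY_JURISDICTION = {"EU": "GDPR", "California": "CCPA"}


def applicable_regimes(system):
    """Regimes implied by the jurisdictions recorded for a system."""
    return {
        REGIME_BY_JURISDICTION[j]
        for j in system.jurisdictions
        if j in REGIME_BY_JURISDICTION
    }


inventory = [
    AISystem("resume-screener", "production",
             frozenset({"employment history"}), frozenset({"EU", "California"})),
    AISystem("support-chatbot", "development",
             frozenset({"contact details"}), frozenset({"California"})),
]

for system in inventory:
    print(system.name, sorted(applicable_regimes(system)))
```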

Gartner predicts that by 2030, more than 40% of enterprises will experience security or compliance incidents linked to unauthorized shadow AI. Organizations with high levels of shadow AI experience average breach costs of $4.63 million, a $670,000 premium over those with low or no shadow AI.

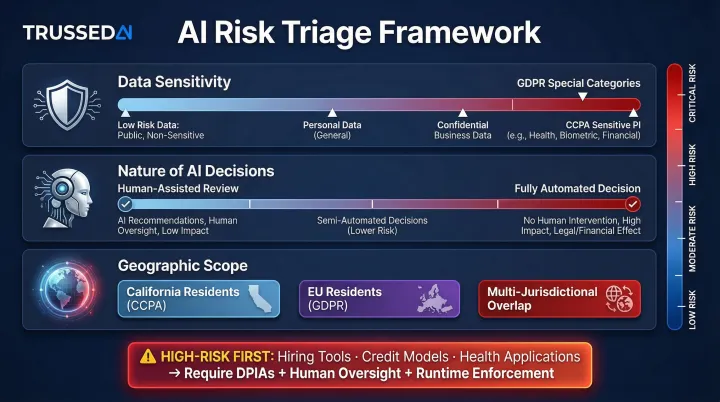

Prioritize by Risk Exposure

Not all AI systems carry equal compliance weight. Triage by three factors:

- Sensitivity of data processed: special categories under GDPR, sensitive PI under CCPA

- Nature of AI decisions: fully automated vs. human-assisted

- Geographic scope of affected users: EU residents, California residents, multi-jurisdictional

High-risk systems (hiring tools, credit models, health applications) should be the first to receive DPIAs, human oversight controls, and runtime policy enforcement.
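
The three triage factors can be turned into a rough ordering. The sketch below scores each system and sorts the highest-risk ones first. The weights are arbitrary assumptions chosen only to demonstrate the idea, and the class, function, and system names are hypothetical; a real prioritization would be calibrated with legal and risk teams.

```python
from dataclasses import dataclass


@dataclass
class SystemProfile:
    name: str
    sensitive_data: bool    # special categories (GDPR) or sensitive PI (CCPA)
    fully_automated: bool   # no human in the decision loop
    jurisdictions: int      # number of regulated jurisdictions affected


def risk_score(profile):
    """Illustrative weighting: data sensitivity counts most, then automation, then scope."""
    return (
        3 * int(profile.sensitive_data)
        + 2 * int(profile.fully_automated)
        + min(profile.jurisdictions, 3)
    )


def triage(profiles):
    """Highest-scoring systems first."""
    return sorted(profiles, key=risk_score, reverse=True)


profiles = [
    SystemProfile("support-chatbot", False, False, 1),
    SystemProfile("hiring-screener", True, True, 2),
    SystemProfile("credit-model", True, False, 1),
]

for p in triage(profiles):
    print(p.name, risk_score(p))
```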

Deploy Runtime Governance Infrastructure

Once high-risk systems are identified, static policies and periodic audits won't keep pace with production AI environments. Organizations need a governance layer that enforces compliance at the point of execution, across every model, agent, and workflow, rather than reviewing violations after they occur.

Trussed AI's enterprise AI control plane addresses this directly. It converts compliance policies into real-time enforcement, generating audit-ready evidence as a natural output of every governed interaction. Key operational benchmarks include:

- Under 20ms enforcement latency: governance runs without slowing production systems

- Under 1% compliance violation rate: across governed models, agents, and workflows

- Enterprise-grade governance: meeting audit requirements for regulated industries

- Complete audit trails: maintained automatically in real time, not reconstructed after the fact

The platform is designed for insurance, healthcare, and financial services organizations that need continuous compliance monitoring alongside operational AI systems.

Frequently Asked Questions

What are the GDPR AI requirements?

GDPR requires AI systems to have a lawful basis for processing (such as consent), follow data minimization and purpose limitation principles, conduct mandatory DPIAs for high-risk AI, and respect Article 22 rights, which include the right to request human intervention for automated decisions with significant effects.

What is CCPA for AI?

CCPA grants California residents rights over personal data used by AI systems, including the right to know, delete, and opt out of data sales. New 2026–2027 rules add mandatory risk assessments and transparency requirements for AI-driven automated decision-making, with pre-use notices and opt-out mechanisms required for significant decisions.

What is the difference between GDPR and CCPA compliance?

GDPR is global and opt-in based with higher maximum fines (€20M or 4% revenue); CCPA is California-specific and opt-out based ($7,988 per intentional violation). GDPR prohibits certain automated decisions outright with narrow exceptions, while CCPA focuses on transparency and the right to appeal.

What should an AI policy include regarding data privacy?

A complete AI data privacy policy should cover:

- Inventory of AI systems and their data usage

- Documented lawful basis for each processing activity

- Automated decision-making disclosures with human review and appeal processes

- Retention and deletion schedules

- Governance of employee and vendor AI tools, including data processing agreements

What is a compliance standard for AI?

There is no single universal AI compliance standard. Organizations must satisfy applicable data privacy laws (GDPR, CCPA), sector-specific regulations (HIPAA, GLBA), and emerging frameworks like the EU AI Act, supported by enterprise security governance controls that demonstrate those obligations are being met.

How is AI HIPAA compliant?

AI systems handling protected health information (PHI) must meet HIPAA's Security and Privacy Rules, including access controls, audit logging, minimum necessary data use, and Business Associate Agreements with any AI vendors, even those that only process encrypted ePHI.