Introduction

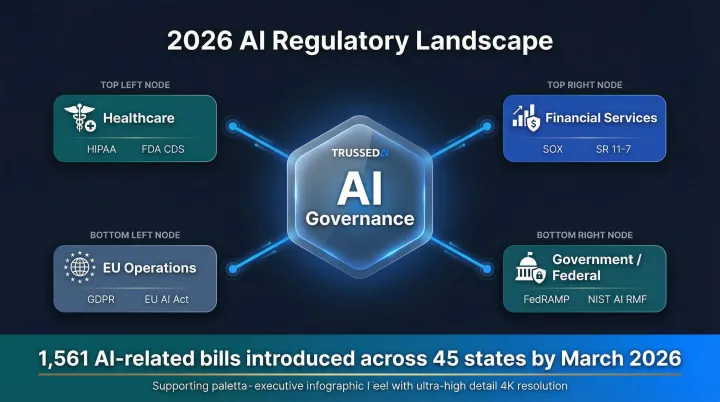

Regulated industries are deploying AI automation at scale, but governance infrastructure hasn't kept pace. By March 2026, state lawmakers had introduced 1,561 AI-related bills across 45 states, a 145.8% increase over the full-year 2024 total, while organizations scramble to deploy AI faster than they can govern it. The result is a "governance gap": a dangerous disconnect between AI innovation and regulatory control that exposes organizations to compliance violations, operational failures, and escalating costs.

For compliance leaders in healthcare, financial services, and other regulated sectors, the reality is this: AI workflow automation handles the tedious, evidence-intensive parts of compliance work effectively, but only when the infrastructure beneath it is built correctly. Deploy AI without runtime governance, and you accelerate risk faster than you can detect it.

This guide covers:

- The regulatory landscape shaping AI deployment in 2026

- Where automation genuinely adds compliance value

- Non-negotiable features to require in any governance tool

- How to implement a program that satisfies even the most demanding audit teams

TLDR

- AI workflow automation reduces compliance burden only when built on proper governance infrastructure: automated evidence collection and runtime enforcement must be designed in, not bolted on

- Organizations face overlapping frameworks (HIPAA, GDPR, SOX, FedRAMP) with distinct requirements for auditability, access controls, and data handling

- Non-negotiable tool requirements include third-party security attestation, role-based access controls, audit logging, and real-time policy enforcement

- Start with high-frequency, low-risk workflows; keep humans in the loop for high-stakes decisions; govern automation assets like production code

- Building on compliant infrastructure now means being audit-ready when the next regulation lands

The Compliance Gauntlet: Regulatory Frameworks Shaping AI Deployment

The regulatory environment for AI automation is a layered stack that shifts by industry and geography. Depending on your sector, relevant frameworks may include:

- Healthcare: HIPAA privacy rules and FDA Clinical Decision Support guidance

- Financial services: SOX controls and SR 11-7 model risk management requirements

- EU data handlers: GDPR data minimization and explainability mandates

- Government contractors: FedRAMP security baselines

- Most regulated organizations: Security attestation expectations from partners and auditors

The pain rarely comes from the rules themselves; it comes from the evidence. Each framework requires documented proof that controls exist and are operating effectively. The primary compliance bottleneck is manual evidence collection across dozens of systems, not policy writing. Engineers often spend 40-60 hours per framework hunting through logs, screenshots, and configuration files to prove controls worked as intended.

That evidence burden is about to get heavier, because the regulatory environment is accelerating rather than stabilizing. As of March 2026, lawmakers in 45 states had introduced 1,561 AI-related bills, already surpassing the full-year 2024 total of 635. The EU AI Act requires that high-risk AI systems technically allow for the automatic recording of events (logs) over the lifetime of the system, and it mandates human oversight during operation. At the federal level, the NIST AI Risk Management Framework remains the main reference for developing safe, secure, and trustworthy AI systems, even though Executive Order 14110, which had directed agencies to build guidelines around it, was rescinded in January 2025.

Regulated organizations must build compliance infrastructure that can adapt to new frameworks without full-system rebuilds. That means designing governance into the AI deployment stack from day one, so that each new regulation requires a policy update rather than a three-month remediation project.

How AI Workflow Automation Reshapes Compliance Work

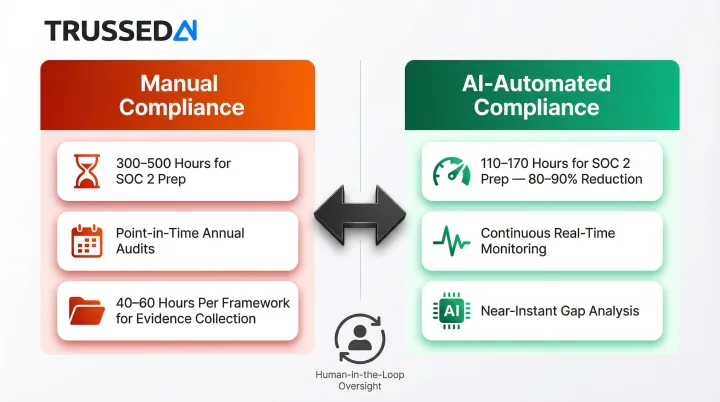

Automated Evidence Collection

The most immediate ROI from AI automation in compliance is eliminating "screenshot hunting." AI-powered workflows automatically pull logs, configurations, access records, and HR data from integrated systems in consistent, timestamped formats, ready for auditors on demand. AI can cut manual evidence collection time by 80-90%. Preparing manually for a first compliance audit often takes 300-500 hours; AI-assisted preparation reduces this to 110-170 hours.

The reliability improvement matters as much as the speed. Manual collection introduces human error, inconsistent formatting, and gaps. Automated collection generates the same evidence structure every time, reducing audit findings caused by documentation quality rather than actual control failures.
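A minimal sketch of what "the same evidence structure every time" can look like in practice, assuming each integrated system can already be queried for raw records. The `EvidenceRecord` type, the `collect_evidence` function, the control ID `AC-2`, and the source name are hypothetical illustrations, not any particular platform's API. Hashing a canonical form of the payload lets an auditor later confirm that the evidence was not altered after collection.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class EvidenceRecord:
    control_id: str     # control the evidence supports (hypothetical ID scheme)
    source_system: str  # system the raw data came from
    collected_at: str   # UTC timestamp, ISO 8601
    payload: dict       # raw data exactly as returned by the source
    sha256: str         # digest of the canonical payload, for later integrity checks


def collect_evidence(control_id: str, source_system: str, payload: dict,
                     now: Optional[datetime] = None) -> EvidenceRecord:
    """Wrap raw data from any source in one consistent, timestamped structure."""
    now = now or datetime.now(timezone.utc)
    # Canonical JSON (sorted keys, compact separators) so identical data always hashes identically.
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return EvidenceRecord(control_id, source_system, now.isoformat(), payload, digest)


if __name__ == "__main__":
    record = collect_evidence("AC-2", "identity-provider", {"user": "jdoe", "mfa_enabled": True})
    print(json.dumps(asdict(record), indent=2))
```

Because the key order of the source data does not affect the digest, two collections of the same underlying facts produce matching hashes, which is the consistency property auditors care about.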

Continuous Monitoring vs. Point-in-Time Audits

Legacy compliance operates on annual or quarterly snapshots that miss drift between cycles. AI-driven platforms analyze system logs and unstructured data in real time to detect compliance drift before it becomes an audit finding. Organizations move from scrambling before audits to maintaining continuous readiness.

Regulatory and operational governance relies on point-in-time audits, but in an era where AI agents can iterate, adapt, or drift across complex workflows in real time, an audit conducted last Tuesday is an obsolete safety measure by Wednesday morning. Continuous monitoring closes this gap by evaluating controls as they operate, not months after the fact.
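As a simplified illustration of evaluating controls as they operate, the sketch below compares a live configuration snapshot against an approved baseline and reports every deviation. The setting names and the `detect_drift` function are hypothetical; a real platform would pull snapshots from the systems themselves on a schedule or on change events rather than from hand-written dictionaries.

```python
from typing import Any, Dict, List


def detect_drift(baseline: Dict[str, Any], current: Dict[str, Any]) -> List[str]:
    """Compare a live configuration snapshot with the approved baseline and describe every deviation."""
    findings = []
    for key, expected in baseline.items():
        if key not in current:
            findings.append(f"{key}: missing (approved value {expected!r})")
        elif current[key] != expected:
            findings.append(f"{key}: {current[key]!r} differs from approved {expected!r}")
    # Settings that exist in production but were never approved count as drift too.
    for key in sorted(current.keys() - baseline.keys()):
        findings.append(f"{key}: unapproved setting {current[key]!r}")
    return findings


approved = {"mfa_required": True, "log_retention_days": 365, "public_access": False}
live = {"mfa_required": True, "log_retention_days": 90, "public_access": False, "debug_mode": True}

for finding in detect_drift(approved, live):
    print(finding)
# log_retention_days: 90 differs from approved 365
# debug_mode: unapproved setting True
```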

Intelligent Gap Analysis

Generative AI compares new or updated regulations against an organization's existing control set, surfacing gaps before they become findings. What previously required weeks of manual cross-referencing becomes a near real-time feedback loop. In one documented case study, Mathematica reduced turnaround time for CMS rule comparisons from 3-4 hours to under 30 minutes (a 94% cost reduction) for an activity performed up to 10 times per year.

This capability is especially valuable as regulations evolve. When a new state AI law passes or GDPR guidance updates, AI can immediately flag which existing controls need modification and which new controls must be implemented.
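The comparison itself is where generative AI helps, but its output has to land in a structure that makes gaps visible. A minimal sketch of that bookkeeping step, assuming requirements have already been extracted (by AI or by hand) and mapped to the control IDs that satisfy them; it does not perform the AI comparison itself, and all requirement and control identifiers are hypothetical.

```python
from typing import Dict, Set


def find_gaps(requirements: Dict[str, Set[str]], implemented: Set[str]) -> Dict[str, Set[str]]:
    """For each requirement, list the mapped controls that are not yet implemented.

    requirements: requirement ID -> control IDs that together satisfy it
    implemented:  control IDs currently in place
    """
    gaps = {}
    for req_id, needed in requirements.items():
        missing = needed - implemented
        if missing:
            gaps[req_id] = missing
    return gaps


# Hypothetical requirement and control identifiers.
requirements = {
    "state-ai-law:disclosure": {"CTRL-NOTICE-01", "CTRL-LOG-01"},
    "gdpr:art30-records": {"CTRL-ROPA-01"},
}
implemented = {"CTRL-LOG-01", "CTRL-ROPA-01"}

print(find_gaps(requirements, implemented))
# {'state-ai-law:disclosure': {'CTRL-NOTICE-01'}}
```

When a new regulation arrives, only the `requirements` mapping changes; the gap report updates without touching the controls themselves.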

Centralized Workflow Governance

AI platforms unify compliance tasks (evidence collection, policy update notifications, control testing, approval routing) into governed, repeatable workflows. This reduces human error, ensures consistency across audit cycles, and eliminates shadow automation (ad hoc scripts, personal spreadsheets) that creates compliance blind spots.

Shadow AI is a significant exposure. Nearly half of all generative AI users (47%) still use personal AI applications rather than organization-managed tools. In healthcare specifically, 40% of professionals have encountered unauthorized AI tools in the workplace, and nearly 20% admit to using them.

Platforms like Trussed AI address this by enforcing governance at runtime across AI apps, agents, and developer tools, so shadow automation can't quietly accumulate outside the audit trail. Centralized governance prevents the fragmentation that creates compliance blind spots.

Human-in-the-Loop Design

Centralized governance only works if authority stays with the right people. AI handles the data-intensive, repetitive work so human experts can focus on risk strategy, control design, and interpreting complex regulations. High-stakes decisions (policy drafting, vendor due diligence, risk assessments) must retain human ownership, with AI surfacing information and recommendations rather than rendering final determinations.

The EU AI Act explicitly mandates this approach for high-risk systems, requiring that they be designed and developed in such a way that they can be effectively overseen by natural persons during the period in which they are in use. Effective AI compliance automation preserves human authority while removing repetitive manual work.
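One way to encode "AI recommends, humans decide" is a routing rule: task types designated as high-stakes never auto-execute, and the AI's output is queued as a recommendation for a human reviewer. A minimal sketch; the task categories come from the examples above, while the `confidence` field, the 0.9 threshold, and the `route` function are hypothetical choices for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Disposition(Enum):
    AUTO_EXECUTE = "auto_execute"
    HUMAN_REVIEW = "human_review"


# Task types treated as high-stakes (taken from the examples in the text; each organization defines its own).
HIGH_STAKES_TASKS = {"policy_drafting", "vendor_due_diligence", "risk_assessment"}


@dataclass
class AIOutput:
    task_type: str
    recommendation: str
    confidence: float  # hypothetical model-reported confidence in [0, 1]


def route(output: AIOutput, min_confidence: float = 0.9) -> Disposition:
    """Decide whether an AI output may execute or must wait for a human decision."""
    if output.task_type in HIGH_STAKES_TASKS:
        # High-stakes work is always advisory: a person makes the final call.
        return Disposition.HUMAN_REVIEW
    if output.confidence < min_confidence:
        # Even routine tasks escalate when the model is unsure.
        return Disposition.HUMAN_REVIEW
    return Disposition.AUTO_EXECUTE
```

Keeping the high-stakes list as data rather than scattered `if` statements makes it reviewable by the governance team and easy to show an auditor.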

Non-Negotiable Compliance Features for AI Tools in Regulated Environments

Third-Party Security Attestation

For SOC 2 reports, understand the difference: a Type I report shows controls were in place on a specific date, while a Type II report covers controls over a period, typically 6-12 months, demonstrating sustained, consistent operation. Type II is the minimum standard for regulated environments because it proves controls work over time, not just during an audit snapshot.

Request the actual attestation report from vendors, not just a compliance page or marketing summary. The report details which Trust Services Criteria were evaluated (security, availability, processing integrity, confidentiality, privacy) and what the auditor found.

Role-Based Access Controls and SSO

RBAC is a standard way to meet HIPAA and GDPR access-control requirements because it enforces the principle of least privilege, ensuring each user or AI agent accesses only the data its specific function requires. Pair RBAC with Single Sign-On (SSO) to keep identity management centralized and to streamline access revocation when roles change.

One critical governance feature often overlooked: separation between who builds or modifies workflows and who executes them. This prevents unauthorized changes to approved compliance workflows and creates an audit trail showing who changed what and when.
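A minimal sketch of deny-by-default permission checks combined with builder/executor separation, assuming roles are assigned by the identity provider behind SSO. The role names, permission strings, and both functions are hypothetical; they are not drawn from any specific product.

```python
from typing import Dict, Set

# Hypothetical roles and permissions; in practice roles come from the identity provider behind SSO.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "workflow_builder": {"workflow:edit"},
    "workflow_operator": {"workflow:run", "evidence:read"},
    "auditor": {"evidence:read", "audit_log:read"},
}


def authorize(user_roles: Set[str], permission: str) -> bool:
    """Deny by default: allow only if one of the user's roles explicitly grants the permission."""
    return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in user_roles)


def can_run_workflow(user: str, user_roles: Set[str], last_modified_by: str) -> bool:
    """Separation of duties: whoever last changed a workflow may not also execute it."""
    return authorize(user_roles, "workflow:run") and user != last_modified_by
```

Note that the separation check holds even for a user who legitimately holds both roles: having permission to run workflows is not enough if that same user made the last change.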

Comprehensive, Tamper-Evident Audit Logging

Session-level logs showing that a tool was used are insufficient for HIPAA, SOX, and FedRAMP audits. Operation-level logs are required, capturing:

- Agent or user identity

- Which data was accessed

- What action was performed

- Who authorized the workflow

- Timestamps for every event

These logs must feed SIEM systems and satisfy multiple frameworks simultaneously. HIPAA requires hardware, software, and/or procedural mechanisms that record and examine activity in information systems containing ePHI. GDPR Article 30 mandates that controllers maintain records of processing activities under their responsibility. FedRAMP Rev 5 requires strict adherence to Audit and Accountability (AU) controls, including AU-11, which requires organizations to retain audit records for a specified time period.
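Tamper evidence is commonly achieved by hash-chaining entries: each record stores the hash of the previous one, so editing, reordering, or deleting an earlier entry breaks verification for everything after it. The sketch below captures the five fields listed above; the `AuditLog` class and field names are illustrative assumptions, and a production system would also ship entries to a SIEM and to write-once storage rather than keep them in memory.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Dict, List


class AuditLog:
    """Append-only, hash-chained log of operation-level events (in-memory sketch)."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: List[Dict[str, str]] = []

    @staticmethod
    def _hash(entry: Dict[str, str]) -> str:
        # Hash the canonical JSON of the entry, excluding its own hash field.
        body = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

    def append(self, actor: str, data_accessed: str, action: str, authorized_by: str) -> Dict[str, str]:
        entry = {
            "actor": actor,                  # agent or user identity
            "data_accessed": data_accessed,  # which data was touched
            "action": action,                # what was done
            "authorized_by": authorized_by,  # who authorized the workflow
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "prev_hash": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        entry["hash"] = self._hash(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Detect edits, reordering, or removal of earlier entries.

        Truncating the newest entries is only detectable if the latest hash is also stored externally.
        """
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._hash(entry):
                return False
            prev = entry["hash"]
        return True
```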

Private Deployment and Data Sovereignty Options

SaaS-only platforms may be disqualifying for organizations with strict data residency, sovereignty, or security requirements. Healthcare organizations processing PHI and financial institutions with sensitive customer data cannot route that data through shared public inference endpoints.

On-premises or private cloud deployment (including private model hosting through options such as Amazon Bedrock, Azure AI, or self-hosted models) is a technical requirement for many regulated environments, not a preference. Verify that vendors support deployment in your cloud or data center with full control over where data resides and how it is processed.

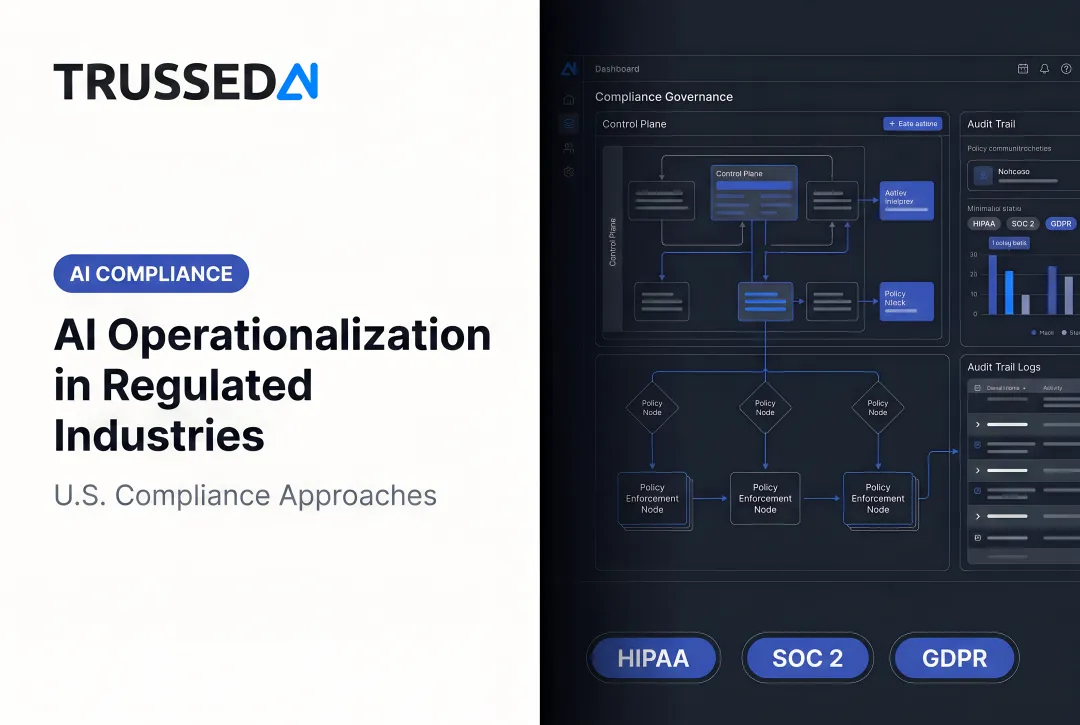

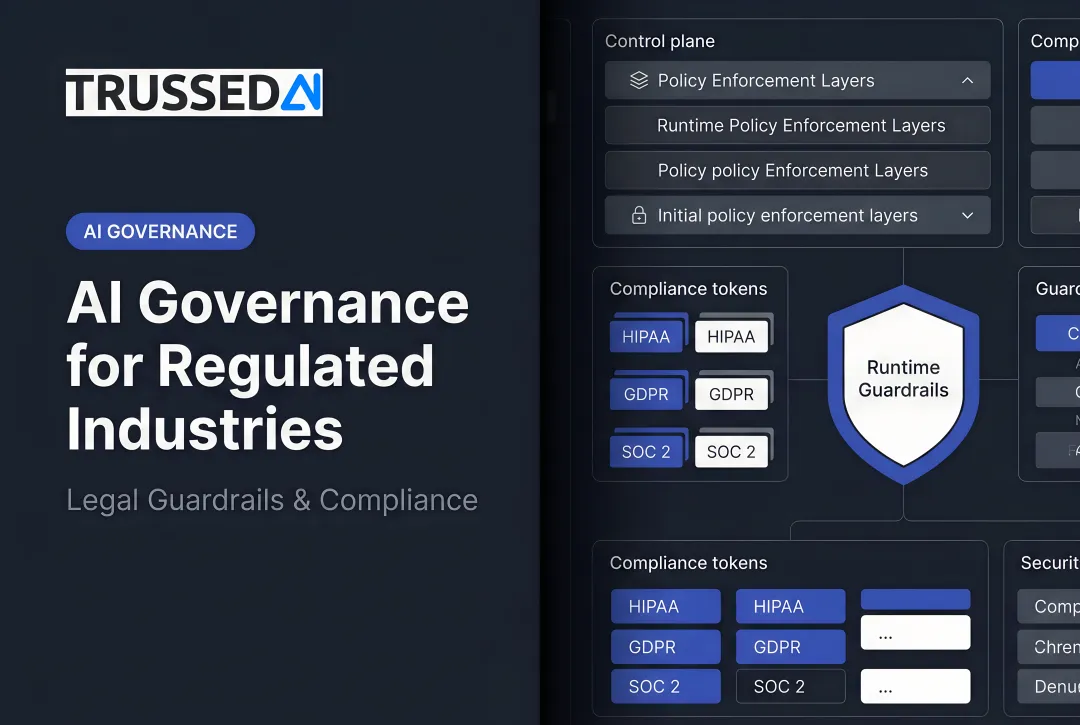

Real-Time Policy Enforcement at Runtime

This is the feature most commonly missing from general-purpose automation tools but essential for regulated environments. The distinction matters:

Static policies: Written in documents, checked manually, enforced through human review processes

Runtime enforcement: Policies evaluated automatically at the moment of every AI interaction, before actions execute

Platforms offering a drop-in control plane architecture enforce governance at runtime across AI apps, agents, and developer tools without requiring code changes. Organizations using this approach achieve measurable results:

- Compliance violation rates below 1% in production environments

- Complete audit trails generated automatically as a byproduct of every governed interaction

Trussed AI, for example, delivers this as a drop-in proxy that enforces runtime governance across AI apps, agents, and developer tools.
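The difference between static policies and runtime enforcement is easiest to see in code: instead of reviewing logs afterward, every request passes through a policy check before it reaches the model or tool. A minimal sketch of that pattern, assuming policies can be written as functions over a request; the `Request` fields, the two example policies, the SSN regex, and the `private-endpoint` name are illustrative assumptions, and this is not how Trussed AI or any other product implements it.

```python
import re
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Request:
    destination: str  # model endpoint the prompt is bound for
    prompt: str


# A policy inspects a request and returns a violation message, or None if it is allowed.
Policy = Callable[[Request], Optional[str]]

SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
APPROVED_DESTINATIONS = {"private-endpoint"}  # hypothetical privately hosted model


def no_ssn_in_prompt(req: Request) -> Optional[str]:
    return "prompt contains a US SSN pattern" if SSN_PATTERN.search(req.prompt) else None


def approved_destination_only(req: Request) -> Optional[str]:
    if req.destination not in APPROVED_DESTINATIONS:
        return f"destination {req.destination!r} is not approved"
    return None


POLICIES: List[Policy] = [no_ssn_in_prompt, approved_destination_only]


def enforce(req: Request, forward: Callable[[Request], str]) -> str:
    """Evaluate every policy before the call executes; block on any violation."""
    violations = [msg for msg in (policy(req) for policy in POLICIES) if msg is not None]
    if violations:
        raise PermissionError("; ".join(violations))
    # Only compliant requests ever reach the model or tool.
    return forward(req)
```

In a proxy deployment, `forward` would be the call to the real model endpoint, and each allow or block decision would also be written to the audit log, which is how audit trails become a byproduct of enforcement.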

Building Your Compliant AI Automation Program: A Practical Framework

Start Small with High-Frequency, Low-Risk Workflows

Begin with a compliance control that has high repetition, such as evidence collection for a specific control framework or access log review for a HIPAA audit, rather than a complex, sensitive workflow. This "prove, then expand" method validates integrations, surfaces gaps, and builds internal confidence before you scale to higher-stakes processes.

Example starting points:

- Automated collection of user access logs for quarterly reviews

- Scheduled extraction of configuration settings for change management audits

- Automated vendor security questionnaire tracking and evidence requests
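To make the first starting point concrete: a quarterly access review largely reduces to comparing who has access against who should have it. A minimal sketch, assuming access records have already been exported from the identity provider; the `AccessRecord` fields, the 90-day inactivity threshold, and the `review_access` function are hypothetical choices, not requirements from any framework.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List


@dataclass
class AccessRecord:
    user: str
    system: str
    last_login: date
    employment_active: bool


def review_access(records: List[AccessRecord], as_of: date, max_idle_days: int = 90) -> List[str]:
    """Flag access a reviewer should revoke or justify; the findings list becomes review evidence."""
    findings = []
    for r in records:
        if not r.employment_active:
            findings.append(f"{r.user} on {r.system}: access held after termination")
        elif as_of - r.last_login > timedelta(days=max_idle_days):
            findings.append(f"{r.user} on {r.system}: idle {(as_of - r.last_login).days} days")
    return findings
```

A reviewer still decides what to revoke; the automation only guarantees that every account is checked the same way each quarter.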

Build a Cross-Functional Governance Team from Day One

AI automation in regulated industries cannot live in one department. Build a cross-functional oversight team that spans the functions most exposed to compliance risk:

- IT/Infrastructure handles technical implementation and system integration

- Legal covers regulatory interpretation and risk assessment

- Data Science/AI Engineering owns model behavior and technical controls

- Compliance manages framework requirements and audit preparation

Define clear ownership for workflow governance, change control, and escalation paths. Shadow automation (AI tools used informally without governance oversight) creates some of the hardest-to-detect compliance gaps. Although 94% of IT leaders feel confident in detecting AI misuse, 62% report seeing staff experiment with unsanctioned AI tools.

Governance structure answers the "who owns this" question before an auditor asks it. That same discipline applies to every workflow your team deploys.

Treat Automated Workflows as Governed Compliance Assets

Every automated workflow should be documented with its purpose, inputs, outputs, and the controls it supports. In GxP-regulated environments (pharmaceuticals, medical devices, clinical research), reproducibility is a hard requirement: workflows must be version-controlled with the same discipline as production code.

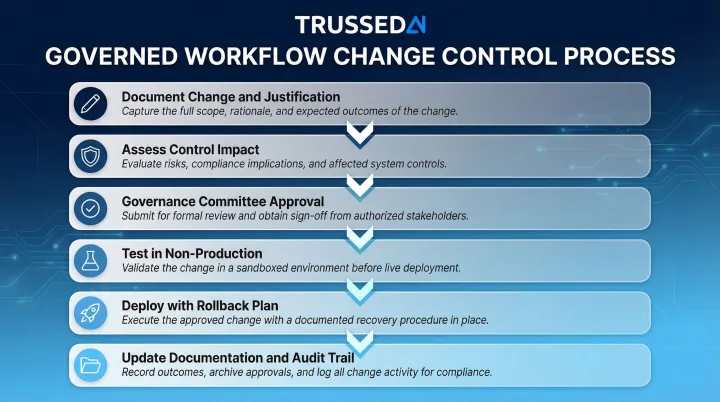

Changes must follow a defined change control process before implementation:

- Document the proposed change and business justification

- Assess impact on existing controls and compliance posture

- Obtain approval from governance committee

- Test in non-production environment

- Deploy with rollback plan

- Update documentation and audit trail
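The pre-deployment steps in this process can be enforced mechanically, so that a workflow change cannot reach production with a step skipped. A minimal sketch, assuming each step is recorded on a change request; the `ChangeRequest` fields and the `blocking_issues` function are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass
class ChangeRequest:
    workflow_id: str
    new_version: str
    justification: str = ""
    impact_assessment: str = ""
    approved_by: Optional[str] = None
    approved_at: Optional[datetime] = None
    tested_in_nonprod: bool = False
    rollback_plan: str = ""


def blocking_issues(cr: ChangeRequest) -> List[str]:
    """List every pre-deployment step still incomplete; an empty list means the change may deploy."""
    checks = [
        (bool(cr.justification), "missing business justification"),
        (bool(cr.impact_assessment), "missing impact assessment on existing controls"),
        (cr.approved_by is not None and cr.approved_at is not None, "no recorded approver and timestamp"),
        (cr.tested_in_nonprod, "not tested in a non-production environment"),
        (bool(cr.rollback_plan), "no rollback plan"),
    ]
    return [message for passed, message in checks if not passed]
```

Updating documentation and the audit trail happens after deployment, so it is deliberately left out of this pre-deployment gate.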

Vendor Assessment and Business Associate Agreements

For healthcare organizations, any AI vendor processing PHI is a business associate under HIPAA and must execute a HIPAA-compliant BAA before any PHI flows to their systems. Many commercial AI vendors will not sign BAAs, making them categorically ineligible for PHI-touching workflows regardless of other capabilities. 58% of surveyed healthcare organizations have not signed a BAA for an AI email tool, and 21% mistakenly think it's not required.

The same principle applies across regulated industries. Treat vendor compliance verification (BAAs for HIPAA, DPAs for GDPR, attestation reports for applicable frameworks) as a gate in the assessment process, not a post-deployment cleanup step.

Vendor assessment checklist:

- Third-party security attestation report (request actual document, not marketing claims)

- Willingness to sign BAA (healthcare) or DPA (GDPR)

- Private deployment options for sensitive data

- Audit logging capabilities and retention policies

- Incident response and breach notification procedures
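Treating the checklist as a gate can be as simple as refusing to onboard a vendor until every applicable item is satisfied. A minimal sketch; the `Vendor` fields and the `vendor_gate` function are hypothetical, and tying the BAA check to PHI handling and the DPA check to GDPR scope is a simplification of the checklist above. The minimum log retention is an organization-defined parameter, not a value taken from any framework.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Vendor:
    name: str
    attestation_report_received: bool  # the actual report, not a marketing summary
    will_sign_baa: bool
    will_sign_dpa: bool
    offers_private_deployment: bool
    audit_log_retention_days: int
    has_incident_response_procedure: bool


def vendor_gate(v: Vendor, handles_phi: bool, gdpr_in_scope: bool,
                sensitive_data: bool, min_retention_days: int) -> List[str]:
    """Return every unmet checklist item; onboarding proceeds only if the list is empty."""
    failures = []
    if not v.attestation_report_received:
        failures.append("no third-party attestation report on file")
    if handles_phi and not v.will_sign_baa:
        failures.append("will not sign a BAA")
    if gdpr_in_scope and not v.will_sign_dpa:
        failures.append("will not sign a DPA")
    if sensitive_data and not v.offers_private_deployment:
        failures.append("no private deployment option")
    if v.audit_log_retention_days < min_retention_days:
        failures.append(f"log retention {v.audit_log_retention_days}d below required {min_retention_days}d")
    if not v.has_incident_response_procedure:
        failures.append("no documented incident response and breach notification procedure")
    return failures
```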

Define and Monitor for Compliance Drift

Compliance drift is inevitable without active monitoring. Changes in model behavior, anomalous access patterns, and shifting regulatory requirements all create exposure over time. Continuous compliance monitoring dashboards track policy violations, access events, and workflow deviations in real time, catching drift before it becomes an audit finding.

Monitor these indicators:

- Unauthorized access attempts or privilege escalations

- Policy violation frequency and patterns

- Model output quality degradation

- Workflow execution failures or anomalies

- Regulatory requirement changes affecting existing controls
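For the policy-violation indicator, a simple starting point is to compare a recent window's violation rate against a baseline period and alert when it rises beyond a tolerance. A minimal sketch; the daily-count input format, the 2x ratio threshold, and both function names are hypothetical choices, and real monitoring would track each indicator above separately with thresholds tuned to its own noise level.

```python
from typing import Sequence


def violation_rate(violations: Sequence[int], interactions: Sequence[int]) -> float:
    """Policy violations per governed interaction over a window of daily counts."""
    total = sum(interactions)
    return sum(violations) / total if total else 0.0


def violation_drift(baseline_violations: Sequence[int], baseline_interactions: Sequence[int],
                    recent_violations: Sequence[int], recent_interactions: Sequence[int],
                    max_ratio: float = 2.0) -> bool:
    """Flag drift when the recent violation rate exceeds the baseline rate by more than max_ratio."""
    base = violation_rate(baseline_violations, baseline_interactions)
    recent = violation_rate(recent_violations, recent_interactions)
    if base == 0.0:
        # Any violation after a clean baseline deserves a look.
        return recent > 0.0
    return recent / base > max_ratio
```

Using rates rather than raw counts keeps the alert meaningful when interaction volume grows, which it usually does as automation expands.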

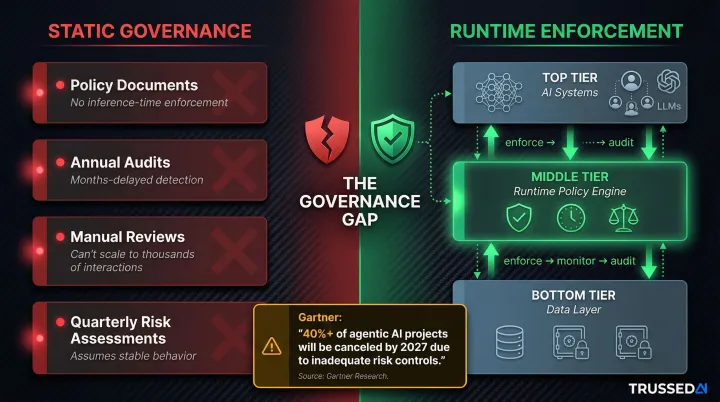

The Governance Gap: Why Runtime Enforcement Is the Missing Layer

Most regulated organizations have a governance gap they don't fully see: AI applications, agents, and workflows are deploying faster than governance infrastructure can follow. A HIPAA policy document or certified platform doesn't guarantee those policies are being enforced at the moment AI systems process sensitive data.

Static governance frameworks (written policies, annual risk assessments, quarterly audits) were built for human-operated systems with predictable, slow-changing behavior. AI systems, especially agentic AI with multi-step reasoning and dynamic decision-making, require governance that operates at the speed of inference, evaluating every interaction against policy constraints in real time.

The gap shows up in predictable patterns:

- Policy documents define what should happen, but nothing enforces it at inference time

- Annual audits catch violations months after sensitive data was exposed

- Manual reviews can't scale to thousands of AI interactions per hour

- Quarterly risk assessments assume system behavior is stable between reviews

Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, or inadequate risk controls. The underlying cause is often governance failure rather than technical failure: organizations deploy agents that can execute autonomous actions in milliseconds, then attempt to govern them with quarterly reviews and manual audits.

What that failure points to is an architectural gap, not a documentation problem. The missing layer is a runtime control plane: infrastructure that sits between AI systems and the data they access, enforcing policy on every interaction rather than reviewing logs after the fact. Trussed AI was built specifically for this layer, giving regulated enterprises the ability to govern AI interactions automatically, at the point they occur, rather than retrospectively.

Frequently Asked Questions

What are the most effective AI tools for automating regulatory compliance tasks in financial services?

Effective tools for financial services compliance share several non-negotiable characteristics:

- Third-party security attestation with operation-level audit logging

- Role-based access controls and private deployment options for sensitive data

- Automated evidence collection supporting SOX and SR 11-7 model risk requirements

- Real-time policy enforcement rather than point-in-time audits

Are there any HIPAA compliant AI tools?

HIPAA compliance for AI tools means the vendor must sign a Business Associate Agreement (BAA), implement minimum necessary access controls, maintain tamper-evident audit logs, and encrypt PHI at rest and in transit. "HIPAA compliant" is not a certification,it's a set of operational requirements verified through vendor assessment and BAA execution.

When integrating AI into data processing, what should be done to ensure compliance with privacy laws?

Privacy law compliance during AI integration requires four controls:

- Enforce data minimization so AI processes only data necessary for its defined purpose

- Restrict data access to authorized functions via role-based controls

- Maintain documented data lineage and audit trails throughout processing

- Confirm vendor compliance with applicable frameworks (GDPR, HIPAA, CCPA) before any data flows to their systems
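Data minimization can be enforced in code by allowlisting, per defined purpose, the fields an AI workflow may receive, so anything not explicitly approved never leaves the source system. A minimal sketch; the purpose names, field lists, and the `minimize` function are hypothetical.

```python
from typing import Dict, FrozenSet

# Fields each defined purpose may receive (hypothetical purposes and field names).
PURPOSE_ALLOWLIST: Dict[str, FrozenSet[str]] = {
    "claims_summary": frozenset({"claim_id", "service_date", "procedure_code", "amount"}),
    "appointment_reminder": frozenset({"first_name", "appointment_time"}),
}


def minimize(record: dict, purpose: str) -> dict:
    """Return only the fields approved for this purpose; undefined purposes are rejected outright."""
    allowed = PURPOSE_ALLOWLIST.get(purpose)
    if allowed is None:
        raise ValueError(f"no approved data scope for purpose {purpose!r}")
    return {key: value for key, value in record.items() if key in allowed}
```

An allowlist fails closed: a new sensitive field added to the source record is excluded until someone deliberately approves it for a purpose.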

How can AI technologies be leveraged to enhance data protection while ensuring compliance with HIPAA regulations?

AI strengthens HIPAA compliance by automating PHI identification, continuously monitoring access patterns for anomalies, and enforcing role-based controls at runtime. Audit evidence generates automatically at every governed interaction, cutting both manual workload and violation rates.

What is AI automation in finance?

AI automation in finance uses automated workflows to handle repetitive, rule-based tasks: transaction monitoring, fraud detection, regulatory reporting, risk assessment, and compliance evidence collection. These workflows operate under governance frameworks like SOX and SR 11-7, which require documented, auditable, and explainable decision processes.

How do you handle version control and documentation when using AI-generated code or automated workflows?

Treat automated workflows as governed code assets with the same controls applied to production software:

- Maintain version control with change logs for every workflow update

- Require documented approval before deploying changes to production

- In GxP environments, apply Computer System Validation (CSV) principles, including installation, operational, and performance qualification (IQ/OQ/PQ)

In regulated contexts, each change must link to an authorized approver with a timestamp, creating a defensible audit trail if regulators request evidence.