Introduction

OpenAI's 2025 State of Enterprise AI report reveals a striking productivity gain: workers save 40–60 minutes per day using OpenAI models in their workflows. Yet this acceleration has created a widening gap: most organizations are deploying AI faster than their governance structures can manage. Gartner predicts 40% of agentic AI projects will fail by 2027 if proper controls aren't established, while Cyberhaven reports that 39.7% of all AI interactions involve sensitive data.

The core problem: governance for OpenAI deployments isn't just about acceptable-use policies and ethics documents. It requires controlling model access, enforcing data privacy at inference time, managing API costs, and maintaining audit-ready compliance evidence in real time.

Static policies alone can't do this. Two examples that illustrate the gap:

- A policy document won't intercept a prompt containing patient health information before it reaches OpenAI's servers

- A usage dashboard won't stop a runaway agent from triggering thousands of dollars in unbudgeted API calls

This guide covers what it takes to govern OpenAI deployments in production:

- The four governance pillars that matter at scale

- How to move from static policy to runtime enforcement

- The compounded risks introduced by agentic workflows and the Assistants API

- The infrastructure layer needed to make governance enforceable in production

TLDR

- Enterprise governance for OpenAI requires real-time enforcement at every model interaction, not just policy documents

- The four pillars are access control, data privacy, compliance audit trails, and cost attribution

- OpenAI's native controls (system prompts, zero-data-retention) are necessary but insufficient for enterprise requirements

- Agentic workflows using the Assistants API introduce compounded risks requiring dedicated controls

- Deploy a governance layer between your apps and the OpenAI API to enforce policy on every call without application code changes

What Enterprise AI Governance Actually Means for OpenAI Deployments

Enterprise AI governance for OpenAI is the set of controls, policies, and enforcement mechanisms that regulate how OpenAI models are accessed, what data flows through them, how outputs are monitored, and how usage is tracked.

This is distinct from the broader corporate governance changes at OpenAI as an organization. The focus here is specifically on how enterprises govern their own use of the OpenAI API.

Standard IT governance frameworks don't transfer directly to OpenAI deployments for three reasons:

- API calls are stateless and non-deterministic. OpenAI models can generate different responses to identical prompts, so output validation and behavior prediction cannot rely on the fixed expected outputs used in conventional application testing.

- New attack vectors require new controls. Prompt injection lets attackers manipulate model behavior through crafted inputs. The EchoLeak vulnerability (CVE-2025-32711) demonstrated zero-click data exfiltration via a single email processed by an LLM, a threat standard input validation cannot detect because natural language doesn't parse like structured data.

- Hallucinated outputs can become authoritative decisions. When AI-generated content flows into claims approval, financial reporting, or medical recommendations, the NIST Generative AI Profile warns that "confabulation" can present erroneous content with high confidence, a risk traditional software governance never contemplated.

Three Layers of Governance

Enterprises must address governance at three distinct layers:

Provider-level governance: OpenAI's own policies, enterprise agreements, Business Associate Agreements (BAA) for HIPAA, and data processing agreements. These define what OpenAI can do with your data and what protections are contractually guaranteed.

Platform-level governance: Organizational controls over API keys, model access, usage dashboards, and configuration. This is what OpenAI provides natively through organization settings.

Runtime-level governance: Enforcement at the moment of every inference call - inspecting prompts for sensitive data, validating access permissions, applying cost controls, and logging interactions for audit. This layer requires infrastructure the enterprise must build or adopt.

Why OpenAI's Native Controls Are Insufficient for Enterprise Needs

OpenAI provides essential baseline controls for enterprise customers: organization-level API key management, usage dashboards, system prompt configuration, data processing agreements, and zero-data-retention (ZDR) options. These are necessary but not sufficient.

The critical gaps:

- No cross-team policy enforcement. Organization settings apply uniformly - you can't enforce different data handling policies for HR versus customer support using the same API key pool.

- No real-time content filtering tied to business rules. System prompts can guide model behavior, but they can't inspect incoming prompts for PII, mask sensitive fields before transmission, or block outputs that violate regulatory requirements.

- No per-project cost attribution. Usage dashboards show aggregate consumption but can't attribute costs to specific teams, applications, or cost centers, making budget enforcement and chargeback impractical.

- No automated audit trail generation. OpenAI logs API requests for abuse monitoring (unless ZDR is enabled), but those logs aren't structured for regulatory audit. You can't generate HIPAA-compliant evidence or SOX audit trails from raw API logs.

- No guardrails for dynamic inputs in agentic workflows. The Assistants API is explicitly excluded from ZDR and retains application state until manually deleted. Multi-step agentic workflows require governance at the action level, and OpenAI provides no native mechanism for this.

The Four Pillars of Enterprise OpenAI Governance

Pillar 1 - Access and Identity Control

Controlling which teams, applications, and users can call which OpenAI models (GPT-4o, o1, o3) - with what permissions, and under what conditions - is the foundation of access governance. This includes model-version pinning to prevent behavior drift when OpenAI updates default model versions.

The mechanics:

- Centralized API key management: Not team-level keys scattered across repos and environment variables. GitGuardian's 2026 report found 28.65 million hardcoded secrets leaked in public GitHub commits in 2025, a 34% increase year-over-year. AI-service secrets specifically grew 81%, with AI-assisted commits leaking secrets at double the baseline rate.

- Role-based model access: Engineering teams may access GPT-4o for prototyping, while production applications are restricted to specific model versions with approved configurations.

- Rate limiting per application or cost center: Prevent individual teams or applications from consuming disproportionate resources.

- Environment separation: Enforce distinct governance policies for dev, staging, and production, preventing test data from flowing through production models or production API keys from appearing in development repositories.

These controls map to CIS Control 5 (Account Management) and Control 6 (Access Control Management), which mandate centralized credential management and access revocation processes.
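
The role-based access, environment separation, and rate-limit controls above can be sketched as a single deny-by-default check. This is a minimal illustration, not a description of any OpenAI feature: the `AccessPolicy` class, the `POLICIES` table, the team names, and the limits are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessPolicy:
    allowed_models: frozenset[str]
    max_requests_per_minute: int


# Hypothetical policy table keyed by (team, environment).
# Team names, model choices, and limits are illustrative assumptions.
POLICIES: dict[tuple[str, str], AccessPolicy] = {
    ("engineering", "dev"): AccessPolicy(frozenset({"gpt-4o", "gpt-4o-mini"}), 600),
    ("support", "prod"): AccessPolicy(frozenset({"gpt-4o-mini"}), 120),
}


def authorize(team: str, env: str, model: str, requests_this_minute: int) -> tuple[bool, str]:
    """Return (allowed, reason) for one request; anything not listed is denied."""
    policy = POLICIES.get((team, env))
    if policy is None:
        return False, "no policy for team/environment"
    if model not in policy.allowed_models:
        return False, f"model {model!r} not approved for {team}/{env}"
    if requests_this_minute >= policy.max_requests_per_minute:
        return False, "rate limit exceeded"
    return True, "ok"
```

Keying the table by team and environment keeps the production allowlist separate from the development one, so a model approved for prototyping is not implicitly approved for production.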

Pillar 2 - Data Privacy and Input/Output Control

Every prompt sent to OpenAI contains potential PII, PHI, or proprietary business data. Governance must enforce what data is permissible in inputs, filter or mask sensitive fields before they leave the enterprise perimeter, and screen outputs for data leakage or policy violations before they reach end users.

The regulatory dimension:

- Healthcare (HIPAA): PHI transmitted to OpenAI requires a BAA and appropriate input/output safeguards. Research shows that with sophisticated adversarial capabilities, PII extraction rates from LLMs can increase fivefold.

- Financial services (SOX/GLBA): AI-assisted financial processes require the same data protection controls as any other processing system. The FTC Safeguards Rule (16 CFR Part 314) mandates monitoring and logging of authorized user activity.

- EU operations (GDPR): Article 30 requires records of processing activities, and the EU AI Act imposes logging and record-keeping obligations on high-risk AI systems through Article 12 (logging capabilities) and Article 26 (obligations of deployers), together requiring that high-risk AI systems automatically generate and retain logs.

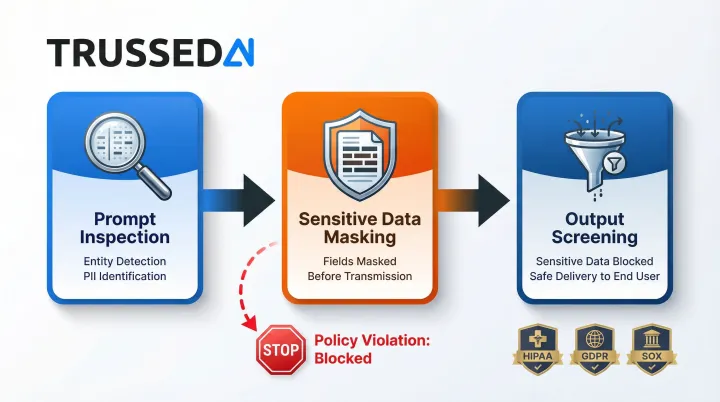

Enforcement mechanisms:

- Prompt inspection and entity detection before transmission

- Output screening for sensitive data before delivery to end users

- Policy violations flagged or blocked immediately at the API layer
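
As a rough sketch of prompt inspection before transmission, the function below masks a few entity types with regular expressions and reports what it found. The patterns and the `mask_prompt` name are illustrative assumptions; production entity detection usually needs far broader coverage than three regexes.

```python
import re

# Illustrative patterns only; these are assumptions, not a complete PII taxonomy.
PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
}


def mask_prompt(prompt: str) -> tuple[str, dict[str, int]]:
    """Replace each detected entity with a typed placeholder and count hits per type."""
    counts: dict[str, int] = {}
    masked = prompt
    for label, pattern in PATTERNS.items():
        masked, hits = pattern.subn(f"[{label}]", masked)
        if hits:
            counts[label] = hits
    return masked, counts


masked, found = mask_prompt("Patient jane.doe@example.com, SSN 123-45-6789, call 555-123-4567")
print(masked)  # Patient [EMAIL], SSN [SSN], call [PHONE]
```

The returned counts can feed the flag-or-block decision: a policy might allow masked emails through but block any prompt in which an SSN was detected.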

Pillar 3 - Compliance and Audit Trail Generation

Compliance governance for OpenAI requires a complete, immutable log of every model interaction: input, output, model version, timestamp, user/application identity, and latency. This evidence must be generated automatically, because manual review is not practical at production AI scale.

Continuous compliance monitoring: Rather than discovering non-compliant behavior in quarterly reviews after damage is done, policy checks run on every call and flag or block violations immediately.
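
One way to make such a log tamper-evident is to chain each record to the previous one with a hash. The sketch below assumes an in-memory list and hypothetical field names; hash chaining only detects edits after the fact, so real immutability would still depend on write-once storage.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # assumed placeholder hash for the first record


def append_record(log: list[dict], *, identity: str, model: str, prompt: str,
                  output: str, latency_ms: float) -> dict:
    """Append a record whose hash covers its own fields and the previous record's hash."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "identity": identity,
        "model": model,
        "prompt": prompt,
        "output": output,
        "latency_ms": latency_ms,
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["hash"] = hashlib.sha256(payload).hexdigest()
    log.append(record)
    return record


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash; an edited, removed, or reordered record breaks the chain."""
    prev_hash = GENESIS_HASH
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        if record["prev_hash"] != prev_hash:
            return False
        if hashlib.sha256(payload).hexdigest() != record["hash"]:
            return False
        prev_hash = record["hash"]
    return True
```

An auditor can run `verify_chain` over an exported log to confirm that no record was altered after it was written.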

Regulatory requirements:

- HIPAA: 45 CFR 164.312(b) requires audit controls with 6-year retention (164.316)

- SOX: PCAOB AS 2201 requires auditable internal controls over financial reporting

- EU AI Act: Article 11 requires technical documentation for high-risk systems, and Article 12 requires those systems to record logs automatically

Pillar 4 - Cost Governance and Attribution

Token costs scale sharply as enterprises move from pilots to production. Without per-team, per-application, or per-project attribution, finance and engineering leaders have no visibility into where spend is going, no ability to enforce budgets, and no way to optimize model selection.

The token economics problem:

| Model | Input (per 1M tokens) | Output (per 1M tokens) |
|---|---|---|
| GPT-4o-mini | $0.15 | $0.60 |
| GPT-4o | $2.50 | $10.00 |
| o1 | $15.00 | $60.00 |
| o3-pro | $20.00 | $80.00 |

Source: OpenAI Pricing (retrieved 2026-04-06)

Moving from GPT-4o to o1 represents a 6x cost increase on both inputs and outputs. Community reports document unexpected daily spikes from unconstrained background tasks: one user saw $67 in two days (5.2M tokens) despite normal daily usage under $1.00.
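
The arithmetic behind that multiplier is easy to check. The snippet below copies the per-million-token prices from the table above and computes the cost of a single workload on two models; the `request_cost` helper and the workload size are illustrative assumptions.

```python
# USD per 1M tokens as (input, output), copied from the pricing table above.
PRICES = {
    "gpt-4o-mini": (0.15, 0.60),
    "gpt-4o": (2.50, 10.00),
    "o1": (15.00, 60.00),
    "o3-pro": (20.00, 80.00),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD for one workload at the listed per-million-token prices."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Hypothetical workload: 1M input tokens and 1M output tokens.
gpt4o_cost = request_cost("gpt-4o", 1_000_000, 1_000_000)  # 2.50 + 10.00 = 12.50
o1_cost = request_cost("o1", 1_000_000, 1_000_000)         # 15.00 + 60.00 = 75.00
print(o1_cost / gpt4o_cost)  # 6.0
```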

Governance mechanisms:

- Continuous cost tracking by team and application

- Budget thresholds triggering alerts or fallbacks

- Model routing logic sending appropriate tasks to cost-efficient models (GPT-4o-mini for classification vs. o1 for reasoning)

- Chargeback reporting for regulated cost allocation
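
Budget thresholds and model routing can be combined in one decision function, sketched below. The `TeamBudget` fields, the 80% alert ratio, and the task labels are hypothetical choices made for illustration only.

```python
from dataclasses import dataclass


@dataclass
class TeamBudget:
    monthly_limit_usd: float
    spent_usd: float = 0.0
    alert_ratio: float = 0.8  # hypothetical: alert at 80% of the limit


def route_model(task_type: str, budget: TeamBudget) -> tuple[str, str]:
    """Pick a model for the task and report the budget state alongside it."""
    if budget.spent_usd >= budget.monthly_limit_usd:
        # Over budget: fall back to the cheapest model regardless of task type.
        return "gpt-4o-mini", "over_budget_fallback"
    model = "o1" if task_type == "reasoning" else "gpt-4o-mini"
    if budget.spent_usd >= budget.alert_ratio * budget.monthly_limit_usd:
        return model, "alert"
    return model, "ok"
```

Returning the budget state with the model choice lets the caller raise an alert or record the fallback in the audit log without a second lookup.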

Moving from Static Policies to Runtime Enforcement with OpenAI

Static governance - acceptable use policies, model usage guidelines, AI ethics frameworks - cannot intercept a non-compliant prompt in real time. For production OpenAI deployments, only runtime enforcement provides meaningful protection.

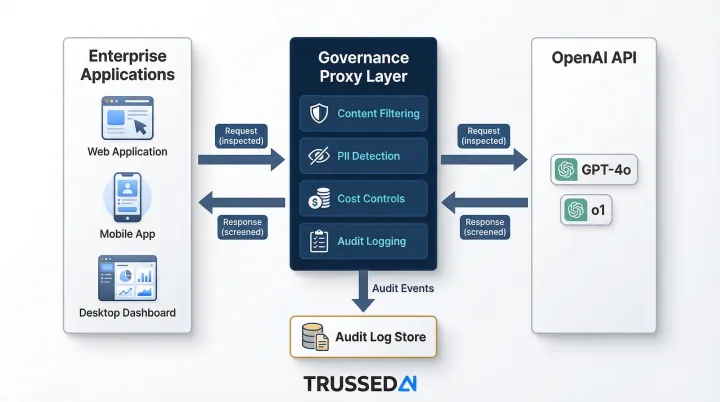

The Proxy Architecture Pattern

Place a governance enforcement layer between enterprise applications and the OpenAI API. This proxy intercepts every request and response and applies policy checks across content filtering, PII detection, cost controls, and access validation. It logs each interaction for audit, then passes, modifies, or blocks the call, all with under 20 ms of added latency to maintain production SLAs.

What runtime enforcement enables:

- Blocks prompt injection attempts before they reach the model

- Masks PHI in prompts before transmission to OpenAI

- Enforces model-version consistency across all calls from a given application

- Generates compliance evidence as a byproduct of every interaction, not a separate reporting process
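
The pass, modify, or block flow can be sketched as an ordered chain of checks that always writes an audit entry. Everything here is an assumption made for illustration: the check functions, the `PINNED_MODELS` table, the `MRN-` record format, and the keyword-based injection test, which is far too naive for real use.

```python
import re
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class Request:
    app_id: str
    model: str
    prompt: str


@dataclass
class Decision:
    action: str  # "pass", "modify", or "block"
    request: Request
    reasons: list[str] = field(default_factory=list)


class Blocked(Exception):
    """Raised by a check to stop the request from reaching the model."""


PINNED_MODELS = {"support-bot": "gpt-4o-mini"}  # hypothetical per-app model pins


def check_pinned_model(req: Request) -> None:
    if PINNED_MODELS.get(req.app_id) != req.model:
        raise Blocked("model not pinned for this application")


def check_injection(req: Request) -> None:
    # Naive keyword match, for illustration only.
    if "ignore previous instructions" in req.prompt.lower():
        raise Blocked("possible prompt injection")


def mask_record_numbers(req: Request) -> Optional[str]:
    # Returns a rewritten prompt, or None when nothing changed.
    masked = re.sub(r"\bMRN-\d+\b", "[MRN]", req.prompt)
    return masked if masked != req.prompt else None


CHECKS = [check_pinned_model, check_injection, mask_record_numbers]


def enforce(req: Request, audit_log: list[dict]) -> Decision:
    """Run every check in order, log the outcome, and return the decision."""
    decision = Decision("pass", req)
    try:
        for check in CHECKS:
            new_prompt = check(req)
            if new_prompt is not None:
                req = Request(req.app_id, req.model, new_prompt)
                decision = Decision("modify", req, decision.reasons + [check.__name__])
    except Blocked as exc:
        decision = Decision("block", req, decision.reasons + [str(exc)])
    audit_log.append({"app_id": req.app_id, "action": decision.action,
                      "reasons": decision.reasons})
    return decision
```

Because the audit entry is written on every path, including blocks, the compliance evidence is produced by the same code that enforces the policy.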

Implementation Without Code Changes

A well-designed governance proxy integrates as a drop-in layer requiring zero changes to existing application code. Developers point their OpenAI API calls to the governance endpoint rather than directly to OpenAI, and all enforcement happens transparently.
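
In practice, "pointing calls to the governance endpoint" usually means changing a base URL in configuration rather than in application logic. The sketch below builds, but does not send, a chat completion request using only the standard library; the `GOVERNANCE_BASE_URL` variable name and the example proxy hostname are assumptions, and SDK clients that expose a configurable base URL can be redirected the same way.

```python
import json
import os
import urllib.request

DEFAULT_BASE_URL = "https://api.openai.com/v1"


def build_chat_request(prompt: str, model: str = "gpt-4o-mini") -> urllib.request.Request:
    """Build a chat completion request against whichever base URL is configured."""
    # GOVERNANCE_BASE_URL is a hypothetical setting; unset means direct to OpenAI.
    base_url = os.environ.get("GOVERNANCE_BASE_URL", DEFAULT_BASE_URL).rstrip("/")
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=body,
        headers={
            "Authorization": f"Bearer {os.environ.get('OPENAI_API_KEY', '')}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

The application code that calls `build_chat_request` is identical in both cases; only the deployment environment decides whether traffic goes through the proxy.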

The result: policy enforcement stops being a manual review step and starts operating continuously at the point of every API call - without adding engineering overhead to the teams building on OpenAI.

Compliance Frameworks and OpenAI Model Usage

Regulatory Requirements Mapped to OpenAI Governance

HIPAA (Healthcare): PHI cannot be transmitted to OpenAI without a BAA and appropriate input/output safeguards. OpenAI offers a HIPAA BAA covering Eligible Services including the Zero Retention API and ChatGPT Enterprise. Organizations must implement technical controls to prevent unauthorized PHI transmission and maintain 6-year audit logs.

GDPR/EU AI Act (European Union): GDPR Article 28 requires Data Processing Agreements with processors, while Article 30 mandates records of processing activities. The EU AI Act requires high-risk AI systems to maintain automatically generated logs under Article 12 (logging capabilities) and Article 26 (obligations of deployers, including record-keeping), with human oversight for high-risk decisions.

SOX and GLBA (Financial Services): PCAOB AS 2201 requires audit trails for AI-assisted financial processes. The FTC Safeguards Rule (16 CFR Part 314) mandates policies and procedures to monitor and log authorized user activity.

OpenAI's Enterprise Data Agreements

OpenAI's Zero Data Retention (ZDR) option excludes customer content from abuse monitoring logs. However, ZDR has critical limitations:

ZDR-ineligible endpoints:

- /v1/assistants - Retains application state until deleted

- /v1/files - Retains uploaded files until deleted

- /v1/videos - Retains video files until deleted

Organizations using the Assistants API or file uploads will have data retained even with ZDR enabled at the organization level. Additionally, Enterprise Key Management (EKM) does not support the Assistants API.

The Agentic Compliance Challenge

These ZDR limitations become more consequential when AI agents enter the picture. When an agent takes actions - writing code, sending emails, querying databases - rather than just generating text, the compliance perimeter widens across every downstream system it touches. Governance frameworks built for static LLM outputs don't cover autonomous action chains, where a single policy gap can cascade through an entire workflow before anyone detects it.

Governing OpenAI Agents and Agentic Workflows

Agents operate at a scale and speed that outpaces traditional governance. OpenAI's 2025 report identifies agentic workflow automation as a top API use case across multiple verticals, while IDC predicts agent use will increase tenfold by 2027, with token and API call loads rising a thousandfold. That growth curve makes governance harder to retrofit and harder to ignore.

Why Agentic Governance Is Different

OpenAI Assistants and multi-step agent workflows execute sequences of tool calls, access multiple data sources, and take actions with real-world consequences. A single governance failure can propagate across an entire workflow rather than affecting only one interaction.

OWASP LLM06 warns of "Excessive Agency", where autonomous chains execute destructive actions because the agent was granted excessive functionality, permissions, or autonomy.

Closing that exposure requires specific controls, not general policy.

Specific Governance Controls Needed

- Restrict tool-call access so agents can only invoke the tools their deployment explicitly requires - no production database access for a support bot

- Enforce data scope boundaries so an HR chatbot can't query financial systems, and a code assistant can't reach customer PII

- Require human approval before agents execute actions with financial, legal, or safety consequences

- Tie every agent call to a specific deployment identity, not an anonymous API key, so audit trails can show which agent took which action in which application
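
These four controls can be expressed as one authorization step that runs before any tool call executes. The sketch below is a hypothetical design, not part of the Assistants API: the `AgentDeployment` class, the tool names, and the decision labels are all assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AgentDeployment:
    deployment_id: str
    allowed_tools: frozenset[str]
    approval_required: frozenset[str]


def authorize_tool_call(agent: AgentDeployment, tool: str,
                        approved_by: Optional[str] = None) -> dict:
    """Decide on one tool call and return an audit entry tied to the deployment."""
    if tool not in agent.allowed_tools:
        decision = "deny"  # tool is outside this deployment's scope
    elif tool in agent.approval_required and approved_by is None:
        decision = "pending_approval"  # a human must sign off first
    else:
        decision = "allow"
    return {
        "deployment_id": agent.deployment_id,
        "tool": tool,
        "approved_by": approved_by,
        "decision": decision,
    }


# Hypothetical support bot: may search the knowledge base freely,
# may issue refunds only with human approval, and has no database tool.
support_bot = AgentDeployment(
    deployment_id="support-bot-prod",
    allowed_tools=frozenset({"search_kb", "issue_refund"}),
    approval_required=frozenset({"issue_refund"}),
)
```

Every decision carries the deployment identity, so the resulting entries show which agent attempted which action even when the call was denied.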

The Assistants API Governance Gap

The Assistants API enables agents with code_interpreter and file_search tools, but it's explicitly excluded from ZDR and EKM. Organizations deploying Assistants must implement their own governance layer to:

- Log every tool call with full context

- Enforce data handling policies on file uploads

- Prevent unauthorized tool invocations

- Maintain audit trails for agent actions

Building Your Enterprise OpenAI Governance Infrastructure

Infrastructure Components Required

A production-grade governance stack requires five core components:

- Governance proxy/control plane - intercepts every OpenAI API request and response before it reaches your application

- Policy engine - enforces content filtering, access validation, cost controls, and compliance checks in real time

- Audit log store - immutable record of every interaction, including policy evaluation results, timestamps, and data lineage

- Cost attribution dashboard - real-time spend visibility broken down by team, application, and model

- IAM integration - connects governance to existing identity systems for user-level access control

Building this from scratch typically takes 6–12 months of engineering time and requires ongoing maintenance as OpenAI's API evolves. That's time most enterprises can't afford when AI deployments are already in motion.

Trussed AI: Enterprise AI Control Plane

Trussed AI sits as a governed proxy in front of OpenAI API calls, enforcing real-time policies for content, access, cost, and compliance. Audit trails are generated automatically as a byproduct of every governed interaction - no manual collection required.

Key capabilities:

- Runtime policy enforcement across models, agents, and workflows

- Sub-20ms added latency maintaining production SLAs

- Zero application code changes required for integration

- Model-level cost attribution for budget tracking and chargeback

- Automated compliance evidence generation for HIPAA, GDPR, SOX, and EU AI Act

- Enterprise-grade security governance

Evaluation Questions for Governance Infrastructure

When evaluating any governance solution, ask:

- Does it enforce policies at runtime or only report after the fact? Real-time enforcement stops violations before they occur; post-hoc dashboards only tell you what already went wrong.

- Does it integrate without application code changes? At scale, refactoring dozens of AI applications to add governance is a project in itself - one most teams will deprioritize.

- Does it support model-level cost attribution? Team-level budgets and chargeback models require granular spend data, not just aggregate API totals.

- Does it generate audit-ready compliance evidence automatically? With thousands of daily AI interactions, manual evidence collection becomes a full-time job.

- Can it handle production API volumes without adding latency? Any solution that adds 300ms or more per call will eventually be bypassed by engineering teams under pressure to ship.

Frequently Asked Questions

What is the difference between OpenAI's built-in safety controls and enterprise AI governance?

OpenAI's safety controls (content moderation, usage policies) govern what the model will and won't do. Enterprise governance governs how your organization uses the model, covering access control, data privacy, cost attribution, and compliance evidence that OpenAI doesn't provide natively.

How do I prevent sensitive data from being sent to OpenAI in production?

Implement a governance proxy that inspects and masks PII/PHI in prompts before transmission to OpenAI. Use entity detection rules or regex/ML-based classifiers to ensure sensitive data never leaves your enterprise perimeter in plaintext.

What regulations apply to enterprises using OpenAI models in healthcare and financial services?

Healthcare organizations must comply with HIPAA (BAA plus input/output controls for PHI). Financial services firms face GLBA and SOX requirements for audit trails and data protection. EU operations must also address GDPR and EU AI Act risk-classification rules for high-stakes AI use cases.

How can enterprises control and attribute OpenAI API costs across teams?

Route all API calls through a centralized layer that tags requests with team and project metadata. That layer tracks token consumption in real time and enforces per-team budget thresholds, preventing runaway spend as adoption scales from pilot to production.

What are the biggest governance risks specific to OpenAI Assistants and agent deployments?

In agentic workflows, a single policy failure can propagate across an entire sequence of tool calls and actions. Mitigating this requires tool-authorization controls, scope boundaries, and human-in-the-loop enforcement at the agent level - not just the prompt level.

How do I create an automated audit trail for OpenAI model interactions for compliance purposes?

A governance proxy captures and logs every request and response - with model version, timestamp, user identity, and policy check results - automatically. Compliance evidence is generated as a byproduct of normal operations rather than requiring separate manual review processes.