Introduction

AI is no longer experimental. Organizations across insurance, healthcare, financial services, and higher education are running AI in production, deploying models, agents, and workflows at scale. But governance, trust, and security have not kept pace with deployment velocity. This gap between what AI systems can do and what organizations can safely control has become the defining risk of 2026.

95% of C-suite leaders reported AI incidents in the past two years, with 39% describing the damage as "extremely severe." Companies without governance frameworks lost an average of $4.4 million per incident in 2025. Regulators are responding with enforcement: the EU AI Act's high-risk system requirements are now in force, carrying fines up to 7% of global revenue.

Regulatory pressure is intensifying, agentic AI is introducing risk categories that traditional frameworks weren't built to handle, and analysts like Gartner and Forrester have elevated AI TRiSM from a best practice to a board-level imperative. This report maps the market landscape, key trends, and what regulated enterprises need to do before enforcement catches up with deployment.

TLDR

- AI TRiSM is the framework enterprises use to enforce safety, reliability, and compliance across production AI systems

- Market growing sharply in 2026: Gartner projects a 35% CAGR, reaching $13.8 billion by 2030 (source verification required before publication)

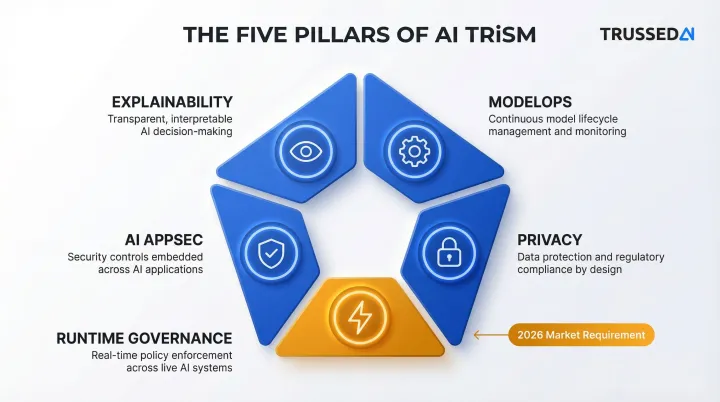

- Traditional four pillars now extended by a fifth: real-time runtime enforcement

- Embedding governance at the infrastructure level, not as an afterthought, creates measurable competitive advantage

- Regulated industries face highest stakes and stand to gain most from early AI TRiSM adoption

The AI TRiSM Market in 2026: Size, Growth, and Key Drivers

The AI TRiSM market is experiencing explosive growth. Gartner estimates the market at $3.1 billion in 2025, projecting a 35% CAGR to reach $13.8 billion by 2030. IDC's parallel analysis of the AI Governance Platforms market shows even stronger momentum: $2.5 billion in 2025, growing at a 42% CAGR to $15.5 billion by 2030.
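
The growth figures above can be sanity-checked with the standard compound-growth formula. The sketch below assumes 2025 to 2030 counts as five compounding periods, which the source does not state; the function names are illustrative.

```python
def project(start_value: float, cagr: float, years: int) -> float:
    """Compound start_value forward at a constant annual growth rate."""
    return start_value * (1 + cagr) ** years


def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Constant annual growth rate that turns start_value into end_value."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted above, in billions of USD.
# Assumption: 2025 -> 2030 is treated as 5 compounding periods.
gartner_2030 = project(3.1, 0.35, 5)
idc_2030 = project(2.5, 0.42, 5)
gartner_rate = implied_cagr(3.1, 13.8, 5)
idc_rate = implied_cagr(2.5, 15.5, 5)

print(f"Gartner: {gartner_2030:.1f}B projected, {gartner_rate:.1%} implied CAGR")
print(f"IDC:     {idc_2030:.1f}B projected, {idc_rate:.1%} implied CAGR")
```

Under the five-period assumption, the Gartner pair is consistent to within rounding, while the IDC endpoints imply a rate somewhat above the quoted 42%. A different base year or rounding in the source could explain the difference.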

Core Business Forces Accelerating Demand

Three structural drivers are fueling this growth:

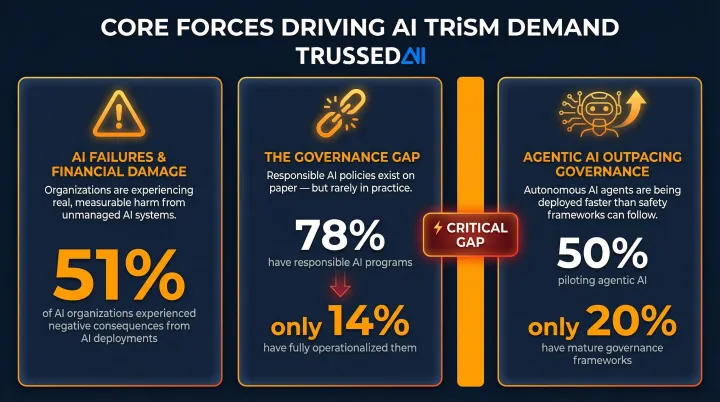

High-profile AI failures creating direct financial damage. McKinsey found that 51% of organizations using AI experienced negative consequences, with nearly one-third citing AI inaccuracy issues. The financial impact is severe: Air Canada paid damages after its chatbot provided incorrect information, attorneys faced $6,000 in sanctions for AI-generated fake legal citations, and Clearview AI received a €30.5 million fine for illegal biometric data processing.

The governance gap as a structural driver. While 78% of companies have established responsible AI programs, only 14% have operationalized them, according to Accenture. Gartner reinforces this disconnect: through 2026, 80% of unauthorized AI transactions will stem from internal policy violations, not external attacks, highlighting the failure of non-enforced policies.

Agentic AI deployment outpacing governance maturity. Forrester reports that 50% of organizations are piloting agentic AI, with 24% already in production. Yet only 20% have mature governance models for autonomous agents, according to Deloitte. That 30-point gap between deployment and governance maturity is driving procurement decisions across the enterprise market.

The Demand-Side Shift

The question has shifted from whether to govern AI to how to do it at production scale without adding latency or operational overhead. Accenture found that 42% of companies already devote over 10% of their AI budget to responsible AI, with 79% planning to reach this threshold within two years.

That spend is concentrated in regulated industries, where AI adoption is already widespread. NAIC's 2025 survey found AI in active use across insurance lines:

- 92% of health insurers

- 88% of auto insurers

- 70% of home insurers

These organizations face pressure from three directions simultaneously: internal risk functions, external regulators, and enterprise clients requiring governance proof before vendor onboarding.

The Four Pillars of AI TRiSM, and a Fifth the 2026 Market Now Demands

Explainability

Explainability makes AI model decision-making transparent and interpretable. Without it, organizations cannot diagnose errors, detect bias, or satisfy auditors. Techniques include feature importance analysis and continuous model behavior monitoring, addressing the black-box problem that regulatory frameworks now legally require organizations to solve.

The consequences of opacity are real. Italy's Data Protection Authority initially imposed a €15 million fine on OpenAI for transparency failures under GDPR, and courts have sanctioned attorneys for submitting AI-generated briefs containing fabricated citations.

ModelOps

ModelOps governs how AI models are versioned, tested, retrained, and maintained throughout their lifecycle. Without it, organizations cannot detect data drift, manage model degradation, or scale AI reliably. Enterprise SLA requirements punish unpredictable model behavior, and ModelOps is what keeps that behavior in check.
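
As one illustration of data drift detection, the sketch below computes a Population Stability Index (PSI) between a baseline sample and a live sample of a single numeric feature. PSI is only one of several common drift metrics, chosen here as an example; the source does not prescribe a method. The function name, bin count, and the small floor for empty bins are assumptions.

```python
import math
from typing import Sequence


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range live values into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Small floor (an assumption) avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(1000)]   # evenly spread over roughly 0..10
shifted = [v + 3.0 for v in baseline]       # same shape, shifted upward

print(f"no drift: {psi(baseline, baseline):.3f}")
print(f"shifted:  {psi(baseline, shifted):.3f}")
```

A commonly cited rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift. Those thresholds are conventions rather than standards and should be tuned per feature.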

AI AppSec

AI applications face unique attack vectors traditional cybersecurity tools weren't built to detect:

- Prompt injection: Research demonstrated 95% attack success rates against GPT-3.5 and GPT-4 in controlled environments

- Training data poisoning: Manipulating model behavior through corrupted training data

- Adversarial inputs: Crafted inputs designed to fool models

- Model extraction: Stealing model logic through API queries

AI AppSec requires encryption of model data, supply chain controls, and runtime input/output validation beyond standard application security. In February 2026, CrowdStrike reported an 89% year-over-year increase in attacks conducted by AI-enabled adversaries.

Privacy

AI systems routinely ingest personal data at scale, triggering obligations under GDPR, HIPAA, and sector-specific regulations. Privacy failures carry both regulatory fines and reputational consequences; Clearview AI's €30.5 million fine demonstrates the stakes.

Common privacy-enhancing techniques include:

- Noise injection: Adds statistical randomness to obscure individual data points

- Tokenization: Replaces sensitive identifiers with non-sensitive equivalents

- Differential privacy: Provides mathematical guarantees against re-identification

- Data minimization: Limits ingestion to only what the model actually requires
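
Two of the techniques above can be sketched in a few lines. The example uses a keyed hash (HMAC-SHA256) for tokenization and the Laplace mechanism for a differentially private count. Both are illustrative choices, not the only designs: vault-based tokenization with a lookup table is the other common approach. The function names and the demo key are hypothetical, and Python's `random` module is not suitable for production-grade privacy guarantees.

```python
import hashlib
import hmac
import random


def tokenize(identifier: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed, non-reversible token (HMAC-SHA256)."""
    return hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)


def private_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Release a count with epsilon-differential privacy (Laplace mechanism).

    A counting query changes by at most 1 when one person is added or removed,
    so its sensitivity is 1 and the noise scale is 1 / epsilon.
    """
    return true_count + laplace_noise(1.0 / epsilon, rng)


rng = random.Random(0)                    # seeded only to make the demo repeatable
key = b"demo-key-not-for-production"      # hypothetical key; load from a secret store
print(tokenize("patient-12345", key))
print(private_count(1000, epsilon=0.5, rng=rng))
```

Smaller values of `epsilon` add more noise and give stronger privacy. The same token is always produced for the same identifier and key, which keeps joins across datasets possible without exposing the raw value.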

Together, these four pillars define established AI TRiSM practice. What the 2026 market has added is a fifth, one that makes the other four enforceable.

The Emerging Fifth Pillar: Runtime Governance

Gartner's 2025 Market Guide elevated runtime inspection and enforcement from a feature to a mandatory capability.

This is the 2026 market's defining capability gap. Most organizations have policies written down but no mechanism to enforce them dynamically as AI systems act autonomously. Runtime governance means policy enforcement applied to every model interaction, agent action, and workflow execution in real time - not reviewed after the fact. Regulators are increasingly requiring organizations to demonstrate that controls were active at the moment of each AI decision, not reconstructed from logs afterward.
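
A minimal sketch of what enforcement at the moment of each interaction might look like: every prompt and every response passes through policy checks before anything is returned. The names `governed_call`, `Decision`, and `no_ssn`, and the single regex policy, are hypothetical and far simpler than a production policy engine.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical policy: block text that looks like a US Social Security number.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


@dataclass
class Decision:
    allowed: bool
    stage: str                      # "input", "output", or "ok"
    reason: str
    response: Optional[str] = None


def no_ssn(text: str) -> Optional[str]:
    """Return a violation reason, or None if the text passes."""
    return "possible SSN detected" if SSN_PATTERN.search(text) else None


def governed_call(prompt: str, model: Callable[[str], str],
                  policies: list[Callable[[str], Optional[str]]]) -> Decision:
    """Run every policy on the prompt, call the model, then check the output."""
    for policy in policies:
        reason = policy(prompt)
        if reason:
            return Decision(False, "input", reason)    # model is never called
    response = model(prompt)
    for policy in policies:
        reason = policy(response)
        if reason:
            return Decision(False, "output", reason)   # response is withheld
    return Decision(True, "ok", "all policies passed", response)


def echo_model(prompt: str) -> str:   # stand-in for a real model client
    return f"You said: {prompt}"


print(governed_call("Summarize claim 4471", echo_model, [no_ssn]))
print(governed_call("My SSN is 123-45-6789", echo_model, [no_ssn]))
```

Because the check wraps the call itself, the decision is made before the response reaches the caller, rather than being reconstructed from logs afterward.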

Five Trends Reshaping the AI TRiSM Landscape in 2026

Agentic AI Multiplies Governance Complexity

Unlike single-model deployments, AI agents take autonomous sequences of actions across tools, APIs, and data systems. Each action is a potential governance event. TRiSM frameworks built for predictive models weren't designed for agents making chained decisions, which creates a new category of runtime risk organizations must explicitly govern.

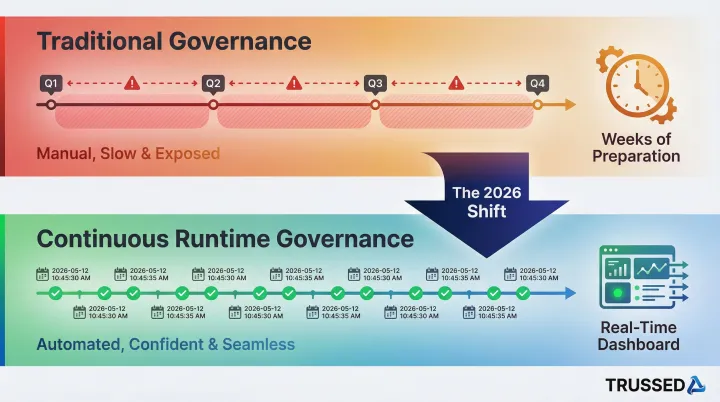

The Shift from Static Policies to Real-Time Control

AI governance used to mean policy documents and periodic audits. In 2026, that model is breaking down: every AI interaction now needs to be inspected against policy in milliseconds, not reviewed in quarterly reports. Gartner predicts that by 2030, 50% of AI agent deployment failures will be due to insufficient runtime enforcement.

Consolidation Toward Unified Control Planes

Organizations that deployed point solutions for bias detection, model monitoring, security, and compliance are finding fragmented tooling creates blind spots and audit gaps. The market trend is toward unified platforms providing a single governance layer across models, agents, and developer tools.

Recent acquisitions signal where the market is heading:

- OpenAI acquired Promptfoo for automated security testing of AI outputs

- Google acquired Wiz for $32 billion to build a unified cloud and AI security platform

- Databricks acquired Antimatter and SiftD.ai to add agentic SIEM capabilities

AI Governance as a Procurement and Revenue Requirement

In regulated industries, enterprises increasingly require AI governance proof from vendors - documented audit trails, runtime compliance evidence, and demonstrable security controls. Organizations that can demonstrate governed AI are winning enterprise deals; those that cannot are being disqualified.

Two regulatory instruments are accelerating this shift. The NAIC Model Bulletin requires insurers to conduct due diligence and secure contractual audit rights from AI vendors. ISO/IEC 42001:2023 is appearing in RFPs as a procurement yardstick, with buyers demanding evidence such as AI risk registers.

Audit-Ready Evidence Generation Becoming Non-Negotiable

Regulators and enterprise buyers are moving beyond asking whether an organization has an AI policy; they're requiring evidence that policies are enforced continuously. The practical gap: most organizations still compile governance evidence manually before audits, a process that takes weeks and leaves enforcement gaps between review cycles. The emerging requirement is continuous, automatic evidence generation: timestamped logs of every governed interaction that exist before the auditor ever asks.
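
One way to produce timestamped evidence as a byproduct of operation is an append-only log in which each record's hash covers the previous record's hash, so later tampering is detectable. The hash chaining is a design choice added here for illustration and is not described in the source; the record fields and function names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(body: dict) -> str:
    """Stable SHA-256 over a record body (keys sorted for determinism)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_record(log: list[dict], event: dict) -> dict:
    """Append a timestamped record whose hash covers the previous record's hash."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event": event,
        "prev_hash": log[-1]["hash"] if log else "0" * 64,
    }
    record = {**body, "hash": _digest(body)}
    log.append(record)
    return record


def verify(log: list[dict]) -> bool:
    """Recompute every hash and check that each record links to the one before it."""
    prev_hash = "0" * 64
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True


audit_log: list[dict] = []
append_record(audit_log, {"system": "claims-assistant", "policy": "no_ssn", "decision": "block"})
append_record(audit_log, {"system": "claims-assistant", "policy": "no_ssn", "decision": "allow"})
print(verify(audit_log))
```

In practice the log would be written to durable, access-controlled storage. The in-memory list is only for demonstration.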

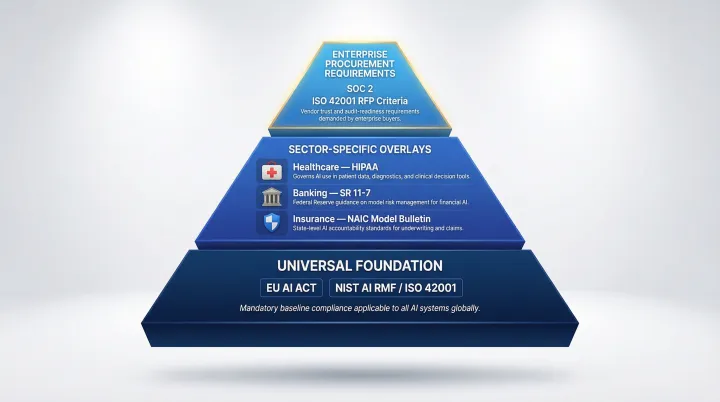

The 2026 Regulatory Landscape Every Enterprise Must Understand

EU AI Act

Phase 2 obligations took effect in 2025, with high-risk AI system requirements around transparency, human oversight, and documentation now in force. Organizations operating in or selling to EU markets must demonstrate risk classification, conformity assessments, and ongoing technical documentation.

Risk-tiering system:

- Unacceptable-risk , Banned systems (social scoring, exploitation of vulnerabilities)

- High-risk , Strict requirements for systems in critical sectors (biometric identification, employment, law enforcement, credit scoring)

- Limited-risk , Transparency obligations (users must know they're interacting with AI)

- Minimal-risk , No mandatory obligations

Non-compliance carries fines up to €35 million or 7% of global revenue for prohibited practices, and up to €15 million or 3% of revenue for other violations.
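
The fine ceilings above can be worked through numerically. This sketch assumes the Act's rule that, for undertakings, the cap is whichever of the fixed amount and the percentage is higher, and for SMEs whichever is lower. It also treats "global revenue" as total worldwide annual turnover for the preceding financial year. The function name and parameters are illustrative, and none of this is legal advice.

```python
def fine_ceiling(annual_turnover_eur: float, prohibited_practice: bool,
                 sme: bool = False) -> float:
    """Upper bound of an EU AI Act fine under the stated assumptions."""
    # (fixed cap in EUR, percentage points of worldwide annual turnover)
    fixed_cap, pct_points = (35_000_000, 7) if prohibited_practice else (15_000_000, 3)
    turnover_cap = annual_turnover_eur * pct_points / 100
    # Assumption: "whichever is higher" in general, "whichever is lower" for SMEs.
    return min(fixed_cap, turnover_cap) if sme else max(fixed_cap, turnover_cap)


# Example: a company with EUR 2 billion in worldwide annual turnover.
print(f"{fine_ceiling(2_000_000_000, prohibited_practice=True):,.0f}")
print(f"{fine_ceiling(2_000_000_000, prohibited_practice=False):,.0f}")
```

For large enterprises the percentage term dominates, which is why the 7% figure is the one usually quoted.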

NIST AI Risk Management Framework and ISO 42001

NIST AI RMF (voluntary in the US but widely adopted as a contractual baseline) and ISO 42001 (the international AI management system standard) are becoming procurement requirements. Organizations pursuing federal contracts, healthcare partnerships, or financial services relationships must demonstrate alignment with one or both frameworks.

| | NIST AI RMF | ISO 42001 |
|---|---|---|
| Type | Voluntary risk framework | Certifiable management system standard |
| Purpose | Guidance for doing AI risk management | Third-party audit proof of systematic governance |
| Best for | Internal risk practices | Demonstrating compliance to external parties |

Sector-Specific Overlays

Regulated industries face governance obligations beyond general AI frameworks. These sector rules layer on top of, not instead of, the EU AI Act and NIST/ISO baseline:

- Healthcare: HIPAA for AI systems processing protected health information

- Banking: SR 11-7 model risk management guidance

- Insurance: NAIC Model Bulletin requiring formal AI governance programs (adopted by Connecticut, Illinois, Maryland, Pennsylvania, Washington)

For enterprises operating across sectors, the practical challenge isn't meeting any single regulation - it's building governance infrastructure that satisfies all of them without maintaining a separate compliance program for each.

The Biggest Challenges Holding Organizations Back

The Governance-to-Production Gap

Most organizations have developed AI policies at the executive level, but those policies have no mechanism for runtime enforcement. Accenture found that 43% of companies have yet to fully operationalize monitoring and control processes, with 52% of generative AI users lacking any monitoring measures.

Retrofitting governance into production AI systems creates friction on two fronts:

- Technical: code changes required, latency sensitivity, and multi-model environments

- Organizational: cross-functional ownership disputes and a near-total absence of purpose-built tooling

Talent and Skills Shortage

The skills required (understanding model behavior, data pipeline risks, regulatory compliance, and operational security in combination) are rare. ISC2 and ISACA research highlights how rapid AI adoption is reshaping cybersecurity skills requirements faster than organizations can hire or train. Most enterprises cannot staff their way out of this gap, which is why automated governance tooling has moved from nice-to-have to operational necessity.

Scaling Governance Without Scaling Overhead

Every new AI system an enterprise deploys adds to its governance surface, and tooling designed for a handful of models breaks down at enterprise scale. Hundreds of models, dozens of agents, and thousands of daily interactions demand infrastructure that can grow alongside AI deployment without a proportional increase in headcount or manual oversight.

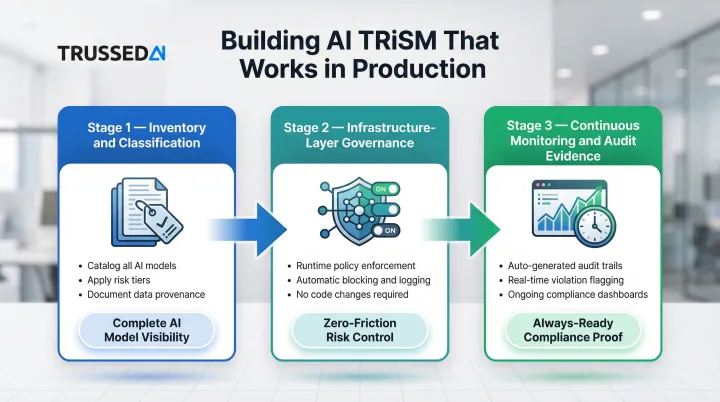

How to Build an AI TRiSM Strategy That Works in Production

Start with Inventory and Classification

Governance cannot be enforced on systems that haven't been discovered and classified. Organizations should begin by:

- Cataloging all AI models, agents, applications, and data flows, including embedded AI in third-party tools

- Applying risk tiers based on regulatory exposure, data sensitivity, and decision consequence

- Documenting data provenance, model versions, and system access modes
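
The inventory steps above could be captured in a simple record type plus a tiering rule. Everything in this sketch is hypothetical: the field names, the three internal tiers, and the classification logic are placeholders for whatever an organization's risk function defines, and they are not a legal classification under any regulation.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskTier(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class AIAsset:
    name: str
    kind: str                             # "model", "agent", "application", or "embedded"
    model_version: str
    data_provenance: str
    access_mode: str                      # e.g. "internal API" or "third-party SaaS"
    regulatory_exposure: bool             # in scope of a regulation such as HIPAA
    sensitive_data: bool
    consequential_decisions: bool         # output materially affects a person


def classify(asset: AIAsset) -> RiskTier:
    """Hypothetical tiering rule based on the three factors listed above."""
    if asset.regulatory_exposure and asset.consequential_decisions:
        return RiskTier.HIGH
    if asset.regulatory_exposure or asset.sensitive_data or asset.consequential_decisions:
        return RiskTier.MEDIUM
    return RiskTier.LOW


inventory = [
    AIAsset("claims-triage", "model", "2.3.1", "internal claims history",
            "internal API", True, True, True),
    AIAsset("meeting-notes", "embedded", "vendor-managed", "unknown",
            "third-party SaaS", False, True, False),
    AIAsset("code-autocomplete", "application", "1.0", "public code",
            "developer tool", False, False, False),
]

# Review the highest-risk systems first.
for asset in sorted(inventory, key=classify, reverse=True):
    print(f"{classify(asset).name:<6} {asset.name}")
```

Keeping the tiering rule in code makes it easy to re-run classification whenever an asset's data sources or use case change.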

NIST AI RMF explicitly calls for AI systems inventory in its GOVERN 1.6 subcategory. ISO 42001 mandates inventory maintenance as a core certification requirement.

Implement Governance at the Infrastructure Layer, Not the Policy Layer

Policy documents don't stop a model from leaking sensitive data at 2am. Runtime enforcement does. Governance embedded directly in infrastructure, operating via a control plane or proxy layer, catches violations as they happen, not after the fact.

Runtime enforcement means:

- Policy checks applied at every model call, agent action, and API interaction

- Automatic blocking, logging, and audit trail generation

- Governance that operates continuously, not reviewed after the fact

Trussed AI's control plane applies this model: a proxy layer that enforces governance at runtime across AI apps, agents, and developer tools, with less than 20ms latency and no changes required to application code.

Prioritize Continuous Monitoring and Audit-Ready Evidence

Governance programs that only produce evidence at audit time leave organizations exposed between review cycles. Continuous monitoring shifts that dynamic by:

- Generating audit trails automatically as a byproduct of operation

- Flagging policy violations in real time

- Providing dashboards for ongoing oversight, not just pre-audit scrambles

When audit evidence is generated automatically at every governed interaction, compliance stops being a quarterly scramble and becomes an operational baseline.

Frequently Asked Questions

What is the difference between AI TRiSM and traditional cybersecurity frameworks?

AI TRiSM extends beyond traditional cybersecurity by addressing AI-specific risks like model bias, data drift, adversarial inputs, and explainability. Traditional frameworks were designed for static software systems that don't learn or make autonomous decisions.

What are the four pillars of AI TRiSM?

The four pillars are Explainability (making AI transparent), ModelOps (lifecycle governance), AI AppSec (security against AI-specific attacks), and Privacy (data protection compliance).

How does the EU AI Act impact AI TRiSM requirements in 2026?

The EU AI Act uses a risk-tiered approach where high-risk AI systems now require documented technical controls, human oversight mechanisms, and ongoing conformity assessments. Organizations without AI TRiSM infrastructure will struggle to demonstrate compliance and face fines up to 7% of global revenue.

What industries need AI TRiSM the most?

Regulated industries (healthcare, financial services, insurance, and government/public sector) face the highest stakes due to sensitive data, high-consequence AI decisions, and overlapping regulatory obligations. These sectors are disproportionate buyers of AI TRiSM solutions.

What is runtime AI governance and why does it matter in 2026?

Runtime governance is policy enforcement applied to every AI interaction in real time, rather than reviewed after the fact. As agentic AI makes autonomous decisions at scale, real-time control has become the critical gap between having an AI policy and actually enforcing it.

How long does it typically take to implement an AI TRiSM framework?

A basic governance foundation (policy definition, asset inventory, monitoring setup) is achievable in weeks. A mature, continuous AI TRiSM program requires months of iteration, though infrastructure-first platforms with drop-in integration can get operational workflows live in as little as four weeks.