Introduction

Most enterprises can name their AI models. They can describe their use cases. They can map deployment timelines across business units. But when asked to identify the infrastructure layer that governs how those models behave in production, the room goes quiet.

AI governance is the load-bearing layer beneath enterprise AI - invisible until something fails. Unlike the visible stack of models, applications, and data pipelines, governance infrastructure operates in the background, enforcing policies, generating audit evidence, and maintaining control boundaries. When it works, no one notices.

When it doesn't, the consequences cascade: unauthorized data exposure, regulatory violations, runaway costs, and operational failures that erode trust in AI systems.

Organizations moved from AI experimentation to AI dependency faster than their governance structures adapted. Pilots became production systems. Experiments became critical decision engines. The gap between policy intent and operational reality widened.

What worked for a handful of experimental chatbots cannot scale to hundreds of autonomous agents operating across customer workflows, internal tools, and third-party integrations.

AI governance is infrastructure, not a compliance document and not a committee meeting. Enterprises that treat it as such, building enforcement mechanisms, monitoring systems, and audit trails directly into the AI stack, are the ones scaling AI without stalling. This article breaks down what that infrastructure consists of, why static policies fail in production, and what it actually costs to retrofit governance after the fact.

TLDR

- Only 21% of companies deploying agentic AI have mature governance models, despite 74% planning deployment within two years

- Real governance infrastructure means runtime enforcement, continuous monitoring, and automatic audit trail generation - policy documents alone don't cut it

- Ungoverned AI exposes enterprises to shadow AI, model drift, regulatory non-compliance, and breach costs averaging $4.63 million

- Agentic systems that act autonomously across workflows expose the failure modes of static policies most visibly

- Governance built into the AI stack from day one is cheaper and simpler than retrofitting after scale

The Governance Gap That Enterprise AI Created

AI transitioned from experimental technology to core operational dependency in less than 24 months for most enterprises. When AI moves from a pilot chatbot to the decision logic behind credit scoring, claims processing, or patient triage, the nature of a governance failure changes. What was once a minor inconvenience (a chatbot giving an incorrect answer) becomes systemic risk: discriminatory lending, fraudulent claim approvals, or misdiagnosed patients.

The problem is that enterprises scaled AI faster than they built the systems to govern it. According to McKinsey's 2025 Global Survey on AI, nearly two-thirds of organizations have not yet begun scaling AI across the enterprise, and only 28% report that their CEO is responsible for overseeing AI governance. Leadership gaps create execution gaps.

The Shadow AI Problem

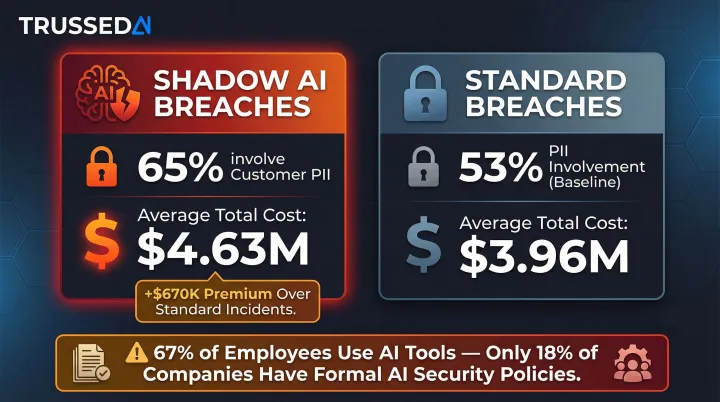

Just as shadow IT plagued the early cloud era, shadow AI now operates across enterprises without formal oversight. 67% of employees use AI tools at work, yet only 18% of companies have formal AI security policies. Worse, one in five organizations reported a breach due to shadow AI, with 68% of employees using free-tier AI tools via personal accounts and 57% inputting sensitive data.

When shadow AI incidents occur, 65% involve customer PII compromise, 12 percentage points above the 53% global average for standard breaches. The financial impact is severe: breaches involving shadow AI cost organizations an average of $670,000 more than standard incidents, bringing total breach costs to $4.63 million.

Model Drift as Governance Failure

Model drift represents a governance failure mode unique to AI: a model that performed accurately in Q1 may produce skewed outputs by Q4 as underlying data distributions shift. Unlike traditional software where bugs are deterministic and reproducible, model drift is probabilistic and emergent.

Without infrastructure to detect and respond to drift, decisions are made on increasingly unreliable foundations. The problem typically stays invisible until a regulatory audit or customer complaint forces it into view.

The Cost of Retrofitting Governance

Organizations that scale AI without governance structures often hit a compliance or operational wall 12-18 months into deployment.

At that point, they face a sprawling inventory of disconnected models, inconsistent data privacy standards, and a compliance function that blocks further deployment until governance is formalized.

The Massachusetts Attorney General's $2.5 million settlement with Earnest Operations LLC demonstrates the financial and operational cost of governance failures. The student loan lender's AI underwriting models led to disparate harm against Black, Hispanic, and non-citizen applicants. The settlement required implementation of a detailed corporate governance structure and robust written policies: governance imposed by regulators after the damage was done, not architected before deployment.

Why Policy Documents Are Not Governance Infrastructure

Most organizations have AI policies: responsible use guidelines, ethical AI principles, risk matrices written by committees. Very few have AI governance infrastructure: systems that enforce those policies at the moment an AI model receives a prompt, generates an output, or triggers an action.

The distinction is critical. No organization would consider a written acceptable-use policy to be a substitute for firewalls, intrusion detection systems, and access controls. Network security requires infrastructure that enforces policy in real time. The same logic applies to AI. Policies define intent; infrastructure enforces it.

Runtime Enforcement vs. Retrospective Audits

Runtime enforcement means every interaction with an AI model (every prompt, every response, every agent action) passes through a layer that checks it against defined policies in real time. This contrasts sharply with audit-after-the-fact approaches, where violations are discovered only during periodic reviews, long after damage is done.

IBM's 2025 Cost of a Data Breach Report found that 63% of breached organizations either lack an AI governance policy or are still developing one. Critically, of those with policies, only 34% perform regular audits for unsanctioned AI. Policy without enforcement is theater.

Samsung Electronics learned this in 2023 when employees uploaded sensitive internal source code and meeting notes to ChatGPT despite existing data protection expectations. The company banned ChatGPT use after the leaks, but the damage was done: policy existed, enforcement did not.

Infrastructure That Enforces Policy

Trussed AI's control plane model exemplifies the infrastructure approach: a drop-in proxy that enforces governance policies at runtime across AI apps, agents, and developer tools without requiring changes to application code. Policies defined once are enforced continuously across every model, workflow, and environment the platform governs. The system operates as an active control layer in the execution path, not a static document sitting outside it.

The Hidden Layers: What AI Governance Infrastructure Actually Consists Of

A governance stack is the technical and operational infrastructure that makes AI behavior predictable, auditable, and controllable. This hidden layer separates enterprises that scale AI from those that stall.

Runtime Policy Enforcement

Runtime enforcement is the active layer: it intercepts AI interactions and applies defined rules about data access, output filtering, content restrictions, and model selection before outputs reach users or downstream systems. This differs from logging (passive recording) and periodic auditing (retrospective review) in one fundamental way: it acts rather than observes.

When an agent requests access to customer records, runtime enforcement evaluates whether that access violates PII protection policies before the data is retrieved. When a model generates a response containing sensitive information, enforcement filters or blocks the output before it reaches the user. Policies are applied at the point of execution, not buried in audit logs for someone to find later.
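The two checks described above can be sketched in a few lines. This is a minimal illustration, not a description of any particular product: the role names, the `ACCESS_POLICY` table, and the SSN-style regular expression are all hypothetical stand-ins for whatever policies an organization actually defines.

```python
import re
from dataclasses import dataclass

# Hypothetical pattern: a US Social Security number, used only as an example of PII.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

# Hypothetical policy table: which roles may read which resources.
ACCESS_POLICY = {
    "support_agent": {"order_history"},
    "billing_agent": {"order_history", "customer_records"},
}


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str
    text: str = ""


def check_data_access(role: str, resource: str, policy: dict[str, set[str]]) -> Decision:
    """Evaluate a data-access request before any data is retrieved."""
    if resource in policy.get(role, set()):
        return Decision(True, "access permitted by policy")
    return Decision(False, f"role '{role}' may not read '{resource}'")


def filter_output(text: str) -> Decision:
    """Redact SSN-like strings before the response reaches the user."""
    redacted, count = SSN_PATTERN.subn("[REDACTED]", text)
    reason = f"redacted {count} value(s)" if count else "no sensitive data found"
    return Decision(True, reason, redacted)


print(check_data_access("support_agent", "customer_records", ACCESS_POLICY))
print(filter_output("The SSN on file is 123-45-6789."))
```

The point of the sketch is placement: both functions run in the request path and return a decision before data or output moves on, rather than writing a log entry for later review.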

Continuous Monitoring and Drift Detection

Continuous monitoring tracks model outputs over time to detect performance degradation, bias emergence, or behavioral drift. This must be automated and ongoing; quarterly reviews don't catch what changes between them. Models operating in dynamic environments (financial markets, healthcare diagnostics, customer service) require real-time monitoring of both model behavior and input data quality.

Drift detection identifies when a model's statistical properties diverge from baseline performance. Without automated monitoring, organizations discover drift only after customer complaints or regulatory inquiries expose the problem.
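One common way to quantify this kind of divergence is the Population Stability Index (PSI), which compares how a feature or score is distributed in a baseline window versus a current window. The article does not prescribe a metric, so PSI is an assumption here, as are the bin count and the 0.25 alert threshold (a widely used rule of thumb, not a standard).

```python
import math
from collections import Counter


def psi(baseline: list[float], current: list[float], bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between two samples, using equal-width bins
    derived from the baseline range. Higher values mean larger drift."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # avoid zero width when all baseline values are equal

    def proportions(values: list[float]) -> list[float]:
        # Clamp out-of-range values into the first or last bin.
        counts = Counter(min(max(int((v - lo) / width), 0), bins - 1) for v in values)
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(counts.get(i, 0) / len(values), eps) for i in range(bins)]

    expected, actual = proportions(baseline), proportions(current)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


ALERT_THRESHOLD = 0.25  # assumed rule-of-thumb cutoff for "significant" drift

q1_scores = [i / 100 for i in range(100)]        # baseline: spread across 0..1
q4_scores = [0.5 + i / 200 for i in range(100)]  # current: concentrated in 0.5..1

score = psi(q1_scores, q4_scores)
if score > ALERT_THRESHOLD:
    print(f"drift alert: PSI={score:.2f}")
```

Run on a schedule against live inputs or outputs, a check like this turns drift from something discovered in an audit into something that raises an alert.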

Audit Trail and Evidence Generation

Every governed interaction should automatically generate a record presentable to regulators, auditors, or internal review boards. The difference between raw logs and governance evidence matters here: logs record what happened; governance evidence demonstrates that the right controls were applied, in a format auditors can actually use.

Mature governance infrastructure generates this evidence as a byproduct of normal operation. When a regulator asks how a specific AI decision was governed, the answer is immediate: policy evaluation results, model version, timestamp, data lineage - all structured and queryable, not manually reconstructed after the fact.
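As a rough sketch of what "structured and queryable" could mean, the record below captures the four elements named above. The field names, the example values, and the SHA-256 digest (added here so later tampering is detectable) are illustrative assumptions, not a schema taken from any regulation or product.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class EvidenceRecord:
    interaction_id: str
    model_version: str
    policy_results: dict[str, str]  # policy name -> outcome, e.g. "pass" or "fail"
    data_sources: list[str]         # lineage: where the inputs came from
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def to_json(self) -> str:
        """Serialize with sorted keys so the same record always yields the same text."""
        return json.dumps(asdict(self), sort_keys=True)

    def digest(self) -> str:
        """Content hash that can be stored alongside the record to detect edits."""
        return hashlib.sha256(self.to_json().encode("utf-8")).hexdigest()


records = [
    EvidenceRecord("int-001", "model-v1", {"pii_filter": "pass"}, ["crm.orders"]),
    EvidenceRecord("int-002", "model-v1", {"pii_filter": "fail"}, ["crm.customers"]),
]

# Query: which interactions had at least one failed policy check?
failed = [r.interaction_id for r in records if "fail" in r.policy_results.values()]
print(failed)
```

Because each record is produced at the moment of the interaction, answering a regulator's question becomes a query over existing data rather than a reconstruction exercise.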

Security, Access Control, and Cost Visibility

Security and guardrails control which models can be accessed, what data they can see, and what outputs they can produce. Access controls prevent unauthorized model use. Data leakage prevention protects sensitive information from exposure through AI interactions.

Cost attribution tracks AI spend in real time across teams, models, and applications. This is a governance problem because untracked AI consumption leads to budget overruns and makes it impossible to justify or optimize investment. 98% of FinOps practitioners now manage AI spend, up from 31% in 2024, yet many struggle with visibility due to variable pricing models. Average monthly AI spending will reach $85,521 in 2025, a 36% increase from 2024, with 65% of IT leaders reporting unexpected charges from consumption-based pricing.

Without that visibility, AI spend becomes a black box, and black boxes don't survive budget reviews.
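A minimal sketch of cost attribution follows, assuming usage events that carry a team name, a model name, and a token count. The model names, per-1K-token prices, and budgets are invented for illustration; real prices vary by provider and pricing model.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical per-1K-token prices in USD.
PRICE_PER_1K = {"model-small": 0.5, "model-large": 5.0}


@dataclass(frozen=True)
class Usage:
    team: str
    model: str
    tokens: int


def attribute_costs(events: list[Usage]) -> dict[str, float]:
    """Sum spend per team from raw usage events."""
    totals: dict[str, float] = defaultdict(float)
    for event in events:
        totals[event.team] += event.tokens / 1000 * PRICE_PER_1K[event.model]
    return dict(totals)


def over_budget(totals: dict[str, float], budgets: dict[str, float]) -> list[str]:
    """Teams whose spend exceeds their budget; teams without a budget count as zero."""
    return sorted(team for team, spent in totals.items() if spent > budgets.get(team, 0.0))


events = [
    Usage("claims", "model-large", 3000),
    Usage("claims", "model-small", 2000),
    Usage("support", "model-small", 4000),
]
totals = attribute_costs(events)
print(totals)
print(over_budget(totals, {"claims": 10.0, "support": 5.0}))
```

The same event stream that feeds audit evidence can feed this aggregation, which is why cost visibility fits naturally inside the governance layer.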

Agentic AI: Where Static Governance Breaks Down

Agentic AI refers to systems that initiate actions, invoke tools, coordinate multi-step tasks, and operate across organizational boundaries with limited human oversight. These systems pose a qualitatively different governance challenge from standard LLM deployments.

Deloitte's January 2026 State of AI in the Enterprise report found that close to three-quarters of companies plan to deploy agentic AI within two years, yet only 21% report having a mature governance model for autonomous AI agents. The absence of governance maturity means operational exposure: agents operating without policy enforcement at each step of their workflows.

Why Static Policies Fail for Agents

A policy that says "don't share PII" has no mechanism to stop an agent that dynamically retrieves customer records as part of a multi-tool workflow. Static policies set boundaries at the application level, describing what the system should or shouldn't do overall. Agents, by contrast, operate at the action level: calling APIs, querying databases, triggering workflows, and passing data between tools.

Governance infrastructure for agents must enforce policies at each step of an autonomous workflow, not just at the input/output boundary. That means:

- Tool invocations authorized against policy in real time

- Data access evaluated for compliance at the point of retrieval

- Downstream actions validated before execution
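The sketch below shows one way step-level enforcement might look: every tool call goes through a wrapper that authorizes the invocation first and filters retrieved data second. The `GovernedToolbox` class, the tool names, and the blocked-field list are hypothetical and exist only to illustrate the pattern.

```python
from typing import Any, Callable


class PolicyViolation(Exception):
    """Raised when an agent step is blocked by policy."""


class GovernedToolbox:
    """Wraps an agent's tools so each invocation is checked before it runs."""

    def __init__(self, allowed_tools: set[str], blocked_fields: set[str]) -> None:
        self.allowed_tools = allowed_tools
        self.blocked_fields = blocked_fields
        self.tools: dict[str, Callable[..., dict[str, Any]]] = {}
        self.decisions: list[tuple[str, str]] = []  # (tool name, outcome)

    def register(self, name: str, fn: Callable[..., dict[str, Any]]) -> None:
        self.tools[name] = fn

    def invoke(self, name: str, **kwargs: Any) -> dict[str, Any]:
        # Step 1: authorize the tool invocation before anything executes.
        if name not in self.allowed_tools:
            self.decisions.append((name, "denied"))
            raise PolicyViolation(f"tool '{name}' is not authorized for this agent")
        result = self.tools[name](**kwargs)
        # Step 2: evaluate retrieved data and drop fields the policy blocks.
        cleaned = {k: v for k, v in result.items() if k not in self.blocked_fields}
        self.decisions.append((name, "allowed"))
        return cleaned


def lookup_order(order_id: str) -> dict[str, Any]:
    # Stand-in for a real data source.
    return {"order_id": order_id, "status": "shipped", "ssn": "123-45-6789"}


def send_email(to: str, body: str) -> dict[str, Any]:
    # Stand-in for a downstream action with side effects.
    return {"sent": True, "to": to}


toolbox = GovernedToolbox(allowed_tools={"lookup_order"}, blocked_fields={"ssn"})
toolbox.register("lookup_order", lookup_order)
toolbox.register("send_email", send_email)

print(toolbox.invoke("lookup_order", order_id="A-100"))
try:
    toolbox.invoke("send_email", to="someone@example.com", body="hello")
except PolicyViolation as exc:
    print(f"blocked: {exc}")
```

The design choice that matters is that the agent never holds a direct reference to the underlying tools; the wrapper is the only path, so a policy cannot be skipped mid-workflow.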

The OWASP Top 10 for Agentic Applications (December 2025) highlights critical vulnerabilities including Agent Goal Hijack (hidden prompts turning copilots into exfiltration engines), Tool Misuse, and Identity & Privilege Abuse. Demonstrated research confirms that LLM agents can autonomously exploit vulnerabilities and execute multi-step attacks, making these among the most operationally significant risks in enterprise AI today.

Human-in-the-Loop as Governance Mechanism

Human-in-the-loop governance defines precisely which decision types require human review, what triggers escalation, and what conditions pause or terminate an agent's operation. Effective agentic governance distinguishes between routine decisions that can proceed autonomously and high-risk decisions requiring human judgment. Not every action needs a reviewer, but the ones that do must be enforced at the infrastructure level.

The EU AI Act mandates that high-risk AI systems be designed so they can be effectively overseen by natural persons, with human oversight requirements taking effect in August 2026. Governance infrastructure must operationalize this requirement by addressing three concrete obligations:

- Defining escalation triggers that route high-stakes decisions to human reviewers

- Maintaining audit trails that document where and when human review occurred

- Ensuring agents cannot programmatically bypass oversight mechanisms
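A minimal sketch of these three obligations follows, assuming a single gate that every agent action must pass through. The action kinds, the `REVIEW_THRESHOLD` value, and the `OversightGate` class are hypothetical; real escalation triggers would come from risk and compliance teams, and a real system would authenticate the reviewer rather than accept a name.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Route(Enum):
    AUTO = "auto"
    HUMAN_REVIEW = "human_review"
    HALT = "halt"


@dataclass(frozen=True)
class Action:
    kind: str
    amount: float = 0.0


# Hypothetical escalation rules.
REVIEW_THRESHOLD = 1_000.0       # amounts at or above this need a human reviewer
HALT_KINDS = {"delete_records"}  # action kinds that always stop the agent


def route(action: Action) -> Route:
    """Escalation trigger: decide how an action must be handled."""
    if action.kind in HALT_KINDS:
        return Route.HALT
    if action.amount >= REVIEW_THRESHOLD:
        return Route.HUMAN_REVIEW
    return Route.AUTO


@dataclass
class OversightGate:
    audit_trail: list[dict] = field(default_factory=list)

    def execute(self, action: Action, approved_by: Optional[str] = None) -> str:
        decision = route(action)
        if decision is Route.HALT:
            outcome = "halted"
        elif decision is Route.HUMAN_REVIEW and approved_by is None:
            outcome = "pending_review"  # held until a reviewer approves
        else:
            outcome = "executed"
        # Every decision is recorded, including who reviewed it (if anyone).
        self.audit_trail.append(
            {"action": action.kind, "route": decision.value,
             "reviewer": approved_by, "outcome": outcome}
        )
        return outcome


gate = OversightGate()
print(gate.execute(Action("refund", 50.0)))
print(gate.execute(Action("refund", 5_000.0)))
print(gate.execute(Action("refund", 5_000.0), approved_by="reviewer-1"))
print(gate.execute(Action("delete_records")))
```

Routing lives in the gate rather than in the agent's own logic, which is what keeps the agent from deciding for itself that a high-stakes action does not need review.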

Building Governance In, Not Bolting It On

Governance built into the AI stack at deployment is operationally cheaper, technically simpler, and more effective than governance retrofitted onto systems designed without it. In practice, this principle translates into three actions:

- Integrate a governance layer at deployment: a control plane that operates as a proxy between AI applications and underlying models, enforcing policies at runtime without code changes to existing systems

- Define policies before production: data access restrictions, cost limits, output filters, and escalation triggers should be set before use cases go live, not after incidents occur

- Establish monitoring from day one: continuous monitoring, audit trail generation, and drift detection should begin with the first production interaction, not after scale creates visibility gaps

Cross-Functional Ownership Model

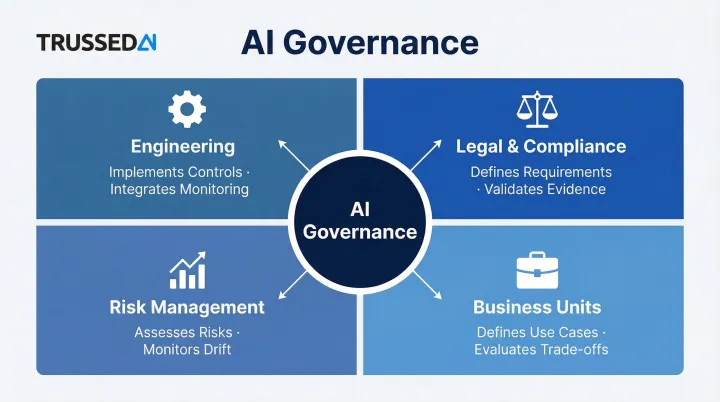

Technical controls only work when someone owns them. Mature AI governance requires defined accountability across engineering teams, legal and compliance, risk management, and business unit leaders, each with specific responsibilities:

- Engineering: Implements governance controls, integrates monitoring, maintains audit infrastructure

- Legal and Compliance: Defines regulatory requirements, reviews policies, validates evidence generation

- Risk Management: Assesses operational risks, defines escalation criteria, monitors drift and failures

- Business Units: Defines use case requirements, validates policy applicability, evaluates governance trade-offs

The most effective structures embed governance accountability into performance criteria rather than treating it as a separate audit function. When engineering teams are measured on governance compliance alongside feature delivery, governance becomes operational infrastructure rather than bureaucratic overhead.

Compliance Evidence Generation for Regulated Industries

For enterprises in regulated industries - insurance, healthcare, financial services - governance infrastructure means maintaining continuous compliance evidence that maps to specific regulatory requirements.

| Regulation | Governance Requirement | Enforcement Approach |
|---|---|---|
| HIPAA | Technical controls monitoring real-time activity in systems with ePHI | Runtime monitoring and access controls |
| EU AI Act | Automatic logging, traceability, human oversight for high-risk systems | Continuous audit trails and escalation mechanisms |
| Federal Reserve SR 11-7 | Continuous monitoring for model drift and unexpected behavior | Automated drift detection and alerting |
| ISO/IEC 42001:2023 | Traceability, transparency, continuous monitoring of AI systems | Evidence generation as operational byproduct |

Compliance evidence generated automatically as a byproduct of governed AI interactions is a clear operational improvement over evidence assembled manually after the fact. When every interaction is logged with policy evaluation results, model version, timestamp, and data lineage, regulatory inquiries can be answered with contemporaneous evidence, not weeks of manual reconstruction.

Trussed AI gets governance workflows operational in under four weeks. Every governed interaction automatically produces audit-ready evidence, so compliance becomes a continuous state rather than a quarterly scramble.

Frequently Asked Questions

What is the difference between AI governance and traditional IT governance?

Traditional IT governance focuses on systems with deterministic, auditable behavior: servers, databases, and networks that behave predictably. AI governance addresses systems that learn, adapt, and produce probabilistic outputs, requiring runtime enforcement, drift detection, and continuous evidence generation rather than just access controls and change management.

What does AI governance infrastructure actually consist of?

AI governance infrastructure spans five core capabilities:

- Runtime policy enforcement: evaluating rules at the moment of execution

- Continuous monitoring and drift detection: tracking model behavior over time

- Automated audit trail generation: creating compliance evidence as a byproduct

- Security and guardrails: controlling access and outputs

- Cost attribution: tracking spend across teams and models

Why do static AI policies fail in production?

Static policies define intent but have no enforcement mechanism at the moment AI systems operate. Without a runtime layer that checks each interaction against defined rules, violations are discovered only retrospectively, long after operational or regulatory damage has occurred. Policies must be enforced in real time to be effective.

How does governance for agentic AI differ from governance for standard AI models?

Agentic systems operate autonomously across multi-step workflows, invoke external tools, and take actions that static policies cannot intercept. Governance for agents must enforce rules at each step of a workflow (every tool call, data access, and system interaction), not just at input/output boundaries where traditional governance operates.

What are the consequences of scaling AI without governance infrastructure?

Shadow AI, inconsistent standards, regulatory exposure, and costly retroactive fixes are the most common outcomes. The operational risk is measurable: 97% of organizations that experienced AI-related breaches lacked proper AI access controls.

How long does it take to implement AI governance infrastructure?

Timelines depend on complexity and organizational readiness. Purpose-built platforms with drop-in proxy integration and zero-code-change deployment can bring governance workflows live in weeks; organizations with cross-functional alignment typically reach operational status in about four weeks.