Introduction

GRC teams face a dual challenge in 2026: AI has become both the most powerful tool to modernize governance programs and the newest, most rapidly evolving risk category that governance frameworks must manage. 60% of GRC users still manage compliance manually using spreadsheets, while their organizations deploy AI systems faster than governance processes can adapt. The result is regulatory exposure, operational fragility, and rising costs that traditional compliance frameworks cannot address.

2026 is where these pressures converge. The EU AI Act's core provisions become fully applicable on August 2, 2026, establishing risk-based AI categories with penalties reaching €35 million or 7% of global turnover. Meanwhile, 23% of organizations are already scaling agentic AI systems that autonomously execute decisions across business functions.

Yet 66% of directors report their boards have limited to no knowledge or experience with AI, and nearly one-third say AI doesn't appear on board agendas at all. GRC teams can no longer treat AI as a peripheral IT concern when regulators, executives, and operational reality all demand accountability.

TL;DR

- Agentic AI automates GRC workflows including control monitoring, audit evidence generation, and regulatory change mapping, reducing the 11,800 manual hours that SOX compliance alone consumes annually

- Real-time compliance monitoring replaces quarterly audit snapshots, generating continuous evidence streams rather than periodic reports assembled under deadline pressure

- AI governance is becoming a formal GRC discipline, requiring asset inventory, model risk classification, runtime enforcement, and drift monitoring

- Shadow AI and embedded third-party AI create invisible risk surfaces, with over 80% of workers using unapproved AI tools that traditional frameworks cannot detect

- EU AI Act enforcement and rising board accountability demands require governance infrastructure built for dynamic AI systems, not periodic compliance snapshots

Agentic AI Is Redefining What GRC Automation Looks Like

Agentic AI in GRC means deploying systems that autonomously execute compliance checks, generate audit trails, monitor policy adherence in real time, flag anomalies, and trigger remediation workflows, without waiting for manual triggers from GRC staff. Unlike traditional automation that follows rigid scripts, agentic systems interpret regulatory requirements, map them to existing controls, and adapt their monitoring as rules evolve.

Where this adoption is appearing, an agentic system typically:

- Scans new regulations, identifies affected controls, and maps compliance gaps without manual review

- Assesses third-party AI systems against risk frameworks continuously, updating vendor scores as behaviors change

- Generates audit evidence as a byproduct of every governed interaction, eliminating end-of-cycle documentation scrambles

- Evaluates every AI interaction against organizational policies before execution, preventing violations rather than detecting them after the fact

The productivity opportunity here is substantial. The average SOX compliance program requires 11,800 hours annually, with tests of effectiveness consuming the largest share of that time.

Adoption is accelerating: 49% of organizations now use technology for 11 or more compliance activities, and 46% are piloting or using AI in data and predictive analytics. Across financial services, 50% of firms report increased productivity, efficiency, and cost savings from compliance technology.

The governance nuance this introduces:

When an AI agent flags a risk incorrectly or approves a non-compliant action, accountability becomes ambiguous. GRC programs must answer some uncomfortable questions before deploying these systems:

- Who owns the outcome when an agent approves a vendor that later fails compliance?

- Which decisions require human confirmation versus full automation?

- What escalation paths exist when agent confidence falls below acceptable thresholds?

Organizations need risk-tiered decision logic that routes high-stakes calls to human judgment while allowing agents to handle routine compliance validation autonomously.
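The routing idea above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the tier names, the `AgentDecision` fields, and the confidence thresholds are all assumptions chosen for the example, and a real program would derive them from its own risk appetite.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class AgentDecision:
    action: str
    risk_tier: RiskTier
    confidence: float  # agent's self-reported confidence, 0.0 to 1.0


# Hypothetical thresholds: minimum confidence for autonomous handling per tier.
# HIGH has no entry on purpose, so high-stakes decisions always go to a human.
MIN_CONFIDENCE = {RiskTier.LOW: 0.70, RiskTier.MEDIUM: 0.90}


def route(decision: AgentDecision) -> Route:
    """Send a decision to a human unless its tier allows automation
    and the agent's confidence clears that tier's threshold."""
    threshold = MIN_CONFIDENCE.get(decision.risk_tier)
    if threshold is None or decision.confidence < threshold:
        return Route.HUMAN_REVIEW
    return Route.AUTO_APPROVE


if __name__ == "__main__":
    print(route(AgentDecision("validate_access_review", RiskTier.LOW, 0.85)))
    print(route(AgentDecision("approve_vendor", RiskTier.HIGH, 0.99)))
```

Keeping the thresholds in a single table makes the escalation boundary something an auditor can read and a risk owner can sign off on, rather than logic scattered across agent prompts.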

With that governance scaffolding in place, agentic AI handling routine work frees GRC teams to focus where human judgment actually matters: strategic risk decisions, vendor oversight, and regulatory interpretation. The teams that define clear escalation boundaries now will be the ones operating GRC as a strategic function, rather than a documentation backlog, by 2026.

Continuous Compliance Monitoring Replaces Point-in-Time Audits

Traditional GRC relied on quarterly or annual audit snapshots to assess control effectiveness. AI now enables always-on monitoring of transactions, model behaviors, access controls, and policy adherence, generating a continuous stream of compliance evidence rather than a periodic report.

How this manifests in practice:

- Updates control status dashboards, policy violations, and risk indicators continuously rather than monthly

- Flags deviations against regulatory requirements as they occur, not months later during an audit

- Generates audit-ready evidence packages throughout the year, eliminating last-minute documentation scrambles

Regulatory penalties confirm the cost of detection gaps. The UK FCA fined Sigma Broking £1.08 million for failing to submit accurate transaction reports over 5 years due to incorrect system setups that persisted undetected. ESMA fined REGIS-TR €1.37 million for inadequate policies and operational risk failures. Both failures would likely have been detectable earlier with real-time monitoring.

Regulators are shifting expectations accordingly. Article 72 of the EU AI Act mandates that providers of high-risk AI systems establish post-market monitoring to actively and systematically collect performance data throughout the system's lifetime, enabling continuous compliance evaluation rather than point-in-time certification.

The operational challenge:

Continuous monitoring generates high volumes of signals. Without intelligent prioritization, compliance teams grow desensitized to constant notifications. GRC programs must build escalation logic and risk-tiered thresholds to focus human attention on meaningful deviations. Critical violations should surface immediately; low-severity issues can be batched for periodic review.
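As a rough sketch of that escalation logic, the Python below splits incoming signals into an immediate queue and a batched queue. The severity labels, the `ESCALATE_AT` cutoff, and the `Signal` fields are assumptions made for illustration; they are not drawn from any particular monitoring product.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical severity scale and escalation cutoff.
SEVERITY_ORDER = {"low": 0, "medium": 1, "high": 2, "critical": 3}
ESCALATE_AT = "high"


@dataclass(frozen=True)
class Signal:
    control_id: str
    severity: str
    message: str


def triage(signals):
    """Return (immediate, batched).

    immediate: signals at or above ESCALATE_AT, most severe first.
    batched: lower-severity signals grouped by control for periodic review.
    """
    cutoff = SEVERITY_ORDER[ESCALATE_AT]
    immediate = []
    batched = defaultdict(list)
    for signal in signals:
        if SEVERITY_ORDER[signal.severity] >= cutoff:
            immediate.append(signal)
        else:
            batched[signal.control_id].append(signal)
    immediate.sort(key=lambda s: SEVERITY_ORDER[s.severity], reverse=True)
    return immediate, dict(batched)


if __name__ == "__main__":
    feed = [
        Signal("AC-2", "low", "stale account detected"),
        Signal("TR-1", "critical", "transaction report rejected"),
        Signal("AC-2", "medium", "access review overdue"),
        Signal("LG-4", "high", "audit logging disabled"),
    ]
    urgent, review_queue = triage(feed)
    print([s.control_id for s in urgent])
    print(sorted(review_queue))
```

Grouping the batched signals by control keeps the periodic review focused on which controls are noisy, not on individual notifications.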

AI Governance Becomes a Core GRC Function

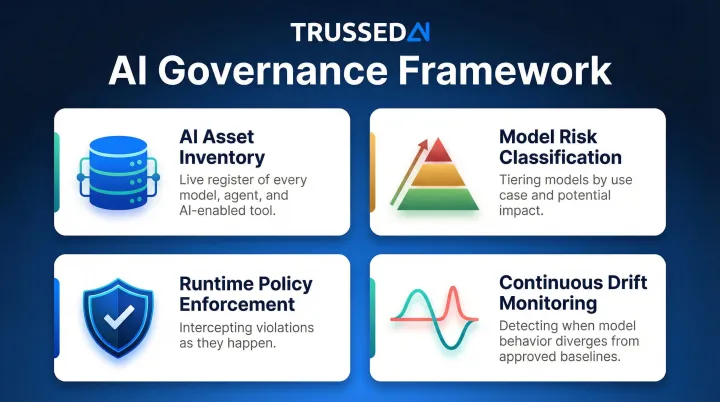

As enterprises deploy AI applications, agents, and workflows across business units, governing those AI systems becomes a dedicated workstream within GRC programs. This means tracking AI inventory, classifying model risk, enforcing runtime policies, and generating audit evidence of AI compliance, well beyond documenting policies in static documents.

Core elements GRC teams must now manage for AI systems:

- AI asset inventory: a live register of every model, agent, and AI-enabled tool across the organization, including deployment location and designated owner

- Model risk classification: tiering models by use case and potential impact so governance standards match actual exposure, not a one-size-fits-all checklist

- Runtime policy enforcement: intercepting violations as they happen, not surfacing them weeks later during a compliance review

- Continuous drift monitoring: detecting when model behavior quietly diverges from approved baselines, even without an explicit model update
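The first two elements, inventory and risk classification, can be sketched together. The example below is a simplified illustration in Python: the `AIAsset` fields and the tiering rule in `classify` are assumptions for the sake of the example, and the tiers are not the EU AI Act's legal risk categories.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIAsset:
    name: str
    owner: str
    deployment: str  # where the asset runs, e.g. "prod/claims-service"
    use_case: str
    handles_personal_data: bool = False
    makes_automated_decisions: bool = False


def classify(asset: AIAsset) -> str:
    """Illustrative tiering rule; a real program would encode its own criteria."""
    if asset.makes_automated_decisions and asset.handles_personal_data:
        return "high"
    if asset.makes_automated_decisions or asset.handles_personal_data:
        return "medium"
    return "low"


class Inventory:
    """In-memory register keyed by asset name."""

    def __init__(self):
        self._assets = {}

    def register(self, asset: AIAsset) -> None:
        # Refuse entries without an accountable owner.
        if not asset.owner.strip():
            raise ValueError(f"{asset.name}: every asset needs a designated owner")
        self._assets[asset.name] = asset

    def by_tier(self) -> dict:
        tiers = {"high": [], "medium": [], "low": []}
        for asset in self._assets.values():
            tiers[classify(asset)].append(asset.name)
        return tiers


if __name__ == "__main__":
    inv = Inventory()
    inv.register(AIAsset("claims-triage-agent", "j.doe", "prod/claims", "claims routing",
                         handles_personal_data=True, makes_automated_decisions=True))
    inv.register(AIAsset("doc-summarizer", "a.lee", "prod/intranet", "internal summaries"))
    print(inv.by_tier())
```

Rejecting ownerless entries at registration time is a deliberate choice here: it turns "designated owner" from a documentation field into a precondition for deployment.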

The current gap is substantial. Only 25% of organizations have fully implemented AI governance programs, and just 27% of boards have formally incorporated AI governance into committee charters. Only 31% have a formal, comprehensive AI policy in place. In healthcare, only 12% of U.S. hospitals have formal AI governance frameworks aligned with the NIST AI RMF.

The practical infrastructure requirement:

Static, document-based policies cannot enforce themselves at runtime. Organizations need control plane infrastructure that intercepts AI interactions and applies governance rules dynamically. Platforms like Trussed AI address this by turning policies into real-time runtime enforcement with complete audit trails generated automatically, deployed as a drop-in integration with no changes to existing application code.
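To make the idea of a control plane concrete, here is a minimal Python sketch of a gate that evaluates policies before a model call and records an audit entry either way. It is a generic illustration and does not depict how any vendor's product works; the `Request` fields, the two example policies, the approved model name, and the `call_model` callback are all hypothetical.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Request:
    user_role: str
    model: str
    prompt: str


# A policy returns a violation message, or None when the request is acceptable.
Policy = Callable[[Request], Optional[str]]

SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
APPROVED_MODELS = {"internal-llm-v1"}  # hypothetical approved list


def no_ssn_in_prompt(req: Request) -> Optional[str]:
    if SSN_PATTERN.search(req.prompt):
        return "prompt contains an SSN-like value"
    return None


def approved_model_only(req: Request) -> Optional[str]:
    if req.model not in APPROVED_MODELS:
        return f"model {req.model!r} is not on the approved list"
    return None


POLICIES = [no_ssn_in_prompt, approved_model_only]


def enforce(req: Request, policies, call_model, audit_log: list):
    """Evaluate every policy before execution; log the outcome in both cases."""
    violations = [msg for policy in policies if (msg := policy(req)) is not None]
    audit_log.append({
        "role": req.user_role,
        "model": req.model,
        "allowed": not violations,
        "violations": violations,
    })
    if violations:
        return None  # blocked before the model is ever called
    return call_model(req.prompt)


if __name__ == "__main__":
    log = []
    fake_model = lambda prompt: f"summary of: {prompt}"  # stand-in for a real model call
    print(enforce(Request("analyst", "internal-llm-v1", "Summarize control SOX-12"),
                  POLICIES, fake_model, log))
    print(enforce(Request("analyst", "public-chatbot", "Customer SSN is 123-45-6789"),
                  POLICIES, fake_model, log))
    print(log)
```

Because the audit entry is written inside `enforce`, evidence is a byproduct of the governed call rather than something assembled later.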

Why this trend is accelerating into 2026:

Several converging forces are pushing AI governance from optional to mandatory:

- The EU AI Act bans certain practices outright (such as subliminal manipulation and social scoring) and requires documented controls for high-risk systems, covering biometrics, critical infrastructure, and beyond

- High-risk system requirements include risk management, data governance, logging, human oversight, and cybersecurity, each of which needs traceable evidence

- NIST AI RMF adoption is expanding across regulated sectors, with healthcare and financial services under the most direct pressure

- Boards and regulators now expect AI risk to appear on enterprise risk registers alongside cybersecurity and operational risk

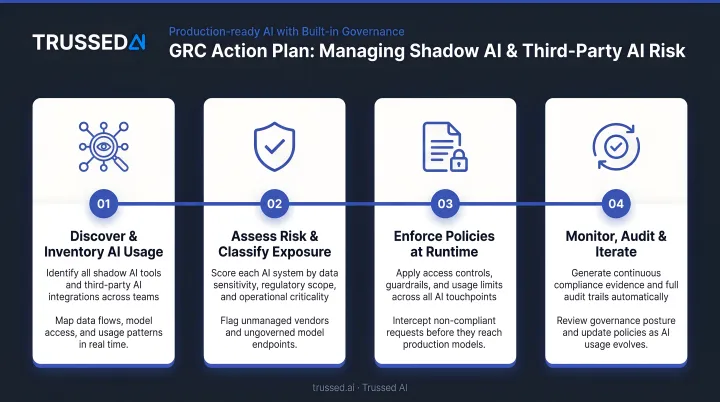

Shadow AI and Third-Party AI Expand the Risk Surface GRC Must Cover

Employees adopt unauthorized AI tools to improve personal productivity (shadow AI), and vendors embed AI capabilities into products without disclosing model behaviors, data handling, or change processes. Both create GRC blind spots that policies alone cannot address.

Over 80% of workers use unapproved AI tools in their jobs, and 60% say they will use shadow AI if it helps meet deadlines. Meanwhile, 60% of organizations have experienced at least one data exposure event linked to a public generative AI tool, with related breaches costing an average of $4.2 million globally.

The exposure gets worse when shadow AI touches regulated data. A healthcare employee using an unapproved tool to summarize patient records creates HIPAA exposure that no audit trail can retroactively capture, and the same logic applies across financial services, insurance, and any environment where compliance depends on knowing exactly what processed what data.

What GRC teams must now do:

- Discover all AI in use, including developer environments, business workflows, and embedded vendor capabilities, not just approved applications

- Require vendor transparency on AI components: model behaviors, data handling practices, and change management processes before procurement

- Track unauthorized API calls to public AI services and unapproved browser extensions through IT and security monitoring

- Maintain an approved AI technology list and enforce it at the network and application layer, not just through policy
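One way to picture the last two steps is a check that classifies outbound requests against an approved list. The Python sketch below assumes a proxy or egress log that yields URLs; the hostnames are placeholders on reserved example domains, and the set of known AI endpoints would in practice come from a maintained feed rather than a hard-coded set.

```python
from urllib.parse import urlsplit

# Hypothetical lists for illustration only.
APPROVED_AI_HOSTS = {"ai.internal.example.com"}
KNOWN_AI_HOSTS = APPROVED_AI_HOSTS | {"api.public-ai.example", "chat.public-ai.example"}


def classify_request(url: str) -> str:
    """Label an outbound URL as 'approved', 'shadow_ai', or 'not_ai'."""
    host = (urlsplit(url).hostname or "").lower()
    if host in APPROVED_AI_HOSTS:
        return "approved"
    if host in KNOWN_AI_HOSTS:
        return "shadow_ai"
    return "not_ai"


def find_shadow_ai(urls):
    """Return the URLs that hit known AI endpoints outside the approved list."""
    return [url for url in urls if classify_request(url) == "shadow_ai"]


if __name__ == "__main__":
    egress_log = [
        "https://ai.internal.example.com/v1/chat",
        "https://api.public-ai.example/v1/complete",
        "https://intranet.example.com/wiki",
    ]
    print(find_shadow_ai(egress_log))
```

The same classification could drive blocking at a gateway instead of reporting; the sketch only covers detection.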

The NIST AI RMF updates emphasize that non-transparent integration of upstream third-party components diminishes accountability, requiring organizations to inventory third-party entities and establish approved technology lists. Platforms like Trussed AI address this directly by providing full visibility across the AI stack, including developer environments and production systems, so GRC teams can enforce approved-tool policies in real time rather than discovering violations after the fact.

What's Driving the AI-in-GRC Shift

Regulatory acceleration:

Sector-specific AI rules are moving from guidance to enforceable requirements across multiple fronts:

- The EU AI Act reaches full enforcement in 2026, establishing risk-based AI categories with fines up to €35 million or 7% of global annual turnover for prohibited practices, and applies beyond EU borders to any provider whose AI output targets EU users

- EIOPA published an Opinion in August 2025 clarifying AI governance and risk management expectations for the insurance sector

- The FDA issued draft guidance in January 2025 on lifecycle management for AI-enabled device software in healthcare

Technology maturation:

AI capabilities for real-time monitoring, anomaly detection, and policy enforcement have matured sufficiently for enterprise deployment. At the same time, AI adoption inside organizations is outpacing the governance frameworks meant to manage it, generating governance debt that regulators are now actively scrutinizing.

Competitive and cost-efficiency pressures:

Organizations that demonstrate robust AI governance gain competitive advantage in regulated industries where customer trust is a differentiator. GRC teams face headcount constraints and cannot scale manual oversight linearly with AI portfolio growth. AI-powered governance is how GRC teams close that gap without proportional headcount growth.

Opportunities Created

AI in GRC enables significant reduction in manual compliance workload, faster audit readiness, continuous risk visibility, and the ability to scale governance across a growing AI portfolio without proportional headcount growth.

Gartner projects that effective AI governance technologies could reduce regulatory expenses by 20%, freeing up resources for innovation. A Forrester TEI study on Workiva's platform found a 208% ROI, saving 3,565 hours annually on reporting and 2,011 hours on auditing tasks.

Organizations implementing modern AI governance frameworks report measurable gains:

- 50% reduction in manual governance workload

- 50% increase in regulatory compliance

- ~4 weeks to get operational workflows live

That frees GRC teams to focus on higher-stakes decisions (evaluating emerging AI risks, advising on policy changes, and managing third-party model exposure) rather than assembling compliance documentation by hand.

Challenges GRC Teams Must Navigate

Static Policies Cannot Govern Dynamic AI Systems

Model drift, behavioral shifts, and new deployment contexts mean yesterday's compliant AI may be today's liability. GRC must shift from policy documentation to continuous control enforcement.
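A simple way to illustrate continuous control over drift is to compare the distribution of a model's recent outputs with an approved baseline. The Python sketch below uses the Population Stability Index (PSI) for that comparison; PSI is one common choice among several, and the 0.25 alert threshold is an assumed rule of thumb for this example, not a regulatory value.

```python
import math
from collections import Counter

DRIFT_THRESHOLD = 0.25  # assumed alerting threshold for this sketch


def _shares(labels, categories, eps=1e-6):
    """Share of each category, floored at eps to avoid log(0)."""
    if not labels:
        raise ValueError("cannot compute a distribution from an empty sample")
    counts = Counter(labels)
    return {c: max(counts[c] / len(labels), eps) for c in categories}


def psi(baseline, recent) -> float:
    """Population Stability Index between two samples of categorical outputs."""
    categories = sorted(set(baseline) | set(recent))
    b = _shares(baseline, categories)
    r = _shares(recent, categories)
    return sum((r[c] - b[c]) * math.log(r[c] / b[c]) for c in categories)


def has_drifted(baseline, recent, threshold=DRIFT_THRESHOLD) -> bool:
    return psi(baseline, recent) > threshold


if __name__ == "__main__":
    approved_baseline = ["approve"] * 90 + ["deny"] * 10
    this_week = ["approve"] * 50 + ["deny"] * 50
    print(round(psi(approved_baseline, this_week), 3))
    print(has_drifted(approved_baseline, this_week))
```

A check like this would run on a schedule against logged outputs, so a behavioral shift is caught even when no model update was announced.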

AI "hallucinations" occur anywhere from 3% to 25% of the time, contributing to business errors when AI-generated content is used without proper human oversight.

Talent and Organizational Gaps

The technical challenge is compounded by a people problem. Effective AI governance requires GRC professionals to understand AI/ML system behaviors, and AI/ML teams to understand regulatory requirements. Few organizations have both, leaving critical AI behaviors ungoverned.

The data reflects how wide this gap has grown:

- 78% of Chief Audit Executives cite data analysis as the top competency they want to improve among their staff

- Nearly 60% of respondents cite knowledge and training gaps as the primary barrier to implementing responsible AI practices

Bridging this gap requires both tooling and organizational structures (shared platforms, defined accountability frameworks, and cross-functional training) that most organizations are still building.

Future Signals: What GRC Leaders Should Watch Through 2027

Signal 1: Regulatory specificity will increase

Sector regulators in financial services, healthcare, and insurance are expected to issue AI-specific guidance that moves beyond principles to prescriptive requirements. The UK FCA is conducting a long-term review into AI and retail financial services with a deadline of February 24, 2026, to shape future regulatory models. The EBA's 2026 work programme includes specific follow-up actions to map AI Act requirements against sectoral measures.

GRC teams that begin mapping current AI inventories to anticipated requirements now will be better positioned than those waiting for final rules. Practical starting points include:

- Cataloging AI systems by use case, risk tier, and regulatory jurisdiction

- Identifying gaps between current documentation and likely disclosure requirements

- Assigning ownership for each system ahead of formal obligations

Signal 2: Multi-agent orchestration will create new attribution challenges

AI architectures are shifting from single models to interconnected agent networks, where agents call other agents, tools, and external APIs in sequence. Tracing accountability for a compliance failure back to a specific decision point in these systems is a problem current GRC frameworks weren't built to solve.

Chain-of-custody evidence across distributed agent systems, where decisions cascade through multiple autonomous actors, requires new instrumentation. Organizations deploying agentic workflows should treat attribution logging as a first-order design requirement, not an afterthought.
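As one possible shape for that instrumentation, the Python sketch below keeps an append-only log in which each entry names its parent step and carries a hash of the previous entry, so a decision can be traced back through the agents that led to it and after-the-fact edits can be detected. The class name, field names, and agent names are hypothetical, and timestamps and signing are left out to keep the example short.

```python
import hashlib
import json
from typing import Optional

GENESIS_HASH = "0" * 64


class AttributionLog:
    """Append-only, hash-chained record of agent actions."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the stored hash itself.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def record(self, agent: str, action: str,
               parent_id: Optional[int] = None, data_accessed=()) -> int:
        entry = {
            "id": len(self.entries),
            "agent": agent,
            "action": action,
            "parent_id": parent_id,  # the step that triggered this one
            "data_accessed": list(data_accessed),
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS_HASH,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry["id"]

    def verify(self) -> bool:
        """True if no entry has been altered or reordered since it was written."""
        prev = GENESIS_HASH
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True

    def trace(self, entry_id: int) -> list:
        """Walk parent links from an entry back to the originating step."""
        chain = []
        current = entry_id
        while current is not None:
            entry = self.entries[current]
            chain.append(f"{entry['agent']}:{entry['action']}")
            current = entry["parent_id"]
        return list(reversed(chain))


if __name__ == "__main__":
    log = AttributionLog()
    intake = log.record("intake-agent", "received_vendor_request")
    check = log.record("risk-agent", "scored_vendor", parent_id=intake,
                       data_accessed=["vendor_questionnaire"])
    final = log.record("approval-agent", "approved_vendor", parent_id=check)
    print(log.trace(final))
    print(log.verify())
```

Recording `parent_id` at write time is the design choice that matters: attribution is captured when the call happens, not reconstructed from scattered application logs afterward.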

Signal 3: AI governance and traditional GRC platforms will converge

Spending on AI governance platforms is expected to reach $492 million in 2026 and surpass $1 billion by 2030. Gartner notes that traditional GRC tools are not equipped for real-time AI decision automation, pushing organizations toward specialized platforms that enforce policy at runtime. Organizations that integrate AI governance into existing GRC workflows now will carry lower compliance costs into 2027 than those still managing disconnected point solutions when consolidation pressure peaks.

Conclusion

2026 presents GRC professionals with a dual mandate: AI is both the most powerful tool available to modernize governance programs and the newest, most rapidly evolving risk category that those same programs must manage. Organizations that act on both dimensions now, deploying AI to automate compliance workloads while building governance infrastructure for AI systems themselves, will be better positioned when regulatory enforcement intensifies.

GRC leaders who start building that infrastructure today will enter 2027 with a clear competitive and regulatory advantage over peers still running documentation-based, periodic-audit frameworks. Three capabilities will separate leaders from laggards:

- Real-time policy enforcement applied at the point of every AI interaction

- AI asset visibility across every model, agent, and workflow in production

- Continuous compliance evidence generated automatically, not assembled manually before audits

The organizations that treat AI governance as operational infrastructure, not a compliance checkbox, are the ones that will scale AI confidently through what comes next.

Frequently Asked Questions

What are the principles of AI governance?

AI governance rests on five core principles:

- Transparency: decisions must be explainable and auditable

- Accountability: clear ownership of AI outcomes and failures

- Fairness: no discriminatory bias in training data or outputs

- Security: protection from adversarial misuse and data breaches

- Continuous oversight: ongoing monitoring, not one-time deployment validation

What are the types of AI risk?

AI risk falls into five primary categories:

- Model risk: flawed design or biased training data producing bad outputs

- Security risk: adversarial attacks, prompt injection, and data breaches

- Regulatory risk: non-compliance with laws like the EU AI Act

- Decision-making risk: high-stakes automated decisions without human review

- Operational risk: model drift, system failure, or performance degradation in production

Can AI do risk management?

Yes. AI can automate risk assessments, monitor controls continuously, detect anomalies in real time, and generate audit-ready evidence as a byproduct of governed interactions. Human judgment remains essential, though, for interpreting findings, setting risk appetite, and making high-stakes governance calls.

What are the topics of AI governance and policy?

AI governance spans several interconnected domains:

- AI inventory management: tracking what models and agents are in use

- Model risk classification by use case and impact level

- Bias and fairness auditing across training data and outputs

- Regulatory adherence: EU AI Act, NIST AI RMF, and sector-specific rules

- Third-party AI oversight and runtime policy enforcement for deployed systems

What is the difference between AI governance and traditional GRC?

Traditional GRC governs static processes and documented controls that rarely change. AI governance must address systems where behavior can shift without explicit updates, due to model drift or new training data. That demands continuous monitoring, runtime policy enforcement, and audit trails capturing decision chains, not just periodic compliance reviews.

How does agentic AI change GRC requirements?

Agentic AI (systems that autonomously take actions and make decisions) introduces accountability gaps when agents operate outside direct human supervision. GRC programs must define agent-specific controls, establish human escalation checkpoints for high-stakes decisions, and maintain audit trails that capture not just outputs but the reasoning chain behind autonomous decisions, including which tools were called and what data was accessed.