Introduction

Enterprises are racing to deploy LLMs across applications, workflows, and autonomous agents - but governance has not kept pace with the infrastructure decisions being made underneath these deployments. 88% of organizations now use AI in at least one business function, yet fewer than 1% have fully operationalized responsible AI. Across industries, the default approach is the same: build for speed and scale, treat governance as something to layer on later.

This article examines how governance requirements force concrete infrastructure choices, from storage and compute to routing, latency budgets, and observability.

TLDR - Key Takeaways

- LLM governance is an active infrastructure constraint, not a policy add-on

- Each governance requirement drives specific infrastructure decisions with real cost tradeoffs

- Runtime enforcement requires different architecture than design-time governance

- Agentic AI introduces governance challenges most current infrastructure has not accounted for

- Regulated industries must treat compliance mandates as infrastructure requirements from day one

What Is LLM Governance and Why Does It Stress-Test AI Infrastructure

LLM governance encompasses the policies, controls, and operational processes that ensure large language models operate within defined parameters across their full lifecycle: from development through deployment, monitoring, and retirement.

Traditional software governance assumes predictable outputs. LLM governance can't. Model drift, non-deterministic outputs, and bias amplification mean behavior under novel inputs is never guaranteed. Generative AI models sample from learned distributions, introducing intrinsic stochasticity that makes static input validation insufficient. Governance must operate continuously across the full inference path.

The Governance Maturity Gap

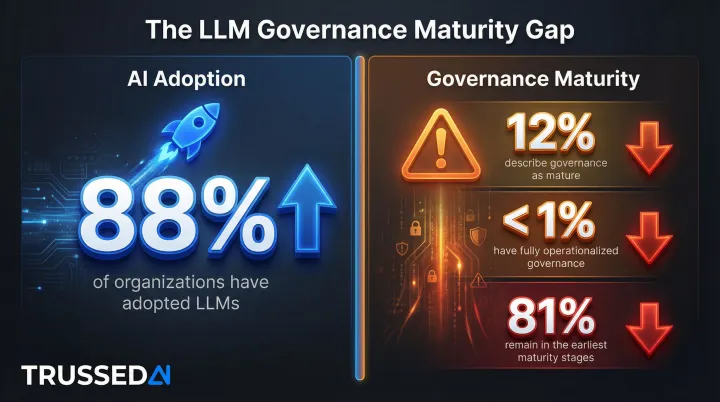

The pace of LLM adoption has dramatically outrun governance maturity:

- 88% of organizations use AI in at least one business function, up from 78% in 2024

- Only 12% describe their governance efforts as mature

- Fewer than 1% have fully operationalized responsible AI

- 81% remain in the earliest stages of governance maturity

This gap creates real operational risk. When governance is bolted on after deployment, policy violations go undetected until damage is done, audit trails require manual reconstruction, and compliance evidence takes weeks to compile instead of hours. That's not a process problem - it's an infrastructure problem.

Governance as Infrastructure Requirement

When organizations deploy LLMs in production, governance demands - audit trails, policy enforcement, model versioning, access control - must be served by the infrastructure itself. These create infrastructure load and architectural constraints, not just process checklists.

Key governance components that interact directly with infrastructure:

- Regulatory compliance (GDPR, HIPAA, EU AI Act, NIST AI RMF): requires data residency controls, retention policies, and audit-ready evidence generation

- Data security and privacy controls: encryption, access segmentation, and PII/PHI handling enforced at the infrastructure layer

- Real-time monitoring and anomaly detection: generates substantial telemetry volumes that need dedicated storage and indexing pipelines

- Human oversight mechanisms: escalation infrastructure and circuit-breaker patterns built into the request flow

- Continuous model evaluation: versioning systems, A/B testing infrastructure, and rollback capabilities that run alongside production traffic

NIST's AI Risk Management Framework Generative AI Profile identifies risks unique to or exacerbated by generative AI, including confabulation (hallucinations), harmful bias, and information integrity issues. It recommends establishing transparency policies for documenting training data origin and evaluating risk-relevant capabilities before deployment. Both requirements translate directly into infrastructure constraints.

How Governance Requirements Translate Directly into Infrastructure Decisions

Each governance requirement forces a specific infrastructure design decision. Here's the systematic mapping:

Audit Logging and Traceability → Storage and Data Pipeline Architecture

Complete audit trails of every model interaction, decision input/output, and policy enforcement event require dedicated, tamper-evident logging infrastructure. High-throughput LLM deployments generate substantial log volumes that stress traditional logging systems.

The scale challenge: A single LLM trace is approximately 25KB (prompts, completions, token counts, costs, and embeddings), which is 50x larger than a traditional web request trace (~500 bytes). A moderately busy LLM application with 10,000 users per day, each generating 5 interactions with 10 traces per interaction, produces 500,000 traces daily. That equates to 12.5GB of log data per day, or roughly 4.5TB annually.
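The estimate above can be reproduced with a few lines of arithmetic. This is a minimal sketch that takes the trace size and traffic figures from the text as given and uses decimal units (1 GB = 1,000,000 KB). The six-year projection is an illustrative extension based on the HIPAA retention period mentioned in this article, before any compression, indexing overhead, or traffic growth.

```python
# Figures taken from the text; decimal units (1 GB = 1_000_000 KB).
TRACE_SIZE_KB = 25            # approximate size of one LLM trace
WEB_TRACE_SIZE_BYTES = 500    # approximate size of a traditional web trace
USERS_PER_DAY = 10_000
INTERACTIONS_PER_USER = 5
TRACES_PER_INTERACTION = 10


def daily_traces(users: int, interactions: int, traces_per_interaction: int) -> int:
    """Number of traces produced per day."""
    return users * interactions * traces_per_interaction


def volume_gb(traces: int, trace_size_kb: float) -> float:
    """Raw log volume in GB for a given number of traces."""
    return traces * trace_size_kb / 1_000_000


traces = daily_traces(USERS_PER_DAY, INTERACTIONS_PER_USER, TRACES_PER_INTERACTION)
gb_per_day = volume_gb(traces, TRACE_SIZE_KB)
tb_per_year = gb_per_day * 365 / 1_000
tb_six_years = tb_per_year * 6  # illustrative: six-year retention window
size_ratio = TRACE_SIZE_KB * 1_000 / WEB_TRACE_SIZE_BYTES

print(f"{traces:,} traces/day, {gb_per_day} GB/day, {tb_per_year:.2f} TB/year")
print(f"{tb_six_years:.1f} TB over six years, {size_ratio:.0f}x a web trace")
```

Changing any one input (for example, traces per interaction for agentic workloads) shows how quickly storage requirements move.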

Audit infrastructure must address:

- Write-optimized storage to handle high-velocity log ingestion without degrading application performance

- Retention policies aligned with regulatory requirements (HIPAA mandates 6 years; SEC Rule 17a-4 requires complete time-stamped audit trails)

- Log indexing for rapid retrieval enabling targeted query of specific interactions during audits or incident investigations

- Separation of audit data from operational data to prevent tampering and ensure compliance with immutability requirements
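One common way to make a log tamper-evident is a hash chain, where each entry stores a hash that covers the previous entry's hash. The sketch below illustrates the idea only; it is not the mechanism of any specific product, and the names `append_entry`, `verify_chain`, and the record fields are hypothetical. A production system would also need durable, access-controlled storage.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry's predecessor


def _digest(prev_hash: str, payload: dict) -> str:
    """Hash the previous hash together with a canonical JSON form of the payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(log: list, payload: dict) -> dict:
    """Append a payload, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {"payload": payload, "prev_hash": prev_hash,
             "hash": _digest(prev_hash, payload)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited or removed entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, {"trace_id": "t-1", "decision": "allow"})
append_entry(audit_log, {"trace_id": "t-2", "decision": "block"})
print(verify_chain(audit_log))
```

Because each hash depends on everything before it, altering an old record invalidates every later entry, which is what makes after-the-fact edits detectable during an audit.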

Real-Time Policy Enforcement → Latency Budget and Compute Architecture

Enforcing content policies, guardrails, and compliance rules in real-time - at inference time - adds a processing step to every LLM call. This creates a latency budget problem: governance cannot be so slow that it degrades user experience or breaks SLAs.

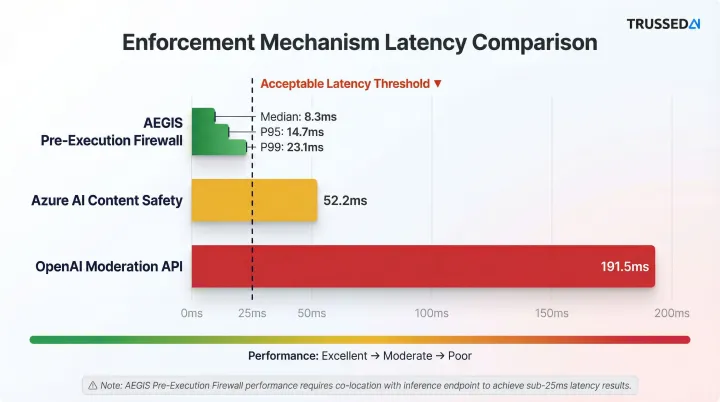

Measured latency overhead of enforcement mechanisms:

| Enforcement Mechanism | Source | Latency Overhead |
|---|---|---|
| AEGIS Pre-Execution Firewall | arXiv Research | Median: 8.3ms, P95: 14.7ms, P99: 23.1ms |
| Azure AI Content Safety | TrueFoundry Benchmark | 52.2ms |
| OpenAI Moderation API | TrueFoundry Benchmark | 191.5ms |

Purpose-built pre-execution firewalls add negligible latency (<25ms at P99), while API-based moderation services can add roughly 50ms to nearly 200ms of overhead - enough to degrade user experience in interactive applications.
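To make the latency budget concrete, the sketch below checks each measured overhead against a governance budget. The overhead values come from the table in this section; the 50ms and 100ms budgets are hypothetical and would in practice be derived from an application's own SLA.

```python
# Overheads in milliseconds, taken from the table in this section.
OVERHEAD_MS = {
    "AEGIS Pre-Execution Firewall (P99)": 23.1,
    "Azure AI Content Safety": 52.2,
    "OpenAI Moderation API": 191.5,
}


def within_budget(budget_ms: float) -> list:
    """Return the mechanisms whose overhead fits inside the governance budget."""
    return [name for name, ms in OVERHEAD_MS.items() if ms <= budget_ms]


# Hypothetical budgets: the share of end-to-end latency reserved for governance.
for budget in (50, 100):
    print(budget, within_budget(budget))
```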

Architectural tradeoff: Enforcement logic must be co-located with inference, not routed to a remote service. This requires a proxy or sidecar pattern integrated into the AI serving layer. AWS recommends using Amazon API Gateway as an access layer in front of LLM endpoints to handle authorization, quotas, throttling, and policy enforcement.

Multi-Model Management → Routing, Failover, and Redundancy Infrastructure

Enterprise LLM deployments almost never rely on a single model. Governance requirements - routing sensitive data only to models with specific compliance certifications, or failing over to a backup when a primary model produces non-compliant outputs - demand intelligent routing infrastructure.

Governance-driven routing policies:

- Geo-location-based routing: Directs user requests to different LLM providers or regions based on physical location to comply with data governance laws like GDPR or HIPAA

- Compliance certification-based routing: Ensures PHI/PII data flows only to HIPAA-compliant models or deployments with specific data residency guarantees

- Multi-LLM routing strategies: AWS outlines dynamic routing approaches, including LLM-assisted routing (using a classifier LLM to direct prompts to specialized models) and semantic routing (using embeddings to match prompts to task categories)

Implementing these policies requires load balancers and API gateways that evaluate governance context - data classification, user role, compliance zone - before each routing decision, not after.
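A minimal sketch of governance-aware routing follows, assuming a request already carries its data classification and compliance zone. The endpoint names, regions, and the `hipaa_eligible` flag are hypothetical placeholders, not real provider identifiers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    name: str
    region: str
    hipaa_eligible: bool


@dataclass(frozen=True)
class RequestContext:
    data_classification: str  # e.g. "public", "pii", "phi"
    compliance_zone: str      # region the data must stay in, e.g. "us" or "eu"


# Hypothetical endpoints, listed in order of preference.
ENDPOINTS = [
    Endpoint("us-general", "us", hipaa_eligible=False),
    Endpoint("us-hipaa", "us", hipaa_eligible=True),
    Endpoint("eu-general", "eu", hipaa_eligible=False),
]


def eligible_endpoints(ctx: RequestContext, endpoints: list) -> list:
    """Filter endpoints by governance context before any routing decision."""
    result = []
    for ep in endpoints:
        if ep.region != ctx.compliance_zone:
            continue  # data residency: never leave the compliance zone
        if ctx.data_classification == "phi" and not ep.hipaa_eligible:
            continue  # PHI only goes to HIPAA-eligible deployments
        result.append(ep)
    return result


def route(ctx: RequestContext, endpoints: list) -> Endpoint:
    """Pick the first compliant endpoint; the rest serve as failover candidates."""
    candidates = eligible_endpoints(ctx, endpoints)
    if not candidates:
        raise LookupError("no compliant endpoint for this request")
    return candidates[0]


print(route(RequestContext("phi", "us"), ENDPOINTS).name)
```

The key design choice is that filtering happens before selection: a request with no compliant endpoint fails closed instead of falling back to a non-compliant model.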

Access Control and Data Privacy → Identity and Network Architecture

Role-based access controls for LLM interactions - limiting which users or applications can invoke which models, access which data, or override which guardrails - must be enforced at the infrastructure level, not just the application layer.

Application-layer controls alone don't hold. Applications can be bypassed, API keys can leak, and developers can route around application logic. Infrastructure-layer enforcement ensures policies apply regardless of how users or applications attempt to access AI systems.

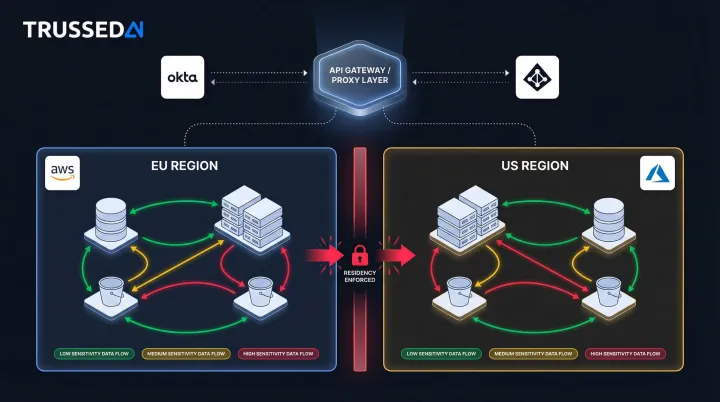

Identity and network controls to enforce:

- Integrate with enterprise identity providers (Okta, Azure AD, Ping Identity) to authenticate every AI request

- Evaluate fine-grained permissions at the API gateway or proxy layer, not inside the application

- Segment AI workloads by data sensitivity classification to contain blast radius if a model or integration is compromised

- Enforce data residency controls that constrain where classified data can travel across regions or providers
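As an illustration of evaluating fine-grained permissions at the gateway or proxy layer, the sketch below applies a default-deny check on role, model, and data classification. The roles, model names, and sensitivity ordering are hypothetical; a real deployment would resolve the role from the identity provider's token rather than accept it as a string.

```python
# Hypothetical sensitivity ordering: higher number means more sensitive.
SENSITIVITY = {"public": 0, "internal": 1, "pii": 2, "phi": 3}

# Hypothetical per-role policy: which models may be invoked, and the most
# sensitive data classification the role may send to them.
ROLE_POLICY = {
    "analyst": {"models": {"general-chat"}, "max_classification": "internal"},
    "clinician": {"models": {"general-chat", "clinical-summarizer"},
                  "max_classification": "phi"},
}


def authorize(role: str, model: str, classification: str) -> bool:
    """Default-deny authorization check, evaluated before the request reaches a model."""
    policy = ROLE_POLICY.get(role)
    level = SENSITIVITY.get(classification)
    if policy is None or level is None:
        return False  # unknown role or classification: deny
    if model not in policy["models"]:
        return False  # role may not invoke this model
    return level <= SENSITIVITY[policy["max_classification"]]


print(authorize("clinician", "clinical-summarizer", "phi"))
print(authorize("analyst", "general-chat", "phi"))
```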

Azure OpenAI's Data Zone Standard deployments contractually guarantee that data at rest and all AI processing remain within a specific geographic boundary (for example, the European Union), so data never leaves the designated region.

AWS Bedrock provides similar controls, letting customers specify which AWS Regions store and process content, with explicit commitments against moving or replicating data outside chosen regions.

Design-Time vs. Runtime Governance: The Architecture Divide

Design-time governance encompasses policies, model selection criteria, training data standards, and architectural guardrails established before deployment. Runtime governance refers to enforcement that occurs during live inference: intercepting, evaluating, and acting on every interaction in real time.

Many organizations default to design-time governance because it feels familiar. It mirrors traditional software development practices where testing and validation occur before release. For production LLMs, that approach leaves critical gaps that only surface under real-world conditions.

The Failure Mode of Design-Time-Only Governance

Design-time governance assumes the model will behave at runtime as it did during evaluation. This assumption breaks down because LLM behavior is context-dependent, and production inputs differ from test conditions. Policy violations, unexpected outputs, and adversarial prompts are runtime phenomena.

Research on runtime governance for AI agents demonstrates that prompt-level instructions shape the distribution over paths but provide no strict enforcement. An agent may ignore instructions outright or be manipulated into overriding them through prompt injection.

Gartner's 2025 TRiSM report concludes that runtime enforcement is no longer optional. Without real-time monitoring and automated guardrails, development-time policies have little measurable impact in production.

Without runtime enforcement, governance gaps surface only after a violation has already occurred, leaving organizations exposed to regulatory action and reputational damage before they can respond.

The Control Plane Model: Architectural Solution to Runtime Governance

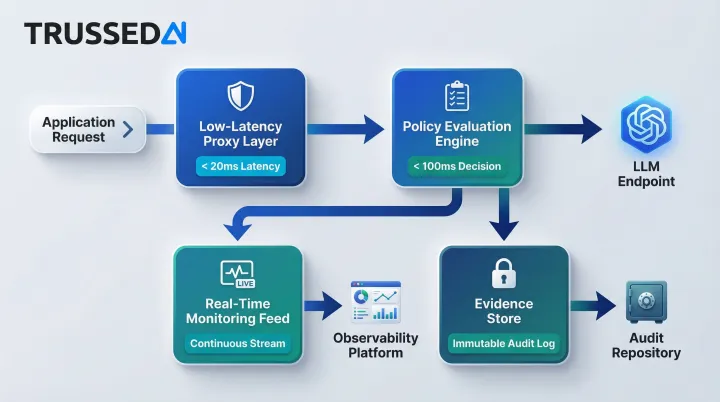

A governance control plane sits between applications and LLM endpoints, enforcing policies on every request and response without requiring changes to application code. This architecture requires four core infrastructure components:

1. Low-Latency Proxy Layer: Routes every request through governance evaluation at the API boundary before it reaches any model. Latency overhead must stay minimal - governance that slows responses by hundreds of milliseconds is governance that teams will route around.

2. Policy Evaluation Engine: Evaluates inputs, outputs, and context against defined rules - covering role-based access, data classification, and compliance requirements - while maintaining sub-100ms decision times under production load.

3. Real-Time Monitoring Feed: Streams telemetry on every interaction, policy decision, and enforcement action to observability platforms, creating continuous visibility rather than periodic snapshots.

4. Evidence Store: Records governance decisions immutably as they occur, so audit-ready evidence exists as a byproduct of normal operations - no manual reconstruction required during regulatory examination.
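The four components above can be sketched in miniature as a single in-process class. This is an illustrative toy, not how any particular platform implements its control plane: the `ControlPlane` class, the `evaluate_policy` rules, and the in-memory lists standing in for the monitoring feed and evidence store are all assumptions made for the example.

```python
import time
from typing import Callable, Optional, Tuple


def evaluate_policy(role: str, prompt: str) -> Tuple[bool, str]:
    """Policy evaluation engine (toy rules): returns (allowed, reason)."""
    if role not in {"analyst", "clinician"}:
        return False, "unknown_role"
    if "ssn:" in prompt.lower():
        return False, "pii_detected"
    return True, "ok"


class ControlPlane:
    """Proxy layer that sits between the application and a model callable."""

    def __init__(self, model: Callable[[str], str]):
        self.model = model
        self.telemetry = []  # stand-in for a real-time monitoring feed
        self.evidence = []   # stand-in for an append-only evidence store

    def handle(self, role: str, prompt: str) -> Optional[str]:
        started = time.perf_counter()
        allowed, reason = evaluate_policy(role, prompt)
        policy_ms = (time.perf_counter() - started) * 1000

        # Record the decision at the moment of enforcement, as an immutable tuple.
        self.evidence.append((time.time(), role, allowed, reason))
        self.telemetry.append({"reason": reason, "policy_ms": policy_ms})

        # Blocked requests never reach the model.
        return self.model(prompt) if allowed else None


plane = ControlPlane(model=lambda p: "echo: " + p)
print(plane.handle("analyst", "Summarize the quarterly report"))
print(plane.handle("analyst", "Customer SSN: 123-45-6789"))
```

The application only ever calls `handle`, which is what allows policies to change without touching application code.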

Trussed AI implements this architecture as a drop-in control plane that enforces governance at runtime across AI apps, agents, and developer tools with sub-20ms latency and without code changes to existing applications. The platform operates as a proxy through public APIs, providing real-time visibility and control without disrupting existing systems.

Infrastructure Cost Implications

Design-time governance appears cheaper upfront, but it accumulates governance debt as scale increases. Each new model, agent, or edge case adds complexity that design-time controls weren't built to handle. Retrofitting governance into existing AI workflows creates operational risk and inefficient workflows, with maintenance and compliance rework consuming 10-20% of annual AI budgets.

Runtime governance requires upfront infrastructure investment. The tradeoff is that it eliminates the retrofitting cycle entirely - policies applied at the control plane level scale across every new model or workflow automatically. Gartner projects that effective governance technologies could reduce regulatory expenses by 20%, compounding returns as AI deployments grow.

Agentic AI and Multi-Agent Systems: The Governance Frontier Infrastructure Isn't Ready For

Agentic AI - LLMs operating autonomously across multi-step tasks, invoking tools, calling APIs, and coordinating with other agents - introduces a category of governance risk that most current infrastructure was not designed to handle. Multi-agent risks are among the least-covered risk subdomains in existing AI governance documents, per research on the AI governance landscape.

The Scale of the Agentic Governance Challenge

Gartner predicts that at least 15% of day-to-day work decisions will be made autonomously through agentic AI by 2028, up from 0% in 2024. Yet only about 30% of organizations reach a maturity level of three or higher in agentic AI controls, according to McKinsey's 2026 AI Trust Maturity Survey. Gartner predicts over 40% of agentic AI projects will fail by 2027 due to unintended decisions violating policies, runaway costs, and lack of transparency.

Documented Incidents in Agentic Systems

Autonomous agents expand the attack surface, allowing threats to traverse systems at machine speed:

| Incident | Description | Impact |
|---|---|---|
| McKinsey "Lilli" Red-Team (2026) | Autonomous agent compromised internal AI platform | Gained broad system access in under two hours |
| AgentFlayer (2025) | 0-click indirect prompt injection via ChatGPT Connectors | Extracted sensitive data from Google Drive without user interaction |
| EchoLeak / CVE-2025-32711 (2025) | Prompt injection payload hidden in document speaker notes | Zero-click data exfiltration via Microsoft Copilot |

Infrastructure Challenges Agentic AI Creates for Governance

These incidents point to four structural gaps that existing infrastructure wasn't built to address:

Policy propagation across chains: Traditional governance evaluates a single input-output pair. Agents execute multi-step workflows where Step 3's output becomes Step 4's input - a violation at Step 2 may not surface until Step 5 causes real harm.

Tool calls bypass guardrails: When agents call external APIs, search databases, or execute code, those requests typically bypass the content filters applied to LLM outputs. The result is a governance blind spot at the exact point where consequential actions happen.

Agent-to-agent traffic is invisible: Multi-agent systems coordinate through shared memory, message passing, and workflow handoffs. Infrastructure designed for single request-response interactions has no observability surface for these interactions.

Harm outpaces human review: Agents operate at machine speed. By the time a human reviews one decision, the agent may have executed dozens of downstream actions based on it.

Governance-Ready Infrastructure for Agentic AI

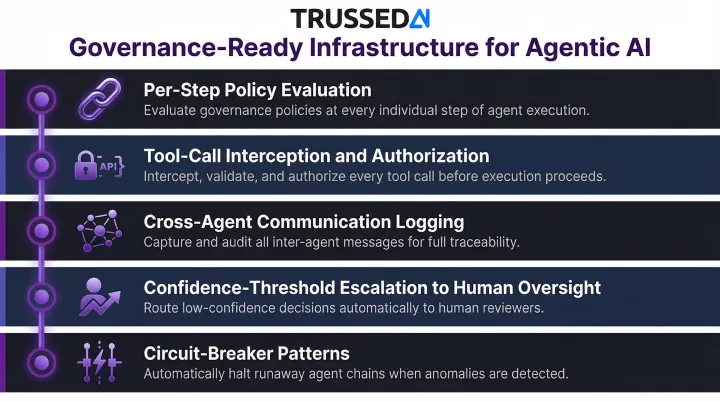

Infrastructure designed for agentic governance must include:

- Per-step policy evaluation - covering every tool call, data access, and intermediate decision across the full execution chain, not just input/output pairs

- Tool-call interception and authorization - validating every API request against policy before execution, closing the guardrail bypass gap

- Cross-agent communication logging - capturing agent-to-agent interactions, shared memory access, and workflow handoffs

- Confidence-threshold-based escalation to human oversight - automatically routing high-risk decisions to human review before execution

- Circuit-breaker patterns - halting agent execution when a governance violation is detected mid-chain, preventing cascading failures
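Three of the capabilities listed above (tool-call interception, confidence-threshold escalation, and a circuit breaker) can be sketched together in a small wrapper around tool execution. Everything here is hypothetical: the `GovernedAgentRun` class, the 0.7 threshold, and the idea that the agent supplies a confidence score are assumptions for illustration, not features of a specific agent framework.

```python
class CircuitOpen(Exception):
    """Raised when the run has been halted by a governance violation."""


class GovernedAgentRun:
    def __init__(self, allowed_tools, escalation_threshold: float = 0.7):
        self.allowed_tools = set(allowed_tools)
        self.escalation_threshold = escalation_threshold
        self.halted = False
        self.step_log = []  # per-step record of every attempted tool call

    def call_tool(self, tool_name, tool_fn, confidence, *args):
        # Circuit breaker: once tripped, no further steps execute.
        if self.halted:
            raise CircuitOpen("run halted after an earlier violation")

        # Tool-call interception: authorize before execution, not after.
        if tool_name not in self.allowed_tools:
            self.halted = True
            self.step_log.append((tool_name, "blocked"))
            raise CircuitOpen(f"tool '{tool_name}' is not authorized")

        # Escalation: low-confidence steps are held for human review.
        if confidence < self.escalation_threshold:
            self.step_log.append((tool_name, "escalated"))
            return None

        result = tool_fn(*args)
        self.step_log.append((tool_name, "executed"))
        return result


run = GovernedAgentRun(allowed_tools={"search"})
print(run.call_tool("search", str.upper, 0.9, "policy docs"))
print(run.call_tool("search", str.upper, 0.4, "ambiguous request"))
print(run.step_log)
```

Returning `None` for an escalated step is a simplification; a real system would enqueue the step for a reviewer and resume once a decision is recorded.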

Governance-Driven Infrastructure Design for Regulated Industries

In healthcare (HIPAA), financial services (PCI-DSS), insurance, and other regulated industries, compliance requirements shape infrastructure decisions before a single line of code is written. These aren't governance preferences - they're constraints with legal consequences.

Compliance as Infrastructure Constraint

Data residency rules force specific cloud region configurations. Azure OpenAI's Data Zone Standard deployments contractually guarantee that data and processing remain within specified geographic boundaries. PHI/PII handling requirements constrain which models can be used and how data flows through systems. Audit trail requirements set minimum retention and retrieval standards that must be built into storage architecture.

The Cost of Non-Compliance

Regulators are actively enforcing existing laws against ungoverned AI deployments:

- Earnest Operations (2025): Massachusetts fined the student loan company $2.5 million for failing to mitigate risks of disparate harm from AI underwriting models

- Pieces Technologies (2024): Texas settled with the healthcare AI company over false statements about generative AI accuracy and safety (hallucination rates) in products deployed in Texas hospitals

The EU AI Act imposes fines up to €35 million or 7% of global annual turnover for prohibited AI practices, and up to €15 million or 3% of turnover for other violations.

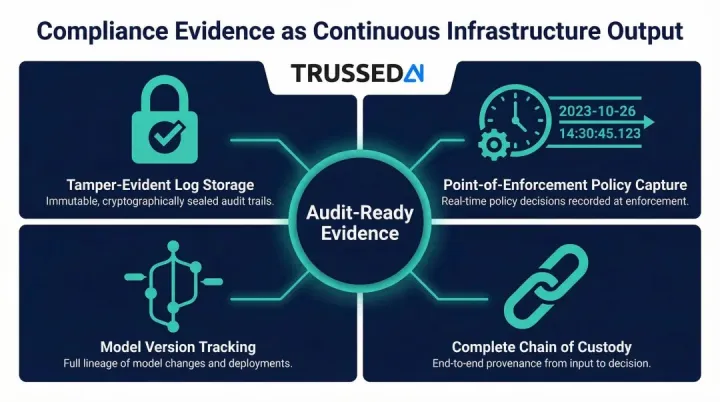

Compliance Evidence as Continuous Infrastructure Output

In regulated industries, governance infrastructure should generate audit-ready evidence as a byproduct of normal operation - not require a dedicated effort during audits. That means shifting from archaeology - reconstructing evidence weeks after decisions - to continuous assurance where governance records generate themselves in real time.

This requires four infrastructure capabilities:

- Tamper-evident log storage with cryptographic verification, so records can't be altered after the fact

- Policy decisions captured at the moment of enforcement, with timestamps that hold up under audit scrutiny

- Model version tracking tied to each transaction - which model, which policy version, which dataset

- Complete chain of custody from prompt to output to downstream action, retrievable on demand
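A per-transaction evidence record that ties together the model, policy, and dataset versions might look like the sketch below. The field names, version strings, and the write-once in-memory `EvidenceStore` are hypothetical; storing content hashes instead of raw prompts is one possible design choice, not a regulatory requirement.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


def sha256_text(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after creation
class EvidenceRecord:
    trace_id: str
    timestamp_utc: str
    model_version: str
    policy_version: str
    dataset_version: str
    decision: str
    prompt_sha256: str
    output_sha256: str


def make_record(trace_id, prompt, output, *, model_version,
                policy_version, dataset_version, decision) -> EvidenceRecord:
    """Capture the decision and version context at the moment of enforcement."""
    return EvidenceRecord(
        trace_id=trace_id,
        timestamp_utc=datetime.now(timezone.utc).isoformat(),
        model_version=model_version,
        policy_version=policy_version,
        dataset_version=dataset_version,
        decision=decision,
        prompt_sha256=sha256_text(prompt),
        output_sha256=sha256_text(output),
    )


class EvidenceStore:
    """Write-once store keyed by trace ID, for on-demand retrieval."""

    def __init__(self):
        self._by_trace = {}

    def append(self, record: EvidenceRecord) -> None:
        if record.trace_id in self._by_trace:
            raise ValueError("evidence records are write-once")
        self._by_trace[record.trace_id] = record

    def get(self, trace_id: str) -> EvidenceRecord:
        return self._by_trace[trace_id]


store = EvidenceStore()
store.append(make_record("t-1", "hello", "hi there", model_version="model-v3",
                         policy_version="policy-v12", dataset_version="data-v7",
                         decision="allow"))
print(store.get("t-1").model_version)
```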

Every infrastructure decision - cloud provider selection, model hosting approach, data pipeline design, logging architecture - carries compliance implications. Evaluating these before implementation is far cheaper than retrofitting controls after a regulator asks why they weren't there to begin with.

Building a Governance-Ready AI Infrastructure Stack

A governance-ready AI infrastructure comprises five layers, each with distinct responsibilities:

1. Data Layer - Privacy-preserving pipelines, compliant storage, data classification, and residency controls

2. Model Serving Layer - Intelligent routing, failover, access controls, and multi-model orchestration

3. Governance Enforcement Layer (Control Plane) - Runtime policy evaluation, real-time guardrails, and authorization

4. Observability Layer - Real-time monitoring, alerting, telemetry collection, and anomaly detection

5. Reporting Layer - Compliance evidence generation, audit trail retrieval, and regulatory documentation

Organizations don't need to build all layers simultaneously, but should design with this stack in mind from the start. Retrofitting governance onto ungoverned infrastructure is far more expensive than building it in from the beginning.

Practical Sequence for Enterprises

Audit logging and monitoring first. Comprehensive logging of all AI interactions - capturing inputs, outputs, model versions, and timestamps - creates the foundation for everything that follows. Without this baseline visibility, no downstream governance control is reliable.

Access controls and data handling policies second. Once visibility is established, enforce who can access which models, which data classifications require which handling procedures, and which users have authorization for sensitive operations.

Runtime enforcement through a governance control plane last. Move from manual policy enforcement to automated, real-time governance. Platforms like Trussed AI accelerate this path - enabling enterprises to reach operational governance workflows in weeks rather than months, operating as a drop-in proxy with no application code changes required.

Common Infrastructure Gaps Creating Governance Exposure

- Logs stored in mutable systems where records can be altered or deleted, undermining compliance requirements

- Governance checks running after the fact - in batch reviews or manual audits - rather than at the moment of inference

- No model version tracking, making it impossible to reconstruct which version produced which output for root cause analysis

- Access controls scoped to the application level (who can use the app) rather than the model level (who can invoke which models with which data)

- No visibility into multi-step agent execution chains, tool invocations, or agent-to-agent communication

Addressing these gaps doesn't require replacing existing infrastructure; it requires adding a governance layer that instruments what's already there. Gartner projects that spending on AI governance platforms will reach $492 million in 2026 and surpass $1 billion by 2030, driven by regulatory mandates and the operational necessity of governing AI at scale.

Frequently Asked Questions

What is LLM governance and how does it differ from traditional AI governance?

LLM governance covers policies, controls, and monitoring specifically designed for large language models. It differs from traditional AI governance because LLMs produce non-deterministic, generative outputs that can violate policy even under benign inputs, requiring runtime enforcement rather than rule-based pre-deployment controls alone.

How do governance requirements directly affect AI infrastructure costs?

Governance adds infrastructure components (audit logging storage, policy enforcement compute, observability tooling) with associated costs. However, retrofitting governance onto ungoverned infrastructure is far more expensive. Governance debt compounds with scale and can result in regulatory penalties that dwarf upfront infrastructure investment.

What is the difference between design-time and runtime LLM governance?

Design-time governance sets policies before deployment (model selection, training standards, architecture guardrails), while runtime governance enforces those policies on every live inference interaction. Runtime governance requires an additional proxy or control plane layer, but is necessary because LLM behavior in production diverges from behavior in testing.

How should regulated industries (healthcare, financial services) approach LLM infrastructure governance differently?

Regulated industries must treat compliance mandates (HIPAA, GDPR) as infrastructure design requirements from the start. That means data residency constraints, PHI/PII-aware routing, immutable audit logging, and compliance evidence generation belong in the AI serving layer by design - not bolted on after deployment.

What governance infrastructure changes are required to support agentic AI deployments?

Agentic AI requires governance infrastructure that evaluates policies across multi-step execution chains, not just single request-response interactions. This means building tool-call interception, per-step policy evaluation, cross-agent logging, and human-in-the-loop escalation triggers directly into agent execution infrastructure.

Can governance be added to existing AI infrastructure without rebuilding from scratch?

Yes, governance can be layered onto existing AI infrastructure through a proxy-based control plane that intercepts and evaluates LLM interactions without requiring application code changes. However, the earlier governance is designed into the infrastructure, the lower the cost and operational disruption of implementation.