Introduction: Why EU AI Act Penalties Demand Your Attention Now

The EU AI Act is already in force. Its prohibitions on banned AI practices have applied since February 2025, and most high-risk AI requirements apply from August 2026. Companies face fines of up to €35 million or 7% of global annual turnover, penalties that substantially exceed GDPR's maximum of €20 million or 4%.

The stakes extend beyond EU borders. Under Article 2, the Act applies to providers that place AI systems on the EU market, and to providers and deployers whose AI systems produce output used in the EU, regardless of where the company is established. US, UK, and other non-EU companies whose AI systems reach European markets therefore fall within scope.

For organizations deploying AI across products, internal tools, or customer-facing systems, the compliance window is narrowing. This article breaks down the specific penalty tiers, which violations trigger the largest fines, and what regulators will be looking for when enforcement scales through 2026.

TLDR: Key Takeaways

- The EU AI Act imposes fines of up to €35 million or 7% of global turnover for deploying prohibited AI practices, exceeding GDPR maximums

- Penalties apply across the AI value chain: developers, deployers, importers, distributors, and authorized representatives all face liability

- General-purpose AI model providers face separate fines up to €15 million or 3% of global turnover under Article 101

- Obligations phase in over time: the prohibitions have applied since February 2025, and most high-risk system requirements apply from August 2026

- Continuous audit evidence generation is non-negotiable: periodic compliance reviews miss violations that occur between check cycles

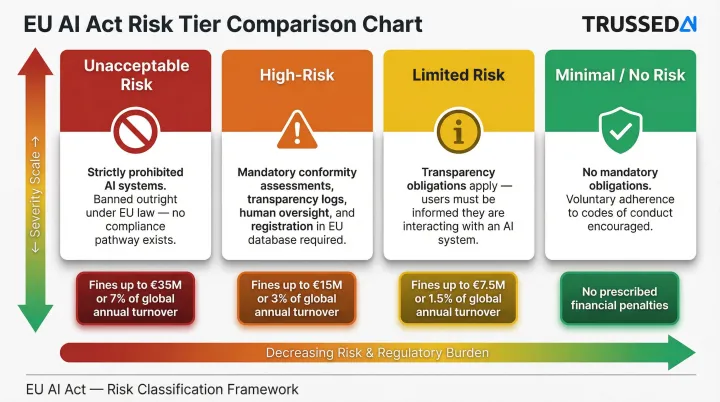

Understanding the EU AI Act's Risk-Based Penalty Structure

The EU AI Act calibrates penalties to the level of risk an AI system poses to fundamental rights, safety, and society. Unacceptable risk systems face the steepest financial consequences, while limited-risk systems carry lighter obligations. This means the same AI tool could incur vastly different fines depending on its use case and deployment context.

Four Risk Classifications

The Act categorizes AI systems into four tiers:

| Risk Tier | Compliance Obligations | Enforcement Impact |
|---|---|---|
| Unacceptable Risk | Strict prohibition: cannot be placed on the market or used | Highest fines (€35M or 7% of global turnover) |
| High-Risk | Mandatory technical documentation, conformity assessments, human oversight, EU database registration | Significant fines (€15M or 3% of global turnover) |
| Limited Risk | Transparency obligations: users must be informed they're interacting with AI | Fines up to €15M or 3% of global turnover for Article 50 transparency breaches |
| Minimal/No Risk | No specific mandatory obligations; voluntary codes encouraged | No direct penalties |

Annex III High-Risk Categories

Article 6 and Annex III identify specific use cases as high-risk, including:

- Biometric identification and categorization systems

- Critical infrastructure safety components (water, gas, electricity, traffic management)

- Education and vocational training (admissions decisions, grading systems)

- Employment and HR management (recruitment, performance evaluation, task allocation)

- Access to essential services (credit scoring, insurance underwriting, emergency dispatch)

- Law enforcement applications (risk assessments, evidence reliability evaluation)

- Migration, asylum, and border control systems

- Administration of justice and democratic processes

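If your AI application falls into any of these categories, it is presumed high-risk and subject to high-risk system requirements and fines of up to €15M or 3% of global annual turnover. Article 6(3) allows narrow exceptions for systems that do not pose a significant risk, such as those performing a narrow procedural task, but those exceptions never apply to systems that profile individuals.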
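Annex III membership is the main trigger, but Article 6(3) carves out narrow exceptions, so screening is more than a lookup. The logic can be sketched as a first-pass triage helper. This is a simplified sketch under stated assumptions, not a legal test: the area tags and flag names are hypothetical, `article_6_3_condition` stands in for the Article 6(3) exceptions (which never apply when a system profiles people), and every flag is assumed to be answered by a human reviewer with legal input.

```python
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    PROHIBITED = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical internal tags for the Annex III areas listed above.
ANNEX_III_AREAS = frozenset({
    "biometrics",
    "critical_infrastructure",
    "education",
    "employment",
    "essential_services",
    "law_enforcement",
    "migration_border",
    "justice_democracy",
})


def screen_use_case(
    area: Optional[str],
    *,
    prohibited_practice: bool = False,
    article_6_3_condition: bool = False,
    profiles_people: bool = False,
    article_50_trigger: bool = False,
) -> RiskTier:
    """First-pass triage only; all flags are hypothetical reviewer answers."""
    if prohibited_practice:
        # Any Article 5 practice is banned regardless of the use-case area.
        return RiskTier.PROHIBITED
    if area in ANNEX_III_AREAS:
        # Annex III systems stay high-risk unless an Article 6(3) condition
        # applies, and that exception is never available when profiling people.
        if profiles_people or not article_6_3_condition:
            return RiskTier.HIGH
    if article_50_trigger:
        # e.g. the system interacts directly with people (transparency duty).
        return RiskTier.LIMITED
    return RiskTier.MINIMAL
```

Returning a single tier is a simplification: a high-risk system can also carry Article 50 transparency duties, so a real AI inventory would record both.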

Phased Enforcement Timeline

Enforcement is already underway, not approaching:

- February 2, 2025: Prohibitions on banned AI practices (Article 5) became applicable

- August 2, 2025: GPAI model requirements, notified body obligations, governance structures, and the Article 99 penalty regime became applicable

- August 2, 2026: General application date for high-risk AI system requirements (with some exceptions extending to August 2027)
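For an internal compliance calendar, the timeline above can be encoded as a simple lookup. A minimal sketch: the dates come from the timeline, the labels are paraphrases, and the function name is hypothetical. The August 2027 entry covers high-risk systems tied to the product-safety legislation in Annex I (Article 6(1)); the sketch does not model transitional provisions for systems already on the market.

```python
from datetime import date

# Milestones from the timeline above; labels are paraphrased and the
# helper below is a hypothetical illustration.
MILESTONES = [
    (date(2025, 2, 2), "Article 5 prohibitions"),
    (date(2025, 8, 2), "GPAI obligations, notified bodies, governance"),
    (date(2026, 8, 2), "High-risk requirements (general application)"),
    (date(2027, 8, 2), "High-risk requirements for Annex I products (Article 6(1))"),
]


def applicable_on(day: date) -> list[str]:
    """Return the label of every milestone whose start date is on or before `day`."""
    return [label for start, label in MILESTONES if day >= start]
```

Calling `applicable_on(date.today())` gives a quick view of which obligation sets already apply when planning audits.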

Understanding which enforcement tier applies to your organization starts with identifying your role under the Act.

Who Is Subject to Penalties

The Act distinguishes between multiple roles, each carrying distinct obligations and liability exposure:

- Providers who develop and place AI systems on the market carry the broadest compliance burden

- Deployers who use AI systems in their operations must meet oversight and monitoring requirements

- Importers placing AI systems from non-EU providers on the EU market must verify that those providers have met their obligations

- Distributors making AI systems available in the EU market face documentation and traceability obligations

- Authorized representatives acting on behalf of non-EU providers share liability for regulatory violations

A single organization can hold multiple roles simultaneously, multiplying compliance obligations and penalty risk.

The Three Penalty Tiers for AI Operators (Article 99)

Tier 1 - Violations of Prohibited AI Practices: Up to €35M or 7% of Global Turnover

Article 99(3) establishes the highest penalty tier for non-compliance with the AI practices banned under Article 5. The fine ceiling is €35 million or 7% of total worldwide annual turnover for the preceding financial year, whichever is higher. This exceeds GDPR's maximum penalty of 4% of global turnover, making a prohibited-practice violation potentially more costly than the most serious data protection breaches.
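Because the Tier 1 ceiling is whichever figure is higher, the fixed €35 million binds for smaller companies and the 7% figure binds for larger ones. Writing T for total worldwide annual turnover, the crossover point and an illustrative €1 billion example work out as:

```latex
\begin{aligned}
F_{\max}(T) &= \max\bigl(\text{€35M},\; 0.07\,T\bigr) \\
0.07\,T^{*} &= \text{€35M} \;\Longrightarrow\; T^{*} = \text{€500M} \\
F_{\max}(\text{€1,000M}) &= \max(\text{€35M},\; \text{€70M}) = \text{€70M}
\end{aligned}
```

Any company with turnover above €500 million therefore faces a percentage-based ceiling that grows with its size.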

Specific banned practices include:

- Subliminal manipulation techniques beyond conscious awareness that materially distort behavior causing significant harm

- Exploitation of vulnerabilities tied to age, disability, or socio-economic situations to distort behavior

- Social scoring that evaluates individuals based on social behavior leading to unjustified detrimental treatment

- Predictive policing risk assessments based solely on profiling or personality traits

- Untargeted scraping of facial images from the internet or CCTV footage to create or expand facial recognition databases

- Emotion inference in workplaces or educational institutions (except for medical or safety reasons)

- Biometric categorization based on sensitive traits such as race, political opinions, or sexual orientation

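- Real-time remote biometric identification in publicly accessible spaces for law enforcement, except in narrowly defined cases: targeted searches for specific victims or missing persons, prevention of imminent threats such as terrorist attacks, and locating suspects of serious crimes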

When determining fines, regulators weigh intent heavily: deliberate violations face steeper penalties than negligent ones, with less room for mitigation arguments.

Tier 2 - Violations of High-Risk AI System Obligations: Up to €15M or 3% of Global Turnover

Fines of up to €15 million or 3% of global annual turnover, whichever is higher, apply under Article 99(4) for failures to meet high-risk AI system obligations and the Article 50 transparency duties. These penalties reach across the entire AI value chain, targeting each operator role specifically.

Triggering violations include:

- Article 16 (provider obligations): failing to maintain quality management systems or conduct conformity assessments

- Article 22 (authorized representative duties): inadequate mandate execution

- Article 23 (importer obligations): insufficient verification of provider compliance

- Article 24 (distributor obligations): failing to verify conformity before making systems available

- Article 26 (deployer obligations): inadequate human oversight or input data relevance checks

- Articles 31, 33 and 34 (notified body requirements): failure to meet assessment and certification standards

- Article 50 (transparency obligations): not informing users they're interacting with AI systems
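Because one organization can hold several roles at once, a compliance team might start by taking the union of the obligation articles listed above for every role it holds. A minimal sketch: the role keys are hypothetical identifiers, notified bodies are included only because Article 99(4) lists them, and the mapping ignores the many fact-specific duties inside each article.

```python
# Hypothetical role keys mapped to the Article 99(4) obligation articles above.
# Article 50 transparency duties can attach to both providers and deployers;
# Article 99(4)(f) cites Articles 31, 33 and 34 for notified bodies.
ROLE_ARTICLES = {
    "provider": {16, 50},
    "authorized_representative": {22},
    "importer": {23},
    "distributor": {24},
    "deployer": {26, 50},
    "notified_body": {31, 33, 34},
}


def tier2_articles(roles: set[str]) -> list[int]:
    """Sorted union of obligation articles for every role an entity holds."""
    unknown = roles - set(ROLE_ARTICLES)
    if unknown:
        raise ValueError(f"unknown roles: {sorted(unknown)}")
    articles: set[int] = set()
    for role in roles:
        articles |= ROLE_ARTICLES[role]
    return sorted(articles)
```

A company that builds a system and also runs it internally, for example, would call `tier2_articles({"provider", "deployer"})` and see that Articles 16, 26 and 50 all apply.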

Beyond article-level violations, regulators also look for execution failures in day-to-day operations. Common practical failures include:

- Failing to maintain required technical documentation specified in Annex IV

- Not implementing a risk management system for high-risk AI

- Skipping mandatory human oversight mechanisms

- Not registering a high-risk AI system in the EU database before deployment

- Inadequate conformity assessment procedures

These failures are common in healthcare AI, HR automation, credit scoring systems, and critical infrastructure applications: sectors where many organizations deploy AI without fully understanding their high-risk classification.

Tier 3 - Supplying Incorrect or Misleading Information: Up to €7.5M or 1% of Global Turnover

Article 99(5) imposes fines up to €7.5 million or 1% of global annual turnover when operators provide incorrect, incomplete, or misleading information to national competent authorities or notified bodies during compliance inquiries. This tier also covers failure to cooperate with competent authorities under Article 21.

What appears to be a minor documentation issue can escalate to substantial fines, making response quality to regulatory inquiries a compliance risk in itself. Organizations that cannot immediately produce automatically generated logs, technical documentation, or conformity assessment records when requested face significant penalty exposure.
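All three Article 99 ceilings share the same structure, so they can be computed from one table. A minimal sketch, assuming turnover means total worldwide annual turnover for the preceding financial year and using `Decimal` to avoid floating-point rounding. The tier keys are hypothetical, and the `sme` flag applies the Article 99(6) rule under which SMEs and start-ups face whichever figure is lower. The result is only a ceiling; the actual fine is set case by case.

```python
from decimal import Decimal

# Hypothetical tier keys -> (fixed cap in EUR, share of worldwide turnover).
TIERS = {
    "prohibited_practice": (Decimal("35000000"), Decimal("0.07")),    # Art. 99(3)
    "operator_obligation": (Decimal("15000000"), Decimal("0.03")),    # Art. 99(4)
    "misleading_information": (Decimal("7500000"), Decimal("0.01")),  # Art. 99(5)
}


def fine_ceiling(tier: str, turnover_eur: Decimal, *, sme: bool = False) -> Decimal:
    """Maximum possible fine for a tier; Article 99(7) factors set the real amount."""
    fixed, share = TIERS[tier]
    percentage_cap = turnover_eur * share
    # Larger undertakings face whichever figure is higher; Article 99(6)
    # caps fines for SMEs, including start-ups, at whichever is lower.
    return min(fixed, percentage_cap) if sme else max(fixed, percentage_cap)
```

For example, `fine_ceiling("misleading_information", Decimal("2000000000"))` gives a €20 million ceiling for a company with €2 billion turnover, well above the €7.5 million fixed figure.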

Special Cases: GPAI Model Fines and Union Body Penalties

Article 101 GPAI Fine Structure

The European Commission holds exclusive enforcement authority over general-purpose AI model providers, separate from national market surveillance authorities. Under Article 101, the Commission can impose fines up to €15 million or 3% of global annual turnover on GPAI providers who intentionally or negligently violate GPAI obligations.

Triggering violations include:

- Failing to provide requested documentation to the Commission

- Refusing Commission access for model evaluation

- Failing to implement requested risk-mitigation measures

- Infringing GPAI obligations, such as not notifying the Commission within two weeks of meeting (or learning it will meet) the systemic risk threshold (Article 52(1))

Systemic Risk Designation Threshold

Under Article 51(2), GPAI models whose cumulative training compute exceeds 10^25 floating point operations (FLOPs) are presumed to carry systemic risk. Providers can contest the designation, but it triggers stricter obligations and heightened AI Office oversight. Meeting this threshold creates an immediate notification duty, and failing to notify is itself an infringement that can draw Article 101 fines.
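A provider tracking its position against the threshold might automate a rough check. A minimal sketch under two stated assumptions: training compute is approximated with the common rule of thumb of 6 × parameters × training tokens for dense transformers (the Act measures cumulative training compute and prescribes no estimation formula), and the two-week notification window is counted from the date the provider knows the threshold is or will be met. All function names are hypothetical.

```python
from datetime import date, timedelta

# Article 51(2): systemic risk is presumed above 10^25 training FLOPs.
SYSTEMIC_RISK_FLOPS = 1e25


def estimated_training_flops(n_params: float, n_tokens: float) -> float:
    # Assumption: ~6 FLOPs per parameter per training token, a rule of thumb
    # for dense transformers; the Act does not prescribe this formula.
    return 6.0 * n_params * n_tokens


def presumed_systemic_risk(training_flops: float) -> bool:
    # The presumption requires compute strictly greater than 10^25.
    return training_flops > SYSTEMIC_RISK_FLOPS


def notification_deadline(threshold_known_on: date) -> date:
    # Article 52(1): notify without delay, at the latest within two weeks.
    return threshold_known_on + timedelta(weeks=2)
```

Under these assumptions, a hypothetical 70-billion-parameter model trained on 15 trillion tokens comes to about 6.3 × 10^24 FLOPs, below the threshold, while a 400-billion-parameter model on the same data (about 3.6 × 10^25) would be presumed to carry systemic risk.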

As of mid-2025, the Commission has not published an official list designating specific GPAI models as presenting systemic risk under Article 51. The GPAI Code of Practice has been issued, but the absence of a formal designation list means providers operating near the FLOPs threshold carry ongoing compliance uncertainty.

Article 100: EU Institutions and Bodies

EU institutions are not exempt. Under Article 100, the European Data Protection Supervisor acts as the competent authority for Union institutions, bodies, offices, and agencies, with power to impose:

- Up to €1.5 million for non-compliance with Article 5 prohibited practices

- Up to €750,000 for non-compliance with other requirements or obligations

For enterprises selling AI systems to EU institutions, or integrating with their workflows, this creates a secondary compliance layer. Your system's behavior inside an EU institution is subject to EDPS enforcement, not national authorities, which affects both contractual liability and audit requirements.

How EU AI Act Enforcement Actually Works

Dual Enforcement Structure

Enforcement operates through two parallel channels:

National market surveillance authorities handle enforcement for most AI operators (providers, deployers, importers, distributors) at the member state level. Under Article 70, Member States were required to designate at least one notifying authority and at least one market surveillance authority by August 2, 2025.

The European AI Office, established in January 2024 within the European Commission, holds exclusive enforcement authority over GPAI model providers. This centralized structure means a single authority oversees GPAI providers regardless of which EU member state they operate in.

Fine Determination Factors

Article 99(7) lists considerations that determine the final fine amount within each tier's ceiling:

- Nature, gravity, and duration of the violation

- Whether the violation was intentional or negligent

- Size and market share of the offending organization

- Financial benefit gained or losses avoided

- Degree of cooperation with authorities

- Previous violations by the same operator

In practice, penalty outcomes will vary from case to case. Organizations demonstrating good-faith cooperation, rapid mitigation, and no prior violations can expect more favorable treatment than repeat offenders who obstruct investigations.

Extraterritorial Enforcement

Enforcement is not limited to EU-based companies. Article 2 explicitly extends jurisdiction to any provider placing AI systems on the EU market, and to providers and deployers outside the EU whose AI output is used in the EU. Member States must report penalties imposed annually to the Commission, giving the Commission visibility into how penalties are applied across all 27 member states.

Practical Steps to Reduce Your Exposure to EU AI Act Penalties

Start with Accurate Risk Classification

Risk classification is the foundational step: organizations must accurately assess whether each AI application falls under the prohibited, high-risk, limited-risk, or minimal-risk category before any compliance program can be designed. Misclassifying a high-risk system as limited-risk is itself a compliance failure that can trigger Tier 2 penalties.

Use Annex III as your starting point. The regulation lists specific use case categories that qualify as high-risk. If your AI system falls into any Annex III category (biometrics, critical infrastructure, education, employment, essential services, law enforcement, migration, or justice administration), treat it as high-risk unless you can document that a narrow Article 6(3) exception applies.

Maintain Continuous Documentation, Not Reconstructed Evidence

High-risk AI systems require technical documentation, conformity assessments, risk management system records, and automatically generated logs. The absence of these at the moment of a regulatory inquiry can trigger Tier 3 or Tier 2 penalties even if the underlying system is otherwise compliant.

Audit trails must be maintained continuously, not reconstructed after the fact. Article 11 requires providers to draw up technical documentation before a high-risk AI system is placed on the market. Annex IV mandates this documentation include:

- System architecture and intended purpose

- Training data methodologies

- Human oversight measures and intervention controls

- Risk management system records

Organizations that cannot immediately produce these records during an Article 21 information request face material penalty exposure.
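One way to keep these records release-ready is a pre-deployment gate that fails when a required section is missing. A minimal sketch: the section keys are hypothetical shorthand for the four Annex IV elements listed above (Annex IV itself requires more), and the documentation bundle is assumed to be a mapping from section name to its current text.

```python
# Hypothetical keys for the four Annex IV elements listed above;
# Annex IV itself requires additional items.
REQUIRED_SECTIONS = (
    "system_architecture_and_intended_purpose",
    "training_data_methodology",
    "human_oversight_measures",
    "risk_management_records",
)


def missing_sections(doc_bundle: dict[str, str]) -> list[str]:
    """Return required sections that are absent or contain only whitespace."""
    return [key for key in REQUIRED_SECTIONS if not doc_bundle.get(key, "").strip()]


def release_gate(doc_bundle: dict[str, str]) -> None:
    """Raise before release if any required section is missing or blank."""
    missing = missing_sections(doc_bundle)
    if missing:
        raise RuntimeError(f"technical documentation incomplete: {missing}")
```

Running `release_gate` in a CI pipeline ties documentation completeness to every release rather than to a periodic review.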

The harder issue is what could be called the "archaeology" problem: regulators ask how a specific decision was governed, and organizations need weeks to reconstruct an answer. Governance infrastructure that generates audit evidence automatically, as a byproduct of every AI interaction, eliminates that gap before it becomes a liability.
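Generating evidence as a byproduct can be as simple as appending every governed decision to a tamper-evident log. A minimal sketch, assuming an in-memory store (production use would need durable, access-controlled storage): each entry commits to the previous entry's SHA-256 hash, so any later edit breaks verification. The class name and event fields are hypothetical, and Article 12's logging requirement does not mandate this particular design.

```python
import copy
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys, no whitespace) so identical content hashes identically.
    payload = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AuditLog:
    """Hypothetical append-only, in-memory log; each entry commits to its predecessor."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, event: dict) -> dict:
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            # Copy so later changes to the caller's dict cannot alter the record.
            "event": copy.deepcopy(event),
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS_HASH,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; an edited or reordered entry makes this False."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: entry[k] for k in ("timestamp", "event", "prev_hash")}
            if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

Calling `verify()` before exporting records for a regulator gives a quick integrity check. It detects edits and reordering, but not truncation of the most recent entries; catching that would require anchoring the latest hash somewhere outside the log.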

Leverage Regulatory Sandboxes as a Protected Compliance Pathway

Article 57 establishes AI regulatory sandboxes: controlled testing environments supervised by national authorities where organizations can develop and test AI systems in real-world conditions with reduced enforcement exposure. Article 57(12) provides explicit protection: if prospective providers observe the sandbox plan and follow national competent authority guidance in good faith, no administrative fines shall be imposed for infringements during experimentation.

Spain has pioneered this approach. The Spanish Agency for the Supervision of Artificial Intelligence launched the first European regulatory sandbox in 2024, selecting 12 high-risk AI projects in April 2025 to test compliance frameworks. This provides a legitimate route to innovation without incurring penalty risk during development.

Implement Human Oversight Proactively

For high-risk AI systems, deployers must assign human oversight to qualified personnel with the necessary training and authority. Organizations that can demonstrate a functioning human-in-the-loop process, not just policy language, are in a materially better position if an investigation is triggered.

Human oversight means:

- Trained personnel with authority to intervene in AI decisions

- Clear escalation workflows when AI outputs require review

- Documentation of oversight actions and intervention decisions

- Technical controls that enable human operators to override AI recommendations
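The escalation and documentation points above can be sketched as a small review record. A minimal sketch: the rule that adverse outcomes always go to a human, the 0.9 confidence threshold, and all names are hypothetical choices. The Act requires oversight by competent, trained, and empowered people, but it prescribes no numeric threshold.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class OversightRecord:
    """Hypothetical record of one AI decision and its human review."""
    decision_id: str
    ai_output: str
    reviewer: Optional[str] = None
    final_output: Optional[str] = None
    overridden: bool = False
    reviewed_at: Optional[str] = None


def needs_review(confidence: float, adverse_to_person: bool, threshold: float = 0.9) -> bool:
    # Hypothetical escalation rule: adverse outcomes always go to a human;
    # other outputs go to a human only when confidence is below the threshold.
    return adverse_to_person or confidence < threshold


def record_review(rec: OversightRecord, reviewer: str, final_output: str) -> OversightRecord:
    """Document who reviewed the output, what they decided, and whether they overrode it."""
    rec.reviewer = reviewer
    rec.final_output = final_output
    rec.overridden = final_output != rec.ai_output
    rec.reviewed_at = datetime.now(timezone.utc).isoformat()
    return rec
```

Keeping `overridden` as an explicit field makes it easy to show an investigator how often reviewers actually changed AI outputs, which is evidence that oversight functions in practice.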

The "30% rule" referenced in some industry commentary is not a legal requirement in the EU AI Act; it's an informal business heuristic suggesting humans retain roughly 30% of decision-making for oversight while AI handles 70% of repetitive tasks. While this aligns conceptually with Article 14's human oversight requirement, compliance teams should recognize that no statutory 30% threshold exists.

Documenting that oversight in real time, rather than reconstructing it later, is what separates a defensible compliance position from an exposed one.

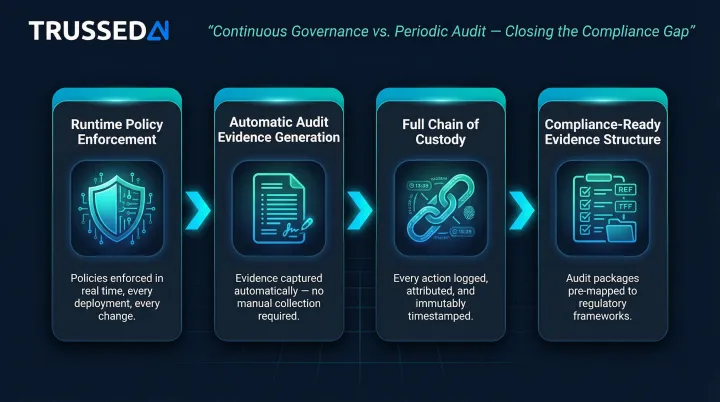

Build Continuous Governance Infrastructure

The Act's enforcement structure demands continuous governance rather than periodic compliance audits. Organizations deploying AI across multiple applications, teams, or workflows need policy enforcement that happens at runtime, not quarterly reviews that discover violations months after they occur.

Continuous governance means:

- Policies enforced at the moment of execution, not discovered in retrospective audits

- Audit evidence generated automatically as a byproduct of every governed interaction

- Complete chain of custody from prompt to model to output to action, available instantly on demand

- Evidence structured for compliance teams, internal audit, and regulatory examination
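The first three points above can be illustrated with a wrapper that evaluates policies before a model call and writes one evidence record per interaction. This is a generic sketch, not Trussed AI's implementation or API: `governed_call`, `call_model`, the example email policy, and the record fields are all hypothetical, and a real deployment would persist records durably and redact sensitive prompt content.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable, Optional


@dataclass(frozen=True)
class PolicyResult:
    policy: str
    passed: bool


def no_email_addresses(text: str) -> PolicyResult:
    # Hypothetical example policy: block prompts that contain an email address.
    found = re.search(r"[\w.+-]+@[\w-]+\.[\w.]+", text) is not None
    return PolicyResult("no_email_addresses", passed=not found)


def governed_call(
    prompt: str,
    model_version: str,
    call_model: Callable[[str], str],
    policies: list[Callable[[str], PolicyResult]],
    evidence: list[dict],
) -> Optional[str]:
    """Enforce policies at runtime and record prompt -> model -> output -> action."""
    results = [policy(prompt) for policy in policies]
    allowed = all(r.passed for r in results)
    output = call_model(prompt) if allowed else None
    # One evidence record per interaction, written whether or not the call ran.
    evidence.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "prompt": prompt,
        "policy_results": {r.policy: r.passed for r in results},
        "action": "forwarded" if allowed else "blocked",
        "output": output,
    })
    return output
```

Because the evidence record is written on both the allowed and the blocked path, the audit trail covers refusals as well as completed calls.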

Trussed AI's control plane operates as a drop-in proxy (zero changes to application code), enforcing governance policies across models, agents, and workflows as each interaction occurs. Every interaction is logged with policy evaluation results, model version, timestamp, and data lineage, creating the assurance evidence that Article 99 investigations require.

Organizations using this approach report a 50% reduction in manual governance workload and a 50% increase in regulatory compliance, with operational workflows live within four weeks.

Frequently Asked Questions

What are the penalties for non-compliance with the EU AI Act?

The Act sets three penalty tiers: €35 million or 7% of global turnover for prohibited AI practices (Article 5); €15 million or 3% for high-risk AI system failures; and €7.5 million or 1% for misleading authorities. GPAI providers are governed separately under Article 101, with fines up to €15 million or 3% of global turnover.

What are the consequences of serious violations of the EU AI Act?

Serious violations, particularly deployment of prohibited AI systems under Article 5, can result in the highest fines (€35 million or 7% of global turnover, whichever is higher), withdrawal of the system from the EU market, and significant reputational damage. Under Article 99(7), intentional violations can draw harsher penalties than negligent ones during fine determination.

What is the 30% rule for AI under the EU AI Act?

There is no legally binding "30% rule" in the EU AI Act. It's an informal industry heuristic suggesting AI handle ~70% of repetitive tasks while humans retain 30% for oversight and ethical judgment. The concept aligns loosely with Article 14's human oversight requirement, but the 30% figure carries no legal weight.

What does Article 57 of the EU AI Act cover?

Article 57 establishes AI regulatory sandboxes: supervised environments where organizations can develop and validate AI systems under real conditions with reduced enforcement risk. Under Article 57(12), participants who follow sandbox plans and authority guidance in good faith are explicitly protected from administrative fines during experimentation.

Does the EU AI Act apply to companies outside the EU?

Yes, the Act has broad extraterritorial reach. Article 2 applies to any provider placing AI systems on the EU market, and to providers and deployers whose AI outputs are used in the EU, regardless of whether the company is based in the US, UK, or elsewhere.

How are EU AI Act fines calculated, and can they be reduced?

Fines are set case by case within each tier's ceiling, with Article 99(7) allowing reductions for factors like authority cooperation, harm mitigation efforts, and company size. Article 99(6) protects SMEs and startups specifically: their fines are capped at whichever threshold is lower (the percentage or the fixed amount), unlike larger enterprises, which face the higher figure.