Introduction

Worldwide generative AI spending is projected to reach $644 billion in 2025, representing a 76.4% increase from 2024. Enterprise leaders expect an average of 75% growth in LLM budgets over the next year as AI spending moves from innovation budgets to permanent core IT budget lines.

Yet most organizations discover the true cost of their AI infrastructure only when the invoice arrives, a single line item with no breakdown by feature, team, or model. One compromised API key generated $82,314 in unauthorized charges in just 48 hours for a startup normally spending $180/month. Another team's misconfigured agent burned $800 overnight in a retry loop.

LLM API costs spiral when teams lack visibility and runtime governance. This article covers five practical ways to monitor and optimize spend: real-time cost tracking, prompt optimization, semantic caching, intelligent model routing, and budget caps with enforcement.

TL;DR

- LLM API costs accumulate silently across teams, models, and features; you can't cut what you can't see

- Token volume, model tier selection, and agentic workflow amplification are the top cost drivers

- Semantic caching reduces API calls by 15-88% for repetitive workloads

- Intelligent model routing delivers 35-85% cost savings while maintaining output quality

- Budget caps with failover prevent runaway spend before it hits your bill

How LLM API Costs Typically Build Up

LLM API costs rarely appear as a single large decision. They accumulate gradually across many small requests, growing as usage spreads across teams and workflows. Common contributors include:

- A support chatbot making 10,000 queries per day

- A document analysis pipeline processing 500 files per hour

- A development team running prompt tests against production budgets

Each source looks manageable in isolation. Together, they add up fast.

Costs are largely invisible during development and early testing. A few dozen API calls during prototyping cost pennies. But in production, when request volume, context length, and model choices interact at scale, often without any team-level attribution in place, the same workflow can generate thousands of dollars per month.

Agentic and multi-step workflows make this worse. Tool-augmented agentic systems require an average of 9.2 times more LLM calls per request than single-turn approaches. A single user action can trigger multiple chained LLM calls, each billed independently, making the total cost per workflow hard to anticipate without instrumentation.

Key Cost Drivers for LLM APIs

Primary Technical Drivers

LLM API costs are driven by three technical factors:

- Token consumption (input + output combined)

- Model tier pricing differences across providers

- Request frequency at scale

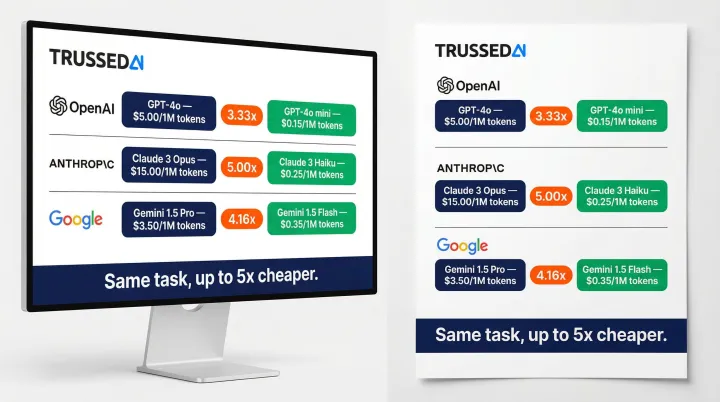

The same task routed to different models can vary in cost by 3x to 5x. For example:

| Provider | Frontier Model | Input Price (per 1M tokens) | Smaller Tier Model | Input Price (per 1M tokens) | Cost Differential |

|---|---|---|---|---|---|

| OpenAI | GPT-5.4 | $2.50 | GPT-5.4-mini | $0.75 | 3.33x |

| Anthropic | Claude Opus 4.6 | $5.00 | Claude Haiku 4.5 | $1.00 | 5.00x |

| Gemini 2.5 Pro | $1.25 | Gemini 2.5 Flash | $0.30 | 4.16x |

Prices are illustrative. Verify current rates against each provider's official pricing documentation.

Routing tasks to smaller models like Haiku 4.5 or GPT-5.4-mini yields immediate 3-5x cost reductions compared to default frontier model usage.

Organizational Decisions That Amplify Cost

Beyond the technical layer, how teams deploy and manage LLMs determines whether those technical costs stay controlled or compound:

- Using a single premium model for all tasks regardless of complexity, routing frontier models to simple classification or lookup tasks that smaller models handle equally well

- Passing full conversation history in every request without summarization or truncation

- Running LLMs in development and staging against production budgets without separate cost tracking or limits

The Deepest Cost Driver: Absence of Attribution

These organizational habits are manageable once teams can see what's driving costs. That visibility is where most enterprises struggle. The FinOps Foundation's 2026 report cites "visibility into AI costs" and "allocating AI costs to business units" as top enterprise challenges.

Without per-team, per-feature, per-model attribution, no amount of technical optimization closes the gap. A control plane that tracks cost in real time across all AI interactions, providing that attribution at the model and application level, is the prerequisite for any meaningful optimization effort. Trussed AI's enterprise AI control plane is built specifically to provide this visibility before teams touch a single routing rule.

5 Ways to Monitor and Optimize LLM API Costs

Effective cost optimization requires both monitoring (seeing where costs originate) and targeted action (changing the decisions and conditions that drive them). The five ways below address both dimensions.

Way 1: Establish Real-Time Cost Tracking and Attribution

Cost attribution is the prerequisite to all other optimization. A single line-item bill tells you nothing actionable.

What proper attribution looks like:

- Cost segmented by team, feature, model, and environment (dev vs. production)

- Individual workflow or session-level cost tracking

- Real-time visibility into exactly where AI dollars are going

How it works:

Route all LLM traffic through a centralized gateway or proxy that tags every request with metadata and logs token consumption, model used, latency, and calculated cost in real time. This does not require application code changes when implemented as a drop-in proxy.

Session-level cost tracking for multi-step workflows:

Grouping related API calls into a single "session" reveals the true cost of completing a task. "Document analysis costs $X per session on average" is more actionable than seeing disconnected individual calls, and it's essential for pricing, capacity planning, and ROI analysis.

In agentic workflows where a single user action can trigger 9.2x more LLM calls than single-turn approaches, session-level tracking prevents cost surprises. Without this visibility, 31% of queries exhibiting semantic similarity to prior requests represent silent, compounding waste.

Priority actions after attribution is configured:

- Identify the top cost-driving features or teams

- Distinguish between high-value and low-value spend

- Use this data to prioritize which of the remaining four optimization strategies to apply first

Way 2: Optimize Prompts to Reduce Token Waste

Prompt inefficiency is one of the most common and correctable sources of excess token consumption.

Key waste patterns:

- Verbose system prompts that repeat context on every call

- Full conversation history passed without summarization

- Few-shot examples included when zero-shot would suffice

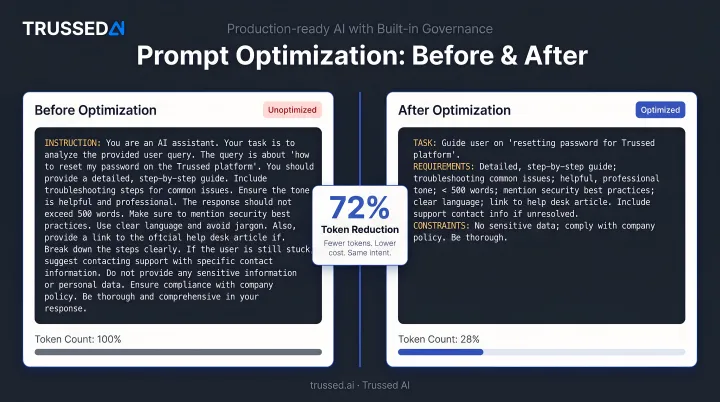

Before/after example:

Before (78 tokens):

You are a helpful assistant designed to analyze customer support tickets and categorize them into one of the following categories: billing, technical support, account management, or general inquiry. Please read the following ticket carefully and provide the most appropriate category based on the content.

After (22 tokens):

Categorize this support ticket as: billing, technical, account, or general.

Same instruction, 72% fewer tokens.

Prompt governance practices that scale:

- Version prompts to track efficiency gains over time

- Use structured templates that enforce token budgets

- Limit RAG context to the most relevant retrieved passages, not all candidate chunks

Token reduction in prompts has a compounding effect, as every call benefits. Extractive compression methods enable up to 10x compression with minimal accuracy degradation.

Way 3: Implement Semantic Caching for Repeated Queries

Research shows 31% of LLM queries exhibit semantic similarity to previous requests. Semantic caching serves stored responses when a new query is sufficiently similar to a prior one, eliminating the API call entirely.

Two types of caching:

- Exact-match caching: Identical requests return cached responses instantly at zero cost

- Semantic caching: Vector similarity matching catches paraphrased or near-duplicate queries

Best use cases for caching:

- FAQ and support bots

- Content lookup

- Reference generation

- Development and testing environments

Not appropriate for:

- Requests requiring real-time data

- Personalized outputs

- Sensitive user information

Realistic cost reduction benchmarks:

AWS ElastiCache benchmarks using real user chatbot queries reduced LLM inference costs by up to 86% and improved average latency by up to 88%. A semantic caching architecture study reduced API calls by up to 68.8% across various query categories.

| Similarity Threshold | Cache Hit Ratio | Cost Savings | Latency Reduction |

|---|---|---|---|

| 0.99 | 23.5% | 15.8% | 17.1% |

| 0.95 | 56.0% | 51.9% | 57.7% |

| 0.80 | 87.6% | 84.6% | 86.1% |

| 0.75 | 90.3% | 86.3% | 88.3% |

A similarity threshold of 0.75 to 0.80 offers an optimal balance, delivering ~85% cost savings while maintaining >91% accuracy.

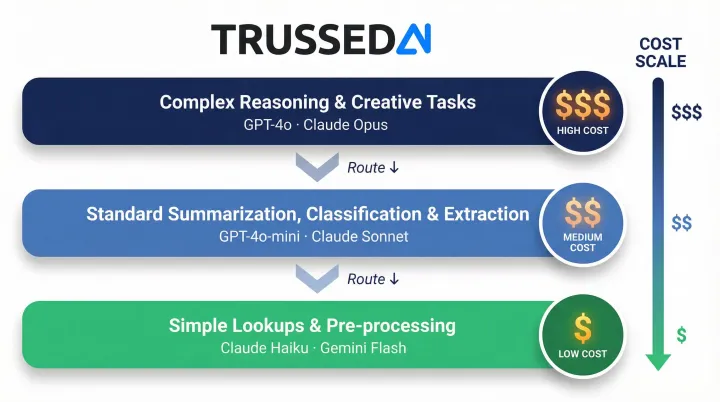

Way 4: Route Requests to the Right Model for the Task

Not every request needs the most powerful (and expensive) model available. A tiered routing strategy assigns each request to the lowest-cost model capable of handling it well.

Simple model routing framework:

- Complex reasoning and creative tasks → Frontier models (GPT-4o, Claude Opus)

- Standard summarization, classification, and extraction → Mid-tier models (GPT-4o-mini, Claude Sonnet)

- Simple lookups and pre-processing → Lightweight or open-source models (Claude Haiku, Gemini Flash)

Intelligent routing mechanisms:

- Rule-based routing: Assign by task type or feature. For example, send all classification tasks to Haiku

- Classifier-based routing: Assess query complexity at runtime and route accordingly. Complex queries go to frontier models, simple queries to lightweight ones

Weighted routing at the gateway layer lets teams start conservatively by sending a small percentage of traffic to a cheaper model and increasing that share as quality is validated.

Benchmarked savings:

RouteLLM from UC Berkeley/LMSYS achieved an 85% cost reduction while maintaining 95% of GPT-4's performance. Amazon Bedrock Intelligent Prompt Routing yielded 48% cost savings routing between Claude 3 Haiku and Claude 3.5 Sonnet V2.

Provider-level prompt caching:

Model routing and provider-level caching stack well together. Major providers like Anthropic and OpenAI offer prompt caching that reduces costs significantly for requests with repeated long system prompts or static context.

- Anthropic: Cache reads discounted by 90% (e.g., $0.50/1M for Opus vs. $5.00/1M base). Cache writes cost 25% more than base input tokens.

- OpenAI: Cached input tokens receive a 50-90% discount (e.g., GPT-4o cached input at $0.25/1M, a 90% discount from $2.50/1M base). No cache write premium.

Prompt caching stacks with model routing for compound savings.

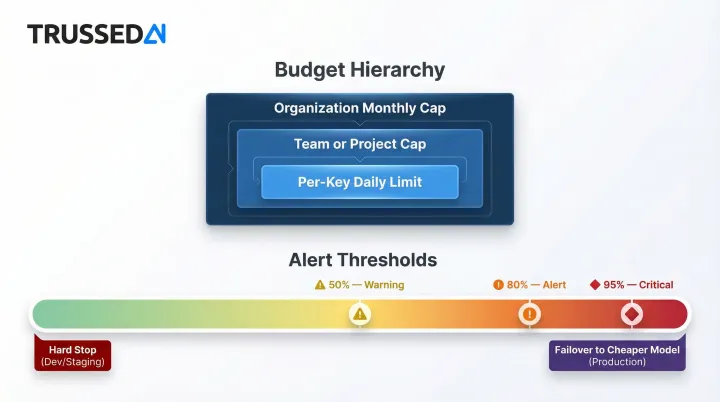

Way 5: Enforce Budget Caps and Spend Controls

Knowing costs are rising does not stop them. Hard budget controls enforced at the gateway layer prevent runaway spend from reaching the bill.

Multi-tier budget hierarchy:

- Organization-level monthly caps

- Team-level or project-level caps

- Per-key daily limits

Configurable behavior when a cap is hit:

- Hard stop in dev/staging environments

- Automatic failover to a cheaper model in production

Alert thresholds as an early-warning layer:

Graduated alerts at 50%, 80%, and 95% of budget give teams time to investigate and respond before limits are breached.

Failure mode this prevents:

Automated batch jobs, misconfigured agents, or heavy individual users silently consuming budget over a weekend with no notification until invoice time. One misconfigured agent asked GPT-4 "should I retry?" 847 times in an infinite loop, costing $63 overnight.

Virtual API keys with per-key budgets:

In multi-developer or multi-agent environments, virtual API keys give each team or workflow its own spending envelope, preventing one team's overrun from affecting others.

Conclusion

Reducing LLM API costs depends first on knowing where costs originate. Attribution and visibility are the prerequisites that make the other four strategies actionable from day one.

Cost optimization is not a one-time project but an ongoing governance practice. As models change, usage scales, and new agents are deployed, cost patterns shift. Teams that treat cost management as continuous runtime governance, monitoring, enforcing controls, and iterating on routing and prompt strategies, stay ahead of cost problems rather than reacting after the damage is done.

Trussed AI's enterprise AI control plane is built for exactly this kind of ongoing oversight. Key capabilities include:

- Real-time cost tracking and attribution across teams, models, and workflows

- Intelligent routing to optimize model selection at runtime

- Budget enforcement with configurable failover, no application code changes required

Frequently Asked Questions

What are the biggest drivers of high LLM API costs in production?

Token volume (input + output combined), model tier selection, lack of per-feature attribution, and the cost amplification effect of agentic or multi-step workflows are the primary drivers. Agentic systems require an average of 9.2x more LLM calls per request than single-turn approaches, multiplying costs rapidly.

How do I attribute LLM API costs to specific teams or features?

Route all LLM traffic through a centralized gateway or proxy that tags requests with team, feature, and environment metadata. This enables per-dimension cost attribution without requiring application code changes, giving you real-time visibility into which teams, features, and environments are driving spend.

What is the difference between semantic caching and prompt caching?

Semantic caching stores and reuses LLM responses based on query similarity, eliminating redundant API calls entirely. Prompt caching is a provider-side feature that reduces the cost of re-processing repeated system prompts or static context within a single model's context window.

Can I reduce LLM API costs without switching to cheaper models?

Yes. Prompt optimization, semantic caching, budget caps, and better attribution can each deliver meaningful savings without changing the underlying model. Prompt compression enables up to 10x token reduction, and semantic caching can reduce API calls by 15-88% for repetitive workloads.

How do budget caps work without breaking production applications?

Production-safe budget controls use soft failover behavior, automatically routing requests to a lower-cost model when a cap is hit, rather than hard stops, which are better suited to dev and staging environments. This maintains availability while preventing runaway spend.

How much can organizations realistically save by optimizing LLM API costs?

Semantic caching delivers 15-88% cost reduction depending on similarity thresholds applied. Intelligent model routing achieves 35-85% savings for routed workloads, with extreme cases reporting up to 98% savings. Organizations combining multiple strategies commonly reach 40-60% overall cost reduction.