Introduction

Between January 2025 and early 2026, the Trump Administration issued multiple executive orders on AI and put forward a national legislative framework, while sector regulators in finance, healthcare, and insurance moved ahead with their own enforcement priorities. For enterprises deploying AI in production, two compliance tracks, federal policy and sector-specific regulation, are running simultaneously, and neither is waiting for the other to catch up.

This article covers the key federal policy actions, what the National AI Legislative Framework's seven objectives require in practice, and what regulated-industry enterprises should do now.

TLDR

- Federal AI policy shifted decisively toward innovation. The Trump Administration built a unified framework designed to override conflicting state laws

- Key actions include Executive Order 14179 (January 2025), the "Winning the Race" AI Action Plan (July 2025), Executive Order 14365 (December 2025), and the National AI Legislative Framework (March 2026)

- The March 2026 Legislative Framework sets seven objectives covering child safety, IP, free speech, workforce, and federal preemption

- Compliance is two-layered: evolving federal standards plus sector-specific rules from HHS, OCC, and state insurance regulators

- Build AI governance infrastructure now. Waiting for final rules means playing catch-up under pressure

The Trump Administration's AI Policy Agenda: Key Actions from 2025 to 2026

Since January 2025, the Trump Administration has moved quickly to reshape federal AI policy, prioritizing U.S. competitiveness and pushing back against a growing web of state-level AI regulations. Here's how that policy arc unfolded.

Executive Order 14179 (January 23, 2025), titled "Removing Barriers to American Leadership in Artificial Intelligence," set the tone immediately. It revoked the prior administration's AI executive order and directed federal agencies to eliminate regulatory barriers to AI adoption and deployment.

The July 2025 "Winning the Race: America's AI Action Plan" framed AI as a national competitiveness issue. The plan emphasized innovation infrastructure, international engagement, and accelerating deployment of AI applications across sectors. It explicitly encouraged open-source and open-weight AI to ensure startups and academics could innovate without relying solely on closed-model providers.

Executive Order 14365 (December 11, 2025), "Ensuring a National Policy Framework for Artificial Intelligence," established the policy of a "minimally burdensome national standard." This order created two key mechanisms:

- AI Litigation Task Force under the Attorney General to challenge state AI laws on grounds they violate the Commerce Clause or are preempted by federal law

- Commerce Department mandate to evaluate and identify onerous state AI laws within 90 days

The December 2025 order also directed the FTC Chairman to issue a policy statement explaining how the FTC Act's prohibition on unfair and deceptive practices applies to AI models and could preempt state laws requiring alterations to AI outputs. As of April 2026, that FTC policy statement has not been published, a notable gap enterprises in regulated industries are still watching closely.

The March 2026 National AI Legislative Framework marked the administration's first formal move to codify these priorities into law. The White House transmitted legislative recommendations to Congress, fulfilling the December 2025 directive and signaling that federal preemption of state AI rules could soon shift from executive policy to statute.

The March 2026 National AI Legislative Framework: 7 Key Objectives Explained

The framework provides Congress with recommendations covering seven high-level objectives. The Administration's stated preference is to rely on existing sector-specific regulators rather than create a new federal AI rulemaking body. The objectives below are grouped thematically, not sequentially.

Protecting Children, Free Speech, and Communities

Objective 7, Federal Preemption: Congress is asked to pass a preemptive federal AI law to prevent "fifty discordant state regulations." For enterprises operating across multiple states, this is the most consequential objective in the framework. A single federal standard would replace a patchwork of up to 50 differing state AI laws, significantly reducing compliance complexity.

The framework preserves state authority over:

- Generally applicable consumer and child protection laws

- State zoning and infrastructure siting

- A state's own procurement and use of AI in public services

Federal vs. State AI Regulation: The Preemption Battle

The Trump Administration argues that state-by-state AI regulation creates compliance burdens for startups and enterprises, can force AI models to produce biased or inaccurate outputs, and may unconstitutionally impinge on interstate commerce. Colorado's algorithmic discrimination law (SB24-205) is cited as a specific example of problematic state regulation.

The Litigation Mechanism

The AI Litigation Task Force, established under the December 2025 executive order, is authorized to challenge state AI laws in court on grounds they:

- Violate the Commerce Clause

- Are preempted by existing federal law

- Are otherwise unlawful

As of April 2026, the Task Force has not filed any public lawsuits against specific state AI laws. State-level implementation continues regardless: Colorado's law takes effect on June 30, 2026.

State Laws Still in Force

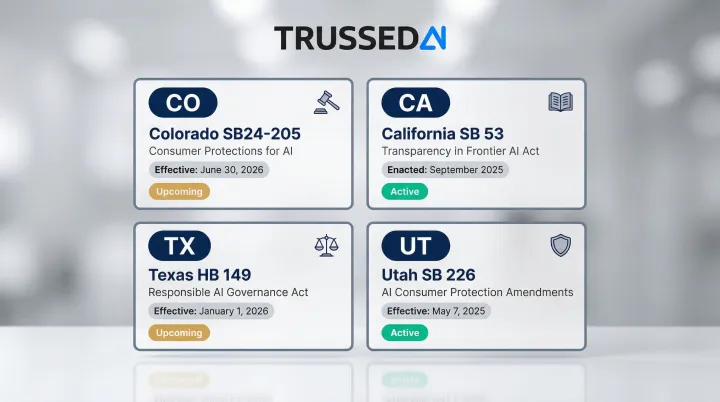

In 2025, all 50 states introduced AI-related legislation, with 38 states adopting approximately 100 measures. Key enacted laws include:

| State | Law | Status |
|---|---|---|
| Colorado | Consumer Protections for AI (SB24-205) | Effective June 30, 2026 |
| California | Transparency in Frontier AI Act (SB 53) | Enacted September 2025 |
| Texas | Responsible AI Governance Act (HB 149) | Effective January 1, 2026 |
| Utah | AI Consumer Protection Amendments (SB 226) | Effective May 7, 2025 |

Multi-State Compliance Obligations Today

With no comprehensive federal AI legislation in place, the current landscape remains a patchwork. Enterprises operating across multiple states face different obligations simultaneously, and sector-specific regulators, including HHS, OCC, and state insurance commissioners, retain existing authority over AI use in their domains regardless of any emerging federal framework.

The preemption carve-outs built into that framework also preserve state authority in several key areas:

- Fraud prevention and consumer protection enforcement

- Child safety regulations

- Infrastructure zoning requirements

- State government's own use of AI

Many state requirements will continue to bind enterprises even after federal legislation eventually passes.
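
One way to keep track of this patchwork is a small lookup that sorts state laws by whether they are already in effect on a given date. The sketch below is illustrative only: the `StateLaw` class and `laws_to_review` function are hypothetical names, the dates are copied from the table above, and a law whose effective date the table does not state is flagged for manual review rather than guessed.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class StateLaw:
    state: str
    name: str
    effective: Optional[date]  # None means the table gives no effective date


# Entries mirror the table above; this is a tracking aid, not legal advice.
STATE_LAWS = [
    StateLaw("Colorado", "Consumer Protections for AI (SB24-205)", date(2026, 6, 30)),
    StateLaw("California", "Transparency in Frontier AI Act (SB 53)", None),
    StateLaw("Texas", "Responsible AI Governance Act (HB 149)", date(2026, 1, 1)),
    StateLaw("Utah", "AI Consumer Protection Amendments (SB 226)", date(2025, 5, 7)),
]


def laws_to_review(operating_states, as_of):
    """Group laws for the given states into in-effect, upcoming, and unknown-date."""
    result = {"in_effect": [], "upcoming": [], "check_date": []}
    for law in STATE_LAWS:
        if law.state not in operating_states:
            continue
        if law.effective is None:
            result["check_date"].append(law.name)
        elif law.effective <= as_of:
            result["in_effect"].append(law.name)
        else:
            result["upcoming"].append(law.name)
    return result


review = laws_to_review({"Colorado", "Texas", "California"}, date(2026, 4, 15))
for bucket, names in review.items():
    print(bucket, names)
```

Keeping unknown dates in their own bucket avoids silently treating a law as either binding or not binding when the source data is incomplete.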

What These Policies Mean for Enterprises in Regulated Industries

While the federal framework signals a lighter regulatory touch on AI innovation, sector-specific regulators in healthcare, financial services, and insurance are independently advancing AI governance requirements. Regulated enterprises face dual compliance obligations regardless of the federal-state debate.

Overlapping Regulatory Authorities

The framework's emphasis on existing regulators means enterprises must map their AI deployments to multiple overlapping authorities:

- FTC consumer protection standards

- Sector-specific agency guidance (HHS for healthcare, OCC for banking)

- Surviving state laws

- Industry-specific standards (NAIC for insurance)

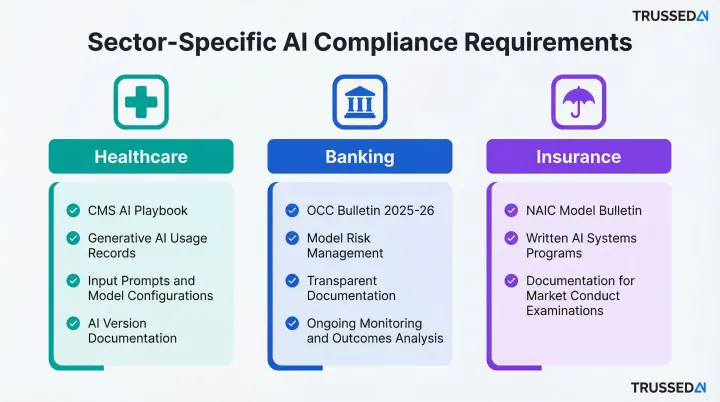
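
As a rough illustration of that mapping exercise, the sketch below attaches a list of authorities to each AI deployment based on its sector and the states where it runs. The `Deployment` class, the `authorities_for` function, and the sector keys are assumptions made for this example; the sector-to-regulator pairings come from the list above, and a real mapping would need legal review.

```python
from dataclasses import dataclass, field

# Sector-to-regulator pairings taken from the list above.
SECTOR_AUTHORITIES = {
    "healthcare": ["HHS"],
    "banking": ["OCC"],
    "insurance": ["NAIC model bulletin (via state insurance commissioners)"],
}


@dataclass
class Deployment:
    name: str
    sector: str
    states: list = field(default_factory=list)


def authorities_for(deployment):
    """Return the authorities a deployment should be mapped to, in a stable order."""
    authorities = ["FTC consumer protection standards"]
    authorities += SECTOR_AUTHORITIES.get(deployment.sector, [])
    # Each operating state is listed as a review item, not as a confirmed obligation.
    authorities += [f"State law review: {state}" for state in sorted(deployment.states)]
    return authorities


claims_bot = Deployment("claims-triage", "insurance", ["Texas", "Colorado"])
for authority in authorities_for(claims_bot):
    print(authority)
```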

Sector-Specific Requirements Remain Fully Intact

Healthcare: The CMS Artificial Intelligence Playbook requires comprehensive records of all generative AI tool use, including input prompts, model configurations, training datasets, and evaluation activities. When AI generates work products supporting official actions, those products must include the AI model version and prompts used.

Banking: OCC Bulletin 2025-26 clarifies Model Risk Management (MRM) for community banks, emphasizing that MRM should be commensurate with risk exposures and business activities. Documentation must be transparent and explainable, with ongoing monitoring and outcomes analysis.

Insurance: State insurance commissioners rely on the NAIC Model Bulletin on the "Use of Artificial Intelligence Systems by Insurers," requiring insurers to establish written AI Systems Programs and maintain documentation regulators may request during market conduct examinations.
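
To make the healthcare record-keeping expectation concrete, the sketch below checks whether a generative AI usage record contains the four elements named in the CMS paragraph above. The field names and the `missing_fields` helper are hypothetical; the playbook itself does not prescribe a schema in anything quoted here.

```python
# Field names are illustrative stand-ins for the elements named above:
# input prompts, model configurations, training datasets, evaluation activities.
REQUIRED_FIELDS = (
    "input_prompts",
    "model_configuration",
    "training_datasets",
    "evaluation_activities",
)


def missing_fields(record):
    """Return required fields that are absent or empty in a usage record."""
    return [name for name in REQUIRED_FIELDS if not record.get(name)]


record = {
    "input_prompts": ["Summarize the attached prior-authorization notes."],
    "model_configuration": {"model_version": "example-1.0", "temperature": 0},
    "training_datasets": [],  # empty, so it is reported as missing
    "evaluation_activities": ["manual spot check"],
}
print(missing_fields(record))
```

Treating empty values as missing is a deliberate choice here: an empty list usually means the evidence was never captured, which is the gap an examiner would ask about.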

Building Audit Readiness Now

The framework's direction toward federal disclosure standards and the pending FTC policy statement on AI outputs mean enterprises need to build audit-trail infrastructure now, before formal requirements arrive.

Manual evidence reconstruction is time-consuming and error-prone. Organizations that wait for mandates to solidify often find themselves scrambling to piece together logs, model versions, and policy evaluations after the fact.

Platforms like Trussed AI generate governance evidence as a byproduct of every governed AI interaction, making organizations audit-ready before mandates arrive. The platform automatically maintains complete audit trails, logging policy evaluation results, model versions, timestamps, and data lineage. Organizations can then demonstrate compliance to sector-specific regulators in real time rather than reconstructing evidence after an examination begins.

How to Stay AI-Compliant as Policy Continues to Evolve

Organizations should treat the current period as a "governance runway," using the time before federal legislation passes to build internal AI governance infrastructure that will meet whichever standard emerges.

Map to Sector-Specific Guidance First

Start with your existing sectoral regulator's guidance as the baseline:

- Healthcare organizations: HHS AI guidance and CMS playbook

- Banking institutions: OCC model risk management standards

- Insurance companies: NAIC AI bulletin and state commissioner requirements

These obligations are unaffected by the federal-state preemption debate and represent your immediate compliance floor.

Build Governance Infrastructure That Scales

Establish foundational capabilities now:

- Policy documentation and version control

- Risk classification frameworks aligned to NIST AI Risk Management Framework

- Model inventory and monitoring systems

- Audit trail generation for all AI interactions
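
The audit-trail capability in the list above can be sketched as a structured record written once per AI interaction. This is a minimal illustration under stated assumptions, not a reference to any particular product: the `AuditRecord` fields and the `to_log_line` helper are hypothetical, and the JSON-lines format is just one reasonable choice for an append-only log.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    model_name: str
    model_version: str
    policy_results: dict  # policy id -> "pass" or "fail"
    data_sources: list    # data lineage: where the inputs came from
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_log_line(record):
    """Serialize a record as one JSON line, suitable for an append-only log file."""
    return json.dumps(asdict(record), sort_keys=True)


record = AuditRecord(
    model_name="claims-summarizer",
    model_version="1.2.0",
    policy_results={"pii_check": "pass"},
    data_sources=["claims_db.export_2026_04"],
)
print(to_log_line(record))
```

Capturing the record at the time of the interaction, rather than reconstructing it later, is the point: the timestamp and model version are fixed when the call happens.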

Implement Runtime Policy Enforcement

Trussed AI enforces governance policies at runtime across models, agents, and workflows, turning static compliance requirements into real-time operational controls. Key deployment characteristics include:

- A compliance violation rate below 1% across governed interactions

- Complete audit trails generated automatically as a byproduct of every interaction

- Drop-in proxy integration with zero application code changes

- Deployment in approximately four weeks
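
For readers unfamiliar with the pattern, runtime policy enforcement in general can be sketched as a wrapper that evaluates policies before a model call and records the outcome either way. The code below is a generic illustration and does not represent Trussed AI's API or implementation; `governed_call`, `block_ssn`, and the stand-in model are all hypothetical.

```python
import re


def block_ssn(prompt):
    """Hypothetical policy: fail prompts containing a US SSN-like pattern."""
    found = re.search(r"\b\d{3}-\d{2}-\d{4}\b", prompt) is not None
    return ("block_ssn", not found)


def governed_call(prompt, model, policies, audit_log):
    """Run every policy, log the results, and call the model only if all pass."""
    results = [policy(prompt) for policy in policies]
    allowed = all(passed for _, passed in results)
    audit_log.append({"allowed": allowed, "policy_results": dict(results)})
    if not allowed:
        return None  # blocked: the model is never invoked
    return model(prompt)


def echo_model(prompt):
    """Stand-in for a real model client."""
    return prompt.upper()


audit_log = []
print(governed_call("summarize this claim", echo_model, [block_ssn], audit_log))
print(governed_call("member SSN is 123-45-6789", echo_model, [block_ssn], audit_log))
print(audit_log)
```

Because the log entry is written before the allow or block decision returns, blocked requests leave the same evidence trail as permitted ones.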

With enforcement infrastructure in place, the next priority is staying ahead of the policy changes that will require it.

Stay Current on Federal and State Developments

Monitor three parallel tracks:

- Federal legislative progress on the March 2026 framework

- Sector-specific regulator guidance updates

- State law developments in jurisdictions where you operate

Because the AI Litigation Task Force has not yet challenged state laws in court, assume state requirements remain binding until formally preempted.

Frequently Asked Questions

What is the US federal government's AI policy and action plan?

The current federal AI policy is shaped by Executive Order 14179 (January 2025) and the July 2025 AI Action Plan, both focused on maximizing U.S. AI leadership through minimal regulatory burden. The March 2026 National AI Legislative Framework provides Congress with specific legislative recommendations across seven objectives.

What AI programs does the US government run?

Key government-run AI programs include:

- NIST AI Risk Management Framework: voluntary framework for managing AI risk

- NSF National AI Research Institutes: funding network connecting 500+ institutions globally

- CISA AI Security Roadmap: cybersecurity guidance for AI systems

- GSA AI Center of Excellence: established by the AI in Government Act of 2020

What is an AI governance program?

An AI governance program is an organization's internal framework for managing AI risks, ensuring compliance, and enforcing policies across AI systems. It covers model oversight, audit trails, security controls, and accountability mechanisms aligned with regulatory requirements.

What is House Bill 7396?

H.R. 7396 (119th Congress) is the "Native American Entrepreneurial Opportunity Act," introduced February 5, 2026, to establish the Office of Native American Affairs within the Small Business Administration. It contains no provisions related to artificial intelligence.

Does the federal AI policy preempt all state AI laws?

No comprehensive federal AI law has passed, so state requirements remain binding. The Administration is pursuing preemption of conflicting state AI laws, but the proposed framework explicitly preserves state authority over child protection, consumer fraud, zoning, and states' own use of AI.

How should enterprises prepare for changing U.S. AI regulations?

Enterprises should take three concrete steps now: build internal AI governance infrastructure, map current deployments to sector-specific regulatory guidance, and implement runtime policy enforcement. These actions keep organizations compliant with current obligations while positioning them to adapt quickly as federal standards evolve.