Introduction

Enterprises face a dual governance crisis: third parties are embedding AI into their products without disclosure, while the AI vendor ecosystem has expanded into a risk surface that traditional TPRM tools were never designed to address. A 2026 security report reveals that 98% of organizations now use at least one third-party SaaS application with embedded AI capabilities, yet fewer than 30% have a formal AI vendor risk assessment process in place.

AI TPRM operates on two critical dimensions: managing the risks that AI vendors introduce into your organization, and using AI capabilities to run a more effective TPRM program. The exposure is significant - 99.4% of US CISOs surveyed experienced at least one SaaS or AI ecosystem security incident in 2025, with 13% reporting breaches of AI models or applications.

This guide delivers a clear picture of AI-specific risks, a practical framework for evaluating AI vendors, and how to build governance that keeps pace with AI's rate of change.

TLDR

- AI vendors introduce risks - bias, hallucinations, model drift, black-box decisions - that standard questionnaires aren't built to catch

- One AI product typically spans multiple model providers, data vendors, and infrastructure layers, each carrying its own risk exposure

- Governance must shift from annual assessments to continuous monitoring - AI model behavior can change with no new software release

- Regulators in financial services, healthcare, and the EU are already issuing AI-specific third-party requirements that go beyond existing frameworks

- TPRM is changing too: AI now automates due diligence, powers predictive risk scoring, and enables live vendor monitoring in place of periodic reviews

What Is AI Third-Party Risk Management?

AI TPRM is the practice of identifying, evaluating, and continuously monitoring the risks that arise when vendors, suppliers, or service providers use AI in their products or service delivery. Unlike traditional TPRM, it addresses new risk categories: model drift, algorithmic bias, explainability failures, and training data lineage issues.

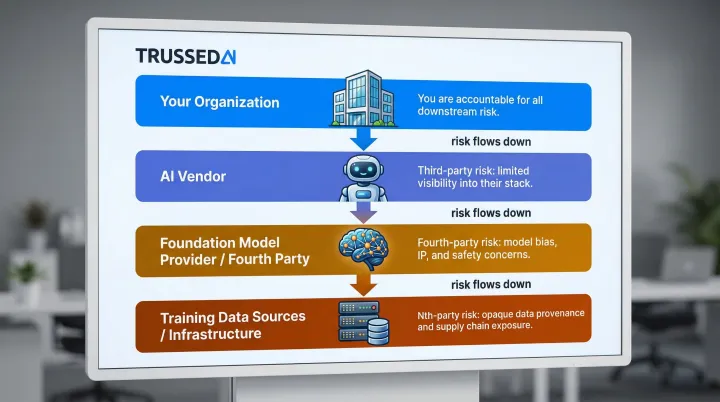

The NIST AI Risk Management Framework defines third-party entities as "providers, developers, vendors, and evaluators of data, algorithms, models, and/or systems and related services for another organization." That definition points to a layered problem, not a single risk type.

Organizations must assess the AI vendor directly, the AI models those vendors use (often licensed from a fourth party), the training data sourced from yet another party, and the infrastructure layers underneath. This is the nth-party problem in AI.

A Forrester study found that 45% of organizations experienced third-party-related business interruptions over a two-year period, a rate that rises sharply when AI dependencies go unmapped.

The Awareness Gap

Research suggests that 47% of employees use generative AI platforms through personal accounts their companies aren't overseeing. This shadow AI creates blind spots where enterprises lack visibility into vendor AI use, even when those vendors have embedded AI capabilities into their core products.

Gartner predicts that 40% of enterprise applications will include task-specific AI agents by the end of 2026, up from less than 5% in 2025. Most of those deployments will arrive without a governance baseline in place.

The Unique Risks AI Vendors Introduce

Data Risk and Training Data Reuse

When organizations share data with AI vendors, that data may be used to train or fine-tune models, stored in environments outside the organization's control, or exposed to other model users. The Federal Trade Commission has explicitly warned that it may be unfair or deceptive for a company to adopt more permissive data practices, such as using consumer data for AI training, through surreptitious, retroactive amendments to terms of service or privacy policies.

Standard data handling clauses in contracts often fail to address training data reuse. Organizations should demand:

- Explicit data lineage documentation showing where training data originated

- Prohibitions or permissions around using organizational data for model training

- Confirmation that proprietary data is isolated from multi-tenant model improvements

- Clear data retention and deletion policies specific to AI contexts

Compliance and Regulatory Risk

AI vendors, especially newer entrants, may not be built to meet the compliance requirements of regulated industries. An AI vendor's non-compliance becomes the customer's compliance violation, across every regulated sector the vendor touches.

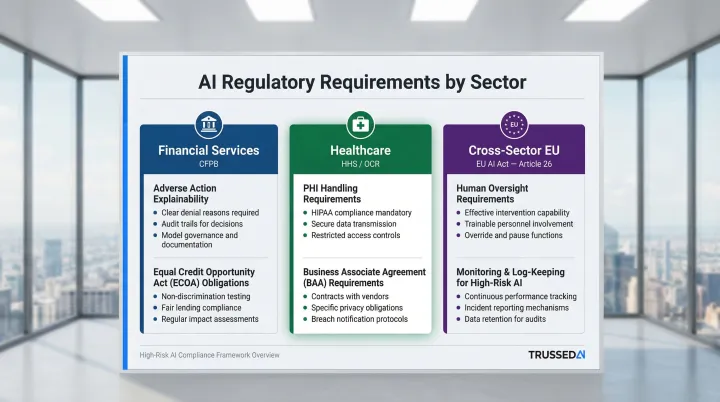

Regulatory Guidance by Sector:

| Sector | Regulatory Body | Enforcement Focus |
|---|---|---|
| Financial Services | CFPB | The Equal Credit Opportunity Act requires creditors to explain specific reasons for adverse actions; complex algorithms or "black-box" AI models are not a defense for failing to provide accurate explanations |
| Healthcare | HHS/OCR | Tracking technology vendors that create, receive, maintain, or transmit PHI on behalf of a regulated entity are Business Associates and require a Business Associate Agreement (BAA) |
| Cross-Sector (EU) | EU AI Act | Imposes strict obligations on deployers of high-risk AI systems, including human oversight, monitoring, and log-keeping (Article 26) |

Regulators across jurisdictions are uniformly rejecting the premise that AI complexity excuses organizations from existing compliance, fair lending, and data protection obligations.

Operational and Black-Box Risk

Many AI models cannot explain their outputs, creating audit and accountability problems when AI-driven decisions affect credit, insurance underwriting, or patient care. Model drift, where an AI system's performance degrades over time as data patterns shift, compounds this. Google Flu Trends missed the peak of the 2013 flu season by 140% precisely because search behavior changed in ways the model wasn't built to track.

That degradation can happen between formal assessment cycles with no code change and no software release, which means point-in-time vendor assessments miss the risk entirely. Continuous monitoring is the only reliable control.
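One way to make that continuous monitoring concrete is a distribution-shift check on the scores a vendor's model returns. The sketch below computes a Population Stability Index (PSI) between a baseline sample and a recent sample. The function names, the assumption that scores fall in [0, 1], and the 0.25 alert threshold (a common rule of thumb, not a standard) are illustrative choices, not something prescribed by the frameworks cited in this guide.

```python
import math


def population_stability_index(baseline, current, bins=10):
    """Compare two samples of model scores, each assumed to lie in [0, 1]."""

    def bucket_shares(values):
        counts = [0] * bins
        for value in values:
            # Clamp so that 1.0 (and any out-of-range score) lands in a valid bin.
            index = min(max(int(value * bins), 0), bins - 1)
            counts[index] += 1
        # Floor each share at a tiny value so empty bins do not cause log(0).
        return [max(count / len(values), 1e-6) for count in counts]

    baseline_shares = bucket_shares(baseline)
    current_shares = bucket_shares(current)
    return sum(
        (cur - base) * math.log(cur / base)
        for base, cur in zip(baseline_shares, current_shares)
    )


# Hypothetical threshold: a PSI above 0.25 is often treated as a significant shift.
DRIFT_ALERT_THRESHOLD = 0.25


def drift_detected(baseline, current):
    """Return True when the recent sample has shifted past the alert threshold."""
    return population_stability_index(baseline, current) > DRIFT_ALERT_THRESHOLD
```

A check like this only signals that the output distribution has moved. It does not by itself show that accuracy has degraded, so an alert would normally trigger a closer review with the vendor.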

AI-Specific Concentration Risk

The monitoring challenge above gets harder when organizations depend on a small number of AI providers. If one of those providers goes down, changes its terms, or faces regulatory action, there are few fallback options, and the failures cascade fast. A Zapier survey of enterprise executives found that 74% report day-to-day disruption or outright reliance on their primary AI vendor, and 47% state that ending AI services would disrupt key business functions.

Gartner identifies cloud concentration as a top emerging risk, noting that high vendor dependence reduces future technology options and allows vendors to exert significant influence. Mapping the AI supply chain to its model-layer dependencies is a critical but often overlooked step.
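As a rough illustration of mapping model-layer dependencies, the sketch below counts how many vendors in an inventory rely on each upstream model provider and flags providers above a chosen share. The vendor and provider names, the inventory structure, and the 50% threshold are all hypothetical placeholders.

```python
from collections import defaultdict

# Hypothetical inventory: vendor -> upstream model providers it depends on.
VENDOR_MODEL_DEPENDENCIES = {
    "claims-triage-vendor": ["model-provider-a"],
    "support-chatbot-vendor": ["model-provider-a", "model-provider-b"],
    "fraud-scoring-vendor": ["model-provider-a"],
    "doc-summary-vendor": ["model-provider-c"],
}


def provider_concentration(dependencies):
    """Return the share of vendors that depend on each model provider."""
    vendors_by_provider = defaultdict(set)
    for vendor, providers in dependencies.items():
        for provider in providers:
            vendors_by_provider[provider].add(vendor)
    total_vendors = len(dependencies)
    return {
        provider: len(vendors) / total_vendors
        for provider, vendors in vendors_by_provider.items()
    }


def concentrated_providers(dependencies, threshold=0.5):
    """List providers that at least `threshold` of vendors rely on."""
    shares = provider_concentration(dependencies)
    return sorted(p for p, share in shares.items() if share >= threshold)
```

In this made-up inventory, three of the four vendors sit on the same provider, so an outage or terms change at that provider would reach most of the portfolio at once.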

Reputational and Bias Risk

When a third-party AI system produces biased, discriminatory, or factually incorrect outputs, liability flows back to the enterprise deploying it. "AI washing," in which vendors overstate their AI capabilities or safeguards, makes this worse, because organizations build risk programs around capabilities that don't exist.

In March 2024, the SEC charged two investment advisers with making false and misleading statements about their purported use of artificial intelligence; the firms agreed to pay a total of $400,000 in civil penalties. Technical validation during due diligence, not just vendor representations, is the only way to verify what an AI system actually does. IBM reports that 60% of AI-related security incidents led to compromised data and 31% caused operational disruption. Bias and reputational risk carry real financial consequences.

A Step-by-Step Framework for AI Vendor Risk Management

Step 1: AI Strategy and Needs Identification

Before evaluating vendors, define your organization's risk appetite for AI use across specific business functions: credit scoring, claims processing, patient intake, diagnostic support. For each use case, document the required level of model transparency, auditability, and explainability.

A healthcare organization, for instance, might require full explainability for diagnostic AI while accepting lower transparency for administrative chatbots. This internal baseline becomes the benchmark for every downstream vendor decision.

Step 2: Vendor Identification and Tiering

Build a vendor inventory that specifically flags AI use (including vendors that have embedded AI without explicit disclosure) and tier them by AI risk level based on:

- Sensitivity of data shared with the vendor

- Criticality of the business function affected

- Degree of AI autonomy in decisions

- Regulatory exposure of the use case

Mission-critical AI vendors (automated underwriting, fraud detection, diagnostic support) warrant the deepest scrutiny. Supporting vendors (scheduling assistants, document summarization) may require lighter-touch assessments.
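The four tiering factors above can be turned into a simple additive score. The following is a minimal sketch under stated assumptions: the 1-to-3 rating scale, the cut-offs, and the middle "elevated" tier are hypothetical and would need calibrating to your own risk appetite.

```python
from dataclasses import dataclass


@dataclass
class VendorProfile:
    name: str
    data_sensitivity: int      # 1 = low, 2 = moderate, 3 = high
    function_criticality: int  # same 1-3 scale for every factor
    ai_autonomy: int
    regulatory_exposure: int


def assign_tier(vendor):
    """Map the four factor ratings to a tier using hypothetical cut-offs."""
    factors = [
        vendor.data_sensitivity,
        vendor.function_criticality,
        vendor.ai_autonomy,
        vendor.regulatory_exposure,
    ]
    if any(factor not in (1, 2, 3) for factor in factors):
        raise ValueError("each factor must be rated 1, 2, or 3")
    total = sum(factors)  # ranges from 4 to 12
    if total >= 10:
        return "mission-critical"
    if total >= 7:
        return "elevated"  # hypothetical middle tier, not named in the text
    return "supporting"
```

For example, `assign_tier(VendorProfile("automated-underwriting", 3, 3, 3, 3))` lands in the mission-critical tier, while a scheduling assistant rated 1 on every factor lands in the supporting tier.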

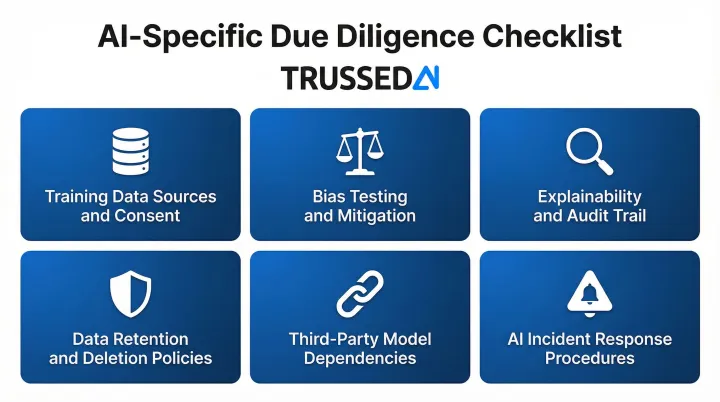

Step 3: AI-Specific Due Diligence

Standard due diligence questionnaires miss AI-specific risks. A rigorous AI-focused assessment should address:

- Model training data sources and consent: Where did training data originate, and was it obtained with proper consent?

- Bias testing and mitigation practices: How does the vendor test for and address algorithmic bias?

- Explainability and audit trail capabilities: Can the vendor explain model decisions and provide audit trails?

- Data retention and deletion policies: How is organizational data handled, stored, and deleted?

- Third-party model dependencies: Does the vendor rely on foundation models from other providers?

- Incident response procedures specific to AI failures: What happens when the model drifts or produces incorrect outputs?

The FS-ISAC Generative AI Vendor Risk Assessment Guide and NIST AI RMF provide structured frameworks for this assessment.
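To keep these six topics from being skipped, a team could track them as a structured checklist and flag any a vendor leaves blank. This is only a sketch: the topic keys and the response format are hypothetical and are not taken from the FS-ISAC guide or NIST AI RMF.

```python
# Hypothetical topic keys mirroring the six due diligence areas listed above.
REQUIRED_TOPICS = [
    "training_data_sources_and_consent",
    "bias_testing_and_mitigation",
    "explainability_and_audit_trail",
    "data_retention_and_deletion",
    "third_party_model_dependencies",
    "ai_incident_response",
]


def unanswered_topics(responses):
    """Return required topics with no answer or only whitespace."""
    return [
        topic
        for topic in REQUIRED_TOPICS
        if not responses.get(topic, "").strip()
    ]


# Example vendor submission with two gaps (one missing, one blank).
example_responses = {
    "training_data_sources_and_consent": "Licensed datasets; consent records available.",
    "bias_testing_and_mitigation": "Quarterly fairness testing.",
    "explainability_and_audit_trail": "   ",
    "data_retention_and_deletion": "30-day retention, deletion on request.",
    "third_party_model_dependencies": "Uses one external foundation model.",
}
gaps = unanswered_topics(example_responses)
```

A completeness check like this says nothing about the quality of the answers; it only makes sure each area is raised before the assessment is signed off.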

Step 4: Contract Management and AI-Specific Provisions

Contracts with AI vendors must go beyond standard SLAs to include:

- Mandatory disclosure of AI use changes and new model deployments

- Explicit prohibitions or permissions around training data use

- Audit and testing rights for AI systems

- Requirements for bias monitoring and explainability reporting

- Breach notification timelines specific to AI incidents

The EU Public Buyers Community published updated EU AI model contractual clauses in 2024, including incident reporting clauses requiring providers to promptly notify deployers of any "Serious Incident" and cooperate in investigations.

Schedule a contract review whenever a vendor announces a new model version, a change in data sourcing, or an update to their AI stack - not just at annual renewal.

Step 5: Continuous Monitoring and Audit

Annual assessments are insufficient: model behavior can shift without a single software release. Ongoing AI vendor monitoring includes:

- Tracking model performance metrics for degradation or drift

- Watching for regulatory and adverse media signals about the vendor's AI practices

- Monitoring for changes to the vendor's model providers or data sources

- Conducting periodic re-assessments when the vendor adds new AI capabilities

Automated monitoring tools can flag anomalies faster than manual reviews. For organizations managing AI at scale across multiple vendors and workflows, this becomes a governance volume problem: there are simply too many interactions to audit by hand. Platforms like Trussed AI address this directly, turning governance policies into real-time enforcement at every AI interaction, with audit trails generated automatically as a byproduct.

Step 6: Offboarding and Residual Risk Elimination

Terminating an AI vendor relationship requires more than revoking access. Three residual risks demand explicit attention:

- Verify that proprietary data used in model training has been deleted or returned

- Confirm that any fine-tuned models built on organizational data are decommissioned

- Map the transition gap: the window between offboarding one vendor and fully operationalizing a replacement carries elevated risk

The most common oversight: organizations negotiate data deletion verbally rather than contractually. Draft offboarding requirements at contract signing - including deletion verification timelines and decommissioning obligations - so they are enforceable when the relationship ends.

How AI Tools Are Transforming the TPRM Function Itself

From Periodic to Continuous Monitoring

AI-powered TPRM platforms can monitor thousands of vendors in real time by ingesting news feeds, financial signals, regulatory updates, adverse media, and cybersecurity bulletins simultaneously - replacing annual questionnaire cycles with live risk scoring.

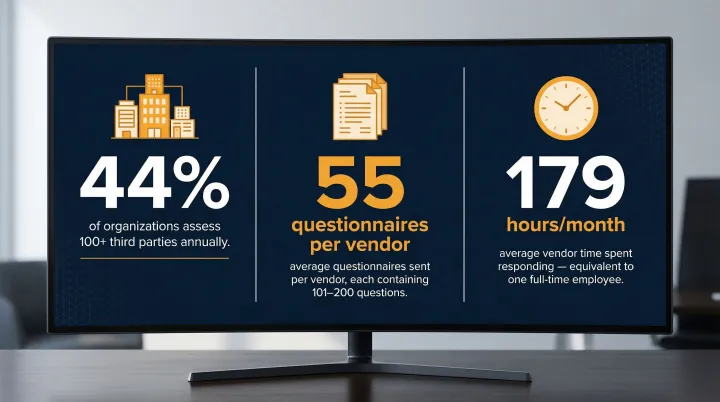

Traditional TPRM is highly resource-intensive. The scale of the problem tells the story:

- 44% of organizations assess more than 100 third parties each year

- EY reports companies send an average of 55 questionnaires per vendor, with 45% containing 101–200 questions each

- The average vendor responds to 37.3 assessment requests monthly, spending 179 hours, the equivalent of one full-time employee

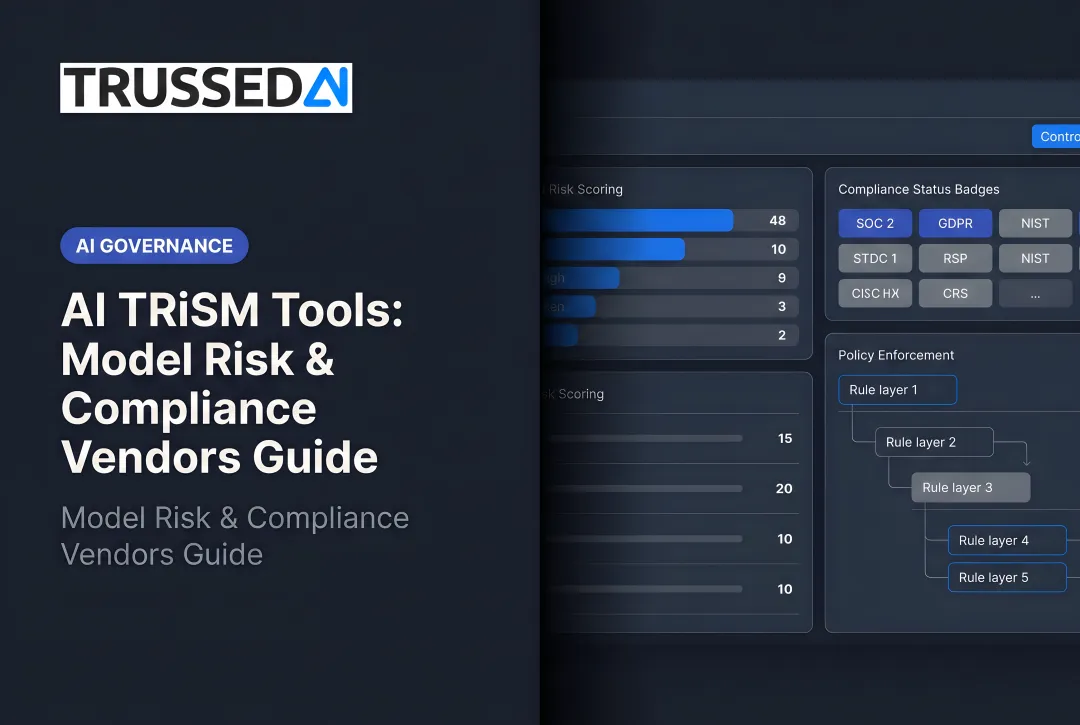

AI Automation Across the TPRM Lifecycle

AI automation transforms key TPRM processes:

- Vendor onboarding - Automated data extraction from documents, AI-generated risk profiles

- Due diligence - AI-powered document analysis of security certifications, insurance certificates, compliance records

- Risk scoring - Dynamic scorecards updated in real time rather than at assessment intervals

This allows TPRM teams to prioritize human review on the highest-risk vendors rather than processing routine paperwork. 54% of organizations state their top goal in investigating AI for TPRM is to speed up questionnaire completion by automatically completing responses using existing evidence.
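A dynamic scorecard can be approximated by blending each new risk signal into a running score instead of recomputing once a year. The sketch below uses an exponentially weighted update; the 0-100 scale, the default weight of 0.3, and the function names are assumptions made for illustration and do not describe any specific platform.

```python
def update_risk_score(current_score, signal_severity, weight=0.3):
    """Blend a new signal (0-100) into the running vendor score (0-100)."""
    if not 0.0 <= weight <= 1.0:
        raise ValueError("weight must be between 0 and 1")
    if not 0 <= signal_severity <= 100:
        raise ValueError("signal_severity must be between 0 and 100")
    # Higher weight makes the score react faster to the latest signal.
    return (1 - weight) * current_score + weight * signal_severity


def score_after_signals(starting_score, severities, weight=0.3):
    """Apply a sequence of signals in the order they arrived."""
    score = starting_score
    for severity in severities:
        score = update_risk_score(score, severity, weight)
    return score
```

With these assumptions, a vendor sitting at 40 that receives a severity-90 signal moves to roughly 55, and repeated severe signals keep pushing the score toward 90 rather than jumping there at once.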

Predictive Risk Intelligence

Machine learning models trained on historical vendor incident data can identify early warning signals (financial distress patterns, compliance anomalies, supply chain dependencies) before they escalate into disruptions. Traditional TPRM programs only surface these problems after an incident has already hit operations or compliance records.

Agentic AI takes this further: autonomous systems continuously evaluate vendor risk signals across your entire portfolio, escalating only the cases that require human judgment, turning risk monitoring from a scheduled activity into a persistent control.

The Centralization Opportunity

EY's 2025 TPRM Survey found that 57% of organizations now run centralized, enterprise-wide TPRM programs, up from 54% in 2023. Centralized programs consistently outperform siloed approaches across vendor inventory management, risk modeling, and governance maturity.

AI makes this practical at scale. Enterprise-wide risk visibility that once required dedicated analyst teams can now be maintained through a single governance layer, applied consistently across business units, geographies, and vendor tiers.

Building Enterprise-Grade AI TPRM Governance

The Governance Gap

Most organizations face a critical gap: policies exist on paper but are enforced inconsistently, audits happen after the fact, and there is no mechanism to catch compliance violations at the moment they occur across AI vendor interactions. Static governance documents don't translate into runtime controls, and the gap between policy and practice is where incidents happen.

70% of organizations have AI governance policies, yet 84% of executives are dissatisfied with their AI adoption pace, a sign that current governance frameworks are creating overhead rather than enabling secure deployment. The gap runs deeper still: 63% of breached organizations either lack an AI governance policy entirely or are still developing one.

Trussed AI was built specifically for this problem: converting static governance policies into runtime enforcement across every AI vendor interaction, with audit trails generated as a natural byproduct rather than a manual afterthought.

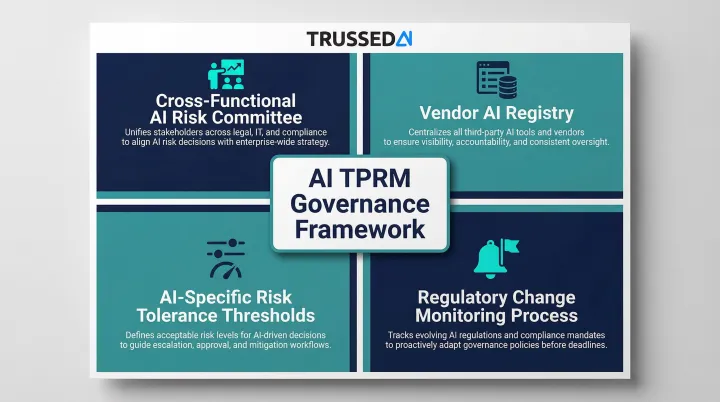

Governance Framework Components

A complete AI TPRM governance framework includes:

- Cross-functional AI risk committee with clear ownership (not buried under a single business unit)

- Vendor AI registry updated continuously, tracking which vendors use AI, which models they depend on, and when those dependencies change

- AI-specific risk tolerance thresholds documented by use case (credit decisions require higher explainability than chatbots)

- Regulatory change monitoring process that tracks AI-relevant regulations (EU AI Act, proposed US frameworks, sector-specific guidance) and translates them into vendor contract requirements
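The vendor AI registry component lends itself to a simple snapshot comparison: keep the model dependencies recorded for each vendor, and flag whatever changed since the last snapshot so it can prompt a re-assessment or contract review. The registry format and all names below are hypothetical.

```python
def registry_changes(previous, current):
    """Return vendors whose recorded model dependencies differ between snapshots."""
    changes = {}
    for vendor in sorted(set(previous) | set(current)):
        before = set(previous.get(vendor, []))
        after = set(current.get(vendor, []))
        if before != after:
            changes[vendor] = {
                "added": sorted(after - before),
                "removed": sorted(before - after),
            }
    return changes


# Hypothetical snapshots of the registry taken a month apart.
last_month = {
    "support-chatbot-vendor": ["model-provider-a"],
    "doc-summary-vendor": ["model-provider-c"],
}
this_month = {
    "support-chatbot-vendor": ["model-provider-a", "model-provider-b"],
    "doc-summary-vendor": ["model-provider-c"],
    "new-analytics-vendor": ["model-provider-a"],
}
flagged = registry_changes(last_month, this_month)
```

Here the chatbot vendor's added provider and the newly onboarded analytics vendor are flagged, while the unchanged vendor is not. Populating the snapshots still depends on vendors disclosing dependency changes, which is why the disclosure clauses in Step 4 matter.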

Recognized Frameworks and Certifications

Organizations should anchor their AI TPRM governance to:

- NIST AI RMF: Establishes governance functions including GOVERN 6.1 (policies addressing third-party AI risks) and MANAGE 3.1 (regular monitoring of third-party AI risks)

- ISO/IEC 42001 (published December 2023): Specifies requirements for establishing, implementing, maintaining, and continually improving an Artificial Intelligence Management System (AIMS)

- COBIT for AI Governance (published 2025): Addresses ethics, accountability, transparency, and compliance across the AI lifecycle

When evaluating AI vendors, security certifications are a baseline expectation but should be supplemented with AI-specific attestations (such as NIST AI RMF alignment) and audit rights that allow you to verify AI-specific controls.

Frequently Asked Questions

How can AI help with vendor management?

AI automates time-consuming TPRM tasks (document analysis, risk scoring, continuous monitoring of vendor signals), allowing teams to process more vendors with greater accuracy and shift human effort toward high-risk decisions rather than routine administration. AI-powered platforms replace periodic questionnaire cycles with real-time risk intelligence.

What are the 4 pillars of risk management?

The four pillars are identify, assess, mitigate, and monitor. In AI vendor risk, "monitor" now requires continuous real-time oversight rather than periodic reviews because AI systems can drift between formal assessment cycles: model performance can degrade without any code change or software release.

What is the difference between traditional TPRM and AI TPRM?

AI TPRM addresses model-specific risks (bias, drift, black-box decisions), assesses nth-party dependencies behind the AI product, and requires continuous monitoring because AI behavior changes without new software releases. Traditional TPRM's annual assessments and standard questionnaires don't capture these categories.

How do you assess an AI vendor's risk during due diligence?

Go beyond standard questionnaires and ask about training data consent, bias testing, explainability, third-party model dependencies, and data retention policies. Structured frameworks like the FS-ISAC Generative AI Vendor Risk Assessment Guide and NIST AI RMF provide comprehensive coverage.

What regulations govern third-party AI risk management?

The EU AI Act (Article 26) requires human oversight, monitoring, and log-keeping for high-risk AI tools. In the US, NIST AI RMF offers voluntary guidance, while OCC and FFIEC guidance applies to financial institutions and HIPAA applies to healthcare AI vendors handling PHI. Requirements vary by jurisdiction and sector.

What is model drift and why does it matter for vendor risk?

Model drift occurs when an AI system's performance degrades over time as real-world data patterns shift away from training data, meaning a vendor's AI tool can become less accurate, more biased, or unreliable without any code change. This makes continuous post-contract monitoring essential, because point-in-time assessments cannot detect gradual performance degradation.