Introduction

Worldwide AI spending is forecast to reach nearly $1.5 trillion in 2025, with enterprise AI infrastructure budgets expected to more than triple between 2025 and 2028. Yet behind this explosive growth lies a dangerous reality: in multi-tenant AI environments, a single tenant's runaway agent loop or misconfigured workflow can exhaust a shared budget in hours, triggering service failures across every other tenant on the platform.

This isn't a billing problem,it's a service continuity and governance crisis. When one tenant's agentic workflow reprocesses the same documents overnight undetected, or when an integration retries on transient errors until a monthly cap hits mid-cycle, the entire platform suffers.

Every tenant loses access simultaneously, regardless of their individual usage patterns or budget discipline.

Multi-tenant AI costs are not inherently uncontrollable. They become expensive through three compounding factors: lack of isolation between tenants, insufficient visibility at runtime, and delayed cost attribution after the fact. This article examines each through a cost management lens,showing how organizations can move from reactive reconciliation to proactive controls that protect both budgets and service availability.

TL;DR

- Multi-tenant AI costs accumulate silently,and only surface when budgets breach or Solution degrade

- One tenant's usage pattern affects every other tenant's performance and spend; this is the noisy neighbor problem

- Provider-level spending caps protect the provider, not your tenants,per-tenant enforcement must live inside your runtime

- Controlling costs requires three things working in parallel: smarter design, tighter operational controls, and real attribution infrastructure

- Without real-time cost tracking across teams, models, and applications, overruns go unattributed and tenants can't be billed accurately

How Multi-Tenant AI Costs Build Up

In multi-tenant AI environments, costs don't appear as a single line item. They accumulate gradually across token consumption, model invocations, retries, and agent loops,often across dozens of tenants sharing the same provider account or inference endpoint.

The compounding nature of cost build-up makes small inefficiencies invisible at the individual request level but catastrophic at scale. Oversized context windows, redundant embeddings, and unthrottled retries are harmless in isolation. Multiply them across hundreds of tenants and thousands of daily interactions, and they compound into budget-breaking patterns.

The most dangerous costs hide until scale or failure exposes them:

- A tenant's agentic workflow reprocessing the same documents

- An integration retrying on transient errors overnight

- A shared monthly cap that hits mid-cycle and kills access for every tenant simultaneously

These patterns aren't hypothetical. One documented incident involved a $47,000 API bill generated in 11 days when two LangChain agents locked into an infinite recursive loop exchanging clarification requests.

A separate case saw a $700+ cost overrun in 72 hours when an AI agent entered a "Zombie Worker" loop. The cloud provider's self-healing feature kept restarting failed tasks without checkpointing, producing infinite retries with no circuit breaker to stop them.

Cost attribution compounds the problem in shared infrastructure. When multiple tenants use the same model endpoint, embedding pipeline, or inference cluster, separating which tenant drove which portion of the invoice requires purpose-built metering at the application layer.

Key Cost Drivers for Multi-Tenant AI Services

Tenant Resource Isolation Design

The dominant cost driver is tenant resource isolation design. Architectures that pool all tenants onto fully shared infrastructure with no per-tenant budget boundaries allow individual tenant behavior,heavy usage, loops, misconfigured automation,to consume resources meant for all others. The cost structure of the entire platform becomes hostage to the most aggressive tenant.

This is the "noisy neighbor" problem playing out at the economic layer. In shared queues or infrastructure, a noisy tenant sending large volumes of messages creates backlogs, increasing dwell time and degrading performance for every other tenant. Without isolation controls, one tenant's burst traffic can exhaust a monthly budget in a single afternoon.

Model Selection as Structural Cost Driver

In multi-tenant setups, organizations often default to the most capable,and expensive,model for all tenants regardless of task complexity. A free-tier tenant using GPT-4-class models for simple text classification generates the same per-token cost as an enterprise tenant using it for genuinely complex reasoning.

Model cost differentials are substantial:

| Vendor | Frontier Model | Input Cost | Output Cost | Smaller Model | Input Cost | Output Cost |

|---|---|---|---|---|---|---|

| OpenAI | GPT-4o | $2.50/1M tokens | $10.00/1M tokens | GPT-4o mini | $0.15/1M tokens | $0.60/1M tokens |

| Anthropic | Claude Opus 4 | $15.00/1M tokens | $75.00/1M tokens | Claude Haiku 3.5 | $0.80/1M tokens | $4.00/1M tokens |

| Gemini 1.5 Pro | $3.50/1M tokens | $10.50/1M tokens | Gemini 1.5 Flash | $0.075/1M tokens | $0.30/1M tokens |

Pricing approximate and subject to change. Verify current rates with each vendor.

Frontier models cost between 5× and 16× more than their smaller counterparts. Without intentional model tier assignment by tenant or task, this differential becomes a structural cost multiplier.

Runtime Enforcement Gaps

Model selection only matters if you can enforce it before requests run. Without pre-execution budget checks that block or degrade requests, cost overruns surface through post-billing reconciliation , by which point there is no operational recourse. AI inference drives unpredictable, usage-based costs that existing contracts cannot contain.

Preventive controls like token budgets per task, context window pruning, and circuit breakers are required to stop cost spirals before they start. Agentic workflows consume 10 to 30 times more tokens per task than standard chatbots , waiting for the billing cycle to catch overruns isn't a viable strategy.

Shared Component Cost Allocation

Infrastructure shared across tenants,load balancers, vector databases, embedding pipelines, API gateways,cannot be easily attributed to individual tenants. Organizations either absorb shared overhead costs or make allocation guesses that misrepresent per-tenant unit economics.

The problem compounds at the billing layer. Major cloud providers aggregate AI charges at the account or project level, obscuring per-tenant costs. OpenAI's Usage Dashboard does not consolidate cost data across organizations; costs are attributed at the org level. Google Cloud spend is aggregated at the organization level. Without application-layer metering, shared infrastructure remains a cost attribution black box.

Maturity-Dependent Cost Driver Shifts

Cost drivers shift in priority depending on deployment maturity. Early-stage multi-tenant AI deployments are dominated by model selection and isolation gaps. Mature platforms are dominated by allocation accuracy and governance overhead as regulatory requirements in industries like healthcare, insurance, and financial Solution demand audit-ready cost evidence per tenant.

Specific compliance obligations driving this include:

- Healthcare (HIPAA): Audit controls must log the operation, data accessed, agent identity, and timestamp for any system touching ePHI

- Insurance: AI-assisted underwriting requires documented governance, internal controls, and auditability to satisfy state regulators

- Cross-industry (NIST AI RMF): Organizations should maintain a tamper-proof history of generated content to enable traceability and support transparency obligations

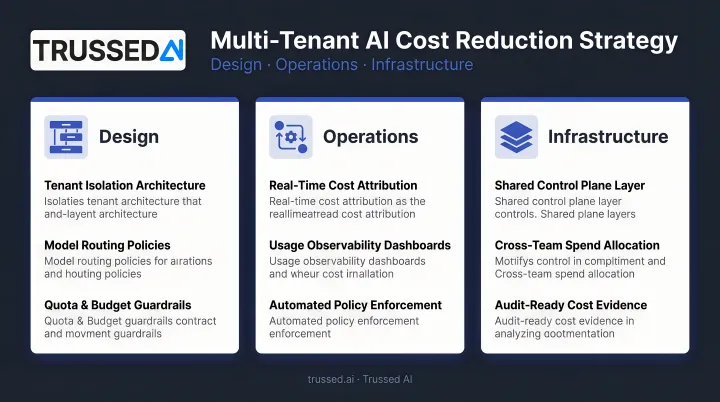

Cost-Reduction Strategies for Multi-Tenant AI Services

Cost reduction in multi-tenant AI is never one-dimensional. Strategies must address how the architecture is designed, how it is governed in operation, and how the surrounding infrastructure is structured. Applying any one category in isolation leaves the others as uncontrolled cost surfaces.

Strategies That Reduce Costs by Changing Decisions

These approaches address cost at the design and configuration stage,before tenants ever generate a request. The decisions made here determine the economic ceiling and floor for every tenant interaction.

Choose the tenant isolation model intentionally based on cost profile. Pool small, lower-value tenants onto shared infrastructure for resource efficiency. Give high-value or regulated enterprise tenants dedicated or semi-isolated resources. Resist defaulting to full isolation for all tenants, which duplicates infrastructure costs, or full pooling for all tenants, which creates noisy neighbor exposure and complicates chargeback.

Design hierarchical budget scopes from the start rather than retrofitting them. Structure budgets as nested levels,tenant, then team or department, then application or workflow, then individual agent or run. This hierarchy means a single tenant cannot exhaust platform-wide resources, and cost containment decisions made at any level automatically protect all levels above it.

Match model selection to tenant tier and task complexity by design. Define which model tiers are available to which subscription or service tiers. Reserve high-cost frontier models for tasks or tenants that genuinely require them, and route simpler tasks to cheaper or smaller models as a default policy. Model selection is typically the single biggest lever on per-tenant inference costs.

Strategies That Reduce Costs by Changing How It Is Managed

These approaches reduce cost by improving control and visibility while the system is actively running. The goal is to intercept cost events before they become cost incidents.

Implement pre-execution budget enforcement rather than post-billing reconciliation. Budget checks that run before a model call is dispatched can block or degrade the request when a tenant's balance is exhausted. Post-billing reconciliation can only tell you what happened; it cannot prevent the next overrun. This shift from reactive to preventive is the highest-leverage operational change in multi-tenant AI cost management.

Establish real-time cost attribution across teams, models, and applications,not just at the provider billing level. Without this, shared infrastructure costs become undifferentiated overhead that no one owns and no one optimizes. Platforms like Trussed AI provide real-time cost tracking and attribution as a built-in capability of the AI control plane, making per-tenant cost visibility a byproduct of every governed interaction rather than a separate analytics project.

Use quotas alongside budgets to govern consumption patterns, not just cumulative spend. Budgets answer "how much can this tenant spend?",quotas answer "how fast?" Without quotas, a tenant can exhaust a monthly budget in a single afternoon through burst traffic, agent parallelism, or misconfigured automation. Combining both creates a predictable usage envelope that protects platform margins and tenant expectations simultaneously.

Strategies That Reduce Costs by Changing the Context Around It

These approaches address the surrounding infrastructure and architectural environment. In many multi-tenant AI deployments, the platform setup itself is the dominant cost driver, not any single tenant's behavior.

Replace provider-level spending caps with tenant-level enforcement inside your own runtime. Provider caps operate at the account level, apply to all tenants combined, and are reactive: they report what was spent, not what is being spent. When usage hits the cap, Azure disables the subscription for the rest of the billing period, deallocating virtual machines and suspending Solution. Closing or suspending an active Cloud Billing account stops all billable Solution across linked projects.

Moving enforcement to your own runtime layer means each tenant's budget is checked atomically before execution, scoped to that tenant only, with no impact on other tenants when exhausted. Cost incidents become cost boundaries.

Build application-level metering for shared components rather than attempting to allocate shared infrastructure costs top-down from provider invoices. Log tenant identifiers at the request level across model invocations, embedding calls, and API gateway traffic. Aggregate this data asynchronously to produce per-tenant unit economics. This approach works regardless of how resources are shared and produces the granular cost data required for accurate chargeback and profitability analysis in regulated industries.

AWS Bedrock Application Inference Profiles allow custom cost allocation tags (e.g., tenant ID, project ID) to be applied directly to on-demand models, enabling per-tenant cost tracking even when infrastructure is shared.

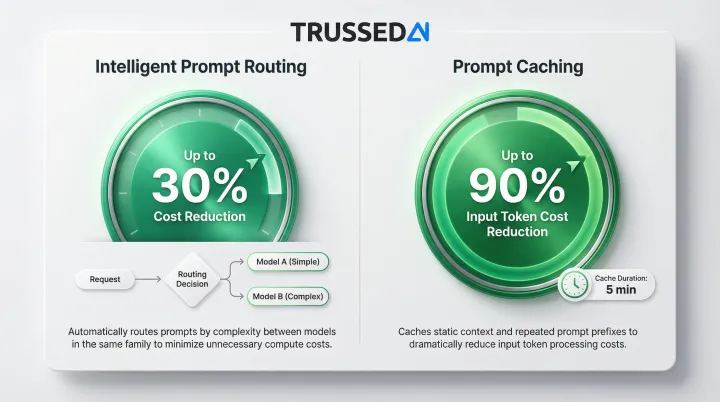

Use intelligent model routing and failover as a cost control mechanism. When a tenant's allocated budget for a primary model approaches exhaustion, route subsequent requests to a less expensive model tier rather than denying service outright:

- Intelligent prompt routing can reduce costs by up to 30% by routing requests between models in the same family based on prompt complexity

- Prompt caching can reduce input token costs by up to 90% by caching static context for up to 5 minutes

This preserves tenant experience, reduces cost per interaction for the remainder of the period, and avoids the operational disruption of hard denials , particularly valuable for tiered SaaS platforms where service continuity is a contractual obligation.

Conclusion

Reducing costs in multi-tenant AI Solution starts with knowing where cost actually originates. Organizations that cut AI spend without that diagnosis often target the wrong layer and create new problems in the process. The four cost origin points that matter:

- Isolation boundary , tenant separation model drives infrastructure baseline

- Model selection layer , capability mismatches inflate per-call costs

- Runtime enforcement layer , unthrottled usage by high-volume tenants distorts attribution

- Shared infrastructure attribution gap , unallocated overhead lands on the wrong budget lines

Cost management in multi-tenant AI doesn't end at architecture. Tenant behavior shifts, usage scales unevenly, new models enter the stack, and regulatory requirements for cost evidence keep expanding. Sustained control requires real-time visibility and enforcement at every layer , not periodic audits after the bill arrives.

Frequently Asked Questions

What is cost allocation in multi-tenant AI Solution?

Cost allocation in multi-tenant AI Solution attributes AI infrastructure expenses (model inference, token consumption, embeddings, API calls) to specific tenants, teams, or applications. This differs from provider billing, which aggregates all costs at the account level with no per-tenant breakdown.

How do you prevent one tenant's AI usage from impacting others?

This requires per-tenant budget enforcement at the runtime layer, not provider-level caps, so that when one tenant's budget is exhausted, only that tenant is blocked or degraded while all others continue operating normally. This is an isolation architecture problem, not a billing configuration problem.

What is the difference between AI cost budgets and quotas in multi-tenant systems?

Budgets track cumulative economic exposure (dollars or tokens spent) and block further spend when exhausted. Quotas govern operational boundaries (requests per hour, concurrent agents) and prevent burst patterns that could exhaust a budget in a short window. Both are needed together for predictable cost control.

How do you track AI costs per tenant when using shared model infrastructure?

Shared model infrastructure requires application-layer metering (logging tenant identifiers at the request level and aggregating usage data asynchronously) because provider invoices cannot be disaggregated by tenant. Purpose-built metering pipelines are more reliable than sampling-based monitoring tools.

What causes unexpected cost spikes in multi-tenant AI deployments?

The most common causes include:

- Agent loops or recursive tool calls generating unbounded token consumption

- Retry storms on transient errors

- Misconfigured automation running at scale overnight

- Burst usage from a single tenant exhausting shared capacity

Pre-execution budget checks are the primary defense against all of these.

How granular should per-tenant AI budget controls be?

Match granularity to your actual risk surface:

- Tenant-level budgets first, for isolation

- Run or session-level caps next, to prevent individual execution runaway

- Workflow and agent-level budgets as the platform matures

Over-engineering precision from day one adds complexity without proportional benefit.