Introduction

AI spending is exploding, but most enterprises can't explain where the money is going. Worldwide spending on AI reached $2.52 trillion in 2026, a 44% year-over-year increase. Organizations now spend an average of $1.2 million annually on AI-native applications alone, up 108% from 2025.

Yet 78% of IT leaders report unexpected charges tied to consumption-based AI pricing, and 61% have cut other projects to cover those unplanned costs.

The challenge isn't just the headline price. API pricing pages list per-token rates, but actual enterprise AI costs include infrastructure, talent, governance tooling, and compliance overhead. This article breaks down what AI really costs at different scales, what drives those costs up, and how to build a budget that holds when usage grows.

TLDR

- Cost range: Basic API usage runs hundreds of dollars monthly; enterprise deployments with governance can reach hundreds of thousands to millions annually

- Price drivers: Model tier, token volume, agentic complexity, compliance requirements, and governance overhead

- Who pays more: Regulated industries and organizations running autonomous agents pay substantially more than basic API users

- Biggest trap: API access is just the entry fee; compute, talent, governance tooling, and compliance routinely cost more than the API itself

How Much Does AI Cost? (Pricing Overview)

AI pricing has no universal answer. Costs shift based on deployment model, usage volume, model capability, and how deeply AI is integrated into business workflows.

The two most common budgeting mistakes both stem from misunderstanding how AI is priced. First, teams underbudget by accounting only for API or subscription costs, leaving out infrastructure, governance, and talent. Second, organizations get hit with surprise charges when consumption-based billing scales faster than anticipated. That second problem is widespread, and it is often the more expensive of the two.

Entry-Level / API Access and SaaS Add-Ons

What's Typically Included:

- Access to hosted AI APIs with per-token LLM pricing

- AI feature add-ons in existing SaaS platforms (AI copilots, assistants)

- Minimal infrastructure overhead

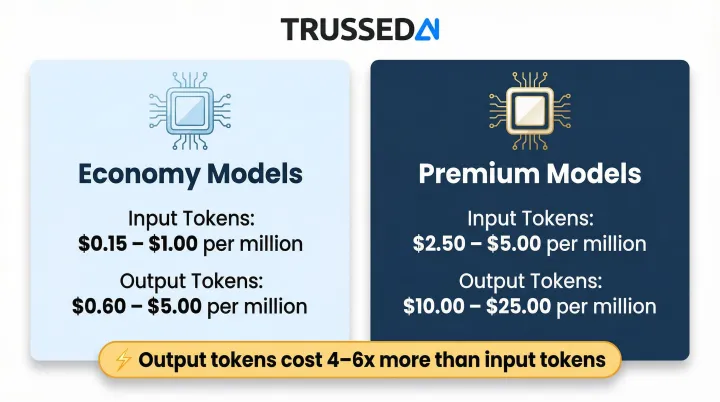

Current Token Pricing (2026):

- Economy models: $0.15–$1.00 per million input tokens, $0.60–$5.00 per million output tokens

- Premium models: $2.50–$5.00 per million input tokens, $10.00–$25.00 per million output tokens

For reference, OpenAI's GPT-4o mini costs $0.15 input/$0.60 output per million tokens, while Claude Opus 4.6 runs $5.00 input/$25.00 output.
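Input and output tokens are billed at different rates, so the cost of one request is a weighted sum of the two. The sketch below is a minimal Python illustration that uses the two list prices quoted above. The model keys and the 1,000/500 token split are hypothetical. It also ignores anything else a provider may bill, such as cached-input discounts, batch rates, or tool fees.

```python
# Per-million-token rates (USD) as quoted in this article; check current
# provider pricing pages before relying on them.
PRICES_PER_MILLION = {
    "gpt-4o-mini": {"input": 0.15, "output": 0.60},
    "claude-opus-4.6": {"input": 5.00, "output": 25.00},
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """USD cost of one request: (tokens / 1M) * rate, summed over input and output."""
    rates = PRICES_PER_MILLION[model]
    return (
        input_tokens / 1_000_000 * rates["input"]
        + output_tokens / 1_000_000 * rates["output"]
    )


if __name__ == "__main__":
    # Hypothetical request: 1,000 prompt tokens, 500 generated tokens.
    for name in PRICES_PER_MILLION:
        print(f"{name}: ${request_cost(name, 1_000, 500):.5f}")
```

For that hypothetical 1,500-token request, GPT-4o mini comes to about $0.00045 and Claude Opus 4.6 to about $0.0175. That is close to a 39x gap for identical traffic.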

Best For:

- Small teams and individual users

- Early-stage AI experimentation

- Organizations using AI as a feature within existing tools rather than a core capability

Mid-Range / Enterprise AI Deployment

What's Typically Included:

- AI-native applications (purpose-built tools)

- Cloud-hosted model inference at meaningful volume

- Initial integration and setup costs

- Usage-based billing that scales with team size

Organizations spent an average of $55.7 million on SaaS in 2026, with AI-native application spend averaging $1.2 million annually. That figure shifts considerably based on usage patterns and team size.

Best For:

- Organizations scaling AI across multiple departments

- Embedding AI into customer-facing workflows

- Predictable but growing usage patterns

High-End / Full-Stack Enterprise AI with Governance

What's Typically Included:

- Custom or fine-tuned models

- Dedicated compute infrastructure

- Multi-agent systems

- Compliance tooling and audit capabilities

- Specialized AI talent

Worldwide AI infrastructure spending reached $1.36 trillion in 2026, with software spending at $452 billion. Large enterprises deploying AI at scale face costs in the hundreds of thousands to millions annually.

Best For:

- Enterprises in regulated industries (healthcare, insurance, financial services)

- Organizations deploying autonomous AI agents in production at scale

- Environments where governance and reliability are non-negotiable

Key Factors That Affect the Cost of AI

What a business pays for AI is determined by technical decisions, organizational scale, and external compliance requirements, not just by which model it chooses.

Type and Capability of AI

The type of AI functionality you deploy has a large impact on cost. Simple text classification tasks consume minimal resources. Generative LLMs require far more compute, and multi-step agentic systems multiply that cost again with every call they make.

Agentic systems are the clearest example of this multiplication. A single agentic task can trigger 6-10 underlying LLM calls as the agent iterates through reasoning loops, evaluates options, calls tools, and refines outputs, and each call accumulates token costs. What appears to be one user interaction becomes a cascade of billable events.
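The cascade adds up faster than the call count suggests. In many agent loops, each call re-sends the original prompt plus everything produced so far. The sketch below assumes exactly that and ignores tool outputs. Its inputs are hypothetical: a 1,500-token starting prompt and 300 output tokens per call. It prices them at the GPT-4o rates cited later in this article ($2.50 input / $10.00 output per million tokens).

```python
def agent_task_cost(
    num_calls: int,
    base_prompt_tokens: int,
    output_tokens_per_call: int,
    input_rate: float,
    output_rate: float,
) -> float:
    """Estimate the USD cost of one agentic task made of num_calls LLM calls.

    Assumes each call re-sends the original prompt plus every earlier output
    as context, so input tokens grow on each iteration. Rates are USD per
    million tokens.
    """
    total = 0.0
    context = base_prompt_tokens
    for _ in range(num_calls):
        total += context / 1_000_000 * input_rate
        total += output_tokens_per_call / 1_000_000 * output_rate
        context += output_tokens_per_call  # this output becomes input for the next call
    return total


if __name__ == "__main__":
    GPT_4O_INPUT, GPT_4O_OUTPUT = 2.50, 10.00  # USD per million tokens
    single = agent_task_cost(1, 1_500, 300, GPT_4O_INPUT, GPT_4O_OUTPUT)
    for calls in (6, 10):
        task = agent_task_cost(calls, 1_500, 300, GPT_4O_INPUT, GPT_4O_OUTPUT)
        print(f"{calls} calls: ${task:.5f} ({task / single:.1f}x a single call)")
```

Under these assumptions, a 6-call task costs roughly 7.7x a single call and a 10-call task roughly 15x. The reason is that the growing context is billed again on every step.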

Volume and Scale of Usage

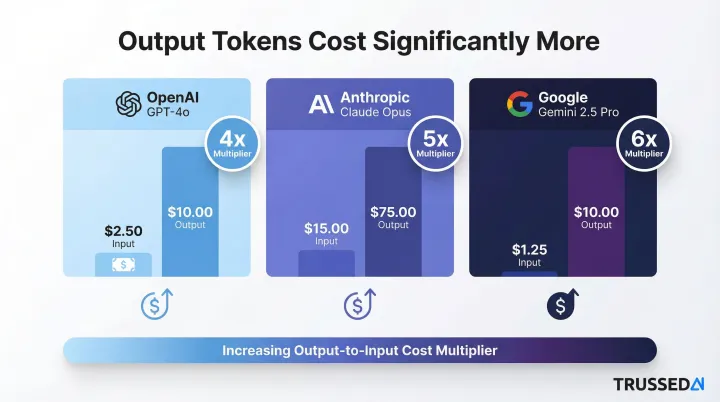

Token consumption, queries per user, and interaction frequency compound directly into cost. Output tokens cost significantly more than input tokens across all major providers, typically 4x to 6x more.

Output token premium examples:

- OpenAI GPT-4o: output tokens cost 4x more ($10.00 vs. $2.50 per million tokens)

- Anthropic Claude Opus 4.6: output tokens cost 5x more ($25.00 vs. $5.00 per million tokens)

- Google Gemini 2.5 Pro: output tokens cost 6x more ($10.00 vs. $1.25 per million tokens)

This pricing structure makes high-output use cases like content generation, code synthesis, and conversational agents substantially more expensive than retrieval or classification tasks.
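The effect of the output premium shows up clearly if you hold total tokens constant and change only the mix. This hypothetical comparison uses the Gemini 2.5 Pro rates listed above ($1.25 input / $10.00 output per million tokens). Both request shapes and the monthly volume are illustrative, not measured.

```python
INPUT_RATE = 1.25    # USD per million input tokens (Gemini 2.5 Pro, as listed above)
OUTPUT_RATE = 10.00  # USD per million output tokens


def monthly_cost(requests: int, input_tokens: int, output_tokens: int) -> float:
    """USD cost of `requests` identical calls at the rates above."""
    per_request = (
        input_tokens / 1_000_000 * INPUT_RATE
        + output_tokens / 1_000_000 * OUTPUT_RATE
    )
    return requests * per_request


if __name__ == "__main__":
    # Both shapes use 2,000 tokens per request; only the input/output split differs.
    classification = monthly_cost(100_000, input_tokens=1_990, output_tokens=10)
    generation = monthly_cost(100_000, input_tokens=500, output_tokens=1_500)
    print(f"classification: ${classification:,.2f}")
    print(f"generation:     ${generation:,.2f}")
    print(f"ratio: {generation / classification:.1f}x")
```

At 100,000 requests a month, the classification-style workload costs about $258.75. The generation-style workload costs about $1,562.50, roughly six times more for the same token count.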

Infrastructure and Compute

Beyond API fees, GPU compute, cloud infrastructure, and vector storage for RAG retrieval add significant ongoing expenses. Open-source models carry no licensing cost, but hosting, DevOps, and maintenance investment can exceed commercial API access costs once you factor in engineering time and infrastructure overhead.

Labor and AI Talent

Skilled personnel represent a cost layer that many organizations underestimate at the outset. Key labor expenses include:

- Data scientists and ML engineers to build and maintain AI pipelines

- Prompt engineers to optimize model performance and output quality

- DevOps and platform engineers for infrastructure management

- Training and enablement programs as AI tools roll out across teams

In many enterprise deployments, labor costs rival or exceed direct API spend, particularly during the first 12-18 months of production rollout.

Compliance and Regulatory Requirements

Organizations in regulated industries face additional cost layers: data residency controls, explainability and audit tooling, legal reviews, and breach response capabilities.

Fragmented AI regulation is projected to drive $1 billion in total compliance spend by 2030, with spending on AI governance expected to reach $492 million in 2026. Fines for the most serious violations of the EU AI Act can reach €35 million or 7% of worldwide annual turnover, whichever is higher. As frameworks like the EU AI Act mature, these costs are likely to keep rising.

The True AI Cost Breakdown: One-Time vs. Recurring

The advertised price of AI is almost always just the starting point. The true total cost of ownership spans initial procurement, ongoing usage, infrastructure, and governance overhead. For most enterprises, the recurring costs dwarf the upfront ones.
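To check whether recurring costs dominate, total each category over a planning horizon. The sketch below groups costs into the categories discussed in this article, with labor from the talent section included. Every dollar figure in the example is hypothetical and chosen only to show the arithmetic.

```python
from dataclasses import dataclass


@dataclass
class CostProfile:
    """All figures in USD; monthly_* values are recurring costs."""
    one_time: float                # procurement, licensing, integration, setup
    monthly_inference: float       # tokens, agent tasks, API calls
    monthly_infrastructure: float  # compute, storage, vector DB, maintenance
    monthly_labor: float           # ML, platform, and prompt engineering time
    monthly_governance: float      # monitoring, audit trails, compliance

    def monthly_recurring(self) -> float:
        return (
            self.monthly_inference
            + self.monthly_infrastructure
            + self.monthly_labor
            + self.monthly_governance
        )

    def total_cost(self, months: int) -> float:
        return self.one_time + self.monthly_recurring() * months

    def recurring_share(self, months: int) -> float:
        """Fraction of the total cost over `months` that is recurring."""
        return self.monthly_recurring() * months / self.total_cost(months)


if __name__ == "__main__":
    profile = CostProfile(
        one_time=150_000,
        monthly_inference=20_000,
        monthly_infrastructure=8_000,
        monthly_labor=30_000,
        monthly_governance=5_000,
    )
    print(f"36-month total: ${profile.total_cost(36):,.0f}")
    print(f"recurring share: {profile.recurring_share(36):.0%}")
```

With these placeholder figures, the 36-month total is $2,418,000, and recurring costs account for about 94% of it.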

Initial Procurement and Licensing

This covers API access agreements, SaaS subscription commitments, or enterprise license fees for AI-native platforms. Many AI licenses now shift to consumption-based models with hybrid subscription floors, making upfront costs less predictable than they appear.

Inference and Usage Costs

This is the largest and most volatile ongoing cost for most organizations: every query, token, agent task, or API call accumulates charges. Estimated wasted cloud spend increased to 29% in 2026, reversing a five-year downward trend and reflecting growing cost complexity from AI.

Usage-based billing causes invoices to spike when adoption scales. Research shows a consistent gap between planned and actual AI cloud spend: 78% of IT leaders report unexpected charges tied to AI features or consumption-based pricing.

Infrastructure, Integration, and Maintenance

This category covers:

- Cloud compute and storage tied to AI workloads

- API integration maintenance and model update cycles

- DevOps overhead for keeping AI systems reliable in production

Teams routinely underestimate the engineering hours required after deployment. Unlike traditional software, AI systems need continuous monitoring and adjustment as models and usage patterns evolve.

Governance, Compliance, and Oversight Costs

The most commonly overlooked category. This includes monitoring AI outputs for policy violations, maintaining audit trails, ensuring regulatory compliance, and managing risk in production. Without automated tooling, it becomes a substantial manual workload.

Trussed AI's control plane addresses this directly. It generates audit evidence automatically and provides real-time cost attribution across teams, models, and applications, cutting manual governance workload by up to 50%. The platform applies policies automatically in real time, which eliminates weeks of manual compliance reconstruction when regulators ask how specific AI decisions were governed.
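At the data level, cost attribution means tagging every billable call with who made it and why, then aggregating. The sketch below is a generic illustration, not Trussed AI's implementation or API. The record fields, team names, application names, and costs are all hypothetical.

```python
from collections import defaultdict
from typing import Iterable, NamedTuple


class UsageRecord(NamedTuple):
    team: str
    application: str
    model: str
    cost_usd: float


def attribute_costs(records: Iterable[UsageRecord], dimension: str) -> dict[str, float]:
    """Total cost grouped by one dimension: 'team', 'application', or 'model'."""
    if dimension not in ("team", "application", "model"):
        raise ValueError(f"cannot group by {dimension!r}")
    totals: dict[str, float] = defaultdict(float)
    for record in records:
        totals[getattr(record, dimension)] += record.cost_usd
    return dict(totals)


if __name__ == "__main__":
    usage = [
        UsageRecord("claims", "triage-bot", "gpt-4o-mini", 120.0),
        UsageRecord("claims", "summarizer", "claude-opus-4.6", 900.0),
        UsageRecord("support", "chat-agent", "gpt-4o-mini", 300.0),
    ]
    print(attribute_costs(usage, "team"))   # {'claims': 1020.0, 'support': 300.0}
    print(attribute_costs(usage, "model"))  # {'gpt-4o-mini': 420.0, 'claude-opus-4.6': 900.0}
```

The same records answer "which team?", "which application?", and "which model?" without separate bookkeeping. This is why the tagging has to happen at call time rather than being reconstructed from invoices.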

Low-Cost vs. High-Cost AI: What's the Real Difference?

The gap between a low-cost AI setup and a production-grade enterprise deployment is not just about price. It's about what breaks when you scale, and what it costs to fix it.

Performance and Reliability

Economy models and minimal infrastructure can handle low-stakes, low-volume tasks, but consistency and reliability suffer as volume and task complexity grow. For customer-facing or decision-critical workflows, that inconsistency has a direct business cost.

Premium deployments pair intelligent routing with automatic failover. When a model degrades or fails, traffic shifts to a backup provider without manual intervention, protecting enterprise SLAs and keeping production systems running.
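The failover pattern itself is simple: try providers in priority order and fall through on failure. This is a minimal sketch. The provider callables and the `ProviderError` exception are hypothetical stand-ins for real SDK clients and their error types. A production router would also add timeouts, retries with backoff, and health checks.

```python
from typing import Callable, Sequence, Tuple

Provider = Tuple[str, Callable[[str], str]]  # (name, function that returns a completion)


class ProviderError(Exception):
    """Hypothetical error a provider wrapper raises on failure or timeout."""


def complete_with_failover(prompt: str, providers: Sequence[Provider]) -> Tuple[str, str]:
    """Return (provider_name, completion) from the first provider that succeeds."""
    failures = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            failures.append(f"{name}: {exc}")  # record, then fall through to the next provider
    raise RuntimeError("all providers failed: " + "; ".join(failures))


if __name__ == "__main__":
    def primary(prompt: str) -> str:
        raise ProviderError("timed out")

    def backup(prompt: str) -> str:
        return f"answer to {prompt!r}"

    print(complete_with_failover("summarize claim 42", [("primary", primary), ("backup", backup)]))
```

Returning the provider name alongside the completion lets cost tracking and audit logs record which model actually served each request after a failover.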

Compliance and Audit Readiness

Basic API access with no governance layer leaves organizations exposed, especially in healthcare, financial services, and insurance. Without audit trails, demonstrating compliance to a regulator becomes a weeks-long manual reconstruction effort.

Enterprise deployments handle this automatically. Every AI interaction is logged with:

- Policy evaluation results

- Model version and timestamp

- Data lineage and request context

That log becomes audit-ready evidence without any additional work, eliminating the scramble when regulators come knocking.
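A record that carries those fields can be as simple as one structured log line. The sketch below shows one hypothetical schema; the field names and example values are illustrative. Storage concerns such as append-only logs, retention, and access controls are out of scope.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


def _utc_now() -> str:
    """ISO-8601 timestamp in UTC."""
    return datetime.now(timezone.utc).isoformat()


@dataclass(frozen=True)
class AuditRecord:
    request_id: str
    model: str
    model_version: str
    policy_results: dict[str, bool]  # policy name -> passed?
    data_sources: list[str]          # lineage: where the request context came from
    requested_by: str                # team or application that made the call
    timestamp: str = field(default_factory=_utc_now)

    def to_json(self) -> str:
        """Serialize to one JSON line suitable for an append-only log."""
        return json.dumps(asdict(self), sort_keys=True)


if __name__ == "__main__":
    record = AuditRecord(
        request_id="req-0001",
        model="gpt-4o-mini",
        model_version="example-version",
        policy_results={"pii_redaction": True, "allowed_topic": True},
        data_sources=["crm.customer_profile"],
        requested_by="claims-triage",
    )
    print(record.to_json())
```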

Long-Term Total Cost of Ownership

The lower-cost path appears cheaper upfront, but compliance violations, model failures, and uncontrolled usage sprawl are expensive to fix after the fact. The manual workload required to fix them also compounds as AI usage grows.

Upfront investment in governance infrastructure typically reduces long-term costs by eliminating that manual overhead. Automated policy enforcement and continuous compliance monitoring cut the need for manual reviews and post-incident reconstruction, reducing operational risk exposure over time.

How to Estimate the Right AI Budget, and What Most Teams Get Wrong

The right AI budget is not the lowest number that gets a project approved. It's the one that accounts for how AI behaves in production over time, not just in a proof of concept.

Factors to Include in Any AI Budget Estimate

Core components:

- Intended use case and expected query/agent volume

- Model tier required for accuracy and compliance needs

- Infrastructure and integration requirements

- Labor for deployment, maintenance, and enablement

- Ongoing governance, monitoring, and compliance overhead

- Cost buffer for usage spikes (15-25% recommended based on industry volatility data)
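Once the components above are estimated, the buffer is a straightforward multiplier on their sum. The annual figures in this sketch are hypothetical placeholders. It applies both ends of the 15-25% range so the spread is visible.

```python
def buffered_budget(components: dict[str, float], buffer_rate: float) -> float:
    """Sum the cost components and add a contingency buffer (0.15 means 15%)."""
    if buffer_rate < 0:
        raise ValueError("buffer_rate must be non-negative")
    base = sum(components.values())
    return base * (1 + buffer_rate)


if __name__ == "__main__":
    # Hypothetical annual figures, USD.
    annual = {
        "inference": 240_000,
        "infrastructure_and_integration": 96_000,
        "labor": 360_000,
        "governance_and_compliance": 60_000,
    }
    for rate in (0.15, 0.25):
        print(f"{rate:.0%} buffer: ${buffered_budget(annual, rate):,.0f}")
```

With these placeholder figures, a $756,000 base becomes $869,400 to $945,000 once the buffer is applied.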

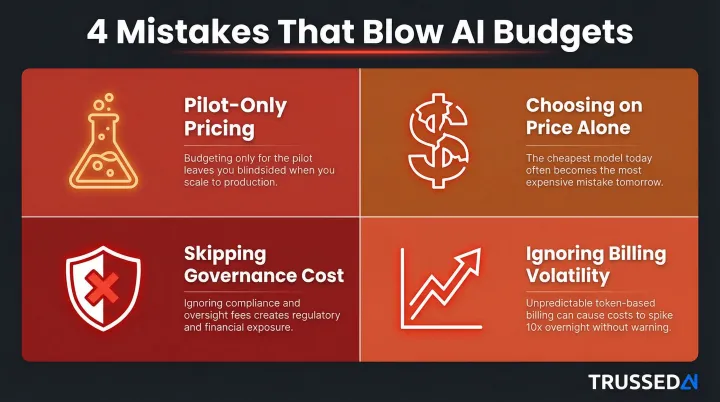

Common Mistakes That Blow AI Budgets

Most budget failures trace back to the same four mistakes:

- Pilot-only pricing: Proof-of-concept costs rarely reflect production reality. Token consumption, infrastructure, and governance overhead all multiply at scale.

- Choosing on price alone: Economy models may hit functional requirements but often fall short on consistency, audit readiness, or data protection in regulated environments.

- Skipping governance cost: Manual governance doesn't scale. As AI adoption expands across teams, disconnected tools and manual reviews create a workload that grows with every new team, model, and use case.

- Ignoring billing volatility: Without budget caps and real-time alerts, usage scales faster than visibility, creating billing surprises that force cuts elsewhere.
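At their simplest, budget caps and alerts are a running total checked before each charge. This is a hypothetical in-process sketch with a soft alert at 80% of the cap and a hard stop at 100%. Both thresholds are assumptions. A real deployment would enforce them in a shared gateway rather than separately in each process.

```python
class BudgetGuard:
    """Track spend against a monthly cap: alert once at a threshold, block past the cap."""

    def __init__(self, monthly_cap: float, alert_fraction: float = 0.8) -> None:
        self.monthly_cap = monthly_cap
        self.alert_threshold = monthly_cap * alert_fraction
        self.spent = 0.0
        self.alert_sent = False

    def charge(self, cost: float) -> str:
        """Record a charge if it fits under the cap; return 'ok', 'alert', or 'blocked'."""
        if self.spent + cost > self.monthly_cap:
            return "blocked"  # hard stop: the request should not run
        self.spent += cost
        if not self.alert_sent and self.spent >= self.alert_threshold:
            self.alert_sent = True
            return "alert"  # soft limit crossed: notify the owning team
        return "ok"


if __name__ == "__main__":
    guard = BudgetGuard(monthly_cap=100.0)
    print([guard.charge(30.0) for _ in range(4)])  # ['ok', 'ok', 'alert', 'blocked']
```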

Practical Stress Test

Before finalizing any AI budget, model a scenario where AI costs increase 3-5x from current pricing. Gartner predicts that by 2030, performing inference on a large language model will cost GenAI providers over 90% less than in 2025, but warns that "falling GenAI provider token costs will not be fully passed on to enterprise customers." As token consumption rises faster than token costs fall, overall inference costs are expected to increase.

Confirm your business case holds under higher pricing scenarios. For high-volume, AI-dependent workflows, particularly in insurance, healthcare, or financial services, a 3-5x cost scenario isn't a worst case; it's a planning baseline. If the business case breaks at that threshold, the deployment isn't production-ready yet.
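The stress test reduces to recomputing net value at each cost multiplier and finding the break-even point. The annual cost and value in this sketch are hypothetical, and "value" stands in for whatever benefit the business case claims.

```python
def net_value_under_stress(
    annual_cost: float,
    annual_value: float,
    multipliers: tuple[float, ...] = (1, 3, 5),
) -> dict[float, float]:
    """Net annual value (value minus cost) with AI costs scaled by each multiplier."""
    return {m: annual_value - annual_cost * m for m in multipliers}


def break_even_multiplier(annual_cost: float, annual_value: float) -> float:
    """Cost multiplier at which the AI deployment stops paying for itself."""
    return annual_value / annual_cost


if __name__ == "__main__":
    cost, value = 500_000, 2_000_000  # hypothetical annual figures, USD
    for multiplier, net in net_value_under_stress(cost, value).items():
        print(f"{multiplier}x costs: net ${net:,.0f}")
    print(f"break-even at {break_even_multiplier(cost, value):.1f}x")
```

In this hypothetical, the business case survives a 3x increase but not a 5x one, with break-even at 4x. By the standard above, that means it is not yet production-ready for a high-volume workflow.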

Conclusion

The cost of enterprise AI in 2026 is rarely what the pricing page suggests. Between token consumption, infrastructure, talent, compliance overhead, and governance tooling, the true cost is multi-layered, shaped by scale, use case, and the regulatory environment your AI operates in.

Organizations that budget effectively look beyond API rates to understand the full cost stack. They put governance infrastructure in place before a billing surprise or compliance failure forces their hand. Three practices separate those that stay ahead from those that scramble:

- Account for recurring costs across the entire AI stack, not just model APIs

- Build usage buffers into budgets before production workloads scale

- Implement real-time cost attribution so teams, applications, and models each carry their share

That visibility is what turns cost management from a quarterly fire drill into a routine operational control.

Frequently Asked Questions

How expensive is each AI query?

Per-query costs depend on model tier and token consumption. Economy models like GPT-4o mini cost roughly $0.001–$0.003 per typical query, while premium models like Claude Opus can cost $0.05–$0.15 per query. Agentic workflows multiply the cost per user-visible query because they trigger multiple underlying calls; a single agent task can generate 6–10 LLM calls.

What is the average cost of an AI agent?

Agentic pricing varies widely. Salesforce Agentforce charges $2.00 per conversation; Intercom's Fin AI Agent costs $0.99 per resolved outcome. Self-built agents on LLM APIs accumulate token and tool-call costs that vary with workflow complexity and must be tracked at the task level.
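Per-conversation and per-outcome pricing are not directly comparable. The first bills every conversation, while the second bills only resolved ones. The sketch below uses the two list prices quoted above. The conversation volume and resolution rate are hypothetical, and actual contracts, tiers, and definitions of a "resolution" vary by vendor.

```python
def per_conversation_bill(conversations: int, price_per_conversation: float) -> float:
    """Every conversation is billed, resolved or not."""
    return conversations * price_per_conversation


def per_outcome_bill(conversations: int, resolution_rate: float, price_per_outcome: float) -> float:
    """Only resolved conversations are billed."""
    return conversations * resolution_rate * price_per_outcome


def cost_per_resolution(bill: float, conversations: int, resolution_rate: float) -> float:
    """Effective cost of each conversation the agent actually resolved."""
    return bill / (conversations * resolution_rate)


if __name__ == "__main__":
    conversations, resolution_rate = 10_000, 0.60  # hypothetical volume and rate
    flat = per_conversation_bill(conversations, 2.00)                  # per-conversation list price above
    outcome = per_outcome_bill(conversations, resolution_rate, 0.99)  # per-outcome list price above
    for label, bill in (("per conversation", flat), ("per outcome", outcome)):
        unit = cost_per_resolution(bill, conversations, resolution_rate)
        print(f"{label}: ${bill:,.2f} total, ${unit:.2f} per resolution")
```

At a hypothetical 60% resolution rate, per-conversation pricing works out to about $3.33 per resolved conversation, versus $0.99 under per-outcome pricing. The gap narrows as the resolution rate rises. Self-built agents need the same per-task view, assembled from their own token and tool-call costs.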

What hidden costs should businesses expect when deploying AI?

Beyond model fees, common hidden costs include governance overhead, vector storage for RAG systems, retry costs from failed requests, and engineering labor to maintain AI in production. Most organizations underestimate the operational workload required to govern AI at scale.

How do usage-based AI pricing models work?

Usage-based pricing charges per unit of consumption: tokens, API calls, conversations, or completed tasks. Fifty percent of product managers now charge usage-based fees for generative AI capabilities, and 51% of SaaS companies use hybrid models pairing base subscriptions with metered add-ons. Without caps or alerts, this creates real budget risk when consumption scales faster than expected.
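The hybrid model described above (a base subscription with metered add-ons) can be expressed as a base fee plus overage. The fee, included allowance, and overage rate in this sketch are hypothetical.

```python
def hybrid_invoice(
    units_used: int,
    base_fee: float,
    included_units: int,
    overage_rate: float,
) -> float:
    """Base subscription covers `included_units`; each unit beyond that is metered."""
    overage_units = max(0, units_used - included_units)
    return base_fee + overage_units * overage_rate


if __name__ == "__main__":
    # Hypothetical plan: $1,000/month includes 50,000 units, then $0.03 per unit.
    for usage in (40_000, 50_000, 120_000):
        print(f"{usage:>7,} units -> ${hybrid_invoice(usage, 1_000, 50_000, 0.03):,.2f}")
```

Usage at or below the allowance costs the same flat $1,000. At 120,000 units the invoice jumps to $3,100, the kind of jump that budget caps and real-time alerts are meant to catch.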

How can enterprises control AI costs as they scale?

Start with model tiering, routing simple tasks to cheaper models. Then add prompt optimization, budget caps, and real-time cost attribution to track which workflows and teams are driving spend. Platforms like Trussed AI provide cost tracking across models, teams, and applications, with enforceable limits that trigger alerts or hard stops before costs overrun.
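In its simplest form, model tiering is a routing rule evaluated before each call. The sketch below uses a hypothetical heuristic. The task categories, the 4,000-token threshold, and the `needs_reasoning` flag are all assumptions; production routers often use a classifier or observed quality scores instead. The model names reuse the examples from this article.

```python
from dataclasses import dataclass

ECONOMY_MODEL = "gpt-4o-mini"
PREMIUM_MODEL = "claude-opus-4.6"

# Task types assumed (hypothetically) to be handled adequately by the economy tier.
SIMPLE_TASKS = frozenset({"classification", "extraction", "routing", "short_summary"})


@dataclass
class Task:
    task_type: str
    prompt_tokens: int
    needs_reasoning: bool = False


def choose_model(task: Task, max_economy_prompt_tokens: int = 4_000) -> str:
    """Send simple, short, non-reasoning tasks to the economy tier; everything else to premium."""
    if (
        task.task_type in SIMPLE_TASKS
        and not task.needs_reasoning
        and task.prompt_tokens <= max_economy_prompt_tokens
    ):
        return ECONOMY_MODEL
    return PREMIUM_MODEL


if __name__ == "__main__":
    for t in (Task("classification", 800), Task("contract_review", 6_000, needs_reasoning=True)):
        print(t.task_type, "->", choose_model(t))
```

The thresholds are the part to tune. Logging each routing decision next to cost and quality data shows whether too much traffic is landing on the premium tier.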

Why do AI costs vary so much between vendors and models?

Pricing differences reflect underlying compute requirements, model capability, infrastructure overhead, and competitive strategy. Some vendors are currently pricing below sustainable levels to capture market share, meaning cost structures may shift significantly over the next 2–4 years. Additionally, output tokens consistently cost 4–6x more than input tokens, so generation-heavy workloads face substantially higher costs than retrieval or classification tasks.