The compliance gap is stark: traditional cloud security tools were built to audit static infrastructure such as VMs, storage buckets, and network configurations. They cannot govern autonomous agents making real-time decisions, multi-cloud data flows, or the continuous stream of model inference calls that define modern AI operations. As fragmented AI regulation quadruples by 2030 and extends to 75% of the world's economies, organizations face a critical question: how do you maintain compliance when your AI systems operate faster than your governance can follow?

This article covers the unique compliance challenges multi-cloud AI creates, the regulatory frameworks now in force, the essential capabilities compliance tools must deliver, and the layered tooling strategy organizations need to close governance gaps across distributed AI infrastructure.

TLDR

- Traditional infrastructure tools miss multi-cloud AI blind spots: agent actions, cross-cloud data flows, and runtime model behavior

- The EU AI Act and NIST AI RMF call for model behavior logging, human oversight, and technical documentation, all of which go beyond standard infrastructure controls

- Layer CNAPPs, AI governance platforms, policy-as-code, and unified observability to achieve full compliance coverage

- No single platform covers everything; deploy complementary tools across infrastructure, runtime behavior, audit evidence, and identity management

Why Multi-Cloud AI Infrastructure Creates Unique Compliance Challenges

Traditional cloud compliance was designed around fixed assets. CSPM tools scan for misconfigured S3 buckets, overly permissive IAM roles, and unencrypted databases: infrastructure that changes slowly and predictably. AI workloads introduce fundamentally different variables: inference calls that execute thousands of times per hour, autonomous agents querying databases and invoking APIs without human intervention, and data flows that cross provider boundaries within milliseconds.

These dynamic elements fall outside the detection scope of standard cloud security platforms. A CSPM tool can verify that your AWS inference endpoint has encryption enabled, but it cannot evaluate whether the model output contains sensitive data that violates GDPR, or whether an agent's database query exceeds the scope authorized under HIPAA.

The compliance surface has shifted from infrastructure configuration to runtime system behavior, and most organizations are monitoring the former while their risk accumulates in the latter.

The Multi-Cloud Coverage Gap

When a single AI workflow routes inference through AWS Bedrock, retrieves context from a GCP vector database, and logs interactions through Azure monitoring services, no single provider's native compliance tooling sees the complete chain. Each platform captures its own slice:

- AWS CloudTrail logs the inference call

- GCP Audit Logs record the database query

- Azure Monitor tracks the logging event

Reconstructing the full workflow for audit purposes requires manual correlation across three separate systems, a process that breaks down at scale and creates regulatory blind spots that routine audits miss entirely.
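The correlation step described above can be sketched in a few lines. This is a minimal illustration, assuming each provider's audit records have already been exported and normalized into dictionaries, and that the application propagates a shared `trace_id` across all three clouds. The field names and sample records are hypothetical; they are not the actual CloudTrail, GCP Audit Logs, or Azure Monitor schemas.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical, pre-normalized audit records. Real provider logs use
# different schemas and would need to be mapped into this shape first.
EVENTS = [
    {"provider": "azure", "trace_id": "wf-001", "time": "2025-01-06T10:00:02+00:00", "action": "log_interaction"},
    {"provider": "aws", "trace_id": "wf-001", "time": "2025-01-06T10:00:00+00:00", "action": "invoke_model"},
    {"provider": "gcp", "trace_id": "wf-001", "time": "2025-01-06T10:00:01+00:00", "action": "vector_query"},
    {"provider": "aws", "trace_id": "wf-002", "time": "2025-01-06T10:05:00+00:00", "action": "invoke_model"},
]

# Providers that a complete workflow is expected to touch (an assumption).
EXPECTED_PROVIDERS = {"aws", "gcp", "azure"}


def correlate(events):
    """Group events by trace_id and order each group chronologically."""
    workflows = defaultdict(list)
    for event in events:
        workflows[event["trace_id"]].append(event)
    for chain in workflows.values():
        chain.sort(key=lambda e: datetime.fromisoformat(e["time"]))
    return dict(workflows)


def missing_providers(chain):
    """Return providers with no record in this workflow (a potential blind spot)."""
    return EXPECTED_PROVIDERS - {e["provider"] for e in chain}


workflows = correlate(EVENTS)
for trace_id, chain in workflows.items():
    steps = [f'{e["provider"]}:{e["action"]}' for e in chain]
    print(trace_id, steps, "missing:", sorted(missing_providers(chain)))
```

The sketch only works if a common identifier travels with the request across providers; without one, correlation falls back to timestamp matching, which is where the process tends to break down at scale.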

Organizations face a governance velocity gap: AI teams deploy new models, integrate third-party APIs, and add tools to agent workflows faster than compliance programs can review them. Gartner predicts over 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, or inadequate risk controls (Gartner, 2025). In regulated industries like healthcare and financial services, this misalignment between deployment speed and governance cadence translates directly into regulatory exposure. A clinical documentation assistant deployed Monday may be querying patient records by Wednesday, before the compliance team has reviewed data access policies or confirmed HIPAA logging requirements are met.

Agentic AI Creates Audit Trail Complexity Standard Logging Can't Handle

When an autonomous agent reads files from cloud storage, queries a customer database, and calls an external API to complete a single workflow, each action raises accountability questions traditional compliance tools weren't built to answer:

- Which policy authorized the database query?

- What data did the agent access?

- Did the external API call expose sensitive information?

Tracing a compliance violation back through an agent's decision chain requires purpose-built logging that captures governance decisions, not just infrastructure events. Standard cloud audit logs record what happened, not why it was permitted.
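To make the distinction concrete, here is a minimal sketch of what a governance decision record might contain beyond a plain infrastructure event. The class, field names, and sample values are all hypothetical and chosen for illustration; they do not reflect any particular product's log format.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
import json


@dataclass(frozen=True)
class GovernanceDecision:
    """One agent action plus the policy reasoning that allowed or denied it."""
    agent_id: str
    action: str             # what the agent tried to do
    resource: str           # what it tried to touch
    policy_id: str          # which policy was evaluated
    allowed: bool           # outcome of the evaluation
    reason: str             # why the policy produced that outcome
    data_categories: tuple  # what kinds of data were in scope
    timestamp: str          # when the decision was made (UTC, ISO 8601)


def record_decision(agent_id, action, resource, policy_id, allowed, reason, data_categories):
    """Build a decision record stamped with the current UTC time."""
    return GovernanceDecision(
        agent_id=agent_id,
        action=action,
        resource=resource,
        policy_id=policy_id,
        allowed=allowed,
        reason=reason,
        data_categories=tuple(data_categories),
        timestamp=datetime.now(timezone.utc).isoformat(),
    )


decision = record_decision(
    agent_id="billing-agent",
    action="db.query",
    resource="customers.invoices",
    policy_id="policy-finance-read-v3",
    allowed=True,
    reason="role 'billing' may read invoice data",
    data_categories=["financial"],
)
print(json.dumps(asdict(decision), indent=2))
```

The `policy_id` and `reason` fields are what answer the questions in the list above; a standard infrastructure log would typically stop at `action`, `resource`, and `timestamp`.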

According to Flexera's 2026 State of the Cloud report, 73% of organizations operate hybrid cloud estates, and 89% reported multi-cloud usage in 2024. As AI workloads distribute across these environments, the compliance challenge compounds: more providers, more services, and more autonomous systems, all governed by frameworks built for a simpler era.

Key Compliance Frameworks for Multi-Cloud AI Infrastructure

Foundational Cloud Compliance Standards

Multi-cloud AI infrastructure must satisfy the same foundational compliance requirements as traditional cloud workloads, but AI systems introduce new technical surfaces where these controls apply.

Enterprise security management standards validate that organizations maintain effective security, availability, processing integrity, confidentiality, and privacy controls over time. For AI infrastructure, this means logging every inference call, maintaining access controls on model endpoints, and ensuring AI systems meet defined SLAs. The key requirement: controls must operate consistently across all cloud providers, not just exist on paper.

Information security management requirements include access logging, privileged access controls, and information access restrictions. AI deployments must log model interactions, restrict access to training data and inference endpoints, and control privileged operations like model deployment across AWS, GCP, and Azure simultaneously.

HIPAA Security Rule mandates specific technical safeguards for systems handling protected health information:

- Access controls: unique user identification, emergency access procedures, automatic logoff, encryption

- Audit controls that record and examine activity across all system components

- Data integrity protections and transmission security

Healthcare AI systems must apply these controls to the AI interaction layer itself: logging who accessed patient data through an AI assistant, encrypting model outputs containing PHI, and maintaining audit trails of AI-driven clinical decisions.

GDPR imposes data protection requirements on AI systems handling personal data in the EU, including the right not to be subject to decisions based solely on automated processing (Article 22). Organizations must implement technical measures to ensure AI systems processing the personal data of individuals in the EU maintain privacy, provide transparency into automated decision-making, and enable individuals to challenge AI-driven outcomes.

AI-Specific Regulatory Frameworks Gaining Traction

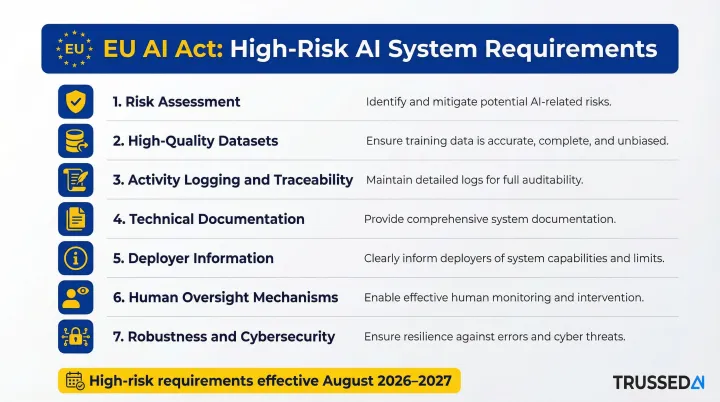

The EU AI Act entered into force on August 1, 2024, with high-risk system requirements taking effect in August 2026 and August 2027. The regulation classifies AI systems into four risk tiers (unacceptable and therefore prohibited, high-risk, transparency, and minimal), with technical requirements scaling to risk level.

High-risk AI applications must implement:

- Adequate risk assessment and mitigation

- High-quality datasets for training and operation

- Activity logging to ensure traceability of decisions

- Detailed technical documentation

- Clear information provided to system deployers

- Appropriate human oversight mechanisms

- High levels of robustness, cybersecurity, and accuracy

These requirements differ in kind from infrastructure-focused compliance standards. The EU AI Act regulates model behavior and system accountability, not just whether your cloud resources are properly configured. Organizations must demonstrate that their AI systems make traceable decisions, maintain human oversight capabilities, and meet accuracy thresholds, requirements that infrastructure compliance tools cannot validate.

The NIST AI Risk Management Framework (AI RMF 1.0), released in January 2023, provides a structured approach to AI governance through four core functions: Govern, Map, Measure, and Manage. Unlike traditional security frameworks focused on technical controls, the AI RMF recognizes that AI systems are socio-technical in nature: influenced by societal dynamics, human behavior, and training data that changes over time. This means continuous risk management rather than point-in-time assessments, a meaningful departure from how most organizations have historically approached cloud infrastructure compliance.

Cross-Framework Mapping Tools

The CSA Cloud Controls Matrix (CCM) v4.1, released in January 2026, provides 207 controls across 17 security domains, offering a unified framework that maps to multiple compliance standards simultaneously. For organizations operating under overlapping requirements (such as HIPAA combined with other industry frameworks), the CCM enables identification of shared controls, reducing duplicated compliance effort.

The CSA AI Controls Matrix (AICM), released in July 2025 and updated in October 2025, extends this approach specifically to AI systems with 243 control objectives across 18 domains. The AICM provides cross-mappings to ISO 42001, NIST AI RMF 1.0, and BSI AIC4, enabling organizations to implement a single technical control (such as logging AI inference calls with complete context) in a way that satisfies multiple regulatory requirements at once. In multi-cloud AI environments, that cross-framework mapping capability is critical: rebuilding controls for each framework independently multiplies compliance overhead without adding protection.

Must-Have Capabilities in Compliance Tools for Multi-Cloud AI

Unified multi-cloud visibility is the prerequisite for all other compliance functions. Effective tools must aggregate compliance posture across AWS, GCP, and Azure, not just surface findings from individual cloud provider dashboards.

This visibility must extend to every AI-specific infrastructure layer:

- GPU compute clusters running training workloads

- Model serving endpoints handling inference requests

- Vector databases storing embeddings

- Agent orchestration layers coordinating multi-step workflows

Without coverage across all cloud providers and AI components, compliance teams cannot answer basic regulatory questions about system-wide posture.

Runtime policy enforcement is what separates AI compliance tools from infrastructure scanning platforms. Traditional CSPM tools identify misconfigurations after deployment, which is valuable but insufficient for AI systems where the compliance risk emerges at the moment of inference. AI compliance requires policies enforced at execution: flagging or blocking non-compliant model calls, preventing unauthorized data access, and stopping out-of-policy agent actions as they occur, not hours later during a security scan.

Trussed AI exemplifies this capability by acting as a drop-in proxy that enforces governance at runtime across multi-cloud AI deployments. The platform sits in the flow of AI interactions, evaluating policy before every tool call, data access, and workflow trigger, without requiring application code changes. When an agent attempts to query a database containing sensitive customer information, Trussed authorizes the request against policy before it executes, blocking violations immediately rather than logging them for post-hoc review.
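The general pattern of authorizing a call before it executes can be sketched as follows. This is a generic, hypothetical illustration of pre-execution policy checks, not any vendor's API or implementation; the role names, tool names, data tags, and the `governed_call` wrapper are all assumptions made for the example.

```python
class PolicyViolation(Exception):
    """Raised when a tool call is blocked before execution."""


# Hypothetical policy: which tools a role may call, and which data is off limits.
POLICY = {
    "support-agent": {
        "allowed_tools": {"search_kb", "query_orders"},
        "blocked_data": {"phi", "payment_card"},
    },
}


def authorize(role, tool, data_tags):
    """Return (allowed, reason) for a proposed tool call."""
    rules = POLICY.get(role)
    if rules is None:
        return False, f"no policy defined for role '{role}'"
    if tool not in rules["allowed_tools"]:
        return False, f"tool '{tool}' not permitted for role '{role}'"
    blocked = rules["blocked_data"] & set(data_tags)
    if blocked:
        return False, f"data categories blocked: {sorted(blocked)}"
    return True, "permitted by policy"


def governed_call(role, tool, data_tags, fn, *args, **kwargs):
    """Evaluate policy first; run the tool only if the call is authorized."""
    allowed, reason = authorize(role, tool, data_tags)
    if not allowed:
        raise PolicyViolation(reason)
    return fn(*args, **kwargs)


def query_orders(customer_id):
    """Stand-in for a real database query."""
    return [{"customer_id": customer_id, "order": "A-100"}]


# An in-policy call executes normally.
print(governed_call("support-agent", "query_orders", ["order_history"], query_orders, "c-42"))

# An out-of-policy call is stopped before the query ever runs.
try:
    governed_call("support-agent", "query_orders", ["payment_card"], query_orders, "c-42")
except PolicyViolation as exc:
    print("blocked:", exc)
```

The key design point is ordering: the policy check happens before the side effect, so a denial prevents the data access rather than merely recording it.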

Automated audit evidence generation eliminates the manual compliance workload that bogs down most organizations. Compliance teams should not spend weeks reconstructing decision chains from raw cloud logs. Purpose-built tools capture governed interactions, policy decisions, and violations as structured, queryable evidence, generated automatically as a byproduct of every governed AI interaction.

When regulators ask how a specific AI decision was made, the evidence should be instantly available: complete chain of custody from prompt through model selection to output and action, with policy evaluation results, model versions, timestamps, and data lineage all captured without additional instrumentation.
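As a rough illustration of what "structured, queryable evidence" could look like, the sketch below stores one row per step of an AI interaction in an in-memory SQLite table and retrieves the chain of custody for a single interaction. The table layout, column names, and sample rows are assumptions made for this example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE evidence (
        interaction_id TEXT,
        step           TEXT,  -- prompt, model_selection, output, or action
        model_version  TEXT,
        policy_result  TEXT,  -- allow or deny
        data_lineage   TEXT,  -- data sources touched at this step
        recorded_at    TEXT   -- ISO 8601 UTC timestamp
    )
    """
)

# Hypothetical evidence rows, inserted out of order on purpose.
rows = [
    ("int-7", "output", "model-v2.1", "allow", "kb_articles", "2025-01-06T10:00:03Z"),
    ("int-7", "prompt", None, "allow", "user_input", "2025-01-06T10:00:00Z"),
    ("int-7", "action", "model-v2.1", "allow", "ticketing_api", "2025-01-06T10:00:04Z"),
    ("int-7", "model_selection", "model-v2.1", "allow", "", "2025-01-06T10:00:01Z"),
    ("int-8", "prompt", None, "deny", "user_input", "2025-01-06T11:00:00Z"),
]
conn.executemany("INSERT INTO evidence VALUES (?, ?, ?, ?, ?, ?)", rows)


def chain_of_custody(interaction_id):
    """Return every recorded step for one interaction, oldest first."""
    cursor = conn.execute(
        "SELECT step, model_version, policy_result, data_lineage, recorded_at "
        "FROM evidence WHERE interaction_id = ? ORDER BY recorded_at",
        (interaction_id,),
    )
    return cursor.fetchall()


for step in chain_of_custody("int-7"):
    print(step)
```

Because each row is written at the time of the interaction, answering a regulator's question becomes a single query instead of a reconstruction exercise across raw logs.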

AI-specific behavioral controls address the coverage gap that CNAPPs cannot close. Infrastructure compliance tools excel at detecting misconfigured S3 buckets and overly permissive IAM roles, but they stop there. They cannot monitor model outputs for data leakage, trace data lineage through RAG pipelines, or audit the action trails of autonomous agents.

Organizations need tools that go further:

- Evaluate prompt and response content for sensitive information exposure

- Track what data AI systems accessed and how it moved through processing pipelines

- Log every tool call and API request that agents make across cloud services

These capabilities are absent in traditional cloud security platforms and represent exactly where AI-specific regulatory violations occur.
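The first item in the list, evaluating prompt and response content for sensitive information, can be sketched with simple pattern matching. This is a deliberately minimal example: the two regular expressions are illustrative only, and a production detector would need far broader coverage, validation, and context awareness.

```python
import re

# Illustrative patterns only; real detectors cover many more data types.
PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def scan(text):
    """Return a dict mapping each sensitive category to the matches found."""
    findings = {}
    for name, pattern in PATTERNS.items():
        matches = pattern.findall(text)
        if matches:
            findings[name] = matches
    return findings


def redact(text):
    """Replace every match with a labeled placeholder."""
    for name, pattern in PATTERNS.items():
        text = pattern.sub(f"[{name.upper()} REDACTED]", text)
    return text


response = "Contact jane.doe@example.com, SSN 123-45-6789."
print(scan(response))
print(redact(response))
```

In a runtime governance layer, a check like `scan` would run on both the prompt and the model response, with the findings attached to the audit record for that interaction.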

Cross-framework control mapping prevents compliance teams from rebuilding the same technical control multiple times to satisfy different regulations. Tools should map a single implementation (logging an AI inference call with complete context) to multiple regulatory requirements simultaneously. That same log entry should satisfy HIPAA access logging requirements and EU AI Act traceability mandates without requiring separate logging mechanisms for each framework. This capability becomes essential as AI regulation fragments globally; organizations cannot afford to implement parallel compliance infrastructure for every jurisdiction.
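A control map of this kind is essentially a lookup table. The sketch below is illustrative only: the control names are hypothetical, and the requirement labels paraphrase the obligations described earlier in this article rather than reproducing an authoritative crosswalk such as the AICM.

```python
# Hypothetical map from one implemented control to the requirements it supports.
CONTROL_MAP = {
    "inference_call_logging": {
        "HIPAA Security Rule": "audit controls",
        "EU AI Act": "activity logging for traceability",
    },
    "human_review_of_automated_decisions": {
        "GDPR": "Article 22 automated decision-making",
        "EU AI Act": "human oversight mechanisms",
    },
    "model_endpoint_access_control": {
        "HIPAA Security Rule": "access controls",
    },
}


def frameworks_satisfied(control):
    """List the frameworks a single control contributes to."""
    return sorted(CONTROL_MAP.get(control, {}))


def controls_for(framework):
    """List every implemented control that maps to a given framework."""
    return sorted(c for c, mapping in CONTROL_MAP.items() if framework in mapping)


def uncovered(required_frameworks):
    """Return required frameworks that no implemented control maps to yet."""
    covered = {fw for mapping in CONTROL_MAP.values() for fw in mapping}
    return sorted(set(required_frameworks) - covered)


print(frameworks_satisfied("inference_call_logging"))
print(controls_for("EU AI Act"))
print(uncovered(["GDPR", "NIST AI RMF"]))
```

The `uncovered` helper shows the other benefit of keeping the mapping as data: gaps against a newly applicable framework surface immediately instead of during an audit.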

Core Tool Categories for Multi-Cloud AI Compliance

Cloud-Native Application Protection Platforms (CNAPPs)

CNAPPs provide the foundational infrastructure compliance layer. Platforms such as Wiz, Orca Security, Palo Alto Prisma Cloud, and SentinelOne Singularity Cloud deliver comprehensive coverage of cloud misconfigurations, IAM posture issues, vulnerability findings, and compliance benchmarking against frameworks including CIS across all major cloud providers.

Leading CNAPP vendors each approach AI-specific posture management differently:

- Wiz AI-SPM: Full-stack visibility through AI-BOM (bill of materials), AI configuration baselines, data security posture management for AI workloads, and runtime monitoring that detects rogue agents operating outside governance

- Orca Security AI-SPM: Covers 50+ AI models with comprehensive AI/ML inventory, plus runtime threat detection for workloads and identities interacting with AI models and MCP servers

- Palo Alto Prisma Cloud: Visibility and control over data, model integrity, and access through AI-SPM; Prisma AIRS 3.0 adds an AI Agent Gateway that enforces agent runtime and identity security

CNAPPs are essential for infrastructure-level compliance, but they stop at the infrastructure boundary. They validate that your environment is configured correctly, not that your AI systems behave compliantly. Model behavior, agent actions, and real-time inference all fall outside their scope, which means CNAPPs must be paired with AI-specific governance tools to close the gap.

AI Governance and Runtime Control Planes

This emerging category addresses the compliance gap CNAPPs cannot close: runtime governance of AI systems themselves. AI governance platforms enforce policies across models, agents, and workflows in real time, generate structured audit trails automatically, and provide centralized visibility into AI-specific compliance metrics across cloud providers.

Gartner distinguishes AI governance platforms as a separate market from traditional GRC tools, projecting the category will reach $492 million in 2026. These platforms support regulations including the EU AI Act and NIST AI RMF, automate policy enforcement at runtime, map data usage across AI pipelines, and offer evidence collection tools for audit-ready documentation.

Trussed AI is a purpose-built AI control plane that integrates as a drop-in proxy, enforcing governance across multi-cloud AI deployments without requiring changes to existing application code. The platform enforces policies at the execution layer, evaluating authorization before every tool call, data access, and workflow trigger, and generates complete audit trails automatically. Every AI interaction is logged with policy evaluation results, model version, timestamp, and data lineage, providing continuous compliance monitoring and audit-ready evidence. Organizations achieve operational workflows within four weeks, with a 50% reduction in manual governance workload and a 50% increase in regulatory compliance.

Infrastructure-as-Code and Policy-as-Code Tools

HashiCorp Terraform with Sentinel or Open Policy Agent (OPA) enables compliance-at-deployment by codifying regulatory requirements as machine-readable policies automatically checked when AI infrastructure is provisioned. This shift-left approach catches non-compliant configurations before they reach production: preventing the creation of publicly accessible model endpoints, blocking deployment of GPU clusters without encryption, and enforcing data residency requirements at provisioning time.

Native OPA support in Terraform Cloud allows Rego-based policies to operate alongside Sentinel, giving organizations flexibility in policy language while maintaining automated governance and compliance checking without manual review bottlenecks. By catching violations at deployment time, policy-as-code reduces the surface area that runtime tools must monitor and prevents compliance debt from accumulating in production environments.
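In practice these rules would be written in Rego or Sentinel. The Python sketch below only illustrates the underlying logic against the `resource_changes` section of a Terraform plan exported as JSON. The resource types (`example_model_endpoint`, `example_gpu_cluster`), their attributes, and the allowed-region list are hypothetical placeholders, not real provider resources.

```python
# Hypothetical data residency policy for this example.
ALLOWED_REGIONS = {"eu-west-1", "eu-central-1"}


def check_plan(plan):
    """Return a list of human-readable violations found in a plan dict."""
    violations = []
    for rc in plan.get("resource_changes", []):
        address = rc.get("address", "<unknown>")
        after = (rc.get("change") or {}).get("after") or {}

        # Rule 1: model endpoints must not be publicly accessible.
        if rc.get("type") == "example_model_endpoint" and after.get("public_access"):
            violations.append(f"{address}: model endpoint must not be publicly accessible")

        # Rule 2: GPU clusters must have encryption enabled.
        if rc.get("type") == "example_gpu_cluster" and not after.get("encrypted"):
            violations.append(f"{address}: GPU cluster must enable encryption")

        # Rule 3: any resource that declares a region must stay in allowed regions.
        region = after.get("region")
        if region is not None and region not in ALLOWED_REGIONS:
            violations.append(f"{address}: region {region} violates data residency policy")
    return violations


# Hypothetical plan fragment shaped like Terraform's JSON plan output.
sample_plan = {
    "resource_changes": [
        {"address": "example_model_endpoint.chat", "type": "example_model_endpoint",
         "change": {"after": {"public_access": True, "region": "eu-west-1"}}},
        {"address": "example_gpu_cluster.train", "type": "example_gpu_cluster",
         "change": {"after": {"encrypted": True, "region": "us-east-1"}}},
    ]
}

for violation in check_plan(sample_plan):
    print("DENY", violation)
```

A CI job would fail the pipeline when `check_plan` returns a non-empty list, which is the same gate a Sentinel or OPA policy set applies before a Terraform run is allowed to proceed.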

Audit Logging, Observability, and SIEM Platforms

Cloud-native audit services and aggregation platforms capture the telemetry required to demonstrate compliance to auditors and enable cross-cloud correlation of events.

AWS CloudTrail captures API calls for Amazon Bedrock and Amazon Q as events, while CloudTrail Lake consolidates activity events from AWS and external sources including other cloud providers, enabling multi-cloud audit correlation from a single platform.

GCP Audit Logs write Data Access and Admin Activity audit logs for Vertex AI, capturing who accessed AI services, what operations they performed, and when interactions occurred.

Datadog LLM Observability provides end-to-end tracing across AI agents with visibility into inputs, outputs, latency, token usage, and errors. The platform automatically parses Google Cloud audit logs and integrates with AWS CloudTrail, enabling unified observability across multi-cloud AI deployments.

Cross-cloud correlation matters most when a single AI workflow spans multiple cloud accounts. Reconstructing the complete interaction chain for compliance purposes requires unified visibility; isolated events in separate provider consoles are not enough.

Identity and Access Management for AI Systems

AI workloads introduce IAM challenges that traditional cloud identity tools were not designed to handle:

- Model inference service accounts that require least-privilege access to training data

- Dynamic agent permissions for autonomous systems that query databases and invoke APIs based on runtime context

- High-frequency identity controls for systems accessing sensitive data thousands of times per hour without human intervention

Cloud Infrastructure Entitlement Management (CIEM) capabilities within CNAPPs, combined with cloud-native IAM services, control and audit who (and which AI system) can access what data across cloud environments. CIEM manages and secures access rights and permissions, defining what actions a cloud identity can perform. In AI contexts, this becomes critical because AI agents operate through service identities, requiring least-privilege permissions to prevent unauthorized tool invocation or privilege escalation. When an agent's service account carries excessive permissions, infrastructure-focused IAM tools can flag the risk. Enforcing the correct boundary at runtime, however, requires AI-specific governance platforms operating at the execution layer.
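The "excessive permissions" check reduces to a set comparison between what an identity has been granted and what it has actually used. The sketch below assumes both sets are already available from entitlement and usage data; the identity name and permission strings are hypothetical and do not correspond to any specific cloud provider's IAM actions.

```python
# Hypothetical entitlement data: permissions granted to each service identity.
GRANTED = {
    "agent-svc": {"db:read", "db:write", "storage:read", "iam:pass_role"},
}

# Hypothetical usage data: permissions each identity exercised recently.
OBSERVED = {
    "agent-svc": {"db:read", "storage:read"},
}

# Permissions treated as high risk when left unused (an assumption).
HIGH_RISK = {"db:write", "iam:pass_role"}


def excess_permissions(identity):
    """Return permissions that were granted but never observed in use."""
    return GRANTED.get(identity, set()) - OBSERVED.get(identity, set())


def high_risk_excess(identity):
    """Return the unused permissions that also appear on the high-risk list."""
    return excess_permissions(identity) & HIGH_RISK


print(sorted(excess_permissions("agent-svc")))
print(sorted(high_risk_excess("agent-svc")))
```

A finding like this tells the infrastructure team what to revoke; it does not stop the agent from using the permission in the meantime, which is why the runtime boundary still matters.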

Best Practices for Rolling Out AI Compliance Tooling

Use a Layered Tooling Strategy

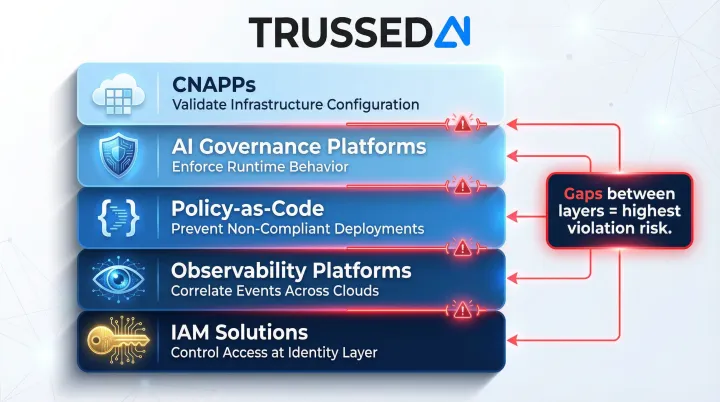

A single platform won't cover the full AI compliance stack. Layer infrastructure-level CNAPPs with AI-specific runtime governance tools and observability platforms so coverage spans from cloud configuration to live model behavior. Each layer addresses a distinct compliance surface:

- CNAPPs validate infrastructure configuration

- AI governance platforms enforce runtime behavior

- Policy-as-code prevents non-compliant deployments

- Observability platforms correlate events across clouds

- IAM solutions control access at the identity layer

The gaps between these layers are where violations most commonly occur. Organizations that rely exclusively on CNAPPs miss runtime AI behavior violations. Those using only runtime governance tools miss infrastructure misconfigurations. Effective compliance requires all layers working together.

Shift Compliance Left in AI Pipelines

Embed policy-as-code checks into CI/CD and MLOps pipelines so non-compliant configurations and model deployment parameters are caught before production, not discovered during audits or post-incident investigations.

The remediation cost difference is significant. Fixing a misconfigured model endpoint during deployment takes minutes; catching the same issue after it has processed customer data for weeks creates regulatory exposure and potential breach notification obligations.

Establish Monitoring with Clear Team Ownership

Continuous compliance monitoring only works when accountability is defined. Assign each compliance domain to a specific team:

- Infrastructure team owns CNAPP findings and remediates cloud misconfigurations

- AI platform team manages runtime governance alerts and policy violations

- Security team correlates cross-cloud events and investigates incidents

- Compliance team maintains regulatory mappings and audit evidence

With ownership established, implement automated alerting for policy drift across all cloud environments.
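One simple way to detect policy drift is to fingerprint the approved policy and compare it against what is deployed in each cloud, then route any mismatch to the owning team. The sketch below assumes policies can be exported as dictionaries; the policy keys, cloud names, and team identifiers are hypothetical.

```python
import hashlib
import json

# Hypothetical ownership table, following the team split described above.
OWNERS = {
    "infrastructure": "infrastructure-team",
    "runtime": "ai-platform-team",
}

# Hypothetical approved baseline and the policy currently deployed per cloud.
APPROVED = {"require_encryption": True, "allow_public_endpoints": False}
DEPLOYED = {
    "aws": {"require_encryption": True, "allow_public_endpoints": False},
    "gcp": {"require_encryption": True, "allow_public_endpoints": True},
    "azure": {"allow_public_endpoints": False, "require_encryption": True},
}


def fingerprint(policy):
    """Hash a policy in a key-order-independent way."""
    canonical = json.dumps(policy, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


def detect_drift(approved, deployed):
    """Return the clouds whose deployed policy differs from the baseline."""
    baseline = fingerprint(approved)
    return sorted(cloud for cloud, policy in deployed.items() if fingerprint(policy) != baseline)


def build_alerts(drifted_clouds, domain):
    """Create one alert per drifted cloud, addressed to the domain owner."""
    owner = OWNERS[domain]
    return [{"cloud": cloud, "domain": domain, "notify": owner} for cloud in drifted_clouds]


alerts = build_alerts(detect_drift(APPROVED, DEPLOYED), "infrastructure")
print(alerts)
```

Sorting keys before hashing keeps the comparison stable when two clouds serialize the same policy in a different order, as the `azure` entry does here.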

Review cycles matter as much as alerting. The EU AI Act, NIST AI RMF, and jurisdiction-specific AI regulations are all evolving, so programs that review policies annually will fall behind. Quarterly reviews aligned with regulatory updates keep governance current as new rules take effect.

Frequently Asked Questions

Which technology is often paired with AI for compliance monitoring?

Machine learning and behavioral analytics are commonly integrated with AI compliance monitoring tools to enable anomaly detection, automated policy enforcement, and continuous posture assessment. These technologies identify unusual patterns in AI system behavior that may indicate policy violations or security incidents.

Which framework is commonly used for assessing cloud security compliance?

NIST CSF and the CSA Cloud Controls Matrix are among the most widely used frameworks for cloud security compliance. For AI workloads specifically, the EU AI Act and NIST AI RMF are increasingly applied in regulated enterprise environments.

What makes AI compliance in multi-cloud different from traditional cloud compliance?

AI compliance must govern runtime model behavior, agent actions, and inference-level audit trails. Infrastructure configuration alone is not sufficient. Multi-cloud environments mean no single provider's native tooling has end-to-end visibility across the full AI workflow, requiring purpose-built tools that span cloud boundaries.

What's a common compliance tool used in cloud environments?

CNAPPs like Wiz and Palo Alto Prisma Cloud are widely used infrastructure compliance tools. AI governance platforms are purpose-built to govern AI workloads at runtime, a layer traditional cloud tools were not designed to address.

How do compliance tools handle AI agents and real-time model outputs?

Most traditional cloud compliance tools do not address this layer. AI governance platforms close this gap by acting as runtime proxies that log, evaluate, and enforce policies on model calls, agent actions, and workflow outputs as they occur. The result is audit-ready evidence of AI system behavior generated automatically.

What is a common tool used for automating cloud infrastructure deployment in compliance workflows?

HashiCorp Terraform is a widely used infrastructure-as-code tool for multi-cloud provisioning, often paired with policy-as-code frameworks like Open Policy Agent (OPA) or Sentinel to enforce compliance requirements at deployment time.